
Entropy is a scientific concept as well as a measurable physical property that is most commonly associated with a state of disorder, randomness, or uncertainty. The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics, and to the principles of information theory. It has found far-ranging applications in chemistry and physics, in biological systems and their relation to life, in cosmology, economics, sociology, weather science, climate change, and information systems including the transmission of information in telecommunication.

The thermodynamic concept was referred to by Scottish scientist and engineer Macquorn Rankine in 1850 with the names thermodynamic function and heat-potential. In 1865, German physicist Rudolf Clausius, one of the leading founders of the field of thermodynamics, defined it as the quotient of an infinitesimal amount of heat to the instantaneous temperature. He initially described it as transformation-content, in German Verwandlungsinhalt, and later coined the term entropy from a Greek word for transformation. Referring to microscopic constitution and structure, in 1862, Clausius interpreted the concept as meaning disgregation.

A consequence of entropy is that certain processes are irreversible or impossible, aside from the requirement of not violating the conservation of energy, the latter being expressed in the first law of thermodynamics. Entropy is central to the second law of thermodynamics, which states that the entropy of isolated systems left to spontaneous evolution cannot decrease with time, as they always arrive at a state of thermodynamic equilibrium, where the entropy is highest.

Austrian physicist Ludwig Boltzmann explained entropy as the measure of the number of possible microscopic arrangements or states of individual atoms and molecules of a system that comply with the macroscopic condition of the system. He thereby introduced the concept of statistical disorder and probability distributions into a new field of thermodynamics, called statistical mechanics, and found the link between the microscopic interactions, which fluctuate about an average configuration, and the macroscopically observable behavior, in the form of a simple logarithmic law with a proportionality constant, the Boltzmann constant, that has become one of the defining universal constants for the modern International System of Units (SI).

In 1948, Bell Labs scientist Claude Shannon applied similar statistical concepts of measuring microscopic uncertainty and multiplicity to the problem of random losses of information in telecommunication signals. Upon John von Neumann's suggestion, Shannon named this measure of missing information entropy, by analogy with its use in statistical mechanics, and gave birth to the field of information theory. This description has been identified as a universal definition of the concept of entropy.

History

In his 1803 paper, Fundamental Principles of Equilibrium and Movement, the French mathematician Lazare Carnot proposed that in any machine, the accelerations and shocks of the moving parts represent losses of moment of activity; in any natural process there exists an inherent tendency towards the dissipation of useful energy. In 1824, building on that work, Lazare's son, Sadi Carnot, published Reflections on the Motive Power of Fire, which posited that in all heat-engines, whenever "caloric" (what is now known as heat) falls through a temperature difference, work or motive power can be produced from the actions of its fall from a hot to cold body. He used an analogy with how water falls in a water wheel. That was an early insight into the second law of thermodynamics. Carnot based his views of heat partially on the early 18th-century "Newtonian hypothesis" that both heat and light were types of indestructible forms of matter, which are attracted and repelled by other matter, and partially on the contemporary views of Count Rumford, who showed in 1798 that heat could be created by friction, as when cannon bores are machined. Carnot reasoned that if the body of the working substance, such as a body of steam, is returned to its original state at the end of a complete engine cycle, "no change occurs in the condition of the working body".

The first law of thermodynamics, deduced from the heat-friction experiments of James Joule in 1843, expresses the concept of energy, and its conservation in all processes; the first law, however, is unsuitable to separately quantify the effects of friction and dissipation.

In the 1850s and 1860s, German physicist Rudolf Clausius objected to the supposition that no change occurs in the working body, and gave that change a mathematical interpretation, by questioning the nature of the inherent loss of usable heat when work is done, e.g., heat produced by friction. He described his observations as a dissipative use of energy, resulting in a transformation-content (Verwandlungsinhalt in German), of a thermodynamic system or working body of chemical species during a change of state. That was in contrast to earlier views, based on the theories of Isaac Newton, that heat was an indestructible particle that had mass. Clausius discovered that the non-usable energy increases as steam proceeds from inlet to exhaust in a steam engine. From the prefix en-, as in 'energy', and from the Greek word τροπή [tropē], which is translated in an established lexicon as turning or change and that he rendered in German as Verwandlung, a word often translated into English as transformation, in 1865 Clausius coined the name of that property as entropy. The word was adopted into the English language in 1868.

Later, scientists such as Ludwig Boltzmann, Josiah Willard Gibbs, and James Clerk Maxwell gave entropy a statistical basis. In 1877, Boltzmann visualized a probabilistic way to measure the entropy of an ensemble of ideal gas particles, in which he defined entropy as proportional to the natural logarithm of the number of microstates such a gas could occupy. The proportionality constant in this definition, called the Boltzmann constant, has become one of the defining universal constants for the modern International System of Units (SI). Henceforth, the essential problem in statistical thermodynamics has been to determine the distribution of a given amount of energy E over N identical systems. Constantin Carathéodory, a Greek mathematician, linked entropy with a mathematical definition of irreversibility, in terms of trajectories and integrability.

Etymology

In 1865, Clausius named the concept of "the differential of a quantity which depends on the configuration of the system," entropy (Entropie) after the Greek word for 'transformation'. He gave "transformational content" (Verwandlungsinhalt) as a synonym, paralleling his "thermal and ergonal content" (Wärme- und Werkinhalt) as the name of $U$, but preferring the term entropy as a close parallel of the word energy, as he found the concepts nearly "analogous in their physical significance." This term was formed by replacing the root of ἔργον ('ergon', 'work') by that of τροπή ('tropy', 'transformation').

In more detail, Clausius explained his choice of "entropy" as a name as follows:

I prefer going to the ancient languages for the names of important scientific quantities, so that they may mean the same thing in all living tongues. I propose, therefore, to call S the entropy of a body, after the Greek word "transformation". I have designedly coined the word entropy to be similar to energy, for these two quantities are so analogous in their physical significance, that an analogy of denominations seems to me helpful.

Leon Cooper added that in this way "he succeeded in coining a word that meant the same thing to everybody: nothing."

Definitions and descriptions

Any method involving the notion of entropy, the very existence of which depends on the second law of thermodynamics, will doubtless seem to many far-fetched, and may repel beginners as obscure and difficult of comprehension.

Willard Gibbs, Graphical Methods in the Thermodynamics of Fluids

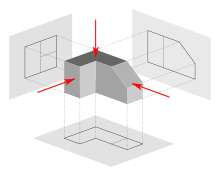

The concept of entropy is described by two principal approaches, the macroscopic perspective of classical thermodynamics, and the microscopic description central to statistical mechanics. The classical approach defines entropy in terms of macroscopically measurable physical properties, such as bulk mass, volume, pressure, and temperature. The statistical definition of entropy defines it in terms of the statistics of the motions of the microscopic constituents of a system – modeled at first classically, e.g. Newtonian particles constituting a gas, and later quantum-mechanically (photons, phonons, spins, etc.). The two approaches form a consistent, unified view of the same phenomenon as expressed in the second law of thermodynamics, which has found universal applicability to physical processes.

State variables and functions of state

Many thermodynamic properties are defined by physical variables that define a state of thermodynamic equilibrium; these are state variables. State variables depend only on the equilibrium condition, not on the path evolution to that state. State variables can be functions of state, also called state functions, in a sense that one state variable is a mathematical function of other state variables. Often, if some properties of a system are determined, they are sufficient to determine the state of the system and thus other properties' values. For example, temperature and pressure of a given quantity of gas determine its state, and thus also its volume via the ideal gas law. A system composed of a pure substance of a single phase at a particular uniform temperature and pressure is determined, and is thus a particular state, and has not only a particular volume but also a specific entropy. The fact that entropy is a function of state makes it useful. In the Carnot cycle, the working fluid returns to the same state that it had at the start of the cycle, hence the change or line integral of any state function, such as entropy, over this reversible cycle is zero.

Reversible process

Total entropy may be conserved during a reversible process. The entropy change $dS$ of the system (not including the surroundings) is well-defined as heat $\delta Q$ transferred to the system divided by the system temperature $T$: $dS = \delta Q / T$. A reversible process is a quasistatic one that deviates only infinitesimally from thermodynamic equilibrium and avoids friction or other dissipation. Any process that happens quickly enough to deviate from thermal equilibrium cannot be reversible: total entropy increases, and the potential for maximum work to be done in the process is lost. For example, in the Carnot cycle, while the heat flow from the hot reservoir to the cold reservoir represents an increase in entropy, the work output, if reversibly and perfectly stored in some energy storage mechanism, represents a decrease in entropy that could be used to operate the heat engine in reverse and return to the previous state; thus the total entropy change may still be zero at all times if the entire process is reversible. An irreversible process increases the total entropy of system and surroundings.

Carnot cycle

The concept of entropy arose from Rudolf Clausius's study of the Carnot cycle. In a Carnot cycle, heat QH is absorbed isothermally at temperature TH from a 'hot' reservoir and given up isothermally as heat QC to a 'cold' reservoir at TC. According to Carnot's principle, work can only be produced by the system when there is a temperature difference, and the work should be some function of the difference in temperature and the heat absorbed (QH). Carnot did not distinguish between QH and QC, since he was using the incorrect hypothesis that caloric theory was valid, and hence heat was conserved (the incorrect assumption that QH and QC were equal in magnitude) when, in fact, QH is greater than the magnitude of QC. Through the efforts of Clausius and Kelvin, it is now known that the maximum work that a heat engine can produce is the product of the Carnot efficiency and the heat absorbed from the hot reservoir:

$$W = \frac{T_H - T_C}{T_H}\,Q_H = \left(1 - \frac{T_C}{T_H}\right) Q_H \qquad (1)$$

To derive the Carnot efficiency, which is 1 − TC/TH (a number less than one), Kelvin had to evaluate the ratio of the work output to the heat absorbed during the isothermal expansion with the help of the Carnot–Clapeyron equation, which contained an unknown function called the Carnot function. The possibility that the Carnot function could be the temperature as measured from a zero point of temperature was suggested by Joule in a letter to Kelvin. This allowed Kelvin to establish his absolute temperature scale. It is also known that the net work W produced by the system in one cycle is the net heat absorbed, which is the sum (or difference of the magnitudes) of the heat QH > 0 absorbed from the hot reservoir and the waste heat QC < 0 given off to the cold reservoir:

$$W = Q_H + Q_C \qquad (2)$$

Since the latter is valid over the entire cycle, this gave Clausius the hint that at each stage of the cycle, work and heat would not be equal, but rather their difference would be the change of a state function that would vanish upon completion of the cycle. This state function was called the internal energy, which is central to the first law of thermodynamics.

Now equating (1) and (2) gives

$$\frac{Q_H}{T_H} + \frac{Q_C}{T_C} = 0$$

This implies that there is a function of state whose change is Q/T and that is conserved over a complete Carnot cycle. Clausius called this state function entropy. One can see that entropy was discovered through mathematics rather than through laboratory results. It is a mathematical construct and has no easy physical analogy. This makes the concept somewhat obscure or abstract, akin to how the concept of energy arose.
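The bookkeeping for a reversible Carnot cycle can be checked numerically. The following is a minimal sketch, assuming the two relations W = (1 − TC/TH)·QH and W = QH + QC with QC < 0; the function name `carnot_cycle` and the reservoir values are illustrative choices, not part of the original derivation.

```python
def carnot_cycle(q_hot, t_hot, t_cold):
    """Return (work, q_cold) for one reversible Carnot cycle.

    q_hot  : heat absorbed from the hot reservoir in joules (positive)
    t_hot  : hot reservoir temperature in kelvin
    t_cold : cold reservoir temperature in kelvin
    q_cold is returned as a negative number (heat given off by the system).
    """
    efficiency = 1.0 - t_cold / t_hot   # Carnot efficiency
    work = efficiency * q_hot           # maximum work, W = (1 - TC/TH) * QH
    q_cold = work - q_hot               # from W = QH + QC
    return work, q_cold


# Illustrative numbers (assumed): 1000 J absorbed at 500 K, rejected at 300 K.
work, q_cold = carnot_cycle(q_hot=1000.0, t_hot=500.0, t_cold=300.0)

# Sum of Q/T over the two isothermal stages; it vanishes for the reversible cycle.
entropy_sum = 1000.0 / 500.0 + q_cold / 300.0
print(work, q_cold, entropy_sum)
```

The sum of Q/T over the two isothermal stages comes out as zero up to floating-point rounding, which is the property that singles out Q/T as the change of a state function.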

Clausius then asked what would happen if there should be less work produced by the system than that predicted by Carnot's principle. The right-hand side of the first equation would be the upper bound of the work output by the system, which would now be converted into an inequality

$$W < \left(1 - \frac{T_C}{T_H}\right) Q_H$$

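When the second equation is used to express the work as a net or total heat exchanged in a cycle, we get

$$Q_H + Q_C < \left(1 - \frac{T_C}{T_H}\right) Q_H \quad\text{or}\quad Q_C < -\frac{T_C}{T_H}\,Q_H$$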

So more heat is given up to the cold reservoir than in the Carnot cycle. If we denote the entropy changes by $\Delta S_i = Q_i/T_i$ for the two stages of the process, then the above inequality can be written as a decrease in the entropy

$$\Delta S_H + \Delta S_C < 0 \quad\text{or}\quad \Delta S_H < |\Delta S_C|$$

The magnitude of the entropy that leaves the system is greater than the entropy that enters the system, implying that some irreversible process prevents the cycle from producing the maximum amount of work predicted by the Carnot equation.
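A short numerical sketch of this inequality follows. It assumes the sign convention used above (QH > 0 absorbed, QC < 0 rejected) and the relation W = QH + QC; the helper name `clausius_sum` and the numbers are hypothetical.

```python
def clausius_sum(q_hot, t_hot, t_cold, work):
    """Return QH/TH + QC/TC for one engine cycle that delivers `work`.

    QC follows from energy conservation over the cycle, W = QH + QC,
    so it is negative whenever the work is smaller than the heat absorbed.
    """
    q_cold = work - q_hot
    return q_hot / t_hot + q_cold / t_cold


# Assumed reservoirs: 500 K and 300 K, with 1000 J absorbed per cycle.
reversible = clausius_sum(1000.0, 500.0, 300.0, work=400.0)    # Carnot limit
irreversible = clausius_sum(1000.0, 500.0, 300.0, work=300.0)  # less work than Carnot
print(reversible, irreversible)
```

With less work than the Carnot limit, the sum is negative: more entropy leaves the working body through the cold reservoir than entered it from the hot one.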

The Carnot cycle and efficiency are useful because they define the upper bound of the possible work output and the efficiency of any classical thermodynamic heat engine. Other cycles, such as the Otto cycle, Diesel cycle and Brayton cycle, can be analyzed from the standpoint of the Carnot cycle. Any machine or cyclic process that converts heat to work and is claimed to produce an efficiency greater than the Carnot efficiency is not viable because it violates the second law of thermodynamics. For very small numbers of particles in the system, statistical thermodynamics must be used. The efficiency of devices such as photovoltaic cells requires an analysis from the standpoint of quantum mechanics.

Classical thermodynamics


The thermodynamic definition of entropy was developed in the early 1850s by Rudolf Clausius and essentially describes how to measure the entropy of an isolated system in thermodynamic equilibrium with its parts. Clausius created the term entropy as an extensive thermodynamic variable that was shown to be useful in characterizing the Carnot cycle. Heat transfer along the isotherm steps of the Carnot cycle was found to be proportional to the temperature of a system (known as its absolute temperature). This relationship was expressed in increments of entropy equal to the ratio of incremental heat transfer divided by temperature, which was found to vary in the thermodynamic cycle but eventually return to the same value at the end of every cycle. Thus it was found to be a function of state, specifically a thermodynamic state of the system.

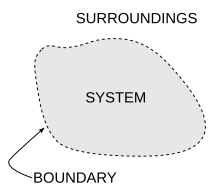

While Clausius based his definition on a reversible process, there are also irreversible processes that change entropy. Following the second law of thermodynamics, entropy of an isolated system always increases for irreversible processes. The difference between an isolated system and closed system is that energy may not flow to and from an isolated system, but energy flow to and from a closed system is possible. Nevertheless, for both closed and isolated systems, and indeed, also in open systems, irreversible thermodynamics processes may occur.

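According to the Clausius equality, for a reversible cyclic process: $\oint \frac{\delta Q_{\text{rev}}}{T} = 0$. This means the line integral $\int_L \frac{\delta Q_{\text{rev}}}{T}$ is path-independent.

So we can define a state function $S$ called entropy, which satisfies $dS = \frac{\delta Q_{\text{rev}}}{T}$.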

To find the entropy difference between any two states of a system, the integral must be evaluated for some reversible path between the initial and final states. Since entropy is a state function, the entropy change of the system for an irreversible path is the same as for a reversible path between the same two states. However, the heat transferred to or from, and the entropy change of, the surroundings is different.

We can only obtain the change of entropy by integrating the above formula. To obtain the absolute value of the entropy, we need the third law of thermodynamics, which states that S = 0 at absolute zero for perfect crystals.
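As an illustration of obtaining an entropy change by integration, the sketch below integrates C(T)/T along a reversible heating path and compares the result with the closed form m·c·ln(T2/T1). It assumes a constant specific heat of 4186 J/(kg·K) for 1 kg of liquid water, which is only an approximation; the function name is hypothetical.

```python
import math


def entropy_change_numeric(heat_capacity, t_start, t_end, steps=10000):
    """Midpoint-rule estimate of the integral of C(T)/T dT from t_start to t_end.

    heat_capacity : function returning the heat capacity in J/K at temperature T
    """
    dt = (t_end - t_start) / steps
    total = 0.0
    for k in range(steps):
        t_mid = t_start + (k + 0.5) * dt
        total += heat_capacity(t_mid) / t_mid * dt
    return total


MASS = 1.0               # kg of water (assumed)
SPECIFIC_HEAT = 4186.0   # J/(kg K), treated as temperature-independent (assumption)

numeric = entropy_change_numeric(lambda t: MASS * SPECIFIC_HEAT, 273.15, 373.15)
closed_form = MASS * SPECIFIC_HEAT * math.log(373.15 / 273.15)
print(numeric, closed_form)
```

Only the difference between the two states is obtained this way; fixing the absolute value still requires a reference such as the third law.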

From a macroscopic perspective, in classical thermodynamics the entropy is interpreted as a state function of a thermodynamic system: that is, a property depending only on the current state of the system, independent of how that state came to be achieved. In any process where the system gives up energy ΔE, and its entropy falls by ΔS, a quantity at least TR ΔS of that energy must be given up to the system's surroundings as heat (TR is the temperature of the system's external surroundings). Otherwise the process cannot go forward. In classical thermodynamics, the entropy of a system is defined only if it is in physical thermodynamic equilibrium (but chemical equilibrium is not required: the entropy of a mixture of two moles of hydrogen and one mole of oxygen at 1 bar pressure and 298 K is well-defined).

Statistical mechanics

The statistical definition was developed by Ludwig Boltzmann in the 1870s by analyzing the statistical behavior of the microscopic components of the system. Boltzmann showed that this definition of entropy was equivalent to the thermodynamic entropy to within a constant factor—known as Boltzmann's constant. In summary, the thermodynamic definition of entropy provides the experimental definition of entropy, while the statistical definition of entropy extends the concept, providing an explanation and a deeper understanding of its nature.

The interpretation of entropy in statistical mechanics is the measure of uncertainty, disorder, or mixedupness in the phrase of Gibbs, which remains about a system after its observable macroscopic properties, such as temperature, pressure and volume, have been taken into account. For a given set of macroscopic variables, the entropy measures the degree to which the probability of the system is spread out over different possible microstates. In contrast to the macrostate, which characterizes plainly observable average quantities, a microstate specifies all molecular details about the system including the position and velocity of every molecule. The more such states are available to the system with appreciable probability, the greater the entropy. In statistical mechanics, entropy is a measure of the number of ways a system can be arranged, often taken to be a measure of "disorder" (the higher the entropy, the higher the disorder). This definition describes the entropy as being proportional to the natural logarithm of the number of possible microscopic configurations of the individual atoms and molecules of the system (microstates) that could cause the observed macroscopic state (macrostate) of the system. The constant of proportionality is the Boltzmann constant.

Boltzmann's constant, and therefore entropy, have dimensions of energy divided by temperature, which has a unit of joules per kelvin (J⋅K⁻¹) in the International System of Units (or kg⋅m²⋅s⁻²⋅K⁻¹ in terms of base units). The entropy of a substance is usually given as an intensive property – either entropy per unit mass (SI unit: J⋅K⁻¹⋅kg⁻¹) or entropy per unit amount of substance (SI unit: J⋅K⁻¹⋅mol⁻¹).

Specifically, entropy is a logarithmic measure of the number of system states with significant probability of being occupied:

$$S = -k_B \sum_i p_i \ln p_i$$

($p_i$ is the probability that the system is in the $i$th state, usually given by the Boltzmann distribution; if states are defined in a continuous manner, the summation is replaced by an integral over all possible states) or, equivalently, the expected value of the logarithm of the probability that a microstate is occupied

$$S = -k_B \langle \ln p \rangle$$

where $k_B$ is the Boltzmann constant, equal to 1.38065×10⁻²³ J/K. The summation is over all the possible microstates of the system, and $p_i$ is the probability that the system is in the $i$-th microstate. This definition assumes that the basis set of states has been picked so that there is no information on their relative phases. In a different basis set, the more general expression is

$$S = -k_B \operatorname{Tr}(\hat{\rho} \ln \hat{\rho})$$

where $\hat{\rho}$ is the density matrix, $\operatorname{Tr}$ is the trace and $\ln$ is the matrix logarithm. This density matrix formulation is not needed in cases of thermal equilibrium so long as the basis states are chosen to be energy eigenstates. For most practical purposes, this can be taken as the fundamental definition of entropy since all other formulas for S can be mathematically derived from it, but not vice versa.
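The role of the basis can be seen in a two-level toy example. The sketch below, in units of the Boltzmann constant, compares the sum −Σ p ln p taken over the diagonal of a density matrix with the trace formula −Tr(ρ ln ρ) evaluated through the eigenvalues. It is restricted to real symmetric 2×2 matrices so that the eigenvalues have a closed form; the function names and example states are illustrative assumptions.

```python
import math


def shannon_term(p):
    """Return -p ln p, with the usual convention that the term vanishes at p = 0."""
    return -p * math.log(p) if p > 0.0 else 0.0


def diagonal_entropy(rho):
    """Entropy (in units of k_B) computed from the diagonal entries only."""
    return sum(shannon_term(rho[i][i]) for i in range(2))


def von_neumann_entropy(rho):
    """-Tr(rho ln rho) in units of k_B for a real symmetric 2x2 density matrix."""
    a, b, d = rho[0][0], rho[0][1], rho[1][1]
    mean = 0.5 * (a + d)
    radius = math.sqrt((0.5 * (a - d)) ** 2 + b * b)
    eigenvalues = (mean + radius, mean - radius)
    # Clamp tiny negative rounding errors before taking the logarithm.
    return sum(shannon_term(max(ev, 0.0)) for ev in eigenvalues)


# Pure superposition state (|0> + |1>)/sqrt(2): definite relative phase.
pure = [[0.5, 0.5], [0.5, 0.5]]
# Equal statistical mixture of |0> and |1>: no phase information.
mixed = [[0.5, 0.0], [0.0, 0.5]]

print(diagonal_entropy(pure), von_neumann_entropy(pure))
print(diagonal_entropy(mixed), von_neumann_entropy(mixed))
```

For the mixture both expressions give ln 2, while for the pure state the diagonal sum still gives ln 2 even though the trace formula gives zero, which is why the simpler sum is only valid in a basis that carries no relative-phase information.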

In what has been called the fundamental assumption of statistical thermodynamics or the fundamental postulate in statistical mechanics, among system microstates of the same energy (degenerate microstates) each microstate is assumed to be populated with equal probability; this assumption is usually justified for an isolated system in equilibrium. Then for an isolated system $p_i = 1/\Omega$, where $\Omega$ is the number of microstates whose energy equals the system's energy, and the previous equation reduces to

$$S = k_B \ln \Omega$$

In thermodynamics, such a system is one in which the volume, number of molecules, and internal energy are fixed (the microcanonical ensemble).
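The reduction from the probability-weighted sum to the logarithm of the number of microstates can be verified directly. This is a minimal sketch assuming the Gibbs form S = −kB Σ p ln p and the Boltzmann form S = kB ln Ω; the function names and the toy distributions are hypothetical, and the constant is the exact SI value of the Boltzmann constant.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def gibbs_entropy(probabilities):
    """S = -k_B * sum(p ln p); states with p = 0 contribute nothing."""
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0.0)


def boltzmann_entropy(omega):
    """S = k_B ln(omega) for omega equally probable microstates."""
    return K_B * math.log(omega)


uniform = [1.0 / 8.0] * 8           # eight equally likely microstates
biased = [0.9] + [0.1 / 7.0] * 7    # same eight states, one strongly favoured

print(gibbs_entropy(uniform), boltzmann_entropy(8))
print(gibbs_entropy(biased))
```

The uniform distribution reproduces kB ln Ω, and any non-uniform distribution over the same states gives a smaller value.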

For a given thermodynamic system, the excess entropy is defined as the entropy minus that of an ideal gas at the same density and temperature, a quantity that is always negative because an ideal gas is maximally disordered. This concept plays an important role in liquid-state theory. For instance, Rosenfeld's excess-entropy scaling principle states that reduced transport coefficients throughout the two-dimensional phase diagram are functions uniquely determined by the excess entropy.

The most general interpretation of entropy is as a measure of the extent of uncertainty about a system. The equilibrium state of a system maximizes the entropy because it does not reflect all information about the initial conditions, except for the conserved variables. This uncertainty is not of the everyday subjective kind, but rather the uncertainty inherent to the experimental method and interpretative model.

The interpretative model has a central role in determining entropy. The qualifier "for a given set of macroscopic variables" above has deep implications: if two observers use different sets of macroscopic variables, they see different entropies. For example, if observer A uses the variables U, V and W, and observer B uses U, V, W, X, then, by changing X, observer B can cause an effect that looks like a violation of the second law of thermodynamics to observer A. In other words: the set of macroscopic variables one chooses must include everything that may change in the experiment, otherwise one might see decreasing entropy.

Entropy can be defined for any Markov process with reversible dynamics and the detailed balance property.

In Boltzmann's 1896 Lectures on Gas Theory, he showed that this expression gives a measure of entropy for systems of atoms and molecules in the gas phase, thus providing a measure for the entropy of classical thermodynamics.

Entropy of a system

Entropy arises directly from the Carnot cycle. It can also be described as the reversible heat divided by temperature. Entropy is a fundamental function of state.

In a thermodynamic system, pressure and temperature tend to become uniform over time because the equilibrium state has higher probability (more possible combinations of microstates) than any other state.

As an example, for a glass of ice water in air at room temperature, the difference in temperature between the warm room (the surroundings) and the cold glass of ice and water (the system and not part of the room) decreases as portions of the thermal energy from the warm surroundings spread to the cooler system of ice and water. Over time the temperature of the glass and its contents and the temperature of the room become equal. In other words, the entropy of the room has decreased as some of its energy has been dispersed to the ice and water, of which the entropy has increased.

However, as calculated in the example, the entropy of the system of ice and water has increased more than the entropy of the surrounding room has decreased. In an isolated system such as the room and ice water taken together, the dispersal of energy from warmer to cooler always results in a net increase in entropy. Thus, when the "universe" of the room and ice water system has reached a temperature equilibrium, the entropy change from the initial state is at a maximum. The entropy of the thermodynamic system is a measure of how far the equalization has progressed.
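A rough numerical version of the ice-water example is sketched below. It assumes a small amount of heat, 1000 J, flowing from a room at 298 K to ice water at 273 K, with both temperatures treated as constant during the transfer; the numbers and the function name are illustrative only.

```python
def heat_flow_entropy(q, t_warm, t_cool):
    """Entropy changes when heat q (J) leaves a body at t_warm (K) and enters one at t_cool (K).

    Both temperatures are treated as fixed, which is reasonable only for small q.
    Returns (change of warm body, change of cool body, net change) in J/K.
    """
    ds_warm = -q / t_warm
    ds_cool = q / t_cool
    return ds_warm, ds_cool, ds_warm + ds_cool


ds_room, ds_ice_water, ds_total = heat_flow_entropy(q=1000.0, t_warm=298.0, t_cool=273.0)
print(ds_room, ds_ice_water, ds_total)
```

The room loses about 3.36 J/K while the ice water gains about 3.66 J/K, so the combined entropy rises by roughly 0.31 J/K.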

Thermodynamic entropy is a non-conserved state function that is of great importance in the sciences of physics and chemistry. Historically, the concept of entropy evolved to explain why some processes (permitted by conservation laws) occur spontaneously while their time reversals (also permitted by conservation laws) do not; systems tend to progress in the direction of increasing entropy. For isolated systems, entropy never decreases. This fact has several important consequences in science: first, it prohibits "perpetual motion" machines; and second, it implies the arrow of entropy has the same direction as the arrow of time. Increases in the total entropy of system and surroundings correspond to irreversible changes, because some energy is expended as waste heat, limiting the amount of work a system can do.

Unlike many other functions of state, entropy cannot be directly observed but must be calculated. Absolute standard molar entropy of a substance can be calculated from the measured temperature dependence of its heat capacity. The molar entropy of ions is obtained as a difference in entropy from a reference state defined as zero entropy. The second law of thermodynamics states that the entropy of an isolated system must increase or remain constant. Therefore, entropy is not a conserved quantity: for example, in an isolated system with non-uniform temperature, heat might irreversibly flow and the temperature become more uniform such that entropy increases. Chemical reactions cause changes in entropy and system entropy, in conjunction with enthalpy, plays an important role in determining in which direction a chemical reaction spontaneously proceeds.

One dictionary definition of entropy is that it is "a measure of thermal energy per unit temperature that is not available for useful work" in a cyclic process. For instance, a substance at uniform temperature is at maximum entropy and cannot drive a heat engine. A substance at non-uniform temperature is at a lower entropy (than if the heat distribution is allowed to even out) and some of the thermal energy can drive a heat engine.

A special case of entropy increase, the entropy of mixing, occurs when two or more different substances are mixed. If the substances are at the same temperature and pressure, there is no net exchange of heat or work – the entropy change is entirely due to the mixing of the different substances. At a statistical mechanical level, this results from the change in available volume per particle with mixing.
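For ideal gases the entropy of mixing has a simple closed form, ΔS = −R Σ nᵢ ln xᵢ, where nᵢ are the amounts in moles and xᵢ the mole fractions. This formula is not stated in the text above and is brought in here as an assumption (ideal, non-interacting components at equal temperature and pressure); the function name is hypothetical.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol K)


def ideal_mixing_entropy(moles):
    """Entropy of mixing (J/K) for ideal gases given the amount of each component in moles."""
    total = sum(moles)
    return -R * sum(n * math.log(n / total) for n in moles if n > 0.0)


equal_mix = ideal_mixing_entropy([1.0, 1.0])   # one mole each of two different gases
single = ideal_mixing_entropy([2.0])           # a single component: nothing to mix
print(equal_mix, single)
```

Mixing one mole each of two different ideal gases gives 2R ln 2, about 11.5 J/K, while a single component gives zero.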

Equivalence of definitions

Proofs of equivalence between the definition of entropy in statistical mechanics (the Gibbs entropy formula $S = -k_B \sum_i p_i \ln p_i$) and in classical thermodynamics ($dS = \frac{\delta Q_{\text{rev}}}{T}$ together with the fundamental thermodynamic relation) are known for the microcanonical ensemble, the canonical ensemble, the grand canonical ensemble, and the isothermal–isobaric ensemble. These proofs are based on the probability density of microstates of the generalized Boltzmann distribution and the identification of the thermodynamic internal energy as the ensemble average $U = \langle E_i \rangle$. Thermodynamic relations are then employed to derive the well-known Gibbs entropy formula. However, the equivalence between the Gibbs entropy formula and the thermodynamic definition of entropy is not a fundamental thermodynamic relation but rather a consequence of the form of the generalized Boltzmann distribution.

Furthermore, it has been shown that the definition of entropy in statistical mechanics is the only entropy that is equivalent to the classical thermodynamic entropy under the following postulates:

- The probability density function is proportional to some function of the ensemble parameters and random variables.

- Thermodynamic state functions are described by ensemble averages of random variables.

- At infinite temperature, all the microstates have the same probability.

Second law of thermodynamics

The second law of thermodynamics requires that, in general, the total entropy of any system does not decrease other than by increasing the entropy of some other system. Hence, in a system isolated from its environment, the entropy of that system tends not to decrease. It follows that heat cannot flow from a colder body to a hotter body without the application of work to the colder body. Secondly, it is impossible for any device operating on a cycle to produce net work from a single temperature reservoir; the production of net work requires flow of heat from a hotter reservoir to a colder reservoir, or a single expanding reservoir undergoing adiabatic cooling, which performs adiabatic work. As a result, there is no possibility of a perpetual motion machine. It follows that a reduction in the increase of entropy in a specified process, such as a chemical reaction, means that it is energetically more efficient.

It follows from the second law of thermodynamics that the entropy of a system that is not isolated may decrease. An air conditioner, for example, may cool the air in a room, thus reducing the entropy of the air of that system. The heat expelled from the room (the system), which the air conditioner transports and discharges to the outside air, always makes a bigger contribution to the entropy of the environment than the decrease of the entropy of the air of that system. Thus, the total of entropy of the room plus the entropy of the environment increases, in agreement with the second law of thermodynamics.
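The air-conditioner bookkeeping can be sketched numerically. The example assumes that heat Qc is removed from room air at temperature T_room, that the device consumes work W, and that Qc + W is discharged to outside air at T_out, with both temperatures held fixed; all names and values are illustrative.

```python
def air_conditioner_entropy(q_removed, work, t_room, t_outside):
    """Entropy changes (J/K) of room air, outside air, and their sum for one operating interval.

    q_removed : heat extracted from the room in joules
    work      : work consumed by the air conditioner in joules
    """
    ds_room = -q_removed / t_room
    ds_outside = (q_removed + work) / t_outside   # energy conservation: Qc + W is expelled
    return ds_room, ds_outside, ds_room + ds_outside


T_ROOM, T_OUT = 295.0, 308.0   # kelvin (assumed)
Q_REMOVED = 1000.0             # joules (assumed)

# Smallest work compatible with the second law for these temperatures.
w_min = Q_REMOVED * (T_OUT / T_ROOM - 1.0)

real = air_conditioner_entropy(Q_REMOVED, 300.0, T_ROOM, T_OUT)
ideal = air_conditioner_entropy(Q_REMOVED, w_min, T_ROOM, T_OUT)
print(real, ideal)
```

With the assumed 300 J of work the total entropy rises; only in the reversible limit, where the work equals `w_min`, does the total change drop to zero.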

In mechanics, the second law in conjunction with the fundamental thermodynamic relation places limits on a system's ability to do useful work. The entropy change of a system at temperature $T$ absorbing an infinitesimal amount of heat $\delta q$ in a reversible way is given by $\delta q / T$. More explicitly, an energy $T_R S$ is not available to do useful work, where $T_R$ is the temperature of the coldest accessible reservoir or heat sink external to the system. For further discussion, see Exergy.

Statistical mechanics demonstrates that entropy is governed by probability, thus allowing for a decrease in disorder even in an isolated system. Although this is possible, such an event has a small probability of occurring, making it unlikely.
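
To give a sense of scale, the following sketch uses a deliberately simple model that is not taken from the text: an ideal gas of `n` independent molecules, each equally likely to be found in either half of a box. It computes the probability that all molecules are in one chosen half at a given instant, together with the corresponding entropy decrease $-n k_\mathrm{B} \ln 2$ relative to the spread-out state.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def all_in_one_half(n):
    """Probability that n independent molecules all sit in one chosen half
    of a box, and the entropy change relative to the spread-out state."""
    probability = 0.5 ** n
    delta_s = -n * K_B * math.log(2)
    return probability, delta_s


for n in (10, 100, 1000):
    p, ds = all_in_one_half(n)
    print(f"n={n}: probability={p:.3e}, entropy change={ds:.3e} J/K")
```

Even for a thousand molecules the probability is vanishingly small, and a macroscopic sample contains vastly more.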

The applicability of the second law of thermodynamics is limited to systems in or sufficiently near an equilibrium state, so that they have a defined entropy. Some inhomogeneous systems out of thermodynamic equilibrium still satisfy the hypothesis of local thermodynamic equilibrium, so that entropy density is locally defined as an intensive quantity. For such systems, a principle of maximum time rate of entropy production may apply. It states that such a system may evolve to a steady state that maximizes its time rate of entropy production. This does not mean that such a system is necessarily always in a condition of maximum time rate of entropy production; it means that it may evolve to such a steady state.

Applications

The fundamental thermodynamic relation

The entropy of a system depends on its internal energy and its external parameters, such as its volume. In the thermodynamic limit, this fact leads to an equation relating the change in the internal energy $U$ to changes in the entropy and the external parameters. This relation is known as the fundamental thermodynamic relation. If external pressure $P$ bears on the volume $V$ as the only external parameter, this relation is:

$$dU = T\,dS - P\,dV$$

Since both internal energy and entropy are monotonic functions of temperature $T$, implying that the internal energy is fixed when one specifies the entropy and the volume, this relation is valid even if the change from one state of thermal equilibrium to another with infinitesimally larger entropy and volume happens in a non-quasistatic way (so during this change the system may be very far out of thermal equilibrium and then the whole-system entropy, pressure, and temperature may not exist).

The fundamental thermodynamic relation implies many thermodynamic identities that are valid in general, independent of the microscopic details of the system. Important examples are the Maxwell relations and the relations between heat capacities.

Entropy in chemical thermodynamics

Thermodynamic entropy is central in chemical thermodynamics, enabling changes to be quantified and the outcome of reactions predicted. The second law of thermodynamics states that entropy in an isolated system – the combination of a subsystem under study and its surroundings – increases during all spontaneous chemical and physical processes. The Clausius equation, $\delta q_{\text{rev}}/T = \Delta S$, introduces the measurement of entropy change, $\Delta S$. Entropy change describes the direction and quantifies the magnitude of simple changes such as heat transfer between systems – always from hotter to cooler spontaneously.

The thermodynamic entropy therefore has the dimension of energy divided by temperature, and the unit joule per kelvin (J/K) in the International System of Units (SI).

Thermodynamic entropy is an extensive property, meaning that it scales with the size or extent of a system. In many processes it is useful to specify the entropy as an intensive property independent of the size, as a specific entropy characteristic of the type of system studied. Specific entropy may be expressed relative to a unit of mass, typically the kilogram (unit: J⋅kg⁻¹⋅K⁻¹). Alternatively, in chemistry, it may be referred to one mole of substance, in which case it is called the molar entropy, with the unit J⋅mol⁻¹⋅K⁻¹.

Thus, when one mole of substance at about 0 K is warmed by its surroundings to 298 K, the sum of the incremental values of $q_{\text{rev}}/T$ constitutes each element's or compound's standard molar entropy, an indicator of the amount of energy stored by a substance at 298 K. Entropy change also measures the mixing of substances as a summation of their relative quantities in the final mixture.
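
As an illustration of the mixing statement, the sketch below evaluates the standard ideal-mixing expression $\Delta S_{\text{mix}} = -R \sum_i n_i \ln x_i$, where $x_i$ is the mole fraction of component $i$. This formula is not given in the text above; it is assumed here for ideal gases or ideal solutions, and the function name is illustrative.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol*K)


def ideal_mixing_entropy(moles):
    """Entropy of mixing in J/K for ideal mixing of the given amounts (mol)."""
    total = sum(moles)
    # Each component contributes -R * n_i * ln(x_i), with x_i = n_i / total.
    return -R * sum(n * math.log(n / total) for n in moles if n > 0)


print(ideal_mixing_entropy([1.0, 1.0]))  # equals 2 * R * ln(2)
```

A single pure component gives zero, since its mole fraction is one.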

Entropy is equally essential in predicting the extent and direction of complex chemical reactions. For such applications, $\Delta S$ must be incorporated in an expression that includes both the system and its surroundings, $\Delta S_{\text{universe}} = \Delta S_{\text{surroundings}} + \Delta S_{\text{system}}$. This expression becomes, via some steps, the Gibbs free energy equation for reactants and products in the system: $\Delta G$ [the Gibbs free energy change of the system] $= \Delta H$ [the enthalpy change] $- T\,\Delta S$ [the entropy change].
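
A minimal sketch of how the Gibbs relation $\Delta G = \Delta H - T\,\Delta S$ is used to judge spontaneity at constant temperature and pressure. The example takes the melting of ice with rounded, approximate textbook values (about 6.01 kJ/mol and 22.0 J/(mol·K)); these numbers and the function name are assumptions made for illustration.

```python
def gibbs_free_energy_change(delta_h, temperature, delta_s):
    """Return dG = dH - T*dS in J/mol (dH in J/mol, T in K, dS in J/(mol*K))."""
    return delta_h - temperature * delta_s


# Approximate, rounded textbook values for melting ice (assumed):
DELTA_H_FUS = 6010.0   # J/mol
DELTA_S_FUS = 22.0     # J/(mol*K)

for t in (263.15, 283.15):
    dg = gibbs_free_energy_change(DELTA_H_FUS, t, DELTA_S_FUS)
    verdict = "spontaneous" if dg < 0 else "not spontaneous"
    print(f"T = {t} K: dG = {dg:.0f} J/mol, melting is {verdict}")
```

Below the melting point the enthalpy term dominates and $\Delta G$ is positive; above it the entropy term dominates and $\Delta G$ is negative.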

World's technological capacity to store and communicate entropic information

A 2011 study in Science estimated the world's technological capacity to store and communicate optimally compressed information, normalized on the most effective compression algorithms available in the year 2007, thereby estimating the entropy of the technologically available sources. The authors estimate that humankind's technological capacity to store information grew from 2.6 (entropically compressed) exabytes in 1986 to 295 (entropically compressed) exabytes in 2007. The world's technological capacity to receive information through one-way broadcast networks grew from 432 exabytes of (entropically compressed) information in 1986 to 1.9 zettabytes in 2007. The world's effective capacity to exchange information through two-way telecommunication networks grew from 281 petabytes of (entropically compressed) information in 1986 to 65 (entropically compressed) exabytes in 2007.

Entropy balance equation for open systems

In chemical engineering, the principles of thermodynamics are commonly applied to "open systems", i.e. those in which heat, work, and mass flow across the system boundary. Flows of both heat ($\dot{Q}$) and work, i.e. $\dot{W}_\mathrm{S}$ (shaft work) and $P(dV/dt)$ (pressure-volume work), across the system boundaries in general cause changes in the entropy of the system. Transfer as heat entails entropy transfer $\dot{Q}/T$, where $T$ is the absolute thermodynamic temperature of the system at the point of the heat flow. If there are mass flows across the system boundaries, they also influence the total entropy of the system. This account, in terms of heat and work, is valid only for cases in which the work and heat transfers are by paths physically distinct from the paths of entry and exit of matter from the system.

To derive a generalized entropy balance equation, we start with the general balance equation for the change in any extensive quantity $\theta$ in a thermodynamic system, a quantity that may be either conserved, such as energy, or non-conserved, such as entropy. The basic generic balance expression states that $d\theta/dt$, i.e. the rate of change of $\theta$ in the system, equals the rate at which $\theta$ enters the system at the boundaries, minus the rate at which $\theta$ leaves the system across the system boundaries, plus the rate at which $\theta$ is generated within the system. For an open thermodynamic system in which heat and work are transferred by paths separate from the paths for transfer of matter, using this generic balance equation, with respect to the rate of change with time $t$ of the extensive quantity entropy $S$, the entropy balance equation is:

$$\frac{dS}{dt} = \sum_{k=1}^{K} \dot{M}_k \hat{S}_k + \frac{\dot{Q}}{T} + \dot{S}_{\text{gen}}$$

where

- $\sum_{k=1}^{K} \dot{M}_k \hat{S}_k$ is the net rate of entropy flow due to the flows of mass $\dot{M}_k$ into and out of the system (where $\hat{S}$ is entropy per unit mass).

- $\dot{Q}/T$ is the rate of entropy flow due to the flow of heat across the system boundary.

- $\dot{S}_{\text{gen}}$ is the rate of entropy production within the system. This entropy production arises from processes within the system, including chemical reactions, internal matter diffusion, internal heat transfer, and frictional effects such as viscosity occurring within the system from mechanical work transfer to or from the system.

If there are multiple heat flows, the term $\dot{Q}/T$ is replaced by $\sum_j \dot{Q}_j/T_j$, where $\dot{Q}_j$ is the heat flow and $T_j$ is the temperature at the $j$th heat flow port into the system.

Note that the nomenclature "entropy balance" is misleading and often deemed inappropriate because entropy is not a conserved quantity. In other words, the entropy production term $\dot{S}_{\text{gen}}$ is never a known quantity but always a derived one based on the expression above. Therefore, the open system version of the second law is more appropriately described as the "entropy generation equation" since it specifies that $\dot{S}_{\text{gen}} \geq 0$, with zero for reversible processes or greater than zero for irreversible ones.
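
Because the entropy production term is always derived rather than measured, a natural use of the balance is to solve it for that term. The sketch below does this for the general form with several mass and heat ports, taking flows into the system as positive. The function name, argument layout, and the wall example are illustrative assumptions.

```python
def entropy_generation_rate(ds_dt, mass_flows, heat_flows):
    """Solve the entropy balance for the generation term, in W/K.

    ds_dt      -- rate of change of the system's entropy (W/K)
    mass_flows -- iterable of (mass_rate, specific_entropy) pairs, in kg/s
                  and J/(kg*K); inflow positive, outflow negative
    heat_flows -- iterable of (heat_rate, temperature) pairs, in W and K;
                  heat into the system positive
    """
    mass_term = sum(m * s for m, s in mass_flows)
    heat_term = sum(q / t for q, t in heat_flows)
    return ds_dt - mass_term - heat_term


# Steady conduction through a wall: 1000 W enters at 400 K and leaves at 300 K.
s_gen = entropy_generation_rate(0.0, [], [(1000.0, 400.0), (-1000.0, 300.0)])
print(f"entropy generation: {s_gen:.4f} W/K")
```

In this steady-state example the wall's own entropy does not change, yet the result is positive, reflecting the irreversibility of heat conduction across a finite temperature difference.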

Entropy change formulas for simple processes

For certain simple transformations in systems of constant composition, the entropy changes are given by simple formulas.

Isothermal expansion or compression of an ideal gas

For the expansion (or compression) of an ideal gas from an initial volume $V_0$ and pressure $P_0$ to a final volume $V$ and pressure $P$ at any constant temperature, the change in entropy is given by:

$$\Delta S = nR \ln\frac{V}{V_0} = -nR \ln\frac{P}{P_0}$$

Here $n$ is the amount of gas (in moles) and $R$ is the ideal gas constant. These equations also apply for expansion into a finite vacuum or a throttling process, where the temperature, internal energy and enthalpy for an ideal gas remain constant.
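
A short numerical sketch of the isothermal result $\Delta S = nR\ln(V/V_0)$ for an ideal gas. The function name is illustrative, and the value of the gas constant is the standard SI one.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol*K)


def isothermal_entropy_change(n_moles, v_initial, v_final):
    """Entropy change in J/K of an ideal gas going from v_initial to v_final
    at constant temperature (volumes in any common unit)."""
    return n_moles * R * math.log(v_final / v_initial)


print(isothermal_entropy_change(1.0, 1.0, 2.0))   # doubling the volume: R*ln(2) > 0
print(isothermal_entropy_change(1.0, 2.0, 1.0))   # halving it: same size, negative
```

Only the volume ratio matters, so the result is independent of the temperature at which the expansion takes place.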

Cooling and heating

For pure heating or cooling of any system (gas, liquid or solid) at constant pressure from an initial temperature $T_0$ to a final temperature $T$, the entropy change is

$$\Delta S = n C_P \ln\frac{T}{T_0}$$

provided that the constant-pressure molar heat capacity (or specific heat) $C_P$ is constant and that no phase transition occurs in this temperature interval.

Similarly at constant volume, the entropy change is

$$\Delta S = n C_V \ln\frac{T}{T_0}$$

where the constant-volume molar heat capacity $C_V$ is constant and there is no phase change.

At low temperatures near absolute zero, heat capacities of solids quickly drop off to near zero, so the assumption of constant heat capacity does not apply.

Since entropy is a state function, the entropy change of any process in which temperature and volume both vary is the same as for a path divided into two steps – heating at constant volume and expansion at constant temperature. For an ideal gas, the total entropy change is

$$\Delta S = n C_V \ln\frac{T}{T_0} + nR \ln\frac{V}{V_0}$$

Similarly if the temperature and pressure of an ideal gas both vary,

$$\Delta S = n C_P \ln\frac{T}{T_0} - nR \ln\frac{P}{P_0}$$
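
Because entropy is a state function, the temperature-volume and temperature-pressure expressions for an ideal gas must give the same answer for the same pair of end states. The sketch below checks this numerically, assuming a monatomic ideal gas ($C_V = 3R/2$, $C_P = C_V + R$) and illustrative end states; the function names are hypothetical.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol*K)


def delta_s_tv(n, c_v, t0, t, v0, v):
    """Entropy change from (t0, v0) to (t, v): heating at constant volume,
    then isothermal expansion."""
    return n * c_v * math.log(t / t0) + n * R * math.log(v / v0)


def delta_s_tp(n, c_p, t0, t, p0, p):
    """Entropy change from (t0, p0) to (t, p): heating at constant pressure,
    then isothermal pressure change."""
    return n * c_p * math.log(t / t0) - n * R * math.log(p / p0)


# Assumed example: 1 mol of a monatomic ideal gas.
n, c_v = 1.0, 1.5 * R
t0, v0 = 300.0, 0.010                      # K, m^3
t, v = 450.0, 0.025
p0, p = n * R * t0 / v0, n * R * t / v     # pressures from the ideal gas law

print(delta_s_tv(n, c_v, t0, t, v0, v))
print(delta_s_tp(n, c_v + R, t0, t, p0, p))
```

The two printed values agree up to rounding error, as expected for two paths between the same states.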

Phase transitions

Reversible phase transitions occur at constant temperature and pressure. The reversible heat is the enthalpy change for the transition, and the entropy change is the enthalpy change divided by the thermodynamic temperature. For fusion (melting) of a solid to a liquid at the melting point $T_\text{m}$, the entropy of fusion is

$$\Delta S_{\text{fus}} = \frac{\Delta H_{\text{fus}}}{T_\text{m}}$$

Similarly, for vaporization of a liquid to a gas at the boiling point $T_\text{b}$, the entropy of vaporization is

$$\Delta S_{\text{vap}} = \frac{\Delta H_{\text{vap}}}{T_\text{b}}$$
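
As a worked example of dividing the transition enthalpy by the transition temperature, the sketch below uses rounded, approximate textbook values for water at atmospheric pressure (about 6.01 kJ/mol for fusion at 273.15 K and about 40.65 kJ/mol for vaporization at 373.15 K). These inputs are assumptions for illustration.

```python
def transition_entropy(delta_h, temperature):
    """Molar entropy of a reversible phase transition, in J/(mol*K),
    from the molar enthalpy change (J/mol) and transition temperature (K)."""
    return delta_h / temperature


# Approximate, rounded textbook values for water at 1 atm (assumed):
print(transition_entropy(6010.0, 273.15))    # fusion, about 22 J/(mol*K)
print(transition_entropy(40650.0, 373.15))   # vaporization, about 109 J/(mol*K)
```

The entropy of vaporization is several times larger than the entropy of fusion, consistent with the much larger enthalpy change on vaporization.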

Approaches to understanding entropy

Because entropy is a fundamental aspect of thermodynamics and physics, several different approaches to it beyond those of Clausius and Boltzmann are valid.

Standard textbook definitions

The following is a list of additional definitions of entropy from a collection of textbooks:

- a measure of energy dispersal at a specific temperature.

- a measure of disorder in the universe or of the availability of the energy in a system to do work.

- a measure of a system's thermal energy per unit temperature that is unavailable for doing useful work.

In Boltzmann's analysis in terms of constituent particles, entropy is a measure of the number of possible microscopic states (or microstates) of a system in thermodynamic equilibrium.

Order and disorder

Entropy is often loosely associated with the amount of order or disorder, or of chaos, in a thermodynamic system. The traditional qualitative description of entropy is that it refers to changes in the status quo of the system and is a measure of "molecular disorder" and the amount of wasted energy in a dynamical energy transformation from one state or form to another. In this direction, several recent authors have derived exact entropy formulas to account for and measure disorder and order in atomic and molecular assemblies. One of the simpler entropy order/disorder formulas is that derived in 1984 by thermodynamic physicist Peter Landsberg, based on a combination of thermodynamics and information theory arguments. He argues that when constraints operate on a system, such that it is prevented from entering one or more of its possible or permitted states, as contrasted with its forbidden states, the measure of the total amount of "disorder" in the system is given by:

$$\text{Disorder} = \frac{C_\mathrm{D}}{C_\mathrm{I}}$$

Similarly, the total amount of "order" in the system is given by:

$$\text{Order} = 1 - \frac{C_\mathrm{O}}{C_\mathrm{I}}$$

Here $C_\mathrm{D}$ is the "disorder" capacity of the system, which is the entropy of the parts contained in the permitted ensemble, $C_\mathrm{I}$ is the "information" capacity of the system, an expression similar to Shannon's channel capacity, and $C_\mathrm{O}$ is the "order" capacity of the system.

Energy dispersal

Ambiguities in the terms disorder and chaos, which usually have meanings directly opposed to equilibrium, contribute to widespread confusion and hamper comprehension of entropy for most students. As the second law of thermodynamics shows, in an isolated system internal portions at different temperatures tend to adjust to a single uniform temperature and thus produce equilibrium. A recently developed educational approach avoids ambiguous terms and describes such spreading out of energy as dispersal, which leads to loss of the differentials required for work even though the total energy remains constant in accordance with the first law of thermodynamics (compare discussion in next section). Physical chemist Peter Atkins, in his textbook Physical Chemistry, introduces entropy with the statement that "spontaneous changes are always accompanied by a dispersal of energy or matter and often both".

Relating entropy to energy usefulness

Following on from the above, it is possible (in a thermal context) to regard lower entropy as an indicator or measure of the effectiveness or usefulness of a particular quantity of energy. This is because energy supplied at a higher temperature (i.e. with low entropy) tends to be more useful than the same amount of energy available at a lower temperature. Mixing a hot parcel of a fluid with a cold one produces a parcel of intermediate temperature, in which the overall increase in entropy represents a "loss" that can never be replaced.
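
The mixing example can be made quantitative under simple assumptions that are not stated in the text: two parcels with the same constant heat capacity, no heat exchange with the surroundings, and a final temperature equal to the mean of the initial ones. The function name and the numbers (roughly one kilogram of water per parcel) are illustrative.

```python
import math


def mixing_entropy_change(heat_capacity, t_hot, t_cold):
    """Final temperature (K) and total entropy change (J/K) when two parcels
    with the same constant heat capacity (J/K) mix without heat loss."""
    t_final = 0.5 * (t_hot + t_cold)
    ds_hot = heat_capacity * math.log(t_final / t_hot)     # negative
    ds_cold = heat_capacity * math.log(t_final / t_cold)   # positive, larger
    return t_final, ds_hot + ds_cold


t_final, ds = mixing_entropy_change(4184.0, 353.15, 293.15)
print(f"final temperature: {t_final:.2f} K, entropy change: {ds:.2f} J/K")
```

The total is positive for any two different starting temperatures, which is the "loss" referred to above: the energy is still there, but the temperature difference that could have driven a heat engine is gone.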

Thus, the fact that the entropy of the universe is steadily increasing means that its total energy is becoming less useful. Eventually, this leads to the "heat death of the universe".

Entropy and adiabatic accessibility

A definition of entropy based entirely on the relation of adiabatic accessibility between equilibrium states was given by E. H. Lieb and J. Yngvason in 1999. This approach has several predecessors, including the pioneering work of Constantin Carathéodory from 1909 and the monograph by R. Giles. In the setting of Lieb and Yngvason, one starts by picking, for a unit amount of the substance under consideration, two reference states $X_0$ and $X_1$ such that the latter is adiabatically accessible from the former but not vice versa. Defining the entropies of the reference states to be 0 and 1 respectively, the entropy of a state $X$ is defined as the largest number $\lambda$ such that $X$ is adiabatically accessible from a composite state consisting of an amount $\lambda$ in the state $X_1$ and a complementary amount, $(1 - \lambda)$, in the state $X_0$. A simple but important result within this setting is that entropy is uniquely determined, apart from a choice of unit and an additive constant for each chemical element, by the following properties: it is monotonic with respect to the relation of adiabatic accessibility, additive on composite systems, and extensive under scaling.

Entropy in quantum mechanics

In quantum statistical mechanics, the concept of entropy was developed by John von Neumann and is generally referred to as "von Neumann entropy",

$$S = -k_\mathrm{B} \operatorname{Tr}(\rho \ln \rho)$$

where ρ is the density matrix, Tr is the trace operator, and $k_\mathrm{B}$ is the Boltzmann constant.

This upholds the correspondence principle, because in the classical limit, when the phases between the basis states used for the classical probabilities are purely random, this expression is equivalent to the familiar classical definition of entropy,

$$S = -k_\mathrm{B} \sum_i p_i \ln p_i$$

i.e. in such a basis the density matrix is diagonal.
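
A minimal sketch for the smallest non-trivial case, a single two-level system, where the eigenvalues of the 2×2 density matrix follow from its trace and determinant. The entropy is returned in units of the Boltzmann constant. The function name is illustrative, and the input is assumed to be a valid density matrix (Hermitian, unit trace, non-negative eigenvalues).

```python
import math


def qubit_von_neumann_entropy(rho):
    """Von Neumann entropy, in units of k_B, of a 2x2 density matrix.

    rho is a nested list [[a, b], [conj(b), d]], assumed Hermitian with unit
    trace. The eigenvalues follow from the trace and the determinant.
    """
    a, b, d = rho[0][0].real, rho[0][1], rho[1][1].real
    trace = a + d
    det = a * d - abs(b) ** 2
    root = math.sqrt(max(trace * trace - 4.0 * det, 0.0))
    eigenvalues = [(trace + root) / 2.0, (trace - root) / 2.0]
    # By convention 0 * ln(0) = 0, so vanishing eigenvalues are skipped.
    return sum(-p * math.log(p) for p in eigenvalues if p > 1e-15)


print(qubit_von_neumann_entropy([[0.5, 0.0], [0.0, 0.5]]))   # maximally mixed: ln 2
print(qubit_von_neumann_entropy([[0.5, 0.5], [0.5, 0.5]]))   # pure state: zero entropy
```

Both matrices have the same diagonal entries, but only the diagonal (fully dephased) one reproduces the classical value $-\sum_i p_i \ln p_i$; the pure superposition has zero entropy.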

Von Neumann established a rigorous mathematical framework for quantum mechanics with his work Mathematische Grundlagen der Quantenmechanik. He provided in this work a theory of measurement, where the usual notion of wave function collapse is described as an irreversible process (the so-called von Neumann or projective measurement). Using this concept, in conjunction with the density matrix he extended the classical concept of entropy into the quantum domain.

Information theory

I thought of calling it "information", but the word was overly used, so I decided to call it "uncertainty". [...] Von Neumann told me, "You should call it entropy, for two reasons. In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, nobody knows what entropy really is, so in a debate you will always have the advantage."

Conversation between Claude Shannon and John von Neumann regarding what name to give to the attenuation in phone-line signals

When viewed in terms of information theory, the entropy state function is the amount of information in the system that is needed to fully specify the microstate of the system. Entropy is the measure of the amount of missing information before reception. Often called Shannon entropy, it was originally devised by Claude Shannon in 1948 to study the size of information of a transmitted message. The definition of information entropy is expressed in terms of a discrete set of probabilities $p_i$ so that

$$H(X) = -\sum_{i=1}^{n} p_i \log p_i$$

where the base of the logarithm determines the unit (base 2 gives bits, base $e$ gives nats).

In the case of transmitted messages, these probabilities were the probabilities that a particular message was actually transmitted, and the entropy of the message system was a measure of the average size of information of a message. For the case of equal probabilities (i.e. each message is equally probable), the Shannon entropy (in bits) is just the number of binary questions needed to determine the content of the message.
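
The equal-probability case can be checked directly. The sketch below computes the Shannon entropy $-\sum_i p_i \log_2 p_i$ in bits; the function name is illustrative.

```python
import math


def shannon_entropy_bits(probabilities):
    """Shannon entropy in bits of a discrete probability distribution."""
    # Terms with p = 0 contribute nothing, by the convention 0 * log(0) = 0.
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


print(shannon_entropy_bits([1 / 8] * 8))          # 8 equally likely messages: 3.0
print(shannon_entropy_bits([0.5, 0.25, 0.25]))    # 1.5
```

Eight equally likely messages need three yes/no questions to identify one of them, matching the 3 bits computed here. A non-uniform distribution over the same kind of alphabet has lower entropy.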

Most researchers consider information entropy and thermodynamic entropy directly linked to the same concept, while others argue that they are distinct. Both expressions are mathematically similar. If $W$ is the number of microstates that can yield a given macrostate, and each microstate has the same a priori probability, then that probability is $p = 1/W$. The Shannon entropy (in nats) is:

$$H = -\sum_{i=1}^{W} p_i \ln p_i = \ln W$$

and if entropy is measured in units of $k$ per nat, then the entropy is given by:

$$H = k \ln W$$

which is the Boltzmann entropy formula, where $k$ is Boltzmann's constant, which may be interpreted as the thermodynamic entropy per nat. Some authors argue for dropping the word entropy for the function of information theory and using Shannon's other term, "uncertainty", instead.
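
The reduction of the Shannon sum to $\ln W$ for equiprobable microstates, and its conversion to thermodynamic units, can be verified numerically. The function names below are illustrative, and `w = 1000` is an arbitrary small example, far below any realistic microstate count.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def shannon_entropy_nats(probabilities):
    """Shannon entropy in nats of a discrete probability distribution."""
    return sum(-p * math.log(p) for p in probabilities if p > 0)


def boltzmann_entropy(w):
    """Thermodynamic entropy k_B * ln(W) in J/K for W equiprobable microstates."""
    return K_B * math.log(w)


w = 1000
h = shannon_entropy_nats([1.0 / w] * w)
print(h, math.log(w))                 # agree up to rounding error
print(K_B * h, boltzmann_entropy(w))  # thermodynamic entropy in J/K
```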

Measurement

The entropy of a substance can be measured, although in an indirect way. The measurement, known as entropymetry, is done on a closed system (with particle number $N$ and volume $V$ being constants) and uses the definition of temperature in terms of entropy, while limiting energy exchange to heat ($dU \rightarrow dQ$).

$$T := \left(\frac{\partial U}{\partial S}\right)_{V,N} \;\Rightarrow\; \cdots \;\Rightarrow\; dS = \frac{dQ}{T}$$

The resulting relation describes how entropy changes by $dS$ when a small amount of energy $dQ$ is introduced into the system at a certain temperature $T$.

The process of measurement goes as follows. First, a sample of the substance is cooled as close to absolute zero as possible. At such temperatures, the entropy approaches zero – due to the definition of temperature. Then, small amounts of heat are introduced into the sample and the change in temperature is recorded, until the temperature reaches a desired value (usually 25 °C). The obtained data allows the user to integrate the equation above, yielding the absolute value of entropy of the substance at the final temperature. This value of entropy is called calorimetric entropy.
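
The integration step can be sketched as follows, assuming the recorded heat increments and temperature changes have already been converted into heat capacities $C(T)$, so that $dS = C(T)\,dT/T$. The data below are synthetic, generated from an assumed $C = aT^3$ law so that the numerical result can be compared with the exact integral. They are not a real measurement, and the function name is illustrative.

```python
def calorimetric_entropy(temperatures, heat_capacities):
    """Integrate dS = C(T) dT / T with the trapezoidal rule.

    temperatures    -- increasing list of temperatures in K, starting near 0 K
    heat_capacities -- heat capacity in J/K measured at each temperature
    Returns the entropy gained between the first and last temperature, in J/K.
    """
    entropy = 0.0
    for i in range(1, len(temperatures)):
        t_lo, t_hi = temperatures[i - 1], temperatures[i]
        f_lo = heat_capacities[i - 1] / t_lo
        f_hi = heat_capacities[i] / t_hi
        entropy += 0.5 * (f_lo + f_hi) * (t_hi - t_lo)
    return entropy


# Synthetic data (not a real measurement): C = a*T^3, for which the exact
# result between T1 and T2 is a*(T2^3 - T1^3)/3.
a = 1.0e-4
temps = [1.0 + 0.01 * i for i in range(2901)]   # 1 K to 30 K
caps = [a * t ** 3 for t in temps]
print(calorimetric_entropy(temps, caps), a * (30.0 ** 3 - 1.0 ** 3) / 3.0)
```

In practice the contribution below the lowest measured temperature has to be estimated separately, since the sample cannot be cooled all the way to absolute zero.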

Interdisciplinary applications

Although the concept of entropy was originally a thermodynamic concept, it has been adapted in other fields of study, including information theory, psychodynamics, thermoeconomics/ecological economics, and evolution.

Philosophy and theoretical physics

Entropy is the only quantity in the physical sciences that seems to imply a particular direction of progress, sometimes called an arrow of time. As time progresses, the second law of thermodynamics states that the entropy of an isolated system never decreases in large systems over significant periods of time. Hence, from this perspective, entropy measurement is thought of as a clock in these conditions.

Biology

Chiavazzo et al. proposed that where cave spiders choose to lay their eggs can be explained through entropy minimization.

Entropy has proven useful in the analysis of base pair sequences in DNA. Many entropy-based measures have been shown to distinguish between different structural regions of the genome and to differentiate between coding and non-coding regions of DNA. They can also be applied to the reconstruction of evolutionary trees by determining the evolutionary distance between different species.

Cosmology

Assuming that a finite universe is an isolated system, the second law of thermodynamics states that its total entropy is continually increasing. It has been speculated, since the 19th century, that the universe is fated to a heat death in which all the energy ends up as a homogeneous distribution of thermal energy so that no more work can be extracted from any source.

If the universe can be considered to have generally increasing entropy, then – as Roger Penrose has pointed out – gravity plays an important role in the increase because gravity causes dispersed matter to accumulate into stars, which collapse eventually into black holes. The entropy of a black hole is proportional to the surface area of the black hole's event horizon. Jacob Bekenstein and Stephen Hawking have shown that black holes have the maximum possible entropy of any object of equal size. This makes them likely end points of all entropy-increasing processes, if they are totally effective matter and energy traps. However, the escape of energy from black holes might be possible due to quantum activity (see Hawking radiation).

The role of entropy in cosmology has remained a controversial subject since the time of Ludwig Boltzmann. Recent work has cast some doubt on the heat death hypothesis and the applicability of any simple thermodynamic model to the universe in general. Although entropy does increase in the model of an expanding universe, the maximum possible entropy rises much more rapidly, moving the universe further from the heat death with time, not closer. This results in an "entropy gap" pushing the system further away from the posited heat death equilibrium. Other complicating factors, such as the energy density of the vacuum and macroscopic quantum effects, are difficult to reconcile with thermodynamical models, making any predictions of large-scale thermodynamics extremely difficult.

Current theories suggest that the entropy gap was originally opened up by the early rapid exponential expansion of the universe.

Economics

Romanian American economist Nicholas Georgescu-Roegen, a progenitor in economics and a paradigm founder of ecological economics, made extensive use of the entropy concept in his magnum opus, The Entropy Law and the Economic Process. Due to Georgescu-Roegen's work, the laws of thermodynamics now form an integral part of the ecological economics school. Although his work was blemished somewhat by mistakes, a full chapter on the economics of Georgescu-Roegen has been approvingly included in one elementary physics textbook on the historical development of thermodynamics.

In economics, Georgescu-Roegen's work has generated the term 'entropy pessimism'. Since the 1990s, leading ecological economist and steady-state theorist Herman Daly – a student of Georgescu-Roegen – has been the economics profession's most influential proponent of the entropy pessimism position.