The holographic principle is a property of string theories and a supposed property of quantum gravity that states that the description of a volume of space can be thought of as encoded on a boundary to the region, preferably a light-like boundary such as a gravitational horizon. First proposed by Gerard 't Hooft, it was given a precise string-theory interpretation by Leonard Susskind,[1] who combined his ideas with previous ones of 't Hooft and Charles Thorn.[1][2] As pointed out by Raphael Bousso,[3] Thorn observed in 1978 that string theory admits a lower-dimensional description in which gravity emerges from it in what would now be called a holographic way.

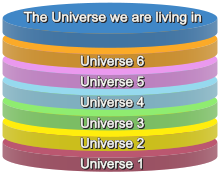

In a larger sense, the theory suggests that the entire universe can be seen as a two-dimensional information structure "painted" on the cosmological horizon, such that the three dimensions we observe are an effective description only at macroscopic scales and at low energies. Cosmological holography has not been made mathematically precise, partly because the particle horizon has a non-zero area and grows with time.[4][5]

The holographic principle was inspired by black hole thermodynamics, which conjectures that the maximal entropy in any region scales with the radius squared, and not cubed as might be expected. In the case of a black hole, the insight was that the informational content of all the objects that have fallen into the hole might be entirely contained in surface fluctuations of the event horizon. The holographic principle resolves the black hole information paradox within the framework of string theory.[6] However, there exist classical solutions to the Einstein equations that allow values of the entropy larger than those allowed by an area law, hence in principle larger than those of a black hole. These are the so-called "Wheeler's bags of gold". The existence of such solutions conflicts with the holographic interpretation, and their effects in a quantum theory of gravity including the holographic principle are not yet fully understood.[7]

Black hole entropy

An object with entropy is microscopically random, like a hot gas. A known configuration of classical fields has zero entropy: there is nothing random about electric and magnetic fields, or gravitational waves. Since black holes are exact solutions of Einstein's equations, they were thought not to have any entropy either.

But Jacob Bekenstein noted that this leads to a violation of the second law of thermodynamics. If one throws a hot gas with entropy into a black hole, once it crosses the event horizon, the entropy would disappear. The random properties of the gas would no longer be seen once the black hole had absorbed the gas and settled down. One way of salvaging the second law is if black holes are in fact random objects, with an enormous entropy whose increase is greater than the entropy carried by the gas.

Bekenstein assumed that black holes are maximum-entropy objects, that is, that they have more entropy than anything else in the same volume. In a sphere of radius R, the entropy in a relativistic gas increases as the energy increases. The only known limit is gravitational; when there is too much energy the gas collapses into a black hole. Bekenstein used this to put an upper bound on the entropy in a region of space, and the bound was proportional to the area of the region. He concluded that the black hole entropy is directly proportional to the area of the event horizon.[8]

Stephen Hawking had shown earlier that the total horizon area of a collection of black holes always increases with time. The horizon is a boundary defined by light-like geodesics; it is those light rays that are just barely unable to escape. If neighboring geodesics start moving toward each other they eventually collide, at which point their extension is inside the black hole. So the geodesics are always moving apart, and the number of geodesics which generate the boundary, the area of the horizon, always increases. Hawking's result was called the second law of black hole thermodynamics, by analogy with the law of entropy increase, but at first, he did not take the analogy too seriously.

Hawking knew that if the horizon area were an actual entropy, black holes would have to radiate. When heat is added to a thermal system, the change in entropy is the increase in mass-energy divided by temperature:

$$\mathrm{d}S = \frac{\mathrm{d}M\,c^{2}}{T}$$

If black holes have a finite entropy, they should also have a finite temperature. In particular, they would come to equilibrium with a thermal gas of photons. This means that black holes would not only absorb photons, but they would also have to emit them in the right amount to maintain detailed balance.

Time-independent solutions to field equations do not emit radiation, because a time-independent background conserves energy. Based on this principle, Hawking set out to show that black holes do not radiate. But, to his surprise, a careful analysis convinced him that they do, and in just the right way to come to equilibrium with a gas at a finite temperature. Hawking's calculation fixed the constant of proportionality at 1/4; the entropy of a black hole is one quarter its horizon area in Planck units.[9]
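The one-quarter-area relation and the thermal relation between entropy, energy, and temperature can be checked against each other numerically. The following is a minimal sketch, assuming a non-rotating, uncharged (Schwarzschild) black hole and the standard expression for the Hawking temperature, T = ħc³/(8πGMk_B), which the text above does not state explicitly. The function names, the rounded constants, and the solar-mass input are illustrative choices.

```python
import math

# Rounded SI constants; adequate for an order-of-magnitude sketch.
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8        # speed of light, m/s
HBAR = 1.054571817e-34  # reduced Planck constant, J s
K_B = 1.380649e-23      # Boltzmann constant, J/K
SOLAR_MASS = 1.989e30   # kg (illustrative input, not from the article)


def horizon_area(mass_kg):
    """Horizon area A = 4*pi*r_s^2, with Schwarzschild radius r_s = 2GM/c^2."""
    r_s = 2.0 * G * mass_kg / C**2
    return 4.0 * math.pi * r_s**2


def bh_entropy(mass_kg):
    """Entropy S = k_B * A / (4 * l_P^2) in J/K, where l_P^2 = hbar*G/c^3."""
    planck_length_sq = HBAR * G / C**3
    return K_B * horizon_area(mass_kg) / (4.0 * planck_length_sq)


def hawking_temperature(mass_kg):
    """Hawking temperature T = hbar*c^3 / (8*pi*G*M*k_B), in kelvin."""
    return HBAR * C**3 / (8.0 * math.pi * G * mass_kg * K_B)


def first_law_ratio(mass_kg, rel_step=1e-6):
    """Return (dS/dE) * T via a central difference; close to 1 if dS = dE/T."""
    dm = mass_kg * rel_step
    ds = bh_entropy(mass_kg + dm) - bh_entropy(mass_kg - dm)
    de = 2.0 * dm * C**2  # energy added between the two evaluation points
    return (ds / de) * hawking_temperature(mass_kg)


if __name__ == "__main__":
    m = SOLAR_MASS
    print(f"S / k_B     = {bh_entropy(m) / K_B:.3e}")
    print(f"T           = {hawking_temperature(m):.3e} K")
    print(f"(dS/dE) * T = {first_law_ratio(m):.6f}")
```

Dividing the entropy by `K_B` gives the dimensionless value, which is the horizon area divided by four Planck areas.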

The entropy is proportional to the logarithm of the number of microstates, the ways a system can be configured microscopically while leaving the macroscopic description unchanged. Black hole entropy is deeply puzzling: it says that the logarithm of the number of states of a black hole is proportional to the area of the horizon, not the volume in the interior.[10]
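Written out, the statement can be sketched as follows. The symbols are notation introduced here rather than taken from the text: k_B is Boltzmann's constant, Ω the number of microstates, A the horizon area, and ℓ_P the Planck length.

```latex
S = k_B \ln \Omega, \qquad
S_{\mathrm{BH}} = \frac{k_B\,A}{4\,\ell_P^{2}}, \qquad
\ell_P^{2} = \frac{G\hbar}{c^{3}}
\quad\Longrightarrow\quad
\ln \Omega = \frac{A}{4\,\ell_P^{2}} .
```

For a non-rotating, uncharged black hole of horizon radius r_s, the area is A = 4πr_s², so ln Ω grows as r_s² rather than r_s³.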

Later, Raphael Bousso came up with a covariant version of the bound based upon null sheets.

Black hole information paradox

Hawking's calculation suggested that the radiation which black holes emit is not related in any way to the matter that they absorb. The outgoing light rays start exactly at the edge of the black hole and spend a long time near the horizon, while the infalling matter only reaches the horizon much later. The infalling and outgoing mass/energy only interact when they cross. It is implausible that the outgoing state would be completely determined by some tiny residual scattering.

Hawking interpreted this to mean that when black holes absorb some photons in a pure state described by a wave function, they re-emit new photons in a thermal mixed state described by a density matrix. This would mean that quantum mechanics would have to be modified, because in quantum mechanics, states which are superpositions with probability amplitudes never become states which are probabilistic mixtures of different possibilities.[note 1]

Troubled by this paradox, Gerard 't Hooft analyzed the emission of Hawking radiation in more detail. He noted that when Hawking radiation escapes, there is a way in which incoming particles can modify the outgoing particles. Their gravitational field would deform the horizon of the black hole, and the deformed horizon could produce different outgoing particles than the undeformed horizon. When a particle falls into a black hole, it is boosted relative to an outside observer, and its gravitational field assumes a universal form. 't Hooft showed that this field makes a logarithmic tent-pole shaped bump on the horizon of a black hole, and like a shadow, the bump is an alternate description of the particle's location and mass. For a four-dimensional spherical uncharged black hole, the deformation of the horizon is similar to the type of deformation which describes the emission and absorption of particles on a string-theory world sheet. Since the deformations on the surface are the only imprint of the incoming particle, and since these deformations would have to completely determine the outgoing particles, 't Hooft believed that the correct description of the black hole would be by some form of string theory.

This idea was made more precise by Leonard Susskind, who had also been developing holography, largely independently. Susskind argued that the oscillation of the horizon of a black hole is a complete description[note 2] of both the infalling and outgoing matter, because the world-sheet theory of string theory was just such a holographic description. While short strings have zero entropy, he could identify long highly excited string states with ordinary black holes. This was a deep advance because it revealed that strings have a classical interpretation in terms of black holes.

This work showed that the black hole information paradox is resolved when quantum gravity is described in an unusual string-theoretic way, assuming the string-theoretical description is complete, unambiguous and non-redundant.[12] The space-time in quantum gravity would emerge as an effective description of the theory of oscillations of a lower-dimensional black-hole horizon. This suggests that any black hole with appropriate properties, not just strings, would serve as a basis for a description of string theory.

In 1995, Susskind, along with collaborators Tom Banks, Willy Fischler, and Stephen Shenker, presented a formulation of the new M-theory using a holographic description in terms of charged point black holes, the D0 branes of type IIA string theory. The Matrix theory they proposed was first suggested as a description of two branes in 11-dimensional supergravity by Bernard de Wit, Jens Hoppe, and Hermann Nicolai. The later authors reinterpreted the same matrix models as a description of the dynamics of point black holes in particular limits.

Holography allowed them to conclude that the dynamics of these black holes give a complete non-perturbative formulation of M-theory. In 1997, Juan Maldacena gave the first holographic descriptions of a higher-dimensional object, the 3+1-dimensional type IIB membrane, which resolved a long-standing problem of finding a string description of a gauge theory. These developments simultaneously explained how string theory is related to some forms of supersymmetric quantum field theories.

Limit on information density

Entropy, if considered as information (see information entropy), is measured in bits. The total quantity of bits is related to the total degrees of freedom of matter/energy.

For a given energy in a given volume, there is an upper limit to the density of information (the Bekenstein bound) about the whereabouts of all the particles which compose matter in that volume, suggesting that matter itself cannot be subdivided infinitely many times and there must be an ultimate level of fundamental particles. As the degrees of freedom of a particle are the product of all the degrees of freedom of its sub-particles, were a particle to have infinite subdivisions into lower-level particles, then the degrees of freedom of the original particle must be infinite, violating the maximal limit of entropy density. The holographic principle thus implies that the subdivisions must stop at some level, and that the fundamental particle is a bit (1 or 0) of information.
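To give a sense of scale for the upper limit mentioned above, the sketch below evaluates the Bekenstein bound in its commonly quoted form, I ≤ 2πRE/(ħc ln 2) bits for energy E enclosed in a sphere of radius R. That formula is not written out in the text, and the function name and the 1 kg, 1 m example are hypothetical choices made for illustration.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 2.99792458e8        # speed of light, m/s


def bekenstein_bound_bits(radius_m, energy_j):
    """Upper limit, in bits, on the information in a sphere of radius R
    that contains total energy E: 2*pi*R*E / (hbar * c * ln 2)."""
    if radius_m < 0 or energy_j < 0:
        raise ValueError("radius and energy must be non-negative")
    return 2.0 * math.pi * radius_m * energy_j / (HBAR * C * math.log(2.0))


if __name__ == "__main__":
    # Hypothetical example: the rest energy of 1 kg inside a 1 m radius.
    rest_energy = 1.0 * C**2
    print(f"{bekenstein_bound_bits(1.0, rest_energy):.3e} bits")
```

The bound is finite for any finite radius and energy, which is the property the subdivision argument above relies on.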

The most rigorous realization of the holographic principle is the AdS/CFT correspondence by Juan Maldacena. However, J.D. Brown and Marc Henneaux had already rigorously proved in 1986 that the asymptotic symmetry of 2+1 dimensional gravity gives rise to a Virasoro algebra, whose corresponding quantum theory is a 2-dimensional conformal field theory.[13]

High-level summary

The physical universe is widely seen to be composed of "matter" and "energy". In his 2003 article published in Scientific American magazine, Jacob Bekenstein summarized a current trend started by John Archibald Wheeler, which suggests scientists may "regard the physical world as made of information, with energy and matter as incidentals." Bekenstein asks "Could we, as William Blake memorably penned, 'see a world in a grain of sand,' or is that idea no more than 'poetic license,'"[14] referring to the holographic principle.

Unexpected connection

Bekenstein's topical overview "A Tale of Two Entropies"[15] describes potentially profound implications of Wheeler's trend, in part by noting a previously unexpected connection between the world of information theory and classical physics. This connection was first described shortly after the seminal 1948 papers of American applied mathematician Claude E. Shannon introduced today's most widely used measure of information content, now known as Shannon entropy. As an objective measure of the quantity of information, Shannon entropy has been enormously useful, as the design of all modern communications and data storage devices, from cellular phones to modems to hard disk drives and DVDs, relies on Shannon entropy.

In thermodynamics (the branch of physics dealing with heat), entropy is popularly described as a measure of the "disorder" in a physical system of matter and energy. In 1877 Austrian physicist Ludwig Boltzmann described it more precisely in terms of the number of distinct microscopic states that the particles composing a macroscopic "chunk" of matter could be in while still looking like the same macroscopic "chunk". As an example, for the air in a room, its thermodynamic entropy would equal the logarithm of the count of all the ways that the individual gas molecules could be distributed in the room, and all the ways they could be moving.
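Boltzmann's counting can be illustrated with a deliberately simplified toy model. In this sketch, each molecule is only recorded as being in the left or right half of the room, and velocities are ignored. That is a much coarser description than the one in the text, and the function name is hypothetical. The entropy of a macrostate with a given number of molecules on the left, in units of Boltzmann's constant, is then the logarithm of the number of ways to choose which molecules those are.

```python
import math


def log_multiplicity(n_total, n_left):
    """Natural log of the number of microstates with n_left of n_total
    labelled molecules in the left half: ln C(n_total, n_left).

    math.lgamma is used so that large molecule counts do not overflow.
    """
    if not 0 <= n_left <= n_total:
        raise ValueError("n_left must lie between 0 and n_total")
    return (math.lgamma(n_total + 1)
            - math.lgamma(n_left + 1)
            - math.lgamma(n_total - n_left + 1))


if __name__ == "__main__":
    n = 1000
    for n_left in (0, 100, 250, 500):
        print(f"{n_left:4d} of {n} on the left: "
              f"S/k_B = {log_multiplicity(n, n_left):.2f}")
```

In this model the evenly spread macrostate has the largest entropy, because it can be realized by the most microscopic arrangements.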

Energy, matter, and information equivalence

Shannon's efforts to find a way to quantify the information contained in, for example, an e-mail message, led him unexpectedly to a formula with the same form as Boltzmann's. In an article in the August 2003 issue of Scientific American titled "Information in the Holographic Universe", Bekenstein summarizes that "Thermodynamic entropy and Shannon entropy are conceptually equivalent: the number of arrangements that are counted by Boltzmann entropy reflects the amount of Shannon information one would need to implement any particular arrangement..." of matter and energy. The only salient difference between the thermodynamic entropy of physics and Shannon's entropy of information is in the units of measure; the former is expressed in units of energy divided by temperature, the latter in essentially dimensionless "bits" of information, and so the difference is merely a matter of convention.
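The unit conversion described above can be made concrete. The sketch below computes the Shannon entropy of a short message from its empirical symbol frequencies and converts a number of bits to thermodynamic units using the factor k_B ln 2 per bit. The function names are illustrative and are not taken from Shannon's or Bekenstein's papers.

```python
import math
from collections import Counter

K_B = 1.380649e-23  # Boltzmann constant, J/K


def shannon_entropy_bits(message):
    """Shannon entropy per symbol, in bits, of the empirical symbol
    distribution of `message`: H = -sum(p * log2(p))."""
    if not message:
        return 0.0
    counts = Counter(message)
    n = len(message)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def bits_to_joules_per_kelvin(bits):
    """Convert an entropy in bits to J/K; one bit is k_B * ln 2."""
    return bits * K_B * math.log(2.0)


if __name__ == "__main__":
    text = "see a world in a grain of sand"
    h = shannon_entropy_bits(text)
    total_bits = h * len(text)
    print(f"H per symbol: {h:.3f} bits")
    print(f"Whole message: {total_bits:.1f} bits "
          f"= {bits_to_joules_per_kelvin(total_bits):.3e} J/K")
```

The conversion factor is tiny in everyday units, which is why the information content of ordinary messages is negligible as thermodynamic entropy.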

The holographic principle states that the entropy of ordinary mass (not just black holes) is also proportional to surface area and not volume; that volume itself is illusory and the universe is really a hologram which is isomorphic to the information "inscribed" on the surface of its boundary.[10]
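The difference between area and volume scaling can be seen by comparing two counts for a sphere. In this sketch, the area-law count uses one quarter of the surface area in Planck units, converted to bits. The volume count assumes one bit per Planck-sized cell, which is purely an illustrative assumption and not a claim made in the text. The function names and the rounded Planck length are likewise choices made here.

```python
import math

PLANCK_LENGTH = 1.616255e-35  # metres (rounded)


def holographic_bound_bits(radius_m):
    """Bits allowed by an area law for a sphere of radius R:
    A / (4 * l_P^2 * ln 2), with A = 4*pi*R^2."""
    area = 4.0 * math.pi * radius_m**2
    return area / (4.0 * PLANCK_LENGTH**2 * math.log(2.0))


def naive_volume_bits(radius_m):
    """Illustrative assumption only: one bit per cell of volume l_P^3."""
    volume = (4.0 / 3.0) * math.pi * radius_m**3
    return volume / PLANCK_LENGTH**3


if __name__ == "__main__":
    for r in (1e-15, 1.0, 1e6):
        print(f"R = {r:.0e} m: area law {holographic_bound_bits(r):.2e} bits, "
              f"volume count {naive_volume_bits(r):.2e} bits")
```

Doubling the radius multiplies the area-law count by four and the volume count by eight, so the gap between the two widens linearly with the size of the region.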

Experimental tests

The Fermilab physicist Craig Hogan claims that the holographic principle would imply quantum fluctuations in spatial position[16] that would lead to apparent background noise or "holographic noise" measurable at gravitational wave detectors, in particular GEO 600.[17] However, these claims have not been widely accepted, or cited, among quantum gravity researchers and appear to be in direct conflict with string theory calculations.[18]

Analyses in 2011 of measurements of gamma ray burst GRB 041219A in 2004 by the INTEGRAL space observatory, launched in 2002 by the European Space Agency, show that Craig Hogan's noise is absent down to a scale of 10⁻⁴⁸ meters, as opposed to the scale of 10⁻³⁵ meters predicted by Hogan and the scale of 10⁻¹⁶ meters found in measurements of the GEO 600 instrument.[19] Research continues at Fermilab under Hogan as of 2013.[20]

Jacob Bekenstein also claims to have found a way to test the holographic principle with a tabletop photon experiment.[21]

Tests of Maldacena's conjecture

Hyakutake et al. in 2013/4 published two papers[22] that provide computational evidence that Maldacena's conjecture is true. One paper computes the internal energy of a black hole, the position of its event horizon, its entropy and other properties based on the predictions of string theory and the effects of virtual particles. The other paper calculates the internal energy of the corresponding lower-dimensional cosmos with no gravity. The two simulations match. The papers are not an actual proof of Maldacena's conjecture for all cases but a demonstration that the conjecture works for a particular theoretical case and a verification of the AdS/CFT correspondence for a particular situation.[23]