From Wikipedia, the free encyclopedia

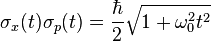

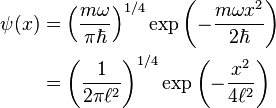

In quantum mechanics, the uncertainty principle, also known as Heisenberg's uncertainty principle, is any of a variety of mathematical inequalities asserting a fundamental limit to the precision with which certain pairs of physical properties of a particle, known as complementary variables, such as position x and momentum p, can be known simultaneously. First introduced in 1927 by the German physicist Werner Heisenberg, it states that the more precisely the position of some particle is determined, the less precisely its momentum can be known, and vice versa.[1] The formal inequality relating the standard deviation of position σx and the standard deviation of momentum σp was derived by Earle Hesse Kennard[2] later that year and by Hermann Weyl[3] in 1928:

$$\sigma_x \sigma_p \geq \frac{\hbar}{2},$$

where ħ is the reduced Planck constant, h/2π.

Historically, the uncertainty principle has been confused[4][5] with a somewhat similar effect in physics, called the observer effect, which notes that measurements of certain systems cannot be made without affecting the systems.

Heisenberg offered such an observer effect at the quantum level (see below) as a physical "explanation" of quantum uncertainty.[6] It has since become clear, however, that the uncertainty principle is inherent in the properties of all wave-like systems,[7] and that it arises in quantum mechanics simply due to the matter wave nature of all quantum objects. Thus, the uncertainty principle actually states a fundamental property of quantum systems, and is not a statement about the observational success of current technology.[8] It must be emphasized that measurement does not mean only a process in which a physicist-observer takes part, but rather any interaction between classical and quantum objects regardless of any observer.[9]

Since the uncertainty principle is such a basic result in quantum mechanics, typical experiments in quantum mechanics routinely observe aspects of it. Certain experiments, however, may deliberately test a particular form of the uncertainty principle as part of their main research program. These include, for example, tests of number–phase uncertainty relations in superconducting[10] or quantum optics[11] systems. Applications dependent on the uncertainty principle for their operation include extremely low noise technology such as that required in gravitational-wave interferometers.[12]

Introduction

The evolution of an initially very localized Gaussian wave function of a free particle in two-dimensional space, with colour and intensity indicating phase and amplitude. The spreading of the wave function in all directions shows that the initial momentum has a spread of values, unmodified in time, while the spread in position increases in time: as a result, the uncertainty Δx Δp increases in time.

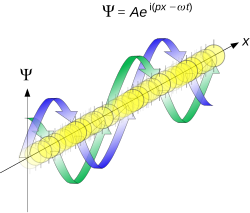

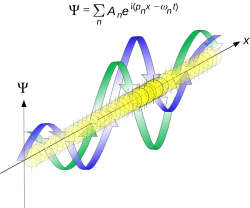

The superposition of several plane waves to form a wave packet. This wave packet becomes increasingly localized with the addition of many waves. The Fourier transform is a mathematical operation that separates a wave packet into its individual plane waves. Note that the waves shown here are real for illustrative purposes only, whereas in quantum mechanics the wave function is generally complex.

As a fundamental constraint, higher-level descriptions of the universe must supervene on quantum mechanical descriptions, which include Heisenberg's uncertainty relation. However, humans do not possess an intuitive understanding of the uncertainty principle in everyday life, because the constraint is not readily apparent on the macroscopic scales of everyday experience. It may therefore be helpful to demonstrate how it is integral to more easily understood physical situations. Two alternative conceptualizations of quantum physics can be examined with the goal of demonstrating the key role the uncertainty principle plays. A wave mechanics picture of the uncertainty principle provides a more visually intuitive demonstration, and the somewhat more abstract matrix mechanics picture provides a demonstration that is more easily generalized to cover a multitude of physical contexts.[citation needed]

Mathematically, in wave mechanics, the uncertainty relation between position and momentum arises because the expressions of the wavefunction in the two corresponding orthonormal bases in Hilbert space are Fourier transforms of one another (i.e., position and momentum are conjugate variables). A nonzero function and its Fourier transform cannot both be sharply localized. A similar tradeoff between the variances of Fourier conjugates arises in all systems underlain by Fourier analysis, for example in sound waves: A pure tone is a sharp spike at a single frequency, while its Fourier transform gives the shape of the sound wave in the time domain, which is a completely delocalized sine wave. In quantum mechanics, the two key points are that the position of the particle takes the form of a matter wave, and momentum is its Fourier conjugate, assured by the de Broglie relation p = ħk, where k is the wavenumber.[citation needed]

In matrix mechanics, the mathematical formulation of quantum mechanics, any pair of non-commuting self-adjoint operators representing observables are subject to similar uncertainty limits. An eigenstate of an observable represents the state of the wavefunction for a certain measurement value (the eigenvalue). For example, if a measurement of an observable A is performed, then the system is in a particular eigenstate Ψ of that observable.

However, the particular eigenstate of the observable A need not be an eigenstate of another observable B: If so, then it does not have a unique associated measurement for it, as the system is not in an eigenstate of that observable.[13]

Wave mechanics interpretation[9][page needed]

Propagation of de Broglie waves in 1d—real part of the complex amplitude is blue, imaginary part is green. The probability (shown as the colour opacity) of finding the particle at a given point x is spread out like a waveform, there is no definite position of the particle. As the amplitude increases above zero the curvature reverses sign, so the amplitude begins to decrease again, and vice versa—the result is an alternating amplitude: a wave.

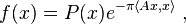

The time-independent wave function of a single-moded plane wave of wavenumber k0 or momentum p0 is

$$\psi(x) \propto e^{ik_0 x} = e^{ip_0 x/\hbar}.$$

For this single-moded plane wave, |ψ(x)|² is a uniform distribution. In other words, the particle position is extremely uncertain in the sense that it could be essentially anywhere along the wave packet. Consider instead a wave function that is a sum of many waves, which we may write as

$$\psi(x) \propto \sum_n A_n e^{ip_n x/\hbar},$$

where An is the relative contribution of the mode pn. In the continuum limit the sum becomes an integral over all possible modes,

$$\psi(x) = \frac{1}{\sqrt{2\pi\hbar}} \int_{-\infty}^{\infty} \phi(p)\, e^{ipx/\hbar}\, dp,$$

with φ(p) representing the amplitude of these modes; it is called the wave function in momentum space. In mathematical terms, we say that φ(p) is the Fourier transform of ψ(x) and that x and p are conjugate variables. Adding together all of these plane waves comes at a cost, namely the momentum has become less precise, having become a mixture of waves of many different momenta.

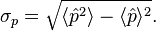

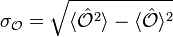

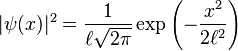

One way to quantify the precision of the position and momentum is the standard deviation σ. Since |ψ(x)|² is a probability density function for position, we calculate its standard deviation.

The precision of the position is improved, i.e. σx is reduced, by using many plane waves, thereby weakening the precision of the momentum, i.e. σp is increased. Another way of stating this is that σx and σp have an inverse relationship or are at least bounded from below. This is the uncertainty principle, the exact limit of which is the Kennard bound.
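The trade-off between σx and σp can be illustrated numerically. The following is a minimal sketch, not a derivation: it assumes natural units (ħ = 1), Gaussian mode amplitudes, and hypothetical helper names (`spread`, `packet_widths`). It builds ψ(x) as an explicit sum of plane waves and measures both standard deviations.

```python
import numpy as np

HBAR = 1.0  # natural units; an assumption made for this illustration


def spread(values, weights):
    """Standard deviation of `values` under unnormalized `weights`."""
    w = weights / weights.sum()
    mean = np.sum(values * w)
    return np.sqrt(np.sum((values - mean) ** 2 * w))


def packet_widths(sigma_p, n_modes=801, n_points=2001):
    """Build psi(x) as a sum of plane waves and return (sigma_x, sigma_p)."""
    # Momenta of the plane-wave modes and their Gaussian amplitudes A_n,
    # chosen so that |A_n|^2 has standard deviation sigma_p.
    p = np.linspace(-8 * sigma_p, 8 * sigma_p, n_modes)
    amplitudes = np.exp(-p**2 / (4 * sigma_p**2))
    # Position grid wide enough to contain the resulting packet.
    x = np.linspace(-6 * HBAR / sigma_p, 6 * HBAR / sigma_p, n_points)
    # psi(x) = sum_n A_n exp(i p_n x / hbar)
    psi = amplitudes @ np.exp(1j * np.outer(p, x) / HBAR)
    return spread(x, np.abs(psi) ** 2), spread(p, amplitudes**2)


if __name__ == "__main__":
    for sigma_p in (0.5, 1.0, 2.0):
        sx, sp = packet_widths(sigma_p)
        print(f"sigma_p={sp:.3f}  sigma_x={sx:.3f}  product={sx * sp:.4f}")
```

Under these assumptions, a broader spread of momenta gives a narrower packet in position, and the product σxσp stays close to ħ/2, the value at which Gaussian packets saturate the Kennard bound.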

Matrix mechanics interpretation[9][page needed]

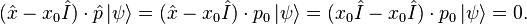

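In matrix mechanics, observables such as position and momentum are represented by self-adjoint operators. When considering pairs of observables, an important quantity is the commutator. For a pair of operators Â and B̂, one defines their commutator as

$$[\hat{A},\hat{B}] = \hat{A}\hat{B} - \hat{B}\hat{A}.$$

In the case of position and momentum, the commutator is the canonical commutation relation

$$[\hat{x},\hat{p}] = i\hbar.$$

Let |ψ⟩ be a right eigenstate of position with a constant eigenvalue x0. By definition, this means that x̂|ψ⟩ = x0|ψ⟩. Applying the commutator to |ψ⟩ yields

$$[\hat{x},\hat{p}]\,|\psi\rangle = (\hat{x}\hat{p} - \hat{p}\hat{x})\,|\psi\rangle = (\hat{x} - x_0\hat{I})\,\hat{p}\,|\psi\rangle = i\hbar\,|\psi\rangle,$$

where Î is the identity operator.

Suppose, for the sake of proof by contradiction, that |ψ⟩ is also a right eigenstate of momentum, with constant eigenvalue p0. If this were true, then one could write

$$(\hat{x} - x_0\hat{I})\,\hat{p}\,|\psi\rangle = (\hat{x} - x_0\hat{I})\,p_0\,|\psi\rangle = (x_0\hat{I} - x_0\hat{I})\,p_0\,|\psi\rangle = 0,$$

which contradicts the canonical commutation relation, since iħ|ψ⟩ ≠ 0. No quantum state can therefore be simultaneously a position and a momentum eigenstate.

When a state is measured, it is projected onto an eigenstate in the basis of the relevant observable. For example, if a particle's position is measured, then the state amounts to a position eigenstate. This means that the state is not a momentum eigenstate, however, but rather it can be represented as a sum of multiple momentum basis eigenstates.

In other words, the momentum must be less precise. This precision may be quantified by the standard deviations,

$$\sigma_x = \sqrt{\langle \hat{x}^2 \rangle - \langle \hat{x} \rangle^2}, \qquad \sigma_p = \sqrt{\langle \hat{p}^2 \rangle - \langle \hat{p} \rangle^2}.$$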

Robertson–Schrödinger uncertainty relations

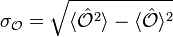

The most common general form of the uncertainty principle is the Robertson uncertainty relation.[14]

For an arbitrary Hermitian operator Ô we can associate a standard deviation

$$\sigma_{O} = \sqrt{\langle \hat{O}^2 \rangle - \langle \hat{O} \rangle^2},$$

where the brackets ⟨Ô⟩ indicate an expectation value. For a pair of operators Â and B̂, we may define their commutator as

$$[\hat{A},\hat{B}]=\hat{A}\hat{B}-\hat{B}\hat{A}.$$

In this notation, the Robertson uncertainty relation is given by

$$\sigma_{A}\sigma_{B} \geq \left| \frac{1}{2i}\langle[\hat{A},\hat{B}]\rangle \right| = \frac{1}{2}\left|\langle[\hat{A},\hat{B}]\rangle \right|.$$

The Robertson uncertainty relation follows from a slightly stronger inequality, the Schrödinger uncertainty relation,

$$\sigma_{A}^2\sigma_{B}^2 \geq \left| \frac{1}{2}\langle\{\hat{A},\hat{B}\}\rangle - \langle \hat{A} \rangle\langle \hat{B}\rangle \right|^{2}+ \left|\frac{1}{2i}\langle[\hat{A},\hat{B}]\rangle\right|^{2},$$

where we have introduced the anticommutator,

$$\{\hat{A},\hat{B}\}=\hat{A}\hat{B}+\hat{B}\hat{A}.$$
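The Robertson and Schrödinger relations can be checked numerically on a small system. The sketch below is an illustration under stated assumptions, not part of the cited derivations: it uses the Pauli matrices σx and σy as the two observables, random pure qubit states, and hypothetical helper names (`expval`, `std`, `uncertainty_sides`, `random_state`).

```python
import numpy as np

# Pauli matrices, used here as a convenient pair of non-commuting observables.
SX = np.array([[0, 1], [1, 0]], dtype=complex)
SY = np.array([[0, -1j], [1j, 0]], dtype=complex)


def expval(op, psi):
    """Expectation value <psi|op|psi> for a normalized state vector."""
    return np.vdot(psi, op @ psi)


def std(op, psi):
    """Standard deviation of a Hermitian operator in the state psi."""
    var = expval(op @ op, psi).real - expval(op, psi).real ** 2
    return np.sqrt(max(var, 0.0))  # guard against tiny negative rounding


def uncertainty_sides(a, b, psi):
    """Return (sigma_A * sigma_B, Robertson bound, Schrodinger bound)."""
    comm = a @ b - b @ a
    anti = a @ b + b @ a
    lhs = std(a, psi) * std(b, psi)
    robertson = 0.5 * abs(expval(comm, psi))
    cov = 0.5 * expval(anti, psi).real - expval(a, psi).real * expval(b, psi).real
    schrodinger = np.sqrt(cov**2 + robertson**2)
    return lhs, robertson, schrodinger


def random_state(rng, dim=2):
    """Random normalized pure state of the given dimension."""
    psi = rng.normal(size=dim) + 1j * rng.normal(size=dim)
    return psi / np.linalg.norm(psi)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    for _ in range(3):
        lhs, rob, sch = uncertainty_sides(SX, SY, random_state(rng))
        print(f"sigma_A*sigma_B={lhs:.4f}  Schrodinger={sch:.4f}  Robertson={rob:.4f}")
```

In every sampled state the product of standard deviations is at least the Schrödinger bound, which in turn is at least the Robertson bound, up to floating-point rounding.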

- For position and linear momentum, the canonical commutation relation $[\hat{x},\hat{p}]=i\hbar$ implies the Kennard inequality from above:

$$\sigma_x \sigma_p \geq \frac{\hbar}{2}.$$

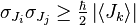

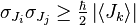

- For two orthogonal components of the total angular momentum operator of an object:

$$\sigma_{J_i} \sigma_{J_j} \geq \frac{\hbar}{2} \left|\langle J_k \rangle\right|,$$

  where i, j, k are distinct and Ji denotes angular momentum along the xi axis. This relation implies that unless all three components vanish together, only a single component of a system's angular momentum can be defined with arbitrary precision, normally the component parallel to an external (magnetic or electric) field. Moreover, for $[J_x, J_y] = i\hbar\epsilon_{xyz}J_z$, a choice Â = Jx, B̂ = Jy, in angular momentum multiplets, ψ = |j, m⟩, bounds the Casimir invariant (angular momentum squared, ⟨Jx² + Jy² + Jz²⟩) from below and thus yields useful constraints such as j(j + 1) ≥ m(m + 1), and hence j ≥ m, among others.

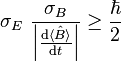

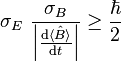

- In non-relativistic mechanics, time is privileged as an independent variable. Nevertheless, in 1945, L. I. Mandelshtam and I. E. Tamm derived a non-relativistic time–energy uncertainty relation, as follows.[23] For a quantum system in a non-stationary state ψ and an observable B represented by a self-adjoint operator B̂, the following formula holds:

$$\sigma_E \, \frac{\sigma_B}{\left|\dfrac{d\langle\hat{B}\rangle}{dt}\right|} \geq \frac{\hbar}{2},$$

  where σE is the standard deviation of the energy operator (Hamiltonian) in the state ψ and σB is the standard deviation of B. Although the second factor on the left-hand side has the dimension of time, it is different from the time parameter that enters the Schrödinger equation. It is a lifetime of the state ψ with respect to the observable B: in other words, it is the time interval (Δt) after which the expectation value ⟨B̂⟩ changes appreciably.

An informal, heuristic meaning of the principle is the following: A state that only exists for a short time cannot have a definite energy. To have a definite energy, the frequency of the state must be defined accurately, and this requires the state to hang around for many cycles, the reciprocal of the required accuracy. For example, in spectroscopy, excited states have a finite lifetime. By the time–energy uncertainty principle, they do not have a definite energy, and, each time they decay, the energy they release is slightly different. The average energy of the outgoing photon has a peak at the theoretical energy of the state, but the distribution has a finite width called the natural linewidth. Fast-decaying states have a broad linewidth, while slow decaying states have a narrow linewidth.[24]

The same linewidth effect also makes it difficult to specify the rest mass of unstable, fast-decaying particles in particle physics. The faster the particle decays (the shorter its lifetime), the less certain is its mass (the larger the particle's width).

- For the number of electrons in a superconductor and the phase of its Ginzburg–Landau order parameter:[25][26]

$$\Delta N \, \Delta \varphi \geq 1.$$
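The link between lifetime and natural linewidth described above for excited states can be put into numbers. This is a rough sketch under stated assumptions: the state decays exponentially with lifetime τ, the resulting line is Lorentzian with full width at half maximum Γ = ħ/τ, and the function name and the sample lifetimes are illustrative rather than taken from the text.

```python
import math

HBAR_EV_S = 6.582119569e-16  # reduced Planck constant in eV*s (CODATA 2018)


def natural_linewidth(lifetime_s):
    """Return (energy width in eV, frequency width in Hz) for a given lifetime.

    Assumes exponential decay, so the line is Lorentzian with
    full width at half maximum Gamma = hbar / tau.
    """
    gamma_ev = HBAR_EV_S / lifetime_s
    delta_nu_hz = 1.0 / (2.0 * math.pi * lifetime_s)
    return gamma_ev, delta_nu_hz


if __name__ == "__main__":
    # Illustrative lifetimes: a fast-decaying and a slow-decaying state.
    for tau in (16e-9, 1e-3):
        gamma_ev, delta_nu = natural_linewidth(tau)
        print(f"tau={tau:.1e} s  Gamma={gamma_ev:.3e} eV  width={delta_nu:.3e} Hz")
```

With these assumptions a lifetime of 16 ns corresponds to a width of roughly 10 MHz, while a millisecond lifetime gives a line narrower by several orders of magnitude, in line with the statement that fast-decaying states have broad linewidths.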

Examples[9][page needed][16]

Quantum harmonic oscillator stationary states

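Consider a one-dimensional quantum harmonic oscillator (QHO). It is possible to express the position and momentum operators in terms of the creation and annihilation operators:

$$\hat{x} = \sqrt{\frac{\hbar}{2m\omega}}\,(a + a^\dagger), \qquad \hat{p} = i\sqrt{\frac{m\omega\hbar}{2}}\,(a^\dagger - a).$$

Using the standard rules for creation and annihilation operators on the eigenstates of the QHO,

$$a^\dagger|n\rangle = \sqrt{n+1}\,|n+1\rangle, \qquad a|n\rangle = \sqrt{n}\,|n-1\rangle,$$

the variances may be computed directly:

$$\sigma_x^2 = \frac{\hbar}{m\omega}\left(n+\frac{1}{2}\right), \qquad \sigma_p^2 = \hbar m\omega\left(n+\frac{1}{2}\right).$$

The product of these standard deviations is then

$$\sigma_x \sigma_p = \hbar\left(n+\frac{1}{2}\right) \geq \frac{\hbar}{2}.$$

In particular, the Kennard bound is saturated for the ground state n = 0, for which the probability density is a normal distribution.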
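For the stationary states of the oscillator, the standard result σxσp = ħ(n + 1/2) can be reproduced with finite matrices. The sketch below is an illustration only: it assumes a truncation of the ladder operators to the first `dim` number states (exact for n ≤ dim − 2), units with ħ = m = ω = 1 by default, and hypothetical function names.

```python
import numpy as np


def ladder(dim):
    """Annihilation operator truncated to the first `dim` number states."""
    return np.diag(np.sqrt(np.arange(1, dim)), k=1).astype(complex)


def qho_uncertainty_product(n, dim=40, hbar=1.0, m=1.0, omega=1.0):
    """sigma_x * sigma_p in the number state |n>, valid for n <= dim - 2."""
    a = ladder(dim)
    a_dag = a.conj().T
    x = np.sqrt(hbar / (2 * m * omega)) * (a + a_dag)
    p = 1j * np.sqrt(m * omega * hbar / 2) * (a_dag - a)

    state = np.zeros(dim, dtype=complex)
    state[n] = 1.0

    def sigma(op):
        mean = np.vdot(state, op @ state).real
        mean_sq = np.vdot(state, op @ op @ state).real
        return np.sqrt(mean_sq - mean**2)

    return sigma(x) * sigma(p)


if __name__ == "__main__":
    for n in range(4):
        print(n, qho_uncertainty_product(n))
```

The ground state n = 0 gives the minimum value ħ/2, and each higher state adds one unit of ħ to the product.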

Quantum harmonic oscillator with Gaussian initial condition

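In a quantum harmonic oscillator of characteristic angular frequency ω, place a state that is offset from the bottom of the potential by some displacement x0 as

$$\psi(x) = \left(\frac{m\Omega}{\pi\hbar}\right)^{1/4} \exp\left(-\frac{m\Omega (x-x_0)^2}{2\hbar}\right),$$

where Ω describes the width of the initial state but need not be the same as ω. Solving for the full time-dependent solution, the probability densities reduce to

$$|\Psi(x,t)|^2 \sim \mathcal{N}\left(x_0\cos(\omega t),\ \frac{\hbar}{2m\Omega}\left(\cos^2(\omega t) + \frac{\Omega^2}{\omega^2}\sin^2(\omega t)\right)\right),$$

$$|\Phi(p,t)|^2 \sim \mathcal{N}\left(-m x_0 \omega \sin(\omega t),\ \frac{\hbar m\Omega}{2}\left(\cos^2(\omega t) + \frac{\omega^2}{\Omega^2}\sin^2(\omega t)\right)\right),$$

where we have used the notation N(μ, σ²) to denote a normal distribution of mean μ and variance σ². Copying the variances above and applying trigonometric identities, we can write the product of the standard deviations as

$$\sigma_x \sigma_p = \frac{\hbar}{2}\sqrt{\left(\cos^2(\omega t) + \frac{\Omega^2}{\omega^2}\sin^2(\omega t)\right)\left(\cos^2(\omega t) + \frac{\omega^2}{\Omega^2}\sin^2(\omega t)\right)} = \frac{\hbar}{4}\sqrt{3 + \frac{1}{2}\left(\frac{\Omega^2}{\omega^2}+\frac{\omega^2}{\Omega^2}\right) - \left(\frac{1}{2}\left(\frac{\Omega^2}{\omega^2}+\frac{\omega^2}{\Omega^2}\right)-1\right)\cos(4\omega t)}.$$

From the relations

$$\frac{\Omega^2}{\omega^2}+\frac{\omega^2}{\Omega^2} \geq 2, \qquad |\cos(4\omega t)| \leq 1,$$

we can conclude that

$$\sigma_x \sigma_p \geq \frac{\hbar}{2}.$$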

Coherent states

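A coherent state is a right eigenstate of the annihilation operator,

$$\hat{a}|\alpha\rangle = \alpha|\alpha\rangle,$$

which may be represented in terms of Fock states as

$$|\alpha\rangle = e^{-|\alpha|^2/2} \sum_{n=0}^{\infty} \frac{\alpha^n}{\sqrt{n!}}\,|n\rangle.$$

In the picture where the coherent state is a massive particle in a QHO, the position and momentum operators may be expressed in terms of the annihilation operators in the same formulas above and used to calculate the variances,

$$\sigma_x^2 = \frac{\hbar}{2m\omega}, \qquad \sigma_p^2 = \frac{\hbar m\omega}{2}.$$

Therefore every coherent state saturates the Kennard bound,

$$\sigma_x \sigma_p = \sqrt{\frac{\hbar}{2m\omega}}\,\sqrt{\frac{\hbar m\omega}{2}} = \frac{\hbar}{2},$$

with position and momentum each contributing an amount √(ħ/2) in a "balanced" way. Moreover, every squeezed coherent state also saturates the Kennard bound, although the individual contributions of position and momentum need not be balanced in general.

Particle in a box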

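Consider a particle in a one-dimensional box of length L. The eigenfunctions in position and momentum space are

$$\psi_n(x,t) = \begin{cases} A \sin(k_n x)\, e^{-i\omega_n t}, & 0 < x < L, \\ 0, & \text{otherwise,} \end{cases}$$

and

$$\phi_n(p,t) = \sqrt{\frac{\pi L}{\hbar}}\, \frac{n\left(1-(-1)^n e^{-ikL}\right) e^{-i\omega_n t}}{\pi^2 n^2 - k^2 L^2},$$

where A = √(2/L), kn = nπ/L, ωn = π²ħn²/(2mL²), and we have used the de Broglie relation p = ħk. The variances of x and p can be calculated explicitly:

$$\sigma_x^2 = \frac{L^2}{12}\left(1 - \frac{6}{n^2\pi^2}\right), \qquad \sigma_p^2 = \left(\frac{\hbar n \pi}{L}\right)^2.$$

The product of the standard deviations is therefore

$$\sigma_x \sigma_p = \frac{\hbar}{2}\sqrt{\frac{n^2\pi^2}{3} - 2}.$$

For all n = 1, 2, 3, ..., the quantity √(n²π²/3 − 2) is greater than 1, so the uncertainty principle is never violated. For numerical concreteness, the smallest value occurs when n = 1, in which case

$$\sigma_x \sigma_p = \frac{\hbar}{2}\sqrt{\frac{\pi^2}{3} - 2} \approx 0.568\,\hbar > \frac{\hbar}{2}.$$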
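The box result can be cross-checked by direct numerical integration. The following sketch rests on a few assumptions: units with L = ħ = 1 by default, the standard normalized eigenfunctions ψn(x) = √(2/L) sin(nπx/L), and hypothetical function names. It compares the integrated product with the closed form (ħ/2)√(n²π²/3 − 2).

```python
import numpy as np


def trapezoid(values, dx):
    """Trapezoidal rule on a uniform grid."""
    return dx * (values.sum() - 0.5 * (values[0] + values[-1]))


def box_uncertainty_product(n, length=1.0, hbar=1.0, samples=20001):
    """sigma_x * sigma_p for the n-th box eigenstate, by numerical integration."""
    x = np.linspace(0.0, length, samples)
    dx = x[1] - x[0]
    k = n * np.pi / length
    psi = np.sqrt(2.0 / length) * np.sin(k * x)
    dpsi = np.sqrt(2.0 / length) * k * np.cos(k * x)  # analytic derivative of psi
    prob = psi**2
    mean_x = trapezoid(x * prob, dx)
    var_x = trapezoid(x**2 * prob, dx) - mean_x**2
    # <p> = 0 for a real eigenfunction, so var_p = <p^2> = hbar^2 * integral |psi'|^2.
    var_p = hbar**2 * trapezoid(dpsi**2, dx)
    return np.sqrt(var_x * var_p)


def closed_form(n, hbar=1.0):
    """Closed-form product (hbar / 2) * sqrt(n^2 pi^2 / 3 - 2)."""
    return 0.5 * hbar * np.sqrt(n**2 * np.pi**2 / 3.0 - 2.0)


if __name__ == "__main__":
    for n in range(1, 5):
        print(n, box_uncertainty_product(n), closed_form(n))
```

Both columns agree, and the n = 1 value of about 0.568 ħ sits just above the Kennard bound of ħ/2.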

Constant momentum

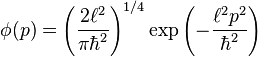

Assume a particle initially has a momentum space wave function described by a normal distribution around some constant momentum p0 according to

$$\phi(p) = \left(\frac{x_0}{\hbar\sqrt{\pi}}\right)^{1/2} \exp\left(-\frac{x_0^2 (p-p_0)^2}{2\hbar^2}\right),$$

where we have introduced a reference scale x0 = √(ħ/(mω0)), with ω0 > 0 describing the width of the distribution (cf. nondimensionalization). If the state is allowed to evolve in free space, then the time-dependent momentum and position space wave functions are

$$\Phi(p,t) = \left(\frac{x_0}{\hbar\sqrt{\pi}}\right)^{1/2} \exp\left(-\frac{x_0^2 (p-p_0)^2}{2\hbar^2} - \frac{i p^2 t}{2m\hbar}\right)$$

and

$$\Psi(x,t) = \left(\frac{1}{x_0\sqrt{\pi}}\right)^{1/2} \frac{e^{-x_0^2 p_0^2/2\hbar^2}}{\sqrt{1+i\omega_0 t}} \exp\left(-\frac{(x - i x_0^2 p_0/\hbar)^2}{2x_0^2(1+i\omega_0 t)}\right).$$

Since ⟨p(t)⟩ = p0 and σp(t) = ħ/(√2 x0), this can be interpreted as a particle moving along with constant momentum at arbitrarily high precision. On the other hand, the standard deviation of the position is

$$\sigma_x = \frac{x_0}{\sqrt{2}}\sqrt{1+\omega_0^2 t^2},$$

such that the uncertainty product can only increase with time as

$$\sigma_x(t)\,\sigma_p(t) = \frac{\hbar}{2}\sqrt{1+\omega_0^2 t^2}.$$

Additional uncertainty relations

Mixed states

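The Robertson–Schrödinger uncertainty relation may be generalized in a straightforward way to describe mixed states.[27]

Phase space

In the phase space formulation of quantum mechanics, the Robertson–Schrödinger relation follows from a positivity condition on a real star-square function. Given a Wigner function W(x, p) with star product ★ and a function f, the following is generally true:[28]

$$\langle f^* \star f \rangle = \int (f^* \star f)\, W(x,p)\, dx\, dp \geq 0.$$

Choosing f = a + bx + cp, we arrive at

$$\langle f^* \star f \rangle = \begin{bmatrix} a^* & b^* & c^* \end{bmatrix} \begin{bmatrix} 1 & \langle x \rangle & \langle p \rangle \\ \langle x \rangle & \langle x \star x \rangle & \langle x \star p \rangle \\ \langle p \rangle & \langle p \star x \rangle & \langle p \star p \rangle \end{bmatrix} \begin{bmatrix} a \\ b \\ c \end{bmatrix} \geq 0.$$

Systematic error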

The inequalities above focus on the statistical imprecision of observables as quantified by the standard deviation. Heisenberg's original version, however, was concerned with systematic error incurred by a disturbance of a quantum system by the measuring apparatus, i.e., an observer effect. If we let εA represent the error (i.e., accuracy) of a measurement of an observable A and ηB represent the disturbance of an observable B by the measurement process, then the following inequality holds:[5]

$$\varepsilon_A \eta_B + \varepsilon_A \sigma_B + \sigma_A \eta_B \geq \frac{1}{2}\left|\langle[\hat{A},\hat{B}]\rangle\right|.$$

Heisenberg's original formulation involves only the first term, the product of error and disturbance:

$$\varepsilon_A \eta_B \geq \frac{1}{2}\left|\langle[\hat{A},\hat{B}]\rangle\right| \qquad \text{(Heisenberg)}.$$

Entropic uncertainty principle

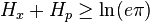

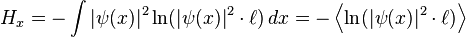

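For many distributions, the standard deviation is not a particularly natural way of quantifying the structure. For example, uncertainty relations in which one of the observables is an angle have little physical meaning for fluctuations larger than one period.[21][31][32][33] Other examples include highly bimodal distributions, or unimodal distributions with divergent variance.

A solution that overcomes these issues is an uncertainty relation based on entropy instead of the product of variances. While formulating the many-worlds interpretation of quantum mechanics in 1957, Hugh Everett III conjectured a stronger extension of the uncertainty principle based on entropic certainty.[34] This conjecture, also studied by Hirschman[35] and proven in 1975 by Beckner[36] and by Iwo Bialynicki-Birula and Jerzy Mycielski,[37] is

$$H_x + H_p \geq \ln(e\pi\hbar),$$

where Hx and Hp are the Shannon (differential) entropies of the position and momentum probability densities,

$$H_x = -\int |\psi(x)|^2 \ln |\psi(x)|^2 \, dx, \qquad H_p = -\int |\phi(p)|^2 \ln |\phi(p)|^2 \, dp.$$

From the inverse logarithmic Sobolev inequalities[38]

$$H_x \leq \frac{1}{2}\ln(2e\pi\sigma_x^2), \qquad H_p \leq \frac{1}{2}\ln(2e\pi\sigma_p^2)$$

(equivalently, from the fact that normal distributions maximize the entropy among all distributions with a given variance), it follows that this entropic uncertainty principle is stronger than the one based on standard deviations, because

$$\sigma_x \sigma_p \geq \frac{\hbar}{2}\exp\left(H_x + H_p - \ln(e\pi\hbar)\right) \geq \frac{\hbar}{2}.$$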

Harmonic analysis

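In the context of harmonic analysis, a branch of mathematics, the uncertainty principle implies that one cannot at the same time localize the value of a function and its Fourier transform. To wit, the following inequality holds,

$$\left(\int_{-\infty}^{\infty} x^2 |f(x)|^2\,dx\right)\left(\int_{-\infty}^{\infty} \xi^2 |\hat{f}(\xi)|^2\,d\xi\right) \geq \frac{\|f\|_2^4}{16\pi^2},$$

where the Fourier transform is taken with the convention $\hat{f}(\xi) = \int f(x)\, e^{-2\pi i x \xi}\, dx$.

Signal processing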

In the context of signal processing, and in particular time–frequency analysis, uncertainty principles are referred to as the Gabor limit, after Dennis Gabor, or sometimes the Heisenberg–Gabor limit. The basic result, which follows from "Benedicks's theorem", below, is that a function cannot be both time limited and band limited (a function and its Fourier transform cannot both have bounded domain); see bandlimited versus timelimited. Stated alternatively, "One cannot simultaneously sharply localize a signal (function f) in both the time domain and frequency domain (ƒ̂, its Fourier transform)".

When applied to filters, the result implies that one cannot achieve high temporal resolution and frequency resolution at the same time. A concrete example is the resolution issue of the short-time Fourier transform: if one uses a wide window, one achieves good frequency resolution at the cost of temporal resolution, while a narrow window has the opposite trade-off.

Alternate theorems give more precise quantitative results, and, in time–frequency analysis, rather than interpreting the (1-dimensional) time and frequency domains separately, one instead interprets the limit as a lower limit on the support of a function in the (2-dimensional) time–frequency plane. In practice, the Gabor limit limits the simultaneous time–frequency resolution one can achieve without interference; it is possible to achieve higher resolution, but at the cost of different components of the signal interfering with each other.
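The Gabor limit can be seen numerically with a discrete Fourier transform. The sketch below is illustrative and makes several assumptions: the signal is a Gaussian pulse, widths are measured as standard deviations of the energy densities |s(t)|² and |S(f)|², frequency is in hertz, and the function name and sampling parameters are hypothetical. With these definitions the product σtσf is bounded below by 1/(4π), and Gaussian pulses reach that bound.

```python
import numpy as np


def time_frequency_spreads(sigma_t, fs=1000.0, duration=20.0):
    """Return (sigma_t, sigma_f) of a Gaussian pulse, measured numerically."""
    t = np.arange(-duration / 2, duration / 2, 1.0 / fs)
    signal = np.exp(-t**2 / (4 * sigma_t**2))  # |signal|^2 has std sigma_t
    spectrum = np.fft.fftshift(np.fft.fft(signal))
    freqs = np.fft.fftshift(np.fft.fftfreq(t.size, d=1.0 / fs))

    def spread(axis, density):
        # Standard deviation of `axis` under the unnormalized `density`.
        w = density / density.sum()
        mean = np.sum(axis * w)
        return np.sqrt(np.sum((axis - mean) ** 2 * w))

    return spread(t, np.abs(signal) ** 2), spread(freqs, np.abs(spectrum) ** 2)


if __name__ == "__main__":
    print("Gabor limit 1/(4*pi) =", 1 / (4 * np.pi))
    for sigma_t in (0.05, 0.1, 0.5):
        st, sf = time_frequency_spreads(sigma_t)
        print(f"sigma_t={st:.3f} s  sigma_f={sf:.3f} Hz  product={st * sf:.4f}")
```

Shortening the pulse widens its spectrum in proportion, so the product stays fixed at the limit. This is the same trade-off that governs the choice of window length in a short-time Fourier transform.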

Benedicks's theorem

Amrein–Berthier[42] and Benedicks's theorem[43] intuitively says that the set of points where f is non-zero and the set of points where ƒ̂ is non-zero cannot both be small.

Specifically, it is impossible for a function f in L2(R) and its Fourier transform ƒ̂ to both be supported on sets of finite Lebesgue measure. A more quantitative version is[44][45]

$$\|f\|_{L^2(\mathbf{R}^d)} \leq C e^{C|S||\Sigma|} \left(\|f\|_{L^2(S^c)} + \|\hat{f}\|_{L^2(\Sigma^c)}\right).$$

One expects that the factor $Ce^{C|S||\Sigma|}$ may be replaced by $Ce^{C(|S||\Sigma|)^{1/d}}$, which is only known if either S or Σ is convex.

Hardy's uncertainty principle

The mathematician G. H. Hardy formulated the following uncertainty principle:[46] it is not possible for f and ƒ̂ to both be "very rapidly decreasing." Specifically, if f in L2(R) is such that

$$|f(x)| \leq C(1+|x|)^N e^{-a\pi x^2}$$

and

$$|\hat{f}(\xi)| \leq C(1+|\xi|)^N e^{-b\pi \xi^2}$$

(C > 0, N an integer), then, if ab > 1, f = 0, while if ab = 1, there is a polynomial P of degree ≤ N such that

$$f(x) = P(x)\, e^{-a\pi x^2}.$$

This was later improved as follows: if $f \in L^2(\mathbf{R}^d)$ is such that

$$\int_{\mathbf{R}^d}\int_{\mathbf{R}^d} |f(x)|\,|\hat{f}(\xi)|\, \frac{e^{\pi|\langle x,\xi\rangle|}}{(1+|x|+|\xi|)^N}\,dx\,d\xi < +\infty,$$

then

$$f(x) = P(x)\, e^{-\pi\langle Ax,x\rangle},$$

where P is a polynomial of degree (N − d)/2 and A is a real d × d positive definite matrix.

This result was stated in Beurling's complete works without proof and proved in Hörmander[47] (the case d = 1, N = 0) and Bonami, Demange, and Jaming[48] for the general case. Note that Hörmander–Beurling's version implies the case ab > 1 in Hardy's Theorem while the version by Bonami–Demange–Jaming covers the full strength of Hardy's Theorem. A different proof of Beurling's theorem based on Liouville's theorem appeared in ref.[49]

A full description of the case ab < 1 as well as the following extension to Schwartz class distributions appears in ref.[50]

Theorem. If a tempered distribution $f \in \mathcal{S}'(\mathbf{R}^d)$ is such that

$$e^{\pi|x|^2} f \in \mathcal{S}'(\mathbf{R}^d)$$

and

$$e^{\pi|\xi|^2} \hat{f} \in \mathcal{S}'(\mathbf{R}^d),$$

then

$$f(x) = P(x)\, e^{-\pi\langle Ax,x\rangle}$$

for some convenient polynomial P and real positive definite matrix A of type d × d.

History

Werner Heisenberg formulated the uncertainty principle at Niels Bohr's institute in Copenhagen, while working on the mathematical foundations of quantum mechanics.[51]

In 1925, following pioneering work with Hendrik Kramers, Heisenberg developed matrix mechanics, which replaced the ad hoc old quantum theory with modern quantum mechanics. The central premise was that the classical concept of motion does not fit at the quantum level, as electrons in an atom do not travel on sharply defined orbits. Rather, their motion is smeared out in a strange way: the Fourier transform of its time dependence only involves those frequencies that could be observed in the quantum jumps of their radiation.

Heisenberg's paper did not admit any unobservable quantities like the exact position of the electron in an orbit at any time; he only allowed the theorist to talk about the Fourier components of the motion. Since the Fourier components were not defined at the classical frequencies, they could not be used to construct an exact trajectory, so that the formalism could not answer certain overly precise questions about where the electron was or how fast it was going.

In March 1926, working in Bohr's institute, Heisenberg realized that the non-commutativity implies the uncertainty principle. This implication provided a clear physical interpretation for the non-commutativity, and it laid the foundation for what became known as the Copenhagen interpretation of quantum mechanics. Heisenberg showed that the commutation relation implies an uncertainty, or in Bohr's language a complementarity.[52] Any two variables that do not commute cannot be measured simultaneously—the more precisely one is known, the less precisely the other can be known. Heisenberg wrote:

It can be expressed in its simplest form as follows: One can never know with perfect accuracy both of those two important factors which determine the movement of one of the smallest particles, its position and its velocity. It is impossible to determine accurately both the position and the direction and speed of a particle at the same instant.[53]

In his celebrated 1927 paper, "Über den anschaulichen Inhalt der quantentheoretischen Kinematik und Mechanik" ("On the Perceptual Content of Quantum Theoretical Kinematics and Mechanics"), Heisenberg established this expression as the minimum amount of unavoidable momentum disturbance caused by any position measurement,[1] but he did not give a precise definition for the uncertainties Δx and Δp. Instead, he gave some plausible estimates in each case separately. In his Chicago lecture[54] he refined his principle:

$$\Delta x \, \Delta p \gtrsim h \qquad (1)$$

Kennard in 1927 first proved the modern inequality:

$$\sigma_x \sigma_p \geq \frac{\hbar}{2} \qquad (2)$$

where ħ = h/2π, and σx, σp are the standard deviations of position and momentum.

Terminology and translation

Throughout the main body of his original 1927 paper, written in German, Heisenberg used the word "Ungenauigkeit" ("indeterminacy")[1] to describe the basic theoretical principle. Only in the endnote did he switch to the word "Unsicherheit" ("uncertainty"). When the English-language version of Heisenberg's textbook, The Physical Principles of the Quantum Theory, was published in 1930, however, the translation "uncertainty" was used, and it became the more commonly used term in the English language thereafter.[55]

Heisenberg's microscope

Heisenberg's gamma-ray microscope for locating an electron (shown in blue). The incoming gamma ray (shown in green) is scattered by the electron up into the microscope's aperture angle θ. The scattered gamma-ray is shown in red. Classical optics shows that the electron position can be resolved only up to an uncertainty Δx that depends on θ and the wavelength λ of the incoming light.

Heisenberg imagined an experimenter trying to measure the position and momentum of an electron by shooting a photon at it.

- Problem 1 – If the photon has a short wavelength, and therefore, a large momentum, the position can be measured accurately. But the photon scatters in a random direction, transferring a large and uncertain amount of momentum to the electron. If the photon has a long wavelength and low momentum, the collision does not disturb the electron's momentum very much, but the scattering will reveal its position only vaguely.

- Problem 2 – If a large aperture is used for the microscope, the electron's location can be well resolved (see Rayleigh criterion); but by the principle of conservation of momentum, the transverse momentum of the incoming photon and hence, the new momentum of the electron resolves poorly. If a small aperture is used, the accuracy of both resolutions is the other way around.
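The two problems above can be combined into an order-of-magnitude estimate. The sketch below is a heuristic, not Heisenberg's own calculation: it assumes the resolution limit Δx ≈ λ/sin θ, a transverse momentum kick Δp ≈ (h/λ) sin θ, and a hypothetical function name. Under those assumptions the wavelength and the aperture angle cancel in the product.

```python
import math

PLANCK_H = 6.62607015e-34  # Planck constant in J*s (exact SI value)


def microscope_estimate(wavelength_m, half_angle_rad):
    """Heuristic (delta_x, delta_p) for a gamma-ray microscope.

    Assumes delta_x ~ lambda / sin(theta) for the optical resolution and
    delta_p ~ (h / lambda) * sin(theta) for the unknown transverse recoil.
    """
    delta_x = wavelength_m / math.sin(half_angle_rad)
    delta_p = (PLANCK_H / wavelength_m) * math.sin(half_angle_rad)
    return delta_x, delta_p


if __name__ == "__main__":
    theta = math.radians(30)
    for wavelength in (1e-12, 1e-10, 5e-7):  # gamma ray, X-ray, visible light
        dx, dp = microscope_estimate(wavelength, theta)
        print(f"lambda={wavelength:.0e} m  dx={dx:.2e} m  dp={dp:.2e} kg*m/s  "
              f"dx*dp/h={dx * dp / PLANCK_H:.2f}")
```

A shorter wavelength sharpens Δx and enlarges Δp by the same factor, so the product remains of order h whatever photon or aperture is chosen.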

Critical reactions

The Copenhagen interpretation of quantum mechanics and Heisenberg's uncertainty principle were, in fact, seen as twin targets by detractors who believed in an underlying determinism and realism. According to the Copenhagen interpretation of quantum mechanics, there is no fundamental reality that the quantum state describes, just a prescription for calculating experimental results. There is no way to say what the state of a system fundamentally is, only what the result of observations might be.

Albert Einstein believed that randomness is a reflection of our ignorance of some fundamental property of reality, while Niels Bohr believed that the probability distributions are fundamental and irreducible, and depend on which measurements we choose to perform. Einstein and Bohr debated the uncertainty principle for many years. Some experiments within the first decade of the twenty-first century have cast doubt on how extensively the uncertainty principle applies.[57]

Einstein's slit

The first of Einstein's thought experiments challenging the uncertainty principle went as follows:

- Consider a particle passing through a slit of width d. The slit introduces an uncertainty in momentum of approximately h/d because the particle passes through the wall. But let us determine the momentum of the particle by measuring the recoil of the wall. In doing so, we find the momentum of the particle to arbitrary accuracy by conservation of momentum.

A similar analysis with particles diffracting through multiple slits is given by Richard Feynman.[58]

In another thought experiment, Lawrence Marq Goldberg theorized that one could, for example, determine the position of a particle and then travel back in time to a point before the first reading to measure the velocity, then travel back in time again to a point before the second (earlier) reading was taken to deliver the resulting measurements before the particle was disturbed, so that the measurements did not need to be taken. This, of course, would result in a temporal paradox. But it does support his contention that "the problems inherent to the uncertainty principle lay in the measuring not in the 'uncertainty' of physics."[citation needed]

Einstein's box

Bohr was present when Einstein proposed the thought experiment which has become known as Einstein's box. Einstein argued that "Heisenberg's uncertainty equation implied that the uncertainty in time was related to the uncertainty in energy, the product of the two being related to Planck's constant."[59] Consider, he said, an ideal box, lined with mirrors so that it can contain light indefinitely. The box could be weighed before a clockwork mechanism opened an ideal shutter at a chosen instant to allow one single photon to escape. "We now know, explained Einstein, precisely the time at which the photon left the box."[60] "Now, weigh the box again. The change of mass tells the energy of the emitted light. In this manner, said Einstein, one could measure the energy emitted and the time it was released with any desired precision, in contradiction to the uncertainty principle."[59]

Bohr spent a sleepless night considering this argument, and eventually realized that it was flawed. He pointed out that if the box were to be weighed, say by a spring and a pointer on a scale, "since the box must move vertically with a change in its weight, there will be uncertainty in its vertical velocity and therefore an uncertainty in its height above the table. ... Furthermore, the uncertainty about the elevation above the earth's surface will result in an uncertainty in the rate of the clock,"[61] because of Einstein's own theory of gravity's effect on time. "Through this chain of uncertainties, Bohr showed that Einstein's light box experiment could not simultaneously measure exactly both the energy of the photon and the time of its escape."[62]

EPR paradox for entangled particles

Bohr was compelled to modify his understanding of the uncertainty principle after another thought experiment by Einstein. In 1935, Einstein, Podolsky and Rosen (see EPR paradox) published an analysis of widely separated entangled particles. Measuring one particle, Einstein realized, would alter the probability distribution of the other, yet here the other particle could not possibly be disturbed. This example led Bohr to revise his understanding of the principle, concluding that the uncertainty was not caused by a direct interaction.[63]

But Einstein came to much more far-reaching conclusions from the same thought experiment. He believed the "natural basic assumption" that a complete description of reality would have to predict the results of experiments from "locally changing deterministic quantities", and therefore would have to include more information than the maximum possible allowed by the uncertainty principle.

In 1964, John Bell showed that this assumption can be falsified, since it would imply a certain inequality between the probabilities of different experiments. Experimental results confirm the predictions of quantum mechanics, ruling out Einstein's basic assumption that led him to the suggestion of his hidden variables. Ironically, this fact is one of the best pieces of evidence supporting Karl Popper's philosophy of invalidation of a theory by falsification experiments. That is to say, here Einstein's "basic assumption" was falsified by experiments based on Bell's inequalities. For the objections of Karl Popper to the Heisenberg inequality itself, see below.
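As an illustration of the kind of inequality involved, the sketch below evaluates the CHSH combination of correlations for a spin singlet. CHSH is one widely used Bell-type inequality; the text above does not say which form is meant, so this choice is an assumption, as are the measurement angles and the helper names `spin_along`, `correlation` and `chsh_value`. Any local hidden-variable model satisfies |S| ≤ 2, while the quantum prediction for these settings has magnitude 2√2.

```python
import numpy as np

# Pauli matrices (spin observables with eigenvalues +1 and -1).
SX = np.array([[0, 1], [1, 0]], dtype=complex)
SZ = np.array([[1, 0], [0, -1]], dtype=complex)

# Singlet state (|01> - |10>) / sqrt(2) of two spin-1/2 particles.
SINGLET = np.array([0, 1, -1, 0], dtype=complex) / np.sqrt(2)


def spin_along(theta):
    """Spin observable along an axis at angle theta in the x-z plane."""
    return np.cos(theta) * SZ + np.sin(theta) * SX


def correlation(state, theta_a, theta_b):
    """Expectation value of the product of the two local spin measurements."""
    op = np.kron(spin_along(theta_a), spin_along(theta_b))
    return float(np.real(np.vdot(state, op @ state)))


def chsh_value(state, a, a2, b, b2):
    """CHSH combination E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return (correlation(state, a, b) - correlation(state, a, b2)
            + correlation(state, a2, b) + correlation(state, a2, b2))


# Illustrative settings (hypothetical choice): a=0, a'=pi/2, b=pi/4, b'=3pi/4.
S = chsh_value(SINGLET, 0.0, np.pi / 2, np.pi / 4, 3 * np.pi / 4)
print(abs(S), "exceeds the local bound 2:", abs(S) > 2)
```

The calculation only reproduces the quantum-mechanical prediction; that nature agrees with it is the experimental result referred to above.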

While it is possible to assume that quantum mechanical predictions are due to nonlocal, hidden variables, and in fact David Bohm invented such a formulation, this resolution is not satisfactory to the vast majority of physicists. The question of whether a random outcome is predetermined by a nonlocal theory can be philosophical, and it can be potentially intractable. If the hidden variables are not constrained, they could just be a list of random digits that are used to produce the measurement outcomes. To make it sensible, the assumption of nonlocal hidden variables is sometimes augmented by a second assumption: that the size of the observable universe puts a limit on the computations that these variables can do. A nonlocal theory of this sort predicts that a quantum computer would encounter fundamental obstacles when attempting to factor numbers of approximately 10,000 digits or more, a potentially achievable task in quantum mechanics.[64]

Popper's criticism

Karl Popper approached the problem of indeterminacy as a logician and metaphysical realist.[65] He disagreed with the application of the uncertainty relations to individual particles rather than to ensembles of identically prepared particles, referring to them as "statistical scatter relations".[65][66] In this statistical interpretation, a particular measurement may be made to arbitrary precision without invalidating the quantum theory. This directly contrasts with the Copenhagen interpretation of quantum mechanics, which is non-deterministic but lacks local hidden variables.

In 1934, Popper published Zur Kritik der Ungenauigkeitsrelationen (Critique of the Uncertainty Relations) in Naturwissenschaften,[67] and in the same year Logik der Forschung (translated and updated by the author as The Logic of Scientific Discovery in 1959), outlining his arguments for the statistical interpretation. In 1982, he further developed his theory in Quantum Theory and the Schism in Physics, writing:

[Heisenberg's] formulae are, beyond all doubt, derivable statistical formulae of the quantum theory. But they have been habitually misinterpreted by those quantum theorists who said that these formulae can be interpreted as determining some upper limit to the precision of our measurements.[original emphasis][68]

Popper proposed an experiment to falsify the uncertainty relations, although he later withdrew his initial version after discussions with Weizsäcker, Heisenberg, and Einstein; this experiment may have influenced the formulation of the EPR experiment.[65][69]

![\operatorname P [a \leq X \leq b] = \int_a^b |\psi(x)|^2 \, \mathrm{d}x ~.](http://upload.wikimedia.org/math/d/b/d/dbd1b6189efb3bf4724e428287398c95.png)

![[\hat{A},\hat{B}]=\hat{A}\hat{B}-\hat{B}\hat{A}.](http://upload.wikimedia.org/math/e/2/3/e23a00629cd00fab0f6dfba5d63aa709.png)

![[\hat{x},\hat{p}]=i \hbar.](http://upload.wikimedia.org/math/2/3/a/23a7e22ff4e4236725ea319f343dd032.png)

![[\hat{x},\hat{p}] | \psi \rangle = (\hat{x}\hat{p}-\hat{p}\hat{x}) | \psi \rangle = (\hat{x} - x_0 \hat{I}) \cdot \hat{p} \, | \psi \rangle = i \hbar | \psi \rangle,](http://upload.wikimedia.org/math/3/a/0/3a00a6bbc9711b1b8c6252d0c9fe4fd6.png)

![[\hat{x},\hat{p}] | \psi \rangle=i \hbar | \psi \rangle \ne 0.](http://upload.wikimedia.org/math/8/6/a/86a344b490481ec227e81f013f39e501.png)

![[\hat{A},\hat{B}]=\hat{A}\hat{B}-\hat{B}\hat{A}](http://upload.wikimedia.org/math/b/8/6/b86e94693709831c521a381b9225a8fc.png)

![\sigma_{A}\sigma_{B} \geq \left| \frac{1}{2i}\langle[\hat{A},\hat{B}]\rangle \right| = \frac{1}{2}\left|\langle[\hat{A},\hat{B}]\rangle \right|](http://upload.wikimedia.org/math/7/d/5/7d56b7dd58c260f2ea75e836eed7d980.png)

![\sigma_{A}^2\sigma_{B}^2 \geq \left| \frac{1}{2}\langle\{\hat{A},\hat{B}\}\rangle - \langle \hat{A} \rangle\langle \hat{B}\rangle \right|^{2}+ \left|\frac{1}{2i}\langle[\hat{A},\hat{B}]\rangle\right|^{2}](http://upload.wikimedia.org/math/1/a/c/1ace48ae641dd63c20628ef723bd3bc1.png)
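The two inequalities above, the commutator bound and the stronger form that adds the anticommutator term, can be checked numerically on a small example. The sketch below is an illustration under stated assumptions: units with ħ = 1, spin-1/2 operators built from Pauli matrices, and a randomly drawn pure state. The helper names `expval` and `check_relations` are hypothetical.

```python
import numpy as np


def expval(op, psi):
    """Expectation value <psi|op|psi> for a normalized state vector psi."""
    return np.vdot(psi, op @ psi)


def check_relations(A, B, psi):
    """Return (var_A * var_B, Schrodinger bound, Robertson bound) for Hermitian A, B."""
    psi = psi / np.linalg.norm(psi)
    mean_a = expval(A, psi).real
    mean_b = expval(B, psi).real
    var_a = expval(A @ A, psi).real - mean_a ** 2
    var_b = expval(B @ B, psi).real - mean_b ** 2
    comm = expval(A @ B - B @ A, psi)        # purely imaginary for Hermitian A, B
    anti = expval(A @ B + B @ A, psi).real   # real for Hermitian A, B
    robertson = abs(comm / 2j) ** 2
    schrodinger = (anti / 2 - mean_a * mean_b) ** 2 + robertson
    return var_a * var_b, schrodinger, robertson


# Spin-1/2 operators S = sigma / 2 in units with hbar = 1 (assumed for illustration).
SX = np.array([[0, 1], [1, 0]], dtype=complex) / 2
SY = np.array([[0, -1j], [1j, 0]], dtype=complex) / 2

rng = np.random.default_rng(0)
psi = rng.normal(size=2) + 1j * rng.normal(size=2)

lhs, schrodinger, robertson = check_relations(SX, SY, psi)
print(lhs, ">=", schrodinger, ">=", robertson)
```

The comparison should be read up to floating-point rounding, since the product of variances can lie very close to the bound.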

The commutation relation ![[\hat{x},\hat{p}]=i\hbar](http://upload.wikimedia.org/math/4/8/0/48027576a2df700499372764cdaedfc7.png) implies the Kennard inequality from above: σx σp ≥ ħ/2.

With ![[{J_x}, {J_y}] = i \hbar \epsilon_{xyz} {J_z}](http://upload.wikimedia.org/math/f/0/3/f03a13c7d247c8cfaf41a331662cd4a2.png), a choice Â = Jx, B̂ = Jy, in angular momentum multiplets, ψ = |j, m〉, bounds the Casimir invariant (angular momentum squared, 〈Jx² + Jy² + Jz²〉) from below and thus yields useful constraints such as j (j + 1) ≥ m (m + 1), and hence j ≥ m, among others.
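The constraint j (j + 1) ≥ m (m + 1) can be traced in a few steps. This is a sketch under the standard assumptions that J² |j, m〉 = ħ² j (j + 1) |j, m〉 and Jz |j, m〉 = ħ m |j, m〉, that 〈Jx〉 = 〈Jy〉 = 0 and 〈Jx²〉 = 〈Jy²〉 in such a state, and, for definiteness, that m ≥ 0:

```latex
\begin{aligned}
\sigma_{J_x}^{2} = \sigma_{J_y}^{2}
  &= \tfrac{1}{2}\,\langle J^{2} - J_z^{2} \rangle
   = \tfrac{\hbar^{2}}{2}\,\bigl[\, j(j+1) - m^{2} \,\bigr], \\
\sigma_{J_x}\,\sigma_{J_y}
  &\geq \tfrac{1}{2}\,\bigl|\langle [J_x, J_y] \rangle\bigr|
   = \tfrac{\hbar}{2}\,\bigl|\langle J_z \rangle\bigr|
   = \tfrac{\hbar^{2}}{2}\, m, \\
\Longrightarrow\quad j(j+1) - m^{2} &\geq m
  \quad\Longleftrightarrow\quad j(j+1) \geq m(m+1).
\end{aligned}
```

Since x (x + 1) is increasing for x ≥ 0, the last line gives j ≥ m.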


![\sigma_{A}^{2}\sigma_{B}^{2}\geq \left(\frac{1}{2}\mathrm{tr}(\rho\{A,B\})-\operatorname{tr}(\rho A)\mathrm{tr}(\rho B)\right)^{2}+\left(\frac{1}{2i}\mathrm{tr}(\rho[A,B])\right)^{2}](http://upload.wikimedia.org/math/0/9/5/0950012887951d5d50dc75bd1fbe34d5.png)

![\epsilon_A \eta_B + \epsilon_A \sigma_B + \sigma_A \eta_B \ge \left| \frac{1}{2i} \langle [\hat{A},\hat{B}] \rangle \right|](http://upload.wikimedia.org/math/8/8/5/885d64a69bee66691c86758ba00aebb8.png)

(Heisenberg).

![\begin{align}H_x &= - \int |\psi(x)|^2 \ln (|\psi(x)|^2 \cdot \ell ) \,dx \\
&= -\frac{1}{\ell \sqrt{2\pi}} \int_{-\infty}^{\infty} \exp{\left( -\frac{x^2}{2\ell^2}\right)} \ln \left[\frac{1}{\sqrt{2\pi}} \exp{\left( -\frac{x^2}{2\ell^2}\right)}\right] \, dx \\
&= \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} \exp{\left( -\frac{u^2}{2}\right)} \left[\ln(\sqrt{2\pi}) + \frac{u^2}{2}\right] \, du\\
&= \ln(\sqrt{2\pi}) + \frac{1}{2}.\end{align}](http://upload.wikimedia.org/math/0/c/5/0c5a59b8dc9d13d2f32a12658647485d.png)

![\begin{align}H_p &= - \int |\phi(p)|^2 \ln (|\phi(p)|^2 \cdot \hbar / \ell ) \,dp \\
&= -\sqrt{\frac{2 \ell^2}{\pi \hbar^2}} \int_{-\infty}^{\infty} \exp{\left( -\frac{2\ell^2 p^2}{\hbar^2}\right)} \ln \left[\sqrt{\frac{2}{\pi}} \exp{\left( -\frac{2\ell^2 p^2}{\hbar^2}\right)}\right] \, dp \\
&= \sqrt{\frac{2}{\pi}} \int_{-\infty}^{\infty} \exp{\left( -2v^2\right)} \left[\ln\left(\sqrt{\frac{\pi}{2}}\right) + 2v^2 \right] \, dv \\
&= \ln\left(\sqrt{\frac{\pi}{2}}\right) + \frac{1}{2}.\end{align}](http://upload.wikimedia.org/math/f/0/e/f0e11edecbefec0bdf61037f3798c290.png)
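The two closed-form entropies above can be cross-checked by direct numerical integration. The sketch below assumes the Gaussian densities that appear in the integrands (position width ℓ, momentum width ħ/(2ℓ)) and uses a plain Riemann sum on a uniform grid. The function name `gaussian_entropies` and its grid parameters are illustrative choices.

```python
import numpy as np


def gaussian_entropies(ell=1.0, hbar=1.0, n=20001, span=12.0):
    """Numerically evaluate H_x and H_p for the Gaussian state of width ell.

    The densities are those in the integrals above; the grid covers
    +/- span standard deviations, where the integrands are negligible.
    """
    sigma_x = ell
    sigma_p = hbar / (2.0 * ell)
    x = np.linspace(-span * sigma_x, span * sigma_x, n)
    p = np.linspace(-span * sigma_p, span * sigma_p, n)

    rho_x = np.exp(-x**2 / (2 * ell**2)) / (ell * np.sqrt(2 * np.pi))
    rho_p = np.sqrt(2 * ell**2 / (np.pi * hbar**2)) * np.exp(-2 * ell**2 * p**2 / hbar**2)

    # Riemann sums; the reference scales ell and hbar/ell make the logs dimensionless.
    h_x = -np.sum(rho_x * np.log(rho_x * ell)) * (x[1] - x[0])
    h_p = -np.sum(rho_p * np.log(rho_p * hbar / ell)) * (p[1] - p[0])
    return h_x, h_p


h_x, h_p = gaussian_entropies()
print(h_x, np.log(np.sqrt(2 * np.pi)) + 0.5)
print(h_p, np.log(np.sqrt(np.pi / 2)) + 0.5)
```

Changing `ell` should leave both values unchanged, since each entropy is measured relative to its own reference scale.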
