Gene duplication (or chromosomal duplication or gene amplification) is a major mechanism through which new genetic material is generated during molecular evolution. It can be defined as any duplication of a region of DNA that contains a gene. Gene duplications can arise as products of several types of errors in DNA replication and repair machinery as well as through fortuitous capture by selfish genetic elements. Common sources of gene duplications include ectopic recombination, retrotransposition events, aneuploidy, polyploidy, and replication slippage.

Mechanisms of duplication

Ectopic recombination

Duplications arise from an event termed unequal crossing-over that occurs during meiosis between misaligned homologous chromosomes. The likelihood of this event depends on how many repetitive elements the two chromosomes share. The products of this recombination are a duplication at the site of the exchange and a reciprocal deletion. Ectopic recombination is typically mediated by sequence similarity at the duplicate breakpoints, which form direct repeats. Repetitive genetic elements such as transposable elements offer one source of repetitive DNA that can facilitate recombination, and they are often found at duplication breakpoints in plants and mammals.
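The exchange between misaligned homologs can be illustrated with a toy model. Each chromosome is reduced to a list of segment labels, where "R" stands for a shared repetitive element such as a transposable element and "G" for a gene. The function name and the labels are hypothetical and chosen only for illustration. When the crossover joins the second repeat of one homolog to the first repeat of the other, it yields one product carrying two copies of the gene and a reciprocal product carrying none.

```python
from typing import List, Tuple

def unequal_crossover(h1: List[str], h2: List[str], i: int, j: int) -> Tuple[List[str], List[str]]:
    """Toy exchange between misaligned homologs at repeat copies h1[i] and h2[j]."""
    # Ectopic exchange needs sequence similarity at the two sites.
    if h1[i] != h2[j]:
        raise ValueError("crossover sites must share sequence")
    duplication = h1[:i] + h2[j:]  # left part of h1 joined to right part of h2
    deletion = h2[:j] + h1[i:]     # the reciprocal product
    return duplication, deletion

# "R" marks a repetitive element flanking gene "G" (hypothetical labels).
chrom = ["A", "R", "G", "R", "B"]
dup, dele = unequal_crossover(chrom, chrom, i=3, j=1)
print(dup)   # ['A', 'R', 'G', 'R', 'G', 'R', 'B']
print(dele)  # ['A', 'R', 'B']
```

In this model the duplicated segment is bounded by copies of "R", which matches the direct repeats described at duplication breakpoints.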

Replication slippage

Replication slippage is an error in DNA replication that can produce duplications of short genetic sequences. During replication, DNA polymerase copies the template strand. At some point during the replication process, the polymerase dissociates from the DNA and replication stalls. When the polymerase reattaches to the DNA strand, it aligns the replicating strand at an incorrect position and inadvertently copies the same section more than once. Replication slippage is also often facilitated by repetitive sequences, but requires only a few bases of similarity.
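The reattachment error can be sketched as a string operation. In this sketch the "polymerase" copies the template up to a stall point. It then re-pairs the last few newly made bases (a seed of length `k`) with an earlier matching site on the template and resumes copying from there. The function and its parameters are hypothetical, and real slippage involves strand looping rather than a literal text search.

```python
def slipped_copy(template: str, stall: int, k: int) -> str:
    """Toy replication slippage: copy `template`, stall after `stall` bases,
    then re-pair the last k new bases at an earlier matching template site."""
    made = template[:stall]
    seed = made[-k:]
    # Only occurrences ending before the seed's own copy ends can cause slippage.
    earlier = template.rfind(seed, 0, stall - 1)
    if earlier == -1:
        return template  # no misalignment possible: faithful copy
    # Resume synthesis just after the misaligned seed, re-copying the gap.
    return made + template[earlier + k:]

print(slipped_copy("ATCAGCAGGT", stall=8, k=3))  # ATCAGCAGCAGGT
```

Only three bases of similarity ("CAG") are needed here, and the product gains an extra copy of the repeat unit.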

Retrotransposition

Retrotransposons, mainly L1 (LINE-1) elements, can occasionally act on cellular mRNA. Transcripts are reverse transcribed to DNA and inserted at random places in the genome, creating retrogenes. The resulting sequences usually lack introns and often contain poly(A) sequences that are also integrated into the genome. Many retrogenes display changes in gene regulation compared with their parental gene sequences, which sometimes results in novel functions. Retrogenes can move between different chromosomes to shape chromosomal evolution.
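The pipeline from gene to retrogene can be sketched as a chain of string transformations: splicing, poly(A) addition, reverse transcription and insertion at a random site. This sketch rests on a toy convention, assumed here and not taken from the source, in which exons are written in upper case and introns in lower case. The function names and the 10-base tail length are hypothetical.

```python
import random
from typing import Tuple

def splice(gene: str) -> str:
    # Toy convention (assumption): exons are upper-case, introns lower-case.
    return "".join(base for base in gene if base.isupper())

def make_retrogene(gene: str, genome: str, rng: random.Random, polya: int = 10) -> Tuple[str, int]:
    """Insert an intronless, poly(A)-tailed cDNA copy of `gene` at a random site."""
    mrna = splice(gene).replace("T", "U") + "A" * polya  # processed mRNA with poly(A) tail
    cdna = mrna.replace("U", "T")                         # reverse transcription back to DNA
    site = rng.randrange(len(genome) + 1)                 # random insertion point
    return genome[:site] + cdna + genome[site:], site

gene = "ATGGCCgtaagtctagGATTAA"  # exon, intron, exon
genome = "C" * 20
new_genome, site = make_retrogene(gene, genome, random.Random(7))
print(new_genome[site:site + 22] == splice(gene) + "A" * 10)  # True
```

The inserted copy keeps only the exon sequence plus its tail, which reflects why retrogenes usually lack introns.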

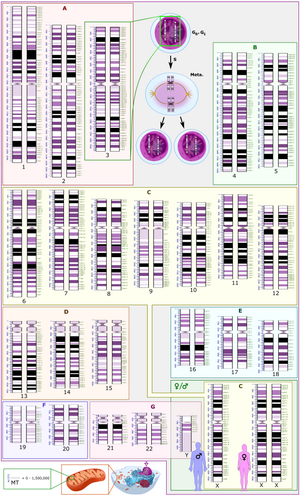

Aneuploidy

Aneuploidy occurs when nondisjunction at a single chromosome results in an abnormal number of chromosomes. Aneuploidy is often harmful and in mammals regularly leads to spontaneous abortions (miscarriages). Some aneuploid individuals are viable, for example trisomy 21 in humans, which leads to Down syndrome. Aneuploidy often alters gene dosage in ways that are detrimental to the organism; therefore, it is unlikely to spread through populations.

Polyploidy

Polyploidy, or whole genome duplication, is a product of nondisjunction during meiosis that results in additional copies of the entire genome. Polyploidy is common in plants, but it has also occurred in animals, with two rounds of whole genome duplication (the 2R event) in the vertebrate lineage leading to humans. It also occurred in the hemiascomycete yeasts about 100 million years ago.

After a whole genome duplication, there is a relatively short period of genome instability, extensive gene loss, elevated levels of nucleotide substitution and regulatory network rewiring. Gene dosage effects also play a significant role. As a result, most duplicates are lost within a short period; however, a considerable fraction survive. Interestingly, genes involved in regulation are preferentially retained, and the retention of regulatory genes, most notably the Hox genes, has led to adaptive innovation.

Rapid evolution and functional divergence have been observed at the level of the transcription of duplicated genes, usually through point mutations in short transcription factor binding motifs. In addition, the rapid evolution of protein phosphorylation motifs, usually embedded within rapidly evolving intrinsically disordered regions, is another factor contributing to the survival and rapid adaptation or neofunctionalization of duplicate genes. Thus, a link seems to exist between gene regulation (at least at the post-translational level) and genome evolution.

Polyploidy is also a well-known source of speciation, as offspring, which have different numbers of chromosomes compared to the parent species, are often unable to interbreed with non-polyploid organisms. Whole genome duplications are thought to be less detrimental than aneuploidy because the relative dosage of individual genes should remain the same.
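The dosage argument can be made concrete by comparing each chromosome's copy number with the genome's most common (modal) copy number. The karyotypes below list only the 22 human autosomes and ignore sex chromosomes. The function name and this normalization are illustrative assumptions, not a standard measure.

```python
from collections import Counter
from fractions import Fraction
from typing import Dict

def relative_dosage(karyotype: Dict[str, int]) -> Dict[str, Fraction]:
    """Copy number of each chromosome relative to the genome's modal copy number."""
    baseline = Counter(karyotype.values()).most_common(1)[0][0]
    return {chrom: Fraction(n, baseline) for chrom, n in karyotype.items()}

diploid = {str(c): 2 for c in range(1, 23)}  # autosomes only (simplification)
trisomy21 = {**diploid, "21": 3}             # aneuploid: one extra chromosome 21
triploid = {c: 3 for c in diploid}           # polyploid: every chromosome tripled

print(relative_dosage(trisomy21)["21"])         # 3/2
print(set(relative_dosage(triploid).values()))  # {Fraction(1, 1)}
```

In this model, genes on the trisomic chromosome sit at 1.5 times the dosage of all other genes. In the triploid, every ratio stays at 1, which is the balance that makes whole genome duplication less disruptive.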

As an evolutionary event

Rate of gene duplication

Comparisons of genomes demonstrate that gene duplications are common in most species investigated. This is indicated by variable copy numbers (copy number variation) in the genomes of humans and fruit flies. However, it has been difficult to measure the rate at which such duplications occur. Recent studies yielded the first direct estimate of the genome-wide rate of gene duplication in C. elegans, the first multicellular eukaryote for which such an estimate became available. The gene duplication rate in C. elegans is on the order of 10⁻⁷ duplications/gene/generation; that is, in a population of 10 million worms, on average one worm will carry a new duplication of a given gene per generation. This rate is two orders of magnitude greater than the spontaneous rate of point mutation per nucleotide site in this species. Older (indirect) studies reported locus-specific duplication rates in bacteria, Drosophila, and humans ranging from 10⁻³ to 10⁻⁷/gene/generation.
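The worked example in the rate estimate is a product of rate and population size. This sketch also assumes, as a modelling choice not stated in the source, that new duplications are Poisson-distributed, which gives the chance that at least one occurs. The optional `genes` parameter is hypothetical and would scale the per-gene figure to a genome-wide count.

```python
import math

def expected_duplications(rate_per_gene_per_gen: float, population: int, genes: int = 1) -> float:
    """Expected new duplications per generation: rate x individuals x genes."""
    return rate_per_gene_per_gen * population * genes

def prob_at_least_one(expected: float) -> float:
    # Poisson approximation (assumption): P(k >= 1) = 1 - exp(-lambda)
    return 1.0 - math.exp(-expected)

lam = expected_duplications(1e-7, 10_000_000)
print(round(lam, 6))                     # 1.0
print(round(prob_at_least_one(lam), 3))  # 0.632
```

So one duplication of a given gene is expected per generation in 10 million worms. Under the Poisson assumption, about 63% of generations would contain at least one.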

Neofunctionalization

Gene duplications are an essential source of genetic novelty that can lead to evolutionary innovation. Duplication creates genetic redundancy, in which the second copy of the gene is often free from selective pressure: mutations in it have no deleterious effects on the host organism. If one copy of a gene experiences a mutation that affects its original function, the second copy can serve as a 'spare part' and continue to function correctly. Thus, duplicate genes accumulate mutations faster than a functional single-copy gene over generations of organisms, and it is possible for one of the two copies to develop a new and different function. Examples of such neofunctionalization include the apparent mutation of a duplicated digestive gene in a family of ice fish into an antifreeze gene, a duplication leading to a novel snake venom gene, and the synthesis of 1β-hydroxytestosterone in pigs.

Gene duplication is believed to play a major role in evolution; this stance has been held by members of the scientific community for over 100 years. Susumu Ohno was one of the most famous developers of this theory in his classic book Evolution by Gene Duplication (1970). Ohno argued that gene duplication is the most important evolutionary force since the emergence of the universal common ancestor. Major genome duplication events can be quite common. It is believed that the entire yeast genome underwent duplication about 100 million years ago. Plants are the most prolific genome duplicators. For example, wheat is hexaploid (a kind of polyploid), meaning that it has six copies of its genome.

Subfunctionalization

Another possible fate for duplicate genes is that both copies are equally free to accumulate degenerative mutations, so long as any defects are complemented by the other copy. This leads to a neutral "subfunctionalization" (a process of constructive neutral evolution) or DDC (duplication-degeneration-complementation) model, in which the functionality of the original gene is distributed among the two copies. Neither gene can be lost, as both now perform important non-redundant functions, but ultimately neither is able to achieve novel functionality.
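The duplication-degeneration-complementation process can be simulated with a toy model. The ancestral gene is treated as a set of independent subfunctions, for example tissue-specific regulatory elements. A degenerative loss in one copy is tolerated only while the other copy still provides that subfunction. The element names, the uniform choice of which loss occurs next, and the function names are all illustrative assumptions.

```python
import random
from typing import List, Set, Tuple

def ddc(subfunctions: Set[str], rng: random.Random) -> Tuple[Set[str], Set[str]]:
    """Toy DDC run: degenerative losses accumulate until every subfunction
    is carried by exactly one of the two duplicate copies."""
    copies: List[Set[str]] = [set(subfunctions), set(subfunctions)]
    while True:
        # A loss is tolerated only if the other copy still complements it.
        allowed = [(i, s) for i in (0, 1) for s in sorted(copies[i]) if s in copies[1 - i]]
        if not allowed:
            return copies[0], copies[1]
        i, s = rng.choice(allowed)
        copies[i].discard(s)

def fate(a: Set[str], b: Set[str]) -> str:
    # One copy keeping everything means the other copy was silenced.
    return "nonfunctionalization" if not a or not b else "subfunctionalization"

rng = random.Random(0)
runs = [fate(*ddc({"enhancer1", "enhancer2", "enhancer3"}, rng)) for _ in range(1000)]
print(runs.count("subfunctionalization") / len(runs))
```

Every run ends with the ancestral subfunctions partitioned between the two copies with no overlap. Runs in which both copies keep at least one subfunction correspond to subfunctionalization. In those runs neither copy can be lost and neither has gained anything new.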

Subfunctionalization can occur through neutral processes in which mutations accumulate with no detrimental or beneficial effects. However, in some cases subfunctionalization can occur with clear adaptive benefits. If an ancestral gene is pleiotropic and performs two functions, often neither one of these two functions can be changed without affecting the other function. In this way, partitioning the ancestral functions into two separate genes can allow for adaptive specialization of subfunctions, thereby providing an adaptive benefit.

Loss

Duplications often produce genomic variation that leads to gene dosage-dependent neurological disorders such as Rett-like syndrome and Pelizaeus–Merzbacher disease. Such detrimental mutations are likely to be lost from the population and will not be preserved or develop novel functions. However, many duplications are, in fact, neither detrimental nor beneficial, and these neutral sequences may be lost or may spread through the population through random fluctuations via genetic drift.
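The fate of a neutral duplication under drift can be sketched with the Wright-Fisher model. The model assumes a diploid population of constant size with random mating and no selection. Under this model, a new neutral allele present as a single copy among 2N gene copies eventually fixes with probability 1/(2N). The simulation below is a minimal illustration under those assumptions, and the function name is hypothetical.

```python
import random

def neutral_duplicate_fixes(n_diploid: int, rng: random.Random) -> bool:
    """Wright-Fisher sketch: follow one new neutral duplicate allele until it
    is lost (False) or fixed (True) in a diploid population of constant size."""
    size = 2 * n_diploid  # gene copies at the locus
    count = 1             # the duplication arises on a single chromosome
    while 0 < count < size:
        p = count / size
        # Each offspring copy is drawn at random from the parents (binomial sampling).
        count = sum(rng.random() < p for _ in range(size))
    return count == size

rng = random.Random(1)
n, trials = 50, 2000
fixed = sum(neutral_duplicate_fixes(n, rng) for _ in range(trials))
print(fixed / trials, "vs neutral expectation", 1 / (2 * n))
```

Most runs end in loss within a few generations. This illustrates why many neutral duplicates disappear even though they are not harmful.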

Identifying duplications in sequenced genomes

Criteria and single genome scans

The two genes that exist after a gene duplication event are called paralogs and usually code for proteins with a similar function and/or structure. By contrast, orthologous genes are present in different species and are each originally derived from the same ancestral sequence through speciation. (See Homology of sequences in genetics.)

It is important (but often difficult) to differentiate between paralogs and orthologs in biological research. Experiments on human gene function can often be carried out on other species if a homolog to a human gene can be found in the genome of that species, but only if the homolog is orthologous. If the genes are paralogs that resulted from a gene duplication event, their functions are likely to be too different. One or more copies of duplicated genes that constitute a gene family may be affected by the insertion of transposable elements, which causes significant sequence variation between them and may ultimately lead to divergent evolution. This can also reduce the chance and rate of gene conversion between the duplicates, because their sequences become less similar or entirely dissimilar.
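The distinction can be expressed as a rule on a gene tree whose internal nodes are labelled as duplication or speciation events. Two genes are paralogs if their last common ancestor node is a duplication, and orthologs if it is a speciation. The tree below is hypothetical: one duplication produced "alpha" and "beta" copies, and a later human/mouse split divided each copy. Building such a reconciled tree from real data is outside the scope of this sketch.

```python
from typing import Dict, List, Optional

# Hypothetical reconciled gene tree: child -> parent, plus internal-node events.
PARENT: Dict[str, Optional[str]] = {
    "root": None,
    "alpha": "root", "beta": "root",
    "human_alpha": "alpha", "mouse_alpha": "alpha",
    "human_beta": "beta", "mouse_beta": "beta",
}
EVENT: Dict[str, str] = {"root": "duplication", "alpha": "speciation", "beta": "speciation"}

def ancestors(node: str) -> List[str]:
    """Path from a node up to the root, starting with the node itself."""
    path: List[str] = []
    current: Optional[str] = node
    while current is not None:
        path.append(current)
        current = PARENT[current]
    return path

def relationship(g1: str, g2: str) -> str:
    """Classify two genes by the event at their last common ancestor."""
    seen = set(ancestors(g2))
    lca = next(n for n in ancestors(g1) if n in seen)
    return "paralogs" if EVENT[lca] == "duplication" else "orthologs"

print(relationship("human_alpha", "mouse_alpha"))  # orthologs
print(relationship("human_alpha", "human_beta"))   # paralogs
```

In this model, human_alpha and mouse_beta also come out as paralogs, even though they are in different species. This is why finding a homolog in another species is not enough to assume orthology.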

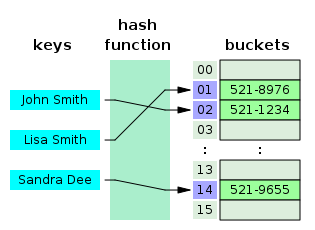

Paralogs can be identified in single genomes through a sequence comparison of all annotated gene models to one another. Such a comparison can be performed on translated amino acid sequences (e.g. BLASTp, tBLASTx) to identify ancient duplications or on DNA nucleotide sequences (e.g. BLASTn, megablast) to identify more recent duplications. Most studies to identify gene duplications require reciprocal-best-hits or fuzzy reciprocal-best-hits, where each paralog must be the other's single best match in a sequence comparison.
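The reciprocal-best-hit criterion can be sketched once an all-versus-all comparison has been reduced to pairwise scores. The scores below are hypothetical bitscores of the kind a BLASTp run might report. Parsing real BLAST output, and the "fuzzy" variant that tolerates near-ties, are not shown. Ties are broken by keeping the first hit seen, which is an arbitrary choice.

```python
from typing import Dict, FrozenSet, Set, Tuple

Scores = Dict[Tuple[str, str], float]

def best_hits(scores: Scores) -> Dict[str, str]:
    """Best-scoring non-self subject for every query (ties: first seen wins)."""
    best: Dict[str, Tuple[float, str]] = {}
    for (query, subject), score in scores.items():
        if query == subject:
            continue  # every gene trivially matches itself
        if query not in best or score > best[query][0]:
            best[query] = (score, subject)
    return {q: s for q, (_, s) in best.items()}

def reciprocal_best_hits(scores: Scores) -> Set[FrozenSet[str]]:
    """Pairs in which each gene is the other's single best match."""
    best = best_hits(scores)
    return {frozenset((q, s)) for q, s in best.items() if best.get(s) == q}

# Hypothetical all-vs-all bitscores within one genome: (query, subject) -> score.
scores = {
    ("g1", "g1"): 500, ("g1", "g2"): 250, ("g2", "g1"): 245,
    ("g1", "g3"): 80, ("g3", "g1"): 78,
    ("g3", "g4"): 300, ("g4", "g3"): 310,
    ("g2", "g4"): 50, ("g4", "g2"): 55,
    ("g5", "g1"): 90, ("g1", "g5"): 85,
}
print(sorted(sorted(pair) for pair in reciprocal_best_hits(scores)))
# [['g1', 'g2'], ['g3', 'g4']]
```

Gene g5 is excluded: its best hit is g1, but g1's best hit is g2. The reciprocity requirement is designed to filter out exactly this kind of one-sided match.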

Most gene duplications exist as low copy repeats (LCRs), rather than as highly repetitive sequences like transposable elements. They are mostly found in pericentromeric, subtelomeric and interstitial regions of a chromosome. Many LCRs, due to their size (>1 kb), similarity, and orientation, are highly susceptible to duplications and deletions.

Genomic microarrays detect duplications

Technologies such as genomic microarrays, also called array comparative genomic hybridization (array CGH), are used to detect chromosomal abnormalities, such as microduplications, in a high-throughput fashion from genomic DNA samples. More broadly, DNA microarray technology can simultaneously monitor the expression levels of thousands of genes across many treatments or experimental conditions, greatly facilitating evolutionary studies of gene regulation after gene duplication or speciation.
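Array CGH compares the hybridization signal of a test sample with that of a reference, usually reported as a log2 ratio. The idealized values below assume a diploid reference and ignore probe noise, tissue mosaicism and tumour purity. The ±0.3 calling thresholds are hypothetical placeholders; real pipelines calibrate thresholds to their arrays.

```python
import math

def log2_ratio(test_copies: int, reference_copies: int = 2) -> float:
    """Idealized array CGH signal for a region with the given copy numbers."""
    return math.log2(test_copies / reference_copies)

def call(ratio: float, gain: float = 0.3, loss: float = -0.3) -> str:
    # Thresholds are hypothetical; real analyses calibrate them to array noise.
    if ratio >= gain:
        return "gain"
    if ratio <= loss:
        return "loss"
    return "normal"

for cn in (1, 2, 3, 4):
    r = log2_ratio(cn)
    print(cn, round(r, 3), call(r))
# 1 -1.0 loss
# 2 0.0 normal
# 3 0.585 gain
# 4 1.0 gain
```

A single-copy duplication in a diploid genome gives a ratio of 3/2, or about 0.585 on the log2 scale. This is a smaller shift than a heterozygous deletion produces, which is one reason small gains can be harder to call.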

Next generation sequencing

Gene duplications can also be identified through the use of next-generation sequencing platforms. The simplest means to identify duplications in genomic resequencing data is through the use of paired-end sequencing reads. Tandem duplications are indicated by sequencing read pairs which map in abnormal orientations. Through a combination of increased sequence coverage and abnormal mapping orientation, it is possible to identify duplications in genomic sequencing data.
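The two signals can be combined in a simple classifier. This sketch assumes a standard forward-reverse paired-end library, in which concordant pairs face inward: the leftmost read is on the "+" strand and the rightmost on the "-" strand. Pairs spanning a tandem duplication junction instead face outward, with the leftmost read on "-" and the rightmost on "+". The thresholds (3 supporting pairs, a depth ratio of 1.3) and all names are hypothetical. The sketch also omits insert-size checks.

```python
from dataclasses import dataclass
from typing import Iterable

@dataclass
class ReadPair:
    left_strand: str   # strand of the read mapped further left: '+' or '-'
    right_strand: str  # strand of the read mapped further right

def orientation(pair: ReadPair) -> str:
    if (pair.left_strand, pair.right_strand) == ("+", "-"):
        return "FR"  # inward-facing: the expected, concordant orientation
    if (pair.left_strand, pair.right_strand) == ("-", "+"):
        return "RF"  # outward-facing (everted): tandem-duplication signature
    return "same-strand"  # typically associated with inversions instead

def tandem_dup_candidate(pairs: Iterable[ReadPair], region_depth: float, genome_depth: float,
                         min_support: int = 3, min_ratio: float = 1.3) -> bool:
    """Require both everted read pairs and elevated coverage (hypothetical thresholds)."""
    everted = sum(orientation(p) == "RF" for p in pairs)
    return everted >= min_support and region_depth / genome_depth >= min_ratio

pairs = [ReadPair("+", "-")] * 10 + [ReadPair("-", "+")] * 4
print(tandem_dup_candidate(pairs, region_depth=45.0, genome_depth=30.0))  # True
```

One extra copy of a region in a diploid genome raises expected coverage by a factor of 3/2. Requiring both signals guards against cases where either one appears alone because of mapping artefacts.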

Nomenclature

The International System for Human Cytogenomic Nomenclature (ISCN) is an international standard for human chromosome nomenclature, which includes band names, symbols and abbreviated terms used in the description of human chromosome and chromosome abnormalities. Abbreviations include dup for duplications of parts of a chromosome. For example, dup(17p12) causes Charcot–Marie–Tooth disease type 1A.
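The example term can be decomposed with a small parser. In band notation, "17p12" means chromosome 17, short arm (p), region 1, band 2, and an optional sub-band follows a period, as in "q11.23". This sketch handles only the compact single-band form used in the example above. It is not a full ISCN parser: interstitial ranges and the other formal ISCN syntax are not covered, and the class and function names are hypothetical.

```python
import re
from typing import NamedTuple, Optional

class Band(NamedTuple):
    chromosome: str
    arm: str               # 'p' = short arm, 'q' = long arm
    region: int
    band: int
    sub_band: Optional[str]

# Compact form only, e.g. dup(17p12) or dup(7q11.23) (simplifying assumption).
DUP_RE = re.compile(r"^dup\((\d{1,2}|X|Y)([pq])(\d)(\d)(?:\.(\d+))?\)$")

def parse_dup(term: str) -> Band:
    m = DUP_RE.match(term)
    if m is None:
        raise ValueError(f"unsupported notation: {term!r}")
    chrom, arm, region, band, sub = m.groups()
    return Band(chrom, arm, int(region), int(band), sub)

print(parse_dup("dup(17p12)"))
# Band(chromosome='17', arm='p', region=1, band=2, sub_band=None)
```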

As amplification

Gene duplication does not necessarily constitute a lasting change in a species' genome. In fact, such changes often don't last past the initial host organism. From the perspective of molecular genetics, gene amplification is one of many ways in which a gene can be overexpressed. Genetic amplification can occur artificially, as with the use of the polymerase chain reaction technique to amplify short strands of DNA in vitro using enzymes, or it can occur naturally, as described above. Even a natural duplication may take place in a somatic cell rather than a germline cell, and only a germline duplication can produce a lasting evolutionary change.
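Artificial amplification by PCR is commonly summarized by an idealized exponential model, N = N₀(1 + E)ⁿ. Here N₀ is the starting number of template copies, E is the per-cycle efficiency (1.0 means perfect doubling) and n is the number of cycles. The model ignores the plateau reached as reagents run out, and the function name is hypothetical.

```python
def pcr_copies(initial: int, cycles: int, efficiency: float = 1.0) -> float:
    """Idealized exponential amplification: N = N0 * (1 + E) ** n."""
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return initial * (1.0 + efficiency) ** cycles

print(pcr_copies(10, 30))                # 10737418240.0
print(round(pcr_copies(10, 30, 0.9)))    # fewer copies at 90% efficiency
```

Ten template molecules become roughly ten billion copies after 30 perfect cycles. Because the growth is exponential, small losses in efficiency compound strongly over many cycles.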

Role in cancer

Duplications of oncogenes are a common cause of many types of cancer. In such cases the genetic duplication occurs in a somatic cell and affects only the genome of the cancer cells themselves, not the entire organism, much less any subsequent offspring. Recent comprehensive patient-level classification and quantification of driver events in TCGA cohorts revealed that there are on average 12 driver events per tumor, of which 1.5 are amplifications of oncogenes.

| Cancer type | Associated gene amplifications | Prevalence of amplification in cancer type (percent) |
|---|---|---|
| Breast cancer | MYC | 20% |
| | ERBB2 (HER2) | 20% |
| | CCND1 (Cyclin D1) | 15–20% |
| | FGFR1 | 12% |
| | FGFR2 | 12% |
| Cervical cancer | MYC | 25–50% |
| | ERBB2 | 20% |
| Colorectal cancer | HRAS | 30% |
| | KRAS | 20% |
| | MYB | 15–20% |
| Esophageal cancer | MYC | 40% |
| | CCND1 | 25% |
| | MDM2 | 13% |
| Gastric cancer | CCNE (Cyclin E) | 15% |
| | KRAS | 10% |
| | MET | 10% |
| Glioblastoma | ERBB1 (EGFR) | 33–50% |
| | CDK4 | 15% |
| Head and neck cancer | CCND1 | 50% |
| | ERBB1 | 10% |
| | MYC | 7–10% |
| Hepatocellular cancer | CCND1 | 13% |
| Neuroblastoma | MYCN | 20–25% |
| Ovarian cancer | MYC | 20–30% |
| | ERBB2 | 15–30% |
| | AKT2 | 12% |
| Sarcoma | MDM2 | 10–30% |
| | CDK4 | 10% |
| Small cell lung cancer | MYC | 15–20% |

Whole-genome duplications are also frequent in cancers, detected in 30% to 36% of tumors from the most common cancer types. Their exact role in carcinogenesis is unclear, but in some cases they lead to a loss of chromatin segregation, which causes changes in chromatin conformation that in turn lead to oncogenic epigenetic and transcriptional modifications.