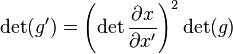

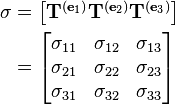

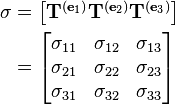

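Cauchy stress tensor, a second-order tensor. The tensor's components, in a three-dimensional Cartesian coordinate system, form the matrix

$$\begin{bmatrix} T_{11} & T_{12} & T_{13} \\ T_{21} & T_{22} & T_{23} \\ T_{31} & T_{32} & T_{33} \end{bmatrix}$$

whose columns are the stresses (forces per unit area) acting on the $\mathbf{e}_1$, $\mathbf{e}_2$, and $\mathbf{e}_3$ faces of the cube.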

Tensors are geometric objects that describe linear relations between vectors, scalars, and other tensors. Elementary examples of such relations include the dot product, the cross product, and linear maps. Vectors and scalars themselves are also tensors. A tensor can be represented as a multi-dimensional array of numerical values. The order (also degree) of a tensor is the dimensionality of the array needed to represent it, or equivalently, the number of indices needed to label a component of that array. For example, a linear map can be represented by a matrix (a 2-dimensional array) and therefore is a 2nd-order tensor. A vector can be represented as a 1-dimensional array and is a 1st-order tensor. Scalars are single numbers and are thus 0th-order tensors. The dimensionality of the array should not be confused with the dimension of the underlying vector space.

Tensors are used to represent correspondences between sets of geometric vectors; for applications in engineering and Newtonian physics these are normally Euclidean vectors. For example, the Cauchy stress tensor T takes a direction v as input and produces the stress T(v) on the surface normal to this vector as output, thus expressing a relationship between these two vectors, as shown in the figure.
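The input-output reading of the stress tensor can be made concrete with a small sketch. The component values below are arbitrary illustrative numbers, and the helper name `apply_tensor` is an assumption introduced here, not notation from the article.

```python
def apply_tensor(T, v):
    """Return the components of T(v) for a second-order tensor T (nested list)."""
    return [sum(T[i][j] * v[j] for j in range(len(v))) for i in range(len(T))]

# Hypothetical stress components in a Cartesian basis (arbitrary units).
# Column j holds the stress acting on the face normal to e_j.
stress = [
    [10.0, 2.0, 0.0],
    [2.0, 5.0, 1.0],
    [0.0, 1.0, 3.0],
]

e1 = [1.0, 0.0, 0.0]
stress_on_e1_face = apply_tensor(stress, e1)  # picks out the first column
```

Because the map is linear, scaling the input direction scales the output by the same factor.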

Because they express a relationship between vectors, tensors themselves must be independent of a particular choice of coordinate system. Finding the representation of a tensor in terms of a coordinate basis results in an organized multidimensional array representing the tensor in that basis or frame of reference. The coordinate independence of a tensor then takes the form of a "covariant" transformation law that relates the array computed in one coordinate system to that computed in another one. The precise form of the transformation law determines the type (or valence) of the tensor. The tensor type is a pair of natural numbers (n, m), where n is the number of contravariant indices and m is the number of covariant indices. The total order of a tensor is the sum of these two numbers.

Tensors are important in physics because they provide a concise mathematical framework for formulating and solving physics problems in areas such as elasticity, fluid mechanics, and general relativity. Tensors were first conceived by Tullio Levi-Civita and Gregorio Ricci-Curbastro, who continued the earlier work of Bernhard Riemann, Elwin Bruno Christoffel, and others, as part of the absolute differential calculus. The concept enabled an alternative formulation of the intrinsic differential geometry of a manifold in the form of the Riemann curvature tensor.[1]

Definition

There are several approaches to defining tensors. Although seemingly different, the approaches just describe the same geometric concept using different languages and at different levels of abstraction.

As multidimensional arrays

Just as a vector with respect to a given basis is represented by an array of one dimension, any tensor with respect to a basis is represented by a multidimensional array. The numbers in the array are known as the scalar components of the tensor or simply its components. They are denoted by indices giving their position in the array, as subscripts and superscripts, after the symbolic name of the tensor. In most cases, the indices of a tensor are either covariant or contravariant, designated by subscript or superscript, respectively. The total number of indices required to uniquely select each component is equal to the dimension of the array, and is called the order, degree or rank of the tensor.[Note 1] For example, the entries of an order 2 tensor T would be denoted $T_{ij}$, $T_i{}^j$, $T^i{}_j$, or $T^{ij}$, where i and j are indices running from 1 to the dimension of the related vector space.[Note 2] When the basis and its dual coincide (i.e. for an orthonormal basis), the distinction between contravariant and covariant indices may be ignored; in these cases $T_{ij}$ or $T^{ij}$ could be used interchangeably.[Note 3]
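As a rough illustration of the order as the number of indices needed to select a component, the sketch below counts the nesting depth of an array stored as nested Python lists. The helper name `order` and the sample values are assumptions made for this example.

```python
def order(array):
    """Count how many indices are needed to reach a single number."""
    depth = 0
    while isinstance(array, list):
        depth += 1
        array = array[0]  # descend along the first entry of each level
    return depth

scalar = 3.0                             # no index needed
vector = [1.0, 2.0, 3.0]                 # one index, in a 3-dimensional space
linear_map = [[1.0, 0.0], [0.0, 1.0]]    # two indices, in a 2-dimensional space
```

Note that `vector` has order 1 although the underlying space is 3-dimensional: the order counts indices, not the range each index runs over.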

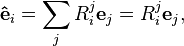

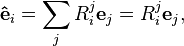

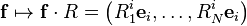

Just as the components of a vector change when we change the

basis of the vector space, the entries of a tensor also change under such a transformation. Each tensor comes equipped with a

transformation law that details how the components of the tensor respond to a

change of basis. The components of a vector can respond in two distinct ways to a

change of basis (see

covariance and contravariance of vectors), where the new basis vectors

are expressed in terms of the old basis vectors

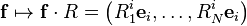

as,

where

Ri j is a matrix and in the second expression the summation sign was suppressed (

a notational convenience introduced by Einstein that will be used throughout this article).

[Note 4] The components,

vi, of a regular (or column) vector,

v, transform with the

inverse of the matrix

R,

where the hat denotes the components in the new basis. While the components,

wi, of a covector (or row vector),

w transform with the matrix R itself,

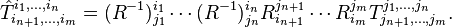
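The two behaviours can be checked numerically. The sketch below assumes a 2-dimensional space, stores the change-of-basis matrix so that `R[j][i]` holds $R^j_i$, and uses arbitrary sample values; it transforms vector components with the inverse of R and covector components with R itself, then confirms that the pairing $w_i v^i$ is unchanged.

```python
def inverse_2x2(R):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = R
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

R = [[2.0, 1.0], [0.0, 1.0]]   # R[j][i] = R^j_i, columns are the new basis vectors
R_inv = inverse_2x2(R)

v = [3.0, 4.0]    # contravariant components v^i in the old basis
w = [1.0, -2.0]   # covariant components w_i in the old basis

# Contravariant: new v^i = (R^-1)^i_j v^j
v_new = [sum(R_inv[i][j] * v[j] for j in range(2)) for i in range(2)]
# Covariant: new w_i = R^j_i w_j
w_new = [sum(R[j][i] * w[j] for j in range(2)) for i in range(2)]

pairing_old = sum(w[i] * v[i] for i in range(2))
pairing_new = sum(w_new[i] * v_new[i] for i in range(2))
```

The equality of the two pairings is what makes the scalar $w_i v^i$ independent of the basis.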

The components of a tensor transform in a similar manner with a transformation matrix for each index. If an index transforms like a vector with the inverse of the basis transformation, it is called

contravariant and is traditionally denoted with an upper index, while an index that transforms with the basis transformation itself is called

covariant and is denoted with a lower index. The transformation law for an order-

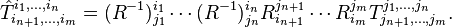

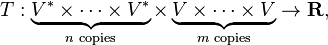

m tensor with

n contravariant indices and

m − n covariant indices is thus given as,

Such a tensor is said to be of order or

type (n, m−n).

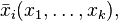

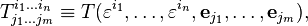

[Note 5] This discussion motivates the following formal definition:[2]

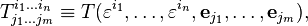

Definition. A tensor of type (n, m − n) is an assignment of a multidimensional array

$$T^{i_1\dots i_n}_{i_{n+1}\dots i_m}[\mathbf{f}]$$

to each basis $\mathbf{f} = (\mathbf{e}_1, \ldots, \mathbf{e}_N)$ such that, if we apply the change of basis

$$\mathbf{f} \mapsto \mathbf{f}\cdot R = \left(R^i_1 \mathbf{e}_i, \ldots, R^i_N \mathbf{e}_i\right),$$

then the multidimensional array obeys the transformation law

$$T^{i_1\dots i_n}_{i_{n+1}\dots i_m}[\mathbf{f}\cdot R] = (R^{-1})^{i_1}_{j_1}\cdots(R^{-1})^{i_n}_{j_n} R^{j_{n+1}}_{i_{n+1}}\cdots R^{j_{m}}_{i_{m}} T^{j_1,\ldots,j_n}_{j_{n+1},\ldots,j_m}[\mathbf{f}].$$

The definition of a tensor as a multidimensional array satisfying a transformation law traces back to the work of Ricci.[1] Nowadays, this definition is still used in some physics and engineering textbooks.[3][4]
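For a tensor with one contravariant and one covariant index, the transformation law reduces to $\hat{T}^i_k = (R^{-1})^i_j R^l_k T^j_l$. The sketch below applies it in two dimensions with arbitrary sample values; the function names are assumptions made for this example, and `R[l][k]` is taken to hold $R^l_k$.

```python
def inverse_2x2(R):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = R
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def transform_type_1_1(T, R):
    """New components of a (1, 1)-tensor: one factor of R^-1 for the
    upper index and one factor of R for the lower index."""
    R_inv = inverse_2x2(R)
    n = 2
    return [
        [
            sum(R_inv[i][j] * R[l][k] * T[j][l] for j in range(n) for l in range(n))
            for k in range(n)
        ]
        for i in range(n)
    ]

R = [[2.0, 1.0], [0.0, 1.0]]   # hypothetical change of basis
T = [[1.0, 2.0], [3.0, 4.0]]   # components T^j_l in the old basis
T_new = transform_type_1_1(T, R)

trace_old = T[0][0] + T[1][1]
trace_new = T_new[0][0] + T_new[1][1]
```

The individual components change, but the sum over the matched upper and lower index does not, which is one way to see that the array describes a basis-independent object.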

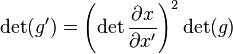

Tensor fields

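In many applications, especially in differential geometry and physics, it is natural to consider a tensor with components that are functions of the point in a space. This was the setting of Ricci's original work. In modern mathematical terminology such an object is called a tensor field, often referred to simply as a tensor.[1]

In this context, a coordinate basis is often chosen for the tangent vector space. The transformation law may then be expressed in terms of partial derivatives of the coordinate functions, $\bar{x}^i(x^1, \ldots, x^N)$, defining a coordinate transformation,[1]

$$\hat{T}^{i_1\dots i_n}_{i_{n+1}\dots i_m}(\bar{x}^1, \ldots, \bar{x}^N) = \frac{\partial \bar{x}^{i_1}}{\partial x^{j_1}}\cdots\frac{\partial \bar{x}^{i_n}}{\partial x^{j_n}} \frac{\partial x^{j_{n+1}}}{\partial \bar{x}^{i_{n+1}}}\cdots\frac{\partial x^{j_m}}{\partial \bar{x}^{i_m}} T^{j_1\dots j_n}_{j_{n+1}\dots j_m}(x^1, \ldots, x^N).$$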

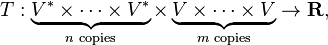

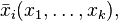

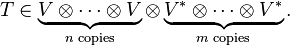

As multilinear maps

A downside to the definition of a tensor using the multidimensional array approach is that it is not apparent from the definition that the defined object is indeed basis independent, as is expected from an intrinsically geometric object. Although it is possible to show that transformation laws indeed ensure independence from the basis, sometimes a more intrinsic definition is preferred. One approach is to define a tensor as a

multilinear map. In that approach a type

(n, m) tensor

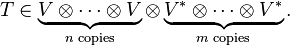

T is defined as a map,

where

V is a (finite-dimensional)

vector space and

V* is the corresponding

dual space of covectors, which is linear in each of its arguments.

By applying a multilinear map T of type (n, m) to a basis $\{\mathbf{e}_j\}$ for V and a canonical cobasis $\{\boldsymbol{\varepsilon}^i\}$ for V*,

$$T^{i_1\dots i_n}_{j_1\dots j_m} \equiv T(\boldsymbol{\varepsilon}^{i_1}, \ldots, \boldsymbol{\varepsilon}^{i_n}, \mathbf{e}_{j_1}, \ldots, \mathbf{e}_{j_m}),$$

an (n + m)-dimensional array of components can be obtained. A different choice of basis will yield different components. But, because T is linear in all of its arguments, the components satisfy the tensor transformation law used in the multidimensional array definition. The multidimensional array of components of T thus forms a tensor according to that definition. Moreover, such an array can be realised as the components of some multilinear map T. This motivates viewing multilinear maps as the intrinsic objects underlying tensors.
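The passage from a multilinear map to an array of components can be sketched for a type (0, 2) tensor, that is, a bilinear map of two vectors. The particular map `T` below is a hypothetical example chosen only because it is linear in each argument; the components are obtained by evaluating it on pairs of basis vectors.

```python
def T(u, v):
    """A hypothetical bilinear map on a 2-dimensional space."""
    return 2.0 * u[0] * v[1] + 3.0 * u[1] * v[1] - u[0] * v[0]

basis = [[1.0, 0.0], [0.0, 1.0]]

# Components T_ij = T(e_i, e_j)
components = [[T(e_i, e_j) for e_j in basis] for e_i in basis]

def evaluate_from_components(components, u, v):
    """Rebuild T(u, v) as T_ij u^i v^j using multilinearity."""
    n = len(u)
    return sum(components[i][j] * u[i] * v[j] for i in range(n) for j in range(n))

u, v = [1.0, 2.0], [3.0, 4.0]
direct = T(u, v)
via_components = evaluate_from_components(components, u, v)
```

That the two evaluations agree for every choice of `u` and `v` is exactly the statement that the array determines the map once a basis is fixed.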

Using tensor products

For some mathematical applications, a more abstract approach is sometimes useful. This can be achieved by defining tensors in terms of elements of tensor products of vector spaces, which in turn are defined through a universal property. A type (n, m) tensor is defined in this context as an element of the tensor product of vector spaces,[5]

$$T \in \underbrace{V \otimes \dots \otimes V}_{n \text{ copies}} \otimes \underbrace{V^* \otimes \dots \otimes V^*}_{m \text{ copies}}.$$

If $v_i$ is a basis of V and $w_j$ is a basis of W, then the tensor product V ⊗ W has a natural basis $v_i \otimes w_j$. The components of a tensor T are the coefficients of the tensor with respect to the basis obtained from a basis $\{\mathbf{e}_i\}$ for V and its dual $\{\boldsymbol{\varepsilon}^j\}$, i.e.

$$T = T^{i_1\dots i_n}_{j_1\dots j_m}\; \mathbf{e}_{i_1}\otimes\cdots\otimes \mathbf{e}_{i_n}\otimes \boldsymbol{\varepsilon}^{j_1}\otimes\cdots\otimes \boldsymbol{\varepsilon}^{j_m}.$$

Using the properties of the tensor product, it can be shown that these components satisfy the transformation law for a type (n, m) tensor. Moreover, the universal property of the tensor product gives a 1-to-1 correspondence between tensors defined in this way and tensors defined as multilinear maps.

Tensors in infinite dimensions

This discussion of tensors so far assumes finite dimensionality of the spaces involved. All of this can be generalized, essentially without modification, to vector bundles or coherent sheaves.[6] For infinite-dimensional vector spaces, inequivalent topologies lead to inequivalent notions of tensor, and these various isomorphisms may or may not hold depending on what exactly is meant by a tensor (see topological tensor product). In some applications, it is the tensor product of Hilbert spaces that is intended, whose properties are the most similar to the finite-dimensional case. A more modern view is that it is the tensors' structure as a symmetric monoidal category that encodes their most important properties, rather than the specific models of those categories.

Examples

This table shows important examples of tensors, including both tensors on vector spaces and tensor fields on manifolds. The tensors are classified according to their type (n, m), where n is the number of contravariant indices, m is the number of covariant indices, and n + m gives the total order of the tensor. For example, a bilinear form is the same thing as a (0, 2)-tensor; an inner product is an example of a (0, 2)-tensor, but not all (0, 2)-tensors are inner products. In the (0, M)-entry of the table, M denotes the dimensionality of the underlying vector space or manifold because for each dimension of the space, a separate index is needed to select that dimension to get a maximally covariant antisymmetric tensor.

| n, m | n = 0 | n = 1 | n = 2 | ... | n | ... |
|---|---|---|---|---|---|---|
| m = 0 | scalar, e.g. scalar curvature | vector (e.g. direction vector) | inverse metric tensor, bivector (e.g., a Poisson structure) | | n-vector, a sum of n-blades | |
| m = 1 | covector, linear functional, 1-form (e.g. gradient of a scalar field) | linear transformation,[7] Kronecker delta | | | | |
| m = 2 | bilinear form, e.g. inner product, metric tensor, Ricci curvature, 2-form, symplectic form | e.g. cross product in three dimensions | e.g. elasticity tensor | | | |
| m = 3 | e.g. 3-form | e.g. Riemann curvature tensor | | | | |
| ... | | | | | | |
| m = M | e.g. M-form i.e. volume form | | | | | |
| ... | | | | | | |

Raising an index on an (n, m)-tensor produces an (n + 1, m − 1)-tensor; this can be visualized as moving diagonally up and to the right on the table. Symmetrically, lowering an index can be visualized as moving diagonally down and to the left on the table.

Contraction of an upper with a lower index of an (n, m)-tensor produces an (n − 1, m − 1)-tensor; this can be visualized as moving diagonally up and to the left on the table.

Orientation defined by an ordered set of vectors.

Reversed orientation corresponds to negating the exterior product.

Geometric interpretation of grade n elements in a real exterior algebra for n = 0 (signed point), 1 (directed line segment, or vector), 2 (oriented plane element), 3 (oriented volume). The exterior product of n vectors can be visualized as any n-dimensional shape (e.g. n-parallelotope, n-ellipsoid), with magnitude (hypervolume) and orientation defined by that on its (n − 1)-dimensional boundary and on which side the interior is.[8][9]

Notation

Ricci calculus

Ricci calculus is the modern formalism and notation for tensor indices: indicating inner and outer products, covariance and contravariance, summations of tensor components, symmetry and antisymmetry, and partial and covariant derivatives.

Einstein summation convention

The Einstein summation convention dispenses with writing summation signs, leaving the summation implicit. Any repeated index symbol is summed over: if the index i is used twice in a given term of a tensor expression, it means that the term is to be summed for all i. Several distinct pairs of indices may be summed this way.
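Written out explicitly, the convention simply turns each repeated index into a loop. The sketch below uses arbitrary sample values and helper names introduced for this example: `contract_pair` computes $a_i b^i$, where i is summed, and `matvec` computes $A^i_j v^j$, where j is summed and i remains free.

```python
def contract_pair(a, b):
    """a_i b^i: the repeated index i is summed over."""
    return sum(a_i * b_i for a_i, b_i in zip(a, b))

def matvec(A, v):
    """A^i_j v^j: j is repeated (summed), i is free and labels the result."""
    return [sum(A[i][j] * v[j] for j in range(len(v))) for i in range(len(A))]

scalar_result = contract_pair([1.0, 2.0, 3.0], [4.0, 5.0, 6.0])
vector_result = matvec([[1.0, 2.0], [3.0, 4.0]], [1.0, 1.0])
```

The number of free (unrepeated) indices in an expression gives the order of the result: none for the scalar, one for the vector.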

Penrose graphical notation

Penrose graphical notation is a diagrammatic notation which replaces the symbols for tensors with shapes, and their indices by lines and curves. It is independent of basis elements, and requires no symbols for the indices.

Abstract index notation

The abstract index notation is a way to write tensors such that the indices are no longer thought of as numerical, but rather are indeterminates. This notation captures the expressiveness of indices and the basis-independence of index-free notation.

Component-free notation

A component-free treatment of tensors uses notation that emphasises that tensors do not rely on any basis; it is defined in terms of the tensor product of vector spaces.

Operations

There are a number of basic operations that may be conducted on tensors that again produce a tensor. The linear nature of tensors implies that two tensors of the same type may be added together, and that tensors may be multiplied by a scalar with results analogous to the scaling of a vector. On components, these operations are simply performed component for component. These operations do not change the type of the tensor; however, there also exist operations that change the type of the tensors.

Tensor product

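The tensor product takes two tensors, S and T, and produces a new tensor, S ⊗ T, whose order is the sum of the orders of the original tensors. When described as multilinear maps, the tensor product simply multiplies the two tensors, i.e.

$$(S \otimes T)(v_1, \ldots, v_n, v_{n+1}, \ldots, v_{n+m}) = S(v_1, \ldots, v_n)\,T(v_{n+1}, \ldots, v_{n+m}),$$

which again produces a map that is linear in all its arguments. On components the effect similarly is to multiply the components of the two input tensors, i.e.

$$(S \otimes T)^{i_1\dots i_l\, i_{l+1}\dots i_{l+n}}_{j_1\dots j_k\, j_{k+1}\dots j_{k+m}} = S^{i_1\dots i_l}_{j_1\dots j_k}\, T^{i_{l+1}\dots i_{l+n}}_{j_{k+1}\dots j_{k+m}}.$$

If S is of type (l, k) and T is of type (n, m), then the tensor product S ⊗ T has type (l + n, k + m).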
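In the simplest case, both factors are vectors, and the component rule reads $(S \otimes T)^{ij} = S^i T^j$. The sketch below, with arbitrary sample values and a helper name chosen for this example, builds that array; the two order-1 inputs yield an order-2 result.

```python
def tensor_product(S, T):
    """Components (S ⊗ T)^{ij} = S^i T^j for two order-1 tensors."""
    return [[s * t for t in T] for s in S]

S = [1.0, 2.0]          # components S^i
T = [3.0, 4.0, 5.0]     # components T^j (the two spaces need not match in dimension)
product = tensor_product(S, T)
```

Every entry of the result is a product of one component from each factor, so no information about the individual factors is summed away.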

Contraction

Tensor contraction is an operation that reduces the total order of a tensor by two. More precisely, it reduces a type (n, m) tensor to a type (n − 1, m − 1) tensor. In terms of components, the operation is achieved by summing over one contravariant and one covariant index of the tensor. For example, a (1, 1)-tensor $T_i^j$ can be contracted to a scalar through $T_i^i$, where the summation is again implied. When the (1, 1)-tensor is interpreted as a linear map, this operation is known as the trace.

The contraction is often used in conjunction with the tensor product to contract an index from each tensor.

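The contraction can also be understood in terms of the definition of a tensor as an element of a tensor product of copies of the space V with the space V* by first decomposing the tensor into a linear combination of simple tensors, and then applying a factor from V* to a factor from V. For example, a tensor

$$T \in V \otimes V \otimes V^*$$

can be written as a linear combination

$$T = v_1 \otimes w_1 \otimes \alpha_1 + v_2 \otimes w_2 \otimes \alpha_2 + \cdots + v_N \otimes w_N \otimes \alpha_N.$$

The contraction of T on the first and last slots is then the vector

$$\alpha_1(v_1)\,w_1 + \alpha_2(v_2)\,w_2 + \cdots + \alpha_N(v_N)\,w_N.$$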
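On components, contraction is a sum over a matched upper and lower index. The sketch below, using arbitrary sample values and helper names chosen for this example, shows two cases: the trace $T^i_i$ of a (1, 1)-tensor, and a tensor product followed by contraction, $S^i_j T^j_k$, which for (1, 1)-tensors coincides with the ordinary matrix product.

```python
def trace(T):
    """Contract the upper index of a (1, 1)-tensor with its lower index: T^i_i."""
    return sum(T[i][i] for i in range(len(T)))

def contract_product(S, T):
    """Form S^i_j T^l_k and contract j with l, leaving a (1, 1)-tensor."""
    n = len(S)
    return [[sum(S[i][j] * T[j][k] for j in range(n)) for k in range(n)] for i in range(n)]

S = [[1.0, 2.0], [3.0, 4.0]]
T = [[0.0, 1.0], [1.0, 0.0]]

trace_of_S = trace(S)
composed = contract_product(S, T)
```

In both cases the total order drops by two relative to the uncontracted object: from 2 to 0 for the trace, and from 4 to 2 for the contracted product.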

Raising or lowering an index

When a vector space is equipped with a nondegenerate bilinear form (or metric tensor as it is often called in this context), operations can be defined that convert a contravariant (upper) index into a covariant (lower) index and vice versa. A metric tensor is a (symmetric) (0, 2)-tensor; it is thus possible to contract an upper index of a tensor with one of the lower indices of the metric tensor in the product. This produces a new tensor with the same index structure as the previous one, but with a lower index in the position of the contracted upper index. This operation is quite graphically known as lowering an index.

Conversely, the inverse operation can be defined, and is called raising an index. This is equivalent to a similar contraction on the product with a (2, 0)-tensor. This inverse metric tensor has components that are the matrix inverse of those of the metric tensor.
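For a single vector index, lowering reads $v_i = g_{ij} v^j$ and raising reads $v^i = g^{ij} v_j$, with $g^{ij}$ the matrix inverse of $g_{ij}$. The sketch below assumes a 2-dimensional space and a hypothetical symmetric, nondegenerate metric with arbitrary values; it lowers an index and then raises it again to recover the original components.

```python
def inverse_2x2(M):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def contract_with(matrix, components):
    """Sum over the second index of `matrix` and the index of `components`."""
    n = len(components)
    return [sum(matrix[i][j] * components[j] for j in range(n)) for i in range(n)]

g = [[2.0, 1.0], [1.0, 3.0]]    # hypothetical metric components g_ij
g_inv = inverse_2x2(g)          # inverse metric components g^ij

v_upper = [1.0, 2.0]                        # contravariant components v^j
v_lower = contract_with(g, v_upper)         # lowering: v_i = g_ij v^j
v_back = contract_with(g_inv, v_lower)      # raising: v^i = g^ij v_j
```

With an orthonormal basis the metric components form the identity matrix, and the upper and lower components coincide.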

Applications

Continuum mechanics

Important examples are provided by continuum mechanics. The stresses inside a solid body or fluid are described by a tensor. The stress tensor and strain tensor are both second-order tensors, and are related in a general linear elastic material by a fourth-order elasticity tensor. In detail, the tensor quantifying stress in a 3-dimensional solid object has components that can be conveniently represented as a 3 × 3 array. The three faces of a cube-shaped infinitesimal volume segment of the solid are each subject to some given force. The force's vector components are also three in number. Thus, 3 × 3, or 9 components are required to describe the stress at this cube-shaped infinitesimal segment. Within the bounds of this solid is a whole mass of varying stress quantities, each requiring 9 quantities to describe. Thus, a second-order tensor is needed.

If a particular surface element inside the material is singled out, the material on one side of the surface will apply a force on the other side. In general, this force will not be orthogonal to the surface, but it will depend on the orientation of the surface in a linear manner. This is described by a tensor of type (2, 0), in linear elasticity, or more precisely by a tensor field of type (2, 0), since the stresses may vary from point to point.

Other examples from physics

Common applications include

Applications of tensors of order > 2

The concept of a tensor of order two is often conflated with that of a matrix. Tensors of higher order do, however, capture ideas important in science and engineering, as has been shown successively in numerous areas as they develop. This happens, for instance, in the field of computer vision, with the trifocal tensor generalizing the fundamental matrix.

The field of nonlinear optics studies the changes to material polarization density under extreme electric fields. The polarization waves generated are related to the generating electric fields through the nonlinear susceptibility tensor. If the polarization P is not linearly proportional to the electric field E, the medium is termed nonlinear. To a good approximation (for sufficiently weak fields, assuming no permanent dipole moments are present), P is given by a Taylor series in E whose coefficients are the nonlinear susceptibilities:

$$\frac{P_i}{\varepsilon_0} = \sum_j \chi^{(1)}_{ij} E_j + \sum_{jk} \chi^{(2)}_{ijk} E_j E_k + \sum_{jk\ell} \chi^{(3)}_{ijk\ell} E_j E_k E_\ell + \cdots$$

Here $\chi^{(1)}$ is the linear susceptibility, $\chi^{(2)}$ gives the Pockels effect and second harmonic generation, and $\chi^{(3)}$ gives the Kerr effect. This expansion shows the way higher-order tensors arise naturally in the subject matter.
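The first two terms of such an expansion can be evaluated directly as index sums, $P_i/\varepsilon_0 = \chi^{(1)}_{ij} E_j + \chi^{(2)}_{ijk} E_j E_k$. The sketch below is restricted to two field components for brevity, and every susceptibility value in it is a made-up placeholder rather than data for any real material.

```python
def polarization_over_eps0(chi1, chi2, E):
    """Return P_i / eps0 truncated after the second-order term."""
    n = len(E)
    result = []
    for i in range(n):
        linear = sum(chi1[i][j] * E[j] for j in range(n))
        quadratic = sum(chi2[i][j][k] * E[j] * E[k] for j in range(n) for k in range(n))
        result.append(linear + quadratic)
    return result

# Placeholder susceptibilities: an order-2 array and an order-3 array.
chi1 = [[1.0, 0.0], [0.0, 1.0]]
chi2 = [[[0.0, 0.0], [0.0, 0.5]],
        [[0.0, 0.0], [0.0, 0.0]]]

response = polarization_over_eps0(chi1, chi2, [1.0, 2.0])
response_doubled_field = polarization_over_eps0(chi1, chi2, [2.0, 4.0])
```

Doubling the field does not double the response here, because the second-order term scales with the square of the field; that is the sense in which the medium is nonlinear.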

Generalizations

Tensor products of vector spaces

The vector spaces of a tensor product need not be the same, and sometimes the elements of such a more general tensor product are called "tensors". For example, an element of the tensor product space V ⊗ W is a second-order "tensor" in this more general sense,[10] and an order-d tensor may likewise be defined as an element of a tensor product of d different vector spaces.[11] A type (n, m) tensor, in the sense defined previously, is also a tensor of order n + m in this more general sense.

Tensors in infinite dimensions

The notion of a tensor can be generalized in a variety of ways to infinite dimensions. One, for instance, is via the tensor product of Hilbert spaces.[12] Another way of generalizing the idea of tensor, common in nonlinear analysis, is via the multilinear maps definition where instead of using finite-dimensional vector spaces and their algebraic duals, one uses infinite-dimensional Banach spaces and their continuous dual.[13] Tensors thus live naturally on Banach manifolds.[14]

Tensor densities

The concept of a tensor field can be generalized by considering objects that transform differently. An object that transforms as an ordinary tensor field under coordinate transformations, except that it is also multiplied by the determinant of the Jacobian of the inverse coordinate transformation to the $w$th power, is called a tensor density with weight $w$.[15] Invariantly, in the language of multilinear algebra, one can think of tensor densities as multilinear maps taking their values in a density bundle such as the (1-dimensional) space of n-forms (where n is the dimension of the space), as opposed to taking their values in just R. Higher "weights" then just correspond to taking additional tensor products with this space in the range.

A special case are the scalar densities. Scalar 1-densities are especially important because it makes sense to define their integral over a manifold. They appear, for instance, in the Einstein–Hilbert action in general relativity. The most common example of a scalar 1-density is the volume element, which in the presence of a metric tensor g is the square root of its determinant in coordinates, denoted $\sqrt{\det g}$. The metric tensor is a covariant tensor of order 2, and so its determinant scales by the square of the coordinate transition:

$$\det(g') = \left(\det\frac{\partial x}{\partial x'}\right)^{2}\det(g),$$

which is the transformation law for a scalar density of weight +2.
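The scaling of the determinant can be verified on a small example. The sketch below assumes two dimensions, a hypothetical metric, and a hypothetical constant Jacobian with `J[k][i]` standing for $\partial x^k / \partial x'^i$; it transforms the metric with one Jacobian factor per covariant index and compares determinants.

```python
def det2(M):
    """Determinant of a 2x2 matrix given as nested lists."""
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]

def transform_metric(g, J):
    """g'_ij = J^k_i J^l_j g_kl, one Jacobian factor for each lower index."""
    n = len(g)
    return [
        [sum(J[k][i] * J[l][j] * g[k][l] for k in range(n) for l in range(n)) for j in range(n)]
        for i in range(n)
    ]

g = [[2, 1], [1, 3]]   # hypothetical metric components, determinant 5
J = [[1, 2], [0, 3]]   # hypothetical Jacobian, determinant 3

g_new = transform_metric(g, J)
det_new = det2(g_new)
det_predicted = det2(J) ** 2 * det2(g)
```

The two Jacobian factors, one per index of the metric, are what produce the square of the Jacobian determinant.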

More generally, any tensor density is the product of an ordinary tensor with a scalar density of the appropriate weight. In the language of vector bundles, the determinant bundle of the tangent bundle is a line bundle that can be used to 'twist' other bundles w times. While locally the more general transformation law can indeed be used to recognise these tensors, there is a global question that arises, reflecting that in the transformation law one may write either the Jacobian determinant, or its absolute value. Non-integral powers of the (positive) transition functions of the bundle of densities make sense, so that the weight of a density, in that sense, is not restricted to integer values. Restricting to changes of coordinates with positive Jacobian determinant is possible on orientable manifolds, because there is a consistent global way to eliminate the minus signs; but otherwise the line bundle of densities and the line bundle of n-forms are distinct. For more on the intrinsic meaning, see density on a manifold.

Spinors

When changing from one orthonormal basis (called a frame) to another by a rotation, the components of a tensor transform by that same rotation. This transformation does not depend on the path taken through the space of frames. However, the space of frames is not simply connected (see orientation entanglement and plate trick): there are continuous paths in the space of frames with the same beginning and ending configurations that are not deformable one into the other. It is possible to attach an additional discrete invariant to each frame called the "spin" that incorporates this path dependence, and which turns out to have values of ±1. A spinor is an object that transforms like a tensor under rotations in the frame, apart from a possible sign that is determined by the spin.

History

The concepts of later tensor analysis arose from the work of Carl Friedrich Gauss in differential geometry, and the formulation was much influenced by the theory of algebraic forms and invariants developed during the middle of the nineteenth century.[16] The word "tensor" itself was introduced in 1846 by William Rowan Hamilton[17] to describe something different from what is now meant by a tensor.[Note 6] The contemporary usage was introduced by Woldemar Voigt in 1898.[18]

Tensor calculus was developed around 1890 by Gregorio Ricci-Curbastro under the title absolute differential calculus, and originally presented by Ricci in 1892.[19] It was made accessible to many mathematicians by the publication of Ricci and Tullio Levi-Civita's 1900 classic text Méthodes de calcul différentiel absolu et leurs applications (Methods of absolute differential calculus and their applications).[20]

In the 20th century, the subject came to be known as tensor analysis, and achieved broader acceptance with the introduction of Einstein's theory of general relativity, around 1915. General relativity is formulated completely in the language of tensors. Einstein had learned about them, with great difficulty, from the geometer Marcel Grossmann.[21] Levi-Civita then initiated a correspondence with Einstein to correct mistakes Einstein had made in his use of tensor analysis. The correspondence lasted 1915–17, and was characterized by mutual respect:

I admire the elegance of your method of computation; it must be nice to ride through these fields upon the horse of true mathematics while the like of us have to make our way laboriously on foot.

—Albert Einstein,

The Italian Mathematicians of Relativity[22]

Tensors were also found to be useful in other fields such as continuum mechanics. Some well-known examples of tensors in differential geometry are quadratic forms such as metric tensors, and the Riemann curvature tensor. The exterior algebra of Hermann Grassmann, from the middle of the nineteenth century, is itself a tensor theory, and highly geometric, but it was some time before it was seen, with the theory of differential forms, as naturally unified with tensor calculus. The work of Élie Cartan made differential forms one of the basic kinds of tensors used in mathematics.

From about the 1920s onwards, it was realised that tensors play a basic role in algebraic topology (for example in the Künneth theorem).[23] Correspondingly there are types of tensors at work in many branches of abstract algebra, particularly in homological algebra and representation theory. Multilinear algebra can be developed in greater generality than for scalars coming from a field. For example, scalars can come from a ring. But the theory is then less geometric and computations more technical and less algorithmic.[24] Tensors are generalized within category theory by means of the concept of monoidal category, from the 1960s.[25]
