Explainable AI (XAI), also known as Interpretable AI, or Explainable Machine Learning (XML), is artificial intelligence (AI) in which humans can understand the reasoning behind decisions or predictions made by the AI. It contrasts with the "black box" concept in machine learning, where even the AI's designers cannot explain why it arrived at a specific decision.

XAI hopes to help users of AI-powered systems perform more effectively by improving their understanding of how those systems reason. XAI may be an implementation of the social right to explanation. Even if there is no such legal right or regulatory requirement, XAI can improve the user experience of a product or service by helping end users trust that the AI is making good decisions. XAI aims to explain what has been done, what is being done, and what will be done next, and to unveil which information these actions are based on. This makes it possible to confirm existing knowledge, challenge existing knowledge, and generate new assumptions.

Machine learning (ML) algorithms used in AI can be categorized as white-box or black-box. White-box models provide results that are understandable to experts in the domain. Black-box models, on the other hand, are extremely hard to explain and can hardly be understood even by domain experts. XAI algorithms follow the three principles of transparency, interpretability, and explainability. A model is transparent “if the processes that extract model parameters from training data and generate labels from testing data can be described and motivated by the approach designer.” Interpretability describes the possibility of comprehending the ML model and presenting the underlying basis for decision-making in a way that is understandable to humans. Explainability is a concept that is recognized as important, but a consensus definition is not available. One possibility is “the collection of features of the interpretable domain that have contributed, for a given example, to producing a decision (e.g., classification or regression)”. If algorithms fulfill these principles, they provide a basis for justifying decisions, tracking them and thereby verifying them, improving the algorithms, and exploring new facts.

Sometimes it is also possible to achieve a high-accuracy result with a white-box ML algorithm that is interpretable in itself. This is especially important in domains like medicine, defense, finance, and law, where it is crucial to understand decisions and build trust in the algorithms. Many researchers argue that, at least for supervised machine learning, the way forward is symbolic regression, where the algorithm searches the space of mathematical expressions to find the model that best fits a given dataset.
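As a rough illustration of the search that symbolic regression performs, the Python sketch below enumerates a tiny, hand-picked family of one-constant expressions and keeps the one with the lowest mean squared error on the data. The candidate list, the constant grid, and the name `fit_symbolic` are hypothetical. Practical symbolic-regression tools search a far larger expression space, typically with genetic programming or other heuristics, rather than by exhaustive enumeration.

```python
import math

# Hypothetical, hand-picked space of candidate expressions: (readable form, function).
# Each candidate has one free constant c, searched over a small grid.
CANDIDATES = [
    ("c * x", lambda x, c: c * x),
    ("c + x", lambda x, c: c + x),
    ("c * x**2", lambda x, c: c * x ** 2),
    ("c * sin(x)", lambda x, c: c * math.sin(x)),
    ("x**2 + c", lambda x, c: x ** 2 + c),
]
CONSTANTS = [k / 2 for k in range(-10, 11)]  # -5.0, -4.5, ..., 5.0


def fit_symbolic(xs, ys):
    """Return (expression, constant, mean squared error) of the best-fitting candidate."""
    best = None
    for form, f in CANDIDATES:
        for c in CONSTANTS:
            mse = sum((f(x, c) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)
            if best is None or mse < best[2]:
                # Substitute the constant into the readable form, e.g. "3.0 * x**2".
                best = (form.replace("c", repr(c)), c, mse)
    return best


xs = [0, 1, 2, 3, 4]
print(fit_symbolic(xs, [3 * x ** 2 for x in xs]))  # ('3.0 * x**2', 3.0, 0.0)
```

The result is itself a readable formula rather than a set of opaque weights, which is what makes this family of methods attractive where decisions must be justified.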

AI systems optimize behavior to satisfy a mathematically specified goal system chosen by the system designers, such as the command "maximize accuracy of assessing how positive film reviews are in the test dataset." The AI may learn useful general rules from its training data, such as 'reviews containing the word "horrible" are likely to be negative.' However, it may also learn inappropriate rules, such as 'reviews containing "Daniel Day-Lewis" are usually positive'; such rules may be undesirable if they are likely to fail to generalize outside the training set, or if people consider the rule to be "cheating" or "unfair." A human can audit the rules in an XAI system to get an idea of how likely the system is to generalize to future real-world data outside the test set.
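One simple white-box model for the review task scores each word by its log-odds of appearing in positive versus negative reviews (the word-presence log-likelihood ratios of a Bernoulli naive Bayes model with add-one smoothing), so each score can be read directly as a rule. The sketch below uses a hypothetical five-review training set. On it, "day-lewis" earns exactly the same positive weight as "brilliant", the kind of spurious rule an auditor would want to spot.

```python
import math
from collections import Counter

# Hypothetical toy training set: (review text, label), label 1 = positive, 0 = negative.
TRAIN = [
    ("a horrible boring film", 0),
    ("horrible acting and a dull plot", 0),
    ("daniel day-lewis is brilliant", 1),
    ("daniel day-lewis carries a slow film", 1),
    ("a delightful brilliant film", 1),
]


def word_log_odds(data):
    """log P(word present | positive) - log P(word present | negative), add-one smoothed."""
    pos, neg = Counter(), Counter()
    for text, label in data:
        # Count each word at most once per review (presence, not frequency).
        (pos if label == 1 else neg).update(set(text.split()))
    n_pos = sum(label for _, label in data)
    n_neg = len(data) - n_pos
    return {
        w: math.log((pos[w] + 1) / (n_pos + 2)) - math.log((neg[w] + 1) / (n_neg + 2))
        for w in set(pos) | set(neg)
    }


# Print every learned word "rule", most negative first, for a human to audit.
scores = word_log_odds(TRAIN)
for word, s in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{s:+.2f}  {word}")
```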

Goals

Cooperation between agents (in this case, algorithms and humans) depends on trust. If humans are to accept algorithmic prescriptions, they need to trust them. Incompleteness in the formalization of trust criteria is a barrier to straightforward optimization approaches. Transparency, interpretability, and explainability are intermediate goals on the road to these more comprehensive trust criteria. This is particularly relevant in medicine, especially with clinical decision support systems (CDSS), in which medical professionals should be able to understand how and why a machine-based decision was made in order to trust the decision and augment their decision-making process.

AI systems sometimes learn undesirable tricks that do an optimal job of satisfying explicit pre-programmed goals on the training data but do not reflect the more nuanced implicit desires of the human system designers or the full complexity of the domain data. For example, a 2017 system tasked with image recognition learned to "cheat" by looking for a copyright tag that happened to be associated with horse pictures rather than learning how to tell if a horse was actually pictured. In another 2017 system, a supervised learning AI tasked with grasping items in a virtual world learned to cheat by placing its manipulator between the object and the viewer in a way such that it falsely appeared to be grasping the object.

One transparency project, the DARPA XAI program, aims to produce "glass box" models that are explainable to a "human-in-the-loop" without greatly sacrificing AI performance. Human users of such a system can understand the AI's cognition (both in real-time and after the fact) and can determine whether to trust the AI. Other applications of XAI are knowledge extraction from black-box models and model comparisons. In the context of monitoring systems for ethical and socio-legal compliance, the term "glass box" is commonly used to refer to tools that track the inputs and outputs of the system in question, and provide value-based explanations for their behavior. These tools aim to ensure that the system operates in accordance with ethical and legal standards, and that its decision-making processes are transparent and accountable. The term "glass box" is often used in contrast to "black box" systems, which lack transparency and can be more difficult to monitor and regulate. The term is also used to name a voice assistant that produces counterfactual statements as explanations.

History and methods

During the 1970s to 1990s, symbolic reasoning systems, such as MYCIN, GUIDON, SOPHIE, and PROTOS could represent, reason about, and explain their reasoning for diagnostic, instructional, or machine-learning (explanation-based learning) purposes. MYCIN, developed in the early 1970s as a research prototype for diagnosing bacteremia infections of the bloodstream, could explain which of its hand-coded rules contributed to a diagnosis in a specific case. Research in intelligent tutoring systems resulted in developing systems such as SOPHIE that could act as an "articulate expert", explaining problem-solving strategy at a level the student could understand, so they would know what action to take next. For instance, SOPHIE could explain the qualitative reasoning behind its electronics troubleshooting, even though it ultimately relied on the SPICE circuit simulator. Similarly, GUIDON added tutorial rules to supplement MYCIN's domain-level rules so it could explain strategy for medical diagnosis. Symbolic approaches to machine learning, especially those relying on explanation-based learning, such as PROTOS, explicitly relied on representations of explanations, both to explain their actions and to acquire new knowledge.

In the 1980s through early 1990s, truth maintenance systems (TMS) extended the capabilities of causal-reasoning, rule-based, and logic-based inference systems. A TMS explicitly tracks alternate lines of reasoning, justifications for conclusions, and lines of reasoning that lead to contradictions, allowing future reasoning to avoid these dead ends. To provide explanation, they trace reasoning from conclusions to assumptions through rule operations or logical inferences, allowing explanations to be generated from the reasoning traces. As an example, consider a rule-based problem solver that, using just a few rules about Socrates, concludes that he died from poison.

By tracing through the dependency structure, the problem solver can construct the following explanation: "Socrates died because he was mortal and drank poison, and all mortals die when they drink poison. Socrates was mortal because he was a man and all men are mortal. Socrates drank poison because he held dissident beliefs, the government was conservative, and those holding dissident beliefs under conservative governments must drink poison."
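This kind of dependency tracing can be sketched in a few lines of Python. The code below is a hypothetical, much-simplified rule engine in the spirit of a justification-based TMS: it forward-chains over the Socrates rules, records which rule and antecedents justified each derived fact, and then walks those records back to the premises. It does not implement contradiction handling or belief retraction, and the rule encoding, reconstructed from the explanation text, is an assumption made for illustration.

```python
# Hypothetical encoding of the Socrates example: premises are given facts, and
# each rule maps a set of antecedent facts to a consequent, with a readable gloss.
PREMISES = {
    "Socrates is a man",
    "Socrates holds dissident beliefs",
    "the government is conservative",
}
RULES = [
    ({"Socrates is a man"}, "Socrates is mortal", "all men are mortal"),
    (
        {"Socrates holds dissident beliefs", "the government is conservative"},
        "Socrates drank poison",
        "those holding dissident beliefs under conservative governments must drink poison",
    ),
    (
        {"Socrates is mortal", "Socrates drank poison"},
        "Socrates died",
        "all mortals die when they drink poison",
    ),
]


def forward_chain(premises, rules):
    """Derive every reachable fact, recording one justification per derived fact."""
    justifications = {fact: None for fact in premises}  # None marks a given premise
    changed = True
    while changed:
        changed = False
        for antecedents, consequent, rule_text in rules:
            if consequent not in justifications and all(a in justifications for a in antecedents):
                justifications[consequent] = (sorted(antecedents), rule_text)
                changed = True
    return justifications


def explain(fact, justifications, depth=0):
    """Trace a conclusion back to its premises through the recorded justifications."""
    support = justifications[fact]
    if support is None:
        return ["  " * depth + f"{fact} (given)"]
    antecedents, rule_text = support
    lines = ["  " * depth + f"{fact} because {rule_text}:"]
    for a in antecedents:
        lines.extend(explain(a, justifications, depth + 1))
    return lines


just = forward_chain(PREMISES, RULES)
# Prints an indented trace from the conclusion back to the three premises.
print("\n".join(explain("Socrates died", just)))
```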

By the 1990s, researchers began studying whether it is possible to meaningfully extract the non-hand-coded rules being generated by opaque trained neural networks. Researchers in clinical expert systems creating neural network-powered decision support for clinicians sought to develop dynamic explanations that allow these technologies to be more trusted and trustworthy in practice. In the 2010s, public concerns about racial and other bias in the use of AI for criminal sentencing decisions and findings of creditworthiness may have led to increased demand for transparent artificial intelligence. As a result, many academics and organizations are developing tools to help detect bias in their systems.

Marvin Minsky et al. argued that AI can function as a form of surveillance, carrying the biases inherent in surveillance, and suggested HI (Humanistic Intelligence) as a way to create a fairer and more balanced "human-in-the-loop" AI.

Modern complex AI techniques, such as deep learning and genetic algorithms, are naturally opaque. To address this issue, methods have been developed to make new models more explainable and interpretable. This includes layerwise relevance propagation (LRP), a technique for determining which features in a particular input vector contribute most strongly to a neural network's output. Other techniques explain some particular prediction made by a (nonlinear) black-box model, a goal referred to as "local interpretability". The mere transposition of the concepts of local interpretability into a remote context (where the black-box model is executed at a third party) is currently under scrutiny.
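To show how layerwise relevance propagation redistributes a prediction onto input features, the sketch below applies the LRP-ε rule, one of several LRP propagation rules, to a hypothetical two-layer ReLU network written with plain Python lists. The weights, input, and helper names are illustrative assumptions. Biases are set to zero so that the input relevances approximately sum to the output score, the conservation property LRP aims for; real implementations operate on tensors and often mix different rules per layer.

```python
# LRP-epsilon sketch for a hypothetical two-layer ReLU network (plain Python lists).
# Convention: W[k][j] is the weight from input j to output unit k.


def dense(a, W, b):
    """Pre-activations z_k = sum_j W[k][j] * a[j] + b[k]."""
    return [sum(w * x for w, x in zip(Wk, a)) + bk for Wk, bk in zip(W, b)]


def relu(z):
    return [max(0.0, v) for v in z]


def lrp_dense(a, W, b, R_out, eps=1e-6):
    """Epsilon rule: R_j = sum_k a_j * W[k][j] / (z_k + eps * sign(z_k)) * R_k."""
    z = dense(a, W, b)
    R_in = [0.0] * len(a)
    for Wk, zk, Rk in zip(W, z, R_out):
        denom = zk + (eps if zk >= 0 else -eps)  # stabiliser keeps denom away from 0
        for j, (w, x) in enumerate(zip(Wk, a)):
            R_in[j] += x * w / denom * Rk
    return R_in


def explain(x, W1, b1, W2, b2):
    """Forward pass, then propagate the output score back onto the input features."""
    h = relu(dense(x, W1, b1))
    y = dense(h, W2, b2)
    R_h = lrp_dense(h, W2, b2, y)  # relevance starts as the output score itself
    R_x = lrp_dense(x, W1, b1, R_h)  # ReLU passes hidden relevance through unchanged
    return y[0], R_x


# Hypothetical weights; zero biases so that total relevance is (approximately) conserved.
W1, b1 = [[1.0, -1.0, 0.5], [0.5, 2.0, -1.0]], [0.0, 0.0]
W2, b2 = [[1.0, 1.0]], [0.0]
y, R = explain([1.0, 0.5, 2.0], W1, b1, W2, b2)
print(y, R)  # R is close to [1.0, -0.5, 1.0] and sums to about y = 1.5
```

The negative relevance on the second feature indicates that it pushed the output down, which a plain gradient magnitude would not distinguish.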

There has been work on making glass-box models which are more transparent to inspection. This includes decision trees, Bayesian networks, sparse linear models, and more. The Association for Computing Machinery Conference on Fairness, Accountability, and Transparency (ACM FAccT) was established in 2018 to study transparency and explainability in the context of socio-technical systems, many of which include artificial intelligence.

Some techniques allow visualisations of the inputs which individual software neurons respond to most strongly. Several groups found that neurons can be aggregated into circuits that perform human-comprehensible functions, some of which reliably arise across different networks trained independently.
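One common way to produce such visualisations is activation maximisation: gradient ascent on the input to increase a chosen neuron's activation, under a norm constraint. The sketch below applies it to a single hypothetical tanh neuron in plain Python, where the answer is known in closed form (the unit vector along the weights). Feature-visualisation work applies the same idea to image inputs of deep networks, usually with additional regularisation; the neuron, step size, and step count here are assumptions for illustration.

```python
import math


def activation(x, w):
    """Activation of a hypothetical single neuron: tanh(w . x)."""
    return math.tanh(sum(wi * xi for wi, xi in zip(w, x)))


def gradient(x, w):
    """d activation / d x = (1 - tanh(w . x)^2) * w."""
    s = sum(wi * xi for wi, xi in zip(w, x))
    d = 1.0 - math.tanh(s) ** 2
    return [d * wi for wi in w]


def maximize_activation(w, steps=200, lr=0.5):
    """Projected gradient ascent: find a unit-norm input that maximally excites the neuron."""
    x = [1.0] + [0.0] * (len(w) - 1)  # arbitrary unit-norm starting input
    for _ in range(steps):
        x = [xi + lr * gi for xi, gi in zip(x, gradient(x, w))]
        norm = math.sqrt(sum(xi * xi for xi in x))
        x = [xi / norm for xi in x]  # project back onto the unit sphere
    return x


w = [0.3, -0.4]
x_star = maximize_activation(w)
print(x_star, activation(x_star, w))  # x_star is close to w / |w| = [0.6, -0.8]
```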

There are various techniques to extract compressed representations of the features of given inputs, which can then be analysed by standard clustering techniques. Alternatively, networks can be trained to output linguistic explanations of their behaviour, which are then directly human-interpretable. Model behaviour can also be explained with reference to training data—for example, by evaluating which training inputs influenced a given behaviour the most.
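The most direct way to measure which training inputs influenced a behaviour is leave-one-out retraining: refit the model without each training point and record how much a given prediction changes. Methods such as influence functions approximate this quantity without retraining. The sketch below computes it exactly, by brute force, for a hypothetical one-dimensional least-squares line, which is only feasible for very small models; the data and function names are illustrative.

```python
def fit_line(points):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    sxy = sum((x - mx) * (y - my) for x, y in points)
    slope = sxy / sxx
    return my - slope * mx, slope


def predict(model, x):
    intercept, slope = model
    return intercept + slope * x


def loo_influence(points, x_test):
    """Change in the prediction at x_test caused by removing each training point."""
    full = predict(fit_line(points), x_test)
    return [
        full - predict(fit_line(points[:i] + points[i + 1:]), x_test)
        for i in range(len(points))
    ]


# Hypothetical training data: four points on y = x plus one outlier at x = 4.
train = [(0, 0), (1, 1), (2, 2), (3, 3), (4, 10)]
influence = loo_influence(train, x_test=5)
most = max(range(len(train)), key=lambda i: abs(influence[i]))
print(train[most], influence[most])  # the outlier (4, 10) shifts the prediction by about 4.8
```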

Regulation

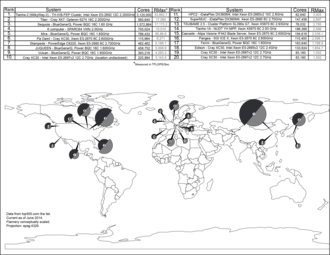

As regulators, official bodies, and general users come to depend on AI-based dynamic systems, clearer accountability will be required for automated decision-making processes to ensure trust and transparency. The first global conference exclusively dedicated to this emerging discipline was the 2017 International Joint Conference on Artificial Intelligence: Workshop on Explainable Artificial Intelligence (XAI).

The European Union introduced a right to explanation in the General Data Protection Regulation (GDPR) to address potential problems stemming from the rising importance of algorithms. The implementation of the regulation began in 2018. However, the right to explanation in GDPR covers only the local aspect of interpretability. In the United States, insurance companies are required to be able to explain their rate and coverage decisions. In France the Loi pour une République numérique (Digital Republic Act) grants subjects the right to request and receive information pertaining to the implementation of algorithms that process data about them.

Limitations

Despite efforts to increase the explainability of AI models, XAI still faces a number of limitations.

Adversarial parties

By making an AI system more explainable, we also reveal more of its inner workings. For example, the explainability method of feature importance identifies features or variables that are most important in determining the model's output, while the influential samples method identifies the training samples that are most influential in determining the output, given a particular input. Adversarial parties could take advantage of this knowledge.
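Permutation feature importance is one widely used way to compute feature importance for a model treated as a black box: shuffle one input column, re-score the model, and record how much the error grows. The sketch below is a minimal Python version with a hypothetical model and dataset. Its output is precisely the kind of information that, once disclosed, tells an outside party which inputs are worth manipulating.

```python
import random


def mse(model, X, y):
    return sum((model(row) - t) ** 2 for row, t in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean increase in MSE when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            increases.append(mse(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


# Hypothetical black box that, unknown to the user, only looks at feature 0.
def black_box(row):
    return 3.0 * row[0]


X = [[float(i), float(i % 3)] for i in range(10)]
y = [black_box(row) for row in X]
print(permutation_importance(black_box, X, y))  # feature 0 positive, feature 1 exactly 0.0
```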

For example, competitor firms could replicate aspects of the original AI system in their own product, thus reducing competitive advantage. An explainable AI system is also susceptible to being “gamed”—influenced in a way that undermines its intended purpose. One study gives the example of a predictive policing system; in this case, those who could potentially “game” the system are the criminals subject to the system's decisions. In this study, developers of the system discussed the issue of criminal gangs looking to illegally obtain passports, and they expressed concerns that, if given an idea of what factors might trigger an alert in the passport application process, those gangs would be able to “send guinea pigs” to test those triggers, eventually finding a loophole that would allow them to “reliably get passports from under the noses of the authorities”.

Technical complexity

A fundamental barrier to making AI systems explainable is the technical complexity of such systems. End users often lack the coding knowledge required to understand software of any kind. Current methods used to explain AI are mainly technical ones, geared toward machine learning engineers for debugging purposes, rather than toward the end users who are ultimately affected by the system, causing “a gap between explainability in practice and the goal of transparency”. Proposed solutions to address the issue of technical complexity include either promoting the coding education of the general public so technical explanations would be more accessible to end users, or providing explanations in layperson terms.

The solution must avoid oversimplification. It is important to strike a balance between accuracy – how faithfully the explanation reflects the process of the AI system – and explainability – how well end users understand the process. This is a difficult balance to strike, since the complexity of machine learning makes it difficult for even ML engineers to fully understand, let alone non-experts.

Understanding versus trust

The goal of explainability to end users of AI systems is to increase trust in the systems, and even to “address concerns about lack of ‘fairness’ and discriminatory effects”. However, even with a good understanding of an AI system, end users may not necessarily trust the system. In one study, participants were presented with combinations of white-box and black-box explanations, and static and interactive explanations of AI systems. While these explanations served to increase both their self-reported and objective understanding, they had no impact on the participants' level of trust, which remained skeptical.

This outcome was especially true for decisions that impacted the end user in a significant way, such as graduate school admissions. Participants judged algorithms to be too inflexible and unforgiving in comparison to human decision-makers; instead of rigidly adhering to a set of rules, humans are able to consider exceptional cases as well as appeals to their initial decision. For such decisions, explainability will not necessarily cause end users to accept the use of decision-making algorithms. We will need to either turn to another method to increase trust and acceptance of decision-making algorithms, or question the need to rely solely on AI for such impactful decisions in the first place.

Criticism

Scholars have suggested that explainability in AI should be considered a goal secondary to AI effectiveness, and that encouraging the exclusive development of XAI may limit the functionality of AI more broadly. Critiques of XAI rely on developed concepts of mechanistic and empiric reasoning from evidence-based medicine to suggest that AI technologies can be clinically validated even when their function cannot be understood by their operators.

Moreover, XAI systems have primarily focused on making AI systems understandable to AI practitioners rather than end users, and their results on user perceptions of these systems have been somewhat fragmented. Some researchers advocate the use of inherently interpretable machine learning models, rather than using post-hoc explanations in which a second model is created to explain the first. This is partly because post-hoc models increase the complexity in a decision pathway and partly because it is often unclear how faithfully a post-hoc explanation can mimic the computations of an entirely separate model. However, another view holds that what matters is whether the explanation accomplishes the task at hand, and that it does not matter whether the explanation is built into the model or produced post hoc. If a post-hoc explanation method helps a doctor diagnose cancer better, it is of secondary importance whether the explanation is correct.
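To make the fidelity concern concrete, the sketch below builds a global post-hoc surrogate: a one-split decision stump fitted to imitate a hypothetical black-box classifier that labels points inside the unit disc as positive, with fidelity measured as the fraction of inputs on which the two models agree. On this 9-by-9 grid, the best stump simply predicts the negative class everywhere, so it agrees with the black box on 72 of 81 points (about 89%) while saying nothing about the actual decision boundary. The classifier, grid, and function names are illustrative assumptions; the point is only that a high agreement score does not by itself make an explanation faithful.

```python
def fit_stump(X, labels):
    """Best single-threshold rule on one feature that mimics `labels` (a global surrogate)."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            for low in (0, 1):  # label predicted when row[j] <= t; the other label otherwise
                preds = [low if row[j] <= t else 1 - low for row in X]
                agree = sum(p == l for p, l in zip(preds, labels))
                if best is None or agree > best[0]:
                    best = (agree, j, t, low)
    agree, j, t, low = best
    return (j, t, low), agree / len(X)  # the surrogate rule and its fidelity


def black_box(x1, x2):
    # Hypothetical opaque classifier: positive strictly inside the unit disc.
    return int(x1 * x1 + x2 * x2 < 1.0)


X = [(i / 2, k / 2) for i in range(-4, 5) for k in range(-4, 5)]  # 9-by-9 grid on [-2, 2]^2
labels = [black_box(*row) for row in X]
rule, fidelity = fit_stump(X, labels)
print(rule, fidelity)
```

For a black box that really is a single threshold, such as `x1 > 0.5`, the same procedure recovers it with fidelity 1.0, so the surrogate's quality depends on how well its form matches the model being explained.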

The goals of XAI amount to a form of lossy compression that will become less effective as AI models grow in their number of parameters. Along with other factors, this leads to a theoretical limit for explainability.