From Wikipedia, the free encyclopedia

Poisson Distribution|

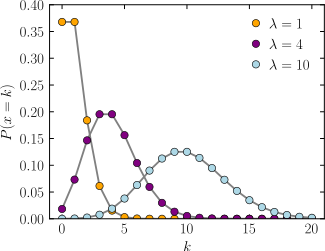

Probability mass function The horizontal axis is the index k, the number of occurrences. λ is the expected rate of occurrences. The vertical axis is the probability of k occurrences given λ. The function is defined only at integer values of k; the connecting lines are only guides for the eye. |

|

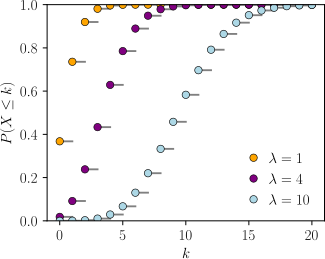

Cumulative distribution function The horizontal axis is the index k, the number of occurrences. The CDF is discontinuous at the integers of k and flat everywhere else because a variable that is Poisson distributed takes on only integer values. |

| Notation |

|

|---|

| Parameters |

(rate) (rate) |

|---|

| Support |

(Natural numbers starting from 0) (Natural numbers starting from 0) |

|---|

| PMF |

|

|---|

| CDF |

, or , or  , or , or

(for  , where , where  is the upper incomplete gamma function, is the upper incomplete gamma function,  is the floor function, and Q is the regularized gamma function) is the floor function, and Q is the regularized gamma function) |

|---|

| Mean |

|

|---|

| Median |

|

|---|

| Mode |

|

|---|

| Variance |

|

|---|

| Skewness |

|

|---|

| Ex. kurtosis |

|

|---|

| Entropy |

![\lambda [1-\log(\lambda )]+e^{-\lambda }\sum _{k=0}^{\infty }{\frac {\lambda ^{k}\log(k!)}{k!}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/cf6cf37058d59e89453fd5bf9a1ece59a8c81d1a) (for large

(for large  ) )

|

|---|

| MGF |

![{\displaystyle \exp[\lambda (e^{t}-1)]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/37f90da880784209b7d467c2ee82a15ef35544bd) |

|---|

| CF |

![{\displaystyle \exp[\lambda (e^{it}-1)]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/827b1fc5eade6309eb6c3847ef256b239d3d046b) |

|---|

| PGF |

![{\displaystyle \exp[\lambda (z-1)]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/ba700aec4d72e190d3991306786e0cfc0cf2e40e) |

|---|

| Fisher information |

|

|---|

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event. It is named after French mathematician Siméon Denis Poisson (French pronunciation: [pwasɔ̃]). The Poisson distribution can also be used for the number of events in other specified interval types such as distance, area or volume.

For instance, a call center receives an average of 180 calls per hour, 24 hours a day. The calls are independent; receiving one does not change the probability of when the next one will arrive. The number of calls received during any minute has a Poisson probability distribution with mean 3: the most likely numbers are 2 and 3, but 1 and 4 are also likely, and there is a small probability of it being as low as zero and a very small probability it could be 10. Another example is the number of decay events that occur from a radioactive source during a defined observation period.

History

The distribution was first introduced by Siméon Denis Poisson (1781–1840) and published together with his probability theory in his work Recherches sur la probabilité des jugements en matière criminelle et en matière civile (1837). The work theorized about the number of wrongful convictions in a given country by focusing on certain random variables N that count, among other things, the number of discrete occurrences (sometimes called "events" or "arrivals") that take place during a time-interval of given length. The result had already been given in 1711 by Abraham de Moivre in De Mensura Sortis seu; de Probabilitate Eventuum in Ludis a Casu Fortuito Pendentibus. This makes it an example of Stigler's law and it has prompted some authors to argue that the Poisson distribution should bear the name of de Moivre.

In 1860, Simon Newcomb fitted the Poisson distribution to the number of stars found in a unit of space.

A further practical application of this distribution was made by Ladislaus Bortkiewicz in 1898 when he was given the task of investigating the number of soldiers in the Prussian army killed accidentally by horse kicks; this experiment introduced the Poisson distribution to the field of reliability engineering.

Definitions

Probability mass function

A discrete random variable X is said to have a Poisson distribution, with parameter $\lambda > 0$, if it has a probability mass function given by:

$$f(k; \lambda) = \Pr(X = k) = \frac{\lambda^k e^{-\lambda}}{k!},$$

where

- k is the number of occurrences ($k = 0, 1, 2, \ldots$)

- e is Euler's number ($e = 2.71828\ldots$)

- ! is the factorial function.

The positive real number λ is equal to the expected value of X and also to its variance.

The Poisson distribution can be applied to systems with a large number of possible events, each of which is rare. The number of such events that occur during a fixed time interval is, under the right circumstances, a random number with a Poisson distribution.

The equation can be adapted if, instead of the average number of events $\lambda$, we are given the average rate $r$ at which events occur. Then $\lambda = rt$, and

$$P(k \text{ events in interval } t) = \frac{(rt)^k e^{-rt}}{k!}.$$
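As an illustration of the probability mass function and of the substitution λ = rt, the following minimal sketch evaluates the call-center example from the introduction (180 calls per hour, one-minute intervals). The function name `poisson_pmf` and the variable names are illustrative choices, not part of the article.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Return P(X = k) for X ~ Poisson(lam), with lam > 0."""
    if k < 0:
        return 0.0
    # Evaluate in log space so that lam**k and k! cannot overflow.
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


rate_per_hour = 180.0    # r: average calls per hour (call-center example)
interval_hours = 1 / 60  # t: one minute, expressed in hours
lam = rate_per_hour * interval_hours  # lambda = r * t, about 3 calls per minute

for k in range(11):
    print(f"P({k} calls in a minute) = {poisson_pmf(k, lam):.4f}")
```

The probabilities for k = 2 and k = 3 coincide, which matches the statement that both are the most likely counts when λ = 3.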

Example

Chewing gum on a sidewalk. The number of chewing gums on a single tile is approximately Poisson distributed.

The Poisson distribution may be useful to model events such as:

- the number of meteorites greater than 1 meter diameter that strike Earth in a year;

- the number of patients arriving in an emergency room between 10 and 11 pm; and

- the number of laser photons hitting a detector in a particular time interval.

Assumptions and validity

The Poisson distribution is an appropriate model if the following assumptions are true:

- k is the number of times an event occurs in an interval and k can take values 0, 1, 2, ….

- The occurrence of one event does not affect the probability that a second event will occur. That is, events occur independently.

- The average rate at which events occur is independent of any occurrences. For simplicity, this is usually assumed to be constant, but may in practice vary with time.

- Two events cannot occur at exactly the same instant; instead, at each very small sub-interval, either exactly one event occurs, or no event occurs.

If these conditions are true, then k is a Poisson random variable, and the distribution of k is a Poisson distribution.

The Poisson distribution is also the limit of a binomial distribution, for which the probability of success for each trial equals λ divided by the number of trials, as the number of trials approaches infinity (see Related distributions).

Examples of probability for Poisson distributions

Once in an interval events: The special case of λ = 1 and k = 0

Suppose that astronomers estimate that large meteorites (above a certain size) hit the earth on average once every 100 years (λ = 1 event per 100 years), and that the number of meteorite hits follows a Poisson distribution. What is the probability of k = 0 meteorite hits in the next 100 years?

$$P(k = 0 \text{ meteorites hit in next 100 years}) = \frac{1^0 e^{-1}}{0!} = \frac{1}{e} \approx 0.37$$

Under these assumptions, the probability that no large meteorites hit the earth in the next 100 years is roughly 0.37. The remaining 1 − 0.37 = 0.63 is the probability of 1, 2, 3, or more large meteorite hits in the next 100 years.

The same calculation applies to any event that occurs on average once per interval. For example, if an overflow flood occurs on average once every 100 years (λ = 1), the probability of no overflow floods in 100 years is also roughly 0.37.

In general, if an event occurs on average once per interval (λ = 1), and the events follow a Poisson distribution, then P(0 events in next interval) = 0.37. In addition, P(exactly one event in next interval) = 0.37, because $1^1 e^{-1} / 1! = e^{-1}$ as well.

Examples that violate the Poisson assumptions

The number of students who arrive at the student union per minute will likely not follow a Poisson distribution, because the rate is not constant (low rate during class time, high rate between class times) and the arrivals of individual students are not independent (students tend to come in groups).

The number of magnitude 5 earthquakes per year in a country may not follow a Poisson distribution if one large earthquake increases the probability of aftershocks of similar magnitude.

Examples in which at least one event is guaranteed are not Poisson distributed, but may be modeled using a zero-truncated Poisson distribution.

Count distributions in which the number of intervals with zero events is higher than predicted by a Poisson model may be modeled using a zero-inflated model.

Properties

Descriptive statistics

- The expected value and variance of a Poisson-distributed random variable are both equal to λ.

- The coefficient of variation is $\lambda^{-1/2}$, while the index of dispersion is 1.

- The mean absolute deviation about the mean is

![{\displaystyle \operatorname {E} [|X-\lambda |]={\frac {2\lambda ^{\lfloor \lambda \rfloor +1}e^{-\lambda }}{\lfloor \lambda \rfloor !}}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2d2a28e8d0c95b951904e617de82eb5ab5d74155)

- The mode of a Poisson-distributed random variable with non-integer λ is equal to $\lfloor \lambda \rfloor$, which is the largest integer less than or equal to λ. This is also written as floor(λ). When λ is a positive integer, the modes are λ and λ − 1.

- All of the cumulants of the Poisson distribution are equal to the expected value λ. The nth factorial moment of the Poisson distribution is $\lambda^n$.

- The expected value of a Poisson process is sometimes decomposed into the product of intensity and exposure (or more generally expressed as the integral of an "intensity function" over time or space, sometimes described as “exposure”).

Median

Bounds for the median ($\nu$) of the distribution are known and are sharp:

$$\lambda - \ln 2 \le \nu < \lambda + \frac{1}{3}.$$

Higher moments

The higher non-centered moments $m_k$ of the Poisson distribution are Touchard polynomials in λ:

$$m_k = \sum_{i=0}^{k} \lambda^i \begin{Bmatrix} k \\ i \end{Bmatrix},$$

where the {braces} denote Stirling numbers of the second kind. The coefficients of the polynomials have a combinatorial meaning. In fact, when the expected value of the Poisson distribution is 1, then Dobinski's formula says that the nth moment equals the number of partitions of a set of size n.

A simple bound is

![{\displaystyle m_{k}=E[X^{k}]\leq \left({\frac {k}{\log(k/\lambda +1)}}\right)^{k}\leq \lambda ^{k}\exp \left({\frac {k^{2}}{2\lambda }}\right).}](https://wikimedia.org/api/rest_v1/media/math/render/svg/18d2997208e48609996f73dc03db70e997b01b29)
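The Touchard-polynomial form of the moments can be checked numerically. The sketch below is a minimal illustration, assuming the standard recurrence S(n, k) = k·S(n−1, k) + S(n−1, k−1) for Stirling numbers of the second kind; the helper names are hypothetical. It also compares the result with a truncated direct sum over the probability mass function.

```python
import math


def stirling2(n: int, k: int) -> int:
    """Stirling number of the second kind S(n, k) via the standard recurrence."""
    table = [[0] * (k + 1) for _ in range(n + 1)]
    table[0][0] = 1
    for i in range(1, n + 1):
        for j in range(1, min(i, k) + 1):
            table[i][j] = j * table[i - 1][j] + table[i - 1][j - 1]
    return table[n][k]


def poisson_moment(k: int, lam: float) -> float:
    """k-th raw moment E[X^k], written as a Touchard polynomial in lam."""
    return sum(stirling2(k, i) * lam**i for i in range(k + 1))


def poisson_moment_by_sum(k: int, lam: float, terms: int = 200) -> float:
    """The same moment from a truncated sum of j^k * P(X = j)."""
    return sum(
        j**k * math.exp(j * math.log(lam) - lam - math.lgamma(j + 1))
        for j in range(terms)
    )


# With lam = 1 the moments are the Bell numbers (Dobinski's formula).
print([round(poisson_moment(n, 1.0)) for n in range(1, 7)])
```

For λ = 1 the first moments are 1, 2, 5, 15, 52, 203, the number of partitions of sets of size 1 to 6.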

Sums of Poisson-distributed random variables

If $X_i \sim \operatorname{Pois}(\lambda_i)$ for $i = 1, \dots, n$ are independent, then $\sum_{i=1}^{n} X_i \sim \operatorname{Pois}\left(\sum_{i=1}^{n} \lambda_i\right)$. A converse is Raikov's theorem, which says that if the sum of two independent random variables is Poisson-distributed, then so are each of those two independent random variables.
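The closure of the Poisson family under sums of independent variables can be checked by direct convolution. This is a small numerical sketch, not a proof; the rates 1.5 and 2.5 are arbitrary illustrative values.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def convolve_pmf(k: int, lam1: float, lam2: float) -> float:
    """P(X1 + X2 = k) for independent X1 ~ Pois(lam1), X2 ~ Pois(lam2)."""
    return sum(poisson_pmf(j, lam1) * poisson_pmf(k - j, lam2) for j in range(k + 1))


lam1, lam2 = 1.5, 2.5  # illustrative rates
max_gap = max(
    abs(convolve_pmf(k, lam1, lam2) - poisson_pmf(k, lam1 + lam2)) for k in range(30)
)
print(f"largest difference from Pois(lam1 + lam2): {max_gap:.2e}")
```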

Other properties

- The Poisson distributions are infinitely divisible probability distributions.

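- The directed Kullback–Leibler divergence of $P = \operatorname{Pois}(\lambda)$ from $P_0 = \operatorname{Pois}(\lambda_0)$ is given by

$$\operatorname{D}_{\text{KL}}(P \parallel P_0) = \lambda_0 - \lambda + \lambda \log \frac{\lambda}{\lambda_0}.$$

- Bounds for the tail probabilities of a Poisson random variable $X \sim \operatorname{Pois}(\lambda)$ can be derived using a Chernoff bound argument.

$$P(X \geq x) \leq \frac{(e\lambda)^x e^{-\lambda}}{x^x}, \text{ for } x > \lambda,$$

$$P(X \leq x) \leq \frac{(e\lambda)^x e^{-\lambda}}{x^x}, \text{ for } x < \lambda.$$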

- The upper tail probability can be tightened (by a factor of at least two) in terms of the directed Kullback–Leibler divergence described above.

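- Inequalities that relate the distribution function of a Poisson random variable $X \sim \operatorname{Pois}(\lambda)$ to the standard normal distribution function $\Phi(x)$ are as follows:

$$\Phi\left(\operatorname{sign}(k - \lambda)\sqrt{2\operatorname{D}_{\text{KL}}(Q_- \parallel P)}\right) < P(X \leq k) < \Phi\left(\operatorname{sign}(k + 1 - \lambda)\sqrt{2\operatorname{D}_{\text{KL}}(Q_+ \parallel P)}\right), \text{ for } k > 0,$$

where $\operatorname{D}_{\text{KL}}(Q_- \parallel P)$ and $\operatorname{D}_{\text{KL}}(Q_+ \parallel P)$ are again directed Kullback–Leibler divergences, of $Q_- = \operatorname{Pois}(k)$ and of $Q_+ = \operatorname{Pois}(k+1)$ from $P = \operatorname{Pois}(\lambda)$.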
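The Chernoff upper-tail bound is the exponential of minus a Kullback–Leibler divergence: $(e\lambda)^x e^{-\lambda} / x^x = \exp(-\operatorname{D}_{\text{KL}}(\operatorname{Pois}(x) \parallel \operatorname{Pois}(\lambda)))$. The following sketch checks this identity and the bound itself numerically for one illustrative rate (λ = 4). The function names and the truncation length of the tail sum are assumptions made for the example.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def kl_poisson(lam: float, lam0: float) -> float:
    """D_KL(Pois(lam) || Pois(lam0)) = lam0 - lam + lam * log(lam / lam0)."""
    return lam0 - lam + lam * math.log(lam / lam0)


def upper_tail(x: int, lam: float, terms: int = 500) -> float:
    """P(X >= x), from a truncated sum of the probability mass function."""
    return sum(poisson_pmf(k, lam) for k in range(x, x + terms))


def chernoff_upper(x: int, lam: float) -> float:
    """Chernoff bound (e*lam)^x * e^(-lam) / x^x, intended for x > lam."""
    return math.exp(x * (1 + math.log(lam) - math.log(x)) - lam)


lam = 4.0  # illustrative rate
for x in range(5, 21, 5):
    print(x, upper_tail(x, lam), chernoff_upper(x, lam))
```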

Poisson races

Let $X \sim \operatorname{Pois}(\lambda)$ and $Y \sim \operatorname{Pois}(\mu)$ be independent random variables, with $\lambda < \mu$, then we have that

$$\frac{e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}}{(\lambda + \mu)^2} - \frac{e^{-(\lambda + \mu)}}{2\sqrt{\lambda\mu}} - \frac{e^{-(\lambda + \mu)}}{4\lambda\mu} \leq P(X - Y \geq 0) \leq e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}$$

The upper bound is proved using a standard Chernoff bound.

The lower bound can be proved by noting that $P(X - Y \geq 0 \mid X + Y = i)$ is the probability that $Z \geq \frac{i}{2}$, where $Z \sim \operatorname{Bin}\left(i, \frac{\lambda}{\lambda + \mu}\right)$, which is bounded below by $\frac{1}{(i+1)^2} e^{-i D\left(0.5 \parallel \frac{\lambda}{\lambda + \mu}\right)}$, where $D$ is relative entropy (see the entry on bounds on tails of binomial distributions for details). Further noting that $X + Y \sim \operatorname{Pois}(\lambda + \mu)$, and computing a lower bound on the unconditional probability gives the result. More details can be found in the appendix of Kamath et al.

Related distributions

General

- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the difference $Y = X_1 - X_2$ follows a Skellam distribution.

- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the distribution of $X_1$ conditional on $X_1 + X_2$ is a binomial distribution. Specifically, if $X_1 + X_2 = k$, then $X_1 \mid X_1 + X_2 = k \sim \operatorname{Binom}\left(k, \frac{\lambda_1}{\lambda_1 + \lambda_2}\right)$. More generally, if X1, X2, …, Xn are independent Poisson random variables with parameters λ1, λ2, …, λn then given $\sum_{j=1}^{n} X_j = k$, it follows that $X_i \mid \sum_{j=1}^{n} X_j = k \sim \operatorname{Binom}\left(k, \frac{\lambda_i}{\sum_{j=1}^{n} \lambda_j}\right)$. In fact, $\{X_i\} \sim \operatorname{Multinom}\left(k, \left\{\frac{\lambda_i}{\sum_{j=1}^{n} \lambda_j}\right\}\right)$.

- If $X \sim \operatorname{Pois}(\lambda)$ and the distribution of $Y$, conditional on X = k, is a binomial distribution, $Y \mid (X = k) \sim \operatorname{Binom}(k, p)$, then the distribution of Y follows a Poisson distribution $Y \sim \operatorname{Pois}(\lambda \cdot p)$. In fact, if $\{Y_i\}$, conditional on X = k, follows a multinomial distribution, $\{Y_i\} \mid (X = k) \sim \operatorname{Multinom}(k, p_i)$, then each $Y_i$ follows an independent Poisson distribution $Y_i \sim \operatorname{Pois}(\lambda \cdot p_i)$.

- The Poisson distribution can be derived as a limiting case to the binomial distribution as the number of trials goes to infinity and the expected number of successes remains fixed (see law of rare events below). Therefore, it can be used as an approximation of the binomial distribution if n is sufficiently large and p is sufficiently small. There is a rule of thumb stating that the Poisson distribution is a good approximation of the binomial distribution if n is at least 20 and p is smaller than or equal to 0.05, and an excellent approximation if n ≥ 100 and np ≤ 10.

- The Poisson distribution is a special case of the discrete compound Poisson distribution (or stuttering Poisson distribution) with only one parameter. The discrete compound Poisson distribution can be deduced from the limiting distribution of univariate multinomial distribution. It is also a special case of a compound Poisson distribution.

- For sufficiently large values of λ (say λ > 1000), the normal distribution with mean λ and variance λ (standard deviation $\sqrt{\lambda}$) is an excellent approximation to the Poisson distribution. If λ is greater than about 10, then the normal distribution is a good approximation if an appropriate continuity correction is performed, i.e., if P(X ≤ x), where x is a non-negative integer, is replaced by P(X ≤ x + 0.5).

- Variance-stabilizing transformation: If $X \sim \operatorname{Pois}(\lambda)$, then $Y = 2\sqrt{X} \approx \mathcal{N}(2\sqrt{\lambda}; 1)$, and $Y = \sqrt{X} \approx \mathcal{N}(\sqrt{\lambda}; 1/4)$. Under this transformation, the convergence to normality (as $\lambda$ increases) is far faster than the untransformed variable. Other, slightly more complicated, variance stabilizing transformations are available, one of which is Anscombe transform. See Data transformation (statistics) for more general uses of transformations.

- If for every t > 0 the number of arrivals in the time interval [0, t] follows the Poisson distribution with mean λt, then the sequence of inter-arrival times are independent and identically distributed exponential random variables having mean 1/λ.

- The cumulative distribution functions of the Poisson and chi-squared distributions are related in the following ways:

$$F_{\text{Poisson}}(k; \lambda) = 1 - F_{\chi^2}(2\lambda; 2(k+1)) \quad \text{for integer } k \ge 0,$$

and, for $k \ge 1$,

$$P(X = k) = F_{\chi^2}(2\lambda; 2k) - F_{\chi^2}(2\lambda; 2(k+1)).$$
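To illustrate the normal approximation with continuity correction mentioned in the list above, the sketch below compares the exact Poisson CDF with the normal CDF evaluated at x and at x + 0.5. The values λ = 25 and x = 20 are illustrative assumptions; `statistics.NormalDist` is from the Python standard library.

```python
import math
from statistics import NormalDist


def poisson_cdf(x: int, lam: float) -> float:
    """P(X <= x) for X ~ Poisson(lam), by summing the probability mass function."""
    return sum(
        math.exp(k * math.log(lam) - lam - math.lgamma(k + 1)) for k in range(x + 1)
    )


lam = 25.0  # illustrative rate, above the "about 10" rule of thumb
x = 20      # illustrative evaluation point
normal = NormalDist(mu=lam, sigma=math.sqrt(lam))

exact = poisson_cdf(x, lam)
plain = normal.cdf(x)            # no continuity correction
corrected = normal.cdf(x + 0.5)  # with continuity correction

print(f"exact={exact:.4f} plain={plain:.4f} corrected={corrected:.4f}")
```

In this example the corrected value lies much closer to the exact probability than the uncorrected one.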

Poisson approximation

Assume $X_1 \sim \operatorname{Pois}(\lambda_1), X_2 \sim \operatorname{Pois}(\lambda_2), \dots, X_n \sim \operatorname{Pois}(\lambda_n)$ where $\lambda_1 + \lambda_2 + \dots + \lambda_n = 1$, then $(X_1, X_2, \dots, X_n)$ is multinomially distributed $(X_1, X_2, \dots, X_n) \sim \operatorname{Mult}(N, \lambda_1, \lambda_2, \dots, \lambda_n)$ conditioned on $N = X_1 + X_2 + \dots + X_n$.

This means, among other things, that for any nonnegative function $f(x_1, x_2, \dots, x_n)$, if $(Y_1, Y_2, \dots, Y_n) \sim \operatorname{Mult}(m, \mathbf{p})$ is multinomially distributed, then

![{\displaystyle \operatorname {E} [f(Y_{1},Y_{2},\dots ,Y_{n})]\leq e{\sqrt {m}}\operatorname {E} [f(X_{1},X_{2},\dots ,X_{n})]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2d196f4817af3673334cad96f9aa090d2ae3cb7e)

where the $X_i \sim \operatorname{Pois}(m p_i)$ are independent.

The factor of $e\sqrt{m}$ can be replaced by 2 if $f$ is further assumed to be monotonically increasing or decreasing.

Bivariate Poisson distribution

This distribution has been extended to the bivariate case. The generating function for this distribution is

![{\displaystyle g(u,v)=\exp[(\theta _{1}-\theta _{12})(u-1)+(\theta _{2}-\theta _{12})(v-1)+\theta _{12}(uv-1)]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/0d994b2c4f3b36c80cfd0b97ed72fe289c0855d4)

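with

$$\theta_1, \theta_2 > \theta_{12} > 0.$$

The marginal distributions are Poisson(θ1) and Poisson(θ2) and the correlation coefficient is limited to the range

$$0 \le \rho \le \min\left\{\sqrt{\frac{\theta_1}{\theta_2}}, \sqrt{\frac{\theta_2}{\theta_1}}\right\}.$$

A simple way to generate a bivariate Poisson distribution $X_1, X_2$ is to take three independent Poisson distributions $Y_1, Y_2, Y_3$ with means $\lambda_1, \lambda_2, \lambda_3$ and then set $X_1 = Y_1 + Y_3$, $X_2 = Y_2 + Y_3$. The probability function of the bivariate Poisson distribution is

$$\Pr(X_1 = k_1, X_2 = k_2) = \exp(-\lambda_1 - \lambda_2 - \lambda_3) \frac{\lambda_1^{k_1}}{k_1!} \frac{\lambda_2^{k_2}}{k_2!} \sum_{k=0}^{\min(k_1, k_2)} \binom{k_1}{k} \binom{k_2}{k} k! \left(\frac{\lambda_3}{\lambda_1 \lambda_2}\right)^k$$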
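The construction X1 = Y1 + Y3, X2 = Y2 + Y3 gives a direct way to evaluate the joint probability function. The sketch below implements the closed-form sum over the shared component and checks that the marginal of X1 is Poisson with mean λ1 + λ3, which follows from the construction. The function names and the parameter values are illustrative assumptions.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def bivariate_poisson_pmf(k1: int, k2: int, lam1: float, lam2: float, lam3: float) -> float:
    """Pr(X1 = k1, X2 = k2) for X1 = Y1 + Y3, X2 = Y2 + Y3 with independent
    Y1 ~ Pois(lam1), Y2 ~ Pois(lam2), Y3 ~ Pois(lam3)."""
    prefactor = (
        math.exp(-(lam1 + lam2 + lam3))
        * lam1**k1 / math.factorial(k1)
        * lam2**k2 / math.factorial(k2)
    )
    series = sum(
        math.comb(k1, k) * math.comb(k2, k) * math.factorial(k)
        * (lam3 / (lam1 * lam2)) ** k
        for k in range(min(k1, k2) + 1)
    )
    return prefactor * series


# Illustrative parameters; the marginal of X1 should be Pois(lam1 + lam3).
lam1, lam2, lam3 = 1.0, 2.0, 0.5
marginal = sum(bivariate_poisson_pmf(2, k2, lam1, lam2, lam3) for k2 in range(60))
print(marginal, poisson_pmf(2, lam1 + lam3))
```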

Free Poisson distribution

The free Poisson distribution with jump size $\alpha$ and rate $\lambda$ arises in free probability theory as the limit of repeated free convolution

$$\left(\left(1 - \frac{\lambda}{N}\right)\delta_0 + \frac{\lambda}{N}\delta_\alpha\right)^{\boxplus N}$$

as N → ∞.

In other words, let $X_N$ be random variables so that $X_N$ has value $\alpha$ with probability $\frac{\lambda}{N}$ and value 0 with the remaining probability. Assume also that the family $X_1, X_2, \ldots$ are freely independent. Then the limit as $N \to \infty$ of the law of $X_1 + \cdots + X_N$ is given by the Free Poisson law with parameters $\lambda, \alpha$.

This definition is analogous to one of the ways in which the classical Poisson distribution is obtained from a (classical) Poisson process.

The measure associated to the free Poisson law is given by

$$\mu = \begin{cases} (1 - \lambda)\delta_0 + \nu, & \text{if } 0 \le \lambda \le 1 \\ \nu, & \text{if } \lambda > 1, \end{cases}$$

where

$$\nu = \frac{1}{2\pi\alpha t}\sqrt{4\lambda\alpha^2 - (t - \alpha(1 + \lambda))^2}\,dt$$

and has support

![[\alpha (1-\sqrt{\lambda})^2,\alpha (1+\sqrt{\lambda})^2]](https://wikimedia.org/api/rest_v1/media/math/render/svg/e4f29bfa6afda41f2042de51314c69ffa22c703c).

This law also arises in random matrix theory as the Marchenko–Pastur law. Its free cumulants are equal to $\kappa_n = \lambda\alpha^n$.

Some transforms of this law

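We give values of some important transforms of the free Poisson law; the computation can be found, e.g., in the book Lectures on the Combinatorics of Free Probability by A. Nica and R. Speicher.

The R-transform of the free Poisson law is given by

$$R(z) = \frac{\lambda\alpha}{1 - \alpha z}.$$

The Cauchy transform (which is the negative of the Stieltjes transformation) is given by

$$G(z) = \frac{z + \alpha - \lambda\alpha - \sqrt{(z - \alpha(1 + \lambda))^2 - 4\lambda\alpha^2}}{2\alpha z}.$$

The S-transform is given by

$$S(z) = \frac{1}{z + \lambda}$$

in the case that $\alpha = 1$.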

Weibull and Stable count

Poisson's probability mass function $f(k; \lambda)$ can be expressed in a form similar to the product distribution of a Weibull distribution and a variant form of the stable count distribution. The variable $(k + 1)$ can be regarded as inverse of Lévy's stability parameter in the stable count distribution:

![{\displaystyle f(k;\lambda )=\displaystyle \int _{0}^{\infty }{\frac {1}{u}}\,W_{k+1}({\frac {\lambda }{u}})\left[\left(k+1\right)u^{k}\,{\mathfrak {N}}_{\frac {1}{k+1}}\left(u^{k+1}\right)\right]\,du,}](https://wikimedia.org/api/rest_v1/media/math/render/svg/a4ff582454e495f55331a2e139a452be39d0981a)

where $\mathfrak{N}_{\alpha}(\nu)$ is a standard stable count distribution of shape $\alpha = 1/(k+1)$, and $W_{k+1}(x)$ is a standard Weibull distribution of shape $k + 1$.

Statistical inference

Parameter estimation

Given a sample of n measured values $k_i \in \{0, 1, \dots\}$, for i = 1, …, n, we wish to estimate the value of the parameter λ of the Poisson population from which the sample was drawn. The maximum likelihood estimate is

$$\widehat{\lambda}_{\mathrm{MLE}} = \frac{1}{n}\sum_{i=1}^{n} k_i.$$

Since each observation has expectation λ so does the sample mean. Therefore, the maximum likelihood estimate is an unbiased estimator of λ. It is also an efficient estimator since its variance achieves the Cramér–Rao lower bound (CRLB). Hence it is minimum-variance unbiased. Also it can be proven that the sum (and hence the sample mean as it is a one-to-one function of the sum) is a complete and sufficient statistic for λ.

To prove sufficiency we may use the factorization theorem. Consider partitioning the probability mass function of the joint Poisson distribution for the sample into two parts: one that depends solely on the sample $\mathbf{x}$ (called $h(\mathbf{x})$) and one that depends on the parameter $\lambda$ and the sample $\mathbf{x}$ only through the function $T(\mathbf{x})$. Then $T(\mathbf{x})$ is a sufficient statistic for $\lambda$.

$$P(\mathbf{x}) = \prod_{i=1}^{n} \frac{\lambda^{x_i} e^{-\lambda}}{x_i!} = \frac{1}{\prod_{i=1}^{n} x_i!} \times \lambda^{\sum_{i=1}^{n} x_i} e^{-n\lambda}$$

The first term, $h(\mathbf{x})$, depends only on $\mathbf{x}$. The second term, $g(T(\mathbf{x}) \mid \lambda)$, depends on the sample only through $T(\mathbf{x}) = \sum_{i=1}^{n} x_i$. Thus, $T(\mathbf{x})$ is sufficient.

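To find the parameter λ that maximizes the probability function for the Poisson population, we can use the logarithm of the likelihood function:

$$\ell(\lambda) = \ln \prod_{i=1}^{n} f(k_i \mid \lambda) = \sum_{i=1}^{n} \ln\left(\frac{e^{-\lambda}\lambda^{k_i}}{k_i!}\right) = -n\lambda + \left(\sum_{i=1}^{n} k_i\right)\ln(\lambda) - \sum_{i=1}^{n} \ln(k_i!).$$

We take the derivative of $\ell$ with respect to λ and compare it to zero:

$$\frac{\mathrm{d}}{\mathrm{d}\lambda}\ell(\lambda) = 0 \iff -n + \left(\sum_{i=1}^{n} k_i\right)\frac{1}{\lambda} = 0.$$

Solving for λ gives a stationary point.

$$\lambda = \frac{\sum_{i=1}^{n} k_i}{n}$$

So λ is the average of the $k_i$ values. Obtaining the sign of the second derivative of $\ell$ at the stationary point will determine what kind of extreme value λ is.

$$\frac{\partial^2 \ell}{\partial \lambda^2} = -\lambda^{-2}\sum_{i=1}^{n} k_i$$

Evaluating the second derivative at the stationary point gives:

$$\frac{\partial^2 \ell}{\partial \lambda^2} = -\frac{n^2}{\sum_{i=1}^{n} k_i}$$

which is the negative of n times the reciprocal of the average of the $k_i$. This expression is negative when the average is positive. If this is satisfied, then the stationary point maximizes the probability function.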
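The derivation can be followed on a small data set. The sample below is hypothetical and serves only to illustrate that the sample mean maximizes the log-likelihood and that the second derivative at that point is negative.

```python
import math


def log_likelihood(lam: float, sample: list[int]) -> float:
    """Poisson log-likelihood: -n*lam + (sum k_i)*ln(lam) - sum ln(k_i!)."""
    n = len(sample)
    return -n * lam + sum(sample) * math.log(lam) - sum(math.lgamma(k + 1) for k in sample)


sample = [2, 3, 1, 4, 3, 0, 5, 2]  # hypothetical observed counts
n = len(sample)

lam_hat = sum(sample) / n              # stationary point: the sample mean
second_derivative = -n**2 / sum(sample)  # -n^2 / sum(k_i), negative if the mean is positive

print(f"lam_hat = {lam_hat}, second derivative = {second_derivative}")
print(log_likelihood(lam_hat, sample) > log_likelihood(lam_hat + 0.1, sample))
```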

For completeness, a family of distributions is said to be complete if and only if $E(g(T)) = 0$ implies that $P_\lambda(g(T) = 0) = 1$ for all $\lambda$. If the individual $X_i$ are iid $\mathrm{Po}(\lambda)$, then $T(\mathbf{x}) = \sum_{i=1}^{n} X_i \sim \mathrm{Po}(n\lambda)$. Knowing the distribution we want to investigate, it is easy to see that the statistic is complete.

$$E(g(T)) = \sum_{t=0}^{\infty} g(t)\frac{(n\lambda)^t e^{-n\lambda}}{t!} = 0$$

For this equality to hold, $g(t)$ must be 0. This follows from the fact that none of the other terms will be 0 for all $t$ in the sum and for all possible values of $\lambda$. Hence, $E(g(T)) = 0$ for all $\lambda$ implies that $P_\lambda(g(T) = 0) = 1$, and the statistic has been shown to be complete.

Confidence interval

The confidence interval for the mean of a Poisson distribution can be expressed using the relationship between the cumulative distribution functions of the Poisson and chi-squared distributions. The chi-squared distribution is itself closely related to the gamma distribution, and this leads to an alternative expression. Given an observation k from a Poisson distribution with mean μ, a confidence interval for μ with confidence level 1 – α is

$$\tfrac{1}{2}\chi^2(\alpha/2; 2k) \le \mu \le \tfrac{1}{2}\chi^2(1 - \alpha/2; 2k + 2),$$

or equivalently,

$$F^{-1}(\alpha/2; k, 1) \le \mu \le F^{-1}(1 - \alpha/2; k + 1, 1),$$

where $\chi^2(p; n)$ is the quantile function (corresponding to a lower tail area p) of the chi-squared distribution with n degrees of freedom and $F^{-1}(p; n, 1)$ is the quantile function of a gamma distribution with shape parameter n and scale parameter 1. This interval is 'exact' in the sense that its coverage probability is never less than the nominal 1 – α.

When quantiles of the gamma distribution are not available, an accurate approximation to this exact interval has been proposed (based on the Wilson–Hilferty transformation):

$$k\left(1 - \frac{1}{9k} - \frac{z_{\alpha/2}}{3\sqrt{k}}\right)^3 \le \mu \le (k + 1)\left(1 - \frac{1}{9(k + 1)} + \frac{z_{\alpha/2}}{3\sqrt{k + 1}}\right)^3,$$

where $z_{\alpha/2}$ denotes the standard normal deviate with upper tail area α / 2.

For application of these formulae in the same context as above (given a sample of n measured values $k_i$ each drawn from a Poisson distribution with mean λ), one would set

$$k = \sum_{i=1}^{n} k_i,$$

calculate an interval for μ = nλ, and then derive the interval for λ.
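The approximate interval based on the Wilson–Hilferty transformation needs only a standard normal quantile, so it can be sketched with the Python standard library. The function names are hypothetical, and returning 0 as the lower limit when k = 0 is an assumption made here to avoid dividing by zero.

```python
import math
from statistics import NormalDist


def poisson_ci_wilson_hilferty(k: int, alpha: float = 0.05) -> tuple[float, float]:
    """Approximate (1 - alpha) confidence interval for the mean mu,
    given a single observed count k."""
    z = NormalDist().inv_cdf(1 - alpha / 2)  # normal deviate with upper tail area alpha/2
    # Assumption: the lower limit is taken as 0 when k = 0.
    lower = 0.0 if k == 0 else k * (1 - 1 / (9 * k) - z / (3 * math.sqrt(k))) ** 3
    upper = (k + 1) * (1 - 1 / (9 * (k + 1)) + z / (3 * math.sqrt(k + 1))) ** 3
    return lower, upper


def rate_interval(sample: list[int], alpha: float = 0.05) -> tuple[float, float]:
    """Interval for lambda from n observations: interval for mu = n*lambda, divided by n."""
    n = len(sample)
    lower, upper = poisson_ci_wilson_hilferty(sum(sample), alpha)
    return lower / n, upper / n


lower, upper = poisson_ci_wilson_hilferty(10)
print(f"k = 10: {lower:.3f} <= mu <= {upper:.3f}")
```

For k = 10 and α = 0.05 the result is close to the exact chi-squared interval, which is roughly 4.80 to 18.39.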

Bayesian inference

In Bayesian inference, the conjugate prior for the rate parameter λ of the Poisson distribution is the gamma distribution. Let

$$\lambda \sim \mathrm{Gamma}(\alpha, \beta)$$

denote that λ is distributed according to the gamma density g parameterized in terms of a shape parameter α and an inverse scale parameter β:

$$g(\lambda \mid \alpha, \beta) = \frac{\beta^{\alpha}}{\Gamma(\alpha)}\lambda^{\alpha - 1}e^{-\beta\lambda} \qquad \text{for } \lambda > 0.$$

Then, given the same sample of n measured values $k_i$ as before, and a prior of Gamma(α, β), the posterior distribution is

$$\lambda \sim \mathrm{Gamma}\left(\alpha + \sum_{i=1}^{n} k_i, \beta + n\right).$$

Note that the posterior mean is linear and is given by

![{\displaystyle E[\lambda |k_{1},...k_{n}]={\frac {\alpha +\sum _{i=1}^{n}k_{i}}{\beta +n}}.\!}](https://wikimedia.org/api/rest_v1/media/math/render/svg/1c48db001e3086a0e47656072076eb89621ac13c)

It can be shown that the gamma distribution is the only prior that induces linearity of the conditional mean. Moreover, a converse result exists which states that if the conditional mean is close to a linear function in the $L_2$ distance then the prior distribution of λ must be close to the gamma distribution in Lévy distance.

The posterior mean E[λ] approaches the maximum likelihood estimate $\widehat{\lambda}_{\mathrm{MLE}}$ in the limit as $\alpha \to 0, \beta \to 0$, which follows immediately from the general expression of the mean of the gamma distribution.

The posterior predictive distribution for a single additional observation is a negative binomial distribution, sometimes called a gamma–Poisson distribution.
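The conjugate update amounts to adding the observed counts to the shape parameter and the number of observations to the inverse scale parameter. The sketch below uses a hypothetical sample and prior, and also shows the posterior mean approaching the sample mean as the prior parameters shrink toward zero.

```python
def gamma_poisson_update(alpha: float, beta: float, sample: list[int]) -> tuple[float, float]:
    """Posterior Gamma(shape, inverse scale) after observing Poisson counts,
    starting from a Gamma(alpha, beta) prior."""
    return alpha + sum(sample), beta + len(sample)


sample = [2, 3, 1, 4, 3, 0, 5, 2]  # hypothetical observed counts

alpha_post, beta_post = gamma_poisson_update(2.0, 1.0, sample)  # illustrative prior
posterior_mean = alpha_post / beta_post

# A nearly flat prior (alpha, beta close to 0) gives a mean close to the sample mean.
a0, b0 = gamma_poisson_update(1e-9, 1e-9, sample)
near_mle = a0 / b0

print(alpha_post, beta_post, posterior_mean, near_mle)
```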

Simultaneous estimation of multiple Poisson means

Suppose $X_1, X_2, \dots, X_p$ is a set of independent random variables from a set of $p$ Poisson distributions, each with a parameter $\lambda_i$, $i = 1, \dots, p$, and we would like to estimate these parameters. Then, Clevenson and Zidek show that under the normalized squared error loss $L(\lambda, \hat{\lambda}) = \sum_{i=1}^{p} \lambda_i^{-1}(\hat{\lambda}_i - \lambda_i)^2$, when $p > 1$, then, similar as in Stein's example for the Normal means, the MLE estimator $\hat{\lambda}_i = X_i$ is inadmissible.

In this case, a family of minimax estimators is given for any $0 < c \le 2(p - 1)$ and $b \ge (p - 2 + p^{-1})$ as

$$\hat{\lambda}_i = \left(1 - \frac{c}{b + \sum_{i=1}^{p} X_i}\right)X_i, \qquad i = 1, \dots, p.$$

Occurrence and applications

Applications of the Poisson distribution can be found in many fields including:

The Poisson distribution arises in connection with Poisson processes. It applies to various phenomena of discrete properties (that is, those that may happen 0, 1, 2, 3, … times during a given period of time or in a given area) whenever the probability of the phenomenon happening is constant in time or space. Examples of events that may be modelled as a Poisson distribution include:

- The number of soldiers killed by horse-kicks each year in each corps in the Prussian cavalry. This example was used in a book by Ladislaus Bortkiewicz (1868–1931).

- The number of yeast cells used when brewing Guinness beer. This example was used by William Sealy Gosset (1876–1937).

- The number of phone calls arriving at a call centre within a minute. This example was described by A.K. Erlang (1878–1929).

- Internet traffic.

- The number of goals in sports involving two competing teams.

- The number of deaths per year in a given age group.

- The number of jumps in a stock price in a given time interval.

- Under an assumption of homogeneity, the number of times a web server is accessed per minute.

- The number of mutations in a given stretch of DNA after a certain amount of radiation.

- The proportion of cells that will be infected at a given multiplicity of infection.

- The number of bacteria in a certain amount of liquid.

- The arrival of photons on a pixel circuit at a given illumination and over a given time period.

- The targeting of V-1 flying bombs on London during World War II investigated by R. D. Clarke in 1946.

Gallagher showed in 1976 that the counts of prime numbers in short intervals obey a Poisson distribution provided a certain version of the unproved prime r-tuple conjecture of Hardy-Littlewood is true.

Law of rare events

Comparison of the Poisson distribution (black lines) and the binomial distribution with n = 10 (red circles), n = 20 (blue circles), n = 1000 (green circles). All distributions have a mean of 5. The horizontal axis shows the number of events k. As n gets larger, the Poisson distribution becomes an increasingly better approximation for the binomial distribution with the same mean.

The rate of an event is related to the probability of an event

occurring in some small subinterval (of time, space or otherwise). In

the case of the Poisson distribution, one assumes that there exists a

small enough subinterval for which the probability of an event occurring

twice is "negligible". With this assumption one can derive the Poisson

distribution from the Binomial one, given only the information of

expected number of total events in the whole interval.

Let the total number of events in the whole interval be denoted by  . Divide the whole interval into

. Divide the whole interval into  subintervals

subintervals  of equal size, such that

of equal size, such that  (since we are interested in only very small portions of the interval

this assumption is meaningful). This means that the expected number of

events in each of the n subintervals is equal to

(since we are interested in only very small portions of the interval

this assumption is meaningful). This means that the expected number of

events in each of the n subintervals is equal to  .

.

Now we assume that the occurrence of an event in the whole interval can be seen as a sequence of n Bernoulli trials, where the i-th Bernoulli trial corresponds to looking whether an event happens at the subinterval $I_i$ with probability λ/n. The expected number of total events in n such trials would be λ, the expected number of total events in the whole interval. Hence for each subdivision of the interval we have approximated the occurrence of the event as a Bernoulli process of the form B(n, λ/n). As we have noted before we want to consider only very small subintervals. Therefore, we take the limit as n goes to infinity.

In this case the binomial distribution converges to what is known as the Poisson distribution by the Poisson limit theorem.
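The convergence can be checked numerically. The following is a minimal sketch, not a proof: it compares the B(n, λ/n) and Poisson(λ) probabilities for the values of n used in the figure. The function names and the cutoff `k_max = 40` are illustrative choices, and the direct formulas are used only because λ and k are small here.

```python
import math


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ B(n, p); math.comb returns 0 when k > n."""
    return math.comb(n, k) * p**k * (1.0 - p) ** (n - k)


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Pois(lam), direct definition (small k and lam only)."""
    return math.exp(-lam) * lam**k / math.factorial(k)


def max_pmf_gap(n: int, lam: float, k_max: int = 40) -> float:
    """Largest pointwise gap between B(n, lam/n) and Pois(lam) for k = 0..k_max."""
    p = lam / n
    return max(
        abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(k_max + 1)
    )


if __name__ == "__main__":
    lam = 5.0  # same mean as in the figure
    for n in (10, 20, 1000):
        print(f"n = {n:5d}  max |binomial - Poisson| = {max_pmf_gap(n, lam):.6f}")
```

The printed gap shrinks as n grows, which is the behaviour the Poisson limit theorem describes.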

In several of the above examples, such as the number of mutations in a given sequence of DNA, the events being counted are actually the outcomes of discrete trials, and would more precisely be modelled using the binomial distribution, that is

$$X \sim \operatorname{B}(n, p).$$

In such cases n is very large and p is very small (and so the expectation np is of intermediate magnitude). Then the distribution may be approximated by the less cumbersome Poisson distribution

$$X \sim \operatorname{Pois}(np).$$

This approximation is sometimes known as the law of rare events, since each of the n individual Bernoulli events rarely occurs.

The name "law of rare events" may be misleading because the total count of success events in a Poisson process need not be rare if the parameter np is not small. For example, the number of telephone calls to a busy switchboard in one hour follows a Poisson distribution with the events appearing frequent to the operator, but they are rare from the point of view of the average member of the population who is very unlikely to make a call to that switchboard in that hour.

The variance of the binomial distribution is 1 − p times that of the Poisson distribution, so almost equal when p is very small.
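The factor 1 − p follows directly from the two variance formulas. The short worked step below uses the standard variances of the binomial and Poisson distributions; the numerical values in the comment are an illustrative choice, not taken from the text.

```latex
\operatorname{Var}[\mathrm{B}(n,p)] = np(1-p), \qquad
\operatorname{Var}[\mathrm{Pois}(np)] = np, \qquad
\frac{np(1-p)}{np} = 1 - p .
% Example: n = 1000, p = 0.005 gives np = 5 and a variance ratio of 0.995.
```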

The word law is sometimes used as a synonym of probability distribution, and convergence in law means convergence in distribution.

Accordingly, the Poisson distribution is sometimes called the "law of small numbers" because it is the probability distribution of the number of occurrences of an event that happens rarely but has very many opportunities to happen. The Law of Small Numbers is a book by Ladislaus Bortkiewicz about the Poisson distribution, published in 1898.

Poisson point process

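The Poisson distribution arises as the number of points of a Poisson point process located in some finite region. More specifically, if D is some region of a space, for example Euclidean space $\mathbb{R}^d$, for which |D|, the area, volume or, more generally, the Lebesgue measure of the region is finite, and if N(D) denotes the number of points in D, then

$$P(N(D) = k) = \frac{(\lambda |D|)^k e^{-\lambda |D|}}{k!},$$

where λ is the intensity of the process, that is, the expected number of points per unit measure.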

Poisson regression and negative binomial regression

Poisson regression and negative binomial regression are useful for analyses where the dependent (response) variable is the count (0, 1, 2, …) of the number of events or occurrences in an interval.

Other applications in science

In a Poisson process, the number of observed occurrences fluctuates about its mean λ with a standard deviation $\sigma_k = \sqrt{\lambda}$. These fluctuations are denoted as Poisson noise or (particularly in electronics) as shot noise.

The correlation of the mean and standard deviation in counting independent discrete occurrences is useful scientifically. By monitoring how the fluctuations vary with the mean signal, one can estimate the contribution of a single occurrence, even if that contribution is too small to be detected directly. For example, the charge e on an electron can be estimated by correlating the magnitude of an electric current with its shot noise. If N electrons pass a point in a given time t on the average, the mean current is $I = eN/t$; since the current fluctuations should be of the order $\sigma_I = e\sqrt{N}/t$ (i.e., the standard deviation of the Poisson process), the charge e can be estimated from the ratio $t\sigma_I^2/I$.
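The shot-noise estimate can be verified in one line. In the sketch below, I denotes the mean current, σ_I the size of the current fluctuations, N the mean number of electrons passing in time t, and e the electron charge; the only assumption is that the electron count is Poisson distributed, so that its standard deviation is √N.

```latex
I = \frac{eN}{t}, \qquad \sigma_I = \frac{e\sqrt{N}}{t}
\quad\Longrightarrow\quad
\frac{t\,\sigma_I^{2}}{I}
  = t \cdot \frac{e^{2}N}{t^{2}} \cdot \frac{t}{eN}
  = e .
```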

An everyday example is the graininess that appears as photographs are enlarged; the graininess is due to Poisson fluctuations in the number of reduced silver grains, not to the individual grains themselves. By correlating the graininess with the degree of enlargement, one can estimate the contribution of an individual grain (which is otherwise too small to be seen unaided). Many other molecular applications of Poisson noise have been developed, e.g., estimating the number density of receptor molecules in a cell membrane.

In causal set theory the discrete elements of spacetime follow a Poisson distribution in the volume.

Computational methods

The Poisson distribution poses two different tasks for dedicated software libraries: evaluating the distribution f(k; λ), and drawing random numbers according to that distribution.

Evaluating the Poisson distribution

Computing f(k; λ) for given k and λ is a trivial task that can be accomplished by using the standard definition of f(k; λ) in terms of exponential, power, and factorial functions. However, the conventional definition of the Poisson distribution contains two terms that can easily overflow on computers: $\lambda^k$ and $k!$. The fraction of $\lambda^k$ to $k!$ can also produce a rounding error that is very large compared to $e^{-\lambda}$, and therefore give an erroneous result. For numerical stability the Poisson probability mass function should therefore be evaluated as

![{\displaystyle \!f(k;\lambda )=\exp \left[k\ln \lambda -\lambda -\ln \Gamma (k+1)\right],}](https://wikimedia.org/api/rest_v1/media/math/render/svg/83a8a374612dfba5c3e699450970a5b2330925c3)

which is mathematically equivalent but numerically stable. The natural logarithm of the Gamma function can be obtained using the lgamma function in the C standard library (C99 version) or R, the gammaln function in MATLAB or SciPy, or the log_gamma function in Fortran 2008 and later.
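As an illustration, the log-space formula above can be written in Python with `math.lgamma` from the standard library. This is a minimal sketch; the function name `poisson_pmf` and the handling of the edge cases k < 0 and λ = 0 are assumptions made for the example.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Evaluate f(k; lam) = exp(k ln(lam) - lam - ln Gamma(k + 1))."""
    if k < 0:
        return 0.0
    if lam == 0.0:
        # Degenerate case: all probability mass sits at k = 0.
        return 1.0 if k == 0 else 0.0
    # Summing logarithms avoids forming lam**k or k! explicitly,
    # so neither term can overflow for large k or lam.
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


if __name__ == "__main__":
    print(poisson_pmf(3, 2.5))
    # The direct formula would overflow here (1000.0**1000 is not representable).
    print(poisson_pmf(1000, 1000.0))
```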

Some computing languages provide built-in functions to evaluate the Poisson distribution, namely

- R: function dpois(x, lambda);
- Excel: function POISSON(x, mean, cumulative), with a flag to specify the cumulative distribution;
- Mathematica: univariate Poisson distribution as PoissonDistribution[λ], bivariate Poisson distribution as MultivariatePoissonDistribution[$\theta_{12}$, {$\theta_1 - \theta_{12}$, $\theta_2 - \theta_{12}$}].

Random drawing from the Poisson distribution

The less trivial task is to draw random integers from the Poisson distribution with given λ.

Solutions are provided by:

Generating Poisson-distributed random variables

A simple algorithm to generate random Poisson-distributed numbers (pseudo-random number sampling) has been given by Knuth:

    algorithm poisson random number (Knuth):
        init:
            Let L ← e^(−λ), k ← 0 and p ← 1.
        do:
            k ← k + 1.
            Generate uniform random number u in [0,1] and let p ← p × u.
        while p > L.
        return k − 1.

The complexity is linear in the returned value k, which is λ on average. There are many other algorithms to improve this. Some are given in Ahrens & Dieter.
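Knuth's multiplication method can be sketched in Python as follows. The function name `poisson_knuth` and the choice to pass an explicit `random.Random` instance (for reproducibility) are assumptions of this example; the loop mirrors the do-while structure of the pseudocode.

```python
import math
import random


def poisson_knuth(lam: float, rng: random.Random) -> int:
    """Draw one Poisson(lam) variate by multiplying uniforms (Knuth)."""
    limit = math.exp(-lam)  # L in the pseudocode; underflows to 0 for large lam
    k = 0
    p = 1.0
    while True:
        k += 1
        p *= rng.random()  # uniform in [0, 1)
        if p <= limit:  # the do-while loop continues only while p > L
            return k - 1


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    draws = [poisson_knuth(4.0, rng) for _ in range(10)]
    print(draws)
```

Because each iteration consumes one uniform number, the running time grows with the returned value, which matches the linear complexity noted above.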

For large values of λ, the value of $L = e^{-\lambda}$ may be so small that it is hard to represent. This can be solved by a change to the algorithm which uses an additional parameter STEP such that $e^{-\text{STEP}}$ does not underflow:

    algorithm poisson random number (Junhao, based on Knuth):
        init:
            Let λLeft ← λ, k ← 0 and p ← 1.
        do:
            k ← k + 1.
            Generate uniform random number u in (0,1) and let p ← p × u.
            while p < 1 and λLeft > 0:
                if λLeft > STEP:
                    p ← p × e^STEP
                    λLeft ← λLeft − STEP
                else:
                    p ← p × e^λLeft
                    λLeft ← 0
        while p > 1.
        return k − 1.

The choice of STEP depends on the threshold of overflow. For double precision floating point format the threshold is near $e^{700}$, so 500 should be a safe STEP.
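A Python rendering of the stepped variant might look like the sketch below. The function name `poisson_knuth_stepped` and the default `step=500.0` (the value suggested above) are illustrative; the idea is that p is rescaled by factors of e^STEP whenever it drops below 1, so e^(−λ) never has to be represented directly.

```python
import math
import random


def poisson_knuth_stepped(lam: float, rng: random.Random, step: float = 500.0) -> int:
    """Draw one Poisson(lam) variate; usable when exp(-lam) would underflow."""
    lam_left = lam
    k = 0
    p = 1.0
    while True:
        k += 1
        p *= rng.random()
        # Rescale p in chunks of at most `step` until it is back above 1
        # or the whole of lam has been applied.
        while p < 1.0 and lam_left > 0.0:
            if lam_left > step:
                p *= math.exp(step)
                lam_left -= step
            else:
                p *= math.exp(lam_left)
                lam_left = 0.0
        if p <= 1.0:  # the do-while loop continues only while p > 1
            return k - 1


if __name__ == "__main__":
    rng = random.Random(0)
    print([poisson_knuth_stepped(1000.0, rng) for _ in range(5)])
```

The running time is still linear in λ, so this only addresses the underflow problem, not the cost for large λ.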

Other solutions for large values of λ include rejection sampling and using Gaussian approximation.

Inverse transform sampling is simple and efficient for small values of λ, and requires only one uniform random number u per sample. Cumulative probabilities are examined in turn until one exceeds u.

    algorithm Poisson generator based upon the inversion by sequential search:
        init:
            Let x ← 0, p ← e^(−λ), s ← p.
            Generate uniform random number u in [0,1].
        while u > s do:
            x ← x + 1.
            p ← p × λ / x.
            s ← s + p.
        return x.
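The sequential-search inversion can be sketched in Python as shown here. The function name `poisson_inversion`, the optional argument `u` (which lets a caller supply the uniform number, for example when testing), and the two guards against floating-point underflow are assumptions of this example and not part of the pseudocode.

```python
import math
import random
from typing import Optional


def poisson_inversion(lam: float, u: Optional[float] = None) -> int:
    """Draw one Poisson(lam) variate by inverting the CDF with one uniform u."""
    if u is None:
        u = random.random()
    x = 0
    p = math.exp(-lam)  # P(X = 0)
    if p == 0.0:
        # Added guard: exp(-lam) underflowed, so the method does not apply.
        raise ValueError("lam is too large for inversion by sequential search")
    s = p  # running cumulative probability P(X <= x)
    while u > s:
        x += 1
        p *= lam / x  # P(X = x) from P(X = x - 1)
        s += p
        if p == 0.0:
            # Added guard: s can no longer grow, so stop instead of looping forever.
            break
    return x


if __name__ == "__main__":
    print([poisson_inversion(2.0) for _ in range(10)])
```

Supplying `u` explicitly makes the mapping from uniform number to Poisson value easy to inspect: with λ = 2, any u at or below e^(−2) ≈ 0.135 maps to 0.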
