A plot of CPU transistor counts against dates of introduction.

Moore's law is the observation that the number of transistors in a dense integrated circuit doubles about every two years. The observation is named after Gordon Moore, the co-founder of Fairchild Semiconductor and CEO of Intel, whose 1965 paper described a doubling every year in the number of components per integrated circuit, and projected this rate of growth would continue for at least another decade. In 1975, looking forward to the next decade, he revised the forecast to doubling every two years.

The period is often quoted as 18 months because of a prediction by Intel executive David House, which combined the effect of more transistors with the effect of the transistors being faster.

Moore's prediction proved accurate for several decades, and has been used in the semiconductor industry to guide long-term planning and to set targets for research and development.

Advancements in digital electronics are strongly linked to Moore's law: quality-adjusted microprocessor prices, memory capacity, sensors and even the number and size of pixels in digital cameras. Digital electronics has contributed to world economic growth in the late twentieth and early twenty-first centuries.

Moore's law describes a driving force of technological and social change, productivity, and economic growth.

Moore's law is an observation and projection of a historical trend and not a physical or natural law.

Although the rate held steady from 1975 until around 2012, the rate was faster during the first decade. In general, it is not logically sound to extrapolate from the historical growth rate into the indefinite future. For example, the 2010 update to the International Technology Roadmap for Semiconductors predicted that growth would slow around 2013, and in 2015 Gordon Moore foresaw that the rate of progress would reach saturation: "I see Moore's law dying here in the next decade or so."

Intel stated in 2015 that the pace of advancement has slowed, starting at the 22 nm feature width around 2012, and continuing at 14 nm. Brian Krzanich, the former CEO of Intel, announced, "Our cadence today is closer to two and a half years than two." Intel is expected to reach the 10 nm node in 2018, a three-year cadence. Intel also stated in 2017 that hyperscaling would be able to continue the trend of Moore's law and offset the increased cadence by aggressively scaling beyond the typical doubling of transistors.

Krzanich cited Moore's 1975 revision as a precedent for the current deceleration, which results from technical challenges and is "a natural part of the history of Moore's law".

History

Gordon Moore in 2004

In 1959, Douglas Engelbart discussed the projected downscaling of integrated circuit size in the article "Microelectronics, and the Art of Similitude". Engelbart presented his ideas at the 1960 International Solid-State Circuits Conference, where Moore was present in the audience.

For the thirty-fifth anniversary issue of Electronics magazine, which was published on April 19, 1965, Gordon E. Moore, who was working as the director of research and development at Fairchild Semiconductor at the time, was asked to predict what was going to happen in the semiconductor components industry over the next ten years. His response was a brief article entitled "Cramming more components onto integrated circuits".

Within his editorial, he speculated that by 1975 it would be possible to contain as many as 65,000 components on a single quarter-square-inch semiconductor.

The complexity for minimum component costs has increased at a rate of roughly a factor of two per year. Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least 10 years.

His reasoning was a log-linear relationship between device complexity (higher circuit density at reduced cost) and time.
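The log-linear relationship can be illustrated with a short sketch. It assumes a starting point of about 64 components in 1965 (an illustrative value that is not given above) and uses the one-doubling-per-year rate from the quotation; the function name `projected_components` is hypothetical.

```python
import math


def projected_components(start_count: float, start_year: int, year: int,
                         doubling_years: float = 1.0) -> float:
    """Exponential projection: the count doubles every `doubling_years` years."""
    return start_count * 2 ** ((year - start_year) / doubling_years)


# Assumed starting point (illustrative only): about 64 components in 1965.
for year in (1965, 1970, 1975):
    count = projected_components(64, 1965, year)
    # log2(count) rises by 1 per year, a straight line on a semi-log plot.
    print(year, round(count), math.log2(count))
```

Under these assumptions, ten annual doublings from 64 give 65,536, which is consistent with the figure of about 65,000 components for 1975.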

At the 1975 IEEE International Electron Devices Meeting, Moore revised the forecast rate. Semiconductor complexity would continue to double annually until about 1980, after which it would decrease to a rate of doubling approximately every two years. He outlined several contributing factors for this exponential behavior:

- die sizes were increasing at an exponential rate and, as defect densities decreased, chip manufacturers could work with larger areas without losing yield;

- simultaneous evolution to finer minimum dimensions;

- and what Moore called "circuit and device cleverness".

Shortly after 1975, Caltech professor Carver Mead popularized the term "Moore's law".

Despite a popular misconception, Moore is adamant that he did not predict a doubling "every 18 months". Rather, David House, an Intel colleague, had factored in the increasing performance of transistors to conclude that integrated circuits would double in performance every 18 months.

An Osborne Executive portable computer, from 1982, with a Zilog Z80 4 MHz CPU, and a 2007 Apple iPhone with a 412 MHz ARM11 CPU; the Executive weighs 100 times as much, has nearly 500 times the volume, costs approximately 10 times as much (adjusted for inflation), and has about 1/100th the clock frequency of the smartphone.

Moore's law came to be widely accepted as a goal for the industry, and it was cited by competitive semiconductor manufacturers as they strove to increase processing power. Moore viewed his eponymous law as surprising and optimistic: "Moore's law is a violation of Murphy's law. Everything gets better and better." The observation was even seen as a self-fulfilling prophecy. However, the rate of improvement in physical dimensions known as Dennard scaling has slowed in recent years, and in about 2016 the industry shifted from using semiconductor scaling as a driver to focusing more on meeting the needs of major computing applications.

In April 2005, Intel offered US$10,000 to purchase a copy of the original Electronics issue in which Moore's article appeared. An engineer living in the United Kingdom was the first to find a copy and offer it to Intel.

Moore's second law

As the cost of computer power to the consumer falls, the cost for producers to fulfill Moore's law follows an opposite trend: R&D, manufacturing, and test costs have increased steadily with each new generation of chips. Rising manufacturing costs are an important consideration for the sustaining of Moore's law. This has led to the formulation of Moore's second law, also called Rock's law, which is that the capital cost of a semiconductor fab also increases exponentially over time.

Major enabling factors

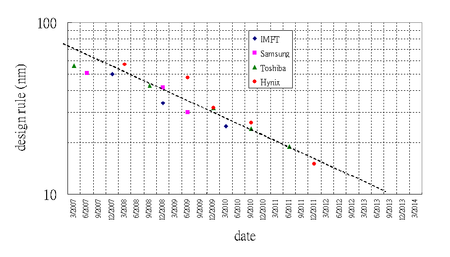

The trend of scaling for NAND flash memory allows doubling of components manufactured in the same wafer area in less than 18 months.

Numerous innovations by scientists and engineers have sustained Moore's law since the beginning of the integrated circuit (IC) era. Some of the key innovations are listed below, as examples of breakthroughs that have advanced integrated circuit technology by more than seven orders of magnitude in less than five decades:

- The foremost contribution, which is the raison d'être for Moore's law, is the invention of the integrated circuit, credited contemporaneously to Jack Kilby at Texas Instruments and Robert Noyce at Fairchild Semiconductor.

- The invention of the complementary metal-oxide-semiconductor (CMOS) process by Frank Wanlass in 1963, and a number of advances in CMOS technology by many workers in the semiconductor field since the work of Wanlass, have enabled the extremely dense and high-performance ICs that the industry makes today.

- The invention of dynamic random-access memory (DRAM) technology by Robert Dennard at IBM in 1967 made it possible to fabricate single-transistor memory cells, and the invention of flash memory by Fujio Masuoka at Toshiba in the 1980s led to low-cost, high-capacity memory in diverse electronic products.

- The invention of chemically amplified photoresist by Hiroshi Ito, C. Grant Willson and J. M. J. Fréchet at IBM c. 1980 yielded a resist that was 5-10 times more sensitive to ultraviolet light. IBM introduced chemically amplified photoresist for DRAM production in the mid-1980s.

- The invention of deep UV excimer laser photolithography by Kanti Jain at IBM c.1980 has enabled the smallest features in ICs to shrink from 800 nanometers in 1990 to as low as 10 nanometers in 2016. Prior to this, excimer lasers had been mainly used as research devices since their development in the 1970s. From a broader scientific perspective, the invention of excimer laser lithography has been highlighted as one of the major milestones in the 50-year history of the laser.

- The interconnect innovations of the late 1990s, including chemical-mechanical polishing or chemical mechanical planarization (CMP), trench isolation, and copper interconnects—although not directly a factor in creating smaller transistors—have enabled improved wafer yield, additional layers of metal wires, closer spacing of devices, and lower electrical resistance.

Computer industry technology road maps predicted in 2001 that Moore's law would continue for several generations of semiconductor chips. Depending on the doubling time used in the calculations, this could mean up to a hundredfold increase in transistor count per chip within a decade. The semiconductor industry technology roadmap used a three-year doubling time for microprocessors, leading to a tenfold increase in a decade. Intel was reported in 2005 as stating that the downsizing of silicon chips with good economics could continue during the following decade, and in 2008 as predicting the trend through 2029.
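The dependence of the ten-year increase on the doubling time can be checked with a few lines of arithmetic. This is a minimal sketch; the helper name `growth_factor` is hypothetical, and the 1.5-year and 2-year doubling times are included only for comparison with the three-year figure from the roadmap.

```python
def growth_factor(years: float, doubling_years: float) -> float:
    """Multiplicative increase after `years` when the count doubles every `doubling_years`."""
    return 2 ** (years / doubling_years)


# Compare several doubling times over one decade.
for doubling_years in (1.5, 2.0, 3.0):
    factor = growth_factor(10, doubling_years)
    print(f"doubling every {doubling_years} years: about {factor:.0f}x in a decade")
```

A three-year doubling time gives roughly a tenfold increase in a decade, while an 18-month doubling time gives roughly a hundredfold increase.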

Recent trends

An atomistic simulation for electron density as gate voltage (Vg) varies in a nanowire MOSFET. The threshold voltage is around 0.45 V. Nanowire MOSFETs lie toward the end of the ITRS road map for scaling devices below 10 nm gate lengths. A FinFET has three sides of the channel covered by gate, while some nanowire transistors have gate-all-around structure, providing better gate control.

One of the key challenges of engineering future nanoscale transistors is the design of gates. As device dimensions shrink, controlling the current flow in the thin channel becomes more difficult. Compared to FinFETs, which have gate dielectric on three sides of the channel, a gate-all-around structure has even better gate control.

- In 2010, researchers at the Tyndall National Institute in Cork, Ireland announced a junctionless transistor. A control gate wrapped around a silicon nanowire can control the passage of electrons without the use of junctions or doping. They claim these may be produced at 10-nanometer scale using existing fabrication techniques.

- In 2011, researchers at the University of Pittsburgh announced the development of a single-electron transistor, 1.5 nanometers in diameter, made out of oxide based materials. Three "wires" converge on a central "island" that can house one or two electrons. Electrons tunnel from one wire to another through the island. Conditions on the third wire result in distinct conductive properties including the ability of the transistor to act as a solid state memory. Nanowire transistors could spur the creation of microscopic computers.

- In 2012, a research team at the University of New South Wales announced the development of the first working transistor consisting of a single atom placed precisely in a silicon crystal (not just picked from a large sample of random transistors). Moore's law predicted this milestone to be reached for ICs in the lab by 2020.

- In 2015, IBM demonstrated 7 nm node chips with silicon-germanium transistors produced using EUVL. The company believes this transistor density would be four times that of current 14 nm chips.

Revolutionary technology advances may help sustain Moore's law through improved performance with or without reduced feature size.

- In 2008, researchers at HP Labs announced a working memristor, a fourth basic passive circuit element whose existence only had been theorized previously. The memristor's unique properties permit the creation of smaller and better-performing electronic devices.

- In 2014, bioengineers at Stanford University developed a circuit modeled on the human brain. Sixteen "Neurocore" chips simulate one million neurons and billions of synaptic connections, claimed to be 9,000 times faster as well as more energy efficient than a typical PC.

- In 2015, Intel and Micron announced 3D XPoint, a non-volatile memory claimed to be significantly faster with similar density compared to NAND. Production scheduled to begin in 2016 was delayed until the second half of 2017.

While physical limits to transistor scaling such as source-to-drain leakage, limited gate metals, and limited options for channel material have been reached, new avenues for continued scaling are open. The most promising of these approaches rely on using the spin state of electrons (spintronics), tunnel junctions, and advanced confinement of channel materials via nanowire geometry. A comprehensive list of available device choices shows that a wide range of device options is open for continuing Moore's law into the next few decades. Spin-based logic and memory options are being developed actively in industrial labs, as well as academic labs.

Alternative materials research

The vast majority of current transistors on ICs are composed principally of doped silicon and its alloys. As silicon is fabricated into single-nanometer transistors, short-channel effects adversely change the material properties that make silicon work as a functional transistor. Below are several non-silicon substitutes in the fabrication of small nanometer transistors.

One proposed material is indium gallium arsenide, or InGaAs. Compared to their silicon and germanium counterparts, InGaAs transistors are more promising for future high-speed, low-power logic applications. Because of intrinsic characteristics of III-V compound semiconductors, quantum well and tunnel effect transistors based on InGaAs have been proposed as alternatives to more traditional MOSFET designs.

- In 2009, Intel announced the development of 80-nanometer InGaAs quantum well transistors. Quantum well devices contain a material sandwiched between two layers of material with a wider band gap. Despite being double the size of leading pure silicon transistors at the time, the company reported that they performed equally well while consuming less power.

- In 2011, researchers at Intel demonstrated 3-D tri-gate InGaAs transistors with improved leakage characteristics compared to traditional planar designs. The company claims that their design achieved the best electrostatics of any III-V compound semiconductor transistor. At the 2015 International Solid-State Circuits Conference, Intel mentioned the use of III-V compounds based on such an architecture for their 7 nanometer node.

- In 2011, researchers at the University of Texas at Austin developed InGaAs tunneling field-effect transistors (TFETs) capable of higher operating currents than previous designs. The first III-V TFET designs were demonstrated in 2009 by a joint team from Cornell University and Pennsylvania State University.

- In 2012, a team in MIT's Microsystems Technology Laboratories developed a 22 nm transistor based on InGaAs which, at the time, was the smallest non-silicon transistor ever built. The team used techniques currently used in silicon device fabrication and aims for better electrical performance and a reduction to 10-nanometer scale.

Research is also showing how biological micro-cells are capable of impressive computational power while being energy efficient.

Scanning probe microscopy image of graphene in its hexagonal lattice structure

Various forms of graphene are being studied for graphene electronics; for example, graphene nanoribbon (GNR) transistors have shown great promise since their appearance in publications in 2008. (Bulk graphene has a band gap of zero and thus cannot be used in transistors because of its constant conductivity, an inability to turn off. The zigzag edges of the nanoribbons introduce localized energy states in the conduction and valence bands and thus a bandgap that enables switching when fabricated as a transistor. As an example, a typical GNR of width of 10 nm has a desirable bandgap energy of 0.4 eV.) More research will need to be performed, however, on sub-50 nm graphene layers, as their resistivity increases and thus electron mobility decreases.

Driving the future via an application focus

Most semiconductor industry forecasters, including Gordon Moore, expect Moore's law will end by around 2025.

In April 2005, Gordon Moore stated in an interview that the projection cannot be sustained indefinitely: "It can't continue forever. The nature of exponentials is that you push them out and eventually disaster happens." He also noted that transistors eventually would reach the limits of miniaturization at atomic levels:

In terms of size [of transistors] you can see that we're approaching the size of atoms which is a fundamental barrier, but it'll be two or three generations before we get that far—but that's as far out as we've ever been able to see. We have another 10 to 20 years before we reach a fundamental limit. By then they'll be able to make bigger chips and have transistor budgets in the billions.

In 2016 the International Technology Roadmap for Semiconductors, after using Moore's law to drive the industry since 1998, produced its final roadmap. It no longer centered its research and development plan on Moore's law. Instead, it outlined what might be called the More than Moore strategy, in which the needs of applications drive chip development, rather than a focus on semiconductor scaling. Application drivers range from smartphones to AI to data centers.

A new initiative for more generalized roadmapping, named the International Roadmap for Devices and Systems (IRDS), was started through IEEE's Rebooting Computing initiative.

Consequences

Technological change is a combination of more and of better technology. A 2011 study in the journal Science showed that the peak of the rate of change of the world's capacity to compute information was in 1998, when the world's technological capacity to compute information on general-purpose computers grew at 88% per year. Since then, technological change clearly has slowed. In recent times, every new year allowed humans to carry out roughly 60% more computation than possibly could have been executed by all existing general-purpose computers in the year before. This still is exponential, but shows that the rate of technological change varies over time.

The primary driving force of economic growth is the growth of productivity, and Moore's law factors into productivity. Moore (1995) expected that "the rate of technological progress is going to be controlled from financial realities". The reverse could and did occur around the late 1990s, however, with economists reporting that "Productivity growth is the key economic indicator of innovation."

An acceleration in the rate of semiconductor progress contributed to a surge in U.S. productivity growth, which reached 3.4% per year in 1997–2004, outpacing the 1.6% per year during both 1972–1996 and 2005–2013.

As economist Richard G. Anderson notes, "Numerous studies have traced the cause of the productivity acceleration to technological innovations in the production of semiconductors that sharply reduced the prices of such components and of the products that contain them (as well as expanding the capabilities of such products)."

Intel transistor gate length trend – transistor scaling has slowed down significantly at advanced (smaller) nodes

An alternative source of improved performance is in microarchitecture techniques exploiting the growth of available transistor count. Out-of-order execution and on-chip caching and prefetching reduce the memory latency bottleneck at the expense of using more transistors and increasing the processor complexity. These increases are described empirically by Pollack's Rule, which states that performance increases due to microarchitecture techniques approximate the square root of the complexity (number of transistors or the area) of a processor.
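Pollack's Rule as stated above can be expressed as a one-line relationship. The sketch below is only an illustration of the square-root rule; the function name `pollack_speedup` and the sample ratios are hypothetical choices, not taken from any cited source.

```python
import math


def pollack_speedup(complexity_ratio: float) -> float:
    """Estimated performance ratio for a given ratio of transistor count (or area)."""
    return math.sqrt(complexity_ratio)


# Sample complexity ratios (illustrative): 1.45x, 2x and 4x the transistors.
for ratio in (1.45, 2.0, 4.0):
    print(f"{ratio:.2f}x transistors -> about {pollack_speedup(ratio):.2f}x performance")
```

Under this rule, quadrupling the transistor budget of a core roughly doubles its performance, and a 45% increase in transistors yields about 20% more performance, which sits at the upper end of the 10–20% range mentioned in this section.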

For years, processor makers delivered increases in clock rates and instruction-level parallelism, so that single-threaded code executed faster on newer processors with no modification. Now, to manage CPU power dissipation, processor makers favor multi-core chip designs, and software has to be written in a multi-threaded manner to take full advantage of the hardware. Many multi-threaded development paradigms introduce overhead, and will not see a linear increase in speed vs number of processors. This is particularly true while accessing shared or dependent resources, due to lock contention. This effect becomes more noticeable as the number of processors increases. There are cases where a roughly 45% increase in processor transistors has translated to roughly 10–20% increase in processing power.

On the other hand, processor manufacturers are taking advantage of the 'extra space' that the transistor shrinkage provides to add specialized processing units to deal with features such as graphics, video, and cryptography. For one example, Intel's Parallel JavaScript extension not only adds support for multiple cores, but also for the other non-general processing features of their chips, as part of the migration in client side scripting toward HTML5.

A negative implication of Moore's law is obsolescence: as technologies continue to rapidly "improve", these improvements may be significant enough to render predecessor technologies obsolete rapidly. In situations in which security and survivability of hardware or data are paramount, or in which resources are limited, rapid obsolescence may pose obstacles to smooth or continued operations.

Because of the toxic materials used in the production of modern computers, obsolescence, if not properly managed, may lead to harmful environmental impacts. On the other hand, obsolescence may sometimes be desirable to a company which can profit immensely from the regular purchase of what is often expensive new equipment instead of retaining one device for a longer period of time. Those in the industry are well aware of this, and may utilize planned obsolescence as a method of increasing profits.

Moore's law has affected the performance of other technologies significantly: Michael S. Malone wrote of a Moore's War following the apparent success of shock and awe in the early days of the Iraq War. Progress in the development of guided weapons depends on electronic technology.

Improvements in circuit density and low-power operation associated with Moore's law also have contributed to the development of technologies including mobile telephones and 3-D printing.

Other formulations and similar observations

Several measures of digital technology are improving at exponential rates related to Moore's law, including the size, cost, density, and speed of components. Moore wrote only about the density of components, "a component being a transistor, resistor, diode or capacitor", at minimum cost.

Transistors per integrated circuit – The most popular formulation is of the doubling of the number of transistors on integrated circuits every two years. At the end of the 1970s, Moore's law became known as the limit for the number of transistors on the most complex chips. The graph at the top shows this trend holds true today.

- As of 2017, the commercially available processor possessing the highest number of transistors is the 48 core Centriq with over 18 billion transistors.

Density at minimum cost per transistor – This is the formulation given in Moore's 1965 paper. It is not just about the density of transistors that can be achieved, but about the density of transistors at which the cost per transistor is the lowest. As more transistors are put on a chip, the cost to make each transistor decreases, but the chance that the chip will not work due to a defect increases. In 1965, Moore examined the density of transistors at which cost is minimized, and observed that, as transistors were made smaller through advances in photolithography, this number would increase at "a rate of roughly a factor of two per year".
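The trade-off between cheaper transistors and falling yield can be illustrated with a toy model. This sketch is not taken from Moore's paper: it assumes a fixed manufacturing cost per chip and an exponential (Poisson-style) yield that falls with component count, and the names `cost_per_component`, `cheapest_density` and `defect_rate` are hypothetical.

```python
import math


def cost_per_component(n: int, chip_cost: float, defect_rate: float) -> float:
    """Cost of one working component when n components share one chip."""
    # Assumed yield model: each added component slightly lowers the chance
    # that the whole chip works.
    chip_yield = math.exp(-defect_rate * n)
    return chip_cost / (n * chip_yield)


def cheapest_density(chip_cost: float, defect_rate: float, max_n: int = 10_000) -> int:
    """Component count per chip that minimizes cost per working component."""
    return min(range(1, max_n + 1),
               key=lambda n: cost_per_component(n, chip_cost, defect_rate))


# Halving the assumed per-component defect rate doubles the optimal count.
print(cheapest_density(1.0, 0.001))
print(cheapest_density(1.0, 0.0005))
```

In this model the minimum-cost component count is the reciprocal of the defect rate, so process improvements that lower defects shift the cost minimum toward denser chips.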

Dennard scaling – This suggests that power requirements are proportional to area (both voltage and current being proportional to length) for transistors. Combined with Moore's law, performance per watt would grow at roughly the same rate as transistor density, doubling every 1–2 years. According to Dennard scaling, transistor dimensions are scaled by 30% (0.7x) every technology generation, thus reducing their area by 50%. This reduces the delay by 30% (0.7x) and therefore increases operating frequency by about 40% (1.4x). Finally, to keep the electric field constant, voltage is reduced by 30%, reducing energy by 65% and power (at 1.4x frequency) by 50%. Therefore, in every technology generation transistor density doubles and the circuit becomes 40% faster, while power consumption (with twice the number of transistors) stays the same.
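The percentages in the Dennard scaling description follow from a single scale factor. The sketch below reproduces the arithmetic under two labeled assumptions that are standard in textbook treatments but not spelled out above: capacitance scales with linear dimension, and switching energy is proportional to capacitance times voltage squared. The function name `dennard_generation` is hypothetical.

```python
def dennard_generation(s: float = 0.7) -> dict:
    """Relative changes after one generation with linear scale factor s."""
    area = s ** 2                      # both dimensions shrink by s
    delay = s                          # gate delay scales with length
    frequency = 1 / delay
    voltage = s                        # keeps the electric field constant
    capacitance = s                    # assumption: C scales with linear dimension
    energy = capacitance * voltage ** 2    # assumption: energy ~ C * V^2
    power_per_transistor = energy * frequency
    density = 1 / area
    return {
        "area": area,
        "frequency": frequency,
        "energy": energy,
        "power_per_transistor": power_per_transistor,
        "density": density,
        "power_per_area": power_per_transistor * density,
    }


for name, value in dennard_generation().items():
    print(f"{name}: {value:.3f}")
```

With s = 0.7 the area is about half, frequency rises by about 40%, energy falls by about 65%, power per transistor halves, and power per unit area stays constant even though density doubles.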

The exponential processor transistor growth predicted by Moore does not always translate into exponentially greater practical CPU performance. Since around 2005–2007, Dennard scaling appears to have broken down, so even though Moore's law continued for several years after that, it has not yielded dividends in improved performance.

The primary reason cited for the breakdown is that at small sizes, current leakage poses greater challenges, and also causes the chip to heat up, which creates a threat of thermal runaway and therefore further increases energy costs.

The breakdown of Dennard scaling prompted a switch among some chip manufacturers to a greater focus on multicore processors, but the gains offered by switching to more cores are lower than the gains that would be achieved had Dennard scaling continued. In another departure from Dennard scaling, Intel microprocessors adopted a non-planar tri-gate FinFET at 22 nm in 2012 that is faster and consumes less power than a conventional planar transistor.

Quality adjusted price of IT equipment – The price of information technology (IT), computers and peripheral equipment, adjusted for quality and inflation, declined 16% per year on average over the five decades from 1959 to 2009.

The pace accelerated, however, to 23% per year in 1995–1999 triggered by faster IT innovation, and later, slowed to 2% per year in 2010–2013.

The rate of quality-adjusted microprocessor price improvement likewise varies, and is not linear on a log scale. Microprocessor price improvement accelerated during the late 1990s, reaching 60% per year (halving every nine months) versus the typical 30% improvement rate (halving every two years) during the years earlier and later. Laptop microprocessors in particular improved 25–35% per year in 2004–2010, and slowed to 15–25% per year in 2010–2013.
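The correspondence between an annual rate of price decline and a halving time can be verified directly. This is a minimal sketch that assumes a constant compound annual decline; the function name `halving_time_years` is hypothetical.

```python
import math


def halving_time_years(annual_decline: float) -> float:
    """Years for a price to halve when it falls by `annual_decline` each year."""
    return math.log(0.5) / math.log(1.0 - annual_decline)


# The two rates quoted above: 60% and 30% per year.
for rate in (0.60, 0.30):
    years = halving_time_years(rate)
    print(f"{rate:.0%} per year -> halves in about {years * 12:.0f} months")
```

A 60% annual decline corresponds to halving in about nine months, and a 30% annual decline to halving in just under two years, matching the figures in the text.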

The number of transistors per chip cannot explain quality-adjusted microprocessor prices fully. Moore's 1995 paper does not limit Moore's law to strict linearity or to transistor count: "The definition of 'Moore's Law' has come to refer to almost anything related to the semiconductor industry that when plotted on semi-log paper approximates a straight line. I hesitate to review its origins and by doing so restrict its definition."

Hard disk drive areal density – A similar observation (sometimes called Kryder's law) was made in 2005 for hard disk drive areal density.

Several decades of rapid progress in areal density advancement slowed significantly around 2010, because of noise related to smaller grain size of the disk media, thermal stability, and writability using available magnetic fields.

Fiber-optic capacity – The number of bits per second that can be sent down an optical fiber increases exponentially, faster than Moore's law. This is known as Keck's law, in honor of Donald Keck.

Network capacity – According to Gerry/Gerald Butters, the former head of Lucent's Optical Networking Group at Bell Labs, there is another version, called Butters' Law of Photonics, a formulation that deliberately parallels Moore's law. Butters' law says that the amount of data coming out of an optical fiber is doubling every nine months. Thus, the cost of transmitting a bit over an optical network decreases by half every nine months. The availability of wavelength-division multiplexing (sometimes called WDM) increased the capacity that could be placed on a single fiber by as much as a factor of 100. Optical networking and dense wavelength-division multiplexing (DWDM) are rapidly bringing down the cost of networking, and further progress seems assured. As a result, the wholesale price of data traffic collapsed in the dot-com bubble. Nielsen's Law says that the bandwidth available to users increases by 50% annually.

Pixels per dollar – Similarly, Barry Hendy of Kodak Australia has plotted pixels per dollar as a basic measure of value for a digital camera, demonstrating the historical linearity (on a log scale) of this market and the opportunity to predict the future trend of digital camera price, LCD and LED screens, and resolution.

The great Moore's law compensator (TGMLC), also known as Wirth's law – This generally is referred to as software bloat and is the principle that successive generations of computer software increase in size and complexity, thereby offsetting the performance gains predicted by Moore's law. In a 2008 article in InfoWorld, Randall C. Kennedy, formerly of Intel, introduces this term using successive versions of Microsoft Office between the year 2000 and 2007 as his premise. Despite the gains in computational performance during this time period according to Moore's law, Office 2007 performed the same task at half the speed on a prototypical year 2007 computer as compared to Office 2000 on a year 2000 computer.

Library expansion – This was calculated in 1945 by Fremont Rider to double in capacity every 16 years, if sufficient space were made available. He advocated replacing bulky, decaying printed works with miniaturized microform analog photographs, which could be duplicated on-demand for library patrons or other institutions. He did not foresee the digital technology that would follow decades later to replace analog microform with digital imaging, storage, and transmission media. Automated, potentially lossless digital technologies allowed vast increases in the rapidity of information growth in an era that now sometimes is called the Information Age.

Carlson curve – This is a term coined by The Economist to describe the biotechnological equivalent of Moore's law, and is named after author Rob Carlson. Carlson accurately predicted that the doubling time of DNA sequencing technologies (measured by cost and performance) would be at least as fast as Moore's law. Carlson Curves illustrate the rapid (in some cases hyperexponential) decreases in cost, and increases in performance, of a variety of technologies, including DNA sequencing, DNA synthesis, and a range of physical and computational tools used in protein expression and in determining protein structures.

Eroom's law – This is a pharmaceutical drug development observation which was deliberately written as Moore's law spelled backwards in order to contrast it with the exponential advancements of other forms of technology (such as transistors) over time. It states that the cost of developing a new drug roughly doubles every nine years.

Experience curve effects – These say that each doubling of the cumulative production of virtually any product or service is accompanied by an approximately constant percentage reduction in the unit cost. The first acknowledged documented qualitative description of this dates from 1885. A power curve was used to describe this phenomenon in a 1936 discussion of the cost of airplanes.
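The power-curve form of the experience curve can be sketched as follows. The 20% reduction per doubling and the first-unit cost of 100 are illustrative assumptions rather than values from any cited study, and the function name `unit_cost` is hypothetical.

```python
import math


def unit_cost(cumulative_units: float, first_unit_cost: float,
              reduction_per_doubling: float) -> float:
    """Unit cost on a power curve with a fixed fractional drop per doubling."""
    # The exponent is negative, so cost falls as cumulative production grows.
    exponent = math.log2(1.0 - reduction_per_doubling)
    return first_unit_cost * cumulative_units ** exponent


# Illustrative parameters: first unit costs 100, each doubling cuts cost by 20%.
for units in (1, 2, 4, 8):
    print(units, round(unit_cost(units, 100.0, 0.20), 2))
```

Each doubling of cumulative output multiplies the unit cost by the same factor (0.8 here), which appears as a straight line when cost and cumulative volume are both plotted on logarithmic axes.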