Thermodynamic temperature is the absolute measure of temperature and is one of the principal parameters of thermodynamics.

Thermodynamic temperature is defined by the third law of thermodynamics, in which the theoretically lowest temperature is the null or zero point. At this point, absolute zero, the particle constituents of matter have minimal motion and can become no colder. In the quantum-mechanical description, matter at absolute zero is in its ground state, which is its state of lowest energy. Thermodynamic temperature is often also called absolute temperature, for two reasons: one, proposed by Kelvin, that it does not depend on the properties of a particular material; two, that it refers to an absolute zero according to the properties of the ideal gas.

The International System of Units specifies a particular scale for thermodynamic temperature. It uses the kelvin scale for measurement and selects the triple point of water at 273.16 K as the fundamental fixing point. Other scales have been in use historically. The Rankine scale, using the degree Fahrenheit as its unit interval, is still in use as part of the English Engineering Units in the United States in some engineering fields. ITS-90 gives a practical means of estimating the thermodynamic temperature to a very high degree of accuracy.

Roughly, the temperature of a body at rest is a measure of the mean of the energy of the translational, vibrational and rotational motions of matter's particle constituents, such as molecules, atoms, and subatomic particles. The full variety of these kinetic motions, along with potential energies of particles, and also occasionally certain other types of particle energy in equilibrium with these, make up the total internal energy of a substance. Internal energy is loosely called the heat energy or thermal energy in conditions when no work is done upon the substance by its surroundings, or by the substance upon the surroundings. Internal energy may be stored in a number of ways within a substance, each way constituting a "degree of freedom". At equilibrium, each degree of freedom will have on average the same energy, $k_\mathrm{B}T/2$, where $k_\mathrm{B}$ is the Boltzmann constant, unless that degree of freedom is in the quantum regime. The internal degrees of freedom (rotation, vibration, etc.) may be in the quantum regime at room temperature, but the translational degrees of freedom will be in the classical regime except at extremely low temperatures (fractions of a kelvin). It may therefore be said that, for most situations, the thermodynamic temperature is specified by the average translational kinetic energy of the particles.

Overview

Temperature is a measure of the random submicroscopic motions and vibrations of the particle constituents of matter. These motions comprise the internal energy of a substance. More specifically, the thermodynamic temperature of any bulk quantity of matter is the measure of the average kinetic energy per classical (i.e., non-quantum) degree of freedom of its constituent particles. "Translational motions" are almost always in the classical regime. Translational motions are ordinary, whole-body movements in three-dimensional space in which particles move about and exchange energy in collisions. Figure 1 below shows translational motion in gases; Figure 4 below shows translational motion in solids. Thermodynamic temperature's null point, absolute zero, is the temperature at which the particle constituents of matter are as close as possible to complete rest; that is, they have minimal motion, retaining only quantum mechanical motion. No kinetic thermal energy remains in a substance at absolute zero.

Throughout the scientific world where measurements are made in SI units, thermodynamic temperature is measured in kelvins (symbol: K). Many engineering fields in the U.S., however, measure thermodynamic temperature using the Rankine scale.

By international agreement, the unit kelvin and its scale are defined by two points: absolute zero, and the triple point of Vienna Standard Mean Ocean Water (water with a specified blend of hydrogen and oxygen isotopes). Absolute zero, the lowest possible temperature, is defined as being precisely 0 K and −273.15 °C. The triple point of water is defined as being precisely 273.16 K and 0.01 °C. This definition does three things:

- It fixes the magnitude of the kelvin unit as being precisely 1 part in 273.16 of the difference between absolute zero and the triple point of water;

- It establishes that one kelvin has precisely the same magnitude as a one-degree increment on the Celsius scale; and

- It establishes the difference between the two scales' null points as being precisely 273.15 kelvins (0 K = −273.15 °C and 273.16 K = 0.01 °C).

Temperatures expressed in kelvins ($T_\mathrm{K}$) are converted to degrees Rankine ($T_{^\circ\mathrm{R}}$) simply by multiplying by 1.8 ($T_{^\circ\mathrm{R}} = 1.8 \times T_\mathrm{K}$). Temperatures expressed in degrees Rankine are converted to kelvins by dividing by 1.8 ($T_\mathrm{K} = T_{^\circ\mathrm{R}} \div 1.8$).
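The conversions described above can be sketched in a few lines of Python. The function and constant names are illustrative choices, not part of any standard library; the numeric factors (1.8 and 273.15) are the ones quoted in the text.

```python
# Minimal sketch of the scale conversions described in the text.
# All names here are hypothetical, chosen only for illustration.

RANKINE_PER_KELVIN = 1.8      # one kelvin spans 1.8 degrees Rankine
CELSIUS_ZERO_IN_KELVIN = 273.15  # 0 degrees Celsius expressed in kelvins


def kelvin_to_rankine(t_kelvin: float) -> float:
    """Convert a thermodynamic temperature from kelvins to degrees Rankine."""
    return t_kelvin * RANKINE_PER_KELVIN


def rankine_to_kelvin(t_rankine: float) -> float:
    """Convert a thermodynamic temperature from degrees Rankine to kelvins."""
    return t_rankine / RANKINE_PER_KELVIN


def kelvin_to_celsius(t_kelvin: float) -> float:
    """Shift from the kelvin scale to the Celsius scale (same unit size)."""
    return t_kelvin - CELSIUS_ZERO_IN_KELVIN


# Triple point of water, 273.16 K, on the other two scales.
print(kelvin_to_rankine(273.16), kelvin_to_celsius(273.16))
```

Because both the kelvin and Rankine scales start at absolute zero, the conversion between them is a pure scale factor; only the Celsius conversion needs an offset.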

Practical realization

Although the kelvin and Celsius scales are defined using absolute zero (0 K) and the triple point of water (273.16 K and 0.01 °C), it is impractical to use this definition at temperatures that are very different from the triple point of water. ITS-90 is then designed to represent the thermodynamic temperature as closely as possible throughout its range. Many different thermometer designs are required to cover the entire range. These include helium vapor pressure thermometers, helium gas thermometers, standard platinum resistance thermometers (known as SPRTs, PRTs or Platinum RTDs) and monochromatic radiation thermometers.

For some types of thermometer the relationship between the property observed (e.g., length of a mercury column) and temperature is close to linear, so for most purposes a linear scale is sufficient, without point-by-point calibration. For others a calibration curve or equation is required. The mercury thermometer, invented before the thermodynamic temperature was understood, originally defined the temperature scale; its linearity made readings correlate well with true temperature, i.e. the "mercury" temperature scale was a close fit to the true scale.

The relationship of temperature, motions, conduction, and thermal energy

Fig. 1 The translational motion of fundamental particles of nature such as atoms and molecules is directly related to temperature. Here, the size of helium atoms relative to their spacing is shown to scale under 1950 atmospheres of pressure. These room-temperature atoms have a certain average speed (slowed down here two trillion-fold). At any given instant, however, a particular helium atom may be moving much faster than average while another may be nearly motionless. Five atoms are colored red to facilitate following their motions.

The nature of kinetic energy, translational motion, and temperature

The thermodynamic temperature is a measure of the average energy of the translational, vibrational and rotational motions of matter's particle constituents (molecules, atoms, and subatomic particles).

The full variety of these kinetic motions, along with potential energies of particles, and also occasionally certain other types of particle energy in equilibrium with these, contribute to the total internal energy (loosely, the thermal energy) of a substance. Thus, internal energy may be stored in a number of ways (degrees of freedom) within a substance. When the degrees of freedom are in the classical regime ("unfrozen") the temperature is very simply related to the average energy of those degrees of freedom at equilibrium. The three translational degrees of freedom are unfrozen except at the very lowest temperatures, and their kinetic energy is simply related to the thermodynamic temperature over the widest range.

The heat capacity, which relates heat input and temperature change, is discussed below.

The relationship of kinetic energy, mass, and velocity is given by the formula $E_\mathrm{k} = \tfrac{1}{2}mv^2$. Accordingly, particles with one unit of mass moving at one unit of velocity have precisely the same kinetic energy, and precisely the same temperature, as those with four times the mass but half the velocity.

Except in the quantum regime at extremely low temperatures, the thermodynamic temperature of any bulk quantity of a substance (a statistically significant quantity of particles) is directly proportional to the mean kinetic energy of a specific kind of particle motion known as translational motion. These simple movements along the x, y, and z axes of space mean the particles move in the three spatial degrees of freedom. The temperature derived from this translational kinetic energy is sometimes referred to as kinetic temperature and is equal to the thermodynamic temperature over a very wide range of temperatures. Since there are three translational degrees of freedom (e.g., motion along the x, y, and z axes), the translational kinetic energy is related to the kinetic temperature by:

$$\bar{E} = \frac{3}{2} k_\mathrm{B} T_\mathrm{k}$$

where:

- $\bar{E}$ is the mean kinetic energy in joules (J) and is pronounced “E bar”

- $k_\mathrm{B}$ = 1.3806504(24)×10⁻²³ J/K is the Boltzmann constant and is pronounced “Kay sub bee”

- $T_\mathrm{k}$ is the kinetic temperature in kelvins (K) and is pronounced “Tee sub kay”
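As a rough illustration of the relation between mean translational kinetic energy and kinetic temperature, Ē = (3/2) k_B T_k (one k_B T/2 for each of the three translational degrees of freedom), the following Python sketch converts in both directions. The function names are hypothetical, and the Boltzmann constant used is the exact value fixed by the 2019 SI revision, which differs slightly in the last digits from the older measured value quoted in the text.

```python
# Sketch of E_bar = (3/2) * k_B * T_k and its inverse.
# Names are illustrative; k_B is the exact SI value (an assumption of this
# sketch, since the article quotes an older measured value).

BOLTZMANN_J_PER_K = 1.380649e-23  # J/K
TRANSLATIONAL_DOF = 3             # motion along x, y and z


def mean_translational_energy(t_kelvin: float) -> float:
    """Mean translational kinetic energy per particle, in joules."""
    if t_kelvin < 0:
        raise ValueError("thermodynamic temperature cannot be negative here")
    return 0.5 * TRANSLATIONAL_DOF * BOLTZMANN_J_PER_K * t_kelvin


def kinetic_temperature(mean_energy_joules: float) -> float:
    """Kinetic temperature, in kelvins, for a given mean translational energy."""
    return mean_energy_joules / (0.5 * TRANSLATIONAL_DOF * BOLTZMANN_J_PER_K)


# Mean translational energy of a particle at room temperature (296 K).
print(mean_translational_energy(296.0))
```

The result depends only on temperature, not on particle mass, which is why light and heavy particles at the same temperature carry the same mean translational energy.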

Fig. 2 The translational motions of helium atoms occur across a range of speeds. Compare the shape of this curve to that of a Planck curve in Fig. 5 below.

While the Boltzmann constant is useful for finding the mean kinetic energy of a particle, it's important to note that even when a substance is isolated and in thermodynamic equilibrium (all parts are at a uniform temperature and no heat is going into or out of it), the translational motions of individual atoms and molecules occur across a wide range of speeds. At any one instant, the proportion of particles moving at a given speed within this range is determined by probability as described by the Maxwell–Boltzmann distribution. The graph shown here in Fig. 2 shows the speed distribution of 5500 K helium atoms. They have a most probable speed of 4.780 km/s. However, a certain proportion of atoms at any given instant are moving faster while others are moving relatively slowly; some are momentarily at a virtual standstill (off the x-axis to the right). This graph uses inverse speed for its x-axis so the shape of the curve can easily be compared to the curves in Figure 5 below. In both graphs, zero on the x-axis represents infinite temperature. Additionally, the x- and y-axes on both graphs are scaled proportionally.
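The most probable speed quoted for 5500 K helium can be checked with the standard Maxwell–Boltzmann characteristic speeds. This is a minimal sketch: the function names are hypothetical, and the helium mass is taken from the standard atomic weight (4.002602 u), which is an assumption about how the figure in the text was computed.

```python
import math

# Characteristic speeds of the Maxwell-Boltzmann distribution.
# Constants are CODATA values; function names are illustrative only.

BOLTZMANN_J_PER_K = 1.380649e-23        # J/K
ATOMIC_MASS_UNIT_KG = 1.66053906660e-27  # kg
HELIUM_MASS_KG = 4.002602 * ATOMIC_MASS_UNIT_KG  # assumed: standard atomic weight


def most_probable_speed(t_kelvin: float, mass_kg: float) -> float:
    """Speed at the peak of the speed distribution: sqrt(2 k T / m)."""
    return math.sqrt(2.0 * BOLTZMANN_J_PER_K * t_kelvin / mass_kg)


def mean_speed(t_kelvin: float, mass_kg: float) -> float:
    """Arithmetic mean speed: sqrt(8 k T / (pi m))."""
    return math.sqrt(8.0 * BOLTZMANN_J_PER_K * t_kelvin / (math.pi * mass_kg))


def rms_speed(t_kelvin: float, mass_kg: float) -> float:
    """Root-mean-square speed: sqrt(3 k T / m)."""
    return math.sqrt(3.0 * BOLTZMANN_J_PER_K * t_kelvin / mass_kg)


# Helium at 5500 K: the most probable speed comes out at about 4.78 km/s.
print(most_probable_speed(5500.0, HELIUM_MASS_KG) / 1000.0, "km/s")
```

The three speeds differ because the distribution is skewed: the most probable speed is the lowest, the mean is slightly higher, and the root-mean-square speed is the highest.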

The high speeds of translational motion

Although very specialized laboratory equipment is required to directly detect translational motions, the resultant collisions by atoms or molecules with small particles suspended in a fluid produce Brownian motion that can be seen with an ordinary microscope. The translational motions of elementary particles are very fast and temperatures close to absolute zero are required to directly observe them. For instance, when scientists at the NIST achieved a record-setting cold temperature of 700 nK (billionths of a kelvin) in 1994, they used optical lattice laser equipment to adiabatically cool caesium atoms. They then turned off the entrapment lasers and directly measured atom velocities of 7 mm per second in order to calculate their temperature. Formulas for calculating the velocity and speed of translational motion are given in the following footnote.

Because of their internal structure and flexibility, molecules can store kinetic energy in internal degrees of freedom which contribute to the heat capacity.

There are other forms of internal energy besides the kinetic energy of translational motion. As can be seen in the animation at right, molecules are complex objects; they are a population of atoms and thermal agitation can strain their internal chemical bonds in three different ways: via rotation, bond length, and bond angle movements. These are all types of internal degrees of freedom. This makes molecules distinct from monatomic substances (consisting of individual atoms) like the noble gases helium and argon, which have only the three translational degrees of freedom. Kinetic energy is stored in molecules' internal degrees of freedom, which gives them an internal temperature. Even though these motions are called internal, the external portions of molecules still move, rather like the jiggling of a stationary water balloon. This permits the two-way exchange of kinetic energy between internal motions and translational motions with each molecular collision. Accordingly, as energy is removed from molecules, both their kinetic temperature (the temperature derived from the kinetic energy of translational motion) and their internal temperature simultaneously diminish in equal proportions. This phenomenon is described by the equipartition theorem, which states that for any bulk quantity of a substance in equilibrium, the kinetic energy of particle motion is evenly distributed among all the active (i.e. unfrozen) degrees of freedom available to the particles. Since the internal temperature of molecules is usually equal to their kinetic temperature, the distinction is usually of interest only in the detailed study of non-local thermodynamic equilibrium (LTE) phenomena such as combustion, the sublimation of solids, and the diffusion of hot gases in a partial vacuum.

The kinetic energy stored internally in molecules causes substances to contain more internal energy at any given temperature and to absorb additional internal energy for a given temperature increase. This is because any kinetic energy that is, at a given instant, bound in internal motions is not at that same instant contributing to the molecules' translational motions. This extra thermal energy simply increases the amount of energy a substance absorbs for a given temperature rise. This property is known as a substance's specific heat capacity.

Different molecules absorb different amounts of thermal energy for each incremental increase in temperature; that is, they have different specific heat capacities. High specific heat capacity arises, in part, because certain substances' molecules possess more internal degrees of freedom than others do. For instance, nitrogen, which is a diatomic molecule, has five active degrees of freedom at room temperature: the three comprising translational motion plus two rotational degrees of freedom internally. Since the two internal degrees of freedom are essentially unfrozen, in accordance with the equipartition theorem, nitrogen has five-thirds the specific heat capacity per mole (a specific number of molecules) of the monatomic gases. Another example is gasoline. Gasoline can absorb a large amount of thermal energy per mole with only a modest temperature change because each molecule comprises an average of 21 atoms and therefore has many internal degrees of freedom. Even larger, more complex molecules can have dozens of internal degrees of freedom.
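The five-thirds ratio for nitrogen follows directly from equipartition if each active degree of freedom contributes R/2 to the molar heat capacity. The sketch below assumes the constant-volume heat capacity of an ideal gas (the text does not say which heat capacity it means), and the function name is hypothetical.

```python
# Equipartition estimate of molar heat capacity at constant volume,
# assuming ideal-gas behaviour and that each active degree of freedom
# contributes R/2. Names are illustrative only.

GAS_CONSTANT_J_PER_MOL_K = 8.314462618  # J/(mol K), CODATA value


def molar_heat_capacity_cv(active_degrees_of_freedom: int) -> float:
    """Constant-volume molar heat capacity, in J/(mol K)."""
    if active_degrees_of_freedom < 0:
        raise ValueError("number of degrees of freedom cannot be negative")
    return 0.5 * active_degrees_of_freedom * GAS_CONSTANT_J_PER_MOL_K


monatomic = molar_heat_capacity_cv(3)  # translation only (helium, argon)
nitrogen = molar_heat_capacity_cv(5)   # translation plus two rotations

print(monatomic, nitrogen, nitrogen / monatomic)
```

This simple count ignores vibrational modes, which for nitrogen are still largely frozen at room temperature; at high temperatures they become active and the estimate would need more degrees of freedom.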

The diffusion of thermal energy: Entropy, phonons, and mobile conduction electrons

Fig. 4 The temperature-induced translational motion of particles in solids takes the form of phonons. Shown here are phonons with identical amplitudes but with wavelengths ranging from 2 to 12 molecules.

Heat conduction is the diffusion of thermal energy from hot parts of a system to cold. A system can be either a single bulk entity or a plurality of discrete bulk entities. The term bulk in this context means a statistically significant quantity of particles (which can be a microscopic amount). Whenever thermal energy diffuses within an isolated system, temperature differences within the system decrease (and entropy increases).

One particular heat conduction mechanism occurs when translational motion, the particle motion underlying temperature, transfers momentum from particle to particle in collisions. In gases, these translational motions are of the nature shown above in Fig. 1. As can be seen in that animation, not only does momentum (heat) diffuse throughout the volume of the gas through serial collisions, but entire molecules or atoms can move forward into new territory, bringing their kinetic energy with them. Consequently, temperature differences equalize throughout gases very quickly, especially for light atoms or molecules; convection speeds this process even more.

Translational motion in solids, however, takes the form of phonons (see Fig. 4 at right). Phonons are constrained, quantized wave packets that travel at a given substance's speed of sound. The manner in which phonons interact within a solid determines a variety of its properties, including its thermal conductivity. In electrically insulating solids, phonon-based heat conduction is usually inefficient and such solids are considered thermal insulators (such as glass, plastic, rubber, ceramic, and rock). This is because in solids, atoms and molecules are locked into place relative to their neighbors and are not free to roam.

Metals, however, are not restricted to only phonon-based heat conduction. Thermal energy conducts through metals extraordinarily quickly because instead of direct molecule-to-molecule collisions, the vast majority of thermal energy is mediated via very light, mobile conduction electrons. This is why there is a near-perfect correlation between metals' thermal conductivity and their electrical conductivity. Conduction electrons imbue metals with their extraordinary conductivity because they are delocalized (i.e., not tied to a specific atom) and behave rather like a sort of quantum gas due to the effects of zero-point energy. Furthermore, electrons are relatively light, with a rest mass only 1/1836 that of a proton. This is about the same ratio as a .22 Short bullet (29 grains or 1.88 g) compared to the rifle that shoots it. As Isaac Newton wrote with his third law of motion,

Law #3: All forces occur in pairs, and these two forces are equal in magnitude and opposite in direction.

However, a bullet accelerates faster than a rifle given an equal force. Since kinetic energy increases as the square of velocity, nearly all the kinetic energy goes into the bullet, not the rifle, even though both experience the same force from the expanding propellant gases. In the same manner, because mobile conduction electrons are much less massive, thermal energy is readily borne by them. Additionally, because they're delocalized and very fast, kinetic thermal energy conducts extremely quickly through metals with abundant conduction electrons.

The diffusion of thermal energy: Black-body radiation

Fig. 5 The spectrum of black-body radiation has the form of a Planck curve. A 5500 K black-body has a peak emittance wavelength of 527 nm. Compare the shape of this curve to that of a Maxwell distribution in Fig. 2 above.

Thermal radiation is a byproduct of the collisions arising from various vibrational motions of atoms. These collisions cause the electrons of the atoms to emit thermal photons (known as black-body radiation). Photons are emitted anytime an electric charge is accelerated (as happens when electron clouds of two atoms collide). Even individual molecules with internal temperatures greater than absolute zero emit black-body radiation from their atoms. In any bulk quantity of a substance at equilibrium, black-body photons are emitted across a range of wavelengths in a spectrum that has a bell curve-like shape called a Planck curve (see graph in Fig. 5 at right). The top of a Planck curve (the peak emittance wavelength) is located in a particular part of the electromagnetic spectrum depending on the temperature of the black-body. Substances at extreme cryogenic temperatures emit at long radio wavelengths whereas extremely hot temperatures produce short gamma rays.
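The dependence of peak emittance wavelength on temperature can be sketched with Wien's displacement law, λ_peak = b / T. The text does not name this law explicitly, so treating it as the source of the quoted wavelengths is an assumption; the function name is hypothetical and b is the CODATA value of the Wien wavelength displacement constant.

```python
# Peak emittance wavelength of an ideal black-body via Wien's displacement
# law. The function name is illustrative; the law is applied here as an
# assumption about how the wavelengths quoted in the article were obtained.

WIEN_DISPLACEMENT_M_K = 2.897771955e-3  # m K, CODATA value


def peak_wavelength_m(t_kelvin: float) -> float:
    """Wavelength (in meters) at which black-body emittance peaks."""
    if t_kelvin <= 0:
        raise ValueError("peak wavelength is unbounded at absolute zero")
    return WIEN_DISPLACEMENT_M_K / t_kelvin


# A 5500 K black-body peaks at about 527 nm; water's triple point
# (273.16 K) peaks in the long-wavelength infrared near 10,608 nm.
for temperature in (5500.0, 273.16):
    print(temperature, "K ->", peak_wavelength_m(temperature) * 1e9, "nm")
```

Because the peak wavelength is inversely proportional to temperature, each thousand-fold increase in temperature shortens the peak wavelength a thousand-fold, which is why the range runs from radio waves to gamma rays.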

Black-body radiation diffuses thermal energy throughout a substance as the photons are absorbed by neighboring atoms, transferring momentum in the process. Black-body photons also easily escape from a substance and can be absorbed by the ambient environment; kinetic energy is lost in the process.

As established by the Stefan–Boltzmann law, the intensity of black-body radiation increases as the fourth power of absolute temperature. Thus, a black-body at 824 K (just short of glowing dull red) emits 60 times the radiant power that it does at 296 K (room temperature). This is why one can so easily feel the radiant heat from hot objects at a distance. At higher temperatures, such as those found in an incandescent lamp, black-body radiation can be the principal mechanism by which thermal energy escapes a system.
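The factor of 60 quoted above is simply the fourth power of the temperature ratio, as this short sketch shows. The function names are hypothetical; the Stefan–Boltzmann constant is the CODATA value, and an ideal black-body (emissivity of 1) is assumed.

```python
# Stefan-Boltzmann law for an ideal black-body (emissivity assumed to be 1).
# Function names are illustrative only.

STEFAN_BOLTZMANN_W_M2_K4 = 5.670374419e-8  # W/(m^2 K^4), CODATA value


def radiant_exitance(t_kelvin: float) -> float:
    """Power radiated per unit area, in W/m^2, at absolute temperature T."""
    return STEFAN_BOLTZMANN_W_M2_K4 * t_kelvin ** 4


def radiant_power_ratio(t_hot_kelvin: float, t_cold_kelvin: float) -> float:
    """Ratio of power radiated at two absolute temperatures."""
    return (t_hot_kelvin / t_cold_kelvin) ** 4


# 824 K versus 296 K: the ratio comes out very close to 60.
print(radiant_power_ratio(824.0, 296.0))
print(radiant_exitance(296.0), "W/m^2 at room temperature")
```

The ratio only works with absolute temperatures; using Celsius values in the same formula would give a meaningless result.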

Table of thermodynamic temperatures

The full range of the thermodynamic temperature scale, from absolute zero to absolute hot, and some notable points between them are shown in the table below.

| | Temperature (kelvin) | Peak emittance wavelength of black-body photons |
|---|---|---|
| Absolute zero (precisely by definition) | 0 K | ∞ |
| Coldest measured temperature | 450 pK | 6,400 kilometers |
| One millikelvin (precisely by definition) | 0.001 K | 2.897 77 meters (Radio, FM band) |
| Cosmic Microwave Background Radiation | 2.725 48(57) K | 1.063 mm (peak wavelength) |
| Water's triple point (precisely by definition) | 273.16 K | 10,608.3 nm (Long wavelength I.R.) |
| Incandescent lamp [A] | 2500 K | 1160 nm (Near infrared) [B] |
| Sun's visible surface [C] | 5778 K | 501.5 nm (Green light) |
| Lightning bolt's channel | 28,000 K | 100 nm (Far Ultraviolet light) |
| Sun's core | 16 MK | 0.18 nm (X-rays) |
| Thermonuclear explosion (peak temperature) | 350 MK | 8.3 × 10⁻³ nm (Gamma rays) |
| Sandia National Labs' Z machine [D] | 2 GK | 1.4 × 10⁻³ nm (Gamma rays) |
| Core of a high-mass star on its last day | 3 GK | 1 × 10⁻³ nm (Gamma rays) |
| Merging binary neutron star system | 350 GK | 8 × 10⁻⁶ nm (Gamma rays) |
| Gamma-ray burst progenitors | 1 TK | 3 × 10⁻⁶ nm (Gamma rays) |
| Relativistic Heavy Ion Collider | 1 TK | 3 × 10⁻⁶ nm (Gamma rays) |
| CERN's proton vs. nucleus collisions | 10 TK | 3 × 10⁻⁷ nm (Gamma rays) |
| Universe 5.391 × 10⁻⁴⁴ s after the Big Bang | 1.417 × 10³² K | 1.616 × 10⁻²⁶ nm (Planck frequency) |

[A] The 2500 K value is approximate.

[B] For a true blackbody (which tungsten filaments are not). Tungsten filaments' emissivity is greater at shorter wavelengths, which makes them appear whiter.

[C] Effective photosphere temperature.

[D] For a true blackbody (which the plasma was not). The Z machine's dominant emission originated from 40 MK electrons (soft x-ray emissions) within the plasma.

The heat of phase changes

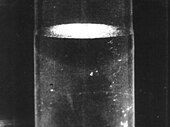

Fig. 6 Ice and water: two phases of the same substance

The kinetic energy of particle motion is just one contributor to the total thermal energy in a substance; another is phase transitions, which are the potential energy of molecular bonds that can form in a substance as it cools (such as during condensing and freezing). The thermal energy required for a phase transition is called latent heat. This phenomenon may more easily be grasped by considering it in the reverse direction: latent heat is the energy required to break chemical bonds (such as during evaporation and melting). Almost everyone is familiar with the effects of phase transitions; for instance, steam at 100 °C can cause severe burns much faster than the 100 °C air from a hair dryer. This occurs because a large amount of latent heat is liberated as steam condenses into liquid water on the skin.

Even though thermal energy is liberated or absorbed during phase transitions, pure chemical elements, compounds, and eutectic alloys exhibit no temperature change whatsoever while they undergo them (see Fig. 7, below right). Consider one particular type of phase transition: melting. When a solid is melting, crystal lattice chemical bonds are being broken apart; the substance is transitioning from what is known as a more ordered state to a less ordered state. In Fig. 7, the melting of ice is shown within the lower left box heading from blue to green.

Fig. 7 Water's temperature does not change during phase transitions as heat flows into or out of it. The total heat capacity of a mole of water in its liquid phase (the green line) is 7.5507 kJ.

At one specific thermodynamic point, the melting point (which is 0 °C across a wide pressure range in the case of water), all the atoms or molecules are, on average, at the maximum energy threshold their chemical bonds can withstand without breaking away from the lattice. Chemical bonds are all-or-nothing forces: they either hold fast, or break; there is no in-between state. Consequently, when a substance is at its melting point, every joule of added thermal energy only breaks the bonds of a specific quantity of its atoms or molecules, converting them into a liquid of precisely the same temperature; no kinetic energy is added to translational motion (which is what gives substances their temperature). The effect is rather like popcorn: at a certain temperature, additional thermal energy can't make the kernels any hotter until the transition (popping) is complete. If the process is reversed (as in the freezing of a liquid), thermal energy must be removed from a substance.

As stated above, the thermal energy required for a phase transition is called latent heat. In the specific cases of melting and freezing, it's called enthalpy of fusion or heat of fusion. If the molecular bonds in a crystal lattice are strong, the heat of fusion can be relatively great, typically in the range of 6 to 30 kJ per mole for water and most of the metallic elements. If the substance is one of the monatomic gases (which have little tendency to form molecular bonds), the heat of fusion is more modest, ranging from 0.021 to 2.3 kJ per mole. Relatively speaking, phase transitions can be truly energetic events. To completely melt ice at 0 °C into water at 0 °C, one must add roughly 80 times the thermal energy that is required to increase the temperature of the same mass of liquid water by one degree Celsius. The metals' ratios are even greater, typically in the range of 400 to 1200 times. And the phase transition of boiling is much more energetic than freezing. For instance, the energy required to completely boil or vaporize water (what is known as enthalpy of vaporization) is roughly 540 times that required for a one-degree increase.
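The ratios of roughly 80 and 540 for water can be reproduced by dividing the molar latent heats by the molar heat capacity of liquid water. The numbers below are approximate handbook values supplied for illustration (they are not given in the text), and the function name is hypothetical.

```python
# How many one-degree temperature steps of liquid water a phase transition
# is "worth". The three constants are approximate handbook values assumed
# for this sketch; they do not come from the article itself.

WATER_MOLAR_HEAT_CAPACITY = 75.3       # J/(mol K), liquid water, approximate
WATER_ENTHALPY_OF_FUSION = 6010.0      # J/mol at 0 degrees Celsius, approximate
WATER_ENTHALPY_OF_VAPORIZATION = 40650.0  # J/mol at 100 degrees Celsius, approximate


def equivalent_one_degree_steps(latent_heat_j_per_mol: float,
                                heat_capacity_j_per_mol_k: float) -> float:
    """Latent heat expressed as a multiple of the energy for a 1 K rise."""
    return latent_heat_j_per_mol / heat_capacity_j_per_mol_k


melting = equivalent_one_degree_steps(WATER_ENTHALPY_OF_FUSION,
                                      WATER_MOLAR_HEAT_CAPACITY)
boiling = equivalent_one_degree_steps(WATER_ENTHALPY_OF_VAPORIZATION,
                                      WATER_MOLAR_HEAT_CAPACITY)
print(melting, boiling)  # close to 80 and 540 respectively
```

Since both quantities are taken per mole of the same substance, the ratio is the same whether it is worked out per mole or per unit mass.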

Water's sizable enthalpy of vaporization is why one's skin can be burned so quickly as steam condenses on it (heading from red to green in Fig. 7 above). In the opposite direction, this is why one's skin feels cool as liquid water on it evaporates (a process that occurs at a sub-ambient wet-bulb temperature that is dependent on relative humidity). Water's highly energetic enthalpy of vaporization is also an important factor underlying why solar pool covers (floating, insulated blankets that cover swimming pools when not in use) are so effective at reducing heating costs: they prevent evaporation. For instance, the evaporation of just 20 mm of water from a 1.29-meter-deep pool chills its water 8.4 degrees Celsius (15.1 °F).
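The pool figure can be reproduced with a simple energy balance, sketched below. It assumes that all the heat of vaporization is drawn from the pool's full initial depth of water, that no heat is exchanged with the air or ground, and that vaporizing water takes roughly 540 times the energy of a one-degree rise, as stated earlier in the text. The function name and parameters are hypothetical.

```python
# Energy-balance sketch of evaporative pool cooling.
# Assumptions (not stated in the article): heat comes only from the pool
# water, spread over its full initial depth; the ratio of 540 is the
# article's figure for vaporization versus a one-degree rise.

VAPORIZATION_TO_ONE_DEGREE_RATIO = 540.0


def evaporative_cooling_celsius(evaporated_depth_mm: float,
                                pool_depth_mm: float,
                                ratio: float = VAPORIZATION_TO_ONE_DEGREE_RATIO) -> float:
    """Temperature drop of the pool, in Celsius degrees."""
    if pool_depth_mm <= 0:
        raise ValueError("pool depth must be positive")
    evaporated_fraction = evaporated_depth_mm / pool_depth_mm
    return evaporated_fraction * ratio


drop_c = evaporative_cooling_celsius(20.0, 1290.0)
drop_f = drop_c * 1.8  # a temperature difference, so no 32-degree offset
print(drop_c, drop_f)  # about 8.4 Celsius degrees, about 15.1 Fahrenheit degrees
```

Depth can stand in for mass here because the pool's surface area cancels out of the ratio.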

Internal energy

The total energy of all translational and internal particle motions, including that of conduction electrons, plus the potential energy of phase changes, plus zero-point energy, constitutes the internal energy of a substance.

Fig. 8 When many of the chemical elements, such as the noble gases and platinum-group metals, freeze to a solid — the most ordered state of matter — their crystal structures have a close-packed arrangement. This yields the greatest possible packing density and the lowest energy state.

Internal energy at absolute zero

As a substance cools, different forms of internal energy and their related effects simultaneously decrease in magnitude: the latent heat of available phase transitions is liberated as a substance changes from a less ordered state to a more ordered state; the translational motions of atoms and molecules diminish (their kinetic temperature decreases); the internal motions of molecules diminish (their internal temperature decreases); conduction electrons (if the substance is an electrical conductor) travel somewhat slower; and black-body radiation's peak emittance wavelength increases (the photons' energy decreases). When the particles of a substance are as close as possible to complete rest and retain only ZPE-induced quantum mechanical motion, the substance is at the temperature of absolute zero (T=0).

Note that whereas absolute zero is the point of zero thermodynamic temperature and is also the point at which the particle constituents of matter have minimal motion, absolute zero is not necessarily the point at which a substance contains zero thermal energy; one must be very precise with what one means by internal energy. Often, all the phase changes that can occur in a substance will have occurred by the time it reaches absolute zero. However, this is not always the case. Notably, T=0 helium remains liquid at room pressure and must be under a pressure of at least 25 bar (2.5 MPa) to crystallize. This is because helium's heat of fusion (the energy required to melt helium ice) is so low (only 21 joules per mole) that the motion-inducing effect of zero-point energy is sufficient to prevent it from freezing at lower pressures. Only if under at least 25 bar (2.5 MPa) of pressure will this latent thermal energy be liberated as helium freezes while approaching absolute zero. A further complication is that many solids change their crystal structure to more compact arrangements at extremely high pressures (up to millions of bars, or hundreds of gigapascals). These are known as solid-solid phase transitions wherein latent heat is liberated as a crystal lattice changes to a more thermodynamically favorable, compact one.

The above complexities make for rather cumbersome blanket statements regarding the internal energy in T=0 substances. Regardless of pressure though, what can be said is that at absolute zero, all solids with a lowest-energy crystal lattice, such as those with a closest-packed arrangement (see Fig. 8), contain minimal internal energy, retaining only that due to the ever-present background of zero-point energy. One can also say that for a given substance at constant pressure, absolute zero is the point of lowest enthalpy (a measure of work potential that takes internal energy, pressure, and volume into consideration). Lastly, it is always true to say that all T=0 substances contain zero kinetic thermal energy.

Practical applications for thermodynamic temperature

Helium-4 is a superfluid at or below 2.17 kelvins (2.17 Celsius degrees above absolute zero).

Thermodynamic temperature is useful not only for scientists; it can also be useful for laypeople in many disciplines involving gases. By expressing variables in absolute terms and applying Gay–Lussac's law of temperature/pressure proportionality, solutions to everyday problems are straightforward; for instance, calculating how a temperature change affects the pressure inside an automobile tire. If the tire has a cold pressure of 200 kPa-gage, then in absolute terms (relative to a vacuum, with atmospheric pressure taken as about 100 kPa), its pressure is 300 kPa-absolute. Room temperature ("cold" in tire terms) is 296 K. If the tire temperature is 20 °C hotter (20 kelvins), the solution is calculated as 316 K / 296 K ≈ 1.068, a 6.8% greater thermodynamic temperature and absolute pressure; that is, a pressure of 320 kPa-absolute, which is 220 kPa-gage.
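The tire calculation can be reproduced with a short Python sketch. It assumes a constant tire volume and an atmospheric pressure of 100 kPa, which is the value implied by the step from 200 kPa-gage to 300 kPa-absolute; the function name `hot_gauge_pressure` is purely illustrative.

```python
ATMOSPHERIC_KPA = 100.0  # assumed ambient pressure, implied by 200 gage -> 300 absolute


def hot_gauge_pressure(cold_gauge_kpa, cold_temp_k, temp_rise_k,
                       atmospheric_kpa=ATMOSPHERIC_KPA):
    """Gauge pressure after warming at constant volume (Gay-Lussac's law)."""
    # Convert to absolute pressure so that pressure is proportional to temperature.
    cold_abs_kpa = cold_gauge_kpa + atmospheric_kpa
    hot_temp_k = cold_temp_k + temp_rise_k
    # Absolute pressure scales with the ratio of thermodynamic temperatures.
    hot_abs_kpa = cold_abs_kpa * hot_temp_k / cold_temp_k
    # Convert back to a gauge reading.
    return hot_abs_kpa - atmospheric_kpa


if __name__ == "__main__":
    ratio = (296.0 + 20.0) / 296.0
    print(f"temperature ratio: {ratio:.4f}")                                    # 1.0676
    print(f"hot gauge pressure: {hot_gauge_pressure(200.0, 296.0, 20.0):.1f}")  # 220.3
```

Performing the same proportion directly on the gauge pressure or on Celsius temperatures would give a wrong answer, which is why both quantities are converted to absolute terms first.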

Definition of thermodynamic temperature

The thermodynamic temperature is defined by the ideal gas law and its consequences. It can also be linked to the second law of thermodynamics. The thermodynamic temperature can be shown to have special properties, and in particular can be seen to be uniquely defined (up to some constant multiplicative factor) by considering the efficiency of idealized heat engines. Thus the ratio T2/T1 of two temperatures T1 and T2 is the same in all absolute scales.
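As a small illustration of that last statement, the following Python sketch compares the ratio of two temperatures on the kelvin and Rankine scales, which differ only by a multiplicative factor of 9/5, with the same ratio taken on the Celsius scale, which has an offset zero. The two sample temperatures are arbitrary and the helper names are hypothetical.

```python
import math


def kelvin_to_rankine(t_k):
    """Rankine is an absolute scale: same zero as kelvin, Fahrenheit-sized degrees."""
    return t_k * 9.0 / 5.0


def kelvin_to_celsius(t_k):
    """Celsius is not an absolute scale: its zero is offset by 273.15 K."""
    return t_k - 273.15


t1_k, t2_k = 296.0, 316.0  # arbitrary sample temperatures in kelvins

ratio_kelvin = t2_k / t1_k
ratio_rankine = kelvin_to_rankine(t2_k) / kelvin_to_rankine(t1_k)
ratio_celsius = kelvin_to_celsius(t2_k) / kelvin_to_celsius(t1_k)

print(math.isclose(ratio_kelvin, ratio_rankine))  # the absolute scales agree
print(math.isclose(ratio_kelvin, ratio_celsius))  # the offset scale does not
```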

Strictly speaking, the temperature of a system is well-defined only if it is at thermal equilibrium.

From a microscopic viewpoint, a material is at thermal equilibrium if the heat exchanged between its individual particles cancels out. There are many possible scales of temperature, derived from a variety of observations of physical phenomena.

Loosely stated, temperature differences dictate the direction of heat flow between two systems such that their combined energy is maximally distributed among their lowest possible states. We call this distribution "entropy". To better understand the relationship between temperature and entropy, consider the relationship between heat, work and temperature illustrated in the Carnot heat engine. The engine converts heat into work by directing a temperature gradient between a higher temperature heat source, TH, and a lower temperature heat sink, TC, through a gas-filled piston. The work done per cycle is equal to the difference between the heat supplied to the engine by TH, qH, and the heat supplied to TC by the engine, qC. The efficiency of the engine is the work divided by the heat put into the system, or

$$\text{Efficiency} = \frac{w_{cy}}{q_H} = \frac{q_H - q_C}{q_H} = 1 - \frac{q_C}{q_H} \qquad (1)$$

where wcy is the work done per cycle. Thus the efficiency depends only on qC/qH.

Carnot's theorem states that all reversible engines operating between the same heat reservoirs are equally efficient.

Thus, any reversible heat engine operating between temperatures T1 and T2 must have the same efficiency; that is to say, the efficiency is a function of the temperatures only:

$$\frac{q_C}{q_H} = f(T_H, T_C) \qquad (2)$$

In addition, a reversible heat engine operating between temperatures T1 and T3 must have the same efficiency as one consisting of two cycles, one between T1 and another (intermediate) temperature T2, and the second between T2 and T3. If this were not the case, then energy (in the form of heat) would be wasted or gained, resulting in different overall efficiencies every time a cycle is split into component cycles; clearly a cycle can be composed of any number of smaller cycles.

With q1, q2 and q3 denoting the heat exchanged at T1, T2 and T3 respectively, we note also that mathematically,

$$f(T_1, T_3) = \frac{q_3}{q_1} = \frac{q_2 q_3}{q_1 q_2} = f(T_1, T_2)\, f(T_2, T_3).$$

But the first function is not a function of T2; therefore the product of the final two functions must result in the removal of T2 as a variable. The only way is therefore to define the function f as follows:

$$f(T_1, T_2) = \frac{g(T_2)}{g(T_1)}$$

and

$$f(T_2, T_3) = \frac{g(T_3)}{g(T_2)}$$

so that

$$f(T_1, T_3) = \frac{g(T_3)}{g(T_1)} = \frac{q_3}{q_1},$$

i.e. the ratio of heat exchanged is a function of the respective temperatures at which they occur. We can choose any monotonic function for our g(T); it is a matter of convenience and convention that we choose g(T) = T. Choosing then one fixed reference temperature (i.e. the triple point of water), we establish the thermodynamic temperature scale.

It is to be noted that such a definition coincides with that of the ideal gas derivation; also it is this definition of the thermodynamic temperature that enables us to represent the Carnot efficiency in terms of TH and TC, and hence derive that the (complete) Carnot cycle is isentropic:

$$\frac{q_C}{q_H} = f(T_H, T_C) = \frac{T_C}{T_H}. \qquad (3)$$

Substituting this back into our first formula for efficiency yields a relationship in terms of temperature:

$$\text{Efficiency} = 1 - \frac{q_C}{q_H} = 1 - \frac{T_C}{T_H}. \qquad (4)$$

Notice that for TC=0 the efficiency is 100% and that efficiency becomes greater than 100% for TC<0, both of which are unrealistic cases. Subtracting the right hand side of Equation 4 from the middle portion and rearranging gives

$$\frac{q_H}{T_H} - \frac{q_C}{T_C} = 0,$$

where the negative sign indicates heat ejected from the system. The generalization of this equation is the Clausius theorem, which suggests the existence of a state function S (i.e., a function which depends only on the state of the system, not on how it reached that state) defined (up to an additive constant) by

$$dS = \frac{dq_{\mathrm{rev}}}{T}, \qquad (5)$$

where the subscript indicates heat transfer in a reversible process. The function S corresponds to the entropy of the system, mentioned previously, and the change of S around any cycle is zero (as is necessary for any state function).
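A numerical sketch may make the bookkeeping concrete. It assumes an ideal reversible engine, for which the heat ratio qC/qH equals TC/TH, and checks that the net entropy change over one cycle vanishes. The reservoir temperatures and heat input are arbitrary illustrative values, and the function names are hypothetical.

```python
def carnot_efficiency(t_hot_k, t_cold_k):
    """Efficiency 1 - T_C/T_H of a reversible engine between two reservoirs."""
    if not 0.0 <= t_cold_k < t_hot_k:
        raise ValueError("require 0 <= T_C < T_H, both in kelvins")
    return 1.0 - t_cold_k / t_hot_k


def reversible_cycle(q_hot_j, t_hot_k, t_cold_k):
    """Return (work, rejected heat, net entropy change) for one reversible cycle."""
    if not 0.0 < t_cold_k < t_hot_k:
        raise ValueError("require 0 < T_C < T_H, both in kelvins")
    # For a reversible engine the heat ratio equals the temperature ratio.
    q_cold_j = q_hot_j * t_cold_k / t_hot_k
    work_j = q_hot_j - q_cold_j
    # Entropy taken in at T_H minus entropy rejected at T_C.
    delta_s = q_hot_j / t_hot_k - q_cold_j / t_cold_k
    return work_j, q_cold_j, delta_s


if __name__ == "__main__":
    print(carnot_efficiency(500.0, 300.0))
    print(reversible_cycle(1000.0, 500.0, 300.0))
```

With a 500 K source, a 300 K sink and 1000 J of heat input, the engine delivers 400 J of work, rejects 600 J, and the two entropy terms (2 J/K each) cancel.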

Equation 5 can be rearranged to get an alternative definition for temperature in terms of entropy and heat (to avoid a logic loop, we should first define entropy through statistical mechanics):

$$T = \frac{dq_{\mathrm{rev}}}{dS}.$$

For a system in which the entropy S is a function S(E) of its energy E, the thermodynamic temperature T is therefore given by

$$\frac{1}{T} = \frac{dS}{dE},$$

so that the reciprocal of the thermodynamic temperature is the rate of increase of entropy with energy.
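To see this relation at work, the sketch below differentiates an entropy function numerically and recovers the temperature. As an assumption not taken from the text above, it uses the energy-dependent part of the entropy of a monatomic ideal gas at fixed volume, S(E) = (3/2) N k ln E + constant, for which E = (3/2) N k T; the particle count of one mole and the function names are likewise illustrative choices.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant in J/K (exact in the SI)
N = 6.02214076e23    # assumed particle count: one mole


def entropy(energy_j):
    """Energy-dependent part of S(E) for a monatomic ideal gas at fixed volume.

    The additive constant is dropped because it does not affect dS/dE.
    """
    return 1.5 * N * K_B * math.log(energy_j)


def temperature_from_entropy(energy_j, rel_step=1e-6):
    """Estimate T from 1/T = dS/dE using a central finite difference."""
    step = energy_j * rel_step
    ds_de = (entropy(energy_j + step) - entropy(energy_j - step)) / (2.0 * step)
    return 1.0 / ds_de


# Internal energy of the gas at 300 K, from E = (3/2) N k T.
energy = 1.5 * N * K_B * 300.0
print(temperature_from_entropy(energy))  # close to 300 K
```

The finite difference stands in for the derivative only to keep the example generic; for this particular S(E) the derivative is 3Nk/(2E) in closed form.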

History

Parmenides in his treatise "On Nature" postulated the existence of primum frigidum, a hypothetical elementary substance source of all cooling or cold in the world.

Coincident with the death of Anders Celsius, the famous botanist Carl Linnaeus (1707–1778) effectively reversed Celsius's scale upon receipt of his first thermometer featuring a scale where zero represented the melting point of ice and 100 represented water's boiling point. The custom-made Linnaeus thermometer, for use in his greenhouses, was made by Daniel Ekström, Sweden's leading maker of scientific instruments at the time. For the next 204 years, the scientific and thermometry communities worldwide referred to this scale as the centigrade scale. Temperatures on the centigrade scale were often reported simply as degrees or, when greater specificity was desired, degrees centigrade. The symbol for temperature values on this scale was °C (in several formats over the years). Because the term centigrade was also the French-language name for a unit of angular measurement (one-hundredth of a right angle) and had a similar connotation in other languages, the term "centesimal degree" was used when very precise, unambiguous language was required by international standards bodies such as the International Bureau of Weights and Measures (Bureau international des poids et mesures, BIPM). The 9th CGPM (General Conference on Weights and Measures; Conférence générale des poids et mesures) and the CIPM (International Committee for Weights and Measures; Comité international des poids et mesures) formally adopted degree Celsius (symbol: °C) in 1948.

In his book Pyrometrie (Berlin: Haude & Spener, 1779) completed four months before his death, Johann Heinrich Lambert (1728–1777), sometimes incorrectly referred to as Joseph Lambert, proposed an absolute temperature scale based on the pressure/temperature relationship of a fixed volume of gas. This is distinct from the volume/temperature relationship of gas under constant pressure that Guillaume Amontons discovered 75 years earlier. Lambert stated that absolute zero was the point where a simple straight-line extrapolation reached zero gas pressure and was equal to −270 °C.

Notwithstanding the work of Guillaume Amontons 85 years earlier, Jacques Alexandre César Charles (1746–1823) is often credited with discovering, but not publishing, that the volume of a gas under constant pressure is proportional to its absolute temperature. The formula he created was V1/T1 = V2/T2.

Joseph Louis Gay-Lussac (1778–1850) published work (acknowledging the unpublished lab notes of Jacques Charles fifteen years earlier) describing how the volume of gas under constant pressure changes linearly with its absolute (thermodynamic) temperature. This behavior is called Charles's Law and is one of the gas laws. His are the first known formulas to use the number 273 for the expansion coefficient of gas relative to the melting point of ice (indicating that absolute zero was equivalent to −273 °C).

William Thomson (1824–1907), also known as Lord Kelvin, wrote in his paper On an Absolute Thermometric Scale of the need for a scale whereby infinite cold (absolute zero) was the scale's null point, and which used the degree Celsius for its unit increment. Like Gay-Lussac, Thomson calculated that absolute zero was equivalent to −273 °C on the air thermometers of the time. This absolute scale is known today as the kelvin thermodynamic temperature scale. It is noteworthy that Thomson's value of −273 was actually derived from 0.00366, which was the accepted expansion coefficient of gas per degree Celsius relative to the ice point. The inverse of −0.00366 expressed to five significant digits is −273.22 °C, which is remarkably close to the true value of −273.15 °C.

William John Macquorn Rankine (1820–1872) proposed a thermodynamic temperature scale similar to William Thomson's but which used the degree Fahrenheit for its unit increment. This absolute scale is known today as the Rankine thermodynamic temperature scale.

Ludwig Boltzmann (1844–1906) made major contributions to thermodynamics through an understanding of the role that particle kinetics and black body radiation played. His name is now attached to several of the formulas used today in thermodynamics.