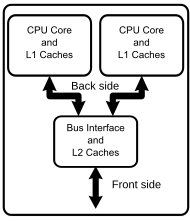

Diagram of a generic dual-core processor with CPU-local level-1 caches and a shared, on-die level-2 cache.

An Intel Core 2 Duo E6750 dual-core processor.

An AMD Athlon X2 6400+ dual-core processor.

A multi-core processor is a computer processor integrated circuit with two or more separate processing units, called cores, each of which reads and executes program instructions, as if the computer had several processors. The instructions are ordinary CPU instructions (such as add, move data, and branch) but the single processor can run instructions on separate cores at the same time, increasing overall speed for programs that support multithreading or other parallel computing techniques. Manufacturers typically integrate the cores onto a single integrated circuit die (known as a chip multiprocessor or CMP) or onto multiple dies in a single chip package. The microprocessors currently used in almost all personal computers are multi-core.

A multi-core processor implements multiprocessing in a single physical package. Designers may couple cores in a multi-core device tightly or loosely. For example, cores may or may not share caches, and they may implement message passing or shared-memory inter-core communication methods. Common network topologies to interconnect cores include bus, ring, two-dimensional mesh, and crossbar. Homogeneous multi-core systems include only identical cores; heterogeneous multi-core systems have cores that are not identical (e.g. big.LITTLE has heterogeneous cores that share the same instruction set, while AMD Accelerated Processing Units have cores that do not even share the same instruction set). Just as with single-processor systems, cores in multi-core systems may implement architectures such as VLIW, superscalar, vector, or multithreading.
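
As a rough illustration of how the choice of interconnect topology affects the distance between cores, the following minimal sketch compares hop counts in a ring and in a two-dimensional mesh. It assumes shortest-path routing, cores numbered from 0, and a row-major layout for the mesh; the function names are hypothetical and chosen only for this example.

```python
def ring_hops(src: int, dst: int, cores: int) -> int:
    """Hops between two cores on a bidirectional ring (shortest direction)."""
    distance = abs(src - dst) % cores
    return min(distance, cores - distance)


def mesh_hops(src: int, dst: int, width: int) -> int:
    """Hops between two cores on a 2D mesh, assuming row-major numbering."""
    src_x, src_y = src % width, src // width
    dst_x, dst_y = dst % width, dst // width
    # Shortest path on a mesh is the Manhattan distance.
    return abs(src_x - dst_x) + abs(src_y - dst_y)


def worst_case(hop_fn, cores: int, *args) -> int:
    """Largest hop count over all pairs of cores for the given topology."""
    return max(hop_fn(a, b, *args) for a in range(cores) for b in range(cores))


if __name__ == "__main__":
    print("16-core ring, worst case:", worst_case(ring_hops, 16, 16))
    print("4x4 mesh, worst case:   ", worst_case(mesh_hops, 16, 4))
```

Under these assumptions, the worst-case distance in a ring grows in proportion to the number of cores, while in a square mesh it grows in proportion to the side length. A bus or crossbar connects any two cores in a single step but is harder to scale to many cores.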

Multi-core processors are widely used across many application domains, including general-purpose, embedded, network, digital signal processing (DSP), and graphics (GPU).

The improvement in performance gained by the use of a multi-core processor depends very much on the software algorithms used and their implementation. In particular, possible gains are limited by the fraction of the software that can run in parallel simultaneously on multiple cores; this effect is described by Amdahl's law. In the best case, so-called embarrassingly parallel problems may realize speedup factors near the number of cores, or even more if the problem is split up enough to fit within each core's cache(s), avoiding use of much slower main-system memory. Most applications, however, are not accelerated so much unless programmers invest a prohibitive amount of effort in re-factoring the whole problem. The parallelization of software is a significant ongoing topic of research. Cointegration of multiprocessor applications provides flexibility in network architecture design. Adaptability within parallel models is an additional feature of systems utilizing these protocols.
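
The limit described by Amdahl's law can be made concrete with a short calculation. The sketch below uses the standard form of the law, speedup = 1 / ((1 - p) + p / n), where p is the fraction of the work that can run in parallel and n is the number of cores. The function name and the sample fractions are illustrative assumptions, and the model ignores cache effects, so it cannot reproduce the better-than-linear case mentioned above.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Upper bound on speedup when only part of a program runs in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is not sped up; the parallel part is divided among cores.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    for p in (0.5, 0.9, 0.99):
        row = ", ".join(
            f"{n} cores: {amdahl_speedup(p, n):.2f}x" for n in (2, 4, 8, 64)
        )
        print(f"parallel fraction {p:.2f} -> {row}")
```

Even with 90% of the work parallelized, the speedup stays below 10 regardless of the number of cores, because the remaining serial 10% dominates the running time.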

Terminology

The terms multi-core and dual-core most commonly refer to some sort of central processing unit (CPU), but are sometimes also applied to digital signal processors (DSP) and system on a chip (SoC). The terms are generally used only to refer to multi-core microprocessors that are manufactured on the same integrated circuit die; separate microprocessor dies in the same package are generally referred to by another name, such as multi-chip module. This article uses the terms "multi-core" and "dual-core" for CPUs manufactured on the same integrated circuit, unless otherwise noted.

In contrast to multi-core systems, the term multi-CPU refers to multiple physically separate processing units (which often contain special circuitry to facilitate communication between each other).

The terms many-core and massively multi-core are sometimes used to describe multi-core architectures with an especially high number of cores (tens to thousands).

Some systems use many soft microprocessor cores placed on a single FPGA. Each "core" can be considered a "semiconductor intellectual property core" as well as a CPU core.

Development

While manufacturing technology improves, reducing the size of individual gates, physical limits of semiconductor-based microelectronics have become a major design concern. These physical limitations can cause significant heat dissipation and data synchronization problems.

Various other methods are used to improve CPU performance. Some instruction-level parallelism (ILP) methods such as superscalar pipelining are suitable for many applications, but are inefficient for others that contain difficult-to-predict code. Many applications are better suited to thread-level parallelism (TLP) methods, and multiple independent CPUs are commonly used to increase a system's overall TLP. A combination of increased available space (due to refined manufacturing processes) and the demand for increased TLP led to the development of multi-core CPUs.

Commercial incentives

Several business motives drive the development of multi-core architectures. For decades, it was possible to improve performance of a CPU by shrinking the area of the integrated circuit (IC), which reduced the cost per device on the IC. Alternatively, for the same circuit area, more transistors could be used in the design, which increased functionality, especially for complex instruction set computing (CISC) architectures. Clock rates also increased by orders of magnitude in the decades of the late 20th century, from several megahertz in the 1980s to several gigahertz in the early 2000s.

As the rate of clock speed improvements slowed, increased use of parallel computing in the form of multi-core processors has been pursued to improve overall processing performance. Multiple cores were used on the same CPU chip, which could then lead to better sales of CPU chips with two or more cores. For example, Intel has produced a 48-core processor for research in cloud computing; each core has an x86 architecture.

Technical factors

Since computer manufacturers have long implemented symmetric multiprocessing (SMP) designs using discrete CPUs, the issues regarding implementing multi-core processor architecture and supporting it with software are well known.

Additionally:

- Using a proven processing-core design without architectural changes reduces design risk significantly.

- For general-purpose processors, much of the motivation for multi-core processors comes from greatly diminished gains in processor performance from increasing the operating frequency. This is due to three primary factors:

  - The memory wall: the increasing gap between processor and memory speeds. This, in effect, pushes for cache sizes to be larger in order to mask the latency of memory. This helps only to the extent that memory bandwidth is not the bottleneck in performance.

  - The ILP wall: the increasing difficulty of finding enough parallelism in a single instruction stream to keep a high-performance single-core processor busy.

  - The power wall: the trend of disproportionately increasing power consumption (and thus heat generation) each time the operating frequency is raised by a given factor. This increase can be mitigated by "shrinking" the processor by using smaller traces for the same logic. The power wall poses manufacturing, system design and deployment problems that have not been justified in the face of the diminished gains in performance due to the memory wall and ILP wall.
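
The power wall can be illustrated with the commonly used first-order model for dynamic power in CMOS logic, P ≈ C · V² · f (switched capacitance, supply voltage, clock frequency). The sketch below additionally assumes that supply voltage has to scale linearly with frequency, which is a simplification that makes power grow roughly with the cube of the frequency. The function names and the normalized units are assumptions made for this example, and static (leakage) power is ignored.

```python
def dynamic_power(capacitance: float, voltage: float, frequency: float) -> float:
    """First-order dynamic power model for CMOS logic: P = C * V^2 * f."""
    return capacitance * voltage ** 2 * frequency


def relative_power(frequency_scale: float, cores: int = 1) -> float:
    """Power relative to one core at nominal voltage and frequency.

    Assumes (as a simplification) that voltage scales linearly with frequency.
    """
    voltage_scale = frequency_scale
    return cores * dynamic_power(1.0, voltage_scale, frequency_scale)


if __name__ == "__main__":
    print("one core at 2x frequency:   ", relative_power(2.0))
    print("two cores at 0.5x frequency:", relative_power(0.5, cores=2))
```

Under these assumptions, doubling the clock of one core costs about eight times the power, whereas two cores at half the clock offer the same aggregate cycle rate for about a quarter of the power, provided the workload can actually use both cores.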

In order to continue delivering regular performance improvements for general-purpose processors, manufacturers such as Intel and AMD have turned to multi-core designs, sacrificing lower manufacturing costs for higher performance in some applications and systems. Multi-core architectures are being developed, but so are the alternatives. An especially strong contender for established markets is the further integration of peripheral functions into the chip.

Advantages

The proximity of multiple CPU cores on the same die allows the cache coherency circuitry to operate at a much higher clock rate than what is possible if the signals have to travel off-chip. Combining equivalent CPUs on a single die significantly improves the performance of cache snoop (also known as bus snooping) operations. Put simply, this means that signals between different CPUs travel shorter distances, and therefore those signals degrade less. These higher-quality signals allow more data to be sent in a given time period, since individual signals can be shorter and do not need to be repeated as often.

Assuming that the die can physically fit into the package, multi-core CPU designs require much less printed circuit board (PCB) space than do multi-chip SMP designs. Also, a dual-core processor uses slightly less power than two coupled single-core processors, principally because of the decreased power required to drive signals external to the chip. Furthermore, the cores share some circuitry, like the L2 cache and the interface to the front-side bus (FSB). In terms of competing technologies for the available silicon die area, multi-core design can make use of proven CPU core library designs and produce a product with lower risk of design error than devising a new wider-core design. Also, adding more cache suffers from diminishing returns.

Multi-core chips also allow higher performance at lower energy. This can be a big factor in mobile devices that operate on batteries. Since each core in a multi-core CPU is generally more energy-efficient, the chip becomes more efficient than one with a single large monolithic core. A challenge in this, however, is the additional overhead of writing parallel code.

Disadvantages

Maximizing the usage of the computing resources provided by multi-core processors requires adjustments both to the operating system (OS) support and to existing application software. Also, the ability of multi-core processors to increase application performance depends on the use of multiple threads within applications.

Integration of a multi-core chip can lower the chip production yields. Multi-core chips are also more difficult to manage thermally than lower-density single-core designs. Intel has partially countered the yield problem by building its quad-core designs from two dual-core dies combined in a single package, so that any two working dual-core dies can be used, as opposed to producing four cores on a single die and requiring all four to work to produce a quad-core CPU. From an architectural point of view, ultimately, single CPU designs may make better use of the silicon surface area than multiprocessing cores, so a development commitment to this architecture may carry the risk of obsolescence. Finally, raw processing power is not the only constraint on system performance. Having two processing cores share the same system bus and memory bandwidth limits the real-world performance advantage. In a 2009 report, Dr Jun Ni showed that if a single core is close to being memory-bandwidth limited, then going to dual-core might give 30% to 70% improvement; if memory bandwidth is not a problem, then a 90% improvement can be expected; however, Amdahl's law makes this claim dubious.

It would be possible for an application that used two CPUs to end up running faster on a single dual-core one if communication between the CPUs was the limiting factor, which would count as more than 100% improvement.

Hardware

Trends

The trend in processor development has been towards an ever-increasing number of cores, as processors with hundreds or even thousands of cores become theoretically possible. In addition, multi-core chips mixed with simultaneous multithreading, memory-on-chip, and special-purpose "heterogeneous" (or asymmetric) cores promise further performance and efficiency gains, especially in processing multimedia, recognition and networking applications. For example, a big.LITTLE design pairs a high-performance core (called 'big') with a low-power core (called 'LITTLE'). There is also a trend towards improving energy-efficiency by focusing on performance-per-watt with advanced fine-grain or ultra fine-grain power management and dynamic voltage and frequency scaling (e.g. in laptop computers and portable media players).

Chips designed from the outset for a large number of cores (rather than having evolved from single core designs) are sometimes referred to as manycore designs, emphasising qualitative differences.

Architecture

The composition and balance of the cores in multi-core architecture show great variety. Some architectures use one core design repeated consistently ("homogeneous"), while others use a mixture of different cores, each optimized for a different, "heterogeneous" role.

How multiple cores are implemented and integrated significantly affects both the developer's programming skills and the consumer's expectations of apps and interactivity versus the device.[14] A device advertised as being octa-core will only have independent cores if advertised as True Octa-core, or similar styling, as opposed to being merely two sets of quad-cores each with fixed clock speeds.

The article "CPU designers debate multi-core future" by Rick Merritt, EE Times 2008, includes these comments:

Chuck Moore [...] suggested computers should be like cellphones, using a variety of specialty cores to run modular software scheduled by a high-level applications programming interface.

[...] Atsushi Hasegawa, a senior chief engineer at Renesas, generally agreed. He suggested the cellphone's use of many specialty cores working in concert is a good model for future multi-core designs.

[...] Anant Agarwal, founder and chief executive of startup Tilera, took the opposing view. He said multi-core chips need to be homogeneous collections of general-purpose cores to keep the software model simple.

Software effects

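An outdated version of an anti-virus application may create a new thread for a scan process, while its GUI thread waits for commands from the user (e.g. cancel the scan). In such cases, a multi-core architecture is of little benefit for the application itself due to the single thread doing all the heavy lifting and the inability to balance the work evenly across multiple cores. Programming truly multithreaded code often requires complex co-ordination of threads and can easily introduce subtle and difficult-to-find bugs due to the interweaving of processing on data shared between threads (see thread-safety).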

Programming truly multithreaded code often requires complex

co-ordination of threads and can easily introduce subtle and

difficult-to-find bugs due to the interweaving of processing on data

shared between threads (see thread-safety).

Consequently, such code is much more difficult to debug than single-threaded code when it breaks. There has been a perceived lack of motivation for writing consumer-level threaded applications because of the relative rarity of consumer-level demand for maximum use of computer hardware. Also, serial tasks like decoding the entropy encoding algorithms used in video codecs are impossible to parallelize because each result generated is used to help create the next result of the entropy decoding algorithm.
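
The kind of shared-data bug described above, and one conventional way to avoid it, can be sketched as follows. The example protects a counter shared between threads with a lock so that each read-modify-write step runs as a unit. The class and function names are hypothetical, and this is only one of several possible synchronization techniques.

```python
import threading


class Counter:
    """A counter that is safe to increment from several threads."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Without the lock, two threads could read the same old value and
        # both write back old + 1, silently losing one of the updates.
        with self._lock:
            self.value += 1


def run_workers(counter: Counter, threads: int = 4, increments: int = 10_000) -> int:
    """Increment the shared counter from several threads and return the total."""

    def work() -> None:
        for _ in range(increments):
            counter.increment()

    workers = [threading.Thread(target=work) for _ in range(threads)]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return counter.value


if __name__ == "__main__":
    print(run_workers(Counter()))
```

The lock serializes access to the shared value, which restores correctness at the cost of some parallelism. Choosing how much state to share, and how finely to lock it, is a large part of what makes multithreaded code harder to write and debug.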

Given the increasing emphasis on multi-core chip design, stemming from the grave thermal and power consumption problems posed by any further significant increase in processor clock speeds, the extent to which software can be multithreaded to take advantage of these new chips is likely to be the single greatest constraint on computer performance in the future. If developers are unable to design software to fully exploit the resources provided by multiple cores, then they will ultimately reach an insurmountable performance ceiling.

The telecommunications market had been one of the first that needed a new design of parallel datapath packet processing because there was a very quick adoption of these multiple-core processors for the datapath and the control plane. These MPUs are going to replace the traditional Network Processors that were based on proprietary microcode or picocode.

Parallel programming techniques can benefit from multiple cores directly. Some existing parallel programming models such as Cilk Plus, OpenMP, OpenHMPP, FastFlow, Skandium, MPI, and Erlang can be used on multi-core platforms. Intel introduced a new abstraction for C++ parallelism called TBB. Other research efforts include the Codeplay Sieve System, Cray's Chapel, Sun's Fortress, and IBM's X10.

Multi-core processing has also affected modern computational software development. Developers programming in newer languages might find that their languages do not support multi-core functionality. This then requires the use of numerical libraries to access code written in languages like C and Fortran, which perform math computations faster than newer languages like C#. Intel's MKL and AMD's ACML are written in these native languages and take advantage of multi-core processing. Balancing the application workload across processors can be problematic, especially if they have different performance characteristics. There are different conceptual models to deal with the problem, for example using a coordination language and program building blocks (programming libraries or higher-order functions). Each block can have a different native implementation for each processor type. Users simply program using these abstractions and an intelligent compiler chooses the best implementation based on the context.

Managing concurrency acquires a central role in developing parallel applications. The basic steps in designing parallel applications are:

- Partitioning: The partitioning stage of a design is intended to expose opportunities for parallel execution. Hence, the focus is on defining a large number of small tasks in order to yield what is termed a fine-grained decomposition of a problem.

- Communication: The tasks generated by a partition are intended to execute concurrently but cannot, in general, execute independently. The computation to be performed in one task will typically require data associated with another task. Data must then be transferred between tasks so as to allow computation to proceed. This information flow is specified in the communication phase of a design.

- Agglomeration: In the third stage, development moves from the abstract toward the concrete. Developers revisit decisions made in the partitioning and communication phases with a view to obtaining an algorithm that will execute efficiently on some class of parallel computer. In particular, developers consider whether it is useful to combine, or agglomerate, tasks identified by the partitioning phase, so as to provide a smaller number of tasks, each of greater size. They also determine whether it is worthwhile to replicate data and computation.

- Mapping: In the fourth and final stage of the design of parallel algorithms, the developers specify where each task is to execute. This mapping problem does not arise on uniprocessors or on shared-memory computers that provide automatic task scheduling.
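
The four stages above can be sketched on a deliberately simple problem, summing the squares of a list of numbers. In this minimal example, each element is a fine-grained task (partitioning), elements are grouped into chunks (agglomeration), the chunks are handed to a pool of workers (mapping), and the partial sums are combined at the end (communication). The function names, the chunk size, and the choice of Python's `concurrent.futures` module are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def agglomerate(data: list, chunk_size: int) -> list:
    """Group fine-grained per-element tasks into larger chunks."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def sum_of_squares(chunk: list) -> int:
    """The work done by one agglomerated task."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data: list, workers: int = 4, chunk_size: int = 1000) -> int:
    chunks = agglomerate(data, chunk_size)
    # Mapping: the executor decides which worker runs which chunk.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = list(pool.map(sum_of_squares, chunks))
    # Communication: partial results flow back and are combined.
    return sum(partial_sums)


if __name__ == "__main__":
    print(parallel_sum_of_squares(list(range(10_000))))
```

A thread pool is used here to keep the sketch self-contained. In CPython, CPU-bound work such as this generally needs `ProcessPoolExecutor`, which offers the same `map` interface, to run on several cores at once.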

On the other hand, on the server side, multi-core processors are ideal because they allow many users to connect to a site simultaneously and have independent threads of execution. This allows for Web servers and application servers that have much better throughput.

Licensing

Vendors may license some software "per processor". This can give rise to ambiguity, because a "processor" may consist either of a single core or of a combination of cores.

- Initially, for some of its enterprise software, Microsoft continued to use a per-socket licensing system. However, for some software such as BizTalk Server 2013, SQL Server 2014, and Windows Server 2016, Microsoft has shifted to per-core licensing.

- Oracle Corporation counts an AMD X2 or an Intel dual-core CPU as a single processor but uses other metrics for other types, especially for processors with more than two cores.

Embedded applications

An embedded system on a plug-in card with processor, memory, power supply, and external interfaces

Embedded computing operates in an area of processor technology distinct from that of "mainstream" PCs. The same technological drives towards multi-core apply here too. Indeed, in many cases the application is a "natural" fit for multi-core technologies, if the task can easily be partitioned between the different processors.

In addition, embedded software is typically developed for a specific hardware release, making issues of software portability, legacy code or supporting independent developers less critical than is the case for PC or enterprise computing. As a result, it is easier for developers to adopt new technologies, and there is a greater variety of multi-core processing architectures and suppliers.

Network processors

As of 2010, multi-core network processors have become mainstream, with companies such as Freescale Semiconductor, Cavium Networks, Wintegra and Broadcom all manufacturing products with eight processors. For the system developer, a key challenge is how to exploit all the cores in these devices to achieve maximum networking performance at the system level, despite the performance limitations inherent in a symmetric multiprocessing (SMP) operating system. Companies such as 6WIND provide portable packet processing software designed so that the networking data plane runs in a fast path environment outside the operating system of the network device.

Digital signal processing

In digital signal processing the same trend applies: Texas Instruments has the three-core TMS320C6488 and four-core TMS320C5441, and Freescale has the four-core MSC8144 and six-core MSC8156 (and both have stated they are working on eight-core successors). Newer entries include the Storm-1 family from Stream Processors, Inc, with 40 and 80 general-purpose ALUs per chip, all programmable in C as a SIMD engine, and Picochip, with 300 processors on a single die, focused on communication applications.

Heterogeneous systems

In heterogeneous computing, where a system uses more than one kind of processor or core, multi-core solutions are becoming more common: the Xilinx Zynq UltraScale+ MPSoC has a quad-core ARM Cortex-A53 and a dual-core ARM Cortex-R5. Software solutions such as OpenAMP are being used to help with inter-processor communication.

Mobile devices may use the ARM big.LITTLE architecture.