Virtual Fixtures – first A.R. system, 1992, U.S. Air Force, WPAFB

Augmented reality (AR) is an interactive experience of a

real-world environment where the objects that reside in the real-world

are "augmented" by computer-generated perceptual information, sometimes

across multiple sensory modalities, including visual, auditory, haptic, somatosensory, and olfactory.

The overlaid sensory information can be constructive (i.e. additive to

the natural environment) or destructive (i.e. masking of the natural

environment) and is seamlessly interwoven with the physical world such

that it is perceived as an immersive aspect of the real environment. In this way, augmented reality alters one's ongoing perception of a real-world environment, whereas virtual reality completely replaces the user's real-world environment with a simulated one. Augmented reality is related to two largely synonymous terms: mixed reality and computer-mediated reality.

The primary value of augmented reality is that it brings

components of the digital world into a person's perception of the real

world, and does so not as a simple display of data, but through the

integration of immersive sensations that are perceived as natural parts

of an environment. The first functional AR systems that provided

immersive mixed reality experiences for users were invented in the early

1990s, starting with the Virtual Fixtures system developed at the U.S. Air Force's Armstrong Laboratory in 1992.

The first commercial augmented reality experiences were used largely

in the entertainment and gaming businesses, but other industries are now also becoming interested in AR's possibilities, for example in knowledge sharing, education, managing the information flood, and organizing distant meetings. Augmented reality is also transforming the

world of education, where content may be accessed by scanning or viewing

an image with a mobile device or by bringing immersive, markerless AR

experiences to the classroom. Another example is an AR helmet for construction workers, which displays information about the construction site.

Augmented reality is used to enhance natural environments or

situations and offer perceptually enriched experiences. With the help of

advanced AR technologies (e.g. adding computer vision and object recognition), the information about the user's surrounding real world becomes interactive and digitally manipulable. Information about the environment and its objects is overlaid on the real world. This information can be virtual or real, e.g. other sensed or measured real information, such as electromagnetic radio waves, overlaid in exact alignment with where it actually is in space.

Augmented reality also has a lot of potential in the gathering and

sharing of tacit knowledge. Augmentation techniques are typically

performed in real time and in semantic context with environmental

elements. Immersive perceptual information is sometimes combined with

supplemental information like scores over a live video feed of a

sporting event. This combines the benefits of both augmented reality

technology and head-up display (HUD) technology.

Technology

A Microsoft HoloLens being worn by a man

Hardware

The hardware components for augmented reality are a processor, a display, sensors, and input devices. Modern mobile computing devices like smartphones and tablet computers contain these elements, which often include a camera and MEMS sensors such as an accelerometer, GPS, and a solid-state compass, making them suitable AR platforms.

Two competing waveguide technologies are used in AR optics: diffractive waveguides and reflective waveguides. Augmented reality systems specialist Karl Guttag has compared the optics of diffractive waveguides against those of reflective waveguides.

Display

Various technologies are used in augmented reality rendering, including optical projection systems, monitors, handheld devices, and display systems worn on the human body.

A head-mounted display (HMD) is a display device worn on the head, for example on a harness or helmet. HMDs place images of both the physical world and virtual objects

over the user's field of view. Modern HMDs often employ sensors for six

degrees of freedom

monitoring that allow the system to align virtual information to the

physical world and adjust accordingly with the user's head movements. HMDs can provide VR users with mobile and collaborative experiences. Specific providers, such as uSens and Gestigon, include gesture controls for full virtual immersion.

In January 2015, Meta launched a project led by Horizons Ventures, Tim Draper, Alexis Ohanian, BOE Optoelectronics and Garry Tan. On February 17, 2016, Meta announced their second-generation product at TED, Meta 2. The Meta 2 head-mounted display headset uses a sensory array for hand interactions and positional tracking,

and offers a field of view of 90 degrees (diagonal) and a display resolution of 2560 × 1440 (20 pixels per degree). Its field of view was considered the largest available at the time.
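The relationship between resolution, field of view, and angular pixel density can be checked with a little arithmetic. The sketch below is only an approximation. It assumes the 2560 × 1440 panel is split evenly between the two eyes and that pixels are spread uniformly across the 90-degree diagonal; neither assumption is stated above, and the function name is illustrative.

```python
import math


def pixels_per_degree(width_px, height_px, diagonal_fov_deg):
    """Approximate angular pixel density along the display diagonal.

    Assumes pixels are spread uniformly over the field of view,
    which ignores lens distortion.
    """
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_fov_deg


# Assumption: the 2560 x 1440 panel is shared, so each eye sees 1280 x 1440.
per_eye_ppd = pixels_per_degree(1280, 1440, 90)

# For comparison: the figure if the whole panel served one 90-degree view.
full_panel_ppd = pixels_per_degree(2560, 1440, 90)

print(f"per eye: {per_eye_ppd:.1f} px/deg, full panel: {full_panel_ppd:.1f} px/deg")
```

Under these assumptions the per-eye figure comes out at roughly 21 pixels per degree, which is close to the quoted value of 20.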

Eyeglasses

Smartglasses for augmented reality

AR displays can be rendered on devices resembling eyeglasses.

Versions include eyewear that employs cameras to intercept the real-world view and re-display its augmented view through the eyepieces, and devices in which the AR imagery is projected through or reflected off the surfaces of the eyewear's lens pieces.

HUD

Headset computer

A head-up display (HUD) is a transparent display that presents data

without requiring users to look away from their usual viewpoints. A

precursor technology to augmented reality, head-up displays were first

developed for pilots in the 1950s, projecting simple flight data into

their line of sight, thereby enabling them to keep their "heads up" and

not look down at the instruments. Near-eye augmented reality devices can

be used as portable head-up displays as they can show data,

information, and images while the user views the real world. Many

definitions of augmented reality only define it as overlaying the

information.

This is basically what a head-up display does; however, practically

speaking, augmented reality is expected to include registration and

tracking between the superimposed perceptions, sensations, information,

data, and images and some portion of the real world.

A number of smartglasses have been launched for augmented reality. Due to encumbered control, smartglasses are primarily designed for micro-interactions, like reading a text message, and are still far from supporting more well-rounded applications of augmented reality.

Contact lenses

Contact lenses that display AR imaging are in development. These bionic contact lenses

might contain the elements for display embedded into the lens including

integrated circuitry, LEDs and an antenna for wireless communication.

The first contact lens display was reported in 1999, with further reports following 11 years later, in 2010-2011.

Another version of contact lenses, in development for the U.S.

military, is designed to function with AR spectacles, allowing soldiers

to focus on close-to-the-eye AR images on the spectacles and distant

real world objects at the same time.

The futuristic short film Sight features contact lens-like augmented reality devices.

Many scientists have been working on contact lenses capable of various technological feats. Samsung has also been working on a contact lens which, when finished, is meant to have a built-in camera on the lens itself. The design is intended to let the wearer blink to control its interface for recording purposes. It is also intended to be linked with the wearer's smartphone to review footage and to control the lens separately. If successful, the lens would feature a camera or sensor inside it. It is said that the sensor could be anything from a light sensor to a temperature sensor.

In augmented reality, a distinction is made between two modes of tracking, known as marker and markerless. Markers are visual cues which trigger the display of the virtual information. A piece of paper with some distinct geometries can be used. The camera recognizes the geometries by identifying specific points in the drawing. Markerless tracking, also called instant tracking, does not use markers. Instead, the user positions the object in the camera view, preferably on a horizontal plane. It uses sensors in mobile devices to accurately detect the real-world environment, such as the locations of walls and points of intersection.
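As a rough illustration of marker-based tracking, the sketch below identifies a square marker once its cells have been sampled into a grid of bits. It compares the observed grid against a small dictionary in all four orientations, which also reveals how the marker is rotated. The dictionary, the 3 × 3 patterns and the function names are hypothetical; real systems also have to locate the marker in the image and correct for perspective first.

```python
# Hypothetical marker dictionary: marker id -> 3x3 bit pattern.
# Each pattern is rotationally asymmetric, so its orientation is unambiguous.
MARKERS = {
    7: [[1, 0, 0],
        [0, 1, 0],
        [1, 1, 0]],
    12: [[1, 1, 0],
         [0, 0, 1],
         [0, 0, 0]],
}


def rotate90(grid):
    """Rotate a square grid of bits 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]


def identify_marker(observed):
    """Return (marker_id, quarter_turns) or None if nothing matches.

    quarter_turns is the number of clockwise quarter turns that had to be
    applied to the observed grid before it matched the stored pattern.
    """
    candidate = [list(row) for row in observed]
    for turns in range(4):
        for marker_id, pattern in MARKERS.items():
            if candidate == pattern:
                return marker_id, turns
        candidate = rotate90(candidate)
    return None


# Marker 7 as seen when the printed sheet is turned on the table.
seen = [[0, 0, 0],
        [0, 1, 1],
        [1, 0, 1]]
print(identify_marker(seen))
```

Trying all four rotations is what lets a single printed pattern provide both an identity and an orientation cue for placing the virtual content.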

Virtual retinal display

A virtual retinal display (VRD) is a personal display device under development at the University of Washington's Human Interface Technology Laboratory under Dr. Thomas A. Furness III. With this technology, a display is scanned directly onto the retina

of a viewer's eye. This results in bright images with high resolution

and high contrast. The viewer sees what appears to be a conventional

display floating in space.

Several tests were done to analyze the safety of the VRD. In one test, patients with partial loss of vision, having either macular degeneration (a disease that degenerates the retina) or keratoconus, were selected to view images using the technology. In the macular degeneration group, 5 out of 8 subjects preferred the VRD images to the CRT or paper images, thought they were better and brighter, and were able to see equal or better resolution levels. The keratoconus patients could all resolve smaller lines in several line tests using the VRD as opposed to their own correction. They also found the VRD images to be easier to view and sharper. As a result of these tests, virtual retinal display is considered a safe technology.

Virtual retinal display creates images that can be seen in

ambient daylight and ambient room light. The VRD is considered a

preferred candidate to use in a surgical display due to its combination

of high resolution and high contrast and brightness. Additional tests

show high potential for VRD to be used as a display technology for

patients that have low vision.

EyeTap

The EyeTap (also known as Generation-2 Glass)

captures rays of light that would otherwise pass through the center of

the lens of the eye of the wearer, and substitutes synthetic

computer-controlled light for each ray of real light.

The Generation-4 Glass

(Laser EyeTap) is similar to the VRD (i.e. it uses a

computer-controlled laser light source) except that it also has infinite

depth of focus and causes the eye itself to, in effect, function as

both a camera and a display by way of exact alignment with the eye and

resynthesis (in laser light) of rays of light entering the eye.

Handheld

A handheld display employs a small display that fits in a user's hand. All handheld AR solutions to date opt for video see-through. Initially handheld AR employed fiducial markers, and later GPS units and MEMS sensors such as digital compasses and six-degrees-of-freedom accelerometer–gyroscopes. Today, SLAM markerless trackers such as PTAM are starting to come into use.

Handheld display AR promises to be the first commercial success for AR

technologies. The two main advantages of handheld AR are the portable

nature of handheld devices and the ubiquitous nature of camera phones.

The disadvantages are the physical constraints of the user having to

hold the handheld device out in front of them at all times, as well as

the distorting effect of classically wide-angled mobile phone cameras

when compared to the real world as viewed through the eye.

Games such as Pokémon Go and Ingress utilize an Image Linked Map (ILM) interface, where approved geotagged locations appear on a stylized map for the user to interact with.

Spatial

Spatial augmented reality (SAR) augments real-world objects and scenes without the use of special displays such as monitors, head-mounted displays

or hand-held devices. SAR makes use of digital projectors to display

graphical information onto physical objects. The key difference in SAR

is that the display is separated from the users of the system. Because

the displays are not associated with each user, SAR scales naturally up

to groups of users, thus allowing for collocated collaboration between

users.

Examples include shader lamps,

mobile projectors, virtual tables, and smart projectors. Shader lamps

mimic and augment reality by projecting imagery onto neutral objects,

providing the opportunity to enhance the object's appearance with

materials of a simple unit - a projector, camera, and sensor.

Other applications include table and wall projections. One

innovation, the Extended Virtual Table, separates the virtual from the

real by including beam-splitter mirrors attached to the ceiling at an adjustable angle.

Virtual showcases, which employ beam-splitter mirrors together with

multiple graphics displays, provide an interactive means of

simultaneously engaging with the virtual and the real. Many more

implementations and configurations make spatial augmented reality

display an increasingly attractive interactive alternative.

A SAR system can display on any number of surfaces of an indoor

setting at once. SAR supports both a graphical visualization and passive

haptic sensation for the end users. Users are able to touch physical objects in a process that provides passive haptic sensation.

Tracking

Modern mobile augmented-reality systems use one or more of the following motion tracking technologies:

digital cameras and/or other optical sensors, accelerometers, GPS, gyroscopes, solid state compasses, RFID.

These technologies offer varying levels of accuracy and precision. The most important quantity to track is the position and orientation of the user's head. Tracking the user's hand(s) or a handheld input device can provide a 6DOF interaction technique.
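A six-degrees-of-freedom (6DOF) pose combines three translational and three rotational components. The following minimal sketch shows one way to represent such a pose and use it to map a point from the tracked head's coordinate frame into world coordinates. The class name, field names, and the yaw-pitch-roll (Z-Y-X) rotation convention are assumptions made for illustration; real trackers often use quaternions instead.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose6DOF:
    # Position of the tracked head in world coordinates (metres).
    x: float
    y: float
    z: float
    # Orientation in radians, applied as R = Rz(yaw) * Ry(pitch) * Rx(roll).
    yaw: float
    pitch: float
    roll: float

    def rotation_matrix(self):
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def to_world(self, point):
        """Map a point from the head frame to the world frame."""
        r = self.rotation_matrix()
        t = (self.x, self.y, self.z)
        return tuple(
            sum(r[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)
        )


# A head at (1, 2, 3) turned a quarter turn about the vertical (z) axis.
head = Pose6DOF(1.0, 2.0, 3.0, yaw=math.pi / 2, pitch=0.0, roll=0.0)
print(head.to_world((1.0, 0.0, 0.0)))
```

Updating the pose every frame and re-applying the transform is what keeps a virtual object fixed in the world while the head moves.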

Networking

Mobile augmented reality applications are gaining popularity due to the wide adoption of mobile and especially wearable devices. However, they often rely on computationally intensive computer vision algorithms with stringent latency requirements. To compensate for the lack of computing power, offloading data processing to a remote machine is often desired. Computation offloading introduces new constraints on applications, especially in terms of latency and bandwidth. Although there is a plethora of real-time multimedia transport protocols, support from the network infrastructure is needed as well.
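The latency and bandwidth trade-off of computation offloading can be made concrete with a simple budget calculation. The sketch below is a simplified model: it counts only the uplink transfer of one frame, the network round trip, and the remote processing time. All function names and numbers are hypothetical.

```python
def transfer_ms(payload_bytes, link_mbps):
    """Time to push a payload over a link, in milliseconds."""
    return payload_bytes * 8 / (link_mbps * 1_000_000) * 1000


def offload_latency_ms(frame_bytes, uplink_mbps, round_trip_ms, remote_compute_ms):
    """Estimated end-to-end latency when a frame is processed remotely."""
    return transfer_ms(frame_bytes, uplink_mbps) + round_trip_ms + remote_compute_ms


def should_offload(local_compute_ms, offloaded_ms, budget_ms):
    """Offload only if it is faster than local processing and fits the budget."""
    return offloaded_ms < local_compute_ms and offloaded_ms <= budget_ms


# Hypothetical numbers: a 50 kB compressed frame, a 20 Mbit/s uplink,
# a 15 ms round trip and 10 ms of processing on the remote machine.
offloaded = offload_latency_ms(50_000, 20, 15, 10)
print(offloaded, should_offload(local_compute_ms=80, offloaded_ms=offloaded, budget_ms=50))
```

In this model a slower uplink or a longer round trip quickly eats into the budget, which is why support from the network infrastructure matters as much as the transport protocol.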

Input devices

Techniques include speech recognition systems that translate a user's spoken words into computer instructions, and gesture recognition

systems that interpret a user's body movements by visual detection or

from sensors embedded in a peripheral device such as a wand, stylus,

pointer, glove or other body wear. Products which are trying to serve as a controller of AR headsets include Wave by Seebright Inc. and Nimble by Intugine Technologies.

Computer

The computer analyzes the sensed visual and other data to synthesize and position augmentations. Computers are responsible for the graphics that go with augmented reality. Augmented reality uses a computer-generated image, which has a striking effect on the way the real world is shown. With the improvement of technology and computers, augmented reality is expected to drastically change our perspective of the real world. According to Time magazine, it is predicted that in about 15–20 years augmented reality and virtual reality will become the primary means of computer interaction.

Computers are improving at a very fast rate, which opens up new ways to improve other technology. As computers progress, augmented reality is expected to become more flexible and more common in society. Computers are the core of augmented reality. The computer receives data from the sensors, which determine the relative position of an object's surface. This becomes an input to the computer, which then outputs to the user by adding something that would otherwise not be there. The computer comprises memory and a processor. It takes the scanned environment, then generates images or a video and puts them on the receiver for the observer to see. The fixed marks on an object's surface are stored in the computer's memory. The computer also draws on its memory to present images realistically to the onlooker. One example of this is the Pepsi Max AR Bus Shelter.

Software and algorithms

A key measure of AR systems is how realistically they integrate augmentations with the real world. The software must derive real-world coordinates, independent of the camera, from camera images. That process is called image registration, and uses different methods of computer vision, mostly related to video tracking. Many computer vision methods of augmented reality are inherited from visual odometry.

Usually those methods consist of two parts. The first stage is to detect interest points, fiducial markers or optical flow in the camera images. This step can use feature detection methods like corner detection, blob detection, edge detection or thresholding, and other image processing methods.
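A very small instance of this first stage is thresholding followed by locating the resulting bright region. The sketch below works on a grayscale image stored as nested lists and returns the centroid of all pixels above a threshold as a single interest point. It is a toy illustration with hypothetical names and data; practical systems use dedicated corner or blob detectors.

```python
def threshold(image, level):
    """Turn a grayscale image (rows of 0-255 values) into a binary mask."""
    return [[1 if pixel >= level else 0 for pixel in row] for row in image]


def blob_centroid(mask):
    """Return the (row, column) centroid of all set pixels, or None."""
    hits = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not hits:
        return None
    count = len(hits)
    return (sum(r for r, _ in hits) / count, sum(c for _, c in hits) / count)


# Hypothetical 4x5 frame with one bright 2x2 patch.
frame = [
    [10, 12, 11, 9, 10],
    [11, 10, 240, 250, 12],
    [9, 13, 245, 255, 11],
    [10, 11, 12, 10, 9],
]
mask = threshold(frame, 128)
print(blob_centroid(mask))
```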

The second stage restores a real-world coordinate system from the data obtained in the first stage. Some methods assume objects with known geometry (or fiducial markers) are present in the scene. In some of those cases the 3D structure of the scene should be calculated beforehand. If part of the scene is unknown, simultaneous localization and mapping (SLAM) can map relative positions. If no information about scene geometry is available, structure from motion methods like bundle adjustment are used. Mathematical methods used in the second stage include projective (epipolar) geometry, geometric algebra, rotation representation with the exponential map, Kalman and particle filters, nonlinear optimization, and robust statistics.
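The projective geometry underlying this stage can be illustrated with the basic pinhole camera model, which relates a 3D point in camera coordinates to a pixel position. Registration methods effectively invert this relationship using many point correspondences. The sketch below assumes an ideal camera without lens distortion; the intrinsic parameters shown are made-up values, not those of any particular device.

```python
def project_point(point_cam, fx, fy, cx, cy):
    """Project a 3D point in camera coordinates to pixel coordinates.

    fx, fy are focal lengths in pixels and (cx, cy) is the principal point.
    Returns None for points at or behind the camera plane.
    """
    x, y, z = point_cam
    if z <= 0:
        return None
    u = fx * x / z + cx
    v = fy * y / z + cy
    return (u, v)


# Hypothetical intrinsics for a 640x480 camera.
FX, FY, CX, CY = 800.0, 800.0, 320.0, 240.0

# A virtual object anchored 2 m in front of the camera, slightly off-axis.
print(project_point((0.5, -0.25, 2.0), FX, FY, CX, CY))
```

Drawing an augmentation at the returned pixel position each frame, with the point first transformed by the current camera pose, is what makes it appear attached to the scene.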

Augmented Reality Markup Language (ARML) is a data standard developed within the Open Geospatial Consortium (OGC), which consists of XML

grammar to describe the location and appearance of virtual objects in

the scene, as well as ECMAScript bindings to allow dynamic access to

properties of virtual objects.

To enable rapid development of augmented reality applications, some software development kits (SDKs) have emerged.

Development

The implementation of augmented reality in consumer products requires considering the design of the applications and the related constraints of the technology platform. Since AR systems rely heavily on the immersion of the user and the interaction between the user and the system, design can facilitate the adoption of virtuality. For most augmented reality systems, a similar design guideline can be followed. The following lists some considerations for designing augmented reality applications:

Environmental/context design

Context design focuses on the end user's physical surroundings, spatial space, and accessibility, all of which may play a role when using the AR system. Designers should be aware of the possible physical scenarios the end user may be in, such as:

- Public, in which the user uses their whole body to interact with the software

- Personal, in which the user uses a smartphone in a public space

- Intimate, in which the user is sitting with a desktop and is not really moving

- Private, in which the user is wearing a wearable device.

By evaluating each physical scenario, potential safety hazards can be avoided and changes can be made to further improve the end user's immersion. UX designers will have to define user journeys for the relevant physical scenarios and define how the interface will react to each.

Especially in AR systems, it is vital to also consider the spatial space and the surrounding elements that change the effectiveness of the AR technology. Environmental elements such as lighting and sound can prevent the sensors of AR devices from detecting necessary data and ruin the immersion of the end user.

Another aspect of context design involves the design of the system's functionality and its ability to accommodate user preferences. While accessibility tools are common in basic application design, some consideration should be given to time-limited prompts (to prevent unintentional operations), audio cues, and overall engagement time. It is important to note that in some situations, the application's functionality may hinder the user's ability. For example, applications that are used for driving should reduce the amount of user interaction and use audio cues instead.

Interaction design

Interaction design in augmented reality technology centers on the user's engagement with the end product to improve the overall user experience and enjoyment. The purpose of interaction design is to avoid alienating or confusing the user by organizing the information presented. Since user interaction relies on the user's input, designers must make system controls easy to understand and accessible. A common technique to improve usability for augmented reality applications is to discover the frequently accessed areas of the device's touch display and design the application to match those areas of control. It is also important to structure the user journey maps and the flow of information presented, which reduces the system's overall cognitive load and greatly improves the learning curve of the application.

In interaction design, it is important for developers to utilize augmented reality technology that complements the system's function or purpose. For instance, the use of exciting AR filters and the design of the unique sharing platform in Snapchat enable users to enhance their social interactions. In other applications that require users to understand the focus and intent, designers can employ a reticle or raycast from the device. Moreover, augmented reality developers may find it appropriate to have digital elements scale or react to the direction of the camera and the context of objects that are detected.
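A raycast from the device can be sketched as a ray-plane intersection: a ray starts at the camera, follows the viewing direction, and the point where it meets a detected surface is where a reticle would be drawn. The example below is a minimal geometric sketch with hypothetical names. It assumes the surface is an infinite plane, whereas AR frameworks test against bounded detected planes or meshes.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def raycast_to_plane(origin, direction, plane_point, plane_normal, eps=1e-9):
    """Return the point where a ray hits a plane, or None if it misses.

    The ray is origin + t * direction for t >= 0. The plane is given by any
    point on it and its normal vector.
    """
    denom = dot(direction, plane_normal)
    if abs(denom) < eps:
        return None  # Ray runs parallel to the plane.
    offset = [p - o for p, o in zip(plane_point, origin)]
    t = dot(offset, plane_normal) / denom
    if t < 0:
        return None  # Plane lies behind the device.
    return tuple(o + t * d for o, d in zip(origin, direction))


# Device held 1.5 m above a floor at y = 0, looking forward and down.
hit = raycast_to_plane(
    origin=(0.0, 1.5, 0.0),
    direction=(0.0, -1.0, 1.0),
    plane_point=(0.0, 0.0, 0.0),
    plane_normal=(0.0, 1.0, 0.0),
)
print(hit)
```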

Augmented reality technology makes it possible to take advantage of 3D space. This means that a user can potentially access multiple copies of 2D interfaces within a single AR application.

Visual design

In general, visual design is the appearance of the developing application that engages the user. To improve the graphic interface elements and user interaction, developers may use visual cues to inform the user which UI elements are designed to be interacted with and how to interact with them. Since navigating in an AR application may appear difficult and seem frustrating, visual cue design can make interactions seem more natural.

In some augmented reality applications that use a 2D device as an interactive surface, the 2D control environment does not translate well to 3D space, making users hesitant to explore their surroundings. To solve this issue, designers should apply visual cues to assist and encourage users to explore their surroundings.

It is important to note the two main types of objects in AR when developing applications: 3D volumetric objects that can be manipulated and that realistically interact with light and shadow; and animated media imagery such as images and videos, which are mostly traditional 2D media rendered in a new context for augmented reality.

When virtual objects are projected onto a real environment, it is

challenging for augmented reality application designers to ensure a

perfectly seamless integration relative to the real-world environment,

especially with 2D objects. As such, designers can add weight to

objects, use depth maps, and choose different material properties that highlight the object's presence in the real world. Another visual design technique is to use different lighting techniques or cast shadows to improve overall depth judgment. For instance, a common lighting technique is simply placing a light source overhead at the 12 o’clock position, to create shadows upon virtual objects.

Possible applications

Augmented reality has been explored for many applications, from gaming and entertainment to medicine, education and business. Example application areas described below include archaeology, architecture, commerce and education. Some of the earliest cited examples range from augmented reality used to support surgery, by providing virtual overlays to guide medical practitioners, to AR content for astronomy and welding.

Literature

An example of an AR code containing a QR code

The first description of AR as it is known today was in Virtual Light, the 1994 novel by William Gibson. In 2011, AR was blended with poetry by ni ka from Sekai Camera in Tokyo, Japan. The prose of these AR poems comes from Paul Celan, "Die Niemandsrose", expressing the aftermath of the 2011 Tōhoku earthquake and tsunami.

Archaeology

AR has been used to aid archaeological research. By augmenting archaeological features onto the modern landscape, AR allows archaeologists to formulate possible site configurations from extant structures. Computer-generated models of ruins, buildings, landscapes or even ancient people have been recycled into early archaeological AR applications.

For example, implementing a system like VITA (Visual Interaction Tool for Archaeology) allows users to imagine and investigate instant excavation results without leaving their home. Each user can collaborate by mutually "navigating, searching, and viewing data." Hrvoje Benko, a researcher in the computer science department at Columbia University, points out that these particular systems and others like them can provide "3D panoramic images and 3D models of the site itself at different excavation stages" all the while organizing much of the data in a collaborative way that is easy to use. Collaborative AR systems supply multimodal interactions that combine the real world with virtual images of both environments.

AR has also recently been adopted in underwater archaeology to efficiently support and facilitate the manipulation of archaeological artefacts.

Architecture

AR can aid in visualizing building projects. Computer-generated images of a structure can be superimposed onto a real-life local view of a property before the physical building is constructed there; this was demonstrated publicly by Trimble Navigation in 2004. AR can also be employed within an architect's workspace, rendering animated 3D visualizations of their 2D drawings. Architecture sight-seeing can be enhanced with AR applications, allowing users viewing a building's exterior to virtually see through its walls, viewing its interior objects and layout.

With the continual improvements to GPS accuracy, businesses are able to use augmented reality to visualize georeferenced models of construction sites, underground structures, cables and pipes using mobile devices.
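Placing a georeferenced model in the camera view requires converting its latitude and longitude into metric offsets from the device. The sketch below uses a flat-earth (equirectangular) approximation around a reference point, which is reasonable only over short distances. The function name, the coordinates and the use of a mean Earth radius are illustrative assumptions. A production system would use a proper geodetic library and must also account for GPS error.

```python
import math

EARTH_RADIUS_M = 6_371_000  # Mean Earth radius; an approximation.


def geodetic_to_local_en(lat_deg, lon_deg, ref_lat_deg, ref_lon_deg):
    """Approximate east/north offsets in metres from a reference point.

    Uses an equirectangular approximation, so it is only suitable for
    offsets of up to a few kilometres.
    """
    d_lat = math.radians(lat_deg - ref_lat_deg)
    d_lon = math.radians(lon_deg - ref_lon_deg)
    east = EARTH_RADIUS_M * d_lon * math.cos(math.radians(ref_lat_deg))
    north = EARTH_RADIUS_M * d_lat
    return east, north


# Hypothetical buried pipe joint, 0.001 degrees north and east of the device.
east, north = geodetic_to_local_en(60.001, 10.001, 60.0, 10.0)
print(f"east {east:.1f} m, north {north:.1f} m")
```

The same angular offset covers fewer metres east-west than north-south at high latitudes, which is why the longitude term is scaled by the cosine of the latitude.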

Augmented reality is applied to present new projects, to solve on-site

construction challenges, and to enhance promotional materials. Examples include the Daqri

Smart Helmet, an Android-powered hard hat used to create augmented

reality for the industrial worker, including visual instructions,

real-time alerts, and 3D mapping.

Following the Christchurch earthquake, the University of Canterbury released CityViewAR, which enabled city planners and engineers to visualize buildings that had been destroyed. Not only did this provide planners with tools to reference the previous cityscape, but it also served as a reminder of the magnitude of the devastation caused, as entire buildings had been demolished.

Visual art

10.000 Moving Cities, Marc Lee, Augmented Reality Multiplayer Game, Art Installation

AR applied in the visual arts allows objects or places to trigger

artistic multidimensional experiences and interpretations of reality.

Augmented reality can aid in the progression of visual art in museums by allowing museum visitors to view artwork in galleries in a multidimensional way through their phone screens. The Museum of Modern Art in New York has created an exhibit showcasing augmented reality features that viewers can see using an app on their smartphone. The museum has developed its own app, called MoMAR Gallery, that museum guests can download and use in the specialized augmented reality gallery in order to view the museum's paintings in a different way. This allows individuals to see hidden aspects of and information about the paintings, and to have an interactive technological experience with the artwork as well.

AR technology aided the development of eye tracking technology to translate a disabled person's eye movements into drawings on a screen.

Commerce

The AR-Icon can be used as a marker on print as well as on online media. It signals to the viewer that digital content is behind it. The content can be viewed with a smartphone or tablet.

AR is used to integrate print and video marketing. Printed marketing

material can be designed with certain "trigger" images that, when

scanned by an AR-enabled device using image recognition, activate a

video version of the promotional material. A major difference between

augmented reality and straightforward image recognition is that one can

overlay multiple media at the same time in the view screen, such as social media share buttons, in-page video, and even audio and 3D objects.

Traditional print-only publications are using augmented reality to

connect many different types of media.

AR can enhance product previews such as allowing a customer to view what's inside a product's packaging without opening it.

AR can also be used as an aid in selecting products from a catalog or

through a kiosk. Scanned images of products can activate views of

additional content such as customization options and additional images

of the product in its use.

By 2010, virtual dressing rooms had been developed for e-commerce.

Augment SDK offers brands and retailers the capability to personalize their customers' shopping experience by embedding AR product visualization into their eCommerce platforms.

In 2012, a mint used AR techniques to market a commemorative coin for

Aruba. The coin itself was used as an AR trigger, and when held in

front of an AR-enabled device it revealed additional objects and layers

of information that were not visible without the device.

In 2015, the Bulgarian startup iGreet developed its own AR

technology and used it to make the first premade "live" greeting card. A

traditional paper card was augmented with digital content which was

revealed by using the iGreet app.

In 2017, Ikea announced the Ikea Place app. The app contains a catalogue of over 2,000 products, nearly the company's full collection of umlauted sofas, armchairs, coffee tables, and storage units, which one can place anywhere in a room with their phone.

In 2018, Apple announced USDZ AR file support for iPhones and iPads with iOS 12. Apple has created an AR QuickLook Gallery that allows people to experience augmented reality on their own Apple devices.

In 2018, Shopify, the Canadian commerce company, announced ARKit 2 integration. Its merchants are able to use the tools to upload 3D models of their products, which users can tap on inside Safari to view in their real-world environments.

In 2018, Twinkl released a free AR classroom application in which pupils can see how York looked over 1,900 years ago. Twinkl launched the first ever multi-player AR game, Little Red, and has over 100 free AR educational models.

Education

In

educational settings, AR has been used to complement a standard

curriculum. Text, graphics, video, and audio may be superimposed into a

student's real-time environment. Textbooks, flashcards and other

educational reading material may contain embedded "markers"

or triggers that, when scanned by an AR device, produce supplementary

information for the student, rendered in a multimedia format.

As AR evolves, students can participate interactively and

interact with knowledge more authentically. Instead of remaining passive

recipients, students can become active learners, able to interact with

their learning environment. Computer-generated simulations of historical

events allow students to explore and learn details of each

significant area of the event site.

In higher education, Construct3D, a Studierstube system, allows

students to learn mechanical engineering concepts, math or geometry.

Chemistry AR apps allow students to visualize and interact with the

spatial structure of a molecule using a marker object held in the hand.

Others have used HP Reveal, a free app, to create AR notecards for

studying organic chemistry mechanisms or to create virtual

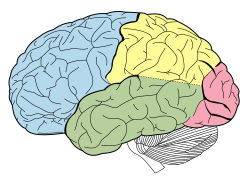

demonstrations of how to use laboratory instrumentation. Anatomy students can visualize different systems of the human body in three dimensions.

Remote collaboration

Primary

school children learn easily from interactive experiences. Astronomical

constellations and the movements of objects in the solar system were

oriented in 3D and overlaid in the direction the device was held, and

expanded with supplemental video information. Paper-based science book

illustrations could seem to come alive as video without requiring the

child to navigate to web-based materials.

In 2013, a project was launched on Kickstarter to teach about

electronics with an educational toy that allowed children to scan their

circuit with an iPad and see the electric current flowing around.

While some educational AR apps were available by 2016, the technology was not

broadly used. Apps that leverage augmented reality to aid learning

included SkyView for studying astronomy, AR Circuits for building simple electric circuits, and SketchAr for drawing.

AR would also be a way for parents and teachers to achieve their

goals for modern education, which might include providing more

individualized and flexible learning, making closer connections between

what is taught at school and the real world, and helping students to

become more engaged in their own learning.

A recent study compared the functionalities of augmented reality tools with potential for education.

Emergency management/search and rescue

Augmented reality systems are used in public safety situations, from super storms to suspects at large.

As early as 2009, two articles from Emergency Management

magazine discussed the power of this technology for emergency

management. The first was "Augmented Reality--Emerging Technology for

Emergency Management" by Gerald Baron.

Per Adam Crowe: "Technologies like augmented reality (ex: Google Glass)

and the growing expectation of the public will continue to force

professional emergency managers to radically shift when, where, and how

technology is deployed before, during, and after disasters."

Another early example was a search aircraft looking for a lost

hiker in rugged mountain terrain. Augmented reality systems provided

aerial camera operators with a geographic awareness of forest road names

and locations blended with the camera video. The camera operator was

better able to search for the hiker knowing the geographic context of

the camera image. Once located, the operator could more efficiently

direct rescuers to the hiker's location because the geographic position

and reference landmarks were clearly labeled.

Social interaction

AR

can be used to facilitate social interaction. An augmented reality

social network framework called Talk2Me enables people to disseminate

information and view others’ advertised information through augmented

reality. The timely and dynamic information sharing and viewing

functionalities of Talk2Me help users initiate conversations and make friends

with people in physical proximity.

Augmented reality also gives users the ability to practice

different forms of social interactions with other people in a safe,

risk-free environment. Hannes Kaufmann, Associate Professor for Virtual

Reality at TU Vienna, says: “In collaborative Augmented Reality multiple

users may access a shared space populated by virtual objects, while

remaining grounded in the real world. This technique is particularly

powerful for educational purposes when users are collocated and can use

natural means of communication (speech, gestures etc.), but can also be

mixed successfully with immersive VR or remote collaboration.”

Kaufmann thus cites education as a specific use for this technology.

Video games

Merchlar's mobile game Get On Target uses a trigger image as a fiducial marker.

The gaming industry embraced AR technology. A number of games were

developed for prepared indoor environments, such as AR air hockey, Titans of Space, collaborative combat against virtual enemies, and AR-enhanced pool table games.

Augmented reality allowed video game players to experience digital game play in a real-world environment. Niantic released the popular augmented reality mobile game Pokémon Go in 2016. Disney partnered with Lenovo to create the augmented reality game Star Wars: Jedi Challenges, which works with a Lenovo Mirage AR headset, a tracking sensor and a Lightsaber controller and was scheduled to launch in December 2017.

Augmented Reality Gaming (ARG) is also used to market film and

television entertainment properties. On March 16, 2011, BitTorrent

promoted an open licensed version of the feature film Zenith

in the United States. Users who downloaded the BitTorrent client

software were also encouraged to download and share Part One of three

parts of the film. On May 4, 2011, Part Two of the film was made

available on Vodo. The episodic release of the film, supplemented by an

ARG transmedia marketing campaign, created a viral effect and over a

million users downloaded the movie.

Industrial design

AR allows industrial designers to experience a product's design and

operation before completion. Volkswagen has used AR for comparing

calculated and actual crash test imagery.

AR has been used to visualize and modify car body structure and engine

layout. It has also been used to compare digital mock-ups with physical

mock-ups for finding discrepancies between them.

Medical

Since 2005, a device called a near-infrared vein finder has been used to locate veins: it films subcutaneous veins, processes the image, and projects it onto the skin.

AR provides surgeons with patient monitoring data in the style of

a fighter pilot's heads-up display, and allows patient imaging records,

including functional videos, to be accessed and overlaid. Examples

include a virtual X-ray view based on prior tomography or on real-time images from ultrasound and confocal microscopy probes, visualizing the position of a tumor in the video of an endoscope, or radiation exposure risks from X-ray imaging devices. AR can enhance viewing a fetus inside a mother's womb.

Siemens, Karl Storz and IRCAD have developed a system for laparoscopic

liver surgery that uses AR to view sub-surface tumors and vessels.

AR has been used for cockroach phobia treatment.

Patients wearing augmented reality glasses can be reminded to take medications. Virtual reality has been seen as promising in the medical field since the 1990s, and augmented reality can be similarly helpful.

It could be used to provide crucial information to a doctor or surgeon

without having them take their eyes off the patient. On 30 April

2015, Microsoft announced the Microsoft HoloLens, its first attempt at augmented reality. The HoloLens

has since advanced to the point that it

has been used to project holograms for near-infrared fluorescence-based

image-guided surgery.

As augmented reality advances, it is increasingly applied in medical

use. Augmented reality and other computer-based utilities are being used

today to help train medical professionals.

Spatial immersion and interaction

Augmented

reality applications, running on handheld devices utilized as virtual

reality headsets, can also digitize human presence in space and

provide a computer-generated model of the user in a virtual space where

they can interact and perform various actions. Such capabilities are

demonstrated by "Project Anywhere", developed by a postgraduate student

at ETH Zurich, which was dubbed an "out-of-body experience".

Flight training

Building

on decades of perceptual-motor research in experimental psychology,

researchers at the Aviation Research Laboratory of the University of

Illinois at Urbana-Champaign used augmented reality in the form of a

flight path in the sky to teach flight students how to land a flight

simulator. An adaptive augmentation schedule in which students were shown

the augmentation only when they departed from the flight path proved to

be a more effective training intervention than a constant schedule.

Flight students taught to land in the simulator with the adaptive

augmentation learned to land a light aircraft more quickly than students

with the same amount of landing training in the simulator but with

constant augmentation or without any augmentation.
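
The difference between the adaptive and constant schedules described above can be sketched in a few lines of Python. This is only an illustration, not the Illinois researchers' implementation: the function name `show_augmentation`, the use of a distance in metres as the deviation measure, and the `tolerance_m` threshold are all assumptions introduced here, since the text gives no numeric details.

```python
def show_augmentation(deviation_m: float, tolerance_m: float = 10.0,
                      adaptive: bool = True) -> bool:
    """Decide whether to draw the flight-path overlay for the current frame.

    deviation_m -- hypothetical distance of the aircraft from the desired path
    tolerance_m -- hypothetical threshold; the source gives no actual value
    adaptive    -- True for the adaptive schedule, False for the constant one
    """
    if not adaptive:
        # Constant schedule: the overlay is always visible.
        return True
    # Adaptive schedule: the overlay appears only when the student
    # has departed from the flight path by more than the tolerance.
    return abs(deviation_m) > tolerance_m


# Simulated approach: deviations (in metres) sampled over successive frames.
deviations = [2.0, 8.5, 12.0, 15.5, 9.0, 3.0]
adaptive_frames = [show_augmentation(d) for d in deviations]
constant_frames = [show_augmentation(d, adaptive=False) for d in deviations]
```

Under these assumed values, the adaptive schedule shows the overlay only for the two frames in which the deviation exceeds 10 m, while the constant schedule shows it in every frame.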

Military

Augmented Reality System for Soldier ARC4 (USA)

An interesting early application of AR occurred when Rockwell

International created video map overlays of satellite and orbital debris

tracks to aid in space observations at Air Force Maui Optical System.

In their 1993 paper "Debris Correlation Using the Rockwell WorldView

System" the authors describe the use of map overlays applied to video

from space surveillance telescopes. The map overlays indicated the

trajectories of various objects in geographic coordinates. This allowed

telescope operators to identify satellites, and also to identify and

catalog potentially dangerous space debris.

Starting in 2003 the US Army integrated the SmartCam3D augmented

reality system into the Shadow Unmanned Aerial System to aid sensor

operators using telescopic cameras to locate people or points of

interest. The system combined both fixed geographic information

including street names, points of interest, airports, and railroads with

live video from the camera system. The system offers a "picture in

picture" mode that allows the system to show a synthetic view of the

area surrounding the camera's field of view. This helps solve a problem

in which the field of view is so narrow that it excludes important

context, as if "looking through a soda straw". The system displays

real-time friend/foe/neutral location markers blended with live video,

providing the operator with improved situational awareness.
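
Blending geographic markers with live video requires deciding where, if anywhere, each known point falls in the camera image. The sketch below uses a basic pinhole camera model for that step. It is not a description of how SmartCam3D works: the function name `project_to_pixel`, the assumption that the point is already expressed in camera coordinates (x right, y down, z forward), and the focal length in pixels are all hypothetical choices made for illustration.

```python
from typing import Optional, Tuple


def project_to_pixel(point_cam: Tuple[float, float, float],
                     focal_px: float,
                     width: int,
                     height: int) -> Optional[Tuple[float, float]]:
    """Project a 3D point in camera coordinates onto the image plane.

    Returns the (u, v) pixel position, or None when the point lies behind
    the camera or outside the visible frame.
    """
    x, y, z = point_cam
    if z <= 0:
        return None  # behind the camera: nothing to draw
    # Pinhole model with the principal point assumed at the image centre.
    u = width / 2 + focal_px * x / z
    v = height / 2 + focal_px * y / z
    if 0 <= u < width and 0 <= v < height:
        return (u, v)
    return None  # outside the narrow field of view


# A landmark straight ahead lands at the image centre.
centre = project_to_pixel((0.0, 0.0, 100.0), 1000.0, 640, 480)
# A landmark far to the side falls outside the frame.
off_screen = project_to_pixel((50.0, 0.0, 100.0), 1000.0, 640, 480)
```

Points that return `None` here are the ones a narrow telescopic view excludes, which is the context a synthetic "picture in picture" view of the surrounding area is meant to restore.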

As of 2010, Korean researchers are looking to implement

mine-detecting robots into the military. The proposed design for such a

robot includes a tracked mobile platform that would be

able to cover uneven terrain, including stairs. The robot's mine

detection sensor would include a combination of metal detectors and

ground-penetrating radar to locate mines or IEDs. Such a design

could help save the lives of Korean soldiers.

Researchers at USAF Research Lab (Calhoun, Draper et al.) found

an approximately two-fold increase in the speed at which UAV sensor

operators found points of interest using the SmartCam3D technology.

This ability to maintain geographic awareness quantitatively enhances

mission efficiency. The system is in use on the US Army RQ-7 Shadow and

the MQ-1C Gray Eagle Unmanned Aerial Systems.

In combat, AR can serve as a networked communication system that

renders useful battlefield data onto a soldier's goggles in real time.

From the soldier's viewpoint, people and various objects can be marked

with special indicators to warn of potential dangers. Virtual maps and

360° view camera imaging can also be rendered to aid a soldier's

navigation and battlefield perspective, and this can be transmitted to

military leaders at a remote command center.

LandForm video map overlay marking runways, roads, and buildings during 1999 helicopter flight test

The NASA X-38 was flown using a Hybrid Synthetic Vision system that

overlaid map data on video to provide enhanced navigation for the

spacecraft during flight tests from 1998 to 2002. It used the LandForm

software and was useful for times of limited visibility, including an

instance when the video camera window frosted over leaving astronauts to

rely on the map overlays.

The LandForm software was also test flown at the Army Yuma Proving

Ground in 1999. In the photo at right one can see the map markers

indicating runways, air traffic control tower, taxiways, and hangars

overlaid on the video.

AR can augment the effectiveness of navigation devices.

Information can be displayed on an automobile's windshield indicating

destination directions and meter, weather, terrain, road conditions and

traffic information as well as alerts to potential hazards in the vehicle's

path. Since 2012, the Swiss-based company WayRay

has been developing holographic AR navigation systems that use

holographic optical elements for projecting all route-related

information including directions, important notifications, and points of

interest right into the drivers’ line of sight and far ahead of the

vehicle.

Aboard maritime vessels, AR can allow bridge watch-standers to

continuously monitor important information such as a ship's heading and

speed while moving throughout the bridge or performing other tasks.

Workplace

Augmented

reality may have a positive impact on work collaboration as people may be

inclined to interact more actively with their learning environment. It

may also encourage tacit knowledge renewal which makes firms more

competitive. AR was used to facilitate collaboration among distributed

team members via conferences with local and virtual participants. AR

tasks included brainstorming and discussion meetings utilizing common

visualization via touch screen tables, interactive digital whiteboards,

shared design spaces and distributed control rooms.

In industrial environments, augmented reality is proving to have a

substantial impact with more and more use cases emerging across all

aspects of the product lifecycle, starting from product design and new

product introduction (NPI) to manufacturing to service and maintenance,

to material handling and distribution. For example, labels were

displayed on parts of a system to clarify operating instructions for a

mechanic performing maintenance on a system.

Assembly lines benefited from the usage of AR. In addition to Boeing,

BMW and Volkswagen were known for incorporating this technology into

assembly lines for monitoring process improvements.

Big machines are difficult to maintain because of their multiple layers

or structures. AR permits people to look through the machine as if with

an x-ray, pointing them to the problem right away.

As AR technology has evolved and second and third generation AR

devices come to market, the impact of AR in enterprise continues to

flourish. In a Harvard Business Review article, Magid Abraham and Marco

Annunziata discuss how AR devices are now being used to "boost workers’

productivity on an array of tasks the first time they're used, even

without prior training."

They go on to contend that "these technologies increase productivity by

making workers more skilled and efficient, and thus have the potential

to yield both more economic growth and better jobs."

Broadcast and live events

Weather

visualizations were the first application of augmented reality to

television. It has now become common in weathercasting to display full

motion video of images captured in real-time from multiple cameras and

other imaging devices. Coupled with 3D graphics symbols and mapped to a

common virtual geospace model, these animated visualizations constitute

the first true application of AR to TV.

AR has become common in sports telecasting. Sports and

entertainment venues are provided with see-through and overlay

augmentation through tracked camera feeds for enhanced viewing by the

audience. Examples include the yellow "first down" line seen in television broadcasts of American football

games showing the line the offensive team must cross to receive a first

down. AR is also used in association with football and other sporting

events to show commercial advertisements overlaid onto the view of the

playing area. Sections of rugby fields and cricket

pitches also display sponsored images. Swimming telecasts often add a

line across the lanes to indicate the position of the current record

holder as a race proceeds to allow viewers to compare the current race

to the best performance. Other examples include hockey puck tracking and

annotations of racing car performance and snooker ball trajectories.
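
As a rough illustration of the swimming overlay mentioned above, the position of a record line can be derived from the elapsed race time. The sketch below is an assumption-laden simplification, not a broadcaster's actual method: it assumes the record holder swam at constant speed, and the function name `record_line_position` and its parameters are hypothetical.

```python
def record_line_position(elapsed_s: float,
                         record_time_s: float,
                         race_length_m: float,
                         pool_length_m: float = 50.0) -> float:
    """Return the distance from the start wall at which to draw the record line.

    Assumes (for illustration only) that the record was swum at constant speed.
    """
    # Fraction of the race the record holder had completed at this moment,
    # capped at 1.0 once the record time has passed.
    fraction = min(elapsed_s / record_time_s, 1.0)
    distance = fraction * race_length_m
    laps_done, offset = divmod(distance, pool_length_m)
    if int(laps_done) % 2 == 1:
        # On odd-numbered return laps the swimmer heads back to the start wall.
        return pool_length_m - offset
    return offset


# 100 m race with a 50 s record in a 50 m pool.
first_lap = record_line_position(12.5, 50.0, 100.0)
return_lap = record_line_position(37.5, 50.0, 100.0)
```

With these example values the line sits 25 m from the start wall in both cases, once on the way out and once on the way back.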

Augmented reality for Next Generation TV allows viewers to

interact with the programs they are watching. They can place objects

into an existing program and interact with them, such as moving them

around. Objects include avatars of real persons in real time who are

also watching the same program.

AR has been used to enhance concert and theater performances. For

example, artists allow listeners to augment their listening experience

by adding their performance to that of other bands/groups of users.

Tourism and sightseeing

Travelers

may use AR to access real-time informational displays regarding a

location, its features, and comments or content provided by previous

visitors. Advanced AR applications include simulations of historical

events, places, and objects rendered into the landscape.

AR applications linked to geographic locations present location

information by audio, announcing features of interest at a particular

site as they become visible to the user.

Companies can use AR to attract tourists to particular areas that

they may not be familiar with by name. Tourists will be able to

experience beautiful landscapes in first person with the use of AR

devices. Companies like Phocuswright plan to use such technology in

order to expose the lesser known but beautiful areas of the planet, and

in turn, increase tourism. Other companies such as Matoke Tours have

already developed an application where the user can see 360 degrees from

several different places in Uganda. Matoke Tours and Phocuswright have

the ability to display their apps on virtual reality headsets like the

Samsung VR and Oculus Rift.

Translation

AR systems such as Word Lens

can interpret the foreign text on signs and menus and, in a user's

augmented view, re-display the text in the user's language. Spoken words

of a foreign language can be translated and displayed in a user's view

as printed subtitles.

Music

It has been suggested that augmented reality may be used in new methods of music production, mixing, control and visualization.

A tool for 3D music creation in clubs that, in addition to regular sound mixing features, allows the DJ to play dozens of sound samples, placed anywhere in 3D space, has been conceptualized.

Leeds College of Music teams have developed an AR app that can be used with Audient

desks and allows students to use their smartphone or tablet to put

layers of information or interactivity on top of an Audient mixing desk.

ARmony is a software package that makes use of augmented reality to help people to learn an instrument.

In a proof-of-concept project Ian Sterling, interaction design student at California College of the Arts,

and software engineer Swaroop Pal demonstrated a HoloLens app whose

primary purpose is to provide a 3D spatial UI for cross-platform devices

— the Android Music Player app and Arduino-controlled Fan and Light —

and also allow interaction using gaze and gesture control.

AR Mixer is an app that allows one to select and mix between

songs by manipulating objects – such as changing the orientation of a

bottle or can.

In a video, Uriel Yehezkel demonstrates using the Leap Motion controller and GECO MIDI to control Ableton Live with hand gestures

and states that by this method he was able to control more than 10

parameters simultaneously with both hands and take full control over the

construction of the song, emotion and energy.

A novel musical instrument that allows novices to play electronic

musical compositions, interactively remixing and modulating their

elements, by manipulating simple physical objects has been proposed.

A system has been suggested that uses explicit gestures and implicit dance moves to

control the visual augmentations of a live music performance, enabling

more dynamic and spontaneous performances and, in combination with

indirect augmented reality, a more intense interaction between

artist and audience.

Research by members of CRIStAL at the University of Lille

makes use of augmented reality in order to enrich musical performance.

The ControllAR project allows musicians to augment their MIDI control surfaces with the remixed graphical user interfaces of music software. The Rouages project proposes to augment digital musical instruments in order to reveal their mechanisms to the audience and thus improve the perceived liveness.

Reflets is a novel augmented reality display dedicated to musical

performances where the audience acts as a 3D display by revealing

virtual content on stage, which can also be used for 3D musical

interaction and collaboration.

Retail

Augmented

reality is becoming more frequently used for online advertising.

Retailers offer the ability to upload a picture on their website and

"try on" various clothes which are overlaid on the picture. Even further,

companies such as Bodymetrics install dressing booths in department

stores that offer full-body scanning.

These booths render a 3-D model of the user, allowing the consumers to

view different outfits on themselves without the need of physically

changing clothes. For example, JC Penney and Bloomingdale's use "virtual dressing rooms" that allow customers to see themselves in clothes without trying them on. Another store that uses AR to market clothing to its customers is Neiman Marcus. Neiman Marcus offers consumers the ability to see their outfits in a 360 degree view with their "memory mirror". Makeup stores like L'Oreal, Sephora, Charlotte Tilbury, and Rimmel also have apps that utilize AR. These apps allow consumers to see how the makeup will look on them.

According to Greg Jones, director of AR and VR at Google, augmented

reality is going to "reconnect physical and digital retail."

AR technology is also used by furniture retailers such as IKEA, Houzz, and Wayfair. These retailers offer apps that allow consumers to view their products in their home prior to purchasing anything.

IKEA launched IKEA Place at the end of 2017, making it possible for customers

to view 3D, true-to-scale models of furniture in their living space

using the app and their phone's camera. IKEA realized that its

customers were no longer shopping in stores as often or buying things directly.

It created IKEA Place to tackle these problems by letting

people try out the furniture with augmented reality and then decide whether

they want to buy it.

Snapchat

Snapchat

users have access to augmented reality in the company's instant

messaging app through use of camera filters. In September 2017, Snapchat

updated its app to include a camera filter that allowed users to render

an animated, cartoon version of themselves called "Bitmoji". These

animated avatars are projected in the real world through the

camera, and can be photographed or video recorded.

In the same month, Snapchat also announced a new feature called "Sky

Filters" that will be available on its app. This new feature makes use

of augmented reality to alter the look of a picture taken of the sky,

much like how users can apply the app's filters to other pictures. Users

can choose from sky filters such as starry night, stormy clouds,

beautiful sunsets, and rainbow.

The Dangers of AR

Reality modifications

There

is a danger that AR will make individuals overconfident and put their lives

at risk because of it. Pokémon GO, with a couple of deaths and many

injuries, is a clear example. “Death by Pokémon GO”,

by a pair of researchers from Purdue University's Krannert School of

Management, says the game caused “a disproportionate increase in

vehicular crashes and associated vehicular damage, personal injuries,

and fatalities in the vicinity of locations, called PokéStops, where

users can play the game while driving.”

The paper extrapolated what that might mean nationwide and concluded

“the increase in crashes attributable to the introduction of Pokémon GO

is 145,632 with an associated increase in the number of injuries of

29,370 and an associated increase in the number of fatalities of 256

over the period of July 6, 2016, through November 30, 2016.” The authors

valued those crashes and fatalities at between $2 billion and $7.3 billion

for the same period.

Furthermore, more than one in three surveyed advanced internet

users would like to edit out disturbing elements around them, such as

garbage or graffiti.

They would even like to modify their surroundings by erasing street

signs, billboard ads, and uninteresting shopping windows. So it seems

that AR is as much a threat to companies as it is an opportunity. Although this

could be a nightmare for numerous brands that do not manage to capture

consumer imaginations, it also creates the risk that the wearers of

augmented reality glasses may become unaware of surrounding dangers.

Consumers want to use augmented reality glasses to change their

surroundings into something that reflects their own personal opinions.

Around two in five want to change the way their surroundings look and

even how people appear to them.

Next to the possible privacy issues that are described below,

overload and over-reliance are the biggest dangers of AR. For the

development of new AR-related products, this implies that the

user interface should follow certain guidelines so as not to overload the

user with information, while also preventing the user from over-relying on

the AR system such that important cues from the environment are missed. This is called the virtually-augmented key. If the key is not taken into account, people might not need the real world anymore.

Privacy concerns

The

concept of modern augmented reality depends on the ability of the

device to record and analyze the environment in real time. Because of

this, there are potential legal concerns over privacy. While the First Amendment to the United States Constitution

allows for such recording in the name of public interest, the constant

recording of an AR device makes it difficult to do so without also

recording outside of the public domain. Legal complications would be

found in areas where a right to a certain amount of privacy is expected

or where copyrighted media are displayed.

In terms of individual privacy, there exists the ease of access

to information that one should not readily possess about a given person.

This is accomplished through facial recognition technology. Assuming

that AR automatically passes information about persons that the user

sees, the user could be shown anything from social media activity to criminal records

and marital status.

Privacy-compliant image capture solutions can be deployed to temper the impact of constant filming on individual privacy.

Notable researchers

- Ivan Sutherland invented the first VR head-mounted display at Harvard University.

- Steve Mann formulated an earlier concept of mediated reality in the 1970s and 1980s, using cameras, processors, and display systems to modify visual reality to help people see better (dynamic range management), building computerized welding helmets, as well as "augmediated reality" vision systems for use in everyday life. He is also an adviser to Meta.

- Louis Rosenberg developed one of the first known AR systems, called Virtual Fixtures, while working at the U.S. Air Force Armstrong Labs in 1991, and published the first study of how an AR system can enhance human performance. Rosenberg's subsequent work at Stanford University in the early 1990s was the first proof that virtual overlays, when registered and presented over a user's direct view of the real physical world, could significantly enhance human performance.

- Mike Abernathy pioneered one of the first successful augmented video overlays (also called hybrid synthetic vision) using map data for space debris in 1993, while at Rockwell International. He co-founded Rapid Imaging Software, Inc. and was the primary author of the LandForm system in 1995, and the SmartCam3D system. LandForm augmented reality was successfully flight tested in 1999 aboard a helicopter and SmartCam3D was used to fly the NASA X-38 from 1999–2002. He and NASA colleague Francisco Delgado received the National Defense Industries Association Top 5 awards in 2004.

- Steven Feiner, Professor at Columbia University, is the author of a 1993 paper on an AR system prototype, KARMA (the Knowledge-based Augmented Reality Maintenance Assistant), along with Blair MacIntyre and Doree Seligmann. He is also an advisor to Meta.

- Tracy McSheery, of Phasespace, developed in 2009 the wide field of view AR lenses used in Meta 2 and others.

- S. Ravela, B. Draper, J. Lim and A. Hanson developed a marker/fixture-less augmented reality system with computer vision in 1994. They augmented an engine block observed from a single video camera with annotations for repair. They used model-based pose estimation, aspect graphs and visual feature tracking to dynamically register the model with the observed video.

- Francisco Delgado is a NASA engineer and project manager specializing in human interface research and development. Starting in 1998 he conducted research into displays that combined video with synthetic vision systems (called hybrid synthetic vision at the time) that we recognize today as augmented reality systems for the control of aircraft and spacecraft. In 1999 he and colleague Mike Abernathy flight-tested the LandForm system aboard a US Army helicopter. Delgado oversaw integration of the LandForm and SmartCam3D systems into the X-38 Crew Return Vehicle. In 2001, Aviation Week reported NASA astronauts' successful use of hybrid synthetic vision (augmented reality) to fly the X-38 during a flight test at Dryden Flight Research Center. The technology was used in all subsequent flights of the X-38. Delgado was co-recipient of the National Defense Industries Association 2004 Top 5 software of the year award for SmartCam3D.

- Bruce H. Thomas and Wayne Piekarski developed the Tinmith system in 1998. They, along with Steven Feiner and his MARS system, pioneered outdoor augmented reality.

- Mark Billinghurst is Director of the HIT Lab New Zealand (HIT Lab NZ) at the University of Canterbury in New Zealand and a notable AR researcher. He has produced over 250 technical publications and presented demonstrations and courses at a wide variety of conferences.

- Reinhold Behringer performed important early work (1998) in image registration for augmented reality, and prototype wearable testbeds for augmented reality. He also co-organized the First IEEE International Workshop on Augmented Reality in 1998 (IWAR'98), and co-edited one of the first books on augmented reality.

- Felix G. Hamza-Lup, Larry Davis and Jannick Rolland developed the 3D ARC display with optical see-through head-worn display for AR visualization in 2002.

- Dieter Schmalstieg and Daniel Wagner developed marker tracking systems for mobile phones and PDAs in 2009.

History

- 1901: L. Frank Baum, an author, first mentions the idea of an electronic display/spectacles that overlays data onto real life (in this case 'people'). It is named a 'character marker'.

- 1957–62: Morton Heilig, a cinematographer, creates and patents a simulator called Sensorama with visuals, sound, vibration, and smell.

- 1968: Ivan Sutherland invents the head-mounted display and positions it as a window into a virtual world.

- 1975: Myron Krueger creates Videoplace to allow users to interact with virtual objects.

- 1980: Research by Gavan Lintern of the University of Illinois is the first published work to show the value of a heads-up display for teaching real-world flight skills.

- 1980: Steve Mann creates the first wearable computer, a computer vision system with text and graphical overlays on a photographically mediated scene.

- 1981: Dan Reitan geospatially maps multiple weather radar images and space-based and studio cameras to earth maps and abstract symbols for television weather broadcasts, bringing a precursor concept to augmented reality (mixed real/graphical images) to TV.

- 1987: Douglas George and Robert Morris create a working prototype of an astronomical telescope-based "heads-up display" system (a precursor concept to augmented reality) that superimposes multi-intensity star and celestial body images, along with other relevant information, over the actual sky images in the telescope eyepiece.

- 1990: The term 'Augmented Reality' is attributed to Thomas P. Caudell, a former Boeing researcher.

- 1992: Louis Rosenberg develops one of the first functioning AR systems, called Virtual Fixtures, at the U.S. Air Force's Armstrong Laboratory, and demonstrates its benefit to human perception.

- 1993: Steven Feiner, Blair MacIntyre and Doree Seligmann present an early paper on an AR system prototype, KARMA, at the Graphics Interface conference.

- 1993: Mike Abernathy, et al., report the first use of augmented reality in identifying space debris using Rockwell WorldView by overlaying satellite geographic trajectories on live telescope video.

- 1993: A widely cited version of the KARMA paper is published in Communications of the ACM, in the special issue on computer augmented environments edited by Pierre Wellner, Wendy Mackay, and Rich Gold.

- 1993: Loral WDL, with sponsorship from STRICOM, performs the first demonstration combining live AR-equipped vehicles and manned simulators (J. Barrilleaux, "Experiences and Observations in Applying Augmented Reality to Live Training", unpublished paper, 1999).

- 1994: Julie Martin creates the first "Augmented Reality Theater production", Dancing In Cyberspace, funded by the Australia Council for the Arts. It features dancers and acrobats manipulating body-sized virtual objects in real time, projected into the same physical space and performance plane. The acrobats appear immersed within the virtual objects and environments. The installation uses Silicon Graphics computers and a Polhemus sensing system.

- 1995: S. Ravela et al. at the University of Massachusetts introduce a vision-based system using monocular cameras to track objects (engine blocks) across views for augmented reality.

- 1998: Spatial augmented reality is introduced at the University of North Carolina at Chapel Hill by Ramesh Raskar, Greg Welch and Henry Fuchs.

- 1999: Frank Delgado, Mike Abernathy et al. report a successful flight test of the LandForm software's video map overlay from a helicopter at the Army's Yuma Proving Ground, overlaying video with runways, taxiways, roads and road names.

- 1999: The US Naval Research Laboratory embarks on a decade-long research program called the Battlefield Augmented Reality System (BARS) to prototype some of the early wearable systems for dismounted soldiers operating in urban environments, for situation awareness and training.

- 1999: NASA X-38 flown using LandForm software video map overlays at Dryden Flight Research Center.

- 2004: An outdoor helmet-mounted AR system is demonstrated by Trimble Navigation and the Human Interface Technology Laboratory (HIT Lab).

- 2008: Wikitude AR Travel Guide launches on 20 Oct 2008 with the G1 Android phone.

- 2009: ARToolKit is ported to Adobe Flash (FLARToolkit) by Saqoosha, bringing augmented reality to the web browser.

- 2010: Design of a mine detection robot for a Korean minefield.

- 2012: Launch of Lyteshot, an interactive AR gaming platform that utilizes smart glasses for game data.

- 2013: Meta announces the Meta 1 developer kit.

- 2013: Google announces an open beta test of its Google Glass augmented reality glasses. The glasses reach the Internet through Bluetooth, which connects to the wireless service on a user's cellphone. The glasses respond when a user speaks, touches the frame or moves the head.

- 2015: Microsoft announces Windows Holographic and the HoloLens augmented reality headset. The headset utilizes various sensors and a processing unit to blend high definition "holograms" with the real world.

- 2016: Niantic releases Pokémon Go for iOS and Android in July 2016. The game quickly becomes one of the most popular smartphone applications and in turn boosts the popularity of augmented reality games.

- 2017: Magic Leap announces the use of Digital Lightfield technology embedded into the Magic Leap One headset. The Creator Edition headset includes the glasses and a computing pack worn on the user's belt.