The Idiot’s Guide to Making Atoms

Avogadro’s Number and Moles

Writing this chapter has reminded me of the opening of a story by a well-known science fiction author (whose name, needless to say, I can’t recall): “This is a warning, the only one you’ll get so don’t take it lightly.” *Alice in Wonderland* and “We’re not in Kansas anymore” also come to mind. What I mean by this is that I could find no way of writing it without requiring the reader to put his thinking (and imagining) cap on. So: be prepared.

A few things about science in general before I plunge headlong into the subject I’m going to cover. I have already mentioned the way science is a step-by-step, often even tortuous, process of discovering facts, running experiments, making observations, thinking about them, and so on; a slow but steady accumulation of knowledge and theory which gradually reveals to us the way nature works, as well as why. But there is more to science than this. This something more has to do with the concept, or hope I might say, of trying to understand things like the universe as a whole, or things as tiny as atoms, or geological time, or events that happen over exceedingly short time scales, like billionths of a second. I say hope because in dealing with such things we are far removed from reality as we experience it every day, in the normal course of our lives.

The problem is that, when dealing with such extremes, we find that most of our normal ideas and expectations – our intuitive, “common sense” grasp of reality – all too frequently start to break down. There is of course good reason why this should be, and is, so. Our intuitions and common sense reasoning have been sculpted by evolution – I will resist the temptation to say designed, although that often seems to be the case, for, ironically, the same reasons – to grasp and deal with ordinary events over ordinary scales of time and space. Our minds are not well endowed with the ability to intuitively understand nature’s extremes, which is why these extremes so often seem counter-intuitive and even absurd to us.

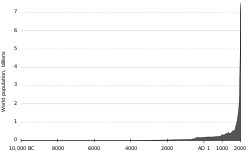

Take, as one of the best examples I know of this, biological evolution à la Darwin. As the English biologist and author Richard Dawkins has noted several times in his books, one of the reasons so many people have a hard time accepting Darwinian evolution is the extremely long time scale over which it occurs, time scales in the millions of years and more. None of us can intuitively grasp a million years; we can’t even grasp, for that matter, a thousand years, which is one-thousandth of a million. As a result, the claim that something like a mouse can evolve into something like an elephant feels “obviously” false. But that feeling is precisely what we should ignore in evaluating the possibility of such events, because we cannot have any such feeling for the exceedingly long time span it would take. Rather, we have to evaluate the likelihood using evidence and hard logic; common sense can seriously mislead us.

The same is true for nature on the scale of the extremely small. When we start poking around in this territory, among things like atoms and sub-atomic particles, we find ourselves in a world which bears little resemblance to the one we are used to. I am going to try various ways of giving you a sense of how the ultra-tiny works, but I know in advance that no matter what I do I am still going to be presenting concepts and ideas that seem, if anything, more outlandish than Darwinian evolution; ideas and concepts that might, no, probably will, leave your head spinning. If it is any comfort, they often leave my head spinning as well. And again, the only reason to accept them is that they pass the scientific tests of requiring evidence and of passing muster with logic and reason; but they will often seem preposterous nevertheless.

First, however, let’s try to grab hold of just how tiny the world we are about to enter is. Remember Avogadro’s number, the number of particles in a mole of anything, from the last chapter? The reason we need such an enormous number when dealing with atoms is that they are so mind-overwhelmingly small. When I say mind-overwhelmingly, I really mean it. One illustration of just how small that I enjoy is to compare the number of atoms in a glass of water to the number of glasses of water in all the oceans on our planet. As incredible as it sounds, the ratio of the former to the latter is around 10,000 to 1. This means that if you fill a glass with water, walk down to the seashore, pour the water into the ocean and wait long enough for it to disperse evenly throughout all the oceans (if anyone has managed to calculate how long this would take, please let me know), then dip your now empty glass into the sea and refill it, you will have scooped up some ten thousand of the original atoms that it contained. Another good way of stressing the smallness of atoms is to note that every time you breathe in you are inhaling some of the atoms that some historical figure – say Benjamin Franklin or Muhammad – breathed in his lifetime. Or maybe just in one of their breaths; I can’t remember which – that’s how hard it is to grasp just how small they are.
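The glass-and-ocean comparison is simple arithmetic with Avogadro’s number, so it can be checked directly. The sketch below is only an estimate: it assumes a total ocean volume of about 1.335 billion cubic kilometres (a commonly quoted figure), water at 1 g/mL, and a glass whose size you choose. Because the atoms in a glass grow with its volume while the number of glasses in the ocean shrinks with it, the ratio grows with the *square* of the glass size, so an ordinary 250 mL glass gives several thousand and a larger glass pushes the figure toward ten thousand.

```python
AVOGADRO = 6.02214076e23   # particles per mole (exact in the SI)
MOLAR_MASS_WATER = 18.015  # grams per mole of H2O
WATER_DENSITY = 1.0        # g/mL, an approximation
OCEAN_VOLUME_L = 1.335e21  # litres; assumed ~1.335 billion km^3 of seawater

def atoms_in_glass(glass_ml):
    """Atoms in one glass of water: 3 atoms (2 H + 1 O) per molecule."""
    moles = glass_ml * WATER_DENSITY / MOLAR_MASS_WATER
    return 3 * moles * AVOGADRO

def glasses_in_ocean(glass_ml):
    """How many glasses of this size it would take to hold all the oceans."""
    return OCEAN_VOLUME_L * 1000 / glass_ml

def original_atoms_recaptured(glass_ml):
    """After perfect mixing, each refilled glass holds this many of the
    original atoms: total original atoms / number of glasses in the ocean."""
    return atoms_in_glass(glass_ml) / glasses_in_ocean(glass_ml)

for ml in (250, 350):
    print(f"{ml} mL glass: about {original_atoms_recaptured(ml):,.0f} atoms")
```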

One reason all this matters is that nature in general does not exhibit the property that physicists and mathematicians call “scale invariance.” Scale invariance simply means that, if you take an object or a system of objects, you can increase its size as much as you want, or decrease it, and its various properties and behaviors will not change. Some interesting systems that do possess scale invariance are found among the mathematical entities called fractals: no matter how much you enlarge or shrink these fractals, their patterns repeat themselves over and over ad infinitum without change. A good example of this is the Koch snowflake, which is just a set of repeating triangles, to as much depth as you want. There are a number of physical systems that have scale invariance as well, but, as I just said, in general this is not true. For example, going back to the mouse and the elephant, you could not scale the former up to the size of the latter and let it out to frolic in the African savannah with the other animals; our supermouse’s legs, whose strength grows only with their cross-sectional area while its weight grows with its volume, would not be strong enough to lift it from the ground. Making flies human-sized, or vice versa, runs into similar kinds of problems (a fly can walk on walls and ceilings because it is so small that surface forces, such as the adhesion of its foot pads, dominate its behavior far more than gravity does).
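The Koch snowflake’s “same pattern at every scale” can be made concrete in a few lines. In this sketch (the function name is my own), each step applies one rule regardless of size: cut every segment into thirds and raise an equilateral triangle on the middle third. The perimeter grows by a factor of 4/3 per step without limit, while the enclosed area converges to 8/5 of the starting triangle.

```python
import math

def koch_snowflake_stats(iterations, side=1.0):
    """Return (segments, segment_length, perimeter, area) after the given
    number of Koch steps, starting from an equilateral triangle."""
    segments = 3
    seg_len = side
    area = math.sqrt(3) / 4 * side ** 2  # area of the starting triangle
    for _ in range(iterations):
        # The identical rule at every scale: each segment gets a new
        # equilateral triangle, one third its length, on its middle third.
        new_len = seg_len / 3
        area += segments * math.sqrt(3) / 4 * new_len ** 2
        segments *= 4  # each old segment becomes four shorter ones
        seg_len = new_len
    return segments, seg_len, segments * seg_len, area

for n in (0, 1, 2, 5, 20):
    segs, length, perimeter, area = koch_snowflake_stats(n)
    print(f"{n:2d} steps: {segs} segments, perimeter {perimeter:.3f}, area {area:.6f}")
```

Zooming in on any piece of the finished curve shows the same bumps on bumps, which is exactly the scale invariance that real mice, elephants and gold nuggets lack.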

Scale Invariance – Why it Matters

One natural phenomenon that we know lacks scale invariance, and which we met in the last chapter, is matter itself. We know now that you cannot take a piece of matter, a nugget of gold for example, and keep cutting it into smaller and smaller pieces until the end of time. Eventually we reach the scale of individual gold atoms, and then go even smaller, to the electrons, protons, and neutrons that make up the atoms, all of which are very different things from the nugget we started out with. I hardly need to say that all elements, and all their varied combinations, up to stars and galaxies and larger, including even the entire universe, suffer the same fate. I should add, for the sake of completeness, that we cannot go indefinitely in the opposite direction either; as we move toward increasingly massive objects, their behavior is more and more dominated by the field equations of Einstein’s general relativity, which warp the space and time around and inside them to an ever more significant degree.

Why do I take the time to mention all this? Because we are en route to explaining how atoms, electrons and all, are built up and how they behave, and we need to understand that what goes on in nature at these scales is very different from what we are accustomed to, and that if we cannot adapt our thinking to these different behaviors we are going to find it very tough, actually impossible, sledding indeed.

In my previous book, *Wondering About*, I of necessity gave a very rough picture of the world of atoms and electrons, and of how that picture helped explain the various chemical and biological behaviors that a number of atoms (mostly carbon) displayed. I say “of necessity” because I didn’t, in that book, want to mire the reader in a morass of details and physics and equations which weren’t needed to explain the things I was trying to explain in a chapter or two. But here, in a book largely dedicated to chemistry, I think the sledding is worth it, even necessary, even if we do still have to make some dashes around trees and skirt the edges of ponds and creeks, and so forth.

Actually, it seems to me that there are two approaches to the field we are about to enter, quantum mechanics, and to how it applies to chemistry. One is to simply present the details, as if out of a cookbook: we are served our various dishes of, first, classical mechanics, then the Lagrangian equations of motion and Hamiltonian operators and so forth, followed by Schrödinger’s various equations and Heisenberg’s matrix approach, with eigenvectors and eigenvalues, and all sorts of stuff that one can bury one’s head in and never come up for air. Incidentally, if you do want to summon your courage and take the plunge, a very good book to start with is Melvin Hanna’s *Quantum Mechanics in Chemistry*, of which I possess the third edition and which I peruse from time to time when I am in the mood for such fodder.

The problem with this approach is that, although it cuts straight to the chase, it leaves out the historical development of quantum mechanics, which, I believe, is needed if we are to understand why and how physicists came to present us with such a peculiar view of reality. They had very good reasons for doing so, and yet modern quantum mechanical theory took several decades to mature and is still in some respects an unfinished body of work. Again, this is largely because some of its premises and findings are at odds with what we would intuitively expect about the world (another reason is that the math can be very difficult). These are premises and findings such as the quantization of energy and other properties to discrete values in very small systems such as atoms. Then there is Heisenberg’s famous though still largely misunderstood uncertainty principle (and how the latter leads to the former).

Talking About Light and its Nature

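A good way of launching this discussion is to begin with light, or, more precisely, electromagnetic radiation. What do I mean by these polysyllabic words? Sticking with the historical approach, the phenomena of electricity and magnetism had been intensely studied in the 1800s by people like Faraday and Gauss and Ørsted, among others. The culmination of all this brilliant theoretical and experimental work was summarized by the Scottish physicist James Clerk Maxwell, who in 1865 published a set of eight equations describing the relationships between the two phenomena and all that had been discovered about them. These equations were then further condensed into four and placed in one of their modern forms in 1884 by Oliver Heaviside. One version of these equations is (if you are a fan of partial differential equations):

$$\nabla \cdot \mathbf{E} = \frac{\rho}{\varepsilon_0}, \qquad \nabla \cdot \mathbf{B} = 0, \qquad \nabla \times \mathbf{E} = -\frac{\partial \mathbf{B}}{\partial t}, \qquad \nabla \times \mathbf{B} = \mu_0 \mathbf{J} + \mu_0 \varepsilon_0 \frac{\partial \mathbf{E}}{\partial t}$$

where **E** and **B** are the electric and magnetic fields, ρ is the electric charge density, **J** is the current density, and ε₀ and μ₀ are the electric and magnetic constants of empty space.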
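One celebrated consequence of Maxwell’s equations, not spelled out here, is that in empty space they combine into a wave equation whose waves travel at a speed of 1/√(μ₀ε₀). That is how Maxwell recognized light as an electromagnetic wave. The short sketch below simply evaluates that expression, assuming the CODATA 2018 values of the two constants.

```python
import math

# CODATA 2018 values (SI). Since the 2019 SI redefinition these two are
# measured quantities rather than exact definitions, so the result matches
# the defined speed of light only to within their tiny uncertainties.
MU_0 = 1.25663706212e-6       # magnetic constant (vacuum permeability), N/A^2
EPSILON_0 = 8.8541878128e-12  # electric constant (vacuum permittivity), F/m

def wave_speed(mu, epsilon):
    """Speed of the wave solutions of Maxwell's equations: 1 / sqrt(mu * epsilon)."""
    return 1.0 / math.sqrt(mu * epsilon)

c = wave_speed(MU_0, EPSILON_0)
print(f"1/sqrt(mu0 * eps0) = {c:,.0f} m/s")
```

Two constants measured with coils and capacitors on a lab bench yield the speed of light, which is a strong hint that light and electromagnetism are one and the same.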

To assist you in understanding this wave, look at just one component of it, the oscillating electric field, or the part that is going up and down. For those not familiar with the idea of an electric (or magnetic) field, simply take a bar magnet, set it on a piece of paper, and sprinkle iron filings around it. You will discover, to your pleasure I’m certain, that the filings quickly align themselves according to the following pattern:

Go back to the previous figure, of the electromagnetic wave. The wave is a combination of oscillating electric and magnetic fields, at right angles (90°) to each other, propagating through space. Now, imagine this wave passing through a wire made of copper or any other metal. Hopefully you can perceive by now that, if the wave is within a certain frequency range, it will set the electrons in the wire moving back and forth in step with the changing electric and magnetic fields, much as the iron filings responded to the field of the bar magnet. Not only would they do that, but the resulting electron motions could be picked up by the right kinds of electronic gizmos, transistors and capacitors and resistors and the like – here, we have just explained the basic working principle of radio transmission and reception, assuming the wire is the antenna. Not bad for a few paragraphs of reading.

This all sounds very nice and neat, yet it is but our first foot in the door of what leads to modern quantum theory. The reason is that this pat, pretty picture of light as a wave just didn’t jibe with some other phenomena scientists were trying to explain at the end of the nineteenth century and the beginning of the twentieth. The main such phenomena which quantum thinking solved were the puzzles of the so-called “blackbody” radiation spectrum and the photoelectric effect.

Blackbody Radiation and the Photoelectric Effect

If you take an object, say the tungsten filament of the familiar incandescent light bulb, and start pumping energy into it, not only will its temperature rise but at some point it will begin to emit visible light: first a dull red, then brighter red, then orange, then yellow – the filament eventually glows with a brilliant white light, meaning all of the colors of the visible spectrum are present in more or less equal amounts, illuminating the room in which we switched the light on. Even before it starts to visibly glow, the filament emits infrared radiation, which consists of wavelengths longer than visible red and is outside our range of vision. As the temperature climbs, it radiates in ever greater amounts and at ever shorter wavelengths, until the red region of the visible spectrum and beyond is finally reached. At not much higher temperatures the filament melts, or at least breaks at one of its ends (which is why it is made from tungsten, the metal with the highest melting point), breaking the electric current and forcing us to replace the bulb.

The filament is a blackbody in the sense that, to a first approximation, it completely absorbs all radiation poured onto it, and so its electromagnetic spectrum depends only on its temperature and not on any properties of its physical or chemical composition. Other objects that behave approximately as blackbodies include the sun and stars, and even our own bodies – if you could see into the right region of the infrared range of radiation, we would all be glowing. A set of five blackbody electromagnetic spectra is illustrated below:
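Blackbody curves like these follow Planck’s radiation law, the equation Planck arrived at using the quantum idea described later in this chapter. The sketch below uses the standard textbook wavelength form, B(λ, T) = (2hc²/λ⁵) / (e^(hc/λkT) − 1), and does a brute-force search for each curve’s peak. The function names and the search range are my own choices. It reproduces the filament’s behavior: the hotter the body, the more it emits at every wavelength and the further its peak shifts toward short wavelengths (Wien’s displacement law). A filament near 3000 K peaks around 970 nm, in the infrared, while the sun’s roughly 5800 K surface peaks near 500 nm.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s (exact in the SI)
C = 299_792_458.0    # speed of light, m/s (exact)
K_B = 1.380649e-23   # Boltzmann constant, J/K (exact)

def planck_radiance(wavelength_m, temp_k):
    """Spectral radiance of a blackbody at one wavelength, W / (m^2 sr m)."""
    x = H * C / (wavelength_m * K_B * temp_k)
    return (2 * H * C**2 / wavelength_m**5) / math.expm1(x)

def peak_wavelength(temp_k, lo=100e-9, hi=10_000e-9, steps=50_000):
    """Brute-force scan for the wavelength (m) where radiance is largest."""
    best_lam, best_b = lo, 0.0
    for i in range(steps + 1):
        lam = lo + (hi - lo) * i / steps
        b = planck_radiance(lam, temp_k)
        if b > best_b:
            best_lam, best_b = lam, b
    return best_lam

for t in (1000, 2000, 3000, 5800):
    print(f"{t} K: radiance peaks near {peak_wavelength(t) * 1e9:.0f} nm")
```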

Another, seemingly altogether different, phenomenon that could not be explained using classical physics principles was the so-called photoelectric effect. The general idea is simple enough: if you shine light of the right wavelength or shorter onto certain metals – the alkali metals, including sodium and potassium, show this effect the strongest – electrons will be ejected from the metal, and can then be easily detected:

The reason this is so difficult to explain with the physics of the 1800s is that physics then tied the energy carried by a wave to both its amplitude, which is the height of a crest above the wave’s midline (half the distance from crest to trough), and its wavelength (the shorter the wavelength, the more waves can strike within a given time). This is something you can easily appreciate by walking into the ocean until the water is up to your chest; the higher the waves are and the faster they hit you, the harder it is to stay on your feet.

Why don’t the electrons in the potassium plate above react in the same way? If light behaved as a classical wave, it should be not only the wavelength but also the intensity or brightness (assuming this is the equivalent of amplitude) that determines how many electrons are ejected and with what velocity. But this is not what we see: no matter how much red light, of whatever intensity, we shine on the plate, no electrons are emitted at all, while for green and purple light only shortening the wavelength, in and of itself, increases the energy of the ejected electrons, once again regardless of intensity. In fact, increasing the intensity only increases the number of escaping electrons, assuming any escape at all, not their velocity. All in all, a very strange situation, which, as I said, had physicists scratching their heads at the end of the 1800s.

The answers to these puzzles, and several others, come back to the point I made earlier about nature not being scale invariant. These conundrums were simply unsolvable until scientists began to think of things like atoms and electrons and light waves as being quite unlike anything they were used to on the larger scale of human beings and the world as we perceive it. Using such an approach, the two men who cracked the blackbody spectrum problem and the photoelectric effect, Max Planck and Albert Einstein, did so by abandoning the classical assumption that energy can be emitted or absorbed in any amount whatever, and treating it instead as coming in discrete packets, or quanta. Einstein went further and, much as Newton had insisted two hundred years earlier, thought of light itself as made of particles, particles which came to be called photons. But he did not think of the photon as a classical particle either, but as a particle with a wavelength; furthermore, the energy E of this particle was described, or quantized, by the equation

$$E = h\nu = \frac{hc}{\lambda}$$

where h is Planck’s constant, ν is the light’s frequency, λ is its wavelength, and c is the speed of light.

If you are starting to feel a little dizzy at this point in the story, don’t worry; you are in good company. A particle with a wavelength? Or, conversely, a wave that acts like a particle, even if only under certain circumstances? A wavicle? Trying to wrap your mind around such a concept is like awakening from a strange dream in which bizarre things, only vaguely remembered, happened. And the only justification of this dream world is that it made sense of what was being seen in the laboratories of those who studied these phenomena. Max Planck, for example, was able, using this definition, to develop an equation which correctly predicted the shapes of blackbody spectra at all temperatures. And Einstein elegantly showed how it solved the mystery of the photoelectric effect: it took a certain minimum energy to free an electron from the metal, and a photon could supply that energy only if its wavelength was short enough; the velocity, or kinetic energy, of the emitted electron came solely from whatever energy the photon had left over after the ejection. The number of electrons freed this way was simply proportional to the number of photons that showered down on the metal, that is, to the light’s intensity. It all fit perfectly. The world of the quantum had made its first secure footprints in the field of physics.
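Einstein’s bookkeeping can be sketched in a few lines. The work function of 2.3 eV (roughly potassium’s), the fixed fraction of photons that succeed in freeing an electron, and the function names are all illustrative assumptions; the point is only that brightness changes how many electrons come out, while wavelength alone decides whether any come out at all and how energetic the fastest ones are.

```python
HC_EV_NM = 1239.84198  # Planck's constant times the speed of light, in eV*nm

def photon_energy_ev(wavelength_nm):
    """Energy of one photon, E = h*c / lambda, in electron-volts."""
    return HC_EV_NM / wavelength_nm

def photoelectrons(wavelength_nm, photons_per_second,
                   work_function_ev=2.3, yield_per_photon=1e-3):
    """Return (electrons ejected per second, kinetic energy of the fastest
    electrons in eV). Below threshold the result is (0.0, None)."""
    leftover = photon_energy_ev(wavelength_nm) - work_function_ev
    if leftover <= 0:
        return 0.0, None  # no single photon can free an electron, however bright the light
    # Brightness (photon count) sets how many electrons escape;
    # each photon's leftover energy sets how fast they leave.
    return photons_per_second * yield_per_photon, leftover

for name, wavelength in (("red", 700), ("green", 530), ("violet", 400)):
    for flux in (1e15, 1e17):  # dim vs. bright light, photons per second
        rate, ke = photoelectrons(wavelength, flux)
        print(f"{name:6s} flux={flux:.0e}: {rate:.1e} electrons/s, max KE = {ke}")
```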

There was much, much more to come.

The Quantum and the Atom

Another phenomenon that scientists couldn’t explain until the concept of the quantum came along around 1900-1905 was the atom itself. Part of the reason for this is that, as I have said, atoms were not widely accepted as real, physical entities until electrons and radioactivity were discovered by people like J. J. Thomson and the Curies, Rutherford performed his experiments with alpha particles, and Einstein did his work on Brownian motion and the photoelectric effect (the results of which he published in 1905, the same year he published his papers on special relativity and the $E = mc^2$ equivalence of mass and energy, all at the tender age of twenty-six!). Another part is that, even if atoms were accepted, the physics of the 1800s simply could not explain how they could be stable entities.

The problem of atomic structure became apparent in 1911, when Rutherford published his “solar system” model, in which a tiny, positively charged nucleus (again, neutrons were not discovered until 1932, so at the time physicists only knew about the atomic masses of elements) was surrounded by orbiting electrons, in much the same way as the planets orbit the sun. The snag with this rather intuitive model involved – here we go again with both not trusting intuition and nature not being scale invariant – something physicists had known for some time about charged particles.

When a charged particle changes direction, it emits electromagnetic radiation and thereby loses energy. Orbiting electrons are constantly changing direction and so, theoretically, should lose their energy and fall into the nucleus in a tiny fraction of a second (something similar is true, through gravitational rather than electromagnetic radiation, of planets orbiting a sun, but there it would take vastly longer than the present age of the universe). It appeared that the Rutherford model, although still commonly invoked today, suffered from a lethal flaw.

And yet this model was compelling enough that it seemed there ought to be some means of rescuing it from its fate. That means was published two years later, in 1913, by Niels Bohr, possibly the most influential physicist of the twentieth century after Einstein. Bohr’s insight was to take Planck’s and Einstein’s idea of the quantization of light and apply it to the electrons’ orbits. It was a magnificent synthesis of scientific thinking; I cannot resist inserting here Jacob Bronowski’s description of Bohr’s idea, from his book *The Ascent of Man*:

> Now in a sense, of course, Bohr’s task was easy. He had the Rutherford atom in one hand, he had the quantum in the other. What was there so wonderful about a young man of twenty-seven in 1913 putting the two together and making the modern image of the atom? Nothing but the wonderful, visible thought-process: nothing but the effort of synthesis. And the idea of seeking support for it in the one place where it could be found: the fingerprint of the atom, namely the spectrum in which its behavior becomes visible to us, looking at it from outside.

Reading this reminds me of another feature of atoms I have yet to mention. Just as blackbodies emit a spectrum of radiation, one based purely on their temperature, so did the different atoms have their own spectra. But the latter had the twist that, instead of being continuous, they consisted of a series of sharp lines whose positions did not depend on temperature, and they were usually excited by electric discharges through a sample of the atoms. The best known of these spectra, and the one shown below, is that of atomic hydrogen (atomic because hydrogen usually exists as diatomic molecules, H₂, but the electric discharge also dissociates the molecules into separate atoms):

Bohr’s dual challenge was to explain both why the atom, in this case hydrogen, the simplest of atoms, didn’t wind down like a spinning top as classical physics predicted, and why its spectrum consisted of these sharp lines instead of being continuous as the energy is lost. As I said, he accomplished both tasks by invoking quantum ideas. His reasoning was more or less this: the planets in their paths around the sun can potentially occupy any orbit, in the same continuous fashion we have learned to expect from the world at large. As we might by now begin to suspect, however, this is not true for the electrons “orbiting” (I put this in quotes because we shall see that this is not actually the case) the nucleus. Indeed, this is the key concept which solves the puzzle of atomic structure, and which allowed scientists and other people to finally breathe freely in accepting the reality of atoms.

Bohr kept the basic solar system model, but modified it by saying that there was not a continuous series of orbits the electrons could occupy but instead a set of discrete ones, in between which lay a kind of no man’s land that electrons could never enter. Without going into details, you can see how, at one stroke, this solved the riddle of the line spectra of atoms: each spectral line represented the transition of an electron from a higher orbit (more energy) to a lower one (less energy). For example, the 656 nm red line in the Balmer spectrum of hydrogen is caused by an electron dropping from orbit level three to orbit level two:
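The arithmetic behind that 656 nm figure can be checked with Bohr’s energy levels, Eₙ = −13.6 eV / n², and the photon relation E = hc/λ. This is a sketch under simplifying assumptions: it ignores the small correction for the nucleus’s recoil and the slight bending of light by air, so it agrees with measured lines only to within a fraction of a nanometre. The function names are my own.

```python
HC_EV_NM = 1239.84198   # Planck's constant times the speed of light, in eV*nm
RYDBERG_EV = 13.605693  # hydrogen binding energy in eV (infinitely heavy nucleus assumed)

def bohr_energy_ev(n):
    """Energy of Bohr level n (n = 1, 2, 3, ...) in eV; negative means bound."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_EV / n**2

def emission_wavelength_nm(n_upper, n_lower):
    """Wavelength of the photon emitted when the electron drops from
    level n_upper to level n_lower; the photon carries off the energy difference."""
    delta_e = bohr_energy_ev(n_upper) - bohr_energy_ev(n_lower)
    return HC_EV_NM / delta_e

# The visible Balmer series: drops from levels 3, 4, 5, 6 down to level 2
for n in range(3, 7):
    print(f"{n} -> 2: {emission_wavelength_nm(n, 2):.1f} nm")
```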

This is fundamentally the way science works. Inexplicable features of reality are explained, step by step, sweat drop by tear drop and blood drop by blood drop, through the application of known physical laws; or, when needed, new laws and new ideas are summoned forth to explain them. Corks are popped, the bubbly flows, and awards are apportioned among the minds that made the breakthroughs. But then, as always, when the party is over and the guests start working off their hangovers, we realize that although, yes, progress has been made, there is still more territory to cover. Ironically, sometimes the new territory is a direct consequence of the conquests themselves.

Bohr’s triumph over atomic structure is perhaps the best known entrée in this genre of the story of scientific progress. Two problems in particular, one empirical and one theoretical, arose from it, problems which sobered up the scientific community. The empirical problem was that Bohr’s atomic model, while it explained the behavior of atomic hydrogen beautifully, could not be successfully applied to any other atom or molecule, not even seemingly simple helium, which comes just after hydrogen in the periodic table, or molecular hydrogen (H₂). The theoretical problem was that the quantization of orbits was imposed on a purely ad hoc basis, without any meaningful physical insight as to why it should be true.

And so the great minds returned to their offices and chalkboards, determined to answer these new questions.

Key Ideas in the Development of Quantum Mechanics

The key idea which came out of trying to solve these problems was that, if light, which had been thought of as a wave, could also possess particle properties, then perhaps the reverse was also true: that which had been thought of as having a particle nature, such as the electron, could also have the characteristics of waves. Louis de Broglie, in his 1924 model of the hydrogen atom, introduced this idea, which came to be called wave-particle duality, and explained Bohr’s discrete orbits by recasting them as distances from the nucleus at which a standing electron wave could exist, that is, where a whole number of electron wavelengths fits exactly around the orbit, as the mathematics of waves demands:
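De Broglie’s whole-number condition can be checked numerically. This sketch assumes the standard Bohr-model results for orbit n, radius rₙ = n²a₀ and speed vₙ = αc/n (where a₀ is the Bohr radius and α the fine-structure constant; these formulas are taken as given, not derived in the chapter), computes the electron’s de Broglie wavelength λ = h/(mv), and counts how many wavelengths fit around each orbit.

```python
import math

H = 6.62607015e-34       # Planck constant, J*s
M_E = 9.1093837015e-31   # electron mass, kg
A0 = 5.29177210903e-11   # Bohr radius, m
ALPHA = 7.2973525693e-3  # fine-structure constant (dimensionless)
C = 299_792_458.0        # speed of light, m/s

def bohr_orbit(n):
    """Radius (m) and speed (m/s) of Bohr orbit n, from the Bohr model."""
    return n**2 * A0, ALPHA * C / n

def waves_per_orbit(n):
    """Circumference of orbit n divided by the electron's de Broglie
    wavelength, lambda = h / (m * v)."""
    radius, speed = bohr_orbit(n)
    wavelength = H / (M_E * speed)
    return 2 * math.pi * radius / wavelength

for n in range(1, 5):
    print(f"orbit {n}: {waves_per_orbit(n):.6f} wavelengths around the circumference")
```

Each of Bohr’s allowed orbits turns out to hold exactly n wavelengths, so an orbit in between would force the wave to overlap itself out of step and cancel.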

That glue was first provided by people like Werner Heisenberg and Max Born, who, only a few years after de Broglie’s publication, brought about a revelation, or perhaps I should say revolution, in the form of one of scientific – no, philosophic – history’s most astonishing ideas. In 1925 Heisenberg, working with Born, introduced the technique of matrix mechanics, one of the modern ways of formulating quantum mechanical systems. Crucial to the technique was the concept that at the smallest levels of nature, such as with electrons in an atom, neither the positions nor the motions of particles could be defined exactly. Rather, these properties were “smeared out” in a way that left the particles with a defined uncertainty. This led, within two years, to Heisenberg’s famous Uncertainty Principle, which declared that certain pairs of properties of a particle in any system could not be simultaneously known with perfect precision, but only within a region of uncertainty. One formulation of this principle is, as I have used before:

$$\Delta x \times \Delta s \geq \frac{h}{4\pi m}$$

which states that the product of the uncertainty in a particle’s position (Δx) and the uncertainty in its speed (Δs) is always greater than or equal to Planck’s constant (h) divided by 4π times the particle’s mass (m). Now, there is something I must say up front. It is critical to understand that this uncertainty is not due to deficiencies in our measuring instruments, but is built directly into nature, at a fundamental level. When I say fundamental I mean just that. One could say that, if God or Mother Nature really exists, even He Himself (or Herself, or Itself) does not and cannot know these properties with zero uncertainty. They simply do not have a certainty to reveal to any observer, not even to a supernatural one, should such an observer exist.

Yes, this is what I am saying. Yes, nature is this strange.

Another, more precise way of putting this idea is that you can specify the exact position of an object at a certain time, but then you can say nothing about its speed (or direction of motion); or the reverse, that speed / direction can be perfectly specified but then the position is a complete unknown. A critical point here is that the reason we do not notice this bizarre behavior in our ordinary lives – and so never suspected it until the 20th century – is that the smallest possible value of the product of these two uncertainties is inversely proportional to the object’s mass (that is, proportional to 1/m) as well as directly proportional to Planck’s constant h, which is tiny. The result is that large objects, even ones as small as grains of sand, are simply much too massive for this infinitesimally small uncertainty product to be measurable by any known or even imaginable technique.
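To see how lopsided the comparison is, the sketch below evaluates the smallest speed uncertainty allowed by the standard form of the principle, Δx × Δs ≥ h/(4πm), for an electron confined to roughly the width of an atom and for a hypothetical 1 milligram sand grain whose position is known to within a micrometre. The masses and position uncertainties are illustrative assumptions.

```python
import math

H = 6.62607015e-34            # Planck constant, J*s
M_ELECTRON = 9.1093837015e-31 # electron mass, kg

def min_speed_uncertainty(mass_kg, position_uncertainty_m):
    """Smallest speed uncertainty (m/s) allowed by dx * ds >= h / (4 pi m)."""
    return H / (4 * math.pi * mass_kg * position_uncertainty_m)

# An electron pinned down to about the size of an atom (~1e-10 m)
electron = min_speed_uncertainty(M_ELECTRON, 1e-10)
# A hypothetical 1 mg (1e-6 kg) sand grain located to within 1 micrometre
sand = min_speed_uncertainty(1e-6, 1e-6)
print(f"electron: {electron:.2e} m/s    sand grain: {sand:.2e} m/s")
```

For the electron the unavoidable fuzziness in speed is hundreds of kilometres per second; for the sand grain it is so small that no instrument could ever register it.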

Whew, I know. And just what does all this talk about uncertainty have to do with waves? Mainly it is that trigonometric wave functions, like sine and cosine, are closely related to probability functions, such as the well-known Gaussian, or bell-shaped, curve. Let’s start with the latter. This function starts off near (but never at) zero at very large negative x, rises to a maximum y = f(x) value at a certain point, say x = 0, and then, as though reflected in a mirror, trails off again at large positive x. A simple example should help make it clear. Take a large group of people. It could be the planet’s entire human population, though in practice that would make this exercise difficult. Record the heights of all these people, rounding the numbers off to a convenient unit, say centimeters (cm). Now divide these people into sub-groups, each sub-group consisting of all individuals of a certain height in cm. If you make a plot of the number of people within each sub-group, the y value, versus the height of that sub-group, the x value, you will get a graph looking rather (but not exactly) like this:
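The height-counting exercise can be simulated. In this sketch the heights are drawn from a normal (Gaussian) distribution with a hypothetical mean of 170 cm and spread of 10 cm; real populations are not exactly Gaussian (which is why the real graph only “rather” looks like a bell), so treat the numbers as illustrative. Each person’s height is rounded to the nearest centimetre and the people in each 1 cm sub-group are counted, then printed as a crude sideways bar chart.

```python
import random
from collections import Counter

def height_histogram(n_people=100_000, mean_cm=170.0, sd_cm=10.0, seed=1):
    """Simulate heights (an assumed normal distribution), round each to the
    nearest whole cm, and count how many people fall in each 1 cm sub-group."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    heights = (round(rng.gauss(mean_cm, sd_cm)) for _ in range(n_people))
    return Counter(heights)

counts = height_histogram()
for height in range(140, 201, 5):
    bar = "#" * (counts[height] // 100)  # one '#' per 100 people
    print(f"{height} cm | {bar}")
```

The bars rise to a peak near the middle and fall away symmetrically on both sides, the bell shape described above.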

What about those trigonometric functions? As another example, a sine function, which is the typical shape of a wave, looks like this:

It is possible to set up Schrödinger’s equation for any physical system, including any atom. Alas, for all atoms except hydrogen, the equation cannot be solved exactly, because of a stone wall in mathematical physics known as the three-body problem: any system with more than two interacting components, say the two electrons plus the nucleus of helium, simply cannot be solved in closed form. Fortunately, for hydrogen, where there is only a single proton and a single electron, the proper form of the equation can be devised and then solved, albeit with some horrendous-looking mathematics, to yield a set of ψ, or wave functions. The squared magnitudes of these functions, as described above (of these solutions, I should say, as there are an infinite number of them), describe the probability distributions and other properties of the hydrogen atom’s electron.

The nut had at last been (almost) cracked.
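To make “squared wave functions describe probability distributions” concrete, here is a sketch for the simplest solution, hydrogen’s ground (1s) state. It assumes the standard textbook result that the probability per unit distance of finding that electron a distance r from the nucleus is P(r) = (4r²/a₀³)·e^(−2r/a₀), where a₀ is the Bohr radius. It integrates P(r) numerically and scans for its maximum: the probabilities add up to one, and the single most likely distance is a₀, Bohr’s old n = 1 orbit, even though the electron can turn up nearer or farther.

```python
import math

A0 = 5.29177210903e-11  # Bohr radius in metres (CODATA 2018)

def radial_probability_1s(r):
    """Probability per unit radius of finding hydrogen's 1s electron at
    distance r from the nucleus: 4 r^2 / a0^3 * exp(-2 r / a0)."""
    return 4.0 * r**2 / A0**3 * math.exp(-2.0 * r / A0)

def scan_1s(r_max=20 * A0, steps=200_000):
    """Midpoint-rule integral of P(r) from 0 to r_max, plus the sampled r
    at which P(r) is largest."""
    dr = r_max / steps
    total, best_r, best_p = 0.0, 0.0, 0.0
    for i in range(steps):
        r = (i + 0.5) * dr  # midpoint of the i-th thin shell
        p = radial_probability_1s(r)
        total += p * dr
        if p > best_p:
            best_r, best_p = r, p
    return total, best_r

total, most_likely_r = scan_1s()
print(f"total probability    = {total:.6f}")
print(f"most likely distance = {most_likely_r / A0:.4f} Bohr radii")
```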

Solving Other Atoms

So all of this brilliance and sweat and blood, from Planck to Born, came down to this bottom line: find the set of wave functions, or ψs, that solve the Schrödinger equation for hydrogen and you have solved the riddle of how electrons behave in atoms.

Scientists, thanks to Robert Mulliken in 1932, even went so far as to propose a name for the squared functions, or probability distribution functions: the atomic orbital, a term I dislike because it still evokes the image of electrons orbiting the nucleus.

Despite what I just said, we haven’t actually solved the riddle completely. As I mentioned, the Schrödinger equation cannot be solved exactly for any atom other than hydrogen. But nature can be kind as well as capricious, and sometimes allows us to find side-door entrances into her secret realms. In the case of orbitals, it turns out that their basic pattern holds for almost all atoms, with a little tweaking here and some further (often computer-intensive) calculations there. For our purposes, it is the basic pattern that matters in cooking up atoms.

Orbitals.

Despite the name, again, the electrons do not circle the nucleus (although most of them do have what is called angular momentum, which is, loosely speaking, the physicists’ term for the momentum of something moving around a center). I’ve thought and thought about this, and decided that the only way to begin describing them is to present the general solution (a wave function, remember) to the Schrödinger equation for the hydrogen atom in all its brain-overloading detail:
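Written in one common textbook convention (spherical coordinates r, θ, φ centered on the nucleus; a₀ the Bohr radius; L the generalized Laguerre polynomials; Y the spherical harmonics; some books normalize the Laguerre polynomials differently, which changes the prefactor), that solution reads:

```latex
\psi_{n\ell m}(r,\theta,\varphi) =
  \sqrt{\left(\frac{2}{n a_0}\right)^{3} \frac{(n-\ell-1)!}{2n\,(n+\ell)!}}\;
  e^{-r/(n a_0)}
  \left(\frac{2r}{n a_0}\right)^{\ell}
  L_{n-\ell-1}^{(2\ell+1)}\!\left(\frac{2r}{n a_0}\right)
  Y_{\ell}^{m}(\theta,\varphi)
```

For n = 1, ℓ = 0, m = 0 this collapses to the simple ground-state function ψ = e^(−r/a₀)/√(πa₀³).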

The importance of n, ℓ, and m lies in the fact that they are not free to take on just any values, and that the values they can have are interrelated. Collectively, they are called quantum numbers, and since n is dubbed the principal quantum number, we will start with it. It is also the easiest to understand: its possible values are all the positive integers (whole numbers), from one on up. Historically, it roughly corresponds to the orbit numbers in Bohr’s 1913 model of the hydrogen atom, in which the electron orbits the nucleus. Note that one is its lowest possible value; it cannot be zero, meaning that the electron cannot collapse into the nucleus. Also sprach Zarathustra!

The next entry in the quantum number menagerie is ℓ, the angular momentum quantum number. Like n, it is restricted to integer values, but with the additional caveat that for a given n it can only take values from zero to n − 1. So, for example, if n is one, then ℓ can only equal zero, while if n is two, then ℓ can be either zero or one, and so on. Another way of thinking about ℓ is that it describes the kind of orbital we are dealing with: a value of zero refers to what is called an s orbital, while a value of one means a so-called p orbital.
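The rule for ℓ, and the letter that goes with each value, fits in a few lines of Python. This is only a sketch: `allowed_l` and `subshell_labels` are names invented for illustration, and the letters beyond s and p (d, f, g, h, i) are the conventional ones, which the chapter reaches only later.

```python
# Conventional orbital letters for l = 0, 1, 2, ... (enough for n up to 7).
LETTERS = "spdfghi"

def allowed_l(n):
    """Angular momentum quantum numbers allowed for principal quantum number n."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return list(range(n))  # 0, 1, ..., n - 1

def subshell_labels(n):
    """Label each allowed l with its orbital letter, e.g. n = 2 -> ['2s', '2p']."""
    return [f"{n}{LETTERS[l]}" for l in allowed_l(n)]

for n in range(1, 4):
    print(n, allowed_l(n), subshell_labels(n))
```

For n = 3 the loop prints [0, 1, 2] and ['3s', '3p', '3d'].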

What about m, the magnetic quantum number? It can take any integer value from −ℓ to +ℓ, and the number of values it can take gives the number of orbitals of a given type, as designated by ℓ. Again, for an n of one, ℓ has just the one value of zero; furthermore, for ℓ equal to zero, m can only be zero (so there is only one s orbital), while for ℓ equal to one, m can be one of three integers: minus one, zero, and one (so there are three p orbitals). Seems complicated? Play around with this system for a while and you will get the hang of it. See? College chemistry isn’t so bad after all.
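In the spirit of playing around with the system, here is a small Python sketch (the function names are hypothetical) that lists the m values allowed for a given ℓ and then every allowed (n, ℓ, m) combination for a level; each combination corresponds to one orbital.

```python
def allowed_m(l):
    """Magnetic quantum numbers allowed for a given l: -l, ..., 0, ..., +l."""
    return list(range(-l, l + 1))

def quantum_number_triples(n):
    """Every allowed (n, l, m) combination, i.e. every orbital, at level n."""
    return [(n, l, m) for l in range(n) for m in allowed_m(l)]

print(allowed_m(0))  # [0]         -> one s orbital
print(allowed_m(1))  # [-1, 0, 1]  -> three p orbitals
for triple in quantum_number_triples(2):
    print(triple)    # (2, 0, 0), then (2, 1, -1), (2, 1, 0), (2, 1, 1)
```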

* * *

Let’s summarize before moving on. I have mentioned two kinds of orbitals, or electron probability distribution functions, so far: s and p. When ℓ equals zero we are dealing with an s orbital, while for ℓ equal to one the orbital is of type p. Furthermore, when ℓ equals one, m can be minus one, zero, or one, meaning that each level (as determined by n) has only one s orbital and, from n equals two upward, always exactly three p orbitals.
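Counting the allowed m values gives a compact rule implied by this summary: an orbital type ℓ contributes 2ℓ + 1 orbitals, so a whole level n holds n² orbitals (1 for n = 1, 4 for n = 2, 9 for n = 3). The symbols N_ℓ and N_n below are introduced only for this count.

```latex
N_\ell = 2\ell + 1 \quad (s{:}\,1,\; p{:}\,3,\; d{:}\,5,\; f{:}\,7),
\qquad
N_n = \sum_{\ell=0}^{n-1} (2\ell + 1) = 2\cdot\frac{(n-1)\,n}{2} + n = n^{2}
```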

What about when n equals three? Following our scheme, for this value of n there are three orbital types, as ℓ can be zero, one, or two. The orbital designation when ℓ equals two is d; and as m can now vary from minus two to plus two (−2, −1, 0, 1, 2), there are five of these d-type orbitals. I could press onward to ever-increasing values of n and their orbital types (f, g, etc.), but once again nature is cooperative: the known elements never occupy orbitals beyond f, at least in their lowest-energy (ground) states, even though n reaches seven in the most massive atoms, as we shall see.