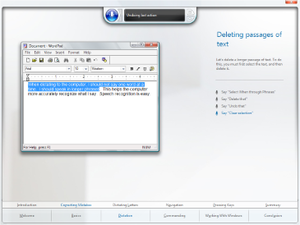

The tutorial for Windows Speech Recognition in Windows Vista depicting the selection of text in WordPad for deletion.

| Developer(s) | Microsoft |
|---|---|
| Initial release | January 30, 2007 |
| Operating system | Windows Vista and later |
| Type | Speech recognition |

Windows Speech Recognition (WSR) is a speech recognition component developed by Microsoft for Windows Vista that lets users control the desktop user interface by voice; dictate text in electronic documents and email; navigate websites; perform keyboard shortcuts; and operate the mouse cursor. It supports custom macros to perform additional or supplementary tasks.

WSR is a locally processed speech recognition platform; it does not rely on cloud computing for accuracy, dictation, or recognition, but adapts based on contexts, grammars, speech samples, training sessions, and vocabularies. It provides a personal dictionary that allows users to include or exclude words or expressions from dictation and to record pronunciations to increase recognition accuracy. Custom language models are also supported.

With Windows Vista, WSR was developed to be part of Windows, as speech recognition was previously exclusive to applications such as Windows Media Player. It is present in Windows 7, Windows 8, Windows 8.1, Windows RT, and Windows 10.

History

Microsoft was involved in speech recognition and speech synthesis research for many years before WSR. In 1993, Microsoft hired Xuedong Huang from Carnegie Mellon University to lead its speech development efforts; the company's research led to the development of the Speech API (SAPI) introduced in 1994. Speech recognition had also been used in previous Microsoft products. Office XP and Office 2003 provided speech recognition capabilities in Internet Explorer and Microsoft Office applications; they also enabled limited speech functionality in Windows 98, Windows ME, Windows NT 4.0, and Windows 2000. Windows XP Tablet PC Edition 2002 included speech recognition capabilities with the Tablet PC Input Panel, and Microsoft Plus! for Windows XP enabled voice commands for Windows Media Player.

However, these all required installation of speech recognition as a separate component; before Windows Vista, Windows did not include integrated or extensive speech recognition. Office 2007 and later versions rely on WSR for speech recognition services.

Windows Vista

At WinHEC 2002, Microsoft announced that Windows Vista (codenamed "Longhorn") would include advances in speech recognition and in features such as microphone array support as part of an effort to "provide a consistent quality audio infrastructure for natural (continuous) speech recognition and (discrete) command and control." Bill Gates stated during PDC 2003 that Microsoft would "build speech capabilities into the system — a big advance for that in 'Longhorn,' in both recognition and synthesis, real-time"; and pre-release builds during the development of Windows Vista included a speech engine with training features.

A PDC 2003 developer presentation stated Windows Vista would also include a user interface for microphone feedback and control, and user configuration and training features. Microsoft clarified the extent to which speech recognition would be integrated when it stated in a pre-release software development kit that "the common speech scenarios, like speech-enabling menus and buttons, will be enabled system-wide."

During WinHEC 2004, Microsoft included WSR as part of a strategy to improve productivity on mobile PCs. Microsoft later emphasized accessibility, new mobility scenarios, support for additional languages, and improvements to the speech user experience at WinHEC 2005. Unlike the speech support included in Windows XP, which was integrated with the Tablet PC Input Panel and required switching between separate Commanding and Dictation modes, Windows Vista would introduce a dedicated interface for speech input on the desktop and would unify the separate speech modes; users previously could not speak a command after dictating or vice versa without first switching between these two modes. Windows Vista Beta 1 included integrated speech recognition. To incentivize company employees to analyze WSR for software glitches and to provide feedback, Microsoft offered an opportunity for its testers to win a Premium model of the Xbox 360.

During a demonstration by Microsoft on July 27, 2006, before Windows Vista's release to manufacturing (RTM), a notable incident involving WSR occurred: several attempts to dictate led to consecutive output errors, producing the unintended output "Dear aunt, let's set so double the killer delete select all". The incident was a subject of significant derision among analysts and journalists in the audience, despite another demonstration for application management and navigation being successful. Microsoft revealed these issues were due to an audio gain glitch that caused the recognizer to distort commands and dictations; the glitch was fixed before Windows Vista's release.

Reports from early 2007 indicated that WSR is vulnerable to attackers using speech recognition for malicious operations by playing certain audio commands through a target's speakers; it was the first vulnerability discovered after Windows Vista's general availability. Microsoft stated that although such an attack is theoretically possible, a number of mitigating factors and prerequisites would limit its effectiveness or prevent it altogether: a target would need the recognizer to be active and configured to properly interpret such commands; microphones and speakers would both need to be enabled and at sufficient volume levels; and an attack would require the computer to perform visible operations and produce audible feedback without users noticing. User Account Control would also prohibit the occurrence of privileged operations.

Windows 7

The dictation scratchpad in Windows 7 replaces the "enable dictation everywhere" option of Windows Vista.

WSR was updated to use Microsoft UI Automation and its engine now uses the WASAPI audio stack, substantially enhancing its performance and enabling support for echo cancellation, respectively. The document harvester, which can analyze and collect text in email and documents to contextualize user terms, has improved performance and now runs periodically in the background instead of only after recognizer startup. Sleep mode has also seen performance improvements and, to address security issues, the recognizer is turned off by default after users speak "stop listening" instead of being suspended. Windows 7 also introduces an option to submit speech training data to Microsoft to improve future recognizer versions.

A new dictation scratchpad interface functions as a temporary document into which users can dictate or type text for insertion into applications that are not compatible with the Text Services Framework. Windows Vista previously provided an "enable dictation everywhere" option for such applications.

Windows 8.x and Windows RT

WSR can be used to control the Metro user interface in Windows 8, Windows 8.1, and Windows RT with commands to open the Charms bar ("Press Windows C"); to dictate or display commands in Metro-style apps ("Press Windows Z"); to perform tasks in apps (e.g., "Change to Celsius" in MSN Weather); and to display all installed apps listed by the Start screen ("Apps").

Windows 10

WSR is featured in the Settings application starting with the Windows 10 April 2018 Update (Version 1803); the change first appeared in Insider Preview Build 17083. The April 2018 Update also introduces a new ⊞ Win+Ctrl+S keyboard shortcut to activate WSR.

Overview and features

WSR allows a user to control applications and the Windows desktop user interface through voice commands. Users can dictate text within documents, email, and forms; control the operating system user interface; perform keyboard shortcuts; and move the mouse cursor. The majority of integrated applications in Windows Vista can be controlled; third-party applications must support the Text Services Framework for dictation. English (U.S.), English (U.K.), French, German, Japanese, Mandarin Chinese, and Spanish are supported languages.

When started for the first time, WSR presents a microphone setup wizard and an optional interactive step-by-step tutorial that users can commence to learn basic commands while adapting the recognizer to their specific voice characteristics; the tutorial is estimated to require approximately 10 minutes to complete.

The accuracy of the recognizer increases through regular use, which adapts it to contexts, grammars, patterns, and vocabularies. Custom language models for the specific contexts, phonetics, and terminologies of users in particular occupational fields such as legal or medical are also supported. With Windows Search, the recognizer can also optionally harvest text in documents and email, as well as handwritten tablet PC input, to contextualize and disambiguate terms to improve accuracy; no information is sent to Microsoft.

WSR is a locally processed speech recognition platform; it does not rely on cloud computing for accuracy, dictation, or recognition. Speech profiles that store information about users are retained locally. Backups and transfers of profiles can be performed via Windows Easy Transfer.

Interface

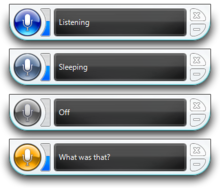

The speech recognizer displaying information based on different modes; the color of the recognizer button changes based on user interaction.

The WSR interface consists of a status area that displays instructions, information about commands (e.g., if a command is not heard by the recognizer), and the status of the recognizer; a voice meter displays visual feedback about volume levels. The status area represents the current state of WSR in a total of three modes, listed below with their respective meanings:

- Listening: The recognizer is active and waiting for user input

- Sleeping: The recognizer will not listen for or respond to commands other than "Start listening"

- Off: The recognizer will not listen or respond to any commands; this mode can be enabled by speaking "Stop listening"

Colors of the recognizer listening mode button denote its various modes of operation: blue when listening; blue-gray when sleeping; gray when turned off; and yellow when the user switches context (e.g., from the desktop to the taskbar) or when a voice command is misinterpreted. The status area can also display custom user information as part of Windows Speech Recognition Macros.
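The three modes and their button colors can be pictured as a small state machine. The following is only an illustrative sketch in Python, not WSR's actual implementation: the names `Mode`, `MODE_COLORS`, and `next_mode`, and the `stop_turns_off` flag, are hypothetical and chosen for this example. The flag reflects the fact that "stop listening" either suspends the recognizer or turns it off, depending on the Windows version and configuration. The transient yellow state is not modelled.

```python
from enum import Enum


class Mode(Enum):
    LISTENING = "listening"
    SLEEPING = "sleeping"
    OFF = "off"


# Button colors for the three steady modes, as described in the text.
# Yellow (context switch or misinterpreted command) is transient and omitted.
MODE_COLORS = {
    Mode.LISTENING: "blue",
    Mode.SLEEPING: "blue-gray",
    Mode.OFF: "gray",
}


def next_mode(mode: Mode, phrase: str, stop_turns_off: bool = True) -> Mode:
    """Return the mode after the recognizer hears `phrase`.

    `stop_turns_off` is a hypothetical switch: True means "stop listening"
    turns the recognizer off, False means it only goes to sleep.
    """
    spoken = phrase.strip().lower()
    if mode is Mode.OFF:
        # An off recognizer ignores all speech.
        return Mode.OFF
    if mode is Mode.SLEEPING:
        # A sleeping recognizer only reacts to "start listening".
        return Mode.LISTENING if spoken == "start listening" else Mode.SLEEPING
    # Listening: "stop listening" leaves this mode; anything else keeps it.
    if spoken == "stop listening":
        return Mode.OFF if stop_turns_off else Mode.SLEEPING
    return Mode.LISTENING
```

For example, `next_mode(Mode.SLEEPING, "Start listening")` yields `Mode.LISTENING`, while any other phrase leaves a sleeping recognizer asleep.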

The alternates panel displaying suggestions for a phrase.

Alternates panel

An alternates panel disambiguation interface lists items interpreted as being relevant to a user's spoken word(s); if the word or phrase that a user desired to insert into an application is listed among results, a user can speak the corresponding number of the word or phrase in the results and confirm this choice by speaking "OK" to insert it within the application.

The alternates panel also appears when launching applications or speaking commands that refer to more than one item (e.g., speaking "Start Internet Explorer" may list both the web browser and a separate version with add-ons disabled). An ExactMatchOverPartialMatch entry in the Windows Registry can limit commands to items with exact names if there is more than one instance included in results.

Common commands

Listed below are common WSR commands. Words in italics indicate a word that can be substituted for the desired item (e.g., "direction" in "scroll direction" can be substituted with the word "down"). A "start typing" command enables WSR to interpret all dictation commands as keyboard shortcuts.

- Dictation commands: "New line"; "New paragraph"; "Tab"; "Literal word"; "Numeral number"; "Go to word"; "Go after word"; "No space"; "Go to start of sentence"; "Go to end of sentence"; "Go to start of paragraph"; "Go to end of paragraph"; "Go to start of document"; "Go to end of document"; "Go to field name" (e.g., go to address, cc, or subject). Special characters such as a comma are dictated by speaking the name of the special character.

- Navigation commands:

- Keyboard shortcuts: "Press keyboard key"; "Press ⇧ Shift plus a"; "Press capital b."

- Keys that can be pressed without first giving the press command include: ← Backspace, Delete, End, ↵ Enter, Home, Page Down, Page Up, and Tab ↹.

- Mouse commands: "Click"; "Click that"; "Double-click"; "Double-click that"; "Mark"; "Mark that"; "Right-click"; "Right-click that"; "MouseGrid".

- Window management commands: "Close (alternatively maximize, minimize, or restore) window"; "Close that"; "Close name of open application"; "Switch applications"; "Switch to name of open application"; "Scroll direction"; "Scroll direction in number of pages"; "Show desktop"; "Show Numbers."

- Speech recognition commands: "Start listening"; "Stop listening"; "Show speech options"; "Open speech dictionary"; "Move speech recognition"; "Minimize speech recognition"; "Restore speech recognition". In the English language, applicable commands can be shown by speaking "What can I say?" Users can also query the recognizer about tasks in Windows by speaking "How do I task name" (e.g., "How do I install a printer?") which opens related help documentation.

The MouseGrid command displaying a grid of numbers on the Windows Vista desktop.

MouseGrid

MouseGrid enables users to control the mouse cursor by overlaying numbers across nine regions on the screen; these regions gradually narrow as a user speaks the number(s) of the region on which to focus until the desired interface element is reached. Users can then issue commands including "Click number of region," which moves the mouse cursor to the desired region and then clicks it; and "Mark number of region", which allows an item (such as a computer icon) in a region to be selected, which can then be clicked with the previous click command. Users also can interact with multiple regions at once.
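The narrowing behavior can be illustrated as repeated subdivision of a rectangle into a 3×3 grid. The Python sketch below is a minimal model under stated assumptions: regions are numbered 1 to 9 in row-major order (left to right, top to bottom), and the names `subregion`, `narrow`, and `center` are hypothetical. It does not reproduce how WSR draws or numbers its grid.

```python
from typing import Iterable, Tuple

# A rectangle as (left, top, width, height) in pixels.
Rect = Tuple[float, float, float, float]


def subregion(rect: Rect, number: int) -> Rect:
    """Return cell `number` (1-9, row-major) of a 3x3 grid laid over `rect`."""
    if not 1 <= number <= 9:
        raise ValueError("region number must be between 1 and 9")
    left, top, width, height = rect
    row, col = divmod(number - 1, 3)
    cell_w, cell_h = width / 3, height / 3
    return (left + col * cell_w, top + row * cell_h, cell_w, cell_h)


def narrow(screen: Rect, spoken_numbers: Iterable[int]) -> Rect:
    """Apply each spoken number in turn, shrinking the focused region."""
    rect = screen
    for number in spoken_numbers:
        rect = subregion(rect, number)
    return rect


def center(rect: Rect) -> Tuple[float, float]:
    """Point a click would target: the middle of the region."""
    left, top, width, height = rect
    return (left + width / 2, top + height / 2)
```

On a 900×900 area, speaking "5" and then "1" narrows the focus to the 100×100 cell at (300, 300); each further number divides the side lengths by three, so a few steps are enough to reach a small target.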

Show Numbers

Applications and interface elements that do not present identifiable commands can still be controlled by asking the system to overlay numbers on top of them through a Show Numbers command. Once active, speaking the overlaid number selects that item so a user can open it or perform other operations. Show Numbers was designed so that users could interact with items that are not readily identifiable.

The Show Numbers command overlaying numbers in the Games Explorer.

Dictation

WSR enables dictation of text in applications and Windows. If a dictation mistake occurs, it can be corrected by speaking "Correct word" or "Correct that"; the alternates panel then appears and provides suggestions for correction. A suggestion is selected by speaking its number and then "OK." If the desired item is not listed among suggestions, a user can speak it so that it might appear. Alternatively, users can speak "Spell it" or "I'll spell it myself" to speak the desired word on a letter-by-letter basis; users can use their personal alphabet or the NATO phonetic alphabet (e.g., "N as in November") when spelling.
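Letter-by-letter spelling with phrases such as "N as in November" can be sketched as a small parser. This Python example is illustrative only and makes assumptions: it accepts either a bare letter or "<letter> as in <word>", and it accepts any word that starts with the letter, to reflect that users may use a personal alphabet. The names `letter_from` and `spell` are hypothetical.

```python
import re
from typing import Iterable

# Matches "n" or "N as in November" (case-insensitive).
_SPELL_PATTERN = re.compile(r"^([a-z])(?:\s+as\s+in\s+([a-z]+))?$", re.IGNORECASE)


def letter_from(utterance: str) -> str:
    """Extract the single letter named by one spelling utterance."""
    match = _SPELL_PATTERN.match(utterance.strip())
    if match is None:
        raise ValueError(f"not a spelling utterance: {utterance!r}")
    letter = match.group(1).lower()
    word = match.group(2)
    # The clarifying word must begin with the letter it illustrates.
    if word is not None and not word.lower().startswith(letter):
        raise ValueError(f"{word!r} does not start with {letter!r}")
    return letter


def spell(utterances: Iterable[str]) -> str:
    """Join the letters named by a sequence of spelling utterances."""
    return "".join(letter_from(u) for u in utterances)
```

For instance, `spell(["N as in November", "o", "W as in whiskey"])` produces `"now"`, while "N as in Mike" is rejected because the word and letter disagree.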

Multiple words in a sentence can be corrected simultaneously (for example, if a user speaks "dictating" but the recognizer interprets this word as "the thing," a user can state "correct the thing" to correct both words at once). In the English language over 100,000 words are recognized by default.

Speech dictionary

A personal dictionary allows users to include or exclude certain words or expressions from dictation.

When a user adds a word beginning with a capital letter to the dictionary, a user can specify whether it should always be capitalized or if capitalization depends on the context in which the word is spoken. Users can also record pronunciations for words added to the dictionary to increase recognition accuracy; words written via a stylus on a tablet PC for the Windows handwriting recognition feature are also stored. Information stored within a dictionary is included as part of a user's speech profile. Users can open the speech dictionary by speaking the "show speech dictionary" command.

Macros

An Aero Wizard interface displaying options to create speech recognition macros.

WSR supports custom macros through a supplementary application by Microsoft that enables additional natural language commands.

As an example of this functionality, an email macro released by Microsoft enables a natural language command where a user can speak "send email to contact about subject," which opens Microsoft Outlook to compose a new message with the designated contact and subject automatically inserted. Microsoft has also released sample macros for the speech dictionary, for Windows Media Player, for Microsoft PowerPoint, for speech synthesis, to switch between multiple microphones, to customize various aspects of audio device configuration such as volume levels, and for general natural language queries such as "What is the weather forecast?", "What time is it?", and "What's the date?" Responses to these user inquiries are spoken back to the user in the active Microsoft text-to-speech voice installed on the machine.

| Application or item | Sample macro phrases (italics indicate substitutable words) | | | | | | |
|---|---|---|---|---|---|---|---|
| Microsoft Outlook | Send email | Send email to | Send email to Makoto | Send email to Makoto Yamagishi | Send email to Makoto Yamagishi about | Send email to Makoto Yamagishi about This week's meeting | Refresh Outlook email contacts |
| Microsoft PowerPoint | Next slide | Previous slide | Next | Previous | Go forward 5 slides | Go back 3 slides | Go to slide 8 |
| Windows Media Player | Next track | Previous song | Play Beethoven | Play something by Mozart | Play the CD that has In the Hall of the Mountain King | Play something written in 1930 | Pause music |
| Microphones in Windows | Microphone | Switch microphone | Microphone Array microphone | Switch to Line | Switch to Microphone Array | Switch to Line microphone | Switch to Microphone Array microphone |
| Volume levels in Windows | Mute the speakers | Unmute the speakers | Turn off the audio | Increase the volume | Increase the volume by 2 times | Decrease the volume by 50 | Set the volume to 66 |
| WSR Speech Dictionary | Export the speech dictionary | Add a pronunciation | Add that [selected text] to the speech dictionary | Block that [selected text] from the speech dictionary | Remove that [selected text] | [Selected text] sounds like... | What does that [selected text] sound like? |
| Speech Synthesis | Read that [selected text] | Read the next 3 paragraphs | Read the previous sentence | Please stop reading | What time is it? | What's today's date? | Tell me the weather forecast for Redmond |
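The way a phrase such as "send email to contact about subject" maps substitutable words onto slots can be illustrated with a pattern match. The sketch below is written in Python purely for illustration. It is not the macro tool's own format, and the function name `parse_send_email` and the slot names `contact` and `subject` are hypothetical.

```python
import re
from typing import Dict, Optional

# "send email to <contact> about <subject>"; the contact is matched lazily
# so it ends at the first " about ".
_SEND_EMAIL = re.compile(
    r"^send email to (?P<contact>.+?) about (?P<subject>.+)$",
    re.IGNORECASE,
)


def parse_send_email(utterance: str) -> Optional[Dict[str, str]]:
    """Return the contact and subject slots, or None if the phrase differs."""
    match = _SEND_EMAIL.match(utterance.strip())
    return match.groupdict() if match else None
```

Applied to the sample phrase "Send email to Makoto Yamagishi about This week's meeting", this returns the contact `"Makoto Yamagishi"` and the subject `"This week's meeting"`; an unrelated phrase such as "Next slide" returns `None`.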

Users and developers can create their own macros based on text transcription and substitution; application execution (with support for command-line arguments); keyboard shortcuts; emulation of existing voice commands; or a combination of these items. XML, JScript and VBScript are supported. Macros can be limited to specific applications and rules for macros can be defined programmatically.

For a macro to load, it must be stored in a Speech Macros folder within the active user's Documents directory. All macros are digitally signed by default if a user certificate is available to ensure that stored commands are not altered or loaded by third parties; if a certificate is not available, an administrator can create one.

Configurable security levels can prohibit unsigned macros from being loaded, prompt users to sign macros after creation, or allow unsigned macros to load.

Performance

As of 2017, WSR uses Microsoft Speech Recognizer 8.0, the version introduced in Windows Vista. Mark Hachman, a Senior Editor of PC World, found it to be 93.6% accurate for dictation without training, a rate lower than that of competing software. According to Microsoft, the rate of accuracy when trained is 99%. Hachman opined that Microsoft does not publicly discuss the feature because of the 2006 incident during the development of Windows Vista, with the result being that few users knew that documents could be dictated within Windows before the introduction of Cortana.