The concept of entropy developed in response to the observation that a certain amount of functional energy released from combustion reactions is always lost to dissipation or friction and is thus not transformed into useful work. Early heat-powered engines such as Thomas Savery's (1698), the Newcomen engine (1712) and the Cugnot steam tricycle (1769) were inefficient, converting less than two percent of the input energy into useful work output; a great deal of useful energy was dissipated or lost. Over the next two centuries, physicists investigated this puzzle of lost energy; the result was the concept of entropy.

In the early 1850s, Rudolf Clausius set forth the concept of the thermodynamic system and posited the argument that in any irreversible process a small amount of heat energy δQ is incrementally dissipated across the system boundary. Clausius continued to develop his ideas of lost energy, and coined the term entropy.

Since the mid-20th century the concept of entropy has found application in the field of information theory, describing an analogous loss of data in information transmission systems.

In 2019, the notion was applied as 'relative beam entropy' to give a single-parameter characterization of the beamspace randomness of 5G/6G sparse MIMO channels, e.g., 3GPP 5G cellular channels, in millimeter-wave and terahertz bands.

Classical thermodynamic views

In 1803, mathematician Lazare Carnot published a work entitled Fundamental Principles of Equilibrium and Movement. This work includes a discussion on the efficiency of fundamental machines, i.e. pulleys and inclined planes. Carnot saw through all the details of the mechanisms to develop a general discussion on the conservation of mechanical energy. Over the next three decades, Carnot's theorem was taken as a statement that in any machine the accelerations and shocks of the moving parts all represent losses of moment of activity, i.e. the useful work done. From this Carnot drew the inference that perpetual motion was impossible. This loss of moment of activity was the first-ever rudimentary statement of the second law of thermodynamics and the concept of 'transformation-energy' or entropy, i.e. energy lost to dissipation and friction.

Carnot died in exile in 1823. During the following year his son Sadi Carnot, having graduated from the École Polytechnique training school for engineers, but now living on half-pay with his brother Hippolyte in a small apartment in Paris, wrote Reflections on the Motive Power of Fire. In this book, Sadi visualized an ideal engine in which any heat (i.e., caloric) converted into work could be reinstated by reversing the motion of the cycle, a concept subsequently known as thermodynamic reversibility. Building on his father's work, Sadi postulated the concept that "some caloric is always lost" in the conversion into work, even in his idealized reversible heat engine, which excluded frictional losses and other losses due to the imperfections of any real machine. He also discovered that this idealized efficiency was dependent only on the temperatures of the heat reservoirs between which the engine was working, and not on the types of working fluids. No real heat engine could realize the Carnot cycle's reversibility, and each was condemned to be even less efficient. This loss of usable caloric was a precursory form of the increase in entropy as we now know it. Though formulated in terms of caloric, rather than entropy, this was an early insight into the second law of thermodynamics.
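The dependence of the ideal efficiency on the reservoir temperatures alone can be illustrated with a minimal sketch. It uses the modern expression 1 − T_cold/T_hot with absolute temperatures, which is an assumption of this example rather than Carnot's own caloric formulation; the function name and the sample temperatures are hypothetical.

```python
def carnot_efficiency(t_hot_kelvin: float, t_cold_kelvin: float) -> float:
    """Ideal (reversible) efficiency of an engine working between two reservoirs.

    Uses the modern form 1 - T_cold / T_hot; the working fluid does not appear.
    """
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("require 0 < t_cold_kelvin < t_hot_kelvin")
    return 1.0 - t_cold_kelvin / t_hot_kelvin


# Illustrative temperatures (assumed): boiling water against room temperature.
print(carnot_efficiency(373.15, 293.15))  # roughly 0.21
```

Even this ideal bound is modest for low-temperature steam, so any real engine working between the same reservoirs falls below it.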

1854 definition

In his 1854 memoir, Clausius first develops the concepts of interior work, i.e. that "which the atoms of the body exert upon each other", and exterior work, i.e. that "which arise from foreign influences [to] which the body may be exposed", which may act on a working body of fluid or gas, typically functioning to work a piston. He then discusses the three categories into which heat Q may be divided:

- Heat employed in increasing the heat actually existing in the body.

- Heat employed in producing the interior work.

- Heat employed in producing the exterior work.

Building on this logic, and following a mathematical presentation of the first fundamental theorem, Clausius then presented the first-ever mathematical formulation of entropy, although at this point in the development of his theories he called it "equivalence-value", perhaps referring to the concept of the mechanical equivalent of heat which was developing at the time rather than entropy, a term which was to come into use later. He stated:

the second fundamental theorem in the mechanical theory of heat may thus be enunciated:

If two transformations which, without necessitating any other permanent change, can mutually replace one another, be called equivalent, then the generations of the quantity of heat Q from work at the temperature T, has the equivalence-value:

$$\frac{Q}{T}$$

and the passage of the quantity of heat Q from the temperature T1 to the temperature T2, has the equivalence-value:

$$Q\left(\frac{1}{T_2} - \frac{1}{T_1}\right)$$

wherein T is a function of the temperature, independent of the nature of the process by which the transformation is effected.

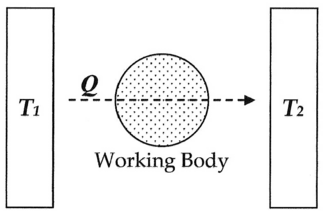

In modern terminology, we think of this equivalence-value as "entropy", symbolized by S. Thus, using the above description, we can calculate the entropy change ΔS for the passage of the quantity of heat Q from the temperature T1, through the "working body" of fluid, which was typically a body of steam, to the temperature T2 as shown below:

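If we make the assignment:

$$S = \frac{Q}{T}$$

Then, the entropy change or "equivalence-value" for this transformation is:

$$\Delta S = S_\text{final} - S_\text{initial}$$

which equals:

$$\Delta S = \frac{Q}{T_2} - \frac{Q}{T_1}$$

and by factoring out Q, we have the following form, as was derived by Clausius:

$$\Delta S = Q\left(\frac{1}{T_2} - \frac{1}{T_1}\right)$$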
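As a hedged numerical illustration, the factored form Q(1/T2 − 1/T1) for heat Q passing from temperature T1 to temperature T2 can be evaluated directly. The function name and the sample values are assumptions made for this sketch, and temperatures are taken to be absolute.

```python
def equivalence_value(q_joules: float, t1_kelvin: float, t2_kelvin: float) -> float:
    """Equivalence-value Q * (1/T2 - 1/T1) for heat Q passing from T1 to T2."""
    if t1_kelvin <= 0 or t2_kelvin <= 0:
        raise ValueError("temperatures must be positive absolute temperatures")
    return q_joules * (1.0 / t2_kelvin - 1.0 / t1_kelvin)


# Illustrative values (assumed): 1000 J passing from 500 K down to 250 K.
delta_s = equivalence_value(1000.0, 500.0, 250.0)
print(delta_s)  # approximately 2.0 J/K
```

The result is positive whenever heat passes from a higher to a lower temperature, and changes sign if T1 and T2 are swapped.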

1856 definition

In 1856, Clausius stated what he called the "second fundamental theorem in the mechanical theory of heat" in the following form:

$$\int \frac{dQ}{T} = -N$$

where N is the "equivalence-value" of all uncompensated transformations involved in a cyclical process. This equivalence-value was a precursory formulation of entropy.[4]

1862 definition

In 1862, Clausius stated what he calls the "theorem respecting the equivalence-values of the transformations" or what is now known as the second law of thermodynamics, as such:

The algebraic sum of all the transformations occurring in a cyclical process can only be positive, or, as an extreme case, equal to nothing.

Quantitatively, Clausius states the mathematical expression for this theorem as follows.

Let δQ be an element of the heat given up by the body to any reservoir of heat during its own changes, heat which it may absorb from a reservoir being here reckoned as negative, and T the absolute temperature of the body at the moment of giving up this heat, then the equation:

$$\int \frac{\delta Q}{T} = 0$$

must be true for every reversible cyclical process, and the relation:

$$\int \frac{\delta Q}{T} \geq 0$$

must hold good for every cyclical process which is in any way possible.

This was an early formulation of the second law and one of the original forms of the concept of entropy.
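The 1862 sign convention, in which heat given up by the body counts as positive and heat absorbed as negative, can be checked on a simple two-reservoir cycle. The sketch below is illustrative only: the function name and heat values are hypothetical, and it assumes the body is at the reservoir temperature during each exchange, so the integral reduces to a finite sum of δQ/T terms.

```python
def clausius_sum(exchanges):
    """Sum of q / t over one cycle.

    exchanges: iterable of (q_joules, t_kelvin) pairs, with q > 0 for heat
    given up by the body and q < 0 for heat it absorbs (Clausius's 1862 convention).
    """
    return sum(q / t for q, t in exchanges)


# Reversible cycle (assumed values): absorbs 1000 J at 500 K, gives up 600 J at 300 K.
reversible = [(-1000.0, 500.0), (600.0, 300.0)]
# Irreversible cycle (assumed values): same intake, but 700 J is rejected at 300 K.
irreversible = [(-1000.0, 500.0), (700.0, 300.0)]

print(clausius_sum(reversible))    # zero for the reversible case
print(clausius_sum(irreversible))  # positive for the irreversible case
```

In the irreversible case the extra 100 J rejected to the cold reservoir is work that was not extracted, which is what pushes the sum above zero.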

1865 definition

In 1865, Clausius gave irreversible heat loss, or what he had previously been calling "equivalence-value", a name:

I propose that S be taken from the Greek words, 'en-tropie' [intrinsic direction]. I have deliberately chosen the word entropy to be as similar as possible to the word energy: the two quantities to be named by these words are so closely related in physical significance that a certain similarity in their names appears to be appropriate.

Clausius did not specify why he chose the symbol "S" to represent entropy, and it is almost certainly untrue that Clausius chose "S" in honor of Sadi Carnot; the given names of scientists are rarely if ever used this way.

Later developments

In 1876, physicist J. Willard Gibbs, building on the work of Clausius, Hermann von Helmholtz and others, proposed that the measurement of "available energy" ΔG in a thermodynamic system could be mathematically accounted for by subtracting the "energy loss" TΔS from the total energy change of the system ΔH. These concepts were further developed by James Clerk Maxwell [1871] and Max Planck [1903].
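The subtraction described here is, in modern notation, ΔG = ΔH − TΔS at constant temperature and pressure. A minimal sketch follows; the function name is hypothetical, and the sample figures for melting ice (about 6010 J/mol and 22.0 J/(mol·K)) are approximate textbook values assumed for illustration, not taken from the text.

```python
def gibbs_free_energy_change(delta_h: float, temperature_kelvin: float,
                             delta_s: float) -> float:
    """Available energy change: delta_G = delta_H - T * delta_S."""
    return delta_h - temperature_kelvin * delta_s


# Approximate, assumed values for melting ice (per mole).
DELTA_H_FUSION = 6010.0  # J/mol
DELTA_S_FUSION = 22.0    # J/(mol*K)

for t in (263.15, 273.15, 298.15):
    dg = gibbs_free_energy_change(DELTA_H_FUSION, t, DELTA_S_FUSION)
    print(f"T = {t} K: delta_G = {dg:.1f} J/mol")
```

With these values ΔG is positive below the melting point, close to zero near 273.15 K, and negative above it, which matches the direction in which melting proceeds spontaneously.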

Statistical thermodynamic views

In 1877, Ludwig Boltzmann developed a statistical mechanical evaluation of the entropy S of a body in its own given macrostate of internal thermodynamic equilibrium. It may be written as:

$$S = k_\mathrm{B} \ln \Omega$$

where

- kB denotes Boltzmann's constant and

- Ω denotes the number of microstates consistent with the given equilibrium macrostate.

Boltzmann himself did not actually write this formula expressed with the named constant kB, which is due to Planck's reading of Boltzmann.
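To make the counting of microstates concrete, the sketch below evaluates S = kB ln Ω for a toy system. The toy model (N distinguishable two-state particles with n in the "up" state, so that Ω is a binomial coefficient) and the function names are assumptions of this example, not something Boltzmann or the text prescribes.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(omega: int) -> float:
    """Entropy S = k_B * ln(omega) for omega equally likely microstates."""
    if omega < 1:
        raise ValueError("omega must be at least 1")
    return K_B * math.log(omega)


def two_state_microstates(n_particles: int, n_up: int) -> int:
    """Microstates of a toy macrostate: ways to choose which particles are 'up'."""
    return math.comb(n_particles, n_up)


# The evenly split macrostate has far more microstates than a lopsided one.
s_even = boltzmann_entropy(two_state_microstates(100, 50))
s_lopsided = boltzmann_entropy(two_state_microstates(100, 10))
print(s_even > s_lopsided)  # True
```

Because the logarithm turns products into sums, the entropy of two independent systems with Ω1 and Ω2 microstates is the sum of their individual entropies.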

Boltzmann saw entropy as a measure of statistical "mixedupness" or disorder. This concept was soon refined by J. Willard Gibbs, and is now regarded as one of the cornerstones of the theory of statistical mechanics.

Erwin Schrödinger made use of Boltzmann's work in his book What is Life? to explain why living systems have far fewer replication errors than would be predicted from statistical thermodynamics. Schrödinger used the Boltzmann equation in a different form to show increase of entropy

$$S = k_\mathrm{B} \ln D$$

where D is the number of possible energy states in the system that can be randomly filled with energy. He postulated a local decrease of entropy for living systems when (1/D) represents the number of states that are prevented from randomly distributing, such as occurs in replication of the genetic code.

Without this correction Schrödinger claimed that statistical thermodynamics would predict one thousand mutations per million replications, and ten mutations per hundred replications following the rule for square root of n, far more mutations than actually occur.
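The two figures quoted follow from the square-root-of-n rule, under which the expected statistical deviation among n events is of order √n. A small sketch (the function name is hypothetical) reproduces the arithmetic and shows how the relative error shrinks as n grows.

```python
import math


def sqrt_n_fluctuation(n: int) -> float:
    """Expected size of statistical deviations among n events, of order sqrt(n)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return math.sqrt(n)


for n in (100, 1_000_000):
    deviation = sqrt_n_fluctuation(n)
    print(f"n = {n}: about {deviation:.0f} errors, relative error {deviation / n:.3f}")
```

For n = 100 the relative error is 10 percent, while for n = 1,000,000 it falls to 0.1 percent.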

Schrödinger's separation of random and non-random energy states is one of the few explanations for why entropy could be low in the past, but continually increasing now. It has been proposed as an explanation of localized decrease of entropy in radiant energy focusing in parabolic reflectors and during dark current in diodes, which would otherwise be in violation of Statistical Thermodynamics.

Information theory

An analog to thermodynamic entropy is information entropy. In 1948, while working at Bell Telephone Laboratories, electrical engineer Claude Shannon set out to mathematically quantify the statistical nature of "lost information" in phone-line signals. To do this, Shannon developed the very general concept of information entropy, a fundamental cornerstone of information theory. Although the story varies, initially it seems that Shannon was not particularly aware of the close similarity between his new quantity and earlier work in thermodynamics. In 1939, however, when Shannon had been working on his equations for some time, he happened to visit the mathematician John von Neumann. During their discussions, regarding what Shannon should call the "measure of uncertainty" or attenuation in phone-line signals with reference to his new information theory, according to one source:

My greatest concern was what to call it. I thought of calling it ‘information’, but the word was overly used, so I decided to call it ‘uncertainty’. When I discussed it with John von Neumann, he had a better idea. Von Neumann told me, ‘You should call it entropy, for two reasons: In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, nobody knows what entropy really is, so in a debate you will always have the advantage.’

According to another source, when von Neumann asked him how he was getting on with his information theory, Shannon replied:

The theory was in excellent shape, except that he needed a good name for "missing information". "Why don’t you call it entropy", von Neumann suggested. "In the first place, a mathematical development very much like yours already exists in Boltzmann's statistical mechanics, and in the second place, no one understands entropy very well, so in any discussion you will be in a position of advantage."

In 1948 Shannon published his seminal paper A Mathematical Theory of Communication, in which he devoted a section to what he calls Choice, Uncertainty, and Entropy. In this section, Shannon introduces an H function of the following form:

$$H = -K \sum_{i} p_i \log p_i$$

where K is a positive constant. Shannon then states that "any quantity of this form, where K merely amounts to a choice of a unit of measurement, plays a central role in information theory as measures of information, choice, and uncertainty." Then, as an example of how this expression applies in a number of different fields, he references R.C. Tolman's 1938 Principles of Statistical Mechanics, stating that "the form of H will be recognized as that of entropy as defined in certain formulations of statistical mechanics where $p_i$ is the probability of a system being in cell i of its phase space… H is then, for example, the H in Boltzmann's famous H theorem." Ever since this statement was made, people have conflated the two concepts or even claimed that they are exactly the same.
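Shannon's H function, the negative of K times the sum of p_i log p_i over all outcomes, is straightforward to compute. The sketch below is a minimal illustration: the function name is hypothetical, and fixing the logarithm to base 2 (so that K = 1 gives the result in bits) is a choice made for this example.

```python
import math


def shannon_entropy(probabilities, k: float = 1.0) -> float:
    """H = -K * sum(p_i * log2(p_i)); with K = 1 the unit is bits.

    Terms with p_i = 0 contribute nothing and are skipped.
    """
    probs = list(probabilities)
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    return -k * sum(p * math.log2(p) for p in probs if p > 0)


print(shannon_entropy([0.5, 0.5]))  # fair coin: 1.0 bit
print(shannon_entropy([0.9, 0.1]))  # biased coin: less than 1 bit
```

Changing K only rescales the result, which is the sense in which Shannon says it amounts to a choice of unit.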

Shannon's information entropy is a much more general concept than statistical thermodynamic entropy. Information entropy is present whenever there are unknown quantities that can be described only by a probability distribution. In a series of papers by E. T. Jaynes starting in 1957, the statistical thermodynamic entropy can be seen as just a particular application of Shannon's information entropy to the probabilities of particular microstates of a system occurring in order to produce a particular macrostate.
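One way to see the connection is to apply the Shannon form to microstate probabilities. In the hedged sketch below, the probability-weighted entropy with natural logarithm and K set to Boltzmann's constant reduces to kB ln Ω when all Ω microstates are equally likely. The function names and the choice Ω = 1000 are assumptions of this example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def probabilistic_entropy(probabilities) -> float:
    """Shannon form with K = k_B and natural log: -k_B * sum(p_i * ln(p_i))."""
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0)


def boltzmann_entropy(omega: int) -> float:
    """Entropy k_B * ln(omega) of omega equally likely microstates."""
    return K_B * math.log(omega)


omega = 1000  # illustrative number of microstates (assumed)
uniform = [1.0 / omega] * omega

# Equal microstate probabilities reproduce the Boltzmann value.
print(math.isclose(probabilistic_entropy(uniform), boltzmann_entropy(omega), rel_tol=1e-9))
```

Any non-uniform distribution over the same microstates gives a smaller value, so the equal-probability case is the maximum.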

Beam Entropy

The beamspace randomness of MIMO wireless channels, e.g., 3GPP 5G cellular channels, was characterized using the single parameter of 'Beam Entropy.' It facilitates the selection of the sparse MIMO channel learning algorithms in the beamspace.

Popular use

The term entropy is often used in popular language to denote a variety of unrelated phenomena. One example is the concept of corporate entropy as put forward somewhat humorously by authors Tom DeMarco and Timothy Lister in their 1987 classic publication Peopleware, a book on growing and managing productive teams and successful software projects. Here, they view energy waste as red tape and business team inefficiency as a form of entropy, i.e. energy lost to waste. This concept has caught on and is now common jargon in business schools.

In another example, entropy is the central theme in Isaac Asimov's short story The Last Question (first copyrighted in 1956). The story plays with the idea that the most important question is how to stop the increase of entropy.