The following outline provides an overview of and a topical guide to transhumanism:

Transhumanism – international intellectual and cultural movement that affirms the possibility and desirability of fundamentally transforming the human condition by developing and making widely available technologies to eliminate aging and to greatly enhance human intellectual, physical, and psychological capacities.[1] Transhumanist thinkers study the potential benefits and dangers of emerging and hypothetical technologies that could overcome fundamental human limitations, as well as study the ethical matters involved in developing and using such technologies.[1] They predict that human beings may eventually be able to transform themselves into beings with such greatly expanded abilities as to merit the label "posthuman".[1]

Classification of transhumanism

Transhumanism can be described as all of the following:

- Branch of philosophy – study of general and fundamental problems, such as those connected with reality, existence, knowledge, values, reason, mind, and language.

- Branch of humanism – philosophical and ethical stance that emphasizes the value and agency of human beings, individually and collectively, and generally prefers critical thinking and evidence (rationalism, empiricism) over established doctrine or faith (fideism). In modern times, humanist movements are typically aligned with secularism, and today "Humanism" typically refers to a non-theistic life stance centred on human agency, and looking to science instead of religious dogma in order to understand the world.[2] According to Max More: "Transhumanism shares many elements of humanism, including a respect for reason and science, a commitment to progress, and a valuing of human (or transhuman) existence in this life. [...] Transhumanism differs from humanism in recognizing and anticipating the radical alterations in the nature and possibilities of our lives resulting from various sciences and technologies."

- Life stance – one's relation with what they accept as being of ultimate importance. It involves the presuppositions and theories upon which such a stance could be made, a belief system, and a commitment to working it out in one's life.[3]

- Social movement – type of group action. Large, sometimes informal, grouping of individuals or organizations which focus on specific political or social issues. In other words, members of a social movement carry out, resist or undo a social change.

- World view – fundamental cognitive orientation of an individual or society encompassing the entirety of the individual or society's knowledge and point of view. A world view can include natural philosophy; fundamental, existential, and normative postulates; or themes, values, emotions, and ethics.[4] It is the framework of ideas and beliefs forming a global description through which an individual, group or culture watches and interprets the world and interacts with it.

Transhumanist values

Neophilia

Neophilia – strong affinity for novelty and change. Transhumanist neophiliac values include:

- Posthumanism – desire to become posthuman.

- Proactionary principle – ethical and decision-making principle formulated by the transhumanist philosopher Max More, pertaining to people's freedom to innovate, and the value of protecting that freedom.

- Singularitarianism – technocentric ideology and social movement defined by the belief that a technological singularity—the creation of a superintelligence—will likely happen in the medium future, and that deliberate action ought to be taken to ensure that the Singularity benefits humans.

- Technophilia – strong enthusiasm for technology, especially new technologies such as personal computers, the Internet, mobile phones and home cinema. The term is used in sociology when examining the interaction of individuals with their society, especially contrasted with technophobia.

Survival

Survival – survival, or self-preservation, is behavior that ensures the survival of an organism.[5] It is almost universal among living organisms. Humans differ from other animals in that they use technology extensively to improve chances of survival and increase life expectancy.

- Global catastrophic risk – hypothetical future event with the potential to inflict serious damage to human well-being on a global scale. Some such events could destroy or cripple modern civilization. Other, even more severe, scenarios threaten permanent human extinction. In transhumanist thought, existential risks are to be actively avoided, with the notable exception of the technological singularity.

- Human extinction – end of the species Homo sapiens sapiens. Usually considered undesirable, though opinions vary depending on whether humans are succeeded by progeny species or entities, such as posthumans or strong AI. If posthumans remained, people would not be extinct, only their human predecessors. If all that remained were strong AI, sentience would not be extinct, only biological sentience.

- Longevity – the length of one's life

- Immortality – inability to die or be killed, which, scientifically speaking, is theoretically impossible. A related term is biological immortality, which refers to forms of life that are immune to the effects of aging but are not immortal in the general sense. It is not yet known whether biological immortality is possible for humans.

- Indefinite lifespan – hypothetical longevity of humans (and other life-forms) under conditions in which aging is effectively and completely prevented and treated. Their lifespans would be "indefinite" (that is, they would not be "immortal"), because protection from the effects of aging on health does not guarantee survival (from accidents, natural disasters, war, etc.).

- Rejuvenation – distinct from life extension. Life extension strategies often study the causes of aging and try to oppose those causes in order to slow aging. Rejuvenation is the reversal of aging and thus requires a different strategy, namely repair of the damage that is associated with aging or replacement of damaged tissue with new tissue. Rejuvenation can be a means of life extension, but most life extension strategies do not involve rejuvenation.

Transhumanist ideologies

- Extropianism – early school of transhumanist thought characterized by a set of principles advocating a proactive approach to human evolution.[6] It is an evolving framework of values and standards for continuously improving the human condition. Extropians believe that advances in science and technology will some day let people live indefinitely. An extropian may wish to contribute to this goal, e.g. by doing research and development or volunteering to test new technology.

- Immortalism – moral ideology based upon the belief that technological immortality is possible and desirable, and advocating research and development to ensure its realization.[7]

- Postgenderism – social ideology which, though not currently possible, seeks the voluntary elimination of gender in the human species through the application of advanced biotechnology and assisted reproductive technologies.[8]

- Singularitarianism – moral ideology based upon the belief that a technological singularity is possible, and advocating deliberate action to affect it and ensure its safety.[9]

- Technogaianism – ecological ideology based upon the belief that emerging technologies can help restore Earth's environment, and that developing safe, clean, alternative technology should therefore be an important goal of environmentalists.[10]

Transhumanist politics

- Democratic transhumanism – political philosophy synthesizing liberal democracy, social democracy, radical democracy and transhumanism.[11]

- Libertarian transhumanism – political philosophy synthesizing libertarian capitalism and transhumanism.[12]

Transhumanist rights

- Cognitive liberty – freedom of sovereign control over one's own consciousness. It is an extension of the concepts of freedom of thought and self-ownership.

- Morphological freedom – proposed civil right of a person to either maintain or modify his or her own body, on his or her own terms, through informed, consensual recourse to, or refusal of, available therapeutic or enabling medical technology.[13]

History of transhumanism

The term "transhumanism" was coined in 1957 by Sir Julian Huxley, a zoologist and prominent humanist.[14]

- Preludes to transhumanism

- Renaissance humanism – cultural and educational reform during the fourteenth and the beginning of the fifteenth centuries, as a response to the challenge of Mediæval scholastic education, emphasizing practical, pre-professional and pre-scientific studies. Rather than train professionals in jargon and strict practice, humanists sought to create a citizenry (sometimes including women) able to speak and write with eloquence and clarity.

- Age of Enlightenment – elite cultural movement of intellectuals in 18th-century Europe that sought to mobilize the power of reason in order to reform society and advance knowledge. It promoted intellectual interchange and opposed intolerance and abuses in church and state.

- Russian cosmism – past philosophical and cultural movement that emerged in Russia in the early 20th century. It entailed a broad theory of natural philosophy, combining elements of religion and ethics with a history and philosophy of the origin, evolution and future existence of the cosmos, and an expanding role of humankind within it.

- Emergence of transhumanism

Current technological factors

- Human condition – the irreducible part of humanity that is inherent and not connected to gender, race, class, etc.; the experiences of being human in a social, cultural, and personal context. Transhumanism aims to radically improve the human condition. The present human condition is highly technological, and is becoming more so.

- Noosphere – "sphere of human thought".[15] In the original theory of Vernadsky, the noosphere (sentience) is the third in a succession of phases of development of the Earth, after the geosphere (inanimate matter) and the biosphere (biological life). The noosphere includes technological endeavor.

- Technological change – overall process of invention, innovation and diffusion of technology (including processes).

- Rate of technological change

- Accelerating change – perceived increase in the rate of technological (and sometimes social and cultural) progress throughout history, which may suggest faster and more profound change in the future. While many have suggested accelerating change, the popularity of this theory in modern times is closely associated with various advocates of the technological singularity, such as Vernor Vinge and Ray Kurzweil.

- Moore's law – the observation that, over the history of computing hardware, the number of transistors on integrated circuits doubles approximately every two years. The law is named after Intel co-founder Gordon E. Moore, who described the trend in his 1965 paper.

- Management of technological change

- Differential technological development – strategy proposed by transhumanist philosopher Nick Bostrom in which societies would seek to influence the sequence in which emerging technologies developed. On this approach, societies would strive to retard the development of harmful technologies and their applications, while accelerating the development of beneficial technologies, especially those that offer protection against the harmful ones.

- Influences on technological change

- Ideologies promoting technological change

- Innovation economics – growing economic theory that emphasizes entrepreneurship and innovation. Innovation economics is based on two fundamental tenets: that the central goal of economic policy should be to spur higher productivity through greater innovation, and that markets relying on input resources and price signals alone will not always be as effective in spurring higher productivity, and thereby economic growth.

- Singularitarianism – technocentric ideology and social movement defined by the belief that a technological singularity (TS), that is, the creation of a superintelligence, will likely happen in the medium future, and that deliberate action ought to be taken to ensure that the Singularity benefits humans. Singularitarians endeavor to bring about the TS.

- Innovation – application of better solutions (in the form of new ideas, new devices or new processes) that meet new requirements, unarticulated needs, or existing market needs. This is accomplished through more effective products, processes, services, technologies, or ideas that are readily available to markets, governments and society.

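Moore's law, listed above under the rate of technological change, amounts to a simple doubling rule. The following is a minimal sketch in Python, assuming an idealized, fixed two-year doubling period (the real trend is only approximate). The function name `projected_transistors` and the starting count of 1,000 transistors are hypothetical, chosen purely for illustration.

```python
def projected_transistors(initial_count: float,
                          years_elapsed: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count under an idealized fixed doubling period.

    Assumption: the count doubles exactly once per doubling period,
    which simplifies the approximate trend Moore's law describes.
    """
    return initial_count * 2 ** (years_elapsed / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical starting point: a chip with 1,000 transistors.
    for years in (0, 2, 10, 20):
        print(years, round(projected_transistors(1_000, years)))
```

Under this assumption, ten doublings over twenty years multiply the starting count by 1,024, which illustrates why exponential trends of this kind feature so prominently in arguments about accelerating change.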

Human enhancement technologies

in biology

in genetics

- Genetic engineering

- Preimplantation genetic diagnosis

- Designer baby

- Liberal eugenics

- Directed evolution

Neuro-based

- Neuroenhancement

- Neurohacking

- Intelligence amplification

- Brain–computer interface

- Neuroprosthetics

- Neuroinformatics

- Nootropic

- Artificial intelligence

- Exocortex

Info-based

Prosthetics

Emerging technologies of interest to transhumanists

Emerging technologies – contemporary advances and innovation in various fields of technology, prior to or early in their diffusion. They are typically in the form of progressive developments intended to achieve a competitive advantage.[16] Transhumanists believe that humans can and should use technologies to become more than human. Emerging technologies offer the greatest potential in doing so. Examples of developing technologies that have become the focus of transhumanism include:

- Anti-aging – another term for "life extension".

- Artificial intelligence – intelligence of machines and the branch of computer science that aims to create it. AI textbooks define the field as "the study and design of intelligent agents",[17] where an intelligent agent is a system that perceives its environment and takes actions that maximize its chances of success. John McCarthy, who coined the term in 1956, defines it as "the science and engineering of making intelligent machines."[18]

- Augmented reality – live, direct or indirect, view of a physical, real-world environment whose elements are augmented by computer-generated sensory input such as sound, video, graphics or GPS data. It is related to a more general concept called mediated reality, in which a view of reality is modified (possibly even diminished rather than augmented) by a computer. As a result, the technology functions by enhancing one’s current perception of reality. By contrast, virtual reality replaces the real world with a simulated one.

- Biomedical engineering – application of engineering principles and design concepts to biology and medicine, to improve healthcare diagnosis, monitoring and therapy.[19] Applications include the development of biocompatible prostheses, clinical equipment, micro-implants, imaging equipment such as MRIs and EEGs, regenerative tissue growth, pharmaceutical drugs and therapeutic biologicals.

- Neural engineering – discipline that uses engineering techniques to understand, repair, replace, enhance, or otherwise exploit the properties of neural systems. Neural engineers are uniquely qualified to solve design problems at the interface of living neural tissue and non-living constructs. Also known as "neuroengineering".

- Neurohacking – colloquial term encompassing all methods of manipulating or interfering with the structure and/or function of neurons for improvement or repair.

- Biotechnology – field of applied biology that uses living organisms and bioprocesses in engineering, technology, medicine, and manufacturing, among other fields. It encompasses a wide range of procedures for modifying living organisms for human purposes. Early examples of biotechnology include domestication of animals, cultivation of plants, and breeding through artificial selection and hybridization.

- Bionics – in medicine, this refers to the replacement or enhancement of organs or other body parts by mechanical versions. Bionic implants differ from mere prostheses by mimicking the original function very closely, or even surpassing it.

- Cyborg – being with both biological and artificial (e.g. electronic, mechanical or robotic) parts.

- Brain–computer interface (BCI) – direct communication pathway between the brain and an external device. BCIs are under development to assist, augment, or repair human cognitive and sensory-motor functions. Sometimes called a direct neural interface or a brain–machine interface (BMI).

- Cloning – in biotechnology, this refers to processes used to create copies of DNA fragments (molecular cloning), cells (cell cloning), or organisms.

- Therapeutic cloning – application of somatic-cell nuclear transfer (a laboratory technique for creating a clonal embryo using an ovum with a donor nucleus) in regenerative medicine.

- Cognitive science – interdisciplinary scientific study of the mind and its processes. It examines what cognition is, what it does and how it works. It includes research on how information is processed (in faculties such as perception, language, memory, reasoning, and emotion), represented, and transformed into behaviour, whether in a human or other animal nervous system or in a machine (e.g., a computer). It includes research on artificial intelligence.

- Computer-mediated reality – ability to add information to, subtract information from, or otherwise manipulate one's perception of reality through the use of a wearable computer or hand-held device[20] such as a smartphone.

- Cryonics – low-temperature preservation of humans and animals who can no longer be sustained by contemporary medicine, with the hope that healing and resuscitation may be possible in the future. Cryopreservation of people or large animals is not reversible with current technology.

- Cyberware – hardware or machine parts implanted in the human body and acting as an interface between the central nervous system and the computers or machinery connected to it. Research in this area is a protoscience.

- Head-mounted display (HMD) – display device, worn on the head or as part of a helmet, that has a small display optic in front of one (monocular HMD) or each eye (binocular HMD).

- Human enhancement technologies (HET) – techniques used to treat illness or disability, or to enhance human characteristics and capacities.[21]

- Human genetic engineering – alteration of an individual's genotype with the aim of choosing the phenotype of a newborn or changing the existing phenotype of a child or adult.[22]

- Human-machine interface – the part of a machine that handles its human-machine interaction.

- Information technology – acquisition, processing, storage and dissemination of vocal, pictorial, textual and numerical information by a microelectronics-based combination of computing and telecommunications.[23]

- Internet of Autonomous Things – technological developments that are expected to bring computers into the physical environment as autonomous entities without human direction, freely moving and interacting with humans and other objects. An expected evolution of the Internet of things.

- Life extension – study of slowing down or reversing the processes of aging to extend both the maximum and average lifespan. Some researchers in this area, and persons who wish to achieve longer lives for themselves (called "life extensionists" or "longevists"), expect that future breakthroughs in tissue rejuvenation with stem cells, molecular repair, and organ replacement (such as with artificial organs or xenotransplantations) will eventually enable humans to live indefinitely (agerasia[24]) through complete rejuvenation to a healthy youthful condition. Also known as anti-aging medicine, experimental gerontology, and biomedical gerontology.

- Nanotechnology – study of physical phenomena on the nanoscale, dealing with things measured in nanometres (billionths of a metre). It includes the development of microscopic or molecular machines.

- Nootropics – drugs, supplements, nutraceuticals, and functional foods that improve mental functions such as cognition, memory, intelligence, motivation, attention, and concentration.[25][26] Also referred to as "smart drugs", "brain steroids", "memory enhancers", "cognitive enhancers", "brain boosters", and "intelligence enhancers".

- Organ transplants – moving of an organ from one body to another or from a donor site on the patient's own body, for the purpose of replacing the recipient's damaged or absent organ. The emerging field of regenerative medicine is allowing scientists and engineers to grow organs from the patient's own cells (stem cells, or cells extracted from the failing organs).

- Personal communicators – Around 1990 the next generation digital mobile phones were called digital personal communicators. Another definition, coined in 1991, is for a category of handheld devices that provide personal information manager functions and packet switched wireless data communications capabilities over wireless wide area networks such as cellular networks. These devices are now commonly referred to as smartphones or wireless PDAs.

- Personal development – includes activities that improve awareness and identity, develop talents and potential, build human capital and facilitate employability, enhance quality of life and contribute to the realization of dreams and aspirations. The concept is not limited to self-help, but includes formal and informal activities for developing others, in roles such as teacher, guide, counselor, manager, coach, or mentor. Finally, as personal development takes place in the context of institutions, it refers to the methods, programs, tools, techniques, and assessment systems that support human development at the individual level in organizations.[27]

- Powered exoskeleton – powered mobile machine consisting primarily of an exoskeleton-like framework worn by a person and a power supply that supplies at least part of the activation-energy for limb movement. Also known as "powered armor", or "exoframe".

- Prosthetics – artificial device extensions that replace missing body parts.

- Robotics – design, construction, operation, structural disposition, manufacture and application of robots. It draws heavily upon electronics, engineering, mechanics, mechatronics, and software engineering.

- Autonomous Things (also the "Internet of Autonomous Things") – emerging term[28][29][30][31][32] for the technological developments that are expected to bring computers into the physical environment as autonomous entities without human direction, freely moving and interacting with humans and other objects.

- Swarm robotics –

- Simulated reality –

- Suspended animation – slowing of life processes by external means without termination. Breathing, heartbeat, and other involuntary functions may still occur, but they can only be detected by artificial means. Extreme cold can be used to precipitate the slowing of an individual's functions. For example, Laina Beasley was kept in suspended animation as a two-celled embryo for 13 years.[33][34]

- Virtual retinal display – display technology that draws a raster display (like a television) directly onto the retina of the eye. Users see what appears to be a conventional display floating in space in front of them.

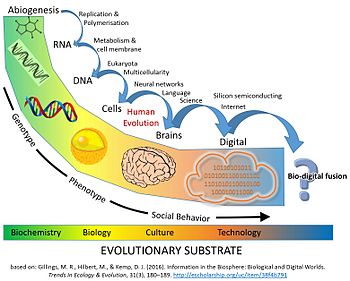

Technological evolution

Schematic Timeline of Information and Replicators in the Biosphere: major evolutionary transitions in information processing

Technological evolution –

- Directed evolution –

- Extropy – concept opposed to entropy. It posits that culture and technology will aid the universe in developing in an orderly, progressive manner. Extropy is thus the tendency of systems to grow more organized.

- Intelligence explosion – possible outcome of humanity building artificial general intelligence (AGI), and hypothetically a direct result of a technological singularity, in that AGI would be capable of recursive self-improvement leading to the rapid emergence of artificial superintelligence (ASI), the limits of which are unknown.

- Megatrajectory – theoretical concept in evolutionary biology that describes paradigmatic developmental stages (major evolutionary milestones) and potential directionality in the evolution of life. A theorized megatrajectory that hasn't occurred yet is postbiological evolution triggered by the emergence of strong AI and several other similarly complex technologies.

- Participant evolution – process of deliberately redesigning the human body and brain using technological means, rather than through the natural processes of mutation and natural selection, with the goal of removing "biological limitations."

- Liberal eugenics – use of reproductive and genetic technologies where the choice of enhancing human characteristics and capacities is left to the individual preferences of parents acting as consumers, rather than the public health policies of the state.

- Posthumanity – all persons who are technologically evolved from humans but are no longer human. Posthumanity might include:

- Posthumans[35] – in transhumanism, they are hypothetical future beings "whose basic capacities so radically exceed those of present humans as to be no longer unambiguously human by our current standards."[35] A being technologically evolved from humans.

- Superhumans – humans with extraordinary and unusual capabilities enabling them to perform feats well beyond anything that an ordinary person could conceivably achieve, even through long-term training and development. Superhuman can mean an improved human, for example, by genetic modification, cybernetic implants, or nanotechnology, or what humans might eventually evolve into thousands or millions of years in the future.

- Posthuman Gods – posthumans, being no longer confined to the parameters of human nature, might grow physically and mentally so powerful as to appear god-like by human standards.[35]

- Übermensch – concept of the superhuman in the philosophy of Friedrich Nietzsche. Nietzsche had his character Zarathustra posit the Übermensch as a goal for humanity to set for itself in his 1883 novel Thus Spoke Zarathustra (German: Also Sprach Zarathustra).

- Sociocultural evolution from a technological perspective – evolution transitioning from one basis to new forms, such as evolution through biology, then through cognition, then through culture, then through technology, including the possibility of a fusion between biology and technology.

- Technological convergence – tendency for different technological systems to evolve towards performing similar tasks. Convergence can refer to previously separate technologies such as voice (and telephony features), data (and productivity applications), and video that now share resources and interact with each other synergistically.

- NBIC – acronym signifying the converging of Nanotechnology, Biotechnology, Information technology and Cognitive science

- GNR – acronym that denotes the converging technologies of Genetics, Nanotechnology, and Robotics.[36]

- Technological singularity (TS) – hypothetical future emergence of superintelligence through technological means. This could be achieved through the development of artificial intelligence as smart as humans (strong AI). Once computers become as capable as humans, they could improve themselves recursively through redesign and modification. They could also conceivably mass-produce successively more capable models of artificially intelligent computers and robots. Another route might be through the fusion of a human and a computer, resulting in a being with rapid recursive improvement potential. The technological singularity is therefore seen as an intellectual event horizon, beyond which the future becomes difficult to forecast. Nevertheless, proponents of the singularity typically anticipate an "intelligence explosion", leading quickly to the development of superintelligence, and resulting in technological and sociological change so rapid that mere humans would not be able to keep up with it. Does the TS hold promise for human civilization, or peril?

- Promise

- Friendly artificial intelligence – if the technological singularity were to be achieved through the development of strong AI that was friendly to humans and held the well-being of the human race in primary focus, human civilization may be improved in progressively more fantastic ways, rather than come to an abrupt end.

- Utopia – see Utopia, below

- Peril

- AI takeover – upon its emergence, artificial general intelligence may quickly become the dominant form of intelligence on Earth

- Existential risk from artificial general intelligence – because strong AI has the potential to wipe out the human species, as a comet hitting the Earth would, the technological singularity is an existential risk. See also Survival, above.

- Artificial general intelligence § Risk of human extinction

- Effective altruism § Far future and global catastrophic risks

- Global catastrophic risk § Artificial intelligence

- Intelligence explosion § Existential risk

- Recursive self-improvement

- Roboethics § In popular culture

- Self-replicating spacecraft

- Stephen Hawking § With regard to Artificial Intelligence

- Superintelligence § Potential danger to human survival

- Technological singularity § Uncertainty and risk

- Transhumanism § Existential risks

- Utopia – an idyllic society, one of the goals of transhumanism.

- Techno-utopia – hypothetical ideal society, in which laws, government, and social conditions are solely operating for the benefit and well-being of all its citizens, set in the near- or far-future, when advanced science and technology will allow these ideal living standards to exist; for example, post scarcity, transformations in human nature, the abolition of suffering and even the end of death.

- Techno-utopianism – any ideology based on the belief that advances in science and technology will eventually bring about a utopia, or at least help to fulfill one or another utopian ideal.

- Omega Point – term coined by the French Jesuit Pierre Teilhard de Chardin (1881–1955) to describe a maximum level of complexity and consciousness towards which he believed the universe was evolving.

Hypothetical technologies

Hypothetical technology – technology that does not exist yet, but the development of which could potentially be achieved in the future. It is distinct from an emerging technology, which has achieved some developmental success. A hypothetical technology is typically not proven to be impossible. Many hypothetical technologies have been the subject of science fiction.

- Artificial general intelligence – hypothetical artificial intelligence that demonstrates human-like intelligence – the intelligence of a machine that could successfully perform any intellectual task that a human being can. It is a primary goal of artificial intelligence research and an important topic for science fiction writers and futurists. Artificial general intelligence is also referred to as strong AI,[37] full AI[38] or as the ability to perform "general intelligent action".[39] Strong AI is the focus and hypothesized cause of the technological singularity.

- Friendly artificial intelligence – artificial intelligence (AI) that has a positive rather than negative effect on humanity. Friendly AI also refers to the field of knowledge required to build such an AI. AIs may be harmful to humans if steps are not taken to specifically design them to be benevolent. Doing so effectively is the primary goal of Friendly AI.

- Designer babies – babies whose genetic makeup has been artificially selected by genetic engineering combined with in vitro fertilisation to ensure the presence or absence of particular genes or characteristics.[40]

- Human cloning – creation of a genetically identical copy of a human. It does not usually refer to monozygotic multiple births nor the reproduction of human cells or tissue. The term is generally used to refer to artificial human cloning; human clones in the form of identical twins are commonplace, with their cloning occurring during the natural process of reproduction.

- Mind uploading – hypothetical process of transferring or copying a conscious mind from a brain to a non-biological substrate by scanning and mapping a biological brain in detail and copying its state into a computer system or another computational device. The computer would have to run a simulation model so faithful to the original that it would behave in essentially the same way as the original brain, or for all practical purposes, indistinguishably.[41]

- Molecular nanotechnology – technology based on the ability to build structures to complex, atomic specifications by means of mechanosynthesis.[42]

- Molecular assemblers – a molecular assembler, as defined by K. Eric Drexler, is a "proposed device able to guide chemical reactions by positioning reactive molecules with atomic precision". Some biological molecules such as ribosomes fit this definition, because they receive instructions from messenger RNA and then assemble specific sequences of amino acids to construct protein molecules. However, the term "molecular assembler" usually refers to theoretical human-made devices.[43]

- Rejuvenation – reversal of aging, which entails the repair of the damage associated with aging, or replacement of damaged tissue with new tissue. Rejuvenation can be a means of life extension, but most life extension strategies do not involve rejuvenation.

- Reprogenetics – merging of reproductive and genetic technologies expected to happen in the near future as techniques like germinal choice technology become more available and more powerful.

- Self-replicating machine – artificial construct that is theoretically capable of autonomously manufacturing a copy of itself using raw materials taken from its environment, thus exhibiting self-replication in a way analogous to that found in nature.

- Space colonization – concept of permanent human habitation outside of Earth. Although hypothetical at the present time, there are many proposals and speculations about the first space colony. It is a long-term goal of some national space programs. Also called "space settlement", "space humanization", and "space habitation".

- Superintelligence – hypothetical agent that possesses intelligence far surpassing that of the brightest and most gifted human minds. For example, a supercomputer with mental capacity exceeding the brainpower and cognitive abilities of all the people of Earth combined, while developing itself even further.

Related fields

- Futures studies – study of postulating possible, probable, and preferable futures and the worldviews and myths that underlie them. Also called "futurology".

Transhumanist media

Documentary films about transhumanism

- TechnoCalyps

- Transcendent Man

- The Singularity Is Near

- Transhumanism: Recreating Humanity. Vol. I Hyperreality Series

Transhumanist books

- Converging Technologies for Improving Human Performance

- The Transhumanist Reader: Classical and Contemporary Essays on the Science, Technology, and Philosophy of the Human Future – edited by Max More and Natasha Vita-More; first edition published in 2013 by John Wiley & Sons.

Transhumanist periodicals

Transhumanism in fiction

Transhumanism in fiction – Many of the tropes of science fiction can be viewed as similar to the goals of transhumanism. Science fiction literature contains many positive depictions of technologically enhanced human life, occasionally set in utopian (especially techno-utopian) societies. However, science fiction's depictions of technologically enhanced humans or other posthuman beings frequently come with a cautionary twist. The more pessimistic scenarios include many dystopian tales of human bioengineering gone wrong.

Notable transhumanist authors

- Neal Asher

- Margaret Atwood

- Iain M. Banks

- Stephen Baxter

- Greg Bear

- Gregory Benford

- Marshall Brain

- David Brin

- Dan Brown

- Ted Chiang

- Arthur C. Clarke

- Philip K. Dick

- Cory Doctorow

- Jacek Dukaj

- Greg Egan

- Peter F. Hamilton

- Michel Houellebecq

- Aldous Huxley

- Zoltan Istvan

- Stanisław Lem

- Richard K. Morgan

- Yuri Nikitin

- Alastair Reynolds

- John Scalzi

- Dan Simmons

- Olaf Stapledon

- Charles Stross

- John C. Wright

Television programs and films with transhumanist themes

- 2001: A Space Odyssey

- The 4400

- Akira

- Andromeda

- Avatar – film by James Cameron, in which a paralyzed soldier finds new life by transferring his consciousness into a genetically engineered alien body.

- Battlestar Galactica

- Beneath the Planet of the Apes

- Bio Booster Armor Guyver

- Blade Runner – movie based on the novel Do Androids Dream of Electric Sheep?, in which blade runners track down and "retire" replicants who have escaped to Earth. Replicants are artificial humans created and sold to serve in the off-world colonies as soldiers and slaves.

- Brainstorm

- Crest of the Stars

- Dark Angel

- Elysium

- Ex Machina

- Fringe – television show about a group of paranormal investigators who regularly deal with transhumans who have used technology to go beyond normal limits.

- Gattaca

- Ghost in the Shell

- Her

- Heroes

- The Lawnmower Man

- Limitless – movie in which a man obtains superhuman intelligence by taking a nootropic designer drug with some problematic side-effects.

- Lucy

- The Machine

- The Matrix

- The Melancholy of Haruhi Suzumiya

- Mobile Suit Gundam

- Neon Genesis Evangelion – Japanese animation in which the antagonist's endgame, known as the "Human Instrumentality Project", is the reduction of all life to a single living form (in this case a great ocean of consciousness).

- Orphan Black

- Prometheus

- RoboCop

- Stargate SG-1

- Star Trek

- Star Trek: The Next Generation

- Lieutenant Commander Data – artificially intelligent synthetic life form designed and built by Doctor Noonien Soong in his own likeness. Both Data and Dr. Soong were portrayed by Brent Spiner. Data is a self-aware, sapient, sentient, and anatomically fully functional robot who serves as the second officer and chief operations officer aboard the Federation starships USS Enterprise-D and USS Enterprise-E. Though an example of artificial general intelligence, Data did not bring about a technological singularity.

- Star Wars

- Strange Days

- Terminator Salvation

- Texhnolyze

- Transcendence

- Tron

- The X-Files episode "Kill Switch" – features a sentient artificial intelligence, created by uploading a man's consciousness into a computer[44]

Comics or graphic novels

- Battle Angel Alita/Gunm

- Dresden Codak – webcomic that stars a transhuman cyborg named Kimiko Ross who augments her body over the course of the strip's stories.

- Transmetropolitan – comic about a transhuman society several centuries in the future that includes many cyborgs, uploaded humans, and genetically modified mutants.

- Upgrade – satirical dystopian graphic novel by Louis Rosenberg, about humans approaching the singularity

- Monkey Room – satirical dystopian graphic novel by Louis Rosenberg, about humans approaching the singularity

Video games

- BioShock – video game in which humans develop a biotechnology called "Plasmids" which grants them seemingly magical powers, including telekinesis and superhuman strength.

- Crysis (series)

- Destiny

- Deus Ex (series) – a main focus of the game is human augmentation and its effects on and risks to society

- Half-Life 2

- Halo

- Metal Gear Rising: Revengeance

- PlanetSide 2

- Remember Me

- Syndicate (series)

- Total Annihilation

- SOMA – game whose premise hinges on the ability to upload a consciousness into a computer, and which explores the consequences thoroughly.

Table-top games

- Eclipse Phase – role-playing game that takes transhumanism to a post-apocalyptic horror setting in which artificial general intelligences have gone rogue, introducing itself with the slogan “Your mind is software. Program it. Your body is a shell. Change it. Death is a disease. Cure it. Extinction is approaching. Fight it.”

- Transhuman Space – GURPS supplement that presents a transhumanist future set in our solar system in the year 2100.

- Warhammer 40,000 – game and fiction franchise by Games Workshop, that depicts a universe which includes cybernetic and genetic modifications, human-machine interfaces, self-aware computer "spirits" (advanced AIs), ubiquitous space travel and posthuman gods. The main protagonists of many novels and campaigns, the Imperial Space Marines, are literal textbook transhumans: normal human men who have been so vastly augmented and changed by technology that they are no longer Homo sapiens but some other, new species.

Transhumanist organizations

- Carboncopies – nonprofit dedicated to advancing research in whole brain emulation and substrate-independent minds.

- 2045 Initiative – initiative to develop cybernetic immortality by 2045.

- Alcor Life Extension Foundation – nonprofit company based in Scottsdale, Arizona, USA, that researches, advocates for, and performs cryonics, the preservation of humans in liquid nitrogen after legal death, in the hope of restoring them to full health when new technology is developed in the future.

- American Cryonics Society

- Cryonics Institute

- Extropy Institute

- Foresight Institute – nonprofit organization based in Palo Alto, California that promotes transformative technologies. They sponsor conferences on molecular nanotechnology, publish reports, produce a newsletter, and offer several running prizes, including the annual Feynman Prizes given in experimental and theory categories, and the $250,000 Feynman Grand Prize for demonstrating two molecular machines capable of nanoscale positional accuracy and computation.[45]

- Humanity+ – international non-governmental organization which advocates the ethical use of emerging technologies to enhance human capacities. It was formerly named the "World Transhumanist Association".

- Machine Intelligence Research Institute – non-profit organization founded in 2000 (as the "Singularity Institute for Artificial Intelligence") to develop safe artificial intelligence software, and to raise awareness of both the dangers and potential benefits it believes AI presents. In their view, the potential benefits and risks of a technological singularity necessitate the search for solutions to problems involving AI goal systems to ensure powerful AIs are not dangerous when they are created.[46][47]

- Mormon Transhumanist Association

- Christian Transhumanist Association – founded in 2013

Transhumanist leaders and scholars

Some people who have made a major impact on the advancement of transhumanism:

- Nick Bostrom – Swedish philosopher at the University of Oxford known for his work on existential risk, the anthropic principle, human enhancement ethics, superintelligence risks, the reversal test, and consequentialism.

- K. Eric Drexler

- George Dvorsky

- Robert Ettinger

- Nikolai Fyodorovich Fyodorov

- FM-2030 (October 15, 1930 – July 8, 2000) – author, teacher, transhumanist philosopher, futurist, and consultant.[48] His given name was Fereidoun M. Esfandiary. He became notable as a transhumanist with the book Are You a Transhuman?: Monitoring and Stimulating Your Personal Rate of Growth in a Rapidly Changing World, published in 1989.

- Aubrey de Grey – English author and theoretician in the field of gerontology, and the Chief Science Officer of the SENS Foundation. He is perhaps best known for his view that human beings could, in theory, live far longer than any authenticated case has to date.

- James Hughes – sociologist and bioethicist teaching health policy at Trinity College in Hartford, Connecticut in the United States. Hughes served as the executive director of the World Transhumanist Association (which has since changed its name to Humanity+) from 2004 to 2006, and moved on to serve as the executive director of the Institute for Ethics and Emerging Technologies, which he founded with Nick Bostrom.

- Julian Huxley

- Raymond Kurzweil – pioneer in optical character recognition, text-to-speech synthesis, speech recognition technology, and musical synthesizer keyboard instruments. He is most notable now as a public advocate for the futurist and transhumanist movements, and as Director of Engineering at Google, where he has a one-sentence job description: "to bring natural language understanding to Google". Books he has authored which pertain to transhumanism include The Age of Intelligent Machines, The 10% Solution for a Healthy Life, The Age of Spiritual Machines, Fantastic Voyage: Live Long Enough to Live Forever, The Singularity Is Near, Transcend: Nine Steps to Living Well Forever,[49] and How to Create a Mind. He co-founded the Singularity University, and he is also a co-founder of the Singularity Summit.

- Hans Moravec – adjunct faculty member at the Robotics Institute of Carnegie Mellon University. He is known for his work on robotics, artificial intelligence, and writings on the impact of technology. Moravec also is a futurist with many of his publications and predictions focusing on transhumanism. Moravec developed techniques in computer vision for determining the region of interest (ROI) in a scene.

- Max More – philosopher and futurist who writes, speaks, and consults on advanced decision-making about emerging technologies.

- David Pearce – utilitarian thinker and author of The Hedonistic Imperative, in which he explores how technologies such as genetic engineering, nanotechnology, pharmacology, and neurosurgery could converge to eliminate all forms of unpleasant experience in human life and produce a posthuman civilization.[50]

- Giulio Prisco

- Anders Sandberg – researcher, science debater, futurist, transhumanist, and author born in Solna, Sweden, whose recent contributions include work on cognitive enhancement[51] (methods, impacts, and policy analysis); a technical roadmap on whole brain emulation;[52] on neuroethics; and on global catastrophic risks, particularly on the question of how to take into account the subjective uncertainty in risk estimates of low-likelihood, high-consequence risk.[53]

- Stefan Lorenz Sorgner – philosopher who argues for a Nietzschean transhumanism

- Frank J. Tipler