| Intellectual disability | |
|---|---|
| Other names | Intellectual developmental disability (IDD), general learning disability |
| | Children with intellectual disabilities or other developmental conditions can compete in the Special Olympics. |
| Specialty | Psychiatry, pediatrics |
| Frequency | 153 million (2015) |

Intellectual disability (ID), also known as general learning disability and mental retardation (MR), is a generalized neurodevelopmental disorder characterized by significantly impaired intellectual and adaptive functioning. It is defined by an IQ under 70, in addition to deficits in two or more adaptive behaviors that affect everyday, general living.

Once focused almost entirely on cognition, the definition now includes both a component relating to mental functioning and one relating to an individual's functional skills in their daily environment. As a result of this focus on the person's abilities in practice, a person with an unusually low IQ may still not be considered to have intellectual disability.

Intellectual disability is subdivided into syndromic intellectual disability, in which intellectual deficits associated with other medical and behavioral signs and symptoms are present, and non-syndromic intellectual disability, in which intellectual deficits appear without other abnormalities. Down syndrome and fragile X syndrome are examples of syndromic intellectual disabilities.

Intellectual disability affects about 2–3% of the general population. Seventy-five to ninety percent of affected people have mild intellectual disability. Non-syndromic, or idiopathic, cases account for 30–50% of cases. About a quarter of cases are caused by a genetic disorder, and about 5% of cases are inherited from a person's parents. Cases of unknown cause affect about 95 million people as of 2013.

Signs and symptoms

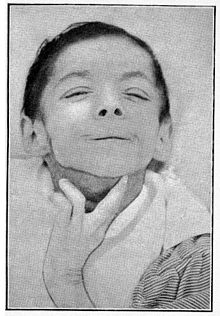

A historical image of a person with intellectual disability

Intellectual disability (ID) becomes apparent during childhood and involves deficits in mental abilities, social skills, and core activities of daily living (ADLs) when compared to same-aged peers.

There often are no physical signs of mild forms of ID, although there may be characteristic physical traits when it is associated with a genetic disorder (e.g., Down syndrome).

The level of impairment ranges in severity for each person. Some of the early signs can include:

- Delays in reaching, or failure to achieve milestones in motor skills development (sitting, crawling, walking)

- Slowness learning to talk, or continued difficulties with speech and language skills after starting to talk

- Difficulty with self-help and self-care skills (e.g., getting dressed, washing, and feeding themselves)

- Poor planning or problem-solving abilities

- Behavioral and social problems

- Failure to grow intellectually, or continued infant-like behavior

- Problems keeping up in school

- Failure to adapt or adjust to new situations

- Difficulty understanding and following social rules

In early childhood, mild ID (IQ 50–69) may not be obvious or identified until children begin school.

Even when poor academic performance is recognized, it may take expert assessment to distinguish mild intellectual disability from specific learning disability or emotional/behavioral disorders. People with mild ID are capable of learning reading and mathematics skills to approximately the level of a typical child aged nine to twelve. They can learn self-care and practical skills, such as cooking or using the local mass transit system. As individuals with intellectual disability reach adulthood, many learn to live independently and maintain gainful employment.

Moderate ID (IQ 35–49) is nearly always apparent within the first years of life. Speech delays are particularly common signs of moderate ID. People with moderate intellectual disability need considerable support in school, at home, and in the community in order to participate fully. While their academic potential is limited, they can learn simple health and safety skills and participate in simple activities. As adults, they may live with their parents, in a supportive group home, or even semi-independently with significant supportive services to help them, for example, manage their finances. They may also work in a sheltered workshop.

People with severe (IQ 20–34) or profound ID (IQ 19 or below) need more intensive support and supervision for their entire lives. They may learn some ADLs, but an intellectual disability is considered severe or profound when individuals are unable to care for themselves independently without ongoing significant assistance from a caregiver throughout adulthood.

Individuals with profound ID are completely dependent on others for all ADLs and to maintain their physical health and safety. They may be able to learn to participate in some of these activities to a limited degree.

Causes

Down syndrome is the most common genetic cause of intellectual disability.

Among children, the cause of intellectual disability is unknown for one-third to one-half of cases. About 5% of cases are inherited from a person's parents.

Genetic defects that cause intellectual disability, but are not inherited, can be caused by accidents or mutations in genetic development. Examples of such accidents are development of an extra chromosome 18 (trisomy 18) and Down syndrome, which is the most common genetic cause. Velocardiofacial syndrome and fetal alcohol spectrum disorders are the next two most common causes. However, there are many other causes. The most common are:

- Genetic conditions. Sometimes disability is caused by abnormal genes inherited from parents, errors when genes combine, or other reasons. The most prevalent genetic conditions include Down syndrome, Klinefelter syndrome, Fragile X syndrome (common among boys), neurofibromatosis, congenital hypothyroidism, Williams syndrome, phenylketonuria (PKU), and Prader–Willi syndrome. Other genetic conditions include Phelan-McDermid syndrome (22q13del), Mowat–Wilson syndrome, genetic ciliopathy, and Siderius type X-linked intellectual disability (OMIM 300263) as caused by mutations in the PHF8 gene (OMIM 300560). In the rarest of cases, abnormalities of the X or Y chromosome may also cause disability. 48,XXXX and 49,XXXXX syndromes affect a small number of girls worldwide, while boys may be affected by 49,XXXXY or 49,XYYYY. 47,XYY is not associated with significantly lowered IQ, though affected individuals may have slightly lower IQs than non-affected siblings on average.

- Problems during pregnancy. Intellectual disability can result when the fetus does not develop properly. For example, there may be a problem with the way the fetus's cells divide as it grows. A pregnant woman who drinks alcohol or gets an infection like rubella during pregnancy may also have a baby with intellectual disability.

- Problems at birth. If a baby has problems during labor and birth, such as not getting enough oxygen, he or she may have a developmental disability due to brain damage.

- Exposure to certain types of disease or toxins. Diseases like whooping cough, measles, or meningitis can cause intellectual disability if medical care is delayed or inadequate. Exposure to poisons like lead or mercury may also affect mental ability.

- Iodine deficiency, affecting approximately 2 billion people worldwide, is the leading preventable cause of intellectual disability in areas of the developing world where iodine deficiency is endemic. Iodine deficiency also causes goiter, an enlargement of the thyroid gland. Mild impairment of intelligence is more common than full-fledged cretinism, the name given to intellectual disability caused by severe iodine deficiency. Residents of certain areas of the world, due to natural deficiency and governmental inaction, are severely affected by iodine deficiency. India has 500 million people with iodine deficiency, 54 million with goiter, and 2 million with cretinism. Among other nations affected by iodine deficiency, China and Kazakhstan have instituted widespread salt iodization programs. As of 2006, Russia had not.

- Malnutrition is a common cause of reduced intelligence in parts of the world affected by famine, such as Ethiopia, and in nations struggling with extended periods of warfare that disrupt agricultural production and distribution.

- Absence of the arcuate fasciculus.

Diagnosis

According to both the American Association on Intellectual and Developmental Disabilities (Intellectual Disability: Definition, Classification, and Systems of Supports, 11th Edition) and the American Psychiatric Association's Diagnostic and Statistical Manual of Mental Disorders (DSM-IV), three criteria must be met for a diagnosis of intellectual disability: significant limitations in general mental abilities (intellectual functioning); significant limitations in one or more areas of adaptive behavior, such as communication, self-help skills, and interpersonal skills, across multiple environments, as measured by an adaptive behavior rating scale; and evidence that the limitations became apparent in childhood or adolescence. In general, people with intellectual disability have an IQ below 70, but clinical discretion may be necessary for individuals who have a somewhat higher IQ but severe impairment in adaptive functioning.

It is formally diagnosed by an assessment of IQ and adaptive behavior. A third condition, requiring onset during the developmental period, is used to distinguish intellectual disability from other conditions, such as traumatic brain injuries and dementias (including Alzheimer's disease).

Intelligence quotient

The first English-language IQ test, the Stanford–Binet Intelligence Scales, was adapted from a test battery designed for school placement by Alfred Binet in France. Lewis Terman adapted Binet's test and promoted it as a test measuring "general intelligence." Terman's test was the first widely used mental test to report scores in "intelligence quotient" form ("mental age" divided by chronological age, multiplied by 100). Current tests are scored in "deviation IQ" form, in which performance two standard deviations below the median score for the test-taker's age group is defined as IQ 70. Until the most recent revision of diagnostic standards, an IQ of 70 or below was a primary factor in diagnosing intellectual disability, and IQ scores were used to categorize degrees of intellectual disability.
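The two scoring conventions described above can be sketched in a few lines of Python. This is a minimal illustration only, assuming a norm group whose mean and standard deviation are known, and a reporting scale with mean 100 and standard deviation 15 (a common convention that is consistent with "two standard deviations below = 70", but assumed here rather than stated in the text). The function names `ratio_iq` and `deviation_iq` are hypothetical and do not describe any test publisher's actual scoring procedure, which relies on published norm tables.

```python
def ratio_iq(mental_age: float, chronological_age: float) -> float:
    """Historical 'ratio IQ': mental age divided by chronological age, times 100."""
    if chronological_age <= 0:
        raise ValueError("chronological_age must be positive")
    return 100 * mental_age / chronological_age


def deviation_iq(raw_score: float, age_group_mean: float, age_group_sd: float,
                 scale_mean: float = 100.0, scale_sd: float = 15.0) -> float:
    """Modern 'deviation IQ': the test-taker's standing relative to a
    same-age norm group, rescaled to mean 100 and (assumed) SD 15."""
    if age_group_sd <= 0:
        raise ValueError("age_group_sd must be positive")
    # z-score: how many standard deviations the raw score lies from the age-group mean
    z = (raw_score - age_group_mean) / age_group_sd
    return scale_mean + scale_sd * z
```

For example, `ratio_iq(7, 10)` gives 70.0 for a ten-year-old performing at the level of a typical seven-year-old, and `deviation_iq(30, 50, 10)` also gives 70.0, because a raw score of 30 lies two standard deviations below a hypothetical age-group mean of 50. The deviation form compares a person only with same-age peers, which is also why outdated norms matter.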

Since current diagnosis of intellectual disability is not based on IQ scores alone but must also take into account a person's adaptive functioning, the diagnosis is not made rigidly. It encompasses intellectual scores; adaptive functioning scores from an adaptive behavior rating scale, based on descriptions of known abilities provided by someone familiar with the person; and the observations of the assessment examiner, who can find out directly from the person what he or she can understand, communicate, and so on. IQ assessment must be based on a current test. This avoids the pitfall of the Flynn effect: population performance on IQ tests changes over time, so the norms of an older test no longer reflect the current population.

Distinction from other disabilities

Clinically, intellectual disability is a subtype of cognitive deficit (disabilities affecting intellectual abilities), a broader concept that also includes intellectual deficits that are too mild to qualify as intellectual disability, too specific (as in specific learning disability), or acquired later in life through acquired brain injuries or neurodegenerative diseases such as dementia. Cognitive deficits may appear at any age. Developmental disability is any disability that is due to problems with growth and development. This term encompasses many congenital medical conditions that have no mental or intellectual components, although it, too, is sometimes used as a euphemism for intellectual disability.

Limitations in more than one area

Adaptive behavior, or adaptive functioning, refers to the skills needed to live independently (or at the minimally acceptable level for age). To assess adaptive behavior, professionals compare the functional abilities of a child to those of other children of similar age. To measure it, professionals use structured interviews in which they systematically elicit information about a person's functioning in the community from people who know them well. There are many adaptive behavior scales, and accurate assessment of the quality of someone's adaptive behavior also requires clinical judgment. Certain skills are important to adaptive behavior, such as:

- Daily living skills, such as getting dressed, using the bathroom, and feeding oneself

- Communication skills, such as understanding what is said and being able to answer

- Social skills with peers, family members, spouses, adults, and others

Management

By most definitions, intellectual disability is more accurately considered a disability rather than a disease. Intellectual disability can be distinguished in many ways from mental illness, such as schizophrenia or depression.

Currently, there is no "cure" for an established disability, though with appropriate support and teaching, most individuals can learn to do many things. Some causes, such as congenital hypothyroidism, can be treated if detected early, preventing the development of an intellectual disability.

There are thousands of agencies around the world that provide assistance for people with developmental disabilities. They include state-run, for-profit, and non-profit, privately run agencies. Within one agency there could be departments that include fully staffed residential homes, day rehabilitation programs that approximate schools, workshops wherein people with disabilities can obtain jobs, programs that assist people with developmental disabilities in obtaining jobs in the community, programs that provide support for people with developmental disabilities who have their own apartments, programs that assist them with raising their children, and many more. There are also many agencies and programs for parents of children with developmental disabilities.

Beyond that, there are specific programs in which people with developmental disabilities learn basic life skills. These "goals" may take much longer for them to accomplish, but the ultimate goal is independence, which may mean anything from independence in tooth brushing to an independent residence. People with developmental disabilities learn throughout their lives and can acquire many new skills even late in life with the help of their families, caregivers, clinicians, and the people who coordinate the efforts of all of these people.

There are four broad areas of intervention that allow for active participation from caregivers, community members, clinicians, and, of course, the individual(s) with an intellectual disability. These include psychosocial treatments, behavioral treatments, cognitive-behavioral treatments, and family-oriented strategies.

Psychosocial treatments are intended primarily for children before and during the preschool years, as this is the optimum time for intervention. This early intervention should include encouragement of exploration, mentoring in basic skills, celebration of developmental advances, guided rehearsal and extension of newly acquired skills, protection from harmful displays of disapproval, teasing, or punishment, and exposure to a rich and responsive language environment.

One example of a successful intervention is the Carolina Abecedarian Project, which was conducted with over 100 children from low socioeconomic status (SES) families, beginning in infancy and continuing through the preschool years. Results indicated that by age 2, children who received the intervention had higher test scores than control group children, and their scores remained approximately 5 points higher 10 years after the end of the program. By young adulthood, children from the intervention group had better educational attainment, better employment opportunities, and fewer behavioral problems than their control-group counterparts.

Core components of behavioral treatments include language and social skills acquisition. Typically, one-to-one training is offered in which a therapist uses a shaping procedure in combination with positive reinforcement to help the child pronounce syllables until words are completed. Sometimes using pictures and visual aids, therapists aim to improve speech capacity so that the child can effectively communicate short sentences about important daily tasks (e.g., bathroom use, eating).

In a similar fashion, older children benefit from this type of training as they learn to sharpen their social skills, such as sharing, taking turns, following instruction, and smiling.

At the same time, a movement known as social inclusion attempts to increase valuable interactions between children with an intellectual disability and their non-disabled peers.

Cognitive-behavioral treatments, a combination of the previous two treatment types, involve a strategical-metastrategical learning technique that teaches children math, language, and other basic skills pertaining to memory and learning. The first goal of the training is to teach the child to be a strategical thinker by making cognitive connections and plans. Then, the therapist teaches the child to be metastrategical by teaching them to discriminate among different tasks and determine which plan or strategy suits each task.

Finally, family-oriented strategies focus on equipping the family with the skills they need to support and encourage their child or children with an intellectual disability. In general, this includes teaching assertiveness skills or behavior management techniques, as well as how to ask for help from neighbors, extended family, or day-care staff.

As the child ages, parents are taught how to approach topics such as housing/residential care, employment, and relationships. The ultimate goal of every intervention or technique is to give the child autonomy and a sense of independence using the skills he or she has acquired.

Although there is no specific medication for intellectual disability, many people with developmental disabilities have further medical complications and may be prescribed several medications. For example, autistic children with developmental delay may be prescribed antipsychotics or mood stabilizers to help with their behavior. Use of psychotropic medications such as benzodiazepines in people with intellectual disability requires monitoring and vigilance, as side effects occur commonly and are often misdiagnosed as behavioral and psychiatric problems.

Epidemiology

Intellectual disability affects about 2–3% of the general population. Between 75% and 90% of affected people have mild intellectual disability. Non-syndromic or idiopathic ID accounts for 30–50% of cases. About a quarter of cases are caused by a genetic disorder. Cases of unknown cause affect about 95 million people as of 2013. It is more common in males and in low- to middle-income countries.

History

Intellectual disability has been documented under a variety of names throughout history. For much of human history, society was unkind to those with any type of disability, and people with intellectual disability were commonly viewed as burdens on their families.

Greek and Roman philosophers, who valued reasoning abilities, disparaged people with intellectual disability as barely human. The oldest physiological view of intellectual disability is in the writings of Hippocrates in the late fifth century BCE, who believed that it was caused by an imbalance in the four humors in the brain.

Caliph Al-Walid (r. 705–715) built one of the first care homes for intellectually disabled individuals and built the first hospital which accommodated intellectually disabled individuals as part of its services. In addition, Al-Walid assigned each intellectually disabled individual a caregiver.

Until the Enlightenment in Europe, care and asylum were provided by families and the church (in monasteries and other religious communities), focusing on the provision of basic physical needs such as food, shelter, and clothing. Negative stereotypes were prominent in social attitudes of the time.

In the 13th century, England declared people with intellectual disability to be incapable of making decisions or managing their affairs. Guardianships were created to take over their financial affairs.

In the 17th century, Thomas Willis provided the first description of intellectual disability as a disease. He believed that it was caused by structural problems in the brain. According to Willis, the anatomical problems could be either an inborn condition or acquired later in life.

In the 18th and 19th centuries, housing and care moved away from families and towards an asylum model. People were placed by, or removed from, their families (usually in infancy) and housed in large professional institutions, many of which were self-sufficient through the labor of the residents. Some of these institutions provided a very basic level of education (such as differentiation between colors and basic word recognition and numeracy), but most continued to focus solely on the provision of basic needs: food, clothing, and shelter. Conditions in such institutions varied widely, but the support provided was generally non-individualized, with aberrant behavior and low levels of economic productivity regarded as a burden to society. Wealthier individuals were often able to afford higher degrees of care, such as home care or private asylums. Heavy tranquilization and assembly-line methods of support were the norm, and the medical model of disability prevailed. Services were provided based on the relative ease to the provider, not based on the needs of the individual. A survey taken in 1891 in Cape Town, South Africa, shows the distribution between different facilities. Of 2,046 persons surveyed, 1,281 were in private dwellings, 120 in jails, and 645 in asylums, with men representing nearly two-thirds of the number surveyed. In situations of scarcity of accommodation, preference was given to white men and black men (whose insanity threatened white society by disrupting employment relations and the tabooed sexual contact with white women).

In the late 19th century, in response to Charles Darwin's On the Origin of Species, Francis Galton proposed selective breeding of humans to reduce intellectual disability. Early in the 20th century, the eugenics movement became popular throughout the world. This led to forced sterilization and prohibition of marriage in most of the developed world and was later used by Adolf Hitler as a rationale for the mass murder of people with intellectual disability during the Holocaust. Eugenics was later abandoned as an evil violation of human rights, and the practice of forced sterilization and prohibition from marriage was discontinued by most of the developed world by the mid-20th century.

In 1905, Alfred Binet produced the first standardized test for measuring intelligence in children.

Although ancient Roman law had declared people with intellectual disability to be incapable of the deliberate intent to harm that was necessary for a person to commit a crime, during the 1920s, Western society believed they were morally degenerate.

Ignoring the prevailing attitude, Civitans adopted service to people with developmental disabilities as a major organizational emphasis in 1952. Their earliest efforts included workshops for special education teachers and day camps for children with disabilities, all at a time when such training and programs were almost nonexistent.

The segregation of people with developmental disabilities was not widely questioned by academics or policy-makers until the 1969 publication of Wolf Wolfensberger's seminal work "The Origin and Nature of Our Institutional Models", drawing on some of the ideas proposed by S. G. Howe 100 years earlier. This book posited that society characterizes people with disabilities as deviant, sub-human, and burdens of charity, resulting in their adoption of that "deviant" role. Wolfensberger argued that this dehumanization, and the segregated institutions that result from it, ignored the potential productive contributions that all people can make to society. He pushed for a shift in policy and practice that recognized the human needs of those with intellectual disability and provided them the same basic human rights as the rest of the population.

The publication of this book may be regarded as the first move towards the widespread adoption of the social model of disability in regard to these types of disabilities, and was the impetus for the development of government strategies for desegregation. Successful lawsuits against governments and an increasing awareness of human rights and self-advocacy also contributed to this process, resulting in the passing in the U.S. of the Civil Rights of Institutionalized Persons Act in 1980.

From the 1960s to the present, most states have moved towards the elimination of segregated institutions. Normalization and deinstitutionalization are dominant. Along with the work of Wolfensberger and others, including Gunnar and Rosemary Dybwad, a number of scandalous revelations about the horrific conditions within state institutions created public outrage that led to a shift toward a more community-based method of providing services.

By the mid-1970s, most governments had committed to de-institutionalization, and had started preparing for the wholesale movement of people into the general community, in line with the principles of normalization.

In most countries, this was essentially complete by the late 1990s, although the debate over whether or not to close institutions persists in some states, including Massachusetts.

In the past, lead poisoning and infectious diseases were significant causes of intellectual disability. Some causes of intellectual disability are decreasing, as medical advances, such as vaccination, increase. Other causes are increasing as a proportion of cases, perhaps due to rising maternal age, which is associated with several syndromic forms of intellectual disability.

Along with the changes in terminology, and the downward drift in acceptability of the old terms, institutions of all kinds have had to repeatedly change their names. This affects the names of schools, hospitals, societies, government departments, and academic journals. For example, the Midlands Institute of Mental Subnormality became the British Institute of Mental Handicap and is now the British Institute of Learning Disability. This phenomenon is shared with mental health and motor disabilities, and seen to a lesser degree in sensory disabilities.

Terminology

The terms used for this condition are subject to a process called the euphemism treadmill: whatever term is chosen for this condition eventually comes to be perceived as an insult. The terms mental retardation and mentally retarded were invented in the middle of the 20th century to replace the previous set of terms, which included "imbecile" and "moron" and are now considered offensive. By the end of the 20th century, these terms had themselves come to be widely seen as disparaging, politically incorrect, and in need of replacement. The term intellectual disability is now preferred by most advocates and researchers in most English-speaking countries.

The term "mental retardation" was used in the American Psychiatric Association's DSM-IV (1994) and in the World Health Organization's ICD-10 (codes F70–F79). In the next revision, the ICD-11, this term has been replaced by the term "disorders of intellectual development" (codes 6A00–6A04; 6A00.Z for the "unspecified" diagnosis code). The term "intellectual disability (intellectual developmental disorder)" is used in DSM-5 (2013). As of 2013, "intellectual disability (intellectual developmental disorder)" is the term that has come into common use among educational, psychiatric, and other professionals over the past two decades.

Because of its specificity and lack of confusion with other conditions, the term "mental retardation" is still sometimes used in professional medical settings around the world, such as formal scientific research and health insurance paperwork.

Several traditional terms that long predate psychiatry are simple forms of abuse in common usage today; they are often encountered in old documents such as books, academic papers, and census forms. For example, the British census of 1901 has a column heading including the terms imbecile and feeble-minded.

Vaguer expressions like developmentally disabled, special, or challenged have been used instead of the term mentally retarded. The term developmental delay was popular among caretakers and parents of individuals with intellectual disability because delay suggests that a person is slowly reaching his or her full potential, rather than having a lifelong condition.

Usage has changed over the years and differed from country to country. For example, mental retardation in some contexts covers the whole field but previously applied to what is now the mild MR group. Feeble-minded used to mean mild MR in the UK, and once applied in the US to the whole field. "Borderline intellectual functioning" is not currently defined, but the term may be used to apply to people with IQs in the 70s. People with IQs of 70 to 85 used to be eligible for special consideration in the US public education system on grounds of intellectual disability.

- Cretin is the oldest and comes from a dialectal French word for Christian. The implication was that people with significant intellectual or developmental disabilities were "still human" (or "still Christian") and deserved to be treated with basic human dignity. Individuals with the condition were considered to be incapable of sinning, thus "christ-like" in their disposition. This term has not been used in scientific endeavors since the middle of the 20th century and is generally considered a term of abuse. Although cretin is no longer in use, the term cretinism is still used to refer to the mental and physical disability resulting from untreated congenital hypothyroidism.

- Amentia has a long history, mostly associated with dementia. The difference between amentia and dementia was originally defined by time of onset. Amentia was the term used to denote an individual who developed deficits in mental functioning early in life, while dementia included individuals who develop mental deficiencies as adults. Theodor Meynert, in his lectures of the 1890s, described amentia as a form of sudden-onset confusion (German: Verwirrtheit), often with hallucinations. This term was long in use in psychiatry in this sense. Emil Kraepelin in the 1910s wrote that “acute confusion (amentia)” is a form of febrile delirium. By 1912, amentia was a classification lumping "idiots, imbeciles, and feeble minded" individuals in a category separate from a dementia classification, in which the onset is later in life. In Russian psychiatry the term “amentia” denotes a form of clouding of consciousness dominated by confusion, true hallucinations, incoherence of thinking and speech, and chaotic movements. In Russia, “amentia” (Russian: аменция) is not associated with intellectual disability and means only clouding of consciousness.

- Idiot indicated the greatest degree of intellectual disability, where the mental age is two years or less, and the person cannot guard himself or herself against common physical dangers. The term was gradually replaced by the term profound mental retardation (which has itself since been replaced by other terms).

- Imbecile indicated an intellectual disability less extreme than idiocy and not necessarily inherited. The range it covered is now usually subdivided into two categories, known as severe intellectual disability and moderate intellectual disability.

- Moron was defined by the American Association for the Study of the Feeble-minded in 1910, following work by Henry H. Goddard, as the term for an adult with a mental age between eight and twelve; mild intellectual disability is now the term for this condition. Alternative definitions of these terms based on IQ were also used. This group was known in UK law from 1911 to 1959–60 as feeble-minded.

- Mongolism and Mongoloid idiot were medical terms used to identify someone with Down syndrome, as the doctor who first described the syndrome, John Langdon Down, believed that children with Down syndrome shared facial similarities with Blumenbach's "Mongolian race". The Mongolian People's Republic requested that the medical community cease use of the term as a referent to intellectual disability. Their request was granted in the 1960s, when the World Health Organization agreed that the term should cease being used within the medical community.

- In the field of special education, educable (or "educable intellectual disability") refers to ID students with IQs of approximately 50–75 who can progress academically to a late elementary level. Trainable (or "trainable intellectual disability") refers to students whose IQs fall below 50 but who are still capable of learning personal hygiene and other living skills in a sheltered setting, such as a group home. In many areas, these terms have been replaced by use of "moderate" and "severe" intellectual disability. While the names change, the meaning stays roughly the same in practice.

- Retarded comes from the Latin retardare, "to make slow, delay, keep back, or hinder," so mental retardation meant the same as mentally delayed. The term was recorded in 1426 as a "fact or action of making slower in movement or time". The first record of retarded in relation to being mentally slow was in 1895.

The term mentally retarded was used to replace terms like idiot, moron, and imbecile because retarded was not then a derogatory term. By the 1960s, however, the term had taken on a partially derogatory meaning as well. The noun retard is particularly seen as pejorative; a BBC survey in 2003 ranked it as the most offensive disability-related word, ahead of terms such as spastic (or its abbreviation spaz) and mong. The terms mentally retarded and mental retardation are still fairly common, but the Special Olympics, Best Buddies, and over 100 other organizations are currently striving to eliminate their use in everyday conversation by referring to the word retard and its variants as the "r-word", in an effort to equate it with the word nigger and the associated euphemism "n-word". These efforts have resulted in federal legislation, sometimes known as "Rosa's Law", to replace the term mentally retarded with the term intellectual disability in some federal statutes.

The term mental retardation was a diagnostic term denoting the group of disconnected categories of mental functioning, such as idiot, imbecile, and moron, derived from early IQ tests, which acquired pejorative connotations in popular discourse. Mental retardation itself acquired negative and shameful connotations over the last few decades due to the use of the words retarded and retard as insults. This may have contributed to its replacement with euphemisms such as mentally challenged or intellectually disabled. While developmental disability includes many other disorders, developmental disability and developmental delay (for people under the age of 18) are generally considered more polite terms than mental retardation.

United States

Special Olympics USA team in July 2019

- In North America, intellectual disability is subsumed into the broader term developmental disability, which also includes epilepsy, autism, cerebral palsy, and other disorders that develop during the developmental period (birth to age 18). Because service provision is tied to the designation "developmental disability", it is used by many parents, direct support professionals, and physicians. In the United States, however, in school-based settings, the more specific term mental retardation or, more recently (and preferably), intellectual disability, is still typically used, and is one of 13 categories of disability under which children may be identified for special education services under Public Law 108-446.

- The phrase intellectual disability is increasingly used to describe people with significantly below-average cognitive ability. It is sometimes used to separate general intellectual limitations from specific, limited deficits, and to indicate that the condition is not an emotional or psychological disability. It is not specific to congenital disorders such as Down syndrome.

The American Association on Mental Retardation changed its name to the American Association on Intellectual and Developmental Disabilities (AAIDD) in 2007, and soon thereafter changed the names of its scholarly journals to reflect the term "intellectual disability". In 2010, the AAIDD released the 11th edition of its terminology and classification manual, which also used the term intellectual disability.

United Kingdom

In the UK, mental handicap had become the common medical term, replacing mental subnormality in Scotland and mental deficiency in England and Wales, until Stephen Dorrell, Secretary of State for Health for the United Kingdom from 1995–97, changed the NHS's designation to learning disability.

The new term is not yet widely understood, and is often taken to refer to problems affecting schoolwork (the American usage), which are known in the UK as "learning difficulties". British social workers may use "learning difficulty" to refer to both people with intellectual disability and those with conditions such as dyslexia. In education, "learning difficulties" is applied to a wide range of conditions: "specific learning difficulty" may refer to dyslexia, dyscalculia or developmental coordination disorder, while "moderate learning difficulties", "severe learning difficulties" and "profound learning difficulties" refer to more significant impairments.

In England and Wales between 1983 and 2008, the Mental Health Act 1983 defined "mental impairment" and "severe mental impairment" as "a state of arrested or incomplete development of mind which includes significant/severe impairment of intelligence and social functioning and is associated with abnormally aggressive or seriously irresponsible conduct on the part of the person concerned."

As behavior was involved, these were not necessarily permanent conditions: they were defined for the purpose of authorizing detention in hospital or guardianship. The term mental impairment was removed from the Act in November 2008, but the grounds for detention remained. However, English statute law uses mental impairment elsewhere in a less well-defined manner (e.g. to allow exemption from taxes), implying that intellectual disability without any behavioral problems is what is meant.

A BBC poll conducted in the United Kingdom came to the conclusion that 'retard' was the most offensive disability-related word. Conversely, when a contestant on a live broadcast of Celebrity Big Brother used the phrase "walking like a retard", the communications regulator Ofcom did not uphold the complaints, despite objections from the public and the charity Mencap, saying "it was not used in an offensive context [...] and had been used light-heartedly". It was, however, noted that two previous similar complaints about other shows had been upheld.

Australia

In the past, Australia has used British and American terms interchangeably, including "mental retardation" and "mental handicap". Today, "intellectual disability" is the preferred and more commonly used descriptor.

Society and culture

Severely disabled girl in Bhutan

People with intellectual disabilities are often not seen as full citizens of society. Person-centered planning and related approaches are seen as ways of addressing the continued labeling and exclusion of socially devalued people, such as people with disabilities, by encouraging a focus on the person as someone with capacities and gifts as well as support needs. The self-advocacy movement promotes the right of self-determination and self-direction by people with intellectual disabilities, which means allowing them to make decisions about their own lives.

Until the middle of the 20th century, people with intellectual disabilities were routinely excluded from public education, or educated away from other typically developing children. Compared to peers who were segregated in special schools, students who are mainstreamed or included in regular classrooms report similar levels of stigma and social self-conception, but more ambitious plans for employment.

As adults, they may live independently, with family members, or in different types of institutions organized to support people with disabilities. About 8% currently live in an institution or a group home.

In the United States, the average lifetime direct cost associated with an intellectual disability, such as medical and educational expenses, amounts to $223,000 per person in 2003 US dollars. The indirect costs were estimated at $771,000, due to shorter lifespans and lower-than-average economic productivity.

The total direct and indirect costs, which amount to a little more than a million dollars, are slightly more than the economic costs associated with cerebral palsy, and double those associated with serious vision or hearing impairments.

Of the costs, about 14% is due to increased medical expenses (not including what is normally incurred by the typical person), and 10% is due to direct non-medical expenses, such as the excess cost of special education compared to standard schooling. The largest share, 76%, consists of indirect costs reflecting reduced productivity and shortened lifespans. Some expenses, such as ongoing costs to family caregivers or the extra costs associated with living in a group home, were excluded from this calculation.

Health disparities

People with intellectual disability as a group have higher rates of adverse health conditions such as epilepsy and neurological disorders, gastrointestinal disorders, and behavioral and psychiatric problems compared to people without disabilities.

Adults also have a higher prevalence of poor social determinants of health, behavioral risk factors, depression, diabetes, and poor or fair health status than adults without intellectual disability.

In the United Kingdom people with intellectual disability live on average 16 years less than the general population.