A software bug is an error, flaw or fault in a computer program or system that causes it to produce an incorrect or unexpected result, or to behave in unintended ways. The process of finding and fixing bugs is termed "debugging" and often uses formal techniques or tools to pinpoint bugs. Since the 1950s, some computer systems have been designed to also deter, detect or auto-correct various computer bugs during operations.

Most bugs arise from mistakes and errors made in either a program's design or its source code, or in components and operating systems used by such programs. A few are caused by compilers producing incorrect code. A program that contains many bugs, and/or bugs that seriously interfere with its functionality, is said to be buggy (defective). Bugs can trigger errors that may have ripple effects. Bugs may have subtle effects or cause the program to crash or freeze the computer. Other bugs qualify as security bugs and might, for example, enable a malicious user to bypass access controls in order to obtain unauthorized privileges.

Some software bugs have been linked to disasters. Bugs in code that controlled the Therac-25 radiation therapy machine were directly responsible for patient deaths in the 1980s. In 1996, the European Space Agency's US$1 billion prototype Ariane 5 rocket had to be destroyed less than a minute after launch due to a bug in the on-board guidance computer program. In June 1994, a Royal Air Force Chinook helicopter crashed into the Mull of Kintyre, killing 29. This was initially dismissed as pilot error, but an investigation by Computer Weekly convinced a House of Lords inquiry that it may have been caused by a software bug in the aircraft's engine-control computer.

In 2002, a study commissioned by the US Department of Commerce's National Institute of Standards and Technology concluded that "software bugs, or errors, are so prevalent and so detrimental that they cost the US economy an estimated $59 billion annually, or about 0.6 percent of the gross domestic product".

History

The Middle English word bugge is the basis for the terms "bugbear" and "bugaboo" as terms used for a monster.

The term "bug" to describe defects has been a part of engineering jargon since the 1870s and predates electronic computers and computer software; it may have originally been used in hardware engineering to describe mechanical malfunctions. For instance, Thomas Edison wrote the following words in a letter to an associate in 1878:

It has been just so in all of my inventions. The first step is an intuition, and comes with a burst, then difficulties arise—this thing gives out and [it is] then that "Bugs"—as such little faults and difficulties are called—show themselves and months of intense watching, study and labor are requisite before commercial success or failure is certainly reached.

Baffle Ball, the first mechanical pinball game, was advertised as being "free of bugs" in 1931. Problems with military gear during World War II were referred to as bugs (or glitches). In the 1940 film, Flight Command, a defect in a piece of direction-finding gear is called a "bug". In a book published in 1942, Louise Dickinson Rich, speaking of a powered ice cutting machine, said, "Ice sawing was suspended until the creator could be brought in to take the bugs out of his darling."

Isaac Asimov used the term "bug" to relate to issues with a robot in his short story "Catch That Rabbit", published in 1944.

A page from the Harvard Mark II electromechanical computer's log, featuring a dead moth that was removed from the device.

The term "bug" was used in an account by computer pioneer Grace Hopper, who publicized the cause of a malfunction in an early electromechanical computer. A typical version of the story is:

In 1946, when Hopper was released from active duty, she joined the Harvard Faculty at the Computation Laboratory where she continued her work on the Mark II and Mark III. Operators traced an error in the Mark II to a moth trapped in a relay, coining the term bug. This bug was carefully removed and taped to the log book. Stemming from the first bug, today we call errors or glitches in a program a bug.

Hopper did not find the bug, as she readily acknowledged. The date in the log book was September 9, 1947. The operators who found it, including William "Bill" Burke, later of the Naval Weapons Laboratory, Dahlgren, Virginia, were familiar with the engineering term and amusedly kept the insect with the notation "First actual case of bug being found." Hopper loved to recount the story. This log book, complete with attached moth, is part of the collection of the Smithsonian National Museum of American History.

The related term "debug" also appears to predate its usage in computing: the Oxford English Dictionary's etymology of the word contains an attestation from 1945, in the context of aircraft engines.

The concept that software might contain errors dates back to Ada Lovelace's 1843 notes on the analytical engine, in which she speaks of the possibility of program "cards" for Charles Babbage's analytical engine being erroneous:

... an analysing process must equally have been performed in order to furnish the Analytical Engine with the necessary operative data; and that herein may also lie a possible source of error. Granted that the actual mechanism is unerring in its processes, the cards may give it wrong orders.

"Bugs in the System" report

The Open Technology Institute, run by New America, released a report, "Bugs in the System", in August 2016 stating that U.S. policymakers should make reforms to help researchers identify and address software bugs. The report "highlights the need for reform in the field of software vulnerability discovery and disclosure." One of the report's authors said that Congress has not done enough to address cyber software vulnerability, even though Congress has passed a number of bills to combat the larger issue of cyber security.

Government researchers, companies, and cyber security experts are the people who typically discover software flaws. The report calls for reforming computer crime and copyright laws.

The Computer Fraud and Abuse Act, the Digital Millennium Copyright Act and the Electronic Communications Privacy Act criminalize and create civil penalties for actions that security researchers routinely engage in while conducting legitimate security research, the report said.

Terminology

While the use of the term "bug" to describe software errors is common, many have suggested that it should be abandoned. One argument is that the word "bug" is divorced from a sense that a human being caused the problem, and instead implies that the defect arose on its own, leading to a push to abandon the term "bug" in favor of terms such as "defect", with limited success. Beginning in the 1970s, Gary Kildall somewhat humorously suggested using the term "blunder".

In software engineering, mistake metamorphism (from Greek meta = "change", morph = "form") refers to the evolution of a defect in the final stage of software deployment. Transformation of a "mistake" committed by an analyst in the early stages of the software development lifecycle, which leads to a "defect" in the final stage of the cycle, has been called "mistake metamorphism". Different stages of a "mistake" in the entire cycle may be described as "mistakes", "anomalies", "faults", "failures", "errors", "exceptions", "crashes", "glitches", "bugs", "defects", "incidents", or "side effects".

Prevention

The software industry has put much effort into reducing bug counts. These efforts include:

Typographical errors

Bugs usually appear when the programmer makes a logic error. Various innovations in programming style and defensive programming are designed to make these bugs less likely, or easier to spot. Some typos, especially of symbols or logical/mathematical operators, allow the program to operate incorrectly, while others such as a missing symbol or misspelled name may prevent the program from operating. Compiled languages can reveal some typos when the source code is compiled.

Development methodologies

Several schemes assist in managing programmer activity so that fewer bugs are produced. Software engineering (which addresses software design issues as well) applies many techniques to prevent defects. For example, formal program specifications state the exact behavior of programs so that design bugs may be eliminated. Unfortunately, formal specifications are impractical for anything but the shortest programs, because of problems of combinatorial explosion and indeterminacy.

Unit testing involves writing a test for every function (unit) that a program is to perform.

In test-driven development, unit tests are written before the code, and the code is not considered complete until all tests pass.
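
As a minimal sketch of what a unit test can look like, the following Python example uses the standard `unittest` module. The function `alphabetize`, the test class and the helper `run_tests` are hypothetical names chosen for illustration only.

```python
import unittest


def alphabetize(words):
    """Return the words sorted alphabetically, ignoring case (hypothetical unit under test)."""
    return sorted(words, key=str.lower)


class AlphabetizeTest(unittest.TestCase):
    """One small test per behavior the unit is expected to have."""

    def test_sorts_ignoring_case(self):
        self.assertEqual(alphabetize(["pear", "Apple", "fig"]), ["Apple", "fig", "pear"])

    def test_empty_list(self):
        self.assertEqual(alphabetize([]), [])


def run_tests():
    """Run the test case and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(AlphabetizeTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

In a test-driven workflow, the two test methods would be written first and would fail until `alphabetize` is implemented.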

Agile software development involves frequent software releases with relatively small changes. Defects are revealed by user feedback.

Open source development allows anyone to examine source code. A school of thought popularized by Eric S. Raymond as Linus's law says that popular open-source software has more chance of having few or no bugs than other software, because "given enough eyeballs, all bugs are shallow". This assertion has been disputed, however: computer security specialist Elias Levy wrote that "it is easy to hide vulnerabilities in complex, little understood and undocumented source code," because, "even if people are reviewing the code, that doesn't mean they're qualified to do so." An example of this actually happening, accidentally, was the 2008 OpenSSL vulnerability in Debian.

Programming language support

Programming languages include features to help prevent bugs, such as static type systems, restricted namespaces and modular programming. For example, when a programmer writes (pseudocode) LET REAL_VALUE PI = "THREE AND A BIT", although this may be syntactically correct, the code fails a type check. Compiled languages catch this without having to run the program. Interpreted languages catch such errors at runtime. Some languages deliberately exclude features that easily lead to bugs, at the expense of slower performance, the general principle being that it is almost always better to write simpler, slower code than inscrutable code that runs slightly faster, especially considering that maintenance cost is substantial. For example, the Java programming language does not support pointer arithmetic; implementations of some languages such as Pascal and scripting languages often have runtime bounds checking of arrays, at least in a debugging build.
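
The runtime checks mentioned above can be illustrated in Python, an interpreted language that reports both type errors and out-of-range indexes while the program runs. This is only a sketch; the function names `circle_area`, `try_area` and `read_element` are hypothetical.

```python
def circle_area(radius, pi):
    """Compute pi * radius squared; no types are checked until this line executes."""
    return pi * radius * radius


def try_area(radius, pi):
    """Return the area, or None if the runtime type check rejects the operands."""
    try:
        return circle_area(radius, pi)
    except TypeError:
        # Multiplying a string by a float is rejected when the statement runs.
        return None


def read_element(values, index):
    """Return values[index], or None if the runtime bounds check rejects the index."""
    try:
        return values[index]
    except IndexError:
        # Python refuses to read past the end of the list instead of
        # returning whatever happens to be in adjacent memory.
        return None
```

Calling `try_area(2.0, "THREE AND A BIT")` returns `None` because the error surfaces only at runtime; a statically typed language would typically reject the equivalent program before it ran.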

Code analysis

Tools for code analysis help developers by inspecting the program text beyond the compiler's capabilities to spot potential problems. Although in general the problem of finding all programming errors given a specification is not solvable, these tools exploit the fact that human programmers tend to make certain kinds of simple mistakes often when writing software.
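
To make the idea concrete, here is a toy checker written with Python's standard `ast` module. It looks for one common mistake, a mutable literal used as a default argument. It is a sketch of the general approach rather than a description of any particular analysis tool; `find_mutable_defaults` and `SAMPLE` are hypothetical names.

```python
import ast


def find_mutable_defaults(source):
    """Return (function name, line number) pairs for list, dict or set literal defaults."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # Positional defaults plus keyword-only defaults (None means "no default").
            defaults = list(node.args.defaults)
            defaults += [d for d in node.args.kw_defaults if d is not None]
            for default in defaults:
                if isinstance(default, (ast.List, ast.Dict, ast.Set)):
                    findings.append((node.name, default.lineno))
    return findings


# Sample program text to inspect; it is parsed, never executed.
SAMPLE = '''
def append_item(item, bucket=[]):
    bucket.append(item)
    return bucket

def greet(name, greeting="hello"):
    return greeting + ", " + name
'''
```

The checker only reads the program text, which is why such tools can flag suspicious code without running it.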

Instrumentation

Tools to monitor the performance of the software as it is running, either specifically to find problems such as bottlenecks or to give assurance as to correct working, may be embedded in the code explicitly (perhaps as simple as a statement saying PRINT "I AM HERE"), or provided as tools. It is often a surprise to find where most of the time is taken by a piece of code, and this removal of assumptions might cause the code to be rewritten.
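
Instrumentation embedded in the code might look like the following Python sketch, which records how long each call to a decorated function takes. The decorator `timed`, the dictionary `timings` and the function `sum_of_squares` are hypothetical names used only for illustration.

```python
import time
from functools import wraps

# Hypothetical store: function name -> list of elapsed times in seconds.
timings = {}


def timed(func):
    """Wrap func so that the duration of every call is recorded in timings."""
    @wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            # Record the elapsed time even if func raised an exception.
            elapsed = time.perf_counter() - start
            timings.setdefault(func.__name__, []).append(elapsed)
    return wrapper


@timed
def sum_of_squares(n):
    """Example workload whose running time is being measured."""
    return sum(i * i for i in range(n))
```

After the program has run for a while, inspecting `timings` shows where time was actually spent, which may differ from where the programmer assumed it was spent.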

Testing

Software testers are people whose primary task is to find bugs, or write code to support testing. On some projects, more resources may be spent on testing than in developing the program.

Measurements during testing can provide an estimate of the number of likely bugs remaining; this becomes more reliable the longer a product is tested and developed.

Debugging

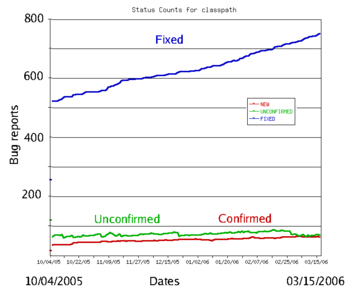

The typical bug history (GNU Classpath project data). A new bug submitted by the user is unconfirmed. Once it has been reproduced by a developer, it is a confirmed bug. The confirmed bugs are later fixed. Bugs belonging to other categories (unreproducible, will not be fixed, etc.) are usually in the minority.

Finding and fixing bugs, or debugging, is a major part of computer programming. Maurice Wilkes, an early computing pioneer, described his realization in the late 1940s that much of the rest of his life would be spent finding mistakes in his own programs.

Usually, the most difficult part of debugging is finding the bug. Once it is found, correcting it is usually relatively easy. Programs known as debuggers help programmers locate bugs by executing code line by line, watching variable values, and providing other features to observe program behavior. Without a debugger, code may be added so that messages or values may be written to a console or to a window or log file to trace program execution or show values.
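
Tracing without a debugger, as described above, can be sketched with Python's standard `logging` module. The function `average` and the message formats are hypothetical; the point is only that intermediate values are written out as the program executes.

```python
import logging

# Send debug-level messages to the console, tagged with the function name.
logging.basicConfig(level=logging.DEBUG, format="%(levelname)s %(funcName)s: %(message)s")
log = logging.getLogger(__name__)


def average(values):
    """Return the arithmetic mean, logging each intermediate value."""
    log.debug("received %d values: %r", len(values), values)
    total = sum(values)
    log.debug("total=%r", total)
    result = total / len(values)
    log.debug("result=%r", result)
    return result
```

Raising the logging level to `logging.WARNING` silences the trace without removing the statements, which is one reason a logging facility is often preferred over bare print statements.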

However, even with the aid of a debugger, locating bugs is something of an art. It is not uncommon for a bug in one section of a program to cause failures in a completely different, apparently unrelated section, thus making it especially difficult to track (for example, an error in a graphics rendering routine causing a file I/O routine to fail).

Sometimes, a bug is not an isolated flaw, but represents an error of thinking or planning on the part of the programmer. Such logic errors require a section of the program to be overhauled or rewritten. As a part of code review, stepping through the code and imagining or transcribing the execution process may often find errors without ever reproducing the bug as such.

More typically, the first step in locating a bug is to reproduce it reliably. Once the bug is reproducible, the programmer may use a debugger or other tool while reproducing the error to find the point at which the program went astray.

Some bugs are revealed by inputs that may be difficult for the programmer to re-create. One cause of the Therac-25 radiation machine deaths was a bug (specifically, a race condition) that occurred only when the machine operator very rapidly entered a treatment plan; it took days of practice to become able to do this, so the bug did not manifest in testing or when the manufacturer attempted to duplicate it. Other bugs may stop occurring whenever the setup is augmented to help find the bug, such as running the program with a debugger; these are called heisenbugs (humorously named after the Heisenberg uncertainty principle).

Since the 1990s, particularly following the Ariane 5 Flight 501 disaster, interest in automated aids to debugging, such as static code analysis by abstract interpretation, has risen.

Some classes of bugs have nothing to do with the code. Faulty documentation or hardware may lead to problems in system use, even though the code matches the documentation. In some cases, changes to the code eliminate the problem even though the code then no longer matches the documentation. Embedded systems frequently work around hardware bugs, since making a new version of a ROM is much cheaper than remanufacturing the hardware, especially if they are commodity items.

Benchmark of bugs

To facilitate reproducible research on testing and debugging, researchers use curated benchmarks of bugs:

- the Siemens benchmark

- ManyBugs is a benchmark of 185 C bugs in nine open-source programs.

- Defects4J is a benchmark of 341 Java bugs from 5 open-source projects. It contains the corresponding patches, which cover a variety of patch types.

- BEARS is a benchmark of continuous integration build failures focusing on test failures. It has been created by monitoring builds from open-source projects on Travis CI.

Bug management

Bug management includes the process of documenting, categorizing, assigning, reproducing, correcting and releasing the corrected code. Proposed changes to software (bugs as well as enhancement requests and even entire releases) are commonly tracked and managed using bug tracking systems or issue tracking systems. The items added may be called defects, tickets, issues, or, following the agile development paradigm, stories and epics. Categories may be objective, subjective or a combination, such as version number, area of the software, severity and priority, as well as what type of issue it is, such as a feature request or a bug.

Severity

Severity is the impact the bug has on system operation. This impact may be data loss, financial loss, loss of goodwill or wasted effort. Severity levels are not standardized. Impacts differ across industries. A crash in a video game has a totally different impact than a crash in a web browser or a real-time monitoring system. For example, bug severity levels might be "crash or hang", "no workaround" (meaning there is no way the customer can accomplish a given task), "has workaround" (meaning the user can still accomplish the task), "visual defect" (for example, a missing image or displaced button or form element), or "documentation error". Some software publishers use more qualified severities such as "critical", "high", "low", "blocker" or "trivial". The severity of a bug may be a separate category to its priority for fixing, and the two may be quantified and managed separately.

Priority

Priority controls where a bug falls on the list of planned changes. The priority is decided by each software producer. Priorities may be numerical, such as 1 through 5, or named, such as "critical", "high", "low", or "deferred". These rating scales may be similar or even identical to severity ratings, but are evaluated as a combination of the bug's severity with its estimated effort to fix; a bug with low severity but easy to fix may get a higher priority than a bug with moderate severity that requires excessive effort to fix. Priority ratings may be aligned with product releases, such as "critical" priority indicating all the bugs that must be fixed before the next software release.

Software releases

It is common practice to release software with known, low-priority bugs. Most big software projects maintain two lists of "known bugs": those known to the software team, and those to be told to users. The second list informs users about bugs that are not fixed in a specific release, and workarounds may be offered. Releases are of different kinds. Bugs of sufficiently high priority may warrant a special release of part of the code containing only modules with those fixes. These are known as patches. Most releases include a mixture of behavior changes and multiple bug fixes. Releases that emphasize bug fixes are known as maintenance releases. Releases that emphasize feature additions/changes are known as major releases and often have names to distinguish the new features from the old.

Reasons that a software publisher opts not to patch or even fix a particular bug include:

- A deadline must be met and resources are insufficient to fix all bugs by the deadline.

- The bug is already fixed in an upcoming release, and it is not of high priority.

- The changes required to fix the bug are too costly or affect too many other components, requiring a major testing activity.

- It may be suspected, or known, that some users are relying on the existing buggy behavior; a proposed fix may introduce a breaking change.

- The problem is in an area that will be obsolete with an upcoming release; fixing it is unnecessary.

- It's "not a bug". A misunderstanding has arisen between expected and perceived behavior, when such misunderstanding is not due to confusion arising from design flaws, or faulty documentation.

Types

In software development projects, a "mistake" or "fault" may be introduced at any stage. Bugs arise from oversights or misunderstandings made by a software team during specification, design, coding, data entry or documentation. For example, in a relatively simple program to alphabetize a list of words, the design might fail to consider what should happen when a word contains a hyphen. Or, when converting an abstract design into code, the coder might inadvertently create an off-by-one error and fail to sort the last word in a list. Errors may be as simple as a typing error: a "<" where a ">" was intended.
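
The off-by-one scenario above can be sketched in Python. The insertion sort below is a hypothetical illustration: the buggy variant stops one index early, so the last word is never moved into place.

```python
def insertion_sort(words, stop):
    """Insertion-sort a copy of words, processing indexes 1 up to stop - 1."""
    result = list(words)
    for i in range(1, stop):
        current = result[i]
        j = i - 1
        # Shift larger words one slot to the right to make room for current.
        while j >= 0 and result[j] > current:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = current
    return result


def sort_words_buggy(words):
    """Off-by-one: the loop bound excludes the final index."""
    return insertion_sort(words, len(words) - 1)


def sort_words_fixed(words):
    """Correct bound: every index from 1 to the last one is processed."""
    return insertion_sort(words, len(words))
```

With the input `["pear", "fig", "apple"]`, the buggy version leaves "apple" at the end, while the fixed version returns the words in alphabetical order.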

Another category of bug is the race condition, which may occur when programs have multiple components executing at the same time. If the components interact in a different order than the developer intended, they could interfere with each other and stop the program from completing its tasks. These bugs may be difficult to detect or anticipate, since they may not occur during every execution of a program.
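
A race condition of this kind can be sketched in Python with a counter shared between threads. The class `Counter` and the function `run_counter` are hypothetical. The unsafe method may lose updates on some runs and not on others, which is exactly what makes such bugs hard to reproduce; the safe method guards the update with a lock.

```python
import threading


class Counter:
    """A counter shared between threads."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment_unsafe(self):
        # Read-modify-write without a lock: two threads may read the same
        # old value, so one of the two updates can be lost.
        current = self.value
        self.value = current + 1

    def increment_safe(self):
        # The lock ensures only one thread at a time performs the update.
        with self._lock:
            self.value += 1


def run_counter(safe, threads=4, per_thread=10_000):
    """Increment one shared counter from several threads and return the final value."""
    counter = Counter()
    increment = counter.increment_safe if safe else counter.increment_unsafe

    def worker():
        for _ in range(per_thread):
            increment()

    pool = [threading.Thread(target=worker) for _ in range(threads)]
    for thread in pool:
        thread.start()
    for thread in pool:
        thread.join()
    return counter.value
```

`run_counter(safe=True)` always returns the full count, whereas `run_counter(safe=False)` may return less, depending on how the threads happen to interleave.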

Conceptual errors are a developer's misunderstanding of what the software must do. The resulting software may perform according to the developer's understanding, but not what is really needed. Other types:

Arithmetic

- Division by zero.

- Arithmetic overflow or underflow.

- Loss of arithmetic precision due to rounding or numerically unstable algorithms.
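
Two of the arithmetic bugs listed above can be sketched in Python. The helpers `safe_ratio` and `naive_total` are hypothetical; `math.fsum` and `math.isclose` are standard-library functions.

```python
import math


def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    if denominator == 0:
        return None  # Guard against division by zero instead of raising.
    return numerator / denominator


def naive_total(values):
    """Add floating-point values one by one, accumulating rounding error."""
    total = 0.0
    for value in values:
        total += value
    return total


TENTHS = [0.1] * 10
NAIVE = naive_total(TENTHS)    # slightly off, because 0.1 has no exact binary form
ACCURATE = math.fsum(TENTHS)   # fsum tracks the lost low-order bits
```

Because of such rounding, comparing floating-point results with `==` is itself a common source of bugs; `math.isclose` is one way to compare within a tolerance.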

Logic

- Infinite loops and infinite recursion.

- Off-by-one error, counting one too many or too few when looping.

Syntax

- Use of the wrong operator, such as performing an assignment instead of an equality test. For example, in some languages x=5 sets the value of x to 5, while x==5 checks whether x is currently 5. In interpreted languages, such code may fail only when it runs; compilers can catch some such errors before testing begins.
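
Whether this mistake is caught early depends on the language. C, for example, accepts an assignment inside a condition, while Python rejects it as a syntax error when the source is compiled to bytecode, before any statement runs. The sketch below uses the built-in `compile` function to show this; the helper name `compiles` is hypothetical.

```python
def compiles(source):
    """Return True if Python accepts the source text, False on a syntax error."""
    try:
        compile(source, "<example>", "exec")  # Parses and compiles; does not execute.
        return True
    except SyntaxError:
        return False


EQUALITY_TEST = "x = 5\nif x == 5:\n    pass\n"
ASSIGNMENT_IN_CONDITION = "x = 5\nif x = 5:\n    pass\n"
```

Here `compiles(EQUALITY_TEST)` succeeds and `compiles(ASSIGNMENT_IN_CONDITION)` fails, so this particular typo never reaches testing in Python.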

Resource

- Null pointer dereference.

- Using an uninitialized variable.

- Using an otherwise valid instruction on the wrong data type (see packed decimal/binary coded decimal).

- Access violations.

- Resource leaks, where a finite system resource (such as memory or file handles) becomes exhausted by repeated allocation without release.

- Buffer overflow, in which a program tries to store data past the end of allocated storage. This may or may not lead to an access violation or storage violation. Such bugs are often security bugs.

- Excessive recursion which—though logically valid—causes stack overflow.

- Use-after-free error, where a pointer is used after the system has freed the memory it references.

- Double free error.
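
As one illustration from the list above, a file-handle leak can be avoided in Python by letting a `with` statement release the resource. The functions `count_lines` and `demo` are hypothetical names for this sketch.

```python
import os
import tempfile


def count_lines(path):
    """Count the lines in a text file without leaking the file handle."""
    # The with statement closes the file even if reading raises an exception,
    # so repeated calls cannot exhaust the process's file handles.
    with open(path, encoding="utf-8") as handle:
        return sum(1 for _ in handle)


def demo():
    """Write a two-line temporary file, count its lines, then delete it."""
    fd, path = tempfile.mkstemp(text=True)
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            handle.write("first\nsecond\n")
        return count_lines(path)
    finally:
        os.remove(path)
```

The leaky alternative would call `open` and rely on every code path remembering to call `close`, which is easy to miss when an exception interrupts the function.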

Multi-threading

- Deadlock, where task A cannot continue until task B finishes, but at the same time, task B cannot continue until task A finishes.

- Race condition, where the computer does not perform tasks in the order the programmer intended.

- Concurrency errors in critical sections, mutual exclusions and other features of concurrent processing. Time-of-check-to-time-of-use (TOCTOU) is a form of unprotected critical section.
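
The deadlock described above typically arises when two tasks acquire the same two locks in opposite orders. One common remedy, sketched here in Python, is to always acquire locks in a single fixed order. The `Account` class and the functions below are hypothetical.

```python
import threading


class Account:
    """A balance protected by its own lock."""

    def __init__(self, name, balance):
        self.name = name
        self.balance = balance
        self.lock = threading.Lock()


def transfer(source, target, amount):
    """Move amount from source to target while holding both locks."""
    # Acquire the locks in one fixed order (by account name), so two opposite
    # transfers can never each hold one lock while waiting for the other.
    first, second = sorted((source, target), key=lambda account: account.name)
    with first.lock, second.lock:
        source.balance -= amount
        target.balance += amount


def run_opposite_transfers(rounds=1000):
    """Run transfers a->b and b->a concurrently and return both final balances."""
    a, b = Account("a", 100), Account("b", 100)

    def a_to_b():
        for _ in range(rounds):
            transfer(a, b, 1)

    def b_to_a():
        for _ in range(rounds):
            transfer(b, a, 1)

    workers = [threading.Thread(target=a_to_b), threading.Thread(target=b_to_a)]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return a.balance, b.balance
```

If `transfer` instead locked `source` first and `target` second, the two threads could each take one lock and wait forever for the other.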

Interfacing

- Incorrect API usage.

- Incorrect protocol implementation.

- Incorrect hardware handling.

- Incorrect assumptions of a particular platform.

- Incompatible systems. A new API or communications protocol may seem to work when two systems use different versions, but errors may occur when a function or feature implemented in one version is changed or missing in another. In production systems which must run continually, shutting down the entire system for a major update may not be possible, such as in the telecommunication industry or the internet. In this case, smaller segments of a large system are upgraded individually, to minimize disruption to a large network. However, some sections could be overlooked and not upgraded, and cause compatibility errors which may be difficult to find and repair.

- Incorrect code annotations.

Teamworking

- Unpropagated updates; e.g. programmer changes "myAdd" but forgets to change "mySubtract", which uses the same algorithm. These errors are mitigated by the Don't Repeat Yourself philosophy.

- Comments out of date or incorrect: many programmers assume the comments accurately describe the code.

- Differences between documentation and product.
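
The unpropagated-update problem can be sketched in Python using the "myAdd" and "mySubtract" example from the list above. The saturation rule and the `LIMIT` constant are hypothetical, chosen only to give the two functions a shared algorithm.

```python
LIMIT = 1000  # Hypothetical rule: results are clamped to the range -LIMIT..LIMIT.


def my_add(a, b):
    """Add two numbers, clamping the result to the allowed range."""
    return max(-LIMIT, min(LIMIT, a + b))


def my_subtract(a, b):
    """Subtract by reusing my_add, so the clamping rule lives in one place."""
    return my_add(a, -b)
```

Because `my_subtract` delegates to `my_add`, a later change to the clamping rule cannot be applied to one function and forgotten in the other.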

Implications

The amount and type of damage a software bug may cause naturally affects decision-making, processes and policy regarding software quality. In applications such as manned space travel or automotive safety, since software flaws have the potential to cause human injury or even death, such software will have far more scrutiny and quality control than, for example, an online shopping website. In applications such as banking, where software flaws have the potential to cause serious financial damage to a bank or its customers, quality control is also more important than, say, a photo editing application. NASA's Software Assurance Technology Center managed to reduce the number of errors to fewer than 0.1 per 1000 lines of code (SLOC) but this was not felt to be feasible for projects in the business world.

Well-known bugs

A number of software bugs have become well-known, usually due to their severity: examples include various space and military aircraft crashes. Possibly the most famous bug is the Year 2000 problem, also known as the Y2K bug, in which it was feared that worldwide economic collapse would happen at the start of the year 2000 as a result of computers thinking it was 1900. (In the end, no major problems occurred.)
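
The mechanism behind the Year 2000 problem can be shown with a simplified Python model in which years are stored as two digits and always interpreted as 19yy. The function names are hypothetical, and real systems varied in how they stored and expanded dates.

```python
def expand_two_digit_year(yy):
    """Interpret a two-digit year as 19yy (the assumption that breaks in 2000)."""
    return 1900 + yy


def years_between(start_yy, end_yy):
    """Elapsed years computed from two-digit years expanded as 19yy."""
    return expand_two_digit_year(end_yy) - expand_two_digit_year(start_yy)
```

Under this model the year stored as 00 is read as 1900, so an interval from 65 to 00 comes out as -65 years rather than 35.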

The 2012 stock trading disruption involved an incompatibility between an old API and a new API.

In popular culture

- In both the 1968 novel 2001: A Space Odyssey and the corresponding 1968 film of the same name, a spaceship's onboard computer, HAL 9000, attempts to kill all its crew members. In the follow-up 1982 novel, 2010: Odyssey Two, and the accompanying 1984 film, 2010, it is revealed that this action was caused by the computer having been programmed with two conflicting objectives: to fully disclose all its information, and to keep the true purpose of the flight secret from the crew; this conflict caused HAL to become paranoid and eventually homicidal.

- In the 1999 American comedy Office Space, three employees attempt to exploit their company's preoccupation with fixing the Y2K computer bug by infecting the company's computer system with a virus that sends rounded off pennies to a separate bank account. The plan backfires as the virus itself has its own bug, which sends large amounts of money to the account prematurely.

- The 2004 novel The Bug, by Ellen Ullman, is about a programmer's attempt to find an elusive bug in a database application.

- The 2008 Canadian film Control Alt Delete is about a computer programmer at the end of 1999 struggling to fix bugs at his company related to the year 2000 problem.