Text mining, also referred to as text data mining, similar to text analytics, is the process of deriving high-quality information from text. It involves "the discovery by computer of new, previously unknown information, by automatically extracting information from different written resources." Written resources may include websites, books, emails, reviews, and articles. High-quality information is typically obtained by devising patterns and trends by means such as statistical pattern learning. According to Hotho et al. (2005) we can differ three different perspectives of text mining: information extraction, data mining, and a KDD (Knowledge Discovery in Databases) process. Text mining usually involves the process of structuring the input text (usually parsing, along with the addition of some derived linguistic features and the removal of others, and subsequent insertion into a database), deriving patterns within the structured data, and finally evaluation and interpretation of the output. 'High quality' in text mining usually refers to some combination of relevance, novelty, and interest. Typical text mining tasks include text categorization, text clustering, concept/entity extraction, production of granular taxonomies, sentiment analysis, document summarization, and entity relation modeling (i.e., learning relations between named entities).

Text analysis involves information retrieval, lexical analysis to study word frequency distributions, pattern recognition, tagging/annotation, information extraction, data mining techniques including link and association analysis, visualization, and predictive analytics. The overarching goal is, essentially, to turn text into data for analysis, via application of natural language processing (NLP), different types of algorithms and analytical methods. An important phase of this process is the interpretation of the gathered information.

A typical application is to scan a set of documents written in a natural language and either model the document set for predictive classification purposes or populate a database or search index with the information extracted. The document is the basic element while starting with text mining. Here, we define a document as a unit of textual data, which normally exists in many types of collections.

Text analytics

The term text analytics describes a set of linguistic, statistical, and machine learning techniques that model and structure the information content of textual sources for business intelligence, exploratory data analysis, research, or investigation. The term is roughly synonymous with text mining; indeed, Ronen Feldman modified a 2000 description of "text mining" in 2004 to describe "text analytics". The latter term is now used more frequently in business settings while "text mining" is used in some of the earliest application areas, dating to the 1980s, notably life-sciences research and government intelligence.

The term text analytics also describes that application of text analytics to respond to business problems, whether independently or in conjunction with query and analysis of fielded, numerical data. It is a truism that 80 percent of business-relevant information originates in unstructured form, primarily text. These techniques and processes discover and present knowledge – facts, business rules, and relationships – that is otherwise locked in textual form, impenetrable to automated processing.

Text analysis processes

Subtasks—components of a larger text-analytics effort—typically include:

- Dimensionality reduction is important technique for pre-processing data. Technique is used to identify the root word for actual words and reduce the size of the text data.[citation needed]

- Information retrieval or identification of a corpus is a preparatory step: collecting or identifying a set of textual materials, on the Web or held in a file system, database, or content corpus manager, for analysis.

- Although some text analytics systems apply exclusively advanced statistical methods, many others apply more extensive natural language processing, such as part of speech tagging, syntactic parsing, and other types of linguistic analysis.

- Named entity recognition is the use of gazetteers or statistical techniques to identify named text features: people, organizations, place names, stock ticker symbols, certain abbreviations, and so on.

- Disambiguation—the use of contextual clues—may be required to decide where, for instance, "Ford" can refer to a former U.S. president, a vehicle manufacturer, a movie star, a river crossing, or some other entity.

- Recognition of Pattern Identified Entities: Features such as telephone numbers, e-mail addresses, quantities (with units) can be discerned via regular expression or other pattern matches.

- Document clustering: identification of sets of similar text documents.

- Coreference: identification of noun phrases and other terms that refer to the same object.

- Relationship, fact, and event Extraction: identification of associations among entities and other information in text

- Sentiment analysis involves discerning subjective (as opposed to factual) material and extracting various forms of attitudinal information: sentiment, opinion, mood, and emotion. Text analytics techniques are helpful in analyzing sentiment at the entity, concept, or topic level and in distinguishing opinion holder and opinion object.

- Quantitative text analysis is a set of techniques stemming from the social sciences where either a human judge or a computer extracts semantic or grammatical relationships between words in order to find out the meaning or stylistic patterns of, usually, a casual personal text for the purpose of psychological profiling etc.

Applications

Text mining technology is now broadly applied to a wide variety of government, research, and business needs. All these groups may use text mining for records management and searching documents relevant to their daily activities. Legal professionals may use text mining for e-discovery, for example. Governments and military groups use text mining for national security and intelligence purposes. Scientific researchers incorporate text mining approaches into efforts to organize large sets of text data (i.e., addressing the problem of unstructured data), to determine ideas communicated through text (e.g., sentiment analysis in social media) and to support scientific discovery in fields such as the life sciences and bioinformatics. In business, applications are used to support competitive intelligence and automated ad placement, among numerous other activities.

Security applications

Many text mining software packages are marketed for security applications, especially monitoring and analysis of online plain text sources such as Internet news, blogs, etc. for national security purposes. It is also involved in the study of text encryption/decryption.

Biomedical applications

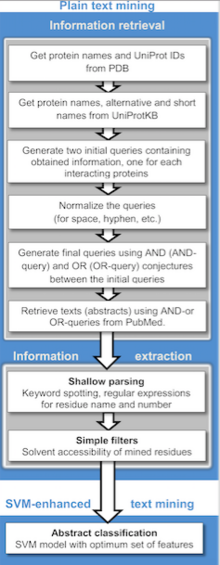

A range of text mining applications in the biomedical literature has been described, including computational approaches to assist with studies in protein docking, protein interactions, and protein-disease associations. In addition, with large patient textual datasets in the clinical field, datasets of demographic information in population studies and adverse event reports, text mining can facilitate clinical studies and precision medicine. Text mining algorithms can facilitate the stratification and indexing of specific clinical events in large patient textual datasets of symptoms, side effects, and comorbidities from electronic health records, event reports, and reports from specific diagnostic tests. One online text mining application in the biomedical literature is PubGene, a publicly accessible search engine that combines biomedical text mining with network visualization. GoPubMed is a knowledge-based search engine for biomedical texts. Text mining techniques also enable us to extract unknown knowledge from unstructured documents in the clinical domain

Software applications

Text mining methods and software is also being researched and developed by major firms, including IBM and Microsoft, to further automate the mining and analysis processes, and by different firms working in the area of search and indexing in general as a way to improve their results. Within public sector much effort has been concentrated on creating software for tracking and monitoring terrorist activities. For study purposes, Weka software is one of the most popular options in the scientific world, acting as an excellent entry point for beginners. For Python programmers, there is an excellent toolkit called NLTK for more general purposes. For more advanced programmers, there's also the Gensim library, which focuses on word embedding-based text representations.

Online media applications

Text mining is being used by large media companies, such as the Tribune Company, to clarify information and to provide readers with greater search experiences, which in turn increases site "stickiness" and revenue. Additionally, on the back end, editors are benefiting by being able to share, associate and package news across properties, significantly increasing opportunities to monetize content.

Business and marketing applications

Text analytics is being used in business, particularly, in marketing, such as in customer relationship management. Coussement and Van den Poel (2008) apply it to improve predictive analytics models for customer churn (customer attrition). Text mining is also being applied in stock returns prediction.

Sentiment analysis

Sentiment analysis may involve analysis of movie reviews for estimating how favorable a review is for a movie. Such an analysis may need a labeled data set or labeling of the affectivity of words. Resources for affectivity of words and concepts have been made for WordNet and ConceptNet, respectively.

Text has been used to detect emotions in the related area of affective computing. Text based approaches to affective computing have been used on multiple corpora such as students evaluations, children stories and news stories.

Scientific literature mining and academic applications

The issue of text mining is of importance to publishers who hold large databases of information needing indexing for retrieval. This is especially true in scientific disciplines, in which highly specific information is often contained within the written text. Therefore, initiatives have been taken such as Nature's proposal for an Open Text Mining Interface (OTMI) and the National Institutes of Health's common Journal Publishing Document Type Definition (DTD) that would provide semantic cues to machines to answer specific queries contained within the text without removing publisher barriers to public access.

Academic institutions have also become involved in the text mining initiative:

- The National Centre for Text Mining (NaCTeM), is the first publicly funded text mining centre in the world. NaCTeM is operated by the University of Manchester in close collaboration with the Tsujii Lab, University of Tokyo. NaCTeM provides customised tools, research facilities and offers advice to the academic community. They are funded by the Joint Information Systems Committee (JISC) and two of the UK research councils (EPSRC & BBSRC). With an initial focus on text mining in the biological and biomedical sciences, research has since expanded into the areas of social sciences.

- In the United States, the School of Information at University of California, Berkeley is developing a program called BioText to assist biology researchers in text mining and analysis.

- The Text Analysis Portal for Research (TAPoR), currently housed at the University of Alberta, is a scholarly project to catalogue text analysis applications and create a gateway for researchers new to the practice.

Methods for scientific literature mining

Computational methods have been developed to assist with information retrieval from scientific literature. Published approaches include methods for searching, determining novelty, and clarifying homonyms among technical reports.

Digital humanities and computational sociology

The automatic analysis of vast textual corpora has created the possibility for scholars to analyze millions of documents in multiple languages with very limited manual intervention. Key enabling technologies have been parsing, machine translation, topic categorization, and machine learning.

The automatic parsing of textual corpora has enabled the extraction of actors and their relational networks on a vast scale, turning textual data into network data. The resulting networks, which can contain thousands of nodes, are then analyzed by using tools from network theory to identify the key actors, the key communities or parties, and general properties such as robustness or structural stability of the overall network, or centrality of certain nodes. This automates the approach introduced by quantitative narrative analysis, whereby subject-verb-object triplets are identified with pairs of actors linked by an action, or pairs formed by actor-object.

Content analysis has been a traditional part of social sciences and media studies for a long time. The automation of content analysis has allowed a "big data" revolution to take place in that field, with studies in social media and newspaper content that include millions of news items. Gender bias, readability, content similarity, reader preferences, and even mood have been analyzed based on text mining methods over millions of documents. The analysis of readability, gender bias and topic bias was demonstrated in Flaounas et al. showing how different topics have different gender biases and levels of readability; the possibility to detect mood patterns in a vast population by analyzing Twitter content was demonstrated as well.

Software

Text mining computer programs are available from many commercial and open source companies and sources.

Intellectual property law

Situation in Europe

Under European copyright and database laws, the mining of in-copyright works (such as by web mining) without the permission of the copyright owner is illegal. In the UK in 2014, on the recommendation of the Hargreaves review, the government amended copyright law to allow text mining as a limitation and exception. It was the second country in the world to do so, following Japan, which introduced a mining-specific exception in 2009. However, owing to the restriction of the Information Society Directive (2001), the UK exception only allows content mining for non-commercial purposes. UK copyright law does not allow this provision to be overridden by contractual terms and conditions.

The European Commission facilitated stakeholder discussion on text and data mining in 2013, under the title of Licenses for Europe. The fact that the focus on the solution to this legal issue was licenses, and not limitations and exceptions to copyright law, led representatives of universities, researchers, libraries, civil society groups and open access publishers to leave the stakeholder dialogue in May 2013.

Situation in the United States

US copyright law, and in particular its fair use provisions, means that text mining in America, as well as other fair use countries such as Israel, Taiwan and South Korea, is viewed as being legal. As text mining is transformative, meaning that it does not supplant the original work, it is viewed as being lawful under fair use. For example, as part of the Google Book settlement the presiding judge on the case ruled that Google's digitization project of in-copyright books was lawful, in part because of the transformative uses that the digitization project displayed—one such use being text and data mining.

Implications

Until recently, websites most often used text-based searches, which only found documents containing specific user-defined words or phrases. Now, through use of a semantic web, text mining can find content based on meaning and context (rather than just by a specific word). Additionally, text mining software can be used to build large dossiers of information about specific people and events. For example, large datasets based on data extracted from news reports can be built to facilitate social networks analysis or counter-intelligence. In effect, the text mining software may act in a capacity similar to an intelligence analyst or research librarian, albeit with a more limited scope of analysis. Text mining is also used in some email spam filters as a way of determining the characteristics of messages that are likely to be advertisements or other unwanted material. Text mining plays an important role in determining financial market sentiment.

Future

Increasing interest is being paid to multilingual data mining: the ability to gain information across languages and cluster similar items from different linguistic sources according to their meaning.

The challenge of exploiting the large proportion of enterprise information that originates in "unstructured" form has been recognized for decades. It is recognized in the earliest definition of business intelligence (BI), in an October 1958 IBM Journal article by H.P. Luhn, A Business Intelligence System, which describes a system that will:

"...utilize data-processing machines for auto-abstracting and auto-encoding of documents and for creating interest profiles for each of the 'action points' in an organization. Both incoming and internally generated documents are automatically abstracted, characterized by a word pattern, and sent automatically to appropriate action points."

Yet as management information systems developed starting in the 1960s, and as BI emerged in the '80s and '90s as a software category and field of practice, the emphasis was on numerical data stored in relational databases. This is not surprising: text in "unstructured" documents is hard to process. The emergence of text analytics in its current form stems from a refocusing of research in the late 1990s from algorithm development to application, as described by Prof. Marti A. Hearst in the paper Untangling Text Data Mining:

For almost a decade the computational linguistics community has viewed large text collections as a resource to be tapped in order to produce better text analysis algorithms. In this paper, I have attempted to suggest a new emphasis: the use of large online text collections to discover new facts and trends about the world itself. I suggest that to make progress we do not need fully artificial intelligent text analysis; rather, a mixture of computationally-driven and user-guided analysis may open the door to exciting new results.

Hearst's 1999 statement of need fairly well describes the state of text analytics technology and practice a decade later.