A graphics processing unit (GPU) is a specialized electronic circuit initially designed to accelerate computer graphics and image processing (either on a video card or embedded on the motherboards, mobile phones, personal computers, workstations, and game consoles). After their initial design, GPUs were found to be useful for non-graphic calculations involving embarrassingly parallel problems due to their parallel structure. Other non-graphical uses include the training of neural networks and cryptocurrency mining.

History

1970s

Arcade system boards have used specialized graphics circuits since the 1970s. In early video game hardware, RAM for frame buffers was expensive, so video chips composited data together as the display was being scanned out on the monitor.

A specialized barrel shifter circuit helped the CPU animate the framebuffer graphics for various 1970s arcade video games from Midway and Taito, such as Gun Fight (1975), Sea Wolf (1976), and Space Invaders (1978). The Namco Galaxian arcade system in 1979 used specialized graphics hardware that supported RGB color, multi-colored sprites, and tilemap backgrounds. The Galaxian hardware was widely used during the golden age of arcade video games, by game companies such as Namco, Centuri, Gremlin, Irem, Konami, Midway, Nichibutsu, Sega, and Taito.

The Atari 2600 in 1977 used a video shifter called the Television Interface Adaptor. Atari 8-bit computers (1979) had ANTIC, a video processor which interpreted instructions describing a "display list"—the way the scan lines map to specific bitmapped or character modes and where the memory is stored (so there did not need to be a contiguous frame buffer). 6502 machine code subroutines could be triggered on scan lines by setting a bit on a display list instruction. ANTIC also supported smooth vertical and horizontal scrolling independent of the CPU.

1980s

The NEC µPD7220 was the first implementation of a personal computer graphics display processor as a single large-scale integration (LSI) integrated circuit chip. This enabled the design of low-cost, high-performance video graphics cards such as those from Number Nine Visual Technology. It became the best-known GPU until the mid-1980s. It was the first fully integrated VLSI (very large-scale integration) metal–oxide–semiconductor (NMOS) graphics display processor for PCs, supported up to 1024×1024 resolution, and laid the foundations for the emerging PC graphics market. It was used in a number of graphics cards and was licensed for clones such as the Intel 82720, the first of Intel's graphics processing units. The Williams Electronics arcade games Robotron 2084, Joust, Sinistar, and Bubbles, all released in 1982, contain custom blitter chips for operating on 16-color bitmaps.

In 1984, Hitachi released ARTC HD63484, the first major CMOS graphics processor for PC. The ARTC could display up to 4K resolution when in monochrome mode. It was used in a number of graphics cards and terminals during the late 1980s. In 1985, the Amiga was released with a custom graphics chip including a blitter for bitmap manipulation, line drawing, and area fill. It also included a coprocessor with its own simple instruction set, that was capable of manipulating graphics hardware registers in sync with the video beam (e.g. for per-scanline palette switches, sprite multiplexing, and hardware windowing), or driving the blitter. In 1986, Texas Instruments released the TMS34010, the first fully programmable graphics processor. It could run general-purpose code, but it had a graphics-oriented instruction set. During 1990–92, this chip became the basis of the Texas Instruments Graphics Architecture ("TIGA") Windows accelerator cards.

In 1987, the IBM 8514 graphics system was released. It was one of the first video cards for IBM PC compatibles to implement fixed-function 2D primitives in electronic hardware. Sharp's X68000, released in 1987, used a custom graphics chipset with a 65,536 color palette and hardware support for sprites, scrolling, and multiple playfields. It served as a development machine for Capcom's CP System arcade board. Fujitsu's FM Towns computer, released in 1989, had support for a 16,777,216 color palette. In 1988, the first dedicated polygonal 3D graphics boards were introduced in arcades with the Namco System 21 and Taito Air System.

IBM introduced its proprietary Video Graphics Array (VGA) display standard in 1987, with a maximum resolution of 640×480 pixels. In November 1988, NEC Home Electronics announced its creation of the Video Electronics Standards Association (VESA) to develop and promote a Super VGA (SVGA) computer display standard as a successor to VGA. Super VGA enabled graphics display resolutions up to 800×600 pixels, a 36% increase.

1990s

In 1991, S3 Graphics introduced the S3 86C911, which its designers named after the Porsche 911 as an indication of the performance increase it promised. The 86C911 spawned a host of imitators: by 1995, all major PC graphics chip makers had added 2D acceleration support to their chips. Fixed-function Windows accelerators surpassed expensive general-purpose graphics coprocessors in Windows performance, and such coprocessors faded away from the PC market.

Throughout the 1990s, 2D GUI acceleration evolved. As manufacturing capabilities improved, so did the level of integration of graphics chips. Additional application programming interfaces (APIs) arrived for a variety of tasks, such as Microsoft's WinG graphics library for Windows 3.x, and their later DirectDraw interface for hardware acceleration of 2D games in Windows 95 and later.

In the early- and mid-1990s, real-time 3D graphics became increasingly common in arcade, computer, and console games, which led to increasing public demand for hardware-accelerated 3D graphics. Early examples of mass-market 3D graphics hardware can be found in arcade system boards such as the Sega Model 1, Namco System 22, and Sega Model 2, and the fifth-generation video game consoles such as the Saturn, PlayStation, and Nintendo 64. Arcade systems such as the Sega Model 2 and SGI Onyx-based Namco Magic Edge Hornet Simulator in 1993 were capable of hardware T&L (transform, clipping, and lighting) years before appearing in consumer graphics cards. Another early example is the Super FX chip, a RISC-based on-cartridge graphics chip used in some SNES games, notably Doom and Star Fox. Some systems used DSPs to accelerate transformations. Fujitsu, which worked on the Sega Model 2 arcade system, began working on integrating T&L into a single LSI solution for use in home computers in 1995; the Fujitsu Pinolite, the first 3D geometry processor for personal computers, released in 1997. The first hardware T&L GPU on home video game consoles was the Nintendo 64's Reality Coprocessor, released in 1996. In 1997, Mitsubishi released the 3Dpro/2MP, a GPU capable of transformation and lighting, for workstations and Windows NT desktops; ATi utilized it for its FireGL 4000 graphics card, released in 1997.

The term "GPU" was coined by Sony in reference to the 32-bit Sony GPU (designed by Toshiba) in the PlayStation video game console, released in 1994.

In the PC world, notable failed attempts for low-cost 3D graphics chips included the S3 ViRGE, ATI Rage, and Matrox Mystique. These chips were essentially previous-generation 2D accelerators with 3D features bolted on. Many were pin-compatible with the earlier-generation chips for ease of implementation and minimal cost. Initially, performance 3D graphics were possible only with discrete boards dedicated to accelerating 3D functions (and lacking 2D GUI acceleration entirely) such as the PowerVR and the 3dfx Voodoo. However, as manufacturing technology continued to progress, video, 2D GUI acceleration, and 3D functionality were all integrated into one chip. Rendition's Verite chipsets were among the first to do this well. In 1997, Rendition collaborated with Hercules and Fujitsu on a "Thriller Conspiracy" project which combined a Fujitsu FXG-1 Pinolite geometry processor with a Vérité V2200 core to create a graphics card with a full T&L engine years before Nvidia's GeForce 256; This card, designed to reduce the load placed upon the system's CPU, never made it to market. NVIDIA RIVA 128 was one of the first consumer-facing GPU integrated 3D processing unit and 2D processing unit on a chip.

OpenGL appeared in the early '90s as a professional graphics API, but originally suffered from performance issues which allowed the Glide API to become a dominant force on the PC in the late '90s. These issues were quickly overcome and the Glide API fell by the wayside. Software implementations of OpenGL were common during this time, although the influence of OpenGL eventually led to widespread hardware support. DirectX became popular among Windows game developers during the late 90s. Unlike OpenGL, Microsoft insisted on providing strict one-to-one support of hardware. The approach made DirectX less popular as a standalone graphics API initially, since many GPUs provided their own specific features, which existing OpenGL applications were already able to benefit from, leaving DirectX often one generation behind. (See: Comparison of OpenGL and Direct3D.)

Microsoft began to work more closely with hardware developers and started to target the releases of DirectX to coincide with those of the supporting graphics hardware. Direct3D 5.0 was the first version of the API to gain widespread adoption in the gaming market, and it competed directly with more-hardware-specific, often proprietary, graphics libraries, while OpenGL maintained a strong following. Direct3D 7.0 introduced support for hardware-accelerated transform and lighting (T&L) for Direct3D; OpenGL had this capability from its inception. 3D accelerator cards moved beyond being simple rasterizers to add another significant hardware stage to the 3D rendering pipeline. The Nvidia GeForce 256 (also known as NV10) was the first consumer-level card with hardware-accelerated T&L; professional 3D cards already had this capability. Hardware transform and lighting—existing features of OpenGL—came to consumer-level hardware in the '90s and set the precedent for later pixel shader and vertex shader units which were far more flexible and programmable.

2000s

Nvidia was first to produce a chip capable of programmable shading: the GeForce 3 (code named NV20). Each pixel could now be processed by a short program that could include additional image textures as inputs, and each geometric vertex could likewise be processed by a short program before it was projected onto the screen. Used in the Xbox console, this chip competed with the one in the PlayStation 2, which used a custom vector unit for hardware accelerated vertex processing (commonly referred to as VU0/VU1). The earliest incarnations of shader execution engines used in Xbox were not general purpose and could not execute arbitrary pixel code. Vertices and pixels were processed by different units which had their own resources, with pixel shaders having much tighter constraints (because they execute at much higher frequencies than vertices). Pixel shading engines were actually more akin to a highly customizable function block and did not really "run" a program. Many of these disparities between vertex and pixel shading were not addressed until the Unified Shader Model.

In October 2002, with the introduction of the ATI Radeon 9700 (also known as R300), the world's first Direct3D 9.0 accelerator, pixel and vertex shaders could implement looping and lengthy floating point math, and were quickly becoming as flexible as CPUs, yet orders of magnitude faster for image-array operations. Pixel shading is often used for bump mapping, which adds texture to make an object look shiny, dull, rough, or even round or extruded.

With the introduction of the Nvidia GeForce 8 series and new generic stream processing units, GPUs became more generalized computing devices. Parallel GPUs are making computational inroads against the CPU, and a subfield of research, dubbed GPU computing or GPGPU for general purpose computing on GPU, has found applications in fields as diverse as machine learning, oil exploration, scientific image processing, linear algebra, statistics, 3D reconstruction, and stock options pricing. GPGPU was the precursor to what is now called a compute shader (e.g. CUDA, OpenCL, DirectCompute) and actually abused the hardware to a degree by treating the data passed to algorithms as texture maps and executing algorithms by drawing a triangle or quad with an appropriate pixel shader. This obviously entails some overheads since units like the scan converter are involved where they are not needed (nor are triangle manipulations even a concern—except to invoke the pixel shader).

Nvidia's CUDA platform, first introduced in 2007, was the earliest widely adopted programming model for GPU computing. OpenCL is an open standard defined by the Khronos Group that allows for the development of code for both GPUs and CPUs with an emphasis on portability. OpenCL solutions are supported by Intel, AMD, Nvidia, and ARM, and according to a report in 2011 by Evans Data, OpenCL had become the second most popular HPC tool.

2010s

In 2010, Nvidia partnered with Audi to power their cars' dashboards, using the Tegra GPU to provide increased functionality to cars' navigation and entertainment systems. Advances in GPU technology in cars helped advance self-driving technology. AMD's Radeon HD 6000 Series cards were released in 2010, and in 2011 AMD released its 6000M Series discrete GPUs for mobile devices. The Kepler line of graphics cards by Nvidia came out in 2012 and were used in the Nvidia's 600 and 700 series cards. A feature in this new GPU microarchitecture included GPU boost, a technology that adjusts the clock-speed of a video card to increase or decrease it according to its power draw. The Kepler microarchitecture was manufactured on the 28 nm process.

The PS4 and Xbox One were released in 2013; they both use GPUs based on AMD's Radeon HD 7850 and 7790. Nvidia's Kepler line of GPUs was followed by the Maxwell line, manufactured on the same process. Nvidia's 28 nm chips were manufactured by TSMC in Taiwan using the 28 nm process. Compared to the 40 nm technology from the past, this new manufacturing process allowed a 20 percent boost in performance while drawing less power. Virtual reality headsets have high system requirements; manufacturers recommended the GTX 970 and the R9 290X or better at the time of their release. Cards based on the Pascal microarchitecture were released in 2016. The GeForce 10 series of cards are under this generation of graphics cards. They are made using the 16 nm manufacturing process which improves upon previous microarchitectures. Nvidia released one non-consumer card under the new Volta architecture, the Titan V. Changes from the Titan XP, Pascal's high-end card, include an increase in the number of CUDA cores, the addition of tensor cores, and HBM2. Tensor cores are designed for deep learning, while high-bandwidth memory is on-die, stacked, lower-clocked memory that offers an extremely wide memory bus. To emphasize that the Titan V is not a gaming card, Nvidia removed the "GeForce GTX" suffix it adds to consumer gaming cards.

In 2018, Nvidia launched the RTX 20 series GPUs that added ray-tracing cores to GPUs, improving their performance on lighting effects. Polaris 11 and Polaris 10 GPUs from AMD are fabricated by a 14 nm process. Their release resulted in a substantial increase in the performance per watt of AMD video cards. AMD also released the Vega GPU series for the high end market as a competitor to Nvidia's high end Pascal cards, also featuring HBM2 like the Titan V.

In 2019, AMD released the successor to their Graphics Core Next (GCN) microarchitecture/instruction set. Dubbed RDNA, the first product featuring it was the Radeon RX 5000 series of video cards.

The company announced that the successor to the RDNA microarchitecture would be a refresh. AMD unveiled the Radeon RX 6000 series, its RDNA 2 graphics cards with support for hardware-accelerated ray tracing. The lineup consisted of the RX 6800, RX 6800 XT, and RX 6900 XT. The RX 6800 and 6800 XT launched on November 18, 2020; the RX 6900 XT on December 8, 2020. The RX 6700 XT, which is based on Navi 22, was launched on March 18, 2021.

The PlayStation 5 and Xbox Series X and Series S were released in 2020; they both use GPUs based on the RDNA 2 microarchitecture with proprietary tweaks and different GPU configurations in each system's implementation.

Intel first entered the GPU market in the late 1990s, but produced lackluster 3D accelerators compared to the competition at the time. Rather than attempting to compete with the high-end manufacturers Nvidia and ATI/AMD, they began integrating Intel Graphics Technology GPUs into motherboard chipsets, beginning with the Intel 810 for the Pentium III, and later into CPUs. They began with the Intel Atom 'Pineview' laptop processor in 2009, continuing in 2010 with desktop processors in the first generation of the Intel Core line and with contemporary Pentiums and Celerons. This resulted in a large nominal market share, as the majority of computers with an Intel CPU also featured this embedded graphics processor. These generally lagged behind discrete processors in performance. Intel re-entered the discrete GPU market in 2022 with its Arc series, which competed with the then-current GeForce 30 series and Radeon 6000 series cards at competitive prices.

2020s

In 2020s, GPUs have been increasingly used for calculations involving embarrassingly parallel problems, such as training of neural networks on enormous datasets that are needed for large language models. Specialized processing cores on some modern workstation's GPUs are dedicated for deep learning since they have significant FLOPS performance increases, utilizing 4×4 matrix multiplication and division, resulting in hardware performance up to 128 TFLOPS in some applications. These tensor cores are supposed to appear in consumer cards, as well.

GPU companies

Many companies have produced GPUs under a number of brand names. In 2009, Intel, Nvidia, and AMD/ATI were the market share leaders, with 49.4%, 27.8%, and 20.6% market share respectively. However, those numbers include Intel's integrated graphics solutions as GPUs. Not counting those, Nvidia and AMD control nearly 100% of the market as of 2018. Their respective market shares are 66% and 33%. In addition, Matrox produces GPUs. Modern smartphones use mostly Adreno GPUs from Qualcomm, PowerVR GPUs from Imagination Technologies, and Mali GPUs from ARM.

Computational functions

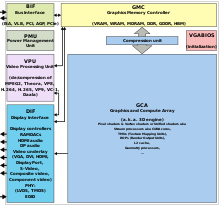

Modern GPUs use most of their transistors to do calculations related to 3D computer graphics. In addition to the 3D hardware, today's GPUs include basic 2D acceleration and framebuffer capabilities (usually with a VGA compatibility mode). Newer cards such as AMD/ATI HD5000–HD7000 lack dedicated 2D acceleration; it has to be emulated by 3D hardware. GPUs were initially used to accelerate the memory-intensive work of texture mapping and rendering polygons. They later added units to accelerate geometric calculations such as the rotation and translation of vertices into different coordinate systems. Recent developments in GPUs include support for programmable shaders which can manipulate vertices and textures with many of the same operations that are supported by CPUs, oversampling and interpolation techniques to reduce aliasing, and very high-precision color spaces.

Several factors of GPU construction affect the performance of the card for real-time rendering, such as the size of the connector pathways in the semiconductor device fabrication, the clock signal frequency, and the number and size of various on-chip memory caches. Performance is also affected by the number of streaming multiprocessors (SM) for NVidia GPUs, or compute units (CU) for AMD GPUs, which describe the number of core on-silicon processor units within the GPU chip that perform the core calculations, typically working in parallel with other SM/CUs on the GPU. GPU performance is typically measured in floating point operations per second (FLOPS); GPUs in the 2010s and 2020s typically deliver performance measured in teraflops (TFLOPS). This is an estimated performance measure, as other factors can affect the actual display rate.

GPU accelerated video decoding and encoding

Most GPUs made since 1995 support the YUV color space and hardware overlays, important for digital video playback, and many GPUs made since 2000 also support MPEG primitives such as motion compensation and iDCT. This hardware-accelerated video decoding, in which portions of the video decoding process and video post-processing are offloaded to the GPU hardware, is commonly referred to as "GPU accelerated video decoding", "GPU assisted video decoding", "GPU hardware accelerated video decoding", or "GPU hardware assisted video decoding".

Recent graphics cards decode high-definition video on the card, offloading the central processing unit. The most common APIs for GPU accelerated video decoding are DxVA for Microsoft Windows operating system and VDPAU, VAAPI, XvMC, and XvBA for Linux-based and UNIX-like operating systems. All except XvMC are capable of decoding videos encoded with MPEG-1, MPEG-2, MPEG-4 ASP (MPEG-4 Part 2), MPEG-4 AVC (H.264 / DivX 6), VC-1, WMV3/WMV9, Xvid / OpenDivX (DivX 4), and DivX 5 codecs, while XvMC is only capable of decoding MPEG-1 and MPEG-2.

There are several dedicated hardware video decoding and encoding solutions.

Video decoding processes that can be accelerated

Video decoding processes that can be accelerated by modern GPU hardware are:

- Motion compensation (mocomp)

- Inverse discrete cosine transform (iDCT)

- Inverse telecine 3:2 and 2:2 pull-down correction

- Inverse modified discrete cosine transform (iMDCT)

- In-loop deblocking filter

- Intra-frame prediction

- Inverse quantization (IQ)

- Variable-length decoding (VLD), more commonly known as slice-level acceleration

- Spatial-temporal deinterlacing and automatic interlace/progressive source detection

- Bitstream processing (Context-adaptive variable-length coding/Context-adaptive binary arithmetic coding) and perfect pixel positioning

These operations also have applications in video editing, encoding, and transcoding.

3D graphics APIs

A GPU can support one or more 3D graphics API, such as DirectX, Metal, OpenGL, OpenGL ES, Vulkan.

GPU forms

Terminology

In the 1970s, the term "GPU" originally stood for graphics processor unit and described a programmable processing unit working independently from the CPU that was responsible for graphics manipulation and output. In 1994, Sony used the term (now standing for graphics processing unit) in reference to the PlayStation console's Toshiba-designed Sony GPU. The term was popularized by Nvidia in 1999, who marketed the GeForce 256 as "the world's first GPU". It was presented as a "single-chip processor with integrated transform, lighting, triangle setup/clipping, and rendering engines". Rival ATI Technologies coined the term "visual processing unit" or VPU with the release of the Radeon 9700 in 2002.

In personal computers, there are two main forms of GPUs. Each has many synonyms:

- Dedicated graphics - also called discrete.

- Integrated graphics - also called shared graphics solutions, integrated graphics processors (IGP), or unified memory architecture (UMA).

Usage-specific GPU

Most GPUs are designed for a specific use, real-time 3D graphics, or other mass calculations:

- Gaming

- Cloud Gaming

- Nvidia GRID

- Radeon Sky

- Workstation

- Cloud Workstation

- Artificial Intelligence training and Cloud

- Automated/Driverless car

- Nvidia Drive PX

Dedicated graphics processing unit

Dedicated graphics processing units are not necessarily removable, nor does it necessarily interface with the motherboard in a standard fashion. The term "dedicated" refers to the fact that graphics cards have RAM that is dedicated to the card's use, not to the fact that most dedicated GPUs are removable. This RAM is usually specially selected for the expected serial workload of the graphics card (see GDDR). Sometimes, systems with dedicated, discrete GPUs were called "DIS" systems, as opposed to "UMA" systems (see next section). Dedicated GPUs for portable computers are most commonly interfaced through a non-standard and often proprietary slot due to size and weight constraints. Such ports may still be considered PCIe or AGP in terms of their logical host interface, even if they are not physically interchangeable with their counterparts.

Graphics cards with dedicated GPUs typically interface with the motherboard by means of an expansion slot such as PCI Express (PCIe) or Accelerated Graphics Port (AGP). They can usually be replaced or upgraded with relative ease, assuming the motherboard is capable of supporting the upgrade. A few graphics cards still use Peripheral Component Interconnect (PCI) slots, but their bandwidth is so limited that they are generally used only when a PCIe or AGP slot is not available.

Technologies such as SLI and NVLink by Nvidia and CrossFire by AMD allow multiple GPUs to draw images simultaneously for a single screen, increasing the processing power available for graphics. These technologies, however, are increasingly uncommon; most games do not fully utilize multiple GPUs, as most users cannot afford them. Multiple GPUs are still used on supercomputers (like in Summit), on workstations to accelerate video (processing multiple videos at once) and 3D rendering, for VFX and for simulations, and in AI to expedite training, as is the case with Nvidia's lineup of DGX workstations and servers, Tesla GPUs, and Intel's Ponte Vecchio GPUs.

Integrated graphics processing unit

Integrated graphics processing unit (IGPU), integrated graphics, shared graphics solutions, integrated graphics processors (IGP), or unified memory architecture (UMA) utilize a portion of a computer's system RAM rather than dedicated graphics memory. IGPs can be integrated onto the motherboard as part of the (northbridge) chipset, or on the same die (integrated circuit) with the CPU (like AMD APU or Intel HD Graphics). On certain motherboards, AMD's IGPs can use dedicated sideport memory: a separate fixed block of high performance memory that is dedicated for use by the GPU. As of early 2007 computers with integrated graphics account for about 90% of all PC shipments. They are less costly to implement than dedicated graphics processing, but tend to be less capable. Historically, integrated processing was considered unfit for 3D games or graphically intensive programs but could run less intensive programs such as Adobe Flash. Examples of such IGPs would be offerings from SiS and VIA circa 2004. However, modern integrated graphics processors such as AMD Accelerated Processing Unit and Intel Graphics Technology (HD, UHD, Iris, Iris Pro, Iris Plus, and Xe-LP) can handle 2D graphics or low-stress 3D graphics.

Since GPU computations are memory-intensive, integrated processing may compete with the CPU for relatively slow system RAM, as it has minimal or no dedicated video memory. IGPs use system memory with bandwidth up to a current maximum of 128 GB/s, whereas a discrete graphics card may have a bandwidth of more than 1000 GB/s between its VRAM and GPU core. This memory bus bandwidth can limit the performance of the GPU, though multi-channel memory can mitigate this deficiency. Older integrated graphics chipsets lacked hardware transform and lighting, but newer ones include it.

Hybrid graphics processing

Hybrid GPUs compete with integrated graphics in the low-end desktop and notebook markets. The most common implementations of this are ATI's HyperMemory and Nvidia's TurboCache.

Hybrid graphics cards are somewhat more expensive than integrated graphics, but much less expensive than dedicated graphics cards. They share memory with the system and have a small dedicated memory cache, to make up for the high latency of the system RAM. Technologies within PCI Express make this possible. While these solutions are sometimes advertised as having as much as 768 MB of RAM, this refers to how much can be shared with the system memory.

Stream processing and general purpose GPUs (GPGPU)

It is common to use a general purpose graphics processing unit (GPGPU) as a modified form of stream processor (or a vector processor), running compute kernels. This turns the massive computational power of a modern graphics accelerator's shader pipeline into general-purpose computing power. In certain applications requiring massive vector operations, this can yield several orders of magnitude higher performance than a conventional CPU. The two largest discrete (see "Dedicated graphics cards" above) GPU designers, AMD and Nvidia, are pursuing this approach with an array of applications. Both Nvidia and AMD teamed with Stanford University to create a GPU-based client for the Folding@home distributed computing project for protein folding calculations. In certain circumstances, the GPU calculates forty times faster than the CPUs traditionally used by such applications.

GPGPU can be used for many types of embarrassingly parallel tasks including ray tracing. They are generally suited to high-throughput computations that exhibit data-parallelism to exploit the wide vector width SIMD architecture of the GPU.

GPU-based high performance computers play a significant role in large-scale modelling. Three of the ten most powerful supercomputers in the world take advantage of GPU acceleration.

GPUs support API extensions to the C programming language such as OpenCL and OpenMP. Furthermore, each GPU vendor introduced its own API which only works with their cards: AMD APP SDK from AMD, and CUDA from Nvidia. These allow functions called compute kernels to run on the GPU's stream processors. This makes it possible for C programs to take advantage of a GPU's ability to operate on large buffers in parallel, while still using the CPU when appropriate. CUDA was the first API to allow CPU-based applications to directly access the resources of a GPU for more general purpose computing without the limitations of using a graphics API.

Since 2005 there has been interest in using the performance offered by GPUs for evolutionary computation in general, and for accelerating the fitness evaluation in genetic programming in particular. Most approaches compile linear or tree programs on the host PC and transfer the executable to the GPU to be run. Typically the performance advantage is only obtained by running the single active program simultaneously on many example problems in parallel, using the GPU's SIMD architecture. However, substantial acceleration can also be obtained by not compiling the programs, and instead transferring them to the GPU, to be interpreted there. Acceleration can then be obtained by either interpreting multiple programs simultaneously, simultaneously running multiple example problems, or combinations of both. A modern GPU can simultaneously interpret hundreds of thousands of very small programs.

External GPU (eGPU)

An external GPU is a graphics processor located outside of the housing of the computer, similar to a large external hard drive. External graphics processors are sometimes used with laptop computers. Laptops might have a substantial amount of RAM and a sufficiently powerful central processing unit (CPU), but often lack a powerful graphics processor, and instead have a less powerful but more energy-efficient on-board graphics chip. On-board graphics chips are often not powerful enough for playing video games, or for other graphically intensive tasks, such as editing video or 3D animation/rendering.

Therefore, it is desirable to attach a GPU to some external bus of a notebook. PCI Express is the only bus used for this purpose. The port may be, for example, an ExpressCard or mPCIe port (PCIe ×1, up to 5 or 2.5 Gbit/s respectively) or a Thunderbolt 1, 2, or 3 port (PCIe ×4, up to 10, 20, or 40 Gbit/s respectively). Those ports are only available on certain notebook systems. eGPU enclosures include their own power supply (PSU), because powerful GPUs can consume hundreds of watts.

Official vendor support for external GPUs has gained traction. A milestone was Apple's decision to support external GPUs with MacOS High Sierra 10.13.4. Several major hardware vendors (HP, Alienware, Razer) released Thunderbolt 3 eGPU enclosures. This support fuels eGPU implementations by enthusiasts.