The watchmaker analogy or watchmaker argument is a teleological argument, an argument for the existence of God. In broad terms, the watchmaker analogy states that just as it is readily observed that a watch (e.g., a pocket watch) did not come to be accidentally or on its own but rather through the intentional handiwork of a skilled watchmaker, it is also readily observed that nature did not come to be accidentally or on its own but through the intentional handiwork of an intelligent designer. The watchmaker analogy originated in natural theology and is often used to argue for the concept of intelligent design. The analogy holds that design implies a designer, specifically an intelligent designer, i.e., a creator deity. Its best-known formulation was given by William Paley in his 1802 book Natural Theology or Evidences of the Existence and Attributes of the Deity.

The original analogy played a prominent role in natural theology and the "argument from design", where it was used to support arguments for the existence of God, in both Christianity and Deism. Prior to Paley, however, Sir Isaac Newton, René Descartes, and others from the time of the Scientific Revolution had each believed "that the physical laws he [each] had uncovered revealed the mechanical perfection of the workings of the universe to be akin to a watch, wherein the watchmaker is God."

The 1859 publication of Charles Darwin's book on natural selection put forward an alternative explanation for complexity and adaptation to the one offered by the watchmaker analogy. In the 19th century, deists, who championed the watchmaker analogy, held that Darwin's theory fit with "the principle of uniformitarianism—the idea that all processes in the world occur now as they have in the past" and that deistic evolution "provided an explanatory framework for understanding species variation in a mechanical universe."

When evolutionary biology began being taught in American high schools in the 1960s, Christian fundamentalists used versions of the argument to dispute the concepts of evolution and natural selection, and there was renewed interest in the watchmaker argument. Evolutionary biologist Richard Dawkins referred to the analogy in his 1986 book The Blind Watchmaker when explaining the mechanism of evolution. Others, however, consider the watchmaker analogy to be compatible with evolutionary creation, opining that the two concepts are not mutually exclusive.

History

Ancient predecessor

In the second century Epictetus argued that, by analogy to the way a sword is made by a craftsman to fit with a scabbard, so human genitals and the desire of humans to fit them together suggest a type of design or craftsmanship of the human form. Epictetus attributed this design to a type of Providence woven into the fabric of the universe, rather than to a personal monotheistic god.

Scientific Revolution

The Scientific Revolution "nurtured a growing awareness" that "there were universal laws of nature at work that ordered the movement of the world and its parts." Amos Yong writes that in "astronomy, the Copernican revolution regarding the heliocentrism of the solar system, Johannes Kepler's (1571–1630) three laws of planetary motion, and Isaac Newton's (1642–1727) law of universal gravitation—laws of gravitation and of motion, and notions of absolute space and time—all combined to establish the regularities of heavenly and earthly bodies".

Simultaneously, the development of machine technology and the emergence of the mechanical philosophy encouraged mechanical imagery unlikely to have come to the fore in previous ages.

With such a backdrop, "deists suggested the watchmaker analogy: just as watches are set in motion by watchmakers, after which they operate according to their pre-established mechanisms, so also was the world begun by God as creator, after which it and all its parts have operated according to their pre-established natural laws. With these laws perfectly in place, events have unfolded according to the prescribed plan." For Sir Isaac Newton, "the regular motion of the planets made it reasonable to believe in the continued existence of God". Newton also upheld the idea that "like a watchmaker, God was forced to intervene in the universe and tinker with the mechanism from time to time to ensure that it continued operating in good working order". Similarly to Newton, René Descartes (1596–1650) speculated on "the cosmos as a great time machine operating according to fixed laws, a watch created and wound up by the great watchmaker".

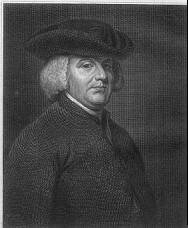

William Paley

Watches and timepieces have been used as examples of complicated technology in philosophical discussions. For example, Cicero, Voltaire and René Descartes all used timepieces in arguments regarding purpose. The watchmaker analogy, as described here, was used by Bernard le Bovier de Fontenelle in 1686, but was most famously formulated by Paley.

Paley used the watchmaker analogy in his book Natural Theology, or Evidences of the Existence and Attributes of the Deity collected from the Appearances of Nature, published in 1802. In it, Paley wrote that if a pocket watch is found on a heath, it is most reasonable to assume that someone dropped it and that it was made by at least one watchmaker, not by natural forces:

In crossing a heath, suppose I pitched my foot against a stone, and were asked how the stone came to be there; I might possibly answer, that, for anything I knew to the contrary, it had lain there forever: nor would it perhaps be very easy to show the absurdity of this answer. But suppose I had found a watch upon the ground, and it should be inquired how the watch happened to be in that place; I should hardly think of the answer I had before given, that for anything I knew, the watch might have always been there. ... There must have existed, at some time, and at some place or other, an artificer or artificers, who formed [the watch] for the purpose which we find it actually to answer; who comprehended its construction, and designed its use. ... Every indication of contrivance, every manifestation of design, which existed in the watch, exists in the works of nature; with the difference, on the side of nature, of being greater or more, and that in a degree which exceeds all computation.

— William Paley, Natural Theology (1802)

Paley went on to argue that the complex structures of living things and the remarkable adaptations of plants and animals required an intelligent designer. He believed the natural world was the creation of God and showed the nature of the creator. According to Paley, God had carefully designed "even the most humble and insignificant organisms" and all of their minute features (such as the wings and antennae of earwigs). He believed, therefore, that God must care even more for humanity.

Paley recognised that there is great suffering in nature and nature appears to be indifferent to pain. His way of reconciling that with his belief in a benevolent God was to assume that life had more pleasure than pain.

A charge of wholesale plagiarism from a book by Bernard Nieuwentyt was brought against Paley in The Athenaeum in 1848. The famous illustration of the watch was not peculiar to Nieuwentyt, however, and had been used by many others before either Paley or Nieuwentyt. The charge rested on further similarities. For example, Nieuwentyt wrote "in the middle of a Sandy down, or in a desart [sic] and solitary Place, where few People are used to pass, any one should find a Watch ..."

Joseph Butler

William Paley taught the works of Joseph Butler and appears to have built on Butler's 1736 design arguments, which infer a designer from evidence of design. Butler noted: "As the manifold Appearances of Design and of final Causes, in the Constitution of the World, prove it to be the Work of an intelligent Mind ... The appearances of Design and of final Causes in the constitution of nature as really prove this acting agent to be an intelligent Designer... ten thousand Instances of Design, cannot but prove a Designer."

Jean-Jacques Rousseau

Rousseau also mentioned the watchmaker theory. He wrote the following in his 1762 book, Emile:

I am like a man who sees the works of a watch for the first time; he is never weary of admiring the mechanism, though he does not know the use of the instrument and has never seen its face. I do not know what this is for, says he, but I see that each part of it is fitted to the rest, I admire the workman in the details of his work, and I am quite certain that all these wheels only work together in this fashion for some common end which I cannot perceive. Let us compare the special ends, the means, the ordered relations of every kind, then let us listen to the inner voice of feeling; what healthy mind can reject its evidence? Unless the eyes are blinded by prejudices, can they fail to see that the visible order of the universe proclaims a supreme intelligence? What sophisms must be brought together before we fail to understand the harmony of existence and the wonderful co-operation of every part for the maintenance of the rest?

Criticism

David Hume

Before Paley published his book, David Hume (1711–1776) had already put forward a number of philosophical criticisms of the watch analogy, and to some extent anticipated the concept of natural selection. His criticisms can be grouped under three main headings.

His first objection is that we have no experience of world-making. Hume stressed that whenever we claim to know the cause of something, we have inferred it by induction from previous experience of similar objects being made, or from having seen the object itself being made. For example, we know that a watch must have been made by a watchmaker because we can observe watches being made and compare this with the making of other similar objects, inferring that they have like causes. However, he argues, we have no experience of our universe's creation, or of the creation of any other universe with which to compare our own, and never will; therefore, it would be illogical to infer that our universe was created by an intelligent designer in the same way that a watch was.

The second criticism that Hume offers concerns the form of the argument as an analogy. An analogical argument claims that because object X (a watch) is like object Y (the universe) in one respect, the two are probably alike in another, hidden, respect (their cause, namely creation by an intelligent designer). He points out that for an argument from analogy to succeed, the two things being compared must share enough similarities that are relevant to the respect in which they are being compared. For example, a kitten and a lion are similar in many respects, but it would not be correct to infer that because a lion roars, a kitten also roars: the similarities between the two are too few, and too weakly relevant to the sounds they make, to support the inference. Hume then argues that the universe and a watch likewise lack enough relevant or close similarities to infer that both were created in the same way. For example, the universe is made of organic, natural material, whereas the watch is made of artificial, mechanical materials. By the same reasoning, he claims, the universe could be argued to be more analogous to something organic such as a vegetable (which, as we can observe for ourselves, does not need a 'designer' or a 'watchmaker' to come into being). Although he admits that the analogy of the universe to a vegetable seems ridiculous, he says that it is just as ridiculous to analogize the universe with a watch.

The third criticism that Hume offers is that even if the argument did give evidence for a designer, it would still give no evidence for the 'omnipotent', 'benevolent' (all-powerful and all-loving) God of traditional Christian theism. One of the main assumptions of the argument as Paley later presented it is that 'like effects have like causes': machines (like the watch) and the universe have similar features of design, and so both must have the same kind of cause, an intelligent designer. Hume's objection is that the argument does not establish how far the likeness of causes extends, that is, how similar the creation of a universe is to the creation of a watch. Instead, Paley moves straight to the conclusion that the designer of the universe is the God of traditional Christianity. Hume takes the idea of 'like causes' and points out some potential absurdities that follow if the likeness is pressed further. One example he uses is that a machine or a watch is usually designed by a whole team of people rather than by one person. If the two are analogous in this way, the analogy would point to a group of gods who created the universe, not a single being. Another example is that complex machines are usually the result of many years of trial and error, with every new machine being an improved version of the last. By the same analogy, would that not suggest that the universe could be just one of many of God's 'trials', and that there are much better universes out there? If that were so, however, the creator of it all would surely not be 'all-loving' and 'all-powerful', having had to proceed by 'trial and error' in creating the universe.

Hume also points out that the universe could have arisen by random chance and still show evidence of design: if the universe is eternal, it would have had an infinite amount of time in which to form something as complex and ordered as our own world. He called this the 'Epicurean hypothesis'. On this hypothesis, the universe was at first random and chaotic, but over an unlimited period of time random particles coming together could naturally have 'evolved' into the highly ordered system we observe today, without the need for an intelligent designer as an explanation.

The last objection that he makes draws on the widely discussed problem of evil. He argues that all the unnecessary suffering that occurs daily throughout the world is yet another factor that counts against the idea that God is an 'omnipotent', 'benevolent' being.

Charles Darwin

When Darwin completed his studies of theology at Christ's College, Cambridge, in 1831, he read Paley's Natural Theology and believed that the work gave rational proof of the existence of God. That was because living beings showed complexity and were exquisitely fitted to their places in a happy world.

Subsequently, on the voyage of the Beagle, Darwin found that nature was not so beneficent, and the distribution of species did not support ideas of divine creation. In 1838, shortly after his return, Darwin conceived his theory that natural selection, rather than divine design, was the best explanation for gradual change in populations over many generations. He published the theory in On the Origin of Species in 1859, and in later editions, he noted responses that he had received:

It can hardly be supposed that a false theory would explain, in so satisfactory a manner as does the theory of natural selection, the several large classes of facts above specified. It has recently been objected that this is an unsafe method of arguing; but it is a method used in judging of the common events of life, and has often been used by the greatest natural philosophers ... I see no good reason why the views given in this volume should shock the religious feelings of any one. It is satisfactory, as showing how transient such impressions are, to remember that the greatest discovery ever made by man, namely, the law of the attraction of gravity, was also attacked by Leibnitz, "as subversive of natural, and inferentially of revealed, religion." A celebrated author and divine has written to me that "he has gradually learnt to see that it is just as noble a conception of the Deity to believe that He created a few original forms capable of self-development into other and needful forms, as to believe that He required a fresh act of creation to supply the voids caused by the action of His laws."

— Charles Darwin, The Origin of Species (1859)

Darwin reviewed the implications of this finding in his autobiography:

Although I did not think much about the existence of a personal God until a considerably later period of my life, I will here give the vague conclusions to which I have been driven. The old argument of design in nature, as given by Paley, which formerly seemed to me so conclusive, fails, now that the law of natural selection has been discovered. We can no longer argue that, for instance, the beautiful hinge of a bivalve shell must have been made by an intelligent being, like the hinge of a door by man. There seems to be no more design in the variability of organic beings and in the action of natural selection, than in the course which the wind blows. Everything in nature is the result of fixed laws.

— Charles Darwin, The Autobiography of Charles Darwin 1809–1882. With the original omissions restored.

The idea that nature was governed by laws was already common, and in 1833 William Whewell, a proponent of the natural theology that Paley had inspired, had written that "with regard to the material world, we can at least go so far as this—we can perceive that events are brought about not by insulated interpositions of Divine power, exerted in each particular case, but by the establishment of general laws." Darwin, who spoke of the "fixed laws", concurred with Whewell, writing in the second edition of On the Origin of Species:

There is grandeur in this view of life, with its several powers, having been originally breathed by the Creator into a few forms or into one; and that, whilst this planet has gone cycling on according to the fixed law of gravity, from so simple a beginning endless forms most beautiful and most wonderful have been, and are being, evolved.

— Charles Darwin, The Origin of Species (1860)

By the time that Darwin published his theory, theologians of liberal Christianity were already supporting such ideas, and by the late 19th century, their modernist approach was predominant in theology. In science, evolutionary theory incorporating Darwin's natural selection became completely accepted.

Richard Dawkins

In The Blind Watchmaker, Richard Dawkins argues that the watch analogy conflates the complexity that arises from living organisms that are able to reproduce themselves (and may become more complex over time) with the complexity of inanimate objects, unable to pass on any reproductive changes (such as the multitude of parts manufactured in a watch). The comparison breaks down because of this important distinction.

In a BBC Horizon episode, also entitled The Blind Watchmaker, Dawkins described Paley's argument as being "as mistaken as it is elegant". In both contexts, Dawkins treated Paley with respect while holding that he had proposed an incorrect solution to a real problem. In his essay The Big Bang, Steven Pinker discusses Dawkins's coverage of Paley's argument, adding: "Biologists today do not disagree with Paley's laying out of the problem. They disagree only with his solution."

In his book The God Delusion, Dawkins argues that rather than luck, the evolution of human life is the result of natural selection. He suggests that it is fallacious to view "coming about by chance" and "coming about by design" as the only possibilities, with natural selection being the alternative to the existence of an intelligent designer. By amassing a large number of small changes, the theory of natural selection allows for a seemingly impossible end product to be produced.
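The contrast between single-step chance and the accumulation of many small changes can be illustrated with a toy simulation. The following is a minimal sketch, not a model of biology: the target phrase is the one associated with Dawkins's own demonstration in The Blind Watchmaker, while the function names, brood size, and mutation rate are assumptions chosen for illustration. Unlike natural selection, the sketch steers toward a fixed, pre-chosen target, so it shows only how retaining small improvements differs from drawing the whole result at random.

```python
import random
import string

ALPHABET = string.ascii_uppercase + " "   # 27 symbols
TARGET = "METHINKS IT IS LIKE A WEASEL"   # illustrative target phrase


def score(candidate, target=TARGET):
    """Number of positions at which candidate matches the target."""
    return sum(a == b for a, b in zip(candidate, target))


def mutate(parent, rate, rng):
    """Copy parent, replacing each character with a random one at the given rate."""
    return "".join(
        rng.choice(ALPHABET) if rng.random() < rate else ch for ch in parent
    )


def single_step_probability(target=TARGET):
    """Chance of drawing the whole target in one uniformly random attempt."""
    return (1 / len(ALPHABET)) ** len(target)


def cumulative_selection(target=TARGET, offspring=100, rate=0.05,
                         max_generations=5000, seed=0):
    """Breed mutated copies each generation and keep the best scorer.

    The parent is kept in the pool, so the score never decreases.
    Returns (generations_used, final_string).
    """
    rng = random.Random(seed)
    current = "".join(rng.choice(ALPHABET) for _ in target)
    generation = 0
    while current != target and generation < max_generations:
        brood = [mutate(current, rate, rng) for _ in range(offspring)]
        current = max(brood + [current], key=lambda s: score(s, target))
        generation += 1
    return generation, current


if __name__ == "__main__":
    generations, result = cumulative_selection()
    print("single-step chance:", single_step_probability())
    print("cumulative selection:", repr(result), "after", generations, "generations")
```

With these assumed settings, a single random draw has a chance of (1/27)^28 of producing the phrase, whereas the step-by-step procedure retains every partial improvement and so does not have to find all 28 characters at once.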

In addition, he argues that the watchmaker's creation of the watch implies that the watchmaker must be more complex than the watch. Design is top-down: someone or something more complex designs something less complex. Following the line upwards, if the watch was designed by a (necessarily more complex) watchmaker, then the watchmaker must in turn have been created by a being more complex than himself. So the question becomes: who designed the designer? Dawkins argues that (a) this line continues ad infinitum, and (b) it does not explain anything. Evolution, on the other hand, takes a bottom-up approach; it explains how greater complexity can arise gradually by building on or combining lesser complexity.

Richerson and Boyd

Biologist Peter Richerson and anthropologist Robert Boyd offer an oblique criticism by arguing that watches were not "hopeful monsters created by single inventors," but were created by watchmakers building up their skills in a cumulative fashion over time, each contributing to a watch-making tradition from which any individual watchmaker draws their designs.

Contemporary usage

In the early 20th century, the modernist theology of higher criticism was contested in the United States by Biblical literalists, who campaigned successfully against the teaching of evolution and began calling themselves creationists in the 1920s. When teaching of evolution was reintroduced into public schools in the 1960s, they adopted what they called creation science, which had a central concept of design in terms similar to Paley's argument. That idea was then relabeled intelligent design, which presents the same analogy as an argument against evolution by natural selection without explicitly stating that the "intelligent designer" was God. The argument from the complexity of biological organisms was now presented as the irreducible complexity argument, the most notable proponent of which was Michael Behe, and, drawing on the vocabulary of information theory, as the specified complexity argument, the most notable proponent of which was William Dembski.

The watchmaker analogy was referenced in the 2005 Kitzmiller v. Dover Area School District trial, during which Paley was mentioned several times. The plaintiffs' expert witness John Haught noted that both intelligent design and the watchmaker analogy are "reformulations" of the same theological argument. On day 21 of the trial, the plaintiffs' attorney Mr. Harvey walked the defense witness Dr. Minnich through a modernized version of Paley's argument, substituting a cell phone for the watch. In his ruling, the judge stated that the use of the argument from design by intelligent design proponents "is merely a restatement of the Reverend William Paley's argument applied at the cell level," adding "Minnich, Behe, and Paley reach the same conclusion, that complex organisms must have been designed using the same reasoning, except that Professors Behe and Minnich refuse to identify the designer, whereas Paley inferred from the presence of design that it was God." The judge ruled that such an inductive argument is not accepted as science because it is unfalsifiable.