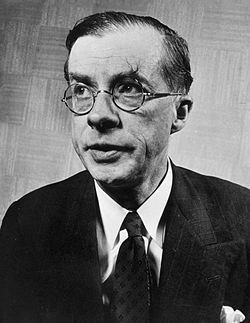

In astronomy, the main sequence is a classification of stars which appear on plots of stellar color versus brightness as a continuous and distinctive band. Stars spend the majority of their lives on the main sequence, during which core hydrogen burning is dominant. These main-sequence stars, or sometimes interchangeably dwarf stars, are the most numerous true stars in the universe and include the Sun. Color-magnitude plots are known as Hertzsprung–Russell diagrams after Ejnar Hertzsprung and Henry Norris Russell.

When a gaseous nebula undergoes sufficient gravitational collapse, the high pressure and temperature concentrated at the core will trigger the nuclear fusion of hydrogen into helium (see stars). The thermal energy from this process radiates out from the hot, dense core, generating a strong pressure gradient. It is this pressure gradient that counters the star's collapse under gravity, maintaining the star in a state of hydrostatic equilibrium. The star's position on the main sequence is determined primarily by its mass, but also by its age and chemical composition. Radiation is not the only method of energy transfer in stars. Convection also plays a role in the movement of energy, particularly in the cores of stars greater than 1.3 to 1.5 times the Sun's mass, again depending on age and chemical composition.

When discussing chemical composition, astronomers generally refer to the metallicity of the star: the abundance of elements heavier than helium. For example, the fraction of the Sun by mass currently composed of hydrogen (denoted X) is 74.9%. For helium (denoted Y) it is 23.8%, meaning the star's metallicity (denoted Z), the mass fraction of all other elements, is 1.3%. These values are typical for main-sequence stars of similar mass. A higher metallicity leads to a higher opacity, which keeps the energy produced in the core concentrated there rather than being radiated or transferred away to the star's outer layers. This hotter environment speeds up nuclear fusion and decreases the amount of time the star will spend on the main sequence.
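The bookkeeping between the three mass fractions can be checked in a few lines of Python. This is a minimal sketch: the function name `metallicity` is illustrative, it assumes the fractions sum to one (X + Y + Z = 1), and the inputs are the solar values quoted above.

```python
def metallicity(x_hydrogen: float, y_helium: float) -> float:
    """Return Z, the mass fraction of elements heavier than helium.

    Assumes the three mass fractions sum to one: X + Y + Z = 1.
    """
    z = 1.0 - x_hydrogen - y_helium
    if z < 0:
        raise ValueError("X + Y must not exceed 1")
    return z


# Solar values quoted in the text: X = 74.9 %, Y = 23.8 %
z_sun = metallicity(0.749, 0.238)
print(f"Z = {z_sun:.3f}")  # prints "Z = 0.013"
```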

The main sequence is divided into upper and lower parts, based on the dominant process that a star uses to generate energy. The Sun, along with main-sequence stars below about 1.5 M☉, primarily fuses hydrogen atoms together in a series of stages to form helium, a sequence called the proton–proton chain. Above this mass, in the upper main sequence, the nuclear fusion process mainly uses atoms of carbon, nitrogen, and oxygen as intermediaries in the CNO cycle that produces helium from hydrogen atoms. The proton–proton chain still occurs in these stars, but it produces less energy than the CNO cycle. Main-sequence stars where the CNO cycle is the dominant energy production process undergo convection in their core regions, which acts to stir up the newly created helium and maintain the proportion of fuel needed for fusion to occur. Below this mass, stars have cores that are entirely radiative with convective zones near the surface. With decreasing stellar mass, the proportion of the star forming a convective envelope steadily increases. The main-sequence stars below 0.4 M☉ undergo convection throughout their mass. When core convection does not occur, a helium-rich core develops surrounded by an outer layer of hydrogen.

The more massive a star is, the shorter its lifespan on the main sequence. After the hydrogen fuel at the core has been consumed, the star evolves away from the main sequence on the HR diagram, into a supergiant, red giant, or directly to a white dwarf.

History

In the early part of the 20th century, information about the types and distances of stars became more readily available. The spectra of stars were shown to have distinctive features, which allowed them to be categorized. Annie Jump Cannon and Edward Charles Pickering at Harvard College Observatory developed a method of categorization that became known as the Harvard Classification Scheme, published in the Harvard Annals in 1901.

In Potsdam in 1906, the Danish astronomer Ejnar Hertzsprung noticed that the reddest stars (classified as K and M in the Harvard scheme) could be divided into two distinct groups. These stars are either much brighter than the Sun or much fainter. To distinguish these groups, he called them "giant" and "dwarf" stars. The following year he began studying star clusters, large groupings of stars that are co-located at approximately the same distance. For these stars, he published the first plots of color versus luminosity. These plots showed a prominent and continuous sequence of stars, which he named the Main Sequence.

At Princeton University, Henry Norris Russell was following a similar course of research. He was studying the relationship between the spectral classification of stars and their actual brightness as corrected for distance—their absolute magnitude. For this purpose, he used a set of stars that had reliable parallaxes and many of which had been categorized at Harvard. When he plotted the spectral types of these stars against their absolute magnitude, he found that dwarf stars followed a distinct relationship. This allowed the real brightness of a dwarf star to be predicted with reasonable accuracy.

Of the red stars observed by Hertzsprung, the dwarf stars also followed the spectra-luminosity relationship discovered by Russell. However, giant stars are much brighter than dwarfs and so do not follow the same relationship. Russell proposed that "giant stars must have low density or great surface brightness, and the reverse is true of dwarf stars". The same curve also showed that there were very few faint white stars.

In 1933, Bengt Strömgren introduced the term Hertzsprung–Russell diagram to denote a luminosity-spectral class diagram. This name reflected the parallel development of this technique by both Hertzsprung and Russell earlier in the century.

As evolutionary models of stars were developed during the 1930s, it was shown that, for stars with the same composition, the star's mass determines its luminosity and radius. Conversely, when a star's chemical composition and its position on the main sequence are known, the star's mass and radius can be deduced. This became known as the Vogt–Russell theorem, named after Heinrich Vogt and Henry Norris Russell. It was subsequently discovered that this relationship breaks down somewhat for stars of non-uniform composition.

A refined scheme for stellar classification was published in 1943 by William Wilson Morgan and Philip Childs Keenan. The MK classification assigned each star a spectral type—based on the Harvard classification—and a luminosity class. The Harvard classification had been developed by assigning a different letter to each star based on the strength of the hydrogen spectral line before the relationship between spectra and temperature was known. When ordered by temperature and when duplicate classes were removed, the spectral types of stars followed, in order of decreasing temperature with colors ranging from blue to red, the sequence O, B, A, F, G, K, and M. (A popular mnemonic for memorizing this sequence of stellar classes is "Oh Be A Fine Girl/Guy, Kiss Me".) The luminosity class ranged from I to V, in order of decreasing luminosity. Stars of luminosity class V belonged to the main sequence.

In April 2018, astronomers reported the detection of the most distant "ordinary" (i.e., main sequence) star, named Icarus (formally, MACS J1149 Lensed Star 1), at 9 billion light-years away from Earth.

Formation and evolution


When a protostar is formed from the collapse of a giant molecular cloud of gas and dust in the local interstellar medium, the initial composition is homogeneous throughout, consisting of approximately 70% hydrogen, 28% helium, and trace amounts of other elements, by mass. The initial mass of the star depends on the local conditions within the cloud. (The mass distribution of newly formed stars is described empirically by the initial mass function.) During the initial collapse, this pre-main-sequence star generates thermal energy through the increase in pressure arising due to its gravitational contraction. During this phase, before hydrogen ignition, the star will spend a length of time contracting known as the Kelvin-Helmholtz, or thermal, timescale. This timescale describes the length of time a star can last by radiating its internal kinetic energy. Once sufficiently dense, stars begin converting hydrogen into helium and producing energy through an exothermic nuclear fusion process. The nuclear timescale is useful to describe the length of time a star can last during this next phase.

When nuclear fusion of hydrogen becomes the dominant energy production process and the excess energy gained from gravitational contraction has been lost, the star lies along a curve on the Hertzsprung–Russell diagram (or HR diagram) called the standard main sequence. Astronomers will sometimes refer to this stage as "zero-age main sequence", or ZAMS. The ZAMS curve can be calculated using computer models of stellar properties at the point when stars begin hydrogen fusion. From this point, the brightness and surface temperature of stars typically increase with age.

A star remains near its initial position on the main sequence until a significant amount of hydrogen in the core has been consumed, then begins to evolve into a more luminous star. (On the HR diagram, the evolving star moves up and to the right of the main sequence.) Thus the main sequence represents the primary hydrogen-burning stage of a star's lifetime.

Classification

Main sequence stars are divided into the following types:

- O-type main-sequence star

- B-type main-sequence star

- A-type main-sequence star

- F-type main-sequence star

- G-type main-sequence star

- K-type main-sequence star

- M-type main-sequence star

M-type (and, to a lesser extent, K-type) main-sequence stars are usually referred to as red dwarfs.

Properties

The majority of stars on a typical HR diagram lie along the main-sequence curve. This line is pronounced because both the spectral type and the luminosity depend only on a star's mass, at least to zeroth-order approximation, as long as it is fusing hydrogen at its core, and that is what almost all stars spend most of their "active" lives doing.

The temperature of a star determines its spectral type via its effect on the physical properties of plasma in its photosphere. A star's energy emission as a function of wavelength is influenced by both its temperature and composition. A key indicator of this energy distribution is given by the color index, B − V, which measures the star's magnitude in blue (B) and green-yellow (V) light by means of filters. This difference in magnitude provides a measure of a star's temperature.
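To illustrate how a color index maps to a temperature, the sketch below uses one empirical approximation, the black-body-based formula attributed to Ballesteros (2012). That formula is an outside assumption and not part of this article, and the B − V inputs are illustrative round numbers (about 0.65 for a Sun-like star), so the output should be read as a rough guide only.

```python
def temperature_from_bv(b_minus_v: float) -> float:
    """Approximate effective temperature (K) from a B - V color index.

    Assumption: uses the empirical Ballesteros (2012) approximation,
    which treats the star as a black body. Illustrative only.
    """
    return 4600.0 * (
        1.0 / (0.92 * b_minus_v + 1.7) + 1.0 / (0.92 * b_minus_v + 0.62)
    )


# Illustrative color indices: smaller (bluer) B - V means a hotter star.
for label, bv in [("blue-white", 0.0), ("Sun-like", 0.65), ("red", 1.5)]:
    print(f"{label:>10}: B-V = {bv:+.2f} -> T ~ {temperature_from_bv(bv):,.0f} K")
```

The trend matters more than the exact numbers: a larger B − V (a redder star) corresponds to a lower temperature.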

Dwarf terminology

Main-sequence stars are called dwarf stars, but this terminology is partly historical and can be somewhat confusing. For the cooler stars, dwarfs such as red dwarfs, orange dwarfs, and yellow dwarfs are indeed much smaller and dimmer than other stars of those colors. However, for hotter blue and white stars, the difference in size and brightness between so-called "dwarf" stars that are on the main sequence and so-called "giant" stars that are not, becomes smaller. For the hottest stars the difference is not directly observable and for these stars, the terms "dwarf" and "giant" refer to differences in spectral lines which indicate whether a star is on or off the main sequence. Nevertheless, very hot main-sequence stars are still sometimes called dwarfs, even though they have roughly the same size and brightness as the "giant" stars of that temperature.

The common use of "dwarf" to mean the main sequence is confusing in another way because there are dwarf stars that are not main-sequence stars. For example, a white dwarf is the dead core left over after a star has shed its outer layers, and is much smaller than a main-sequence star, roughly the size of Earth. These represent the final evolutionary stage of many main-sequence stars.

Parameters

By treating the star as an idealized energy radiator known as a black body, the luminosity L and radius R can be related to the effective temperature $T_\text{eff}$ by the Stefan–Boltzmann law:

$$L = 4\pi \sigma R^2 T_\text{eff}^4$$

where σ is the Stefan–Boltzmann constant. As the position of a star on the HR diagram shows its approximate luminosity, this relation can be used to estimate its radius.
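As a rough sketch of that radius estimate, the Stefan–Boltzmann law L = 4πσR²T⁴ can be divided by its solar counterpart, so that σ cancels and R/R☉ = √(L/L☉) · (T☉/T)². The function name and the choice of 5,780 K for the Sun (the G2 row of the sample table in this article) are assumptions made for illustration.

```python
import math

T_SUN_K = 5780.0  # solar effective temperature, taken from the article's G2 row


def radius_solar(luminosity_solar: float, t_eff_k: float) -> float:
    """Estimate R/R_sun from L/L_sun and T_eff via the Stefan-Boltzmann law.

    Dividing L = 4*pi*sigma*R^2*T^4 by the solar values cancels the constants.
    """
    return math.sqrt(luminosity_solar) * (T_SUN_K / t_eff_k) ** 2


# (class, L/L_sun, T_eff in K) taken from the sample-parameters table
for cls, lum, temp in [("A0", 80.0, 10800.0), ("G2", 1.0, 5780.0), ("K5", 0.16, 4410.0)]:
    print(f"{cls}: R ~ {radius_solar(lum, temp):.2f} R_sun")
```

For the A0 and K5 rows this gives radii within roughly 10% of the tabulated 2.5 and 0.74 R☉, inside the scatter expected for typical values.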

The mass, radius, and luminosity of a star are closely interlinked, and their respective values can be approximated by three relations. First is the Stefan–Boltzmann law, which relates the luminosity L, the radius R and the surface temperature $T_\text{eff}$. Second is the mass–luminosity relation, which relates the luminosity L and the mass M. Finally, the relationship between M and R is close to linear. The ratio of M to R increases by a factor of only three over 2.5 orders of magnitude of M. This ratio is roughly proportional to the star's inner temperature $T_I$, and its extremely slow increase reflects the fact that the rate of energy generation in the core depends strongly on this temperature, while it also has to fit the mass–luminosity relation. Thus, a too-high or too-low temperature will result in stellar instability.

A better approximation is to take ε = L/M, the energy generation rate per unit mass, as ε is proportional to $T_I^{15}$, where $T_I$ is the core temperature. This is suitable for stars at least as massive as the Sun, exhibiting the CNO cycle, and gives the better fit $R \propto M^{0.78}$.

Sample parameters

The table below shows typical values for stars along the main sequence. The values of luminosity (L), radius (R), and mass (M) are relative to the Sun—a dwarf star with a spectral classification of G2 V. The actual values for a star may vary by as much as 20–30% from the values listed below.

| Stellar class | Radius, R/R☉ | Mass, M/M☉ | Luminosity, L/L☉ | Temp. (K) | Examples |
|---|---|---|---|---|---|
| O2 | 12 | 100 | 800,000 | 50,000 | BI 253 |
| O6 | 9.8 | 35 | 180,000 | 38,000 | Theta1 Orionis C |
| B0 | 7.4 | 18 | 20,000 | 30,000 | Phi1 Orionis |
| B5 | 3.8 | 6.5 | 800 | 16,400 | Pi Andromedae A |
| A0 | 2.5 | 3.2 | 80 | 10,800 | Alpha Coronae Borealis A |
| A5 | 1.7 | 2.1 | 20 | 8,620 | Beta Pictoris |
| F0 | 1.3 | 1.7 | 6 | 7,240 | Gamma Virginis |
| F5 | 1.2 | 1.3 | 2.5 | 6,540 | Eta Arietis |
| G0 | 1.05 | 1.10 | 1.26 | 5,920 | Beta Comae Berenices |
| G2 | 1 | 1 | 1 | 5,780 | Sun |
| G5 | 0.93 | 0.93 | 0.79 | 5,610 | Alpha Mensae |
| K0 | 0.85 | 0.78 | 0.40 | 5,240 | 70 Ophiuchi A |
| K5 | 0.74 | 0.69 | 0.16 | 4,410 | 61 Cygni A |
| M0 | 0.51 | 0.60 | 0.072 | 3,800 | Lacaille 8760 |
| M5 | 0.18 | 0.15 | 0.0027 | 3,120 | EZ Aquarii A |
| M8 | 0.11 | 0.08 | 0.0004 | 2,650 | Van Biesbroeck's star |
| L1 | 0.09 | 0.07 | 0.00017 | 2,200 | 2MASS J0523−1403 |

Energy generation

All main-sequence stars have a core region where energy is generated by nuclear fusion. The temperature and density of this core are at the levels necessary to sustain the energy production that will support the remainder of the star. A reduction of energy production would cause the overlying mass to compress the core, resulting in an increase in the fusion rate because of higher temperature and pressure. Likewise, an increase in energy production would cause the star to expand, lowering the pressure at the core. Thus the star forms a self-regulating system in hydrostatic equilibrium that is stable over the course of its main-sequence lifetime.

Main-sequence stars employ two types of hydrogen fusion processes, and the rate of energy generation from each type depends on the temperature in the core region. Astronomers divide the main sequence into upper and lower parts, based on which of the two is the dominant fusion process. In the lower main sequence, energy is primarily generated as the result of the proton–proton chain, which directly fuses hydrogen together in a series of stages to produce helium. Stars in the upper main sequence have sufficiently high core temperatures to efficiently use the CNO cycle (see chart). This process uses atoms of carbon, nitrogen, and oxygen as intermediaries in the process of fusing hydrogen into helium.

At a stellar core temperature of 18 million kelvin, the PP process and CNO cycle are equally efficient, and each type generates half of the star's net luminosity. As this is the core temperature of a star with about 1.5 M☉, the upper main sequence consists of stars above this mass. Thus, roughly speaking, stars of spectral class F or cooler belong to the lower main sequence, while A-type stars or hotter are upper main-sequence stars. The transition in primary energy production from one form to the other spans a mass range of less than one solar mass. In the Sun, a one-solar-mass star, only 1.5% of the energy is generated by the CNO cycle. By contrast, stars with 1.8 M☉ or above generate almost their entire energy output through the CNO cycle.

The observed upper limit for a main-sequence star is 120–200 M☉. The theoretical explanation for this limit is that stars above this mass cannot radiate energy fast enough to remain stable, so any additional mass will be ejected in a series of pulsations until the star reaches a stable limit. The lower limit for sustained proton–proton nuclear fusion is about 0.08 M☉ or 80 times the mass of Jupiter. Below this threshold are sub-stellar objects that cannot sustain hydrogen fusion, known as brown dwarfs.

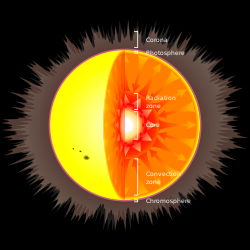

Structure

Because there is a temperature difference between the core and the surface, or photosphere, energy is transported outward. The two modes for transporting this energy are radiation and convection. A radiation zone, where energy is transported by radiation, is stable against convection and there is very little mixing of the plasma. By contrast, in a convection zone the energy is transported by bulk movement of plasma, with hotter material rising and cooler material descending. Convection is a more efficient mode for carrying energy than radiation, but it will only occur under conditions that create a steep temperature gradient.

In massive stars (above 10 M☉) the rate of energy generation by the CNO cycle is very sensitive to temperature, so the fusion is highly concentrated at the core. Consequently, there is a high temperature gradient in the core region, which results in a convection zone for more efficient energy transport. This mixing of material around the core removes the helium ash from the hydrogen-burning region, allowing more of the hydrogen in the star to be consumed during the main-sequence lifetime. The outer regions of a massive star transport energy by radiation, with little or no convection.

Intermediate-mass stars such as Sirius may transport energy primarily by radiation, with a small core convection region. Medium-sized, low-mass stars like the Sun have a core region that is stable against convection, with a convection zone near the surface that mixes the outer layers. This results in a steady buildup of a helium-rich core, surrounded by a hydrogen-rich outer region. By contrast, cool, very low-mass stars (below 0.4 M☉) are convective throughout. Thus the helium produced at the core is distributed across the star, producing a relatively uniform atmosphere and a proportionately longer main-sequence lifespan.

Luminosity-color variation

As non-fusing helium accumulates in the core of a main-sequence star, the reduction in the abundance of hydrogen per unit mass results in a gradual lowering of the fusion rate within that mass. Since it is fusion-supplied power that maintains the pressure of the core and supports the higher layers of the star, the core gradually gets compressed. This brings hydrogen-rich material into a shell around the helium-rich core at a depth where the pressure is sufficient for fusion to occur. The high power output from this shell pushes the higher layers of the star further out. This causes a gradual increase in the radius and consequently luminosity of the star over time. For example, the luminosity of the early Sun was only about 70% of its current value. As a star ages it thus changes its position on the HR diagram. This evolution is reflected in a broadening of the main sequence band which contains stars at various evolutionary stages.

Other factors that broaden the main sequence band on the HR diagram include uncertainty in the distance to stars and the presence of unresolved binary stars that can alter the observed stellar parameters. However, even perfect observation would show a fuzzy main sequence because mass is not the only parameter that affects a star's color and luminosity. Variations in chemical composition caused by the initial abundances, the star's evolutionary status, interaction with a close companion, rapid rotation, or a magnetic field can all slightly change a main-sequence star's HR diagram position, to name just a few factors. As an example, there are metal-poor stars (with a very low abundance of elements with higher atomic numbers than helium) that lie just below the main sequence and are known as subdwarfs. These stars are fusing hydrogen in their cores and so they mark the lower edge of the main sequence fuzziness caused by variance in chemical composition.

A nearly vertical region of the HR diagram, known as the instability strip, is occupied by pulsating variable stars known as Cepheid variables. These stars vary in magnitude at regular intervals, giving them a pulsating appearance. The strip intersects the upper part of the main sequence in the region of class A and F stars, which are between one and two solar masses. Pulsating stars in this part of the strip are called Delta Scuti variables. Main-sequence stars in this region experience only small changes in magnitude, so this variation is difficult to detect. Other classes of unstable main-sequence stars, like Beta Cephei variables, are unrelated to this instability strip.

Lifetime

The total amount of energy that a star can generate through nuclear fusion of hydrogen is limited by the amount of hydrogen fuel that can be consumed at the core. For a star in equilibrium, the thermal energy generated at the core must be at least equal to the energy radiated at the surface. Since the luminosity gives the amount of energy radiated per unit time, the total life span can be estimated, to first approximation, as the total energy produced divided by the star's luminosity.
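A back-of-the-envelope version of this estimate for the Sun can be sketched as follows. The inputs are assumptions chosen for illustration and are not stated in this article: roughly 10% of the star's mass is taken to be fused over the main-sequence lifetime, about 0.7% of the fused mass is taken to be converted to energy, and the solar mass and luminosity are rounded reference values.

```python
C_M_PER_S = 299_792_458.0   # speed of light
M_SUN_KG = 1.989e30         # solar mass (rounded, assumed)
L_SUN_W = 3.828e26          # solar luminosity (rounded, assumed)
SECONDS_PER_YEAR = 3.156e7


def nuclear_lifetime_years(
    mass_kg: float,
    luminosity_w: float,
    core_fraction: float = 0.10,  # assumed fraction of mass fused on the main sequence
    efficiency: float = 0.007,    # assumed mass-to-energy yield of hydrogen fusion
) -> float:
    """Lifetime ~ (total fusion energy available) / (luminosity)."""
    energy_j = core_fraction * efficiency * mass_kg * C_M_PER_S**2
    return energy_j / luminosity_w / SECONDS_PER_YEAR


t_sun = nuclear_lifetime_years(M_SUN_KG, L_SUN_W)
print(f"Estimated solar main-sequence lifetime: {t_sun:.2e} years")
```

With these assumed inputs the result is on the order of ten billion years, the same order of magnitude as the solar lifetime quoted in this article.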

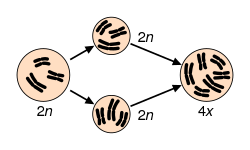

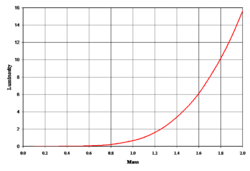

For a star with at least 0.5 M☉, when the hydrogen supply in its core is exhausted and it expands to become a red giant, it can start to fuse helium atoms to form carbon. The energy output of the helium fusion process per unit mass is only about a tenth the energy output of the hydrogen process, and the luminosity of the star increases. This results in a much shorter length of time in this stage compared to the main-sequence lifetime. (For example, the Sun is predicted to spend 130 million years burning helium, compared to about 12 billion years burning hydrogen.) Thus, about 90% of the observed stars above 0.5 M☉ will be on the main sequence.

On average, main-sequence stars are known to follow an empirical mass–luminosity relationship. The luminosity (L) of the star is roughly proportional to the total mass (M) as the following power law:

$$L \propto M^{3.5}$$

This relationship applies to main-sequence stars in the range 0.1–50 M☉.
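The power law can be evaluated directly in solar units. The sketch below assumes the commonly quoted exponent of 3.5 (the value implied by the lifetime formula in this article) and normalizes to the Sun, so that 1 M☉ gives 1 L☉. The function name is illustrative.

```python
def luminosity_solar(mass_solar: float, exponent: float = 3.5) -> float:
    """Approximate L/L_sun from M/M_sun using L proportional to M**exponent.

    Only meant for main-sequence stars in the quoted 0.1-50 M_sun range.
    """
    if not 0.1 <= mass_solar <= 50.0:
        raise ValueError("relation is only quoted for 0.1-50 solar masses")
    return mass_solar ** exponent


for mass in (0.5, 1.0, 2.0, 10.0):
    print(f"M = {mass:>4} M_sun -> L ~ {luminosity_solar(mass):.3g} L_sun")
```

Doubling the mass raises the luminosity by a factor of about 11, which is why massive stars exhaust their fuel so much faster.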

The amount of fuel available for nuclear fusion is proportional to the mass of the star. Thus, the lifetime of a star on the main sequence can be estimated by comparing it to solar evolutionary models. The Sun has been a main-sequence star for about 4.5 billion years and it will start to expand rapidly towards a red giant in 6.5 billion years, for a total main-sequence lifetime of roughly $10^{10}$ years. Hence:

$$\tau_\text{MS} \approx 10^{10}\,\text{years} \left[\frac{M}{M_\odot}\right] \left[\frac{L_\odot}{L}\right] = 10^{10}\,\text{years} \left[\frac{M}{M_\odot}\right]^{-2.5}$$

where M and L are the mass and luminosity of the star, respectively, $M_\odot$ is a solar mass, $L_\odot$ is the solar luminosity and $\tau_\text{MS}$ is the star's estimated main-sequence lifetime.
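As a sketch of how this scaling behaves, the function below computes the lifetime as 10¹⁰ years × (M/M☉) × (L☉/L). When no luminosity is supplied it falls back on the approximate relation L ∝ M^3.5, which reduces the estimate to 10¹⁰ years × (M/M☉)^−2.5. The names are illustrative.

```python
SOLAR_MS_LIFETIME_YEARS = 1e10  # rough solar main-sequence lifetime from the text


def main_sequence_lifetime_years(mass_solar: float, luminosity_solar=None) -> float:
    """Estimate the main-sequence lifetime by scaling from the Sun.

    If the luminosity is not given, assume L ~ M**3.5 (solar units).
    """
    if luminosity_solar is None:
        luminosity_solar = mass_solar ** 3.5
    return SOLAR_MS_LIFETIME_YEARS * mass_solar / luminosity_solar


for mass in (0.1, 1.0, 10.0):
    print(f"M = {mass:>4} M_sun -> ~{main_sequence_lifetime_years(mass):.2e} years")
```

A 10 M☉ star comes out at a few tens of millions of years and a 0.1 M☉ star at a few trillion years, though the simple power law is only approximate at both ends of the mass range.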

Although more massive stars have more fuel to burn and might intuitively be expected to last longer, their luminosity rises much faster than their mass. This is required by the stellar equation of state: for a massive star to maintain equilibrium, the outward pressure of radiated energy generated in the core must rise to match the inward gravitational pressure of its envelope. Thus, the most massive stars may remain on the main sequence for only a few million years, while stars with less than a tenth of a solar mass may last for over a trillion years.

The exact mass-luminosity relationship depends on how efficiently energy can be transported from the core to the surface. A higher opacity has an insulating effect that retains more energy at the core, so the star does not need to produce as much energy to remain in hydrostatic equilibrium. By contrast, a lower opacity means energy escapes more rapidly and the star must burn more fuel to remain in equilibrium. A sufficiently high opacity can result in energy transport via convection, which changes the conditions needed to remain in equilibrium.

In high-mass main-sequence stars, the opacity is dominated by electron scattering, which is nearly constant with increasing temperature. Thus the luminosity only increases as the cube of the star's mass. For stars below 10 M☉, the opacity becomes dependent on temperature, resulting in the luminosity varying approximately as the fourth power of the star's mass. For very low-mass stars, molecules in the atmosphere also contribute to the opacity. Below about 0.5 M☉, the luminosity of the star varies as the mass to the power of 2.3, producing a flattening of the slope on a graph of mass versus luminosity. Even these refinements are only an approximation, however, and the mass-luminosity relation can vary depending on a star's composition.

Evolutionary tracks

When a main-sequence star has consumed the hydrogen at its core, the loss of energy generation causes its gravitational collapse to resume and the star evolves off the main sequence. The path which the star follows across the HR diagram is called an evolutionary track. A track known as the zero age main sequence (ZAMS) is where stars of different masses begin their main sequence lives, while a track known as the terminal age main sequence (TAMS) is where stars of different masses end their main sequence lives when hydrogen is depleted in their cores.

Stars with less than 0.23 M☉ are predicted to directly become white dwarfs when energy generation by nuclear fusion of hydrogen at their core comes to a halt, but stars in this mass range have main-sequence lifetimes longer than the current age of the universe, so no stars are old enough for this to have occurred.

In stars more massive than 0.23 M☉, the hydrogen surrounding the helium core reaches sufficient temperature and pressure to undergo fusion, forming a hydrogen-burning shell and causing the outer layers of the star to expand and cool. The stage as these stars move away from the main sequence is known as the subgiant branch; it is relatively brief and appears as a gap in the evolutionary track since few stars are observed at that point.

When the helium core of low-mass stars becomes degenerate, or the outer layers of intermediate-mass stars cool sufficiently to become opaque, their hydrogen shells increase in temperature and the stars start to become more luminous. This is known as the red-giant branch; it is a relatively long-lived stage and it appears prominently in H–R diagrams. These stars will eventually end their lives as white dwarfs.

The most massive stars do not become red giants; instead, their cores quickly become hot enough to fuse helium and eventually heavier elements and they are known as supergiants. They follow approximately horizontal evolutionary tracks from the main sequence across the top of the H–R diagram. Supergiants are relatively rare and do not show prominently on most H–R diagrams. Their cores will eventually collapse, usually leading to a supernova and leaving behind either a neutron star or black hole.

When a cluster of stars is formed at about the same time, the main-sequence lifespan of these stars will depend on their individual masses. The most massive stars will leave the main sequence first, followed in sequence by stars of ever lower masses. The position where stars in the cluster are leaving the main sequence is known as the turnoff point. By knowing the main-sequence lifespan of stars at this point, it becomes possible to estimate the age of the cluster.
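The turnoff argument can be illustrated with the approximate lifetime scaling from the Lifetime section, τ ≈ 10¹⁰ years × (M/M☉)^−2.5. This is only a crude sketch under that assumption, with illustrative function names; it is not a substitute for fitting detailed stellar models to a cluster.

```python
SOLAR_MS_LIFETIME_YEARS = 1e10  # rough solar main-sequence lifetime
LIFETIME_EXPONENT = -2.5        # from tau ~ M / L with L ~ M**3.5 (approximate)


def cluster_age_years(turnoff_mass_solar: float) -> float:
    """Age of a cluster whose turnoff stars have the given mass (solar units)."""
    return SOLAR_MS_LIFETIME_YEARS * turnoff_mass_solar ** LIFETIME_EXPONENT


def turnoff_mass_solar(age_years: float) -> float:
    """Inverse relation: mass of the stars now leaving the main sequence."""
    return (age_years / SOLAR_MS_LIFETIME_YEARS) ** (1.0 / LIFETIME_EXPONENT)


for mass in (5.0, 2.0, 1.0):
    print(f"turnoff at {mass} M_sun -> age ~ {cluster_age_years(mass):.2e} years")
```

A lower turnoff mass therefore indicates an older cluster, since only the longer-lived, lower-mass stars remain on the main sequence.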

$$\tau_\text{MS} \approx 10^{10}\,\text{years} \left[\frac{M}{M_\odot}\right] \left[\frac{L_\odot}{L}\right] = 10^{10}\,\text{years} \left[\frac{M}{M_\odot}\right]^{-2.5}$$