The Gaia hypothesis (/ˈɡaɪ.ə/), also known as the Gaia theory, Gaia paradigm, or the Gaia principle, proposes that living organisms interact with their inorganic surroundings on Earth to form a synergistic and self-regulating complex system that helps to maintain and perpetuate the conditions for life on the planet.

The Gaia hypothesis was formulated by the chemist James Lovelock and co-developed by the microbiologist Lynn Margulis in the 1970s. Following the suggestion by his neighbour, novelist William Golding, Lovelock named the hypothesis after Gaia, the primordial deity who was sometimes personified as the Earth in Greek mythology. In 2006, the Geological Society of London awarded Lovelock the Wollaston Medal in part for his work on the Gaia hypothesis.

Topics related to the Gaia hypothesis include how the biosphere and the evolution of organisms affect the stability of global temperature, salinity of seawater, atmospheric oxygen levels, the maintenance of the hydrosphere, and other environmental variables that affect the habitability of Earth.

The Gaia hypothesis was initially criticized for being teleological - implying the Earth purposefully maintains an atmosphere suitable for life - but this interpretation was rejected by Lovelock. The Gaia hypothesis continues to attract criticism, and today many scientists consider it to be only weakly supported by, or at odds with, the available evidence.

Overview

The Gaia hypothesis argues that organisms co-evolve with their environment. That is, organisms influence their abiotic environment, not just their biological one, and the abiotic environment in turn influences the biota through a Darwinian process, so that life and its habitat evolve together reciprocally. In 1995, Lovelock gave evidence of this biotic-abiotic relationship in the second iteration of his conjecture, in the book Ages of Gaia. It describes the evolution from the world of the early thermophilic (warm-loving) and methanogenic bacteria towards today's oxygen-enriched atmosphere of the Holocene, which supports more complex life than existed in primordial times. As each individual species or other system pursues its self-interest, their combined actions may have counterbalancing effects on the abiotic and biotic environment. Opponents of this view sometimes reference examples of events that resulted in dramatic change rather than stable equilibrium, such as the conversion of the Earth's atmosphere from a reducing environment to an oxygen-rich one at the end of the Archaean and the beginning of the Proterozoic eons.

Less accepted versions of the Gaia hypothesis claim that changes in the biosphere are brought about through the coordination of living organisms, which maintain those conditions through homeostasis. In some versions of the Gaia hypothesis, all lifeforms are considered part of one single living planetary being called Gaia. In this view, the atmosphere, the seas and the terrestrial crust would be results of interventions carried out by Gaia through the coevolving diversity of living organisms.

Among the precursors of the Gaia hypothesis are Russian scientists such as Piotr Alekseevich Kropotkin (1842–1921), Rafail Vasil’evich Rizpolozhensky (1862 – c. 1922), Vladimir Ivanovich Vernadsky (1863–1945), and Vladimir Alexandrovich Kostitzin (1886–1963).

The Gaia paradigm was an influence on the deep ecology movement.

Details

The Gaia hypothesis posits that the Earth is a self-regulating complex system involving the biosphere, the atmosphere, the hydrosphere and the pedosphere, tightly coupled as an evolving system. The hypothesis contends that this system as a whole, called Gaia, seeks a physical and chemical environment optimal for contemporary life.

Gaia evolves through a cybernetic feedback system operated by the biota, leading to broad stabilization of the conditions of habitability in a full homeostasis. Many processes at the Earth's surface that are essential for the conditions of life depend on the interaction of living forms, especially microorganisms, with inorganic elements. These processes establish a global control system that regulates Earth's surface temperature, atmospheric composition and ocean salinity, powered by the global thermodynamic disequilibrium state of the Earth system.

The existence of a planetary homeostasis influenced by living forms had been observed previously in the field of biogeochemistry, and it is being investigated also in other fields like Earth system science. The originality of the Gaia hypothesis relies on the assessment that such homeostatic balance is actively pursued with the goal of keeping the optimal conditions for life, even when terrestrial or external events menace them.

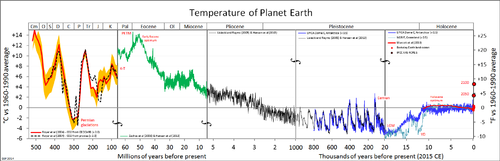

Regulation of global surface temperature

Since life started on Earth, the energy provided by the Sun has increased by 25–30%; however, the surface temperature of the planet has remained within the levels of habitability, reaching quite regular low and high margins. Lovelock has also hypothesised that methanogens produced elevated levels of methane in the early atmosphere, creating conditions resembling petrochemical smog and similar in some respects to the atmosphere of Titan. This, he suggests, helped to screen out ultraviolet light until the formation of the ozone layer, maintaining a degree of homeostasis. However, the Snowball Earth research has suggested that "oxygen shocks" and reduced methane levels led, during the Huronian, Sturtian and Marinoan/Varanger Ice Ages, to a world that very nearly became a solid "snowball". These epochs are evidence against the ability of the pre-Phanerozoic biosphere to fully self-regulate.
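To give a sense of scale for the 25–30% increase in solar output mentioned above, the following is a minimal sketch of the standard radiative-equilibrium estimate for a planet with no feedbacks at all. The present-day solar flux (1361 W/m²), the fixed albedo of 0.3, and the function names are illustrative assumptions, not values from the text; the estimate ignores the greenhouse effect, so only the difference between the two temperatures is meaningful, not their absolute values.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0  # assumed present-day solar flux at Earth, W m^-2
ALBEDO = 0.3             # assumed fixed planetary albedo (no feedbacks)


def equilibrium_temperature(flux, albedo=ALBEDO):
    """Temperature (K) at which absorbed sunlight balances emitted radiation."""
    return (flux * (1.0 - albedo) / (4.0 * SIGMA)) ** 0.25


def early_flux(increase):
    """Flux before a fractional increase (0.25 means 25%) up to today's value."""
    return SOLAR_CONSTANT / (1.0 + increase)


if __name__ == "__main__":
    today = equilibrium_temperature(SOLAR_CONSTANT)
    for increase in (0.25, 0.30):
        early = equilibrium_temperature(early_flux(increase))
        print(f"increase {increase:.0%}: warming without feedbacks = {today - early:.1f} K")
```

Under these assumptions a passive planet would have warmed by well over ten kelvin across the period, which is the kind of drift the hypothesis claims has been damped.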

Processing of the greenhouse gas CO2, explained below, plays a critical role in the maintenance of the Earth temperature within the limits of habitability.

The CLAW hypothesis, inspired by the Gaia hypothesis, proposes a feedback loop that operates between ocean ecosystems and the Earth's climate. The hypothesis specifically proposes that particular phytoplankton that produce dimethyl sulfide are responsive to variations in climate forcing, and that these responses lead to a negative feedback loop that acts to stabilise the temperature of the Earth's atmosphere.

Currently, the increase in human population and the environmental impact of its activities, such as the multiplication of greenhouse gases, may cause negative feedbacks in the environment to become positive feedbacks. Lovelock has stated that this could bring an extremely accelerated global warming, but he has since stated that the effects will likely occur more slowly.

Daisyworld simulations

In response to the criticism that the Gaia hypothesis seemingly required unrealistic group selection and cooperation between organisms, James Lovelock and Andrew Watson developed a mathematical model, Daisyworld, in which ecological competition underpinned planetary temperature regulation.

Daisyworld examines the energy budget of a planet populated by two different types of plants, black daisies and white daisies, which are assumed to occupy a significant portion of the surface. The colour of the daisies influences the albedo of the planet such that black daisies absorb more light and warm the planet, while white daisies reflect more light and cool the planet. The black daisies are assumed to grow and reproduce best at a lower temperature, while the white daisies are assumed to thrive best at a higher temperature. As the temperature rises closer to the value the white daisies like, the white daisies outreproduce the black daisies, leading to a larger percentage of white surface, and more sunlight is reflected, reducing the heat input and eventually cooling the planet. Conversely, as the temperature falls, the black daisies outreproduce the white daisies, absorbing more sunlight and warming the planet. The temperature will thus converge to the value at which the reproductive rates of the plants are equal.

Lovelock and Watson showed that, over a limited range of conditions, this negative feedback due to competition can stabilize the planet's temperature at a value which supports life as the energy output of the Sun changes, while a planet without life would show wide temperature changes. The percentage of white and black daisies will continually change to keep the temperature at the value at which the plants' reproductive rates are equal, allowing both life forms to thrive.
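The feedback described above can be sketched in a few lines of code. This is a minimal Daisyworld-style illustration, not the authors' own implementation. All parameter values (flux, albedos, death rate, growth curve, heat-redistribution coefficient) and all function names are assumptions chosen for illustration, in the style commonly used for this model. Unlike the description above, this sketch gives both daisy types the same growth curve: black daisies do better in cool conditions only because their dark patches are locally warmer, and white daisies do better in hot conditions because their patches are locally cooler.

```python
SIGMA = 5.67e-8        # Stefan-Boltzmann constant, W m^-2 K^-4
FLUX = 917.0           # assumed stellar flux at luminosity 1.0, W m^-2
Q = 2.06e9             # assumed heat-redistribution coefficient, K^4
DEATH_RATE = 0.3       # assumed daisy death rate per unit time
T_OPT = 295.65         # assumed optimal growth temperature, K (22.5 C)
ALBEDO_GROUND, ALBEDO_BLACK, ALBEDO_WHITE = 0.5, 0.25, 0.75
SEED = 0.01            # minimum cover, so either daisy type can always regrow


def growth_rate(local_temp):
    """Parabolic growth curve shared by both daisy types; zero far from T_OPT."""
    return max(0.0, 1.0 - 0.003265 * (T_OPT - local_temp) ** 2)


def lifeless_temperature(luminosity):
    """Temperature (K) of a bare planet with no daisies."""
    return (FLUX * luminosity * (1.0 - ALBEDO_GROUND) / SIGMA) ** 0.25


def daisyworld_temperature(luminosity, steps=6000, dt=0.05):
    """Integrate daisy cover with forward Euler steps; return (T, black, white)."""
    black = white = SEED
    for _ in range(steps):
        bare = 1.0 - black - white
        albedo = bare * ALBEDO_GROUND + black * ALBEDO_BLACK + white * ALBEDO_WHITE
        planet_t4 = FLUX * luminosity * (1.0 - albedo) / SIGMA
        # Dark patches are warmer than the planetary mean, pale patches cooler.
        temp_black = (Q * (albedo - ALBEDO_BLACK) + planet_t4) ** 0.25
        temp_white = (Q * (albedo - ALBEDO_WHITE) + planet_t4) ** 0.25
        # Each type spreads into bare ground and dies back at a fixed rate.
        black += dt * black * (bare * growth_rate(temp_black) - DEATH_RATE)
        white += dt * white * (bare * growth_rate(temp_white) - DEATH_RATE)
        black, white = max(black, SEED), max(white, SEED)
    albedo = ((1.0 - black - white) * ALBEDO_GROUND
              + black * ALBEDO_BLACK + white * ALBEDO_WHITE)
    temperature = (FLUX * luminosity * (1.0 - albedo) / SIGMA) ** 0.25
    return temperature, black, white


if __name__ == "__main__":
    for lum in (0.8, 1.0, 1.2):
        temp, black, white = daisyworld_temperature(lum)
        print(f"L={lum:.1f}  lifeless={lifeless_temperature(lum):6.1f} K  "
              f"daisies={temp:6.1f} K  black={black:.2f}  white={white:.2f}")
```

Running the loop for a few luminosities lets the temperature of the planet with daisies be compared against the bare planet. The regulation only holds within a limited luminosity range, and outside it the sketch (like the model) reverts to the lifeless response.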

It has been suggested that the results were predictable because Lovelock and Watson selected examples that produced the responses they desired.

Regulation of oceanic salinity

Ocean salinity has been constant at about 3.5% for a very long time. Salinity stability in oceanic environments is important as most cells require a rather constant salinity and do not generally tolerate values above 5%. The constant ocean salinity was a long-standing mystery, because no process counterbalancing the salt influx from rivers was known. Recently it was suggested that salinity may also be strongly influenced by seawater circulating through hot basaltic rocks and emerging as hot water vents on mid-ocean ridges. However, the composition of seawater is far from equilibrium, and it is difficult to explain this fact without the influence of organic processes. One suggested explanation lies in the formation of salt plains throughout Earth's history. It is hypothesized that these are created by bacterial colonies that fix ions and heavy metals during their life processes.

In the biogeochemical processes of Earth, sources and sinks describe the movement of elements into and out of a reservoir. The main salt ions in the oceans and seas are sodium (Na⁺), chloride (Cl⁻), sulfate (SO₄²⁻), magnesium (Mg²⁺), calcium (Ca²⁺) and potassium (K⁺). The elements that comprise salinity do not readily change and are a conservative property of seawater. There are many mechanisms that change salts from a particulate form to a dissolved form and back. It has also been speculated, without any demonstrated mechanism, that the movement of iron-bearing materials could affect the transport of ions and electrons and might even play a part in balancing the Earth's geomagnetic field. The known sources of sodium, i.e. salts, are the weathering, erosion, and dissolution of rocks, whose products are transported by rivers and deposited in the oceans.

The idea of the Mediterranean Sea as Gaia's kidney was put forward by Kenneth J. Hsu in a 2001 correspondence. Hsu suggests the "desiccation" of the Mediterranean is evidence of a functioning Gaia "kidney". In this and earlier suggested cases, it is plate movements and physics, not biology, which perform the regulation. Earlier "kidney functions" were performed during the "deposition of the Cretaceous (South Atlantic), Jurassic (Gulf of Mexico), Permo-Triassic (Europe), Devonian (Canada), and Cambrian/Precambrian (Gondwana) saline giants."

Regulation of oxygen in the atmosphere

The Gaia hypothesis states that the Earth's atmospheric composition is kept at a dynamically steady state by the presence of life. The atmospheric composition provides the conditions that contemporary life has adapted to. All the atmospheric gases other than noble gases present in the atmosphere are either made by organisms or processed by them.

The stability of Earth's atmosphere is not a consequence of chemical equilibrium. Oxygen is a highly reactive gas, and should eventually combine with gases and minerals of the Earth's atmosphere and crust. Oxygen only began to persist in the atmosphere in small quantities about 50 million years before the start of the Great Oxygenation Event. Since the start of the Cambrian period, atmospheric oxygen concentrations have fluctuated between 15% and 40% of atmospheric volume. Traces of methane (at an amount of 100,000 tonnes produced per year) should not exist, as methane is combustible in an oxygen atmosphere.

Dry air in the atmosphere of Earth contains roughly (by volume) 78.09% nitrogen, 20.95% oxygen, 0.93% argon, 0.039% carbon dioxide, and small amounts of other gases including methane. Lovelock originally speculated that concentrations of oxygen above about 25% would increase the frequency of wildfires and conflagration of forests. This mechanism, however, would not raise oxygen levels if they became too low. If plants can be shown to robustly over-produce O2, then perhaps only the high-oxygen forest-fire regulator is necessary. Recent work on the findings of fire-caused charcoal in Carboniferous and Cretaceous coal measures, in geologic periods when O2 did exceed 25%, has supported Lovelock's contention.

Processing of CO2

Gaia scientists see the participation of living organisms in the carbon cycle as one of the complex processes that maintain conditions suitable for life. The only significant natural source of atmospheric carbon dioxide (CO2) is volcanic activity, while the only significant removal is through the precipitation of carbonate rocks. Carbon precipitation, solution and fixation are influenced by the bacteria and plant roots in soils, where they improve gaseous circulation, or in coral reefs, where calcium carbonate is deposited as a solid on the sea floor. Calcium carbonate is used by living organisms to manufacture carbonaceous tests and shells. Once the organisms die, their shells sink. Some arrive at the bottom of shallow seas where the heat and pressure of burial, and/or the forces of plate tectonics, eventually convert them to deposits of chalk and limestone. Many of the sinking shells, however, redissolve into the ocean below the carbonate compensation depth.

One of these organisms is Emiliania huxleyi, an abundant coccolithophore alga which may have a role in the formation of clouds. An excess of CO2 is compensated by an increase in coccolithophorid life, increasing the amount of CO2 locked in the ocean floor. Coccolithophorids, if the CLAW hypothesis turns out to be supported (see "Regulation of global surface temperature" above), could help increase cloud cover and hence control the surface temperature, helping to cool the whole planet and favoring the precipitation necessary for terrestrial plants. Lately the atmospheric CO2 concentration has increased and there is some evidence that concentrations of ocean algal blooms are also increasing.

Lichen and other organisms accelerate the weathering of rocks at the surface, while the decomposition of rocks also happens faster in the soil, thanks to the activity of roots, fungi, bacteria and subterranean animals. The flow of carbon dioxide from the atmosphere to the soil is therefore regulated with the help of living organisms. When CO2 levels rise in the atmosphere, the temperature increases and plants grow. This growth brings higher consumption of CO2 by the plants, which process it into the soil, removing it from the atmosphere.

History

Precedents

The idea of the Earth as an integrated whole, a living being, has a long tradition. The mythical Gaia was the primal Greek goddess personifying the Earth, the Greek version of "Mother Nature" (from Ge = Earth, and Aia = PIE grandmother), or the Earth Mother. James Lovelock gave this name to his hypothesis after a suggestion from the novelist William Golding, who was living in the same village as Lovelock at the time (Bowerchalke, Wiltshire, UK). Golding's advice was based on Gea, an alternative spelling for the name of the Greek goddess, which is used as prefix in geology, geophysics and geochemistry. Golding later made reference to Gaia in his Nobel Prize acceptance lecture.

In the eighteenth century, as geology consolidated as a modern science, James Hutton maintained that geological and biological processes are interlinked. Later, the naturalist and explorer Alexander von Humboldt recognized the coevolution of living organisms, climate, and Earth's crust. In the twentieth century, Vladimir Vernadsky formulated a theory of Earth's development that is now one of the foundations of ecology. Vernadsky was a Ukrainian geochemist and was one of the first scientists to recognize that the oxygen, nitrogen, and carbon dioxide in the Earth's atmosphere result from biological processes. During the 1920s he published works arguing that living organisms could reshape the planet as surely as any physical force. Vernadsky was a pioneer of the scientific bases for the environmental sciences. His visionary pronouncements were not widely accepted in the West, and some decades later the Gaia hypothesis received the same type of initial resistance from the scientific community.

Also in the early 20th century, Aldo Leopold, a pioneer in the development of modern environmental ethics and in the movement for wilderness conservation, suggested a living Earth in his biocentric or holistic ethics regarding land.

It is at least not impossible to regard the earth's parts—soil, mountains, rivers, atmosphere etc,—as organs or parts of organs of a coordinated whole, each part with its definite function. And if we could see this whole, as a whole, through a great period of time, we might perceive not only organs with coordinated functions, but possibly also that process of consumption as replacement which in biology we call metabolism, or growth. In such case we would have all the visible attributes of a living thing, which we do not realize to be such because it is too big, and its life processes too slow.

— Stephan Harding, Animate Earth

Another influence for the Gaia hypothesis and the environmental movement in general came as a side effect of the Space Race between the Soviet Union and the United States of America. During the 1960s, the first humans in space could see how the Earth looked as a whole. The photograph Earthrise taken by astronaut William Anders in 1968 during the Apollo 8 mission became, through the Overview Effect, an early symbol for the global ecology movement.

Formulation of the hypothesis

Lovelock started defining the idea of a self-regulating Earth controlled by the community of living organisms in September 1965, while working at the Jet Propulsion Laboratory in California on methods of detecting life on Mars. The first paper to mention it was Planetary Atmospheres: Compositional and other Changes Associated with the Presence of Life, co-authored with C.E. Giffin. A main concept was that life could be detected on a planetary scale by the chemical composition of the atmosphere. According to the data gathered by the Pic du Midi observatory, planets like Mars or Venus had atmospheres in chemical equilibrium. This difference from the Earth's atmosphere was considered to be proof that there was no life on these planets.

Lovelock formulated the Gaia hypothesis in journal articles in 1972 and 1974, followed by a popularizing 1979 book, Gaia: A new look at life on Earth. An article in the New Scientist of February 6, 1975, and a popular book-length version of the hypothesis, published in 1979 as The Quest for Gaia, began to attract scientific and critical attention.

Lovelock called it first the Earth feedback hypothesis, and it was a way to explain the fact that combinations of chemicals including oxygen and methane persist in stable concentrations in the atmosphere of the Earth. Lovelock suggested detecting such combinations in other planets' atmospheres as a relatively reliable and cheap way to detect life.

Later, other relationships such as sea creatures producing sulfur and iodine in approximately the same quantities as required by land creatures emerged and helped bolster the hypothesis.

In 1971 microbiologist Dr. Lynn Margulis joined Lovelock in the effort of fleshing out the initial hypothesis into scientifically proven concepts, contributing her knowledge about how microbes affect the atmosphere and the different layers in the surface of the planet. The American biologist had also awakened criticism from the scientific community with her advocacy of the theory on the origin of eukaryotic organelles and her contributions to the endosymbiotic theory, nowadays accepted. Margulis dedicated the last of eight chapters in her book, The Symbiotic Planet, to Gaia. However, she objected to the widespread personification of Gaia and stressed that Gaia is "not an organism", but "an emergent property of interaction among organisms". She defined Gaia as "the series of interacting ecosystems that compose a single huge ecosystem at the Earth's surface. Period". The book's most memorable "slogan" was actually quipped by a student of Margulis'.

James Lovelock called his first proposal the Gaia hypothesis but has also used the term Gaia theory. Lovelock states that the initial formulation was based on observation, but still lacked a scientific explanation. The Gaia hypothesis has since been supported by a number of scientific experiments and provided a number of useful predictions.

First Gaia conference

In 1985, the first public symposium on the Gaia hypothesis, Is The Earth a Living Organism?, was held at the University of Massachusetts Amherst, August 1–6. The principal sponsor was the National Audubon Society. Speakers included James Lovelock, Lynn Margulis, George Wald, Mary Catherine Bateson, Lewis Thomas, Thomas Berry, David Abram, John Todd, Donald Michael, Christopher Bird, Michael Cohen, and William Fields. Some 500 people attended.

Second Gaia conference

In 1988, climatologist Stephen Schneider organised a conference of the American Geophysical Union. The first Chapman Conference on Gaia was held in San Diego, California, on March 7, 1988.

During the "philosophical foundations" session of the conference, David Abram spoke on the influence of metaphor in science, and of the Gaia hypothesis as offering a new and potentially game-changing metaphorics, while James Kirchner criticised the Gaia hypothesis for its imprecision. Kirchner claimed that Lovelock and Margulis had not presented one Gaia hypothesis, but four:

- CoEvolutionary Gaia: that life and the environment had evolved in a coupled way. Kirchner claimed that this was already accepted scientifically and was not new.

- Homeostatic Gaia: that life maintained the stability of the natural environment, and that this stability enabled life to continue to exist.

- Geophysical Gaia: that the Gaia hypothesis generated interest in geophysical cycles and therefore led to interesting new research in terrestrial geophysical dynamics.

- Optimising Gaia: that Gaia shaped the planet in a way that made it an optimal environment for life as a whole. Kirchner claimed that this was not testable and therefore was not scientific.

Of Homeostatic Gaia, Kirchner recognised two alternatives. "Weak Gaia" asserted that life tends to make the environment stable for the flourishing of all life. "Strong Gaia", according to Kirchner, asserted that life tends to make the environment stable, to enable the flourishing of all life. Strong Gaia, Kirchner claimed, was untestable and therefore not scientific.

Lovelock and other Gaia-supporting scientists, however, did attempt to disprove the claim that the hypothesis is not scientific because it is impossible to test it by controlled experiment. For example, against the charge that Gaia was teleological, Lovelock and Andrew Watson offered the Daisyworld Model (and its modifications, above) as evidence against most of these criticisms. Lovelock said that the Daisyworld model "demonstrates that self-regulation of the global environment can emerge from competition amongst types of life altering their local environment in different ways".

Lovelock was careful to present a version of the Gaia hypothesis that made no claim that Gaia intentionally or consciously maintained the complex balance in her environment that life needed to survive. It would appear that the claim that Gaia acts "intentionally" was a statement in his popular initial book and was not meant to be taken literally. This new statement of the Gaia hypothesis was more acceptable to the scientific community. Most accusations of teleologism ceased following this conference.

Third Gaia conference

By the time of the 2nd Chapman Conference on the Gaia Hypothesis, held at Valencia, Spain, on 23 June 2000, the situation had changed significantly. Rather than a discussion of the Gaian teleological views, or "types" of Gaia hypotheses, the focus was upon the specific mechanisms by which basic short term homeostasis was maintained within a framework of significant evolutionary long term structural change.

The major questions were:

- "How has the global biogeochemical/climate system called Gaia changed in time? What is its history? Can Gaia maintain stability of the system at one time scale but still undergo vectorial change at longer time scales? How can the geologic record be used to examine these questions?"

- "What is the structure of Gaia? Are the feedbacks sufficiently strong to influence the evolution of climate? Are there parts of the system determined pragmatically by whatever disciplinary study is being undertaken at any given time or are there a set of parts that should be taken as most true for understanding Gaia as containing evolving organisms over time? What are the feedbacks among these different parts of the Gaian system, and what does the near closure of matter mean for the structure of Gaia as a global ecosystem and for the productivity of life?"

- "How do models of Gaian processes and phenomena relate to reality and how do they help address and understand Gaia? How do results from Daisyworld transfer to the real world? What are the main candidates for "daisies"? Does it matter for Gaia theory whether we find daisies or not? How should we be searching for daisies, and should we intensify the search? How can Gaian mechanisms be collaborated with using process models or global models of the climate system that include the biota and allow for chemical cycling?"

In 1997, Tyler Volk argued that a Gaian system is almost inevitably produced as a result of an evolution towards far-from-equilibrium homeostatic states that maximise entropy production, and Axel Kleidon (2004) agreed stating: "...homeostatic behavior can emerge from a state of MEP associated with the planetary albedo"; "...the resulting behavior of a symbiotic Earth at a state of MEP may well lead to near-homeostatic behavior of the Earth system on long time scales, as stated by the Gaia hypothesis". M. Staley (2002) has similarly proposed "...an alternative form of Gaia theory based on more traditional Darwinian principles... In [this] new approach, environmental regulation is a consequence of population dynamics. The role of selection is to favor organisms that are best adapted to prevailing environmental conditions. However, the environment is not a static backdrop for evolution, but is heavily influenced by the presence of living organisms. The resulting co-evolving dynamical process eventually leads to the convergence of equilibrium and optimal conditions".

Fourth Gaia conference

A fourth international conference on the Gaia hypothesis, sponsored by the Northern Virginia Regional Park Authority and others, was held in October 2006 at the Arlington, Virginia campus of George Mason University.

Martin Ogle, Chief Naturalist for NVRPA and a long-time Gaia hypothesis proponent, organized the event. Lynn Margulis, Distinguished University Professor in the Department of Geosciences, University of Massachusetts-Amherst, and long-time advocate of the Gaia hypothesis, was a keynote speaker. Other speakers included Tyler Volk, co-director of the Program in Earth and Environmental Science at New York University; Dr. Donald Aitken, Principal of Donald Aitken Associates; Dr. Thomas Lovejoy, President of the Heinz Center for Science, Economics and the Environment; Robert Corell, Senior Fellow, Atmospheric Policy Program, American Meteorological Society; and the noted environmental ethicist J. Baird Callicott.

Criticism

After initially receiving little attention from scientists (from 1969 until 1977), the initial Gaia hypothesis was for a period criticized by a number of scientists, including Ford Doolittle, Richard Dawkins and Stephen Jay Gould. Lovelock has said that because his hypothesis is named after a Greek goddess, and championed by many non-scientists, the Gaia hypothesis was interpreted as a neo-Pagan religion. Many scientists in particular also criticized the approach taken in his popular book Gaia, a New Look at Life on Earth for being teleological, that is, for implying that things are purposeful and aimed towards a goal. Responding to this critique in 1990, Lovelock stated, "Nowhere in our writings do we express the idea that planetary self-regulation is purposeful, or involves foresight or planning by the biota".

Stephen Jay Gould criticized Gaia as being "a metaphor, not a mechanism." He wanted to know the actual mechanisms by which self-regulating homeostasis was achieved. In his defense of Gaia, David Abram argues that Gould overlooked the fact that "mechanism", itself, is a metaphor—albeit an exceedingly common and often unrecognized metaphor—one which leads us to consider natural and living systems as though they were machines organized and built from outside (rather than as autopoietic or self-organizing phenomena). Mechanical metaphors, according to Abram, lead us to overlook the active or agentic quality of living entities, while the organismic metaphors of the Gaia hypothesis accentuate the active agency of both the biota and the biosphere as a whole. With regard to causality in Gaia, Lovelock argues that no single mechanism is responsible, that the connections between the various known mechanisms may never be known, that this is accepted in other fields of biology and ecology as a matter of course, and that specific hostility is reserved for his own hypothesis for other reasons.

Aside from clarifying his language and understanding of what is meant by a life form, Lovelock himself ascribes most of the criticism to a lack of understanding of non-linear mathematics by his critics, and a linearizing form of greedy reductionism in which all events have to be immediately ascribed to specific causes before the fact. He also states that most of his critics are biologists but that his hypothesis includes experiments in fields outside biology, and that some self-regulating phenomena may not be mathematically explainable.

Natural selection and evolution

Lovelock has suggested that global biological feedback mechanisms could evolve by natural selection, stating that organisms that improve their environment for their survival do better than those that damage their environment. However, in the early 1980s, W. Ford Doolittle and Richard Dawkins separately argued against this aspect of Gaia. Doolittle argued that nothing in the genome of individual organisms could provide the feedback mechanisms proposed by Lovelock, and therefore the Gaia hypothesis proposed no plausible mechanism and was unscientific. Dawkins meanwhile stated that for organisms to act in concert would require foresight and planning, which is contrary to the current scientific understanding of evolution. Like Doolittle, he also rejected the possibility that feedback loops could stabilize the system.

Margulis argued in 1999 that "Darwin's grand vision was not wrong, only incomplete. In accentuating the direct competition between individuals for resources as the primary selection mechanism, Darwin (and especially his followers) created the impression that the environment was simply a static arena". She wrote that the composition of the Earth's atmosphere, hydrosphere, and lithosphere are regulated around "set points" as in homeostasis, but those set points change with time.

Evolutionary biologist W. D. Hamilton called the concept of Gaia Copernican, adding that it would take another Newton to explain how Gaian self-regulation takes place through Darwinian natural selection. More recently, Ford Doolittle, building on his and Inkpen's ITSNTS (It's The Song Not The Singer) proposal, argued that differential persistence can play a similar role to differential reproduction in evolution by natural selection, thereby providing a possible reconciliation between the theory of natural selection and the Gaia hypothesis.

Criticism in the 21st century

The Gaia hypothesis continues to be received with broad skepticism by the scientific community. For instance, arguments both for and against it were laid out in the journal Climatic Change in 2002 and 2003. A significant argument raised against it is that there are many examples where life has had a detrimental or destabilising effect on the environment rather than acting to regulate it. Several recent books have criticised the Gaia hypothesis, expressing views ranging from "... the Gaia hypothesis lacks unambiguous observational support and has significant theoretical difficulties" to "Suspended uncomfortably between tainted metaphor, fact, and false science, I prefer to leave Gaia firmly in the background" to "The Gaia hypothesis is supported neither by evolutionary theory nor by the empirical evidence of the geological record". The CLAW hypothesis, initially suggested as a potential example of direct Gaian feedback, has subsequently been found to be less credible as understanding of cloud condensation nuclei has improved. In 2009 the Medea hypothesis was proposed: that life has highly detrimental (biocidal) impacts on planetary conditions, in direct opposition to the Gaia hypothesis.

In a 2013 book-length evaluation of the Gaia hypothesis considering modern evidence from across the various relevant disciplines, Toby Tyrrell concluded that: "I believe Gaia is a dead end. Its study has, however, generated many new and thought provoking questions. While rejecting Gaia, we can at the same time appreciate Lovelock's originality and breadth of vision, and recognize that his audacious concept has helped to stimulate many new ideas about the Earth, and to champion a holistic approach to studying it". Elsewhere he presents his conclusion "The Gaia hypothesis is not an accurate picture of how our world works". This statement needs to be understood as referring to the "strong" and "moderate" forms of Gaia: that the biota obeys a principle that works to make Earth optimal (strength 5) or favourable for life (strength 4), or that it works as a homeostatic mechanism (strength 3). The last of these is the "weakest" form of Gaia that Lovelock has advocated, and Tyrrell rejects it. However, he finds that the two weaker forms of Gaia, Coevolutionary Gaia and Influential Gaia, which assert that there are close links between the evolution of life and the environment and that biology affects the physical and chemical environment, are both credible, but that it is not useful to use the term "Gaia" in this sense and that those two forms were already accepted and explained by the processes of natural selection and adaptation.

Anthropic principle

As emphasized by multiple critics, no plausible mechanism exists that would drive the evolution of negative feedback loops leading to planetary self-regulation of the climate. Indeed, multiple incidents in Earth's history (see the Medea hypothesis) have shown that the Earth and the biosphere can enter self-destructive positive feedback loops that lead to mass extinction events.

For example, the Snowball Earth glaciations appear to have resulted from the development of photosynthesis during a period when the Sun was cooler than it is now. The removal of carbon dioxide from the atmosphere, along with the oxidation of atmospheric methane by the released oxygen, dramatically diminished the greenhouse effect. The resulting expansion of the polar ice sheets decreased the overall fraction of sunlight absorbed by the Earth, producing a runaway ice–albedo positive feedback loop that ultimately led to glaciation over nearly the entire surface of the Earth. These biological mechanisms will have had some effect, but any understanding of glacial-interglacial cycles also requires study of the variations in the Earth’s orbit around the Sun, the tilt of its axis of rotation, and the ‘wobble’ in that rotational movement, which cause the periodicity in Northern Hemisphere insolation and thereby set the Earth’s thermal regime. Mathematics, Earth science, geology, and geography all provide insight into the causes of ice ages. The Earth's escape from the frozen condition appears to have been due directly to the release of carbon dioxide and methane by volcanoes, although release of methane by microbes trapped underneath the ice could also have played a part. Volcanic processes at this scale should, however, be understood as relating to the pressure exerted on the Earth’s crust, which is released during periods of ice sheet retreat. Lesser contributions to warming would have come from the fact that coverage of the Earth by ice sheets largely inhibited photosynthesis and lessened the removal of carbon dioxide from the atmosphere by the weathering of siliceous rocks. However, in the absence of tectonic activity, the snowball condition could have persisted indefinitely.
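The runaway character of the ice–albedo feedback can be illustrated with a zero-dimensional energy balance model. The following Python sketch is not drawn from the sources discussed here: the function names (albedo, net_flux, settle) and every parameter value (the albedo of ice-covered and ice-free states, the temperatures between which the albedo changes, the effective emissivity standing in for the greenhouse effect, and the heat capacity) are assumptions chosen only to make the feedback visible.

```python
# Toy zero-dimensional energy balance model with a temperature-dependent albedo.
# All constants below except the physical ones are illustrative assumptions.

SOLAR_CONSTANT = 1361.0          # W/m^2, approximate present-day value
STEFAN_BOLTZMANN = 5.670374e-8   # W/m^2/K^4
EMISSIVITY = 0.61                # assumed effective emissivity (crude greenhouse effect)
ALBEDO_ICE = 0.6                 # assumed albedo of a largely ice-covered planet
ALBEDO_WARM = 0.3                # assumed albedo of a largely ice-free planet
T_ICE = 260.0                    # K, at or below this the planet is treated as ice-covered
T_WARM = 285.0                   # K, at or above this the planet is treated as ice-free
HEAT_CAPACITY = 4.0e8            # J/m^2/K, assumed (roughly a 100 m ocean mixed layer)
SECONDS_PER_YEAR = 3.15e7


def albedo(temp_k):
    """Planetary albedo: high when cold, low when warm, linear in between."""
    if temp_k <= T_ICE:
        return ALBEDO_ICE
    if temp_k >= T_WARM:
        return ALBEDO_WARM
    fraction = (temp_k - T_ICE) / (T_WARM - T_ICE)
    return ALBEDO_ICE + fraction * (ALBEDO_WARM - ALBEDO_ICE)


def net_flux(temp_k):
    """Absorbed sunlight minus emitted thermal radiation, in W/m^2."""
    absorbed = SOLAR_CONSTANT / 4.0 * (1.0 - albedo(temp_k))
    emitted = EMISSIVITY * STEFAN_BOLTZMANN * temp_k ** 4
    return absorbed - emitted


def settle(initial_temp_k, years=2000):
    """Step the temperature forward one year at a time (explicit Euler)."""
    temp_k = initial_temp_k
    for _ in range(years):
        temp_k += SECONDS_PER_YEAR / HEAT_CAPACITY * net_flux(temp_k)
    return temp_k


if __name__ == "__main__":
    for start in (270.0, 280.0):
        print(f"start {start:.0f} K -> settles near {settle(start):.1f} K")
```

With these assumed values, two starting temperatures only 10 K apart settle into very different states: the cooler start slides to a frozen equilibrium of roughly 250 K, while the warmer start settles at roughly 288 K. Between them, a small cooling raises the albedo enough that the resulting loss of absorbed sunlight outweighs the reduction in emitted radiation, which is the positive feedback in question. The model omits volcanic outgassing, weathering, and orbital forcing, so it says nothing about how a planet escapes the frozen state.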

Geologic events with amplifying positive feedbacks (along with some possible biologic participation) led to the greatest mass extinction event on record, the Permian–Triassic extinction event about 250 million years ago. The precipitating event appears to have been volcanic eruptions in the Siberian Traps, a hilly region of flood basalts in Siberia. These eruptions released high levels of carbon dioxide and sulfur dioxide, which elevated world temperatures and acidified the oceans. Estimates of the rise in carbon dioxide levels range widely, from as little as a two-fold increase to as much as a twenty-fold increase. Amplifying feedbacks increased the warming to considerably more than would be expected from the greenhouse effect of carbon dioxide alone: these include the ice–albedo feedback, the increased evaporation of water vapor (another greenhouse gas) into the atmosphere, the release of methane from the warming of methane hydrate deposits buried under the permafrost and beneath continental shelf sediments, and increased wildfires. The rising carbon dioxide acidified the oceans, leading to widespread die-off of creatures with calcium carbonate shells, killing mollusks and crustaceans like crabs and lobsters and destroying coral reefs. Their demise led to disruption of the entire oceanic food chain. It has been argued that rising temperatures may have led to disruption of the chemocline separating sulfidic deep waters from oxygenated surface waters, which led to massive release of toxic hydrogen sulfide (produced by anaerobic bacteria) to the surface ocean and even into the atmosphere, contributing to the (primarily methane-driven) collapse of the ozone layer, and helping to explain the die-off of terrestrial animal and plant life.

According to the weak anthropic principle, our observation of such stabilizing feedback loops is an observer selection effect. In all the universe, it is only planets with Gaian properties that could have evolved intelligent, self-aware organisms capable of asking such questions. One can imagine innumerable worlds where life evolved with different biochemistries, or where the worlds had different geophysical properties, such that the worlds are presently dead due to a runaway greenhouse effect, or are in perpetual Snowball, or where, due to one factor or another, life has been inhibited from evolving beyond the microbial level.

If no means exists for natural selection to operate at the biosphere level, then it would appear that the anthropic principle provides the only explanation for the survival of Earth's biosphere over geologic time. But in recent years, this strictly reductionistic view has been modified by recognition that natural selection can operate at multiple levels of the biological hierarchy, not just at the level of individual organisms. Traditional Darwinian natural selection requires reproducing entities that display inheritable properties or abilities that result in their having more offspring than their competitors. Successful biospheres clearly cannot reproduce to spawn copies of themselves, and so traditional Darwinian natural selection cannot operate. A mechanism for biosphere-level selection was proposed by Ford Doolittle. Although he had been a strong and early critic of the Gaia hypothesis, he had by 2015 started to think of ways whereby Gaia might be "Darwinised", seeking means whereby the planet could have evolved biosphere-level adaptations. Doolittle has suggested that differential persistence (mere survival) could be considered a legitimate mechanism for natural selection. As the Earth passes through various challenges, the phenomenon of differential persistence enables selected entities to achieve fixation by surviving the death of their competitors. Although Earth's biosphere is not competing against other biospheres on other planets, there are many competitors for survival on this planet. Collectively, Gaia constitutes the single clade of all living survivors descended from life’s last universal common ancestor (LUCA). Various other proposals for biosphere-level selection include sequential selection, entropic hierarchy, and considering Gaia as a holobiont-like system. Ultimately, differential persistence and sequential selection are variants of the anthropic principle, while entropic hierarchy and holobiont arguments may allow the emergence of Gaia to be understood without anthropic arguments.
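The logic of differential persistence, selection through survival alone with no reproduction, can be made concrete with a toy simulation. The sketch below is only an illustration and is not Doolittle's model: the function names, the number of lineages and challenges, and the idea of giving each lineage a fixed per-challenge survival probability are all assumptions introduced here.

```python
import random


def surviving_traits(n_lineages=10_000, n_challenges=20, seed=0):
    """Return (initial, survivors) lists of per-challenge survival probabilities.

    Each hypothetical lineage has a fixed trait p, its chance of surviving any
    one challenge. Lineages never reproduce or mutate; they only persist or
    disappear.
    """
    rng = random.Random(seed)
    initial = [rng.uniform(0.5, 1.0) for _ in range(n_lineages)]
    survivors = list(initial)
    for _ in range(n_challenges):
        # A lineage with trait p survives this challenge with probability p.
        survivors = [p for p in survivors if rng.random() < p]
    return initial, survivors


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


if __name__ == "__main__":
    initial, survivors = surviving_traits()
    print(f"lineages: {len(initial)} -> {len(survivors)}")
    print(f"mean persistence trait: {mean(initial):.2f} -> {mean(survivors):.2f}")
```

In this sketch the survivors are a subset of the original lineages, yet their average persistence trait is markedly higher than that of the starting population. The shift arises purely from who remains, which is also why such an outcome resembles an observer selection effect: an observer who samples only the survivors will see mostly robust lineages.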