| Doomsday Clock | |
|---|---|
| The Doomsday Clock pictured at its setting of "85 seconds to midnight", last changed on January 27, 2026 | |
| Frequency | Yearly |
| Inaugurated | June 1947 |
| Most recent | January 27, 2026 |
| Organized by | Bulletin of the Atomic Scientists |
| Website | thebulletin |

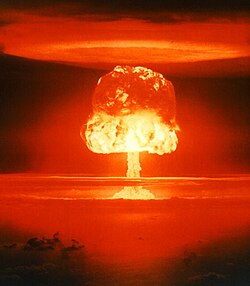

The Doomsday Clock is a symbol that represents the estimated likelihood of a human-made global catastrophe, in the opinion of the nonprofit organization Bulletin of the Atomic Scientists.

Maintained since 1947, the Clock is a proxy mechanism for threats to humanity from unchecked scientific and technological advances: a hypothetical global catastrophe is represented by midnight on the Clock, with the Bulletin's opinion on how close the world is to "zero" represented by a certain number of minutes or seconds to midnight. This is assessed in January of each year. The main factors influencing the Clock are nuclear warfare, climate change, and artificial intelligence. The Bulletin's Science and Security Board monitors new developments in the life sciences and technology that could inflict irrevocable harm on humanity.

The Clock's original setting in 1947 was seven minutes to midnight. It has since been set backward eight times and forward 19 times. The farthest time from midnight was 17 minutes in 1991, and the closest is 85 seconds in 2026.

The Clock was moved to 150 seconds (2 minutes, 30 seconds) in 2017, then forward to two minutes to midnight in 2018, and left unchanged in 2019. It was moved forward to 100 seconds (1 minute, 40 seconds) in 2020, 90 seconds (1 minute, 30 seconds) in 2023, 89 seconds (1 minute, 29 seconds) in 2025, and 85 seconds (1 minute, 25 seconds) in 2026.
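The minute-and-second equivalents given above follow from simple arithmetic on the number of seconds to midnight. The following is a minimal sketch in Python, assuming midnight is modelled as 00:00:00 on an arbitrary date; the helper name `clock_reading` is hypothetical and is not something published by the Bulletin.

```python
from datetime import datetime, timedelta


def clock_reading(seconds_to_midnight: int) -> tuple[str, str]:
    """Return (minutes-and-seconds label, 24-hour time) for a Clock setting."""
    # Split the setting into whole minutes and leftover seconds.
    minutes, seconds = divmod(seconds_to_midnight, 60)
    label = f"{minutes} min {seconds} s"
    # Assumption: midnight is 00:00:00 on an arbitrary date; only the
    # time of day is used in the result.
    midnight = datetime(2000, 1, 2)
    time_24h = (midnight - timedelta(seconds=seconds_to_midnight)).strftime("%H:%M:%S")
    return label, time_24h


# Settings in seconds, taken from the paragraph above.
for year, setting in [(2017, 150), (2018, 120), (2020, 100),
                      (2023, 90), (2025, 89), (2026, 85)]:
    print(year, *clock_reading(setting))
```

For example, `clock_reading(150)` gives the 2017 setting as 2 minutes 30 seconds, or 23:57:30 on a 24-hour clock.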

History

The Doomsday Clock's origin can be traced to the international group of researchers called the Chicago Atomic Scientists, who had participated in the Manhattan Project. After the atomic bombings of Hiroshima and Nagasaki, they began publishing a mimeographed newsletter and then the magazine, Bulletin of the Atomic Scientists, which, since its inception, has depicted the Clock on every cover. The Clock was first represented in 1947, when the Bulletin co-founder Hyman Goldsmith asked artist Martyl Langsdorf (wife of Manhattan Project research associate and Szilárd petition signatory Alexander Langsdorf Jr.) to design a cover for the magazine's June 1947 issue. As Eugene Rabinowitch, another co-founder of the Bulletin, explained later:

The Bulletin's Clock is not a gauge to register the ups and downs of the international power struggle; it is intended to reflect basic changes in the level of continuous danger in which mankind lives in the nuclear age...

Langsdorf chose a clock to reflect the urgency of the problem: like a countdown, the Clock suggests that destruction will naturally occur unless someone takes action to stop it.

In January 2007, designer Michael Bierut, who was on the Bulletin's Governing Board, redesigned the Doomsday Clock to give it a more modern feel. In 2009, the Bulletin ceased its print edition and became one of the first print publications in the U.S. to become entirely digital; the Clock is now found as part of the logo on the Bulletin's website. Information about the Doomsday Clock Symposium, a timeline of the Clock's settings, and multimedia shows about the Clock's history and culture can also be found on the Bulletin's website.

The 5th Doomsday Clock Symposium was held on November 14, 2013, in Washington, D.C.; it was a day-long event that was open to the public and featured panelists discussing various issues on the topic "Communicating Catastrophe". There was also an evening event at the Hirshhorn Museum and Sculpture Garden in conjunction with the Hirshhorn's then-current exhibit, "Damage Control: Art and Destruction Since 1950". The panel discussions, held at the American Association for the Advancement of Science, were streamed live from the Bulletin's website and can still be viewed there. Reflecting international events dangerous to humankind, the Clock has been adjusted 27 times since its inception in 1947, when it was set to "seven minutes to midnight".

The Doomsday Clock has become a universally recognized metaphor, according to The Two-Way, an NPR blog. According to the Bulletin, the Clock attracts more daily visitors to the Bulletin's site than any other feature.

Basis for settings

"Midnight" has a deeper meaning besides the constant threat of war. There are various elements taken into consideration when the scientists from the Bulletin decide what Midnight and "global catastrophe" really mean in a particular year. They might include "politics, energy, weapons, diplomacy, and climate science"; potential sources of threat include nuclear threats, climate change, bioterrorism, and artificial intelligence. Members of the board judge Midnight by discussing how close they think humanity is to the end of civilization. In 1947, at the beginning of the Cold War, the Clock was started at seven minutes to midnight.

Fluctuations and threats

Before January 2020, the Doomsday Clock's two closest settings to midnight, tied at two minutes, were in 1953 (after the U.S. and the Soviet Union began testing hydrogen bombs) and in 2018, following the failure of world leaders to address tensions relating to nuclear weapons and climate change issues. In other years, the Clock's time has fluctuated from 17 minutes in 1991 to 2 minutes 30 seconds in 2017. Discussing the change in 2017, Lawrence Krauss, one of the scientists from the Bulletin, warned that political leaders must make decisions based on facts, and those facts "must be taken into account if the future of humanity is to be preserved". In an announcement about the status of the Clock, the Bulletin went as far as to call for action from "wise" public officials and "wise" citizens to make an attempt to steer human life away from catastrophe while humans still can.

On January 24, 2018, scientists moved the Clock to two minutes to midnight, citing threats that were greatest in the nuclear realm. The scientists said, of recent moves by North Korea under Kim Jong-un and the administration of Donald Trump in the U.S.: "Hyperbolic rhetoric and provocative actions by both sides have increased the possibility of nuclear war by accident or miscalculation".

The clock was left unchanged in 2019 due to the twin threats of nuclear weapons and climate change, and the problem of those threats being "exacerbated this past year by the increased use of information warfare to undermine democracy around the world, amplifying risk from these and other threats and putting the future of civilization in extraordinary danger".

On January 23, 2020, the Clock was moved to 100 seconds (1 minute, 40 seconds) before midnight. The Bulletin's executive chairman, Jerry Brown, said "the dangerous rivalry and hostility among the superpowers increases the likelihood of nuclear blunder... Climate change just compounds the crisis". The "100 seconds to midnight" setting remained unchanged in 2021 and 2022.

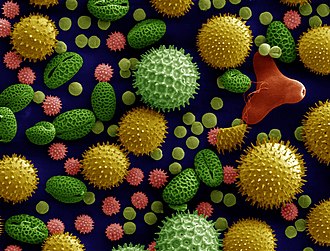

On January 24, 2023, the Clock was moved to 90 seconds (1 minute, 30 seconds) before midnight, which was largely attributed to the risk of nuclear escalation that arose from the Russian invasion of Ukraine. Other reasons cited included climate change, biological threats such as COVID-19, and risks associated with disinformation and disruptive technologies.

On January 28, 2025, the Clock was moved to 89 seconds (1 minute, 29 seconds) before midnight. In addition to the previous year's concerns, the increased use of artificial intelligence both on the battlefield and in social media was noted as a new factor.

On January 27, 2026, the Clock was moved to 85 seconds (1 minute, 25 seconds) before midnight, the closest it has been set to midnight since its inception in 1947. The Bulletin attributed the move to a "failure of leadership". Alexandra Bell, president and CEO of the Bulletin, said, "It is a hard truth, but this is our reality", as no significant action had been taken to push the Clock back.

Criticism

In 2016, Anders Sandberg of the Future of Humanity Institute stated that the "grab bag of threats" currently mixed together by the Clock can induce paralysis. People may be more likely to succeed at smaller, incremental challenges; for example, taking steps to prevent the accidental detonation of nuclear weapons was a small but significant step towards avoiding nuclear war. Alex Barasch in Slate argued that "putting humanity on a permanent, blanket high-alert isn't helpful when it comes to policy or science" and criticized the Bulletin for neither explaining nor attempting to quantify their methodology.

Cognitive psychologist Steven Pinker harshly criticized the Doomsday Clock as a political stunt, pointing to the words of its founder that its purpose was "to preserve civilization by scaring men into rationality". He stated that it is inconsistent and not based on any objective indicators of security, using as an example its being farther from midnight in 1962 during the Cuban Missile Crisis than in the "far calmer 2007". He argued it was another example of humanity's tendency toward historical pessimism, and compared it to other predictions of self-destruction that went unfulfilled.

Writing for the New Statesman, British journalist James Ball questioned the Clock's purpose and noted the Bulletin's lack of objective methodology for setting the Clock. Ball then observed that the organization is no different from other doomsday cults preaching the end of the world, except that the Bulletin is secular instead of religious.

Conservative media outlets have often criticized the Bulletin and the Doomsday Clock. Keith Payne wrote in 2010 in the National Review that the Clock overestimated the effects of "developments in the areas of nuclear testing and formal arms control". In 2018, Tristin Hopper in the National Post acknowledged that "there are plenty of things to worry about regarding climate change", but stated that climate change is not in the same league as total nuclear destruction. In addition, some critics accuse the Bulletin of pushing a political agenda.

Timeline

| Year | Minutes to midnight | Time (24-h) | Change (minutes) | Reason | Clock |
|---|---|---|---|---|---|
| 1947 | 7 | 23:53 | 0 | The initial setting of the Doomsday Clock. | |
| 1949 | 3 | 23:57 | −4 | The Soviet Union tests its first atomic bomb, the RDS-1, starting the nuclear arms race. | |
| 1953 | 2 | 23:58 | −1 | The United States tests its first thermonuclear device in November 1952 as part of Operation Ivy, before the Soviet Union follows suit with the Joe 4 test in August. This remained the clock's closest approach to midnight (tied in 2018) until 2020. | |
| 1960 | 7 | 23:53 | +5 | In response to a perception of increased scientific cooperation and public understanding of the dangers of nuclear weapons (as well as political actions taken to avoid "massive retaliation"), the United States and Soviet Union cooperate and avoid direct confrontation in regional conflicts such as the 1956 Suez Crisis, the 1958 Second Taiwan Strait Crisis, and the 1958 Lebanon crisis. Scientists from various countries help establish the International Geophysical Year, a series of coordinated, worldwide scientific observations between nations allied with both the United States and the Soviet Union, and the Pugwash Conferences on Science and World Affairs, which allow Soviet and American scientists to interact. | |
| 1963 | 12 | 23:48 | +5 | The United States and the Soviet Union sign the Partial Test Ban Treaty, limiting atmospheric nuclear testing. | |
| 1968 | 7 | 23:53 | −5 | The involvement of the United States in the Vietnam War intensifies, the Indo-Pakistani War of 1965 takes place, and the Six-Day War occurs in 1967. France and China, two nations which have not signed the Partial Test Ban Treaty, acquire and test nuclear weapons (the 1960 Gerboise Bleue and the 1964 596, respectively) to assert themselves as global players in the nuclear arms race. | |
| 1969 | 10 | 23:50 | +3 | About 100 nations sign the Nuclear Non-Proliferation Treaty, and the United States also ratifies it. | |
| 1972 | 12 | 23:48 | +2 | The United States and the Soviet Union sign the first Strategic Arms Limitation Treaty (SALT I) and the Anti-Ballistic Missile (ABM) Treaty. | |
| 1974 | 9 | 23:51 | −3 | India tests a nuclear device (Smiling Buddha), and SALT II talks stall. Both the United States and the Soviet Union modernize multiple independently targetable reentry vehicles (MIRVs). | |
| 1980 | 7 | 23:53 | −2 | Unforeseeable end to deadlock in American–Soviet talks as the Soviet–Afghan War begins. As a result of the war, the U.S. Senate refuses to ratify the SALT II agreement. | |
| 1981 | 4 | 23:56 | −3 | The Soviet war in Afghanistan toughens the U.S.' nuclear posture. U.S. President Jimmy Carter withdraws the United States from the 1980 Summer Olympic Games in Moscow. The Carter administration considers ways in which the United States could win a nuclear war. Ronald Reagan becomes President of the United States, scraps further arms reduction talks with the Soviet Union, and argues that the only way to end the Cold War is to win it. Tensions between the United States and the Soviet Union contribute to the danger of nuclear annihilation as they each deploy intermediate-range missiles in Europe. The adjustment also accounts for the Iran hostage crisis, the Iran–Iraq War, China's atmospheric nuclear warhead test, the declaration of martial law in Poland, apartheid in South Africa, and human rights abuses across the world.[35][36] | |
| 1984 | 3 | 23:57 | −1 | Further escalation of the tensions between the United States and the Soviet Union, with the ongoing Soviet–Afghan War intensifying the Cold War. U.S. Pershing II medium-range ballistic missile and cruise missiles are deployed in Western Europe.[35] Ronald Reagan pushes to win the Cold War by intensifying the arms race between the superpowers. The Soviet Union and its allies (except Romania) boycott the 1984 Olympic Games in Los Angeles, as a response to the U.S.-led boycott in 1980. | |
| 1988 | 6 | 23:54 | +3 | In December 1987, the United States and the Soviet Union sign the Intermediate-Range Nuclear Forces Treaty, to eliminate intermediate-range nuclear missiles, and their relations improve. | |
| 1990 | 10 | 23:50 | +4 | The fall of the Berlin Wall and the Iron Curtain, along with the reunification of Germany, meaning that the Cold War is nearing its end. | |
| 1991 | 17 | 23:43 | +7 | The United States and Soviet Union sign the first Strategic Arms Reduction Treaty (START I), the US announces the removal of many tactical nuclear weapons in September 1991, and the Soviet Union takes similar steps, as well as announcing the complete cessation of all nuclear testing in October 1991. The Bulletin editorial, published November 26, 1991, announces that "the 40-year-long East-West nuclear arms race is over." One month after the Bulletin made this clock adjustment, the Soviet Union dissolves on December 26, 1991. This is the farthest from midnight the Clock has been since its inception. | |
| 1995 | 14 | 23:46 | −3 | Global military spending continues at Cold War levels amid concerns about post-Soviet nuclear proliferation of weapons and brainpower. | |
| 1998 | 9 | 23:51 | −5 | Both India (Pokhran-II) and Pakistan (Chagai-I) test nuclear weapons in a tit-for-tat show of aggression; the United States and Russia run into difficulties in further reducing stockpiles. | |
| 2002 | 7 | 23:53 | −2 | Little progress on global nuclear disarmament. United States rejects a series of arms control treaties and announces its intentions to withdraw from the Anti-Ballistic Missile Treaty, amid concerns about the possibility of a nuclear terrorist attack due to the amount of weapon-grade nuclear materials that are unsecured and unaccounted for worldwide. | |
| 2007 | 5 | 23:55 | −2 | North Korea tests a nuclear weapon in October 2006, Iran's nuclear ambitions, a renewed American emphasis on the military utility of nuclear weapons, the failure to adequately secure nuclear materials, and the continued presence of some 26,000 nuclear weapons in the United States and Russia. After assessing the dangers posed to civilization, climate change was added to the prospect of nuclear annihilation as the greatest threats to humanity. | |
| 2010 | 6 | 23:54 | +1 | Worldwide cooperation to reduce nuclear arsenals and limit effect of climate change. The New START agreement is ratified by both the United States and Russia, and more negotiations for further reductions in the American and Russian nuclear arsenal are already planned. The 2009 United Nations Climate Change Conference in Copenhagen results in the developing and industrialized countries agreeing to take responsibility for carbon emissions and to limit global temperature rise to 2 degrees Celsius. | |
| 2012 | 5 | 23:55 | −1 | Lack of global political action to address global climate change, nuclear weapons stockpiles, the potential for regional nuclear conflict, and nuclear power safety. | |
| 2015 | 3 | 23:57 | −2 | Concerns amid continued lack of global political action to address global climate change, the modernization of nuclear weapons in the United States and Russia, and the problem of nuclear waste. | |
| 2017 | 2 1⁄2 (150 s) | 23:57:30 | −1⁄2 (−30 s) | United States President Donald Trump's comments over nuclear weapons, the threat of a renewed arms race between the U.S. and Russia, and the expressed disbelief in the scientific consensus over climate change by the Trump administration. | |
| 2018 | 2 | 23:58 | −1⁄2 (−30 s) | Failure of world leaders to deal with looming threats of nuclear war and climate change. This was at the time the clock's closest approach to midnight, matching that of 1953. In 2019, the Bulletin reaffirmed the "two minutes to midnight" time, citing continuing climate change and the Trump administration's abandonment of U.S. efforts to lead the world toward decarbonization; U.S. withdrawal from the Paris Agreement, the Joint Comprehensive Plan of Action, and the Intermediate-Range Nuclear Forces Treaty; U.S. and Russian nuclear modernization efforts; information warfare threats and other dangers from "disruptive technologies" such as synthetic biology, artificial intelligence, and cyberwarfare. | |
| 2020 | 1 2⁄3 (100 s) | 23:58:20 | −1⁄3 (−20 s) | Failure of world leaders to deal with the increased threats of nuclear war, such as the end of the Intermediate-Range Nuclear Forces Treaty (INF) between the United States and Russia as well as increased tensions between the U.S. and Iran, along with the continued neglect of climate change. Announced in units of seconds, instead of minutes; this was at the time the clock's closest approach to midnight, exceeding that of 1953 and 2018. The Bulletin concluded by stating that the current issues causing the adjustment are "the most dangerous situation that humanity has ever faced". In the annual statements for 2021 and 2022, issued in January of each year, the Bulletin left the "100 seconds to midnight" time setting unchanged. | |
| 2023 | 1 1⁄2 (90 s) | 23:58:30 | −1⁄6 (−10 s) | Due largely, but not exclusively, to the Russian invasion of Ukraine and the increased risk of nuclear escalation stemming from the conflict. Russia suspended its participation in the last remaining nuclear weapons treaty between it and the United States, New START. Russia also brought its war to the Chernobyl and Zaporizhzhia nuclear reactor sites, violating international protocols and risking widespread release of radioactive materials. North Korea resumed its nuclear rhetoric, launching an intermediate-range ballistic missile test over Japan in October 2022. Continuing threats posed by the climate crisis and the breakdown of global norms and institutions set up to mitigate risks associated with advancing technologies and biological threats such as COVID-19 also contributed to the time setting. This setting remained unchanged the following year. | |
| 2025 | 1 29⁄60 (89 s) | 23:58:31 | −1⁄60 (−1 s) | The continuing Russian invasion of Ukraine and the Middle Eastern crisis, increased nuclear proliferation, effects of climate change, biological threats, and advancing technologies. | |
| 2026 | 1 5⁄12 (85 s) | 23:58:35 | −1⁄15 (−4 s) | Russia's continued war in Ukraine, the U.S. and Israeli bombing of Iran, and border clashes between India and Pakistan. Other cited factors include ongoing tensions in Asia, including on the Korean Peninsula, as well as rising tensions in the Western hemisphere, and the expiration of the New START treaty on February 5, 2026. Rising nuclear proliferation, effects of climate change, biological threats, and advancing technologies have also continued. This is the closest to midnight the Clock has been since its inception. | |
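The summary figures quoted earlier in the article (eight moves backward, 19 moves forward, 27 adjustments in total) can be checked against the timeline above. The following is a minimal sketch in Python, assuming the settings are transcribed from the timeline and converted to seconds; the names `SETTINGS` and `tally` are hypothetical and chosen only for illustration.

```python
# Settings in seconds to midnight, transcribed from the timeline above
# (minutes converted to seconds).
SETTINGS = [
    (1947, 420), (1949, 180), (1953, 120), (1960, 420), (1963, 720),
    (1968, 420), (1969, 600), (1972, 720), (1974, 540), (1980, 420),
    (1981, 240), (1984, 180), (1988, 360), (1990, 600), (1991, 1020),
    (1995, 840), (1998, 540), (2002, 420), (2007, 300), (2010, 360),
    (2012, 300), (2015, 180), (2017, 150), (2018, 120), (2020, 100),
    (2023, 90), (2025, 89), (2026, 85),
]


def tally(settings):
    """Count moves toward midnight (forward) and away from it (backward)."""
    forward = backward = 0
    # Compare each setting with the one that preceded it.
    for (_, previous), (_, current) in zip(settings, settings[1:]):
        if current < previous:
            forward += 1
        elif current > previous:
            backward += 1
    return forward, backward


forward, backward = tally(SETTINGS)
farthest = max(SETTINGS, key=lambda entry: entry[1])
closest = min(SETTINGS, key=lambda entry: entry[1])
print(forward, backward, forward + backward)
print(farthest, closest)
```

Years in which the Bulletin reaffirmed an existing setting (such as 2019, 2021, 2022, and 2024) do not appear as separate rows in the timeline, so they are not counted as adjustments here.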

In popular culture

- "Seven Minutes to Midnight", a 1980 single by Wah! Heat, refers to that year's change of the Doomsday Clock from nine to seven minutes to midnight.

- Australian rock band Midnight Oil's 1984 LP Red Sails in the Sunset features a song called "Minutes to Midnight", and the album's cover shows an aerial-view rendering of Sydney after a nuclear strike.

- The title of Iron Maiden's 1984 song "2 Minutes to Midnight" is a reference to the Doomsday Clock.

- The Doomsday Clock appears in the beginning of the 1985 music video for "Russians" by Sting.

- The 1986 short story "The End of the Whole Mess" by Stephen King refers to the Doomsday Clock being set at fifteen seconds before midnight due to elevated geopolitical tension.

- The Doomsday Clock was a recurring visual theme in Alan Moore and Dave Gibbons's seminal Watchmen graphic novel series (1986–87), its 2009 film adaptation, and its 2019 television miniseries sequel. Additionally, its sequel series, Doomsday Clock, which takes place in the main DC Universe, borrows the title.

- The title of Linkin Park's 2007 album Minutes to Midnight is a reference to the Doomsday Clock. Their music video for "Shadow of the Day", from Minutes to Midnight, represents the Doomsday Clock as an actual clock that reaches midnight at the end of the video.

- The Smashing Pumpkins' 2007 album Zeitgeist references the Doomsday Clock in the song "Doomsday Clock".

- In the Flobots' song "The Circle in the Square", the lyrics say "the clock is now 11:55 on the big hand", which was the Doomsday Clock's setting in 2012 when the song was released.

- The title of the 1982 Doctor Who episode "Four to Doomsday" references the Doomsday Clock. In the 2017 episode "The Pyramid at the End of the World", the Monks changed every clock in the world to three minutes to midnight as a warning about what will happen if humanity does not accept their help. Representatives of the three most powerful armies on Earth agreed not to fight each other, believing a potential war is the catastrophe. However, the clock remained displaying two minutes to midnight. After the Doctor averted the true catastrophe (an accidental bacteriological disaster), the clock began moving backwards.

- The Doomsday Clock is featured in Yael Bartana's What if Women Ruled the World, which premiered on July 5, 2017, at the Manchester International Festival.

- One minute to midnight on the Doomsday Clock is heavily referenced in the grime/punk crossover song "Effed" by Nottingham rapper Snowy and Jason Williamson of Sleaford Mods. Because of the track's political content, there was an initial reluctance from mainstream radio stations to play the track before the 2019 United Kingdom general election. However, the track was later championed by a number of BBC Radio DJs, including punk innovator Iggy Pop.

- In the Criminal Minds season 13 episode "The Bunker", the unsubs abduct women using the Doomsday Clock.

- The Madam Secretary season 2 episode "On the Clock" features the Doomsday Clock, as the characters try to keep it from moving forward.