Green computing, green IT (information technology), or Information and Communication Technology Sustainability, is the study and practice of environmentally sustainable computing or IT.

The goals of green computing include optimising energy efficiency during the product's lifecycle; leveraging greener energy sources to power the product and its network; improving the reusability, maintainability, and repairability of the product to extend its lifecycle; improving the recyclability or biodegradability of e-waste to support circular economy ambitions; and aligning the manufacture and use of IT systems with environmental and social goals. Green computing is important for all classes of systems, ranging from handheld systems to large-scale data centers. According to the International Energy Agency, data centers accounted for about 1.5% of global electricity consumption in 2024 (~415 TWh), and under its central scenario, demand could roughly double to ~945 TWh by 2030, with AI workloads a major driver of growth. Sustainable development is a concept that redefines the notion of the general interest by integrating environmental, social, and economic considerations. Many corporate IT departments have green computing initiatives to reduce the environmental effect of their IT operations. The environmental footprint of the ICT sector as a whole is significant, estimated at 5–9% of the world's total electricity use and more than 2% of all emissions. To stay competitive, data centers and telecommunications networks will need to become more energy efficient, reuse waste energy, use more renewable energy sources, and use less water for cooling. In the European Union, policy efforts and industry initiatives aim for climate-neutral data centers by 2030.

The carbon emissions associated with manufacturing devices and network infrastructure are also a key factor.

Green computing can involve complex trade-offs. It can be useful to distinguish between IT for environmental sustainability and the environmental sustainability of IT. Although green IT focuses on the environmental sustainability of IT, in practice these two aspects are often interconnected. For example, launching an online shopping platform may increase the carbon footprint of a company's own IT operations, while at the same time allowing customers to purchase products remotely without having to drive, in turn reducing greenhouse gas emissions related to travel. The company might be able to take credit for these decarbonisation benefits under its Scope 3 emissions reporting, which covers emissions from across the entire value chain.

Origins

In 1992, the U.S. Environmental Protection Agency launched Energy Star, a voluntary labeling program designed to promote and recognize energy efficiency in monitors, climate control equipment, and other technologies. This resulted in the widespread adoption of sleep mode among consumer electronics. Concurrently, the Swedish organization TCO Development launched the TCO Certified program to promote low magnetic and electrical emissions from CRT-based computer displays; this program was later expanded to include criteria on energy consumption, ergonomics, and the use of hazardous materials in construction.

Regulations and industry initiatives

In 2009 the Organisation for Economic Co-operation and Development (OECD) published a survey of over 90 government and industry initiatives on "Green ICTs" (Information and Communication Technologies), the environment and climate change. The report concluded that initiatives tended to concentrate on greening ICTs themselves rather than on using them to reduce global warming and environmental degradation. In general, only 20% of initiatives had measurable targets, with government programs including targets more frequently than business associations.

Government

Many governmental agencies have continued to implement standards and regulations that encourage green computing. The Energy Star program was revised in October 2006 to include stricter efficiency requirements for computer equipment, along with a tiered ranking system for approved products.

By 2008, 26 US states had established statewide recycling programs for obsolete computers and consumer electronics equipment. The statutes either impose an "advance recovery fee" for each unit sold at retail or require the manufacturers to reclaim the equipment at disposal.

In February 2009, President Obama signed the American Recovery and Reinvestment Act (ARRA) into law. The act allocated over $90 billion to green initiatives (renewable energy, smart grids, energy efficiency, etc.). In January 2010, the U.S. Department of Energy granted $47 million of ARRA funds to projects to improve the energy efficiency of data centers. The projects funded research into optimizing data center hardware and software, improving the power supply chain, and data center cooling technologies.

Green digital governance

Green digital governance refers to the use of information and communication technology (ICT) to support environmentally sustainable policies and practices. It describes a strategy with which an organisation strives to align its information and communications technology with sustainability goals. This can include using digital tools and platforms to monitor and regulate environmental impact, as well as promoting the development and use of clean and renewable energy sources in the technology sector. The goal of green digital governance is to reduce the carbon footprint of the digital economy and to support the transition to a more sustainable and resilient society.

Both the green and the digital transitions are on the agenda of most European countries, as well as of the EU as a whole. Policy documents and goals such as the European Green Deal, the Sustainable Development Goals, Fit for 55 and Digital Europe have set these transitions in motion. The two transitions can conflict with each other, as digital technologies have substantial environmental footprints that work against the targets of the green transition.

The European Union sees digitalisation and the adoption of ICT (Information and Communications Technology) solutions as an important tool for creating greener solutions, while also acknowledging that in order to achieve the desired positive environmental impact, the tools themselves must be environmentally sustainable. The green transition may accelerate innovation and the adoption of digital solutions, offering the ICT sector new opportunities to become more competitive. The synergy between the green transition and digitalisation brings social, economic and environmental benefits, which is a goal both of environmentally friendly digital governments and of green ICT solutions in general.

Digital technologies are also expected to help reach the ambitions of the European Green Deal and the Sustainable Development Goals. As powerful enablers for the sustainability transition, digital solutions can advance the circular economy, support the decarbonisation of all sectors and reduce the environmental and social footprint of products placed on the EU market. For example, key sectors such as precision agriculture, transport and energy can benefit from digital solutions in pursuing the sustainability objectives of the European Green Deal.

E-government services can help reduce environmental impact. If citizens can request and receive a service entirely online, public authorities save costs and citizen satisfaction increases, while carbon emissions and paper consumption are also reduced.

Industry

- The iMasons Climate Accord (ICA), founded in 2022, is a cooperative of companies committed to reducing carbon in digital infrastructure materials, products, and power.

- Climate Savers Computing Initiative (CSCI) is an effort to reduce the electric power consumption of PCs in active and inactive states. The CSCI provides a catalog of green products from its member organizations, and information for reducing PC power consumption. It was started on June 12, 2007. The name stems from the World Wildlife Fund's Climate Savers program, which began in 1999. The WWF is a member of the Computing Initiative.

- The Green Electronics Council offers the Electronic Product Environmental Assessment Tool (EPEAT) to assist in the purchase of "greener" computing systems. The Council evaluates computing equipment on 51 criteria (23 required and 28 optional) that measure a product's efficiency and sustainability attributes. Products are rated Gold, Silver, or Bronze, depending on how many optional criteria they meet. On January 24, 2007, President George W. Bush issued Executive Order 13423, which required all United States Federal agencies to use EPEAT when purchasing computer systems.

- The Green Grid is a global consortium dedicated to advancing energy efficiency in data centers and business computing ecosystems. It was founded in February 2007 by several key companies in the industry – AMD, APC, Dell, HP, IBM, Intel, Microsoft, Rackable Systems, SprayCool (purchased in 2010 by Parker), Sun Microsystems and VMware. The Green Grid has since grown to hundreds of members, including end-users and government organizations focused on improving data center infrastructure efficiency (DCiE).

- The Green500 list rates supercomputers by energy efficiency (megaflops/watt), encouraging a focus on efficiency rather than absolute performance.

- Green Comm Challenge is an organization that promotes the development of energy conservation technology and practices in the field of ICT.

- The Transaction Processing Performance Council (TPC) Energy specification augments existing TPC benchmarks by allowing optional publication of energy metrics alongside performance results.

- SPECpower is the first industry standard benchmark that measures power consumption in relation to performance for server-class computers. Other benchmarks which measure energy efficiency include SPECweb, SPECvirt, and VMmark.
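The data center infrastructure efficiency (DCiE) metric mentioned in The Green Grid entry is the share of a facility's total power that actually reaches the IT equipment; its reciprocal, power usage effectiveness (PUE), is also used by The Green Grid. The sketch below computes both. The function names and the 500 kW / 800 kW readings are illustrative assumptions, not taken from any real facility.

```python
def dcie(it_power_kw: float, facility_power_kw: float) -> float:
    """Data center infrastructure efficiency, as a percentage:
    the share of total facility power delivered to the IT equipment."""
    if it_power_kw <= 0 or facility_power_kw <= 0:
        raise ValueError("power readings must be positive")
    if it_power_kw > facility_power_kw:
        raise ValueError("IT power cannot exceed total facility power")
    return 100.0 * it_power_kw / facility_power_kw


def pue(it_power_kw: float, facility_power_kw: float) -> float:
    """Power usage effectiveness: total facility power per unit of IT power
    (the reciprocal of DCiE expressed as a fraction)."""
    return 100.0 / dcie(it_power_kw, facility_power_kw)


# Hypothetical readings: 500 kW reaches the servers, 800 kW enters the building.
print(dcie(500, 800))  # 62.5
print(pue(500, 800))   # 1.6
```

In this example the remaining 300 kW goes to cooling, power distribution losses and other facility overhead; a DCiE closer to 100% (a PUE closer to 1.0) means less overhead.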

Approaches

Modern IT systems rely on a complicated mix of people, networks, and hardware; as such, a green computing initiative ideally covers these areas. A solution may also need to address end user satisfaction, management restructuring, regulatory compliance, and return on investment (ROI). There are also fiscal motivations for companies to take control of their own power consumption; "of the power management tools available, one of the most powerful may still be simple, plain, common sense."

Product longevity

Gartner maintains that the PC manufacturing process accounts for 70% of the natural resources used in the life cycle of a PC. In 2011, Fujitsu released a life-cycle assessment (LCA) of a desktop showing that manufacturing and end of life account for the majority of this desktop's ecological footprint. Therefore, the biggest contribution to green computing is usually to prolong the equipment's lifetime. A recent life-cycle assessment comparing a desktop and a laptop for a four-year use case with similar performance found total carbon footprints of 679.1 kg CO2e for the desktop versus 286.1 kg CO2e for the laptop; for both systems, manufacturing was the largest contributor, followed by the use phase.

Another report from Gartner recommends: "Look for product longevity, including upgradability and modularity." For instance, manufacturing a new PC has a far bigger ecological footprint than manufacturing a new RAM module to upgrade an existing one.
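Because manufacturing dominates the footprint, keeping a device in service longer spreads those fixed emissions over more years. The sketch below assumes a constant use-phase figure per year, which is a simplification (an ageing machine may become less efficient than a replacement would be); the function name and the 200 kg / 20 kg figures are hypothetical, not taken from the Fujitsu or desktop/laptop assessments.

```python
def annual_footprint(embodied_kg: float, use_kg_per_year: float, years: float) -> float:
    """Average kg CO2e per year of service: manufacturing (embodied) emissions
    spread over the service life, plus a constant yearly use-phase figure."""
    if years <= 0:
        raise ValueError("service life must be positive")
    return embodied_kg / years + use_kg_per_year


# Hypothetical device: 200 kg CO2e to manufacture, 20 kg CO2e per year in use.
for years in (3, 4, 6):
    print(years, round(annual_footprint(200, 20, years), 1))
# 3 86.7
# 4 70.0
# 6 53.3
```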

Data center design

Data center facilities are heavy consumers of energy, accounting for between 1.1% and 1.5% of the world's total electricity use in 2010. The U.S. Department of Energy estimates that data center facilities can consume 100 to 200 times as much energy as standard office buildings.

Energy efficient data center design should address all of the energy use aspects included in a data center: from the IT equipment to the HVAC (Heating, ventilation and air conditioning) equipment to the actual location, configuration and construction of the building.

The U.S. Department of Energy specifies five primary areas on which to focus energy efficient data center design best practices:

- Information technology (IT) systems

- Environmental conditions

- Air management

- Cooling systems

- Electrical systems

Additional energy efficient design opportunities specified by the U.S. Department of Energy include on-site electrical generation and recycling of waste heat.

Energy efficient data center design should help to better use a data center's space, and increase performance and efficiency.

Software and deployment optimization

Algorithmic efficiency

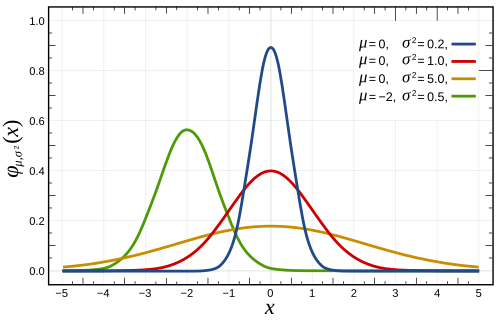

The efficiency of algorithms affects the amount of computer resources required for any given computing function, and there are many efficiency trade-offs in writing programs. Algorithm changes, such as switching from a slow (e.g. linear) search algorithm to a fast (e.g. hashed or indexed) search algorithm, can reduce resource usage for a given task from substantial to close to zero. In 2009, a study by a Harvard physicist estimated that the average Google search released 7 grams of carbon dioxide (CO2); Google disputed this figure, arguing that a typical search produced only 0.2 grams of CO2. Similarly, the environmental footprint of distributed computing depends heavily on the algorithmic efficiency of its underlying consensus mechanisms. Mathematical consumption models evaluating Sybil attack resistance schemes indicate that ledger architectures using directed acyclic graphs (DAG) to achieve consensus via virtual voting consume less energy per transaction than traditional proof-of-work systems and standard proof-of-stake blockchains.
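The difference between a linear and a hashed search can be made concrete by counting the work each does. In this sketch the number of comparisons stands in, as an assumption, for the energy a lookup costs; the `linear_search` helper is illustrative, and Python's built-in `set` provides the hashed lookup.

```python
def linear_search(items: list[int], target: int) -> tuple[bool, int]:
    """Scan front to back; return (found, number of comparisons made)."""
    comparisons = 0
    for item in items:
        comparisons += 1
        if item == target:
            return True, comparisons
    return False, comparisons


data = list(range(1_000_000))

# Linear search: the worst case touches every element.
found, comparisons = linear_search(data, 999_999)
print(found, comparisons)  # True 1000000

# Hashed search: building the set is a one-time O(n) cost,
# after which each membership test takes constant time on average.
index = set(data)
print(999_999 in index)  # True
```

The index only pays off when lookups are repeated: for a single query, building the set costs about as much as one linear scan.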

Resource allocation

Algorithms can also be used to route data to data centers where electricity is less expensive. Researchers from MIT, Carnegie Mellon University, and Akamai have tested an energy allocation algorithm that routes traffic to the location with the lowest energy costs. The researchers project up to 40 percent savings on energy costs if their proposed algorithm were to be deployed. However, this approach does not actually reduce the amount of energy being used; it reduces only the cost to the company using it. Nonetheless, a similar strategy could be used to direct traffic toward data centers that rely on energy produced in a more environmentally friendly or efficient way. A similar approach has also been used to cut energy usage by routing traffic away from data centers experiencing warm weather, allowing computers there to be shut down rather than cooled with air conditioning.
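A greedy version of this idea fits in a few lines. It is a hypothetical illustration, not the MIT/Carnegie Mellon/Akamai algorithm: each site has an assumed electricity price, grid carbon intensity and spare capacity, and requests fill the cheapest (or cleanest) sites first. The `Site` class, the `route` function and all figures are illustrative. Swapping the sort key shows the distinction drawn above between cutting energy costs and cutting emissions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Site:
    name: str
    price_per_mwh: float     # electricity price at the site (hypothetical)
    carbon_g_per_kwh: float  # grid carbon intensity at the site (hypothetical)
    spare_capacity: int      # requests the site can still absorb


def route(sites: list[Site], requests: int, key: Callable[[Site], float]) -> dict[str, int]:
    """Greedily fill sites in ascending order of `key` until all requests are placed."""
    plan: dict[str, int] = {}
    remaining = requests
    for site in sorted(sites, key=key):
        if remaining == 0:
            break
        take = min(site.spare_capacity, remaining)
        if take > 0:
            plan[site.name] = take
            remaining -= take
    if remaining:
        raise RuntimeError(f"{remaining} requests could not be placed")
    return plan


sites = [
    Site("A", price_per_mwh=40, carbon_g_per_kwh=500, spare_capacity=600),
    Site("B", price_per_mwh=55, carbon_g_per_kwh=50, spare_capacity=600),
    Site("C", price_per_mwh=70, carbon_g_per_kwh=300, spare_capacity=600),
]
print(route(sites, 1000, key=lambda s: s.price_per_mwh))     # {'A': 600, 'B': 400}
print(route(sites, 1000, key=lambda s: s.carbon_g_per_kwh))  # {'B': 600, 'C': 400}
```

The model assumes each request consumes the same energy wherever it runs, so in both cases total energy use is unchanged; only where that energy is drawn from, and therefore its cost or carbon intensity, differs.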

Larger server centers are sometimes located where energy and land are inexpensive and readily available. Local availability of renewable energy, climate that allows outside air to be used for cooling, or locating them where the heat they produce may be used for other purposes could be factors in green siting decisions.

Approaches that actually reduce the energy consumption of network devices through proper network and device management techniques have been surveyed by Bianzino et al. The authors grouped the approaches into four main strategies: (i) Adaptive Link Rate (ALR), (ii) Interface Proxying, (iii) Energy Aware Infrastructure, and (iv) Maximum Energy Aware Applications.

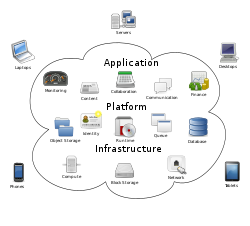

Virtualizing

Computer virtualization refers to the abstraction of computer resources, such as the process of running two or more logical computer systems on one set of physical hardware. The concept originated with the IBM mainframe operating systems of the 1960s, and was commercialized for x86-compatible computers, and other computer systems, in the 1990s. With virtualization, a system administrator can combine several formerly physical systems as virtual machines on one powerful system, thereby conserving resources by removing the need for some of the original hardware and reducing power and cooling consumption. Virtualization can assist in distributing work so that servers are either busy or put in a low-power sleep state. Several commercial companies and open-source projects now offer software packages to enable a transition to virtual computing. Intel Corporation and AMD have also built proprietary virtualization enhancements to the x86 instruction set into each of their CPU product lines to facilitate virtual computing.

Newer virtualization technologies, such as operating-system-level virtualization, can also be used to reduce energy consumption. These technologies use resources more efficiently, reducing energy consumption by design. Consolidation with operating-system-level virtualization is also more efficient than consolidation with virtual machines, so more services can be deployed on the same physical machine, reducing the amount of hardware needed.
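Consolidating workloads onto fewer machines is essentially a bin-packing problem. The sketch below uses the first-fit decreasing heuristic, one common choice and not necessarily what any particular virtualization product does, with a single CPU-like load figure per machine. The `consolidate` function and the loads are hypothetical; real placement would also weigh memory, peak demand and headroom.

```python
def consolidate(vm_loads: list[int], host_capacity: int) -> list[list[int]]:
    """First-fit decreasing: place each VM, largest first, on the first powered-on
    host with enough spare capacity, powering on a new host only when none fits."""
    hosts: list[list[int]] = []  # VM loads assigned to each powered-on host
    for load in sorted(vm_loads, reverse=True):
        if load > host_capacity:
            raise ValueError(f"a load of {load} does not fit on any host")
        for assigned in hosts:
            if sum(assigned) + load <= host_capacity:
                assigned.append(load)
                break
        else:
            hosts.append([load])
    return hosts


# Hypothetical: eight lightly used physical servers, loads in % of one host's capacity.
loads = [10, 25, 5, 30, 15, 20, 10, 35]
placement = consolidate(loads, host_capacity=100)
print(len(placement), placement)  # 2 [[35, 30, 25, 10], [20, 15, 10, 5]]
```

Here eight physical servers fit on two hosts, so six machines could be powered off or put to sleep. In practice a utilization ceiling below 100% would be set to leave headroom for load spikes.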

Terminal servers

Terminal servers have also been used in green computing. When using the system, users at a terminal connect to a central server; all of the actual computing is done on the server, but the end user experiences the system as if it were running on the terminal. These can be combined with thin clients, which use as little as 1/8 the energy of a normal workstation, reducing energy costs and consumption. There has been an increase in using terminal services with thin clients to create virtual labs. Examples of terminal server software include Terminal Services for Windows and the Linux Terminal Server Project (LTSP) for the Linux operating system. Software-based remote desktop clients such as Windows Remote Desktop and RealVNC can provide similar thin-client functions when run on low-power hardware that connects to a server.

Data Compression

Data compression, which involves using fewer bits to encode information, may also be used in green computing depending on the structure of the data. Because its effectiveness is highly data-specific, compression can in some cases use more energy or resources than it saves. However, choosing a compression algorithm well suited to the dataset can improve power efficiency and reduce network and storage requirements. There is a trade-off between compression ratio and energy consumption, and whether compression is worthwhile depends on the dataset's compressibility. Compression improves energy efficiency for data with a compression ratio (compressed size divided by original size) well below roughly 0.3, and hurts for data with higher compression ratios.
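The rule of thumb above can be applied by measuring how well a sample of the data compresses. The sketch uses Python's standard `zlib` module and defines the ratio as compressed size divided by original size. The helper names are illustrative, and while the 0.3 cut-off comes from the text, the real break-even point depends on the hardware, the compressor and the cost of moving or storing each byte, so treat it as an assumed parameter.

```python
import os
import zlib


def compression_ratio(data: bytes, level: int = 6) -> float:
    """Compressed size divided by original size (smaller means more compressible)."""
    if not data:
        raise ValueError("cannot compute a ratio for empty input")
    return len(zlib.compress(data, level)) / len(data)


def worth_compressing(sample: bytes, threshold: float = 0.3) -> bool:
    """Assumed heuristic: compress only when the sample shrinks below `threshold`."""
    return compression_ratio(sample) < threshold


repetitive = b"temperature=21.5;" * 1000  # e.g. a verbose sensor log
random_bytes = os.urandom(17_000)         # like already-compressed or encrypted data
print(worth_compressing(repetitive))    # True
print(worth_compressing(random_bytes))  # False
```

Measuring the ratio itself costs a compression pass, so in practice a small sample, or the known type of the data, would be used to make the decision.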

Power management

The Advanced Configuration and Power Interface (ACPI), an open industry standard, allows an operating system to directly control the power-saving aspects of its underlying hardware. This allows a system to automatically turn off components such as monitors and hard drives after set periods of inactivity. In addition, a system may hibernate, turning off most components (including the CPU and the system RAM). ACPI is a successor to an earlier Intel-Microsoft standard called Advanced Power Management, which allowed a computer's BIOS to control power management functions.

Some programs allow the user to manually lower the voltage supplied to the CPU, which reduces both the amount of heat produced and the electricity consumed. This process is called undervolting. Some CPUs can automatically lower the voltage depending on the workload; this technology is called "SpeedStep" on Intel processors, "PowerNow!"/"Cool'n'Quiet" on AMD chips, LongHaul on VIA CPUs, and LongRun on Transmeta processors.
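The benefit of undervolting follows from the standard approximation for the dynamic (switching) power of CMOS logic, P ≈ α·C·V²·f, where α is the activity factor, C the switched capacitance, V the supply voltage and f the clock frequency. The sketch below applies that approximation, assumes α and C stay fixed, and ignores static leakage power; the function name and scaling factors are illustrative rather than values for any particular processor.

```python
def relative_dynamic_power(voltage_scale: float, frequency_scale: float = 1.0) -> float:
    """Dynamic power relative to the baseline, using P ∝ V² · f.

    Activity factor and switched capacitance are assumed unchanged,
    and static (leakage) power is ignored.
    """
    if voltage_scale <= 0 or frequency_scale <= 0:
        raise ValueError("scaling factors must be positive")
    return voltage_scale ** 2 * frequency_scale


# Undervolting by 10% at the same clock speed:
print(round(relative_dynamic_power(0.9), 3))       # 0.81
# Lowering voltage and frequency together by an illustrative 20%,
# as workload-dependent scaling may do under light load:
print(round(relative_dynamic_power(0.8, 0.8), 3))  # 0.512
```

Because voltage enters squared, a 10% voltage reduction alone cuts dynamic power by about 19%, which is why even modest undervolting noticeably reduces heat.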

Data center power

Data centers, which have been criticized for their extraordinarily high energy demand, are a primary focus for proponents of green computing. According to a Greenpeace study, data centers represent 21% of the electricity consumed by the IT sector, which is about 382 billion kWh a year.

Data centers can potentially improve their energy and space efficiency through techniques such as storage consolidation and virtualization. Many organizations aim to eliminate underused servers, which lowers energy usage. The U.S. federal government set a target of at least a 10% reduction in data center energy usage by 2011. Google claims that, with the aid of what it describes as ultra-efficient evaporative cooling technology, it has reduced its energy consumption to 50% of the industry average.

Cryptocurrency mining, particularly for proof-of-work currencies like Bitcoin, also uses significant amounts of energy globally. Advocates have argued that cryptocurrency can help to drive investment in green energy.

Operating system support

Microsoft Windows has included limited PC power management features since Windows 95. These initially provided for stand-by (suspend-to-RAM) and a monitor low-power state. Later versions of Windows added hibernate (suspend-to-disk) and support for the ACPI standard. Windows 2000 was the first NT-based operating system to include power management. This required major changes to the underlying operating system architecture and a new hardware driver model. Windows 2000 also introduced Group Policy, a technology that allowed administrators to centrally configure most Windows features. However, power management was not one of those features, probably because the power management settings relied on a connected set of per-user and per-machine binary registry values, effectively leaving each user to configure their own power management settings.

This approach, which is not compatible with Windows Group Policy, was repeated in Windows XP. Microsoft's reasons for this design decision are not known, and it drew heavy criticism. Microsoft significantly improved this in Windows Vista by redesigning the power management system to allow basic configuration by Group Policy, although the support offered is limited to a single per-computer policy. Windows 7 retains these limitations but includes refinements for timer coalescing, processor power management, and display panel brightness. The most significant change in Windows 7 is in the user experience: the prominence of the High Performance power plan has been reduced with the aim of encouraging users to save power.

Third-party PC power management software for Windows adds features beyond those built into the operating system. Most products offer Active Directory integration and per-user/per-machine settings, with the more advanced offering multiple power plans, scheduled power plans, anti-insomnia features and enterprise power usage reporting.

Linux systems started to provide laptop-optimized power-management in 2005, with power-management options being mainstream since 2009.

Power supply

Desktop computer power supplies are in general 70–75% efficient, dissipating the remaining energy as heat. A certification program called 80 Plus certifies PSUs that are at least 80% efficient; typically these models are drop-in replacements for older, less efficient PSUs of the same form factor. As of July 20, 2007, all new Energy Star 4.0-certified desktop PSUs must be at least 80% efficient.
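These efficiency figures translate directly into power drawn from the wall: a supply with efficiency η must draw P/η to deliver P to the components, and the difference is dissipated as heat inside the supply. The function names and the 200 W load in the sketch are hypothetical, and real efficiency varies with load, so a single efficiency number is a simplification.

```python
def wall_power(dc_load_w: float, efficiency: float) -> float:
    """AC power drawn from the outlet to deliver `dc_load_w` to the components."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return dc_load_w / efficiency


def waste_heat(dc_load_w: float, efficiency: float) -> float:
    """Power lost as heat inside the supply itself."""
    return wall_power(dc_load_w, efficiency) - dc_load_w


# Hypothetical 200 W load on a 70%-efficient supply versus an 80%-efficient one.
for eff in (0.70, 0.80):
    print(eff, round(wall_power(200, eff), 1), round(waste_heat(200, eff), 1))
# 0.7 285.7 85.7
# 0.8 250.0 50.0
```

At this load the more efficient supply draws about 36 W less from the outlet and produces correspondingly less heat for fans or room cooling to remove.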

Storage

Smaller form factor (e.g., 2.5 inch) hard disk drives often consume less power per gigabyte than physically larger drives. Unlike hard disk drives, solid-state drives store data in flash memory or DRAM. With no moving parts, power consumption may be reduced somewhat for low-capacity flash-based devices.

As hard drive prices have fallen, storage farms have tended to increase in capacity to make more data available online. This includes archival and backup data that would formerly have been saved on tape or other offline storage. The increase in online storage has increased power consumption. Reducing the power consumed by large storage arrays, while still providing the benefits of online storage, is a subject of ongoing research.

Video card

A fast GPU may be the largest power consumer in a computer.

Energy-efficient display options include:

- No video card – use a shared terminal, shared thin client, or desktop sharing software if display is required.

- Use motherboard video output – typically low 3D performance and low power.

- Select a GPU based on low idle power, average wattage, or performance per watt.

Display

Unlike other display technologies, electronic paper does not use any power while displaying a static image. CRT monitors typically use more power than LCD monitors, and they also contain significant amounts of lead. LCD monitors traditionally used a cold-cathode fluorescent lamp to provide light for the display. Most newer displays use an array of light-emitting diodes (LEDs) in place of the fluorescent lamp, which further reduces the amount of electricity used by the display. Fluorescent backlights also contain mercury, whereas LED backlights do not.

A light-on-dark color scheme, also called dark mode, requires less energy to display on newer display technologies such as OLED, which improves battery life and lowers energy consumption. While an OLED will consume around 40% of the power of an LCD when displaying an image that is primarily black, it can use more than three times as much power to display an image with a white background, such as a document or website. This can lead to reduced battery life and increased energy use unless a light-on-dark color scheme is used. A 2018 article in Popular Science suggests that "Dark mode is easier on the eyes and battery" and reports that displaying white at full brightness uses roughly six times as much power as pure black on a Google Pixel, which has an OLED display. Apple's iOS 13 and iPadOS 13 both feature a light-on-dark mode that third-party developers can support by implementing their own dark themes. Google's Android 10 features a system-level dark mode.

Materials recycling

Recycling computing equipment can keep harmful materials such as lead, mercury, and hexavalent chromium out of landfills, and can replace equipment that otherwise would need to be manufactured, saving further energy and emissions. Computer systems that have outlived their original function can be re-purposed, or donated to various charities and non-profit organizations. However, many charities have recently imposed minimum system requirements for donated equipment. Additionally, parts from outdated systems may be salvaged and recycled through certain retail outlets and municipal or private recycling centers. Computing supplies, such as printer cartridges, paper, and batteries may be recycled as well.

A drawback to many of these schemes is that computers gathered through recycling drives are often shipped to developing countries where environmental standards are less strict than in North America and Europe. The Silicon Valley Toxics Coalition has estimated that 80% of the post-consumer e-waste collected for recycling is shipped abroad to countries such as China and India.

In 2011, the collection rate of e-waste remained low, even in the most environmentally responsible countries such as France. In the U.S., the annual ratio of e-waste collected to electronic equipment sold was 14% for 2006 to 2009.

The recycling of old computers raises a privacy issue. Old storage devices still hold private information, such as emails, passwords, and credit card numbers, which can be recovered using freely available software. Deleting a file does not actually remove it from the hard drive. Before recycling a computer, users should remove the hard drive (or drives, if there is more than one) and physically destroy it or store it somewhere safe. There are some authorized hardware recycling companies to whom the computer may be given for recycling, and they typically sign a non-disclosure agreement.

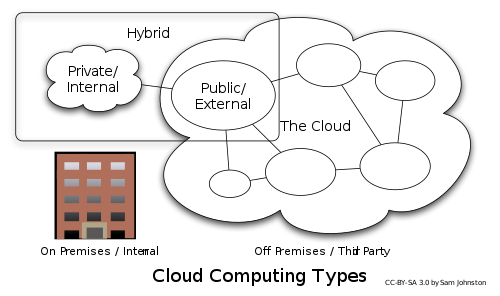

Cloud computing

Cloud computing may help to address two major ICT challenges related to green computing – energy usage and embodied carbon. Hyperscale data centers such as those operated by AWS, Azure, and GCP can benefit from economies of scale, and virtualization, dynamic provisioning environment, multi-tenancy and green data center approaches can enable more efficient resource allocation. Organizations may be able to reduce their direct energy consumption and carbon emissions by up to 30% and 90% respectively by moving certain on-premises applications into the public cloud.

However, critics point to shortcomings in the carbon tracking and management tools provided by major cloud providers. GreenOps, also known as DevGreenOps, DevSusOps or DevSustainableOps, is emerging as a framework for incorporating sustainability into cloud management. Carbon-aware computing and grid-aware computing can form part of a GreenOps approach. This includes techniques like demand shifting, which means moving computational workloads to locations or times of day with cleaner energy in the grid. Demand shaping is a similar technique, which focuses on adjusting workloads according to the amount of clean energy currently available.
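Demand shifting in time can be sketched as choosing when to start a deferrable batch job: slide a window the length of the job across an hourly carbon-intensity forecast and pick the window with the lowest average. The `best_start_hour` function and the forecast values are hypothetical; a real system would take forecasts from a grid-carbon data source, and this sketch ignores deadlines and electricity prices.

```python
def best_start_hour(forecast: list[float], duration: int) -> tuple[int, float]:
    """Return (start hour, mean intensity) of the contiguous window of `duration`
    hours with the lowest average grid carbon intensity, using a sliding sum."""
    if not 0 < duration <= len(forecast):
        raise ValueError("duration must fit within the forecast")
    window = sum(forecast[:duration])
    best_start, best_sum = 0, window
    for start in range(1, len(forecast) - duration + 1):
        # Slide the window one hour: add the new last hour, drop the old first hour.
        window += forecast[start + duration - 1] - forecast[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / duration


# Hypothetical hourly forecast in g CO2/kWh for hours 0-7.
forecast = [420, 390, 310, 180, 150, 170, 330, 410]
start, mean = best_start_hour(forecast, duration=3)
print(start, round(mean, 1))  # 3 166.7
```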

Edge computing

New technologies such as edge and fog computing can help reduce energy consumption. They redistribute computation closer to where it is used, reducing energy costs in the network. Furthermore, because the data centers involved are smaller, less energy is used for operations such as cooling and maintenance.

Remote work

Remote work using teleconference and telepresence technologies is often implemented in green computing initiatives. The advantages include increased worker satisfaction, reduction of greenhouse gas emissions related to travel, and increased profit margins as a result of lower overhead costs for office space, heat, lighting, etc. The average annual energy consumption for U.S. office buildings is over 23 kilowatt-hours per square foot, with heating, air conditioning and lighting accounting for 70% of all energy consumed. Other related initiatives, such as hoteling, reduce the square footage per employee, as workers reserve space only when needed. Many types of jobs, such as sales, consulting, and field service, integrate well with this technique.

Voice over IP (VoIP) reduces the telephony wiring infrastructure by sharing the existing Ethernet copper. VoIP and phone extension mobility have also made hot desking more practical. Wi-Fi consumes between a quarter and a tenth of the energy of 4G.

Telecommunication network devices energy indices

In 2013, ICT energy consumption in the US and worldwide was estimated at 9.4% and 5.3%, respectively, of the total electricity produced. The energy consumption of ICT is now significant even when compared with other industries. Some studies have tried to identify key energy indices that allow a meaningful comparison between different devices (network elements). This analysis focused on how to optimise the energy consumption of devices and networks in carrier telecommunications. The goal was to make the relationship between a network technology and its environmental effect immediately apparent. These studies are at an early stage, and further research will be necessary.
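One simple way to compare network elements of different sizes is to normalise power by throughput. The index used here, watts per gigabit per second (equivalently nanojoules per bit), is an assumption for illustration rather than the specific index proposed in the studies mentioned above, and the function name and both devices are hypothetical.

```python
def energy_per_bit_nj(power_w: float, throughput_gbps: float) -> float:
    """Energy per transported bit in nanojoules.

    W / (Gbit/s) = (J/s) / (1e9 bit/s) = 1e-9 J/bit = nJ/bit.
    """
    if power_w < 0 or throughput_gbps <= 0:
        raise ValueError("need non-negative power and positive throughput")
    return power_w / throughput_gbps


# Hypothetical devices: (power draw in W, throughput in Gbit/s).
devices = {"core router": (1200, 800), "access switch": (150, 48)}
for name, (power, throughput) in sorted(devices.items(), key=lambda kv: energy_per_bit_nj(*kv[1])):
    print(f"{name}: {energy_per_bit_nj(power, throughput):.3f} nJ/bit")
# core router: 1.500 nJ/bit
# access switch: 3.125 nJ/bit
```

A lower value means less energy per bit carried. Because many devices draw much of their peak power even when lightly loaded, an index measured at full throughput understates the energy per bit actually delivered at typical utilisation.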

Supercomputers

The Green500 list was first announced on November 15, 2007, at SC|07. As a complement to the TOP500, the Green500 list began a new era in which supercomputers can be compared by performance per watt. As of 2019, two Japanese supercomputers topped the Green500 energy efficiency ranking with performance exceeding 16 GFLOPS/watt, and two IBM AC922 systems followed with performance exceeding 15 GFLOPS/watt.
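Performance per watt is simply measured floating-point performance divided by power draw. The sketch ranks three hypothetical systems both ways to show how an ordering by raw performance (as in the TOP500) can differ from an ordering by efficiency (as in the Green500); the system names, figures and function name are invented, and the sketch does not reproduce the Green500's measurement rules.

```python
def gflops_per_watt(rmax_tflops: float, power_kw: float) -> float:
    """Energy efficiency: TFLOPS per kW equals GFLOPS per W (both scale by 1000)."""
    if power_kw <= 0:
        raise ValueError("power must be positive")
    return rmax_tflops / power_kw


# Hypothetical systems: (measured performance in TFLOPS, power in kW).
systems = {"Alpha": (200_000, 13_000), "Beta": (20_000, 1_100), "Gamma": (50_000, 6_000)}

by_performance = sorted(systems, key=lambda n: systems[n][0], reverse=True)
by_efficiency = sorted(systems, key=lambda n: gflops_per_watt(*systems[n]), reverse=True)
print(by_performance)  # ['Alpha', 'Gamma', 'Beta']
print(by_efficiency)   # ['Beta', 'Alpha', 'Gamma']
```

The smallest system is the least powerful but the most efficient (about 18.2 GFLOPS/W versus about 15.4 for the largest), which is the kind of contrast the Green500 was created to highlight.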

Education and certification

Green computing programs

Degree and postgraduate programs provide training in a range of information technology concentrations along with sustainable strategies, educating students on how to build and maintain systems while reducing their harm to the environment. The Australian National University (ANU) offers "ICT Sustainability" as part of its information technology and engineering masters programs. Athabasca University offers a similar course, "Green ICT Strategies", adapted from the ANU course notes by Tom Worthington. In the UK, Leeds Beckett University offers an MSc Sustainable Computing program in both full- and part-time access modes.

Green computing certifications

Some certifications demonstrate that an individual has specific green computing knowledge, including:

- Green Computing Initiative – GCI offers the Certified Green Computing User Specialist (CGCUS), Certified Green Computing Architect (CGCA) and Certified Green Computing Professional (CGCP) certifications.

- Information Systems Examination Board (ISEB) Foundation Certificate in Green IT is appropriate for showing an overall understanding and awareness of green computing and where its implementation can be beneficial.

- Singapore Infocomm Technology Federation (SiTF) Singapore Certified Green IT Professional is an industry endorsed professional level certification offered with SiTF authorized training partners. Certification requires completion of a four-day instructor-led core course, plus a one-day elective from an authorized vendor.

- Australian Computer Society (ACS) The ACS offers a certificate for "Green Technology Strategies" as part of the Computer Professional Education Program (CPEP). Award of a certificate requires completion of a 12-week e-learning course designed by Tom Worthington, with written assignments.

Ratings

Since 2010, Greenpeace has maintained a list of ratings of prominent technology companies in several countries based on how clean the energy used by that company is, ranging from A (the best) to F (the worst).

ICT and energy demand

Digitalization has brought additional energy consumption: its energy-increasing effects have been greater than its energy-reducing effects. Four effects that increase energy consumption are:

- Direct effect – Strong increases in (technical) energy efficiency in ICT are countered by the growth of the sector.

- Efficiency and rebound effects – Rebound effects are high for ICT and increased productivity often leads to new behaviors that are more energy intensive.

- Economic growth – Digitalization has a positive effect on economic growth, which in turn raises energy demand.

- Sectoral change – Growth of ICT services tends not to replace existing services but to come on top of them.
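The direct effect in the first item can be expressed as simple arithmetic: total energy is the volume of ICT service multiplied by the energy needed per unit of service, so the two changes combine multiplicatively. The function name and the percentages in the sketch are hypothetical; the point is that an efficiency gain is outweighed whenever demand grows by a larger factor than efficiency improves.

```python
def net_energy_change(intensity_change: float, demand_change: float) -> float:
    """Fractional change in total energy use.

    Total energy = (volume of ICT service) x (energy per unit of service), so
    the two fractional changes combine multiplicatively, not additively.
    """
    if intensity_change <= -1 or demand_change <= -1:
        raise ValueError("changes must be greater than -100%")
    return (1 + intensity_change) * (1 + demand_change) - 1


# Hypothetical: energy per unit of service falls 30%, while use of the service grows 60%.
change = net_energy_change(-0.30, 0.60)
print(f"{change:+.0%}")  # +12%
```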